Quote for the day:

“It is never too late to be what you might have been.” -- George Eliot

Cybersecurity Trends: What's in Store for Defenders in 2026?

For hackers of all stripes, a ready supply of easily procured, useful tools
abounds. Numerous breaches trace to information-stealing malware, which grabs
credentials from a system and bundles them into what's known as a log.
Automated "clouds of logs" make it easy for infostealer subscribers to monetize
their attacks. ... Clop, aka Cl0p, again stole data and held it for ransom. How
many victims paid a ransom isn't known, although the group's repeated ability to
pay for zero-days suggests it's making a tidy profit. Other cybercrime groups
appear to have learned from Clop's successes, including the spinoff of The Com
cybercrime collective that lately calls itself Scattered Lapsus$ Hunters. One
repeat target of that group has been third-party software that connects to the
customer relationship management platform Salesforce, allowing the group to
steal OAuth tokens and gain access to Salesforce instances and customer data.
... Beyond the massive potential illicit revenue being earned by these
teenagers, what's also notable is the sheer brutality of many of these attacks,
such as data breaches involving children's nurseries, including Kido, and a
single attack against Jaguar Land Rover that shut down assembly lines and supply
chains, disrupting the British economy to the tune of $2.5 billion. ...
Well-designed defenses help blunt many an attacker, or at least slow an
intrusion. Enforcing least-privilege access to resources and multifactor
authentication always helps, as do concrete security practices designed to
block CEO fraud, help desk trickery and other forms of social engineering.
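The least-privilege advice above applies directly to the third-party integrations that Scattered Lapsus$ Hunters abused: a stolen OAuth token is only as dangerous as the scopes it carries. The Python sketch below flags integrations whose granted scopes exceed what they were approved for. The `Integration` record, the integration names, and the scope strings are hypothetical placeholders, not any vendor's API; in practice the granted scopes would come from the platform's own inventory of connected apps.

```python
from dataclasses import dataclass, field


@dataclass
class Integration:
    """Hypothetical record of a third-party app connected to a SaaS platform."""
    name: str
    granted_scopes: set[str]
    # Scopes the security team has approved for this integration's purpose.
    approved_scopes: set[str] = field(default_factory=set)


def excess_scopes(integrations: list[Integration]) -> dict[str, set[str]]:
    """Return, per integration, any granted scopes beyond its approved set."""
    findings: dict[str, set[str]] = {}
    for app in integrations:
        extra = app.granted_scopes - app.approved_scopes
        if extra:
            findings[app.name] = extra
    return findings


if __name__ == "__main__":
    inventory = [
        # Hypothetical example data for illustration only.
        Integration("chat-widget", {"read_contacts", "full_access"}, {"read_contacts"}),
        Integration("billing-sync", {"read_invoices"}, {"read_invoices"}),
    ]
    for name, extra in sorted(excess_scopes(inventory).items()):
        print(f"{name}: revoke or justify {sorted(extra)}")
```

Running a check like this on a schedule turns "least privilege" from a policy statement into a reviewable list of tokens that could do more damage than their purpose requires.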


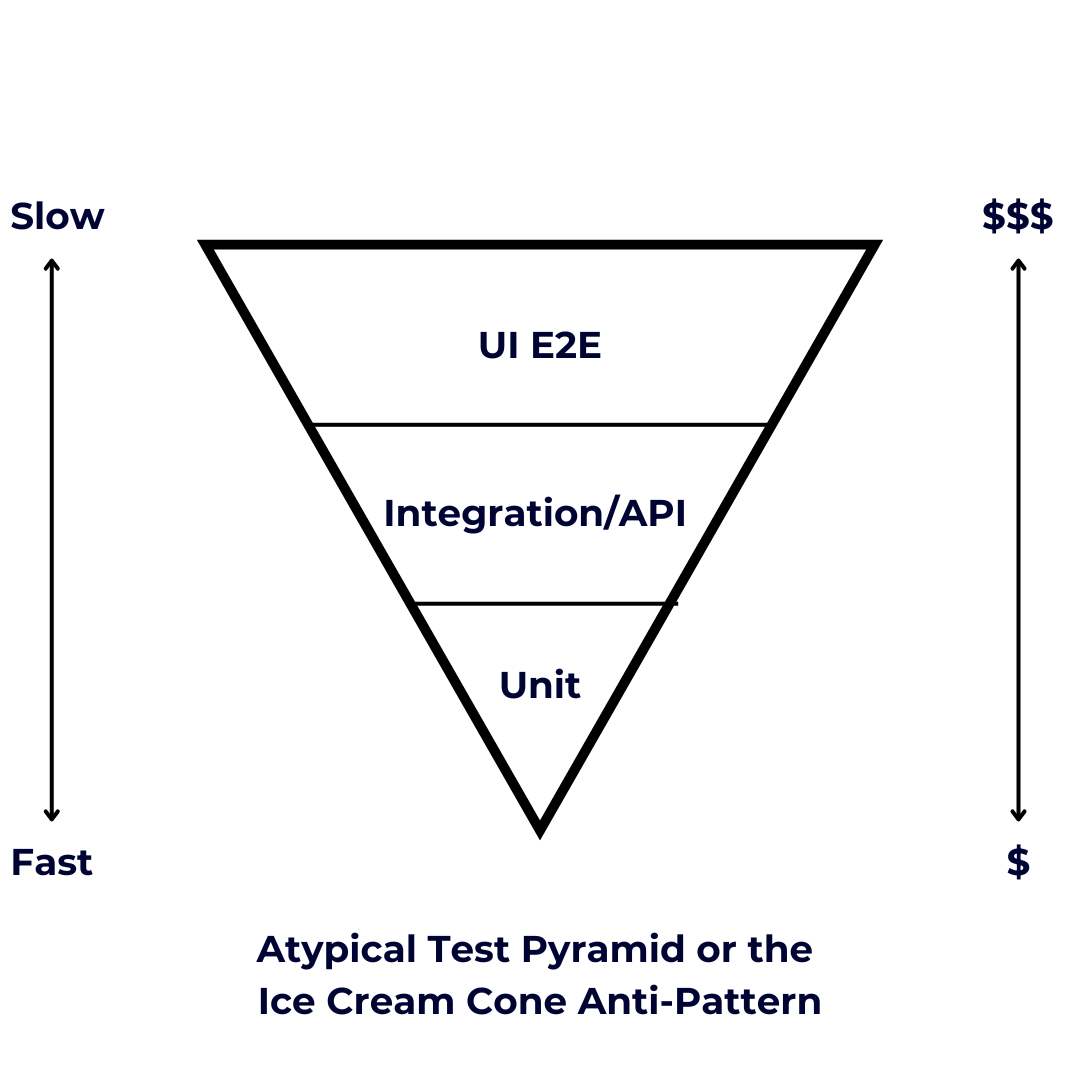

4 New Year’s resolutions for devops success

“Develop a growth mindset that AI models are not good or bad, but rather a new
nondeterministic paradigm in software that can both create new issues and new
opportunities,” says Matthew Makai, VP of developer relations at DigitalOcean.
“It’s on devops engineers and teams to adapt to how software is created,
deployed, and operated.” ... A good place to start is improving observability
across APIs, applications, and automations. “Developers should adopt an
AI-first, prevention-first mindset, using observability and AIops to move from
reactive fixes to proactive detection and prevention of issues,” says Alok
Uniyal, SVP and head of process consulting at Infosys. ... “Integrating
accessibility into the devops pipeline should be a top resolution, with
accessibility tests running alongside security and unit tests in CI as automated
testing and AI coding tools mature,” says Navin Thadani, CEO of Evinced. “As AI
accelerates development, failing to fix accessibility issues early will only
cause teams to generate inaccessible code faster, making shift-left
accessibility essential. Engineers should think hard about keeping accessibility
in the loop, so the promise of AI-driven coding doesn’t leave inclusion behind.”
... Engineers ready to step up into leadership roles but concerned about taking
on direct reports can consider mentoring others to build skills and confidence.
“There is high-potential talent everywhere, so aside from learning technical
skills, I would challenge devops engineers to also take the time to mentor a
junior engineer in 2026,” says Austin Spires.
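Thadani's point about running accessibility tests next to unit and security tests in CI can be illustrated with a deliberately small check. The sketch below uses only Python's standard-library `html.parser` to flag `<img>` tags that lack an `alt` attribute, and exits non-zero so a pipeline step would fail. It covers that single rule by assumption; it is an illustration, not a substitute for a dedicated accessibility testing tool, and the command-line usage shown is hypothetical.

```python
import sys
from html.parser import HTMLParser


class MissingAltChecker(HTMLParser):
    """Records the (line, column) of every <img> tag without an alt attribute.

    Self-closing <img ... /> tags also reach handle_starttag, because the
    default handle_startendtag calls it.
    """

    def __init__(self) -> None:
        super().__init__()
        self.problems: list[tuple[int, int]] = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs; alt="" is valid for decorative images.
        if tag == "img" and "alt" not in dict(attrs):
            self.problems.append(self.getpos())


def find_missing_alt(html: str) -> list[tuple[int, int]]:
    checker = MissingAltChecker()
    checker.feed(html)
    checker.close()
    return checker.problems


if __name__ == "__main__":
    # Hypothetical CI step: python check_alt.py page1.html page2.html
    failed = False
    for path in sys.argv[1:]:
        with open(path, encoding="utf-8") as f:
            for line, col in find_missing_alt(f.read()):
                print(f"{path}:{line}:{col}: <img> missing alt attribute")
                failed = True
    sys.exit(1 if failed else 0)
```

Because the script's exit code is what a CI runner inspects, it can sit beside existing unit and security test steps without any extra integration work.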


New framework simplifies the complex landscape of agentic AI

Agent adaptation involves modifying the foundation model that underlies the
agentic system. This is done by updating the agent’s internal parameters or
policies through methods like fine-tuning or reinforcement learning to better
align with specific tasks. Tool adaptation, on the other hand, shifts the focus
to the environment surrounding the agent. Instead of retraining the large,
expensive foundation model, developers optimize the external tools, such as
search retrievers, memory modules, or sub-agents. ... If the agent struggles to
use generic tools, don't retrain the main model. Instead, train a small,
specialized sub-agent (like a searcher or memory manager) to filter and format
data exactly the way the main agent needs it. This is highly data-efficient and
suitable for proprietary enterprise data and for applications that are
high-volume and cost-sensitive. Use A1 for specialization: if the agent
fundamentally fails at technical tasks, you must rewire its understanding of the
tool's "mechanics." A1 is best for creating specialists in verifiable domains
such as SQL, Python, or your proprietary tools. For example, you can optimize a
small model for your specific toolset and then use it as a T1 plugin for a
generalist model. Reserve A2 (agent output signaled) as the "nuclear option":
only train a monolithic agent end-to-end if you need it to internalize complex
strategy and self-correction. This is resource-intensive and rarely necessary
for standard enterprise applications.
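The guidance above amounts to a decision rule, sketched below in Python. The failure-mode labels and the function name are hypothetical; the mapping only encodes the article's advice (train a small sub-agent when the main agent struggles with generic tools, use A1 when it fundamentally fails at a technical tool, and reserve A2 for complex strategy and self-correction) and is not code from the framework itself.

```python
from enum import Enum


class FailureMode(Enum):
    """Hypothetical labels for why an agent is underperforming."""
    STRUGGLES_WITH_GENERIC_TOOLS = "struggles_with_generic_tools"
    FAILS_AT_TOOL_MECHANICS = "fails_at_tool_mechanics"
    NEEDS_COMPLEX_STRATEGY = "needs_complex_strategy"


def recommend_adaptation(mode: FailureMode) -> str:
    """Map a failure mode to the adaptation strategy the article recommends."""
    if mode is FailureMode.STRUGGLES_WITH_GENERIC_TOOLS:
        # Tool adaptation: leave the main model alone and train a small
        # sub-agent (e.g. a searcher or memory manager) to filter and format data.
        return "train a specialized sub-agent; do not retrain the main model"
    if mode is FailureMode.FAILS_AT_TOOL_MECHANICS:
        # A1: specialize a (small) model on a verifiable domain such as SQL;
        # it can then serve as a T1 plugin for a generalist model.
        return "A1: specialize a model on the tool's mechanics"
    # A2 is the "nuclear option": end-to-end training of a monolithic agent.
    return "A2: train the agent end-to-end (resource-intensive, rarely needed)"


if __name__ == "__main__":
    for mode in FailureMode:
        print(f"{mode.value}: {recommend_adaptation(mode)}")
```

The ordering of the checks mirrors the article's cost ordering: the cheapest intervention is considered first, and end-to-end training is the fallback.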


Radio signals could give attackers a foothold inside air-gapped devices

For an attack to work, sensitivity needs to be predictable. Multiple copies of
the same board model were tested using the same configurations and signal
settings. Several sensitivity patterns appeared consistently across samples,
meaning an attacker could characterize one device and apply those findings to
another of the same model. The researchers also measured stability over 24 hours
to assess whether the effect persisted beyond short test windows. Most sensitive
frequency regions remained consistent over time, with modest drift in some
paths. ... Once sensitive paths were identified, the team tested data reception.
They used on-off keying, where the transmitter switches a carrier on for a one
and off for a zero. This choice matched the observed behavior, which
distinguishes between the presence and absence of a signal. Under ideal
synchronization, several paths achieved bit error rates below 1 percent when
estimated received power reached about 10 milliwatts. One path stayed below 2
percent at roughly 1 milliwatt. Bandwidth tests showed that symbol rates up to
100 kilobits per second remained distinguishable, even as transitions blurred at
higher rates. In a longer test, the researchers transmitted about 12,000 bits at
1 kilobit per second. At three meters, reception produced no errors. At 20
meters, the bit error rate reached about 6.2 percent. Errors appeared in bursts
that standard error correction could address.
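On-off keying, as described above, reduces reception to a presence-or-absence decision per bit period. The Python sketch below simulates that idea: it modulates bits as carrier-on or carrier-off amplitudes, adds Gaussian noise as a stand-in for a real coupling path, recovers bits with a fixed threshold, and computes the bit error rate. The amplitude, noise levels, and threshold are arbitrary assumptions, not the researchers' measured values. For scale, the longer test's 12,000 bits at 1 kilobit per second take 12 seconds to send, and a 6.2 percent bit error rate corresponds to roughly 744 wrong bits.

```python
import random


def ook_modulate(bits: list[int], amplitude: float = 1.0) -> list[float]:
    """Carrier on (amplitude) for a 1, carrier off (0.0) for a 0: one sample per bit."""
    return [amplitude if b else 0.0 for b in bits]


def add_noise(samples: list[float], sigma: float, rng: random.Random) -> list[float]:
    """Add zero-mean Gaussian noise to each sample (a crude channel model)."""
    return [s + rng.gauss(0.0, sigma) for s in samples]


def ook_demodulate(samples: list[float], threshold: float = 0.5) -> list[int]:
    """Decide 'signal present' (1) or 'absent' (0) by comparing against a threshold."""
    return [1 if s > threshold else 0 for s in samples]


def bit_error_rate(sent: list[int], received: list[int]) -> float:
    """Fraction of positions where the received bit differs from the sent bit."""
    errors = sum(1 for a, b in zip(sent, received) if a != b)
    return errors / len(sent)


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so runs are repeatable
    bits = [rng.randint(0, 1) for _ in range(12_000)]
    for sigma in (0.1, 0.3, 0.5):
        rx = ook_demodulate(add_noise(ook_modulate(bits), sigma, rng))
        print(f"noise sigma={sigma}: BER={bit_error_rate(bits, rx):.4f}")
```

The errors this simple model produces are independent from bit to bit. Bursty errors like the ones the researchers observed are commonly handled by interleaving bits before applying an error-correcting code, so that a single burst is spread across several codewords.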


Smart Companies Are Taking SaaS In-House with Agentic Development

The uncomfortable truth: when your critical business processes depend on an AI
SaaS vendor’s survival, you’ve outsourced your competitive advantage to their
cap table. ... But the deeper risk isn’t operational disruption; it’s strategic
surrender. When you pipe your proprietary business context through external AI
platforms, you’re training their models on your differentiation. You’re
converting what should be permanent strategic assets into recurring operational
expenses that drag down EBITDA. For companies evaluating AI SaaS alternatives,
the real question is no longer whether to build or buy, but which parts of the
AI stack must be owned to protect long‑term competitive advantage. ... “Who
maintains these apps?” It’s the right question, with a surprising answer:

1. SaaS Maintenance Isn’t Free: Vendors deprecate APIs, change pricing, and
   pivot features. Your team still scrambles to adapt. Plus, the security risk
   often comes from having an external third party connecting to internal data.
2. Agents Lower Maintenance Costs Dramatically: Updating deprecated libraries?
   Agents excel at this, especially with typed languages. The biggest
   hesitancy, knowledge loss when developers leave, evaporates when agents can
   explain the codebase to anyone.
3. You Control the Update Schedule: With owned infrastructure, you decide when
   to upgrade dependencies, refactor components, or add features. No vendor
   forces breaking changes on you according to its timeline.


6 cyber insurance gotchas security leaders must avoid

Before committing to a specific insurer, Lindsay recommends consulting an
attorney with experience in cyber insurance contracts. “A policy is a legal
document with complex definitions,” he notes. “An attorney can flag ambiguous
terms, hidden carve-outs, or obligations that could create disputes at claim
time.” ... It’s hardly surprising, but important to remember, that the language
in cyber insurance policies generally favors the insurer, not the insured.
“Businesses often misinterpret the language from their perspective and overlook
the risks that the very language of the policy creates,” Polsky warns. ... You
may believe your policy will cover all cyberattack losses, yet a look at the
fine print may reveal that it’s riddled with exclusions and warranties that
can’t realistically be met, particularly in areas such as social engineering,
ransomware, and business interruption. ... Many enterprises believe they’re
fully secure, yet when they file a claim, the insurer points to fine print about
security measures they didn’t know were required, Mayo says. “Now you’re stuck
with cleanup costs, legal fees, and potential lawsuits, all without support
from your insurance provider.” ... The retroactive date clause can be the
biggest cyber insurance trap, warns Paul Pioselli, founder and CEO of
cybersecurity services firm Solace. ... Perhaps the biggest mistake an
insurance seeker can make is failing to understand the difference between
first-party coverage and third-party coverage, and therefore failing to acquire
a policy that includes both, says Dylan Tate.


7 major IT disasters of 2025

In July, US cleaning product vendor Clorox filed a $380 million lawsuit against
Cognizant, accusing the IT services provider’s helpdesk staff of handing over
network passwords to cybercriminals who called and asked for them. ... Zimmer
Biomet, a medical device company, filed a $172 million lawsuit against Deloitte
in September, accusing the IT consulting company of failing to deliver promised
results in a large-scale SAP S/4HANA deployment. ... In September, a massive
fire at the National Information Resources Service (NIRS) government data center
in South Korea resulted in the loss of 858TB of government data stored there.
... Multiple Google cloud services, including Gmail, Docs, Drive, Maps, and
Gemini, were taken down during a massive outage in June. The outage was
triggered by an earlier policy change to Google Service Control, a control plane
service that provides functionality for managed services, with a null-pointer
crash loop breaking APIs across several products. ... In late October, Amazon
Web Services’ US-EAST-1 region was hit with a significant outage lasting about
three hours in the early morning. The problem was related to DNS resolution of
the DynamoDB API endpoint in the region, causing increased error rates, latency,
and new instance launch failures for multiple AWS services. ... In late July,
services in Microsoft’s Azure East US region were disrupted, with customers
experiencing allocation failures when trying to create or update virtual
machines. The problem? A lack of capacity, with a surge in demand outstripping
Microsoft’s computing resources.

Stop Guessing, Start Improving: Using DORA Metrics and Process Behavior Charts

The DORA framework consists of several key metrics. Among them, Change Lead
Time (CLT) shows how quickly a team can deliver change. Deployment Frequency
(DF) shows what the team actually delivers. While important, DF is often more
volatile, influenced by team size, vacations, and the type of work being done.
Finally, the instability metrics and reliability SLOs serve as a counterbalance.
... Beyond spotting special causes, process behavior charts (PBCs) are also
useful for detecting shifts: moments when the entire system moves to a new
performance level. In the commute example above, these shifts appear as clear
drops in the average commute time whenever a real improvement is introduced,
such as buying a bike or finding a shorter route. Technically, a shift occurs
when several consecutive points fall above or below the previous mean,
signaling that the process has fundamentally changed. ... Sustainable
improvement is rarely linear. It depends on a series of strategic bets whose
effects emerge over time. Some succeed, others fail, and external factors, from
tooling changes to team turnover, often introduce temporary setbacks. ...
According to DORA research, these metrics have a predictive relationship with
broader outcomes such as organizational performance and team well-being. In
other words, teams that score higher on DORA metrics are statistically more
likely to achieve better business results and report higher satisfaction.

5 Threats That Defined Security in 2025

Salt Typhoon is a Chinese state-sponsored threat actor best known in recent
memory for targeting telecom giants, including Verizon, AT&T, Lumen
Technologies, and multiple others, in a campaign discovered last fall that went
after the systems used by police for court-authorized wiretapping. The group,
also known as Operator Panda, uses sophisticated techniques to conduct espionage
against targets and pre-position itself for longer-term attacks. ... CISA
layoffs, indirectly, mark a threat of a different kind. At the beginning of the
year, the Trump administration cut all advisory committee members within the
Cyber Safety Review Board (CSRB), a group of public and private sector experts
that researches and makes judgments about the major security issues of the
moment. When the CSRB was effectively shuttered, it was working on a report
about Salt Typhoon. ... React2Shell describes CVE-2025-55182, a vulnerability
disclosed early this month affecting the React Server Components (RSC) open
source protocol. Caused by unsafe deserialization, the vulnerability was
considered easily exploitable and highly dangerous, earning it the maximum CVSS
score of 10. Even worse, React is fairly ubiquitous, and at the time of
disclosure it was thought that a third of cloud providers were vulnerable. ...
In September, a self-replicating piece of malware known as Shai-Hulud emerged.
It's an infostealer that infects open source software components; when a user
downloads a package infected by the worm, Shai-Hulud infects other packages
maintained by the user and publishes poisoned versions, automatically and
without much direct attacker input.
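Because Shai-Hulud spreads by publishing poisoned versions of legitimate packages, one basic defensive step is to compare what a project actually resolved against a list of known-compromised versions. The Python sketch below reads an npm `package-lock.json` in the lockfile v2/v3 layout, where installed packages sit under a `packages` key indexed by `node_modules/...` paths, and reports matches. The `COMPROMISED` entries are hypothetical placeholders; a real check would load its list from a current security advisory.

```python
import json
import sys

# Hypothetical placeholder entries: (package name, version). Replace with a
# list taken from a current security advisory.
COMPROMISED: set[tuple[str, str]] = {
    ("example-compromised-lib", "1.0.1"),
    ("@example-scope/helper", "2.3.4"),
}


def installed_packages(lockfile: dict) -> set[tuple[str, str]]:
    """Extract (name, version) pairs from a lockfile v2/v3 'packages' map."""
    found = set()
    for path, meta in lockfile.get("packages", {}).items():
        if not path:  # the "" key describes the root project itself
            continue
        # "node_modules/a/node_modules/@s/b" -> "@s/b"
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version")
        if version:
            found.add((name, version))
    return found


def compromised_in(lockfile: dict, bad: set[tuple[str, str]] = COMPROMISED) -> list[tuple[str, str]]:
    """Return the sorted (name, version) pairs present in both the lockfile and the bad list."""
    return sorted(installed_packages(lockfile) & bad)


if __name__ == "__main__":
    path = sys.argv[1] if len(sys.argv) > 1 else "package-lock.json"
    with open(path, encoding="utf-8") as f:
        hits = compromised_in(json.load(f))
    for name, version in hits:
        print(f"known-compromised: {name}@{version}")
    sys.exit(1 if hits else 0)
```

A version list only catches what has already been reported, so it complements, rather than replaces, measures such as pinning dependencies and reviewing install scripts.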

