Embracing the co-evolution: AI's role in enriching the workforce

AI's capabilities in data processing and predictive analytics are undeniably
impressive, yet AI falls short in embodying human experience: empathy,
contextual comprehension, and emotional intelligence. This raises the question:
what can AI achieve without human involvement? For example, several automotive
companies are integrating LLMs into their vehicles and systems. They use them to
conduct routine checks and assist with on-road safety and predictive
maintenance. But in this case, AI cannot fix any of the problems that it
detects. To ensure that the challenges detected by AI are addressed, businesses
will always need skilled human workers. ... If things with AI aren’t that bad,
why is the popular narrative suggesting otherwise? The simple answer is timing.
Economic conditions, coupled with the aftermath of the pandemic, have left
people bracing themselves for the next big disruption. Add the popularity of
LLMs into the mix, and you have what seems like the next catastrophe. But that’s
far from the truth.

Burnout: An IT epidemic in the making

Even among those who report low or moderate levels of burnout, 25% express a
desire to leave their company in the near future. Burnout is also impacting
skills acquisition, as 43% of Yerbo survey respondents said they had to stop
studying for a certification exam because they were unable to find time due to
their workloads. Further, burned-out employees who do leave are highly likely to
negatively impact your company’s reputation by sharing their frustrations online
and on review sites, where other potential candidates can see them. With tech
talent markets always tight, increased burnout within your organization can
quickly become not only a retention issue, but a recruitment problem as well.
... Burnout can’t be fixed overnight. Turning around burnout in your
organization will require consistency and dedication to improving the employee
experience. You’ll need to consider increases in resources, mentoring, and
opportunities for advancement, as well as evaluate boundaries around work-life
balance and ensure that a healthy balance is reflected and modeled all the way
to the top.

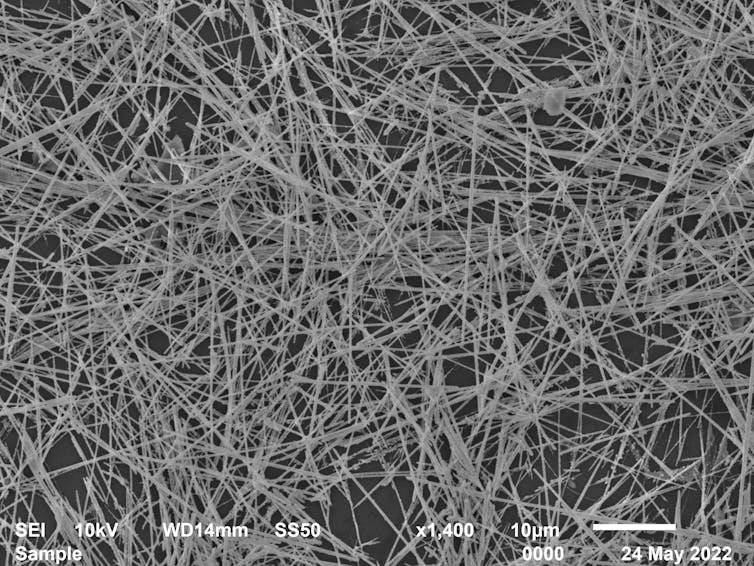

Edge and beyond: How to meet the increasing demand for memory

What is needed is a way to improve direct access to offboard memory by providing
on-demand access to memory across servers. The industry has recognized this and
has been working on a software-defined memory solution for many years in the
form of CXL. However, CXL 3.0, which provides complete caching capability, is
still several years away, will require new server architecture, and will only be
available in forthcoming generations of hardware. Concerns about latency
compromises are surfacing, too. Even CXL 3.0 is still piggybacking on the PCI
Express (PCIe) physical layer and relying on physical memory paired with PCIe,
so one would ordinarily incur a penalty on a critical metric: latency.
Generally, the farther the memory is from the CPU, the higher the latency and
the poorer the performance. Workloads at the heart of everything from HPC to AI
have significant memory requirements. But designers struggle to make use of the
additional cores available in modern CPUs. The leap forward in the number of CPU
cores has not been matched by a corresponding increase in memory bandwidth.
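
The core-count versus bandwidth mismatch can be made concrete with a little
arithmetic. The sketch below is illustrative only: the function names and the
configurations (8 memory channels at 3200 MT/s, a 64-bit channel moving 8 bytes
per transfer) are assumptions chosen for the example, not figures from the
article, and the result is a theoretical peak rather than a measured value.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int,
                       bytes_per_transfer: int = 8) -> float:
    """Theoretical peak memory bandwidth of one socket, in GB/s.

    Assumes each channel moves `bytes_per_transfer` bytes per transfer
    (8 bytes for a 64-bit channel). Real sustained bandwidth is lower.
    """
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


def bandwidth_per_core_gbs(channels: int, mega_transfers_per_s: int,
                           cores: int) -> float:
    """Peak bandwidth divided evenly across all cores, in GB/s."""
    return peak_bandwidth_gbs(channels, mega_transfers_per_s) / cores


# Hypothetical configurations: same memory subsystem, growing core counts.
for cores in (16, 32, 64):
    share = bandwidth_per_core_gbs(channels=8, mega_transfers_per_s=3200,
                                   cores=cores)
    print(f"{cores} cores: {share:.1f} GB/s per core")
```

With the memory subsystem held constant, each doubling of the core count halves
the peak bandwidth available to each core, which is the squeeze the excerpt
describes.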

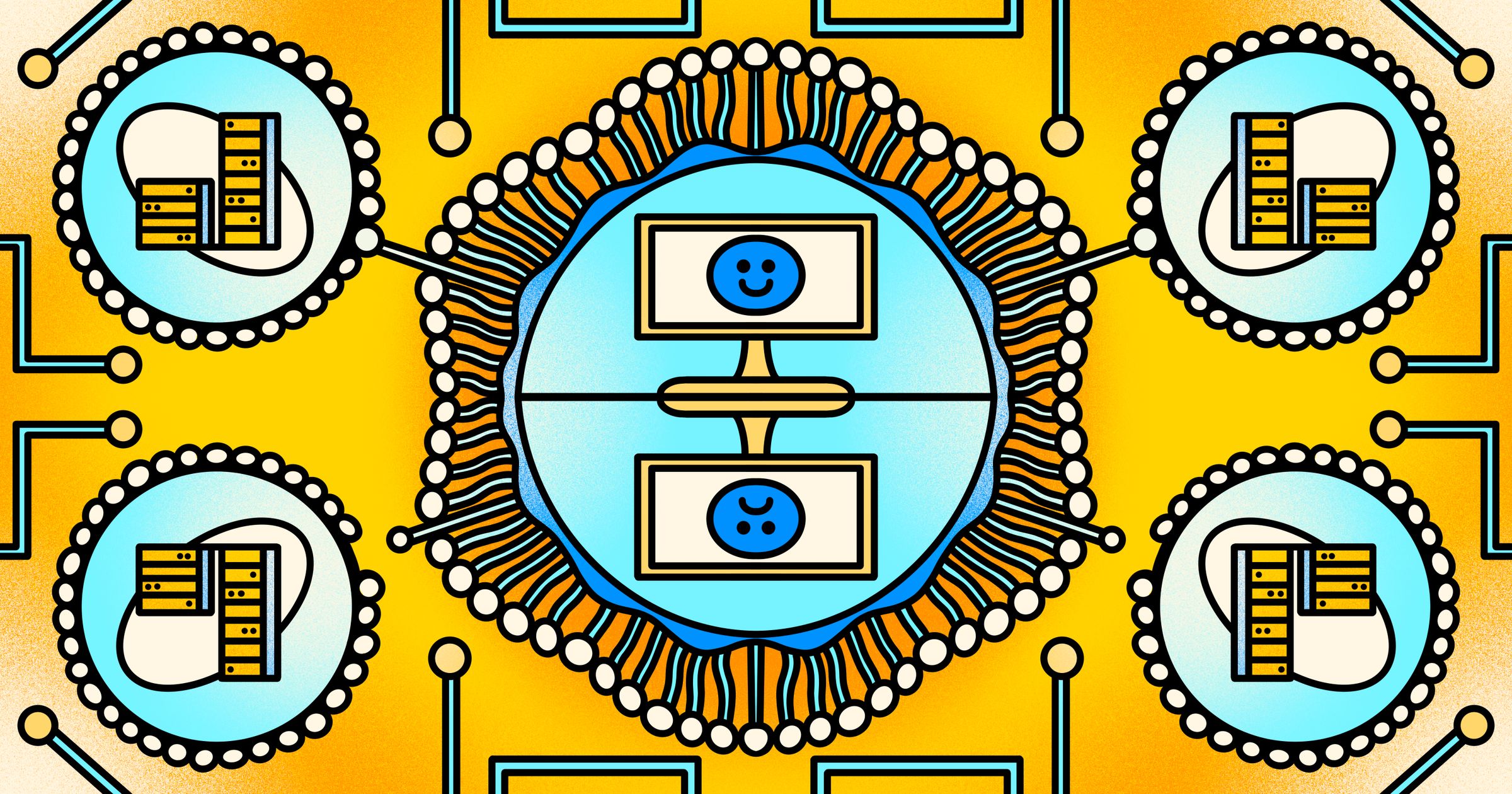

The False Dichotomy of Monolith vs. Microservices

Sure, microservices are more difficult to work with than a monolith -- I’ll give
you that. But that argument doesn’t pan out once you’ve seen a microservices
architecture with good automation. Some of the most seamless and
easy-to-work-with systems I have ever used were microservices with good
automation. On the other hand, one of the most difficult projects I have worked
on was a large old monolith with little to no automation. We can’t assume we
will have a good time just because we choose monolith over microservices. Is the
fear of microservices a backlash to the hype? Yes, microservices have been
overhyped. No, microservices are not a silver bullet. Like all potential
solutions, they can’t be applied to every situation. When you apply any
architecture to the wrong problem (or worse, are forced to apply the wrong
architecture by management), then I can understand why you might passionately
hate that architecture. Is some of the fear from earlier days when microservices
were genuinely much more difficult?

The Software Testing Odyssey That You Need to Take

Let’s illustrate a practical scenario where a financial services company is
adding new transactional functionalities to its application. Its team uses
AI-powered test creation to transform its user stories and requirements into
functional test scripts. The AI uses natural language processing to analyze
descriptions of test requirements and convert them into executable scripts
that simulate user interactions within the banking application. During
testing, which is automated and runs at predefined times, a minor change to the
application’s UI layout occurs. This results in a number of tests failing as the
pre-existing automated tests cannot locate the updated element. This is where
AI-powered self-healing comes in. The AI algorithm, powered by classification
AI techniques, will inspect the failed tests meticulously and compare them
with previous test versions. Through this analysis, the AI identifies the UI
element change that caused the failures and autonomously updates the test
scripts with new locators for the changed UI elements.
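
To make the self-healing idea more tangible, here is a minimal sketch of the
matching step. It is not how any particular testing product works: instead of
the classification AI the excerpt mentions, it swaps in a plain string
similarity heuristic from Python's standard library, and the element
attributes, function names, and threshold are all hypothetical.

```python
from difflib import SequenceMatcher


def similarity(old: dict, candidate: dict) -> float:
    """Average string similarity over the attributes recorded for the old element."""
    scores = [
        SequenceMatcher(None, str(value), str(candidate.get(key, ""))).ratio()
        for key, value in old.items()
    ]
    return sum(scores) / len(scores) if scores else 0.0


def heal_locator(old: dict, page_elements: list, threshold: float = 0.6):
    """Return the page element most similar to the old one, or None if none is close enough."""
    best = max(page_elements, key=lambda el: similarity(old, el), default=None)
    if best is None or similarity(old, best) < threshold:
        return None
    return best


# Hypothetical snapshot of the element the old test script targeted.
old_button = {"id": "submit-transfer", "tag": "button", "text": "Transfer funds"}

# Hypothetical elements found on the page after the layout change.
new_page = [
    {"id": "cancel-btn", "tag": "button", "text": "Cancel"},
    {"id": "btn-submit-transfer", "tag": "button", "text": "Transfer funds"},
    {"id": "amount", "tag": "input", "text": ""},
]

healed = heal_locator(old_button, new_page)
print(healed["id"] if healed else "no confident match; flag for human review")
```

The threshold matters: when nothing on the page resembles the old element, the
sketch returns `None` so the failure can be escalated rather than silently
"healed" onto the wrong element.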

3 Ways of Protecting Your Public Cloud Against DDoS Attacks

While basic DDoS protection offered by CSPs is free, more advanced or
comprehensive protection options come with additional costs. You will need to
pay a monthly fee for each account or resource, and if you need more visibility
into the traffic, you must turn on and pay for an additional service. These
additional charges add up quickly and turn out to be quite expensive. Best for:
All in all, the native DDoS protection offered by cloud service providers
offers basic protection which provides good coverage for most network-layer
attacks. This will be good for those looking for cheap, no-hassle, integrated
protection with low latency. ... Third-party DDoS mitigation services are best
for organizations looking for dedicated, advanced DDoS protection, particularly
of mission-critical applications. They are also suitable for organizations that
are frequently attacked and need constant, high-grade protection. In summary,
DDoS protection is a fundamental component of cybersecurity in public cloud
environments.

What is data security posture management?

As defined by Gartner, “data security posture management (DSPM) provides
visibility as to where sensitive data is, who has access to that data, how it
has been used and what the security posture of the data stored or application
is.” The DSPM approach aims to help organizations in three ways to improve
their security posture: cloud data visibility, cloud data movement and cloud
data protection. Cloud data visibility: Discover shadow data rapidly expanding
in the cloud with autonomous data discovery. This capability provides a
powerful and frictionless way to find data that sprawls within cloud service
providers and Software-as-a-Service (SaaS) apps. Understanding where your data
resides helps to shrink your attack surface and reduce data risks. Cloud data
movement: Analyze potential and actual data flows across the cloud.
Identifying where and how data moves will help provide clarity on which data
access controls and policies can best prevent vulnerabilities and
misconfigurations. Cloud data protection: Uncover vulnerabilities in data and
compliance controls and posture.

Harnessing Conflict To Create An Ideal Company Culture

The ideal work environment encourages open communication and provides
psychological safety for team members to share their views and opinions in a
respectful way. Cultivating this type of workplace takes time, practice and
training. Effective communication is a skill that not all employees are
taught, especially when it comes to expressing dissent or differing points of
view. Occasional training, coaching sessions and/or other materials may be
necessary to teach team members how to communicate respectfully. Courses can
walk through theoretical conversations and provide practical tips on how to
thoughtfully explain one’s point of view without giving offense or personally
attacking those who see things differently. Coaching sessions could also be a
valuable resource so that teammates can have a person available to help them
evaluate real-life scenarios that they may encounter. Often business coaching
can include role-play in those scenarios, which allows people to practice their
new skills. Successful leaders acknowledge and appreciate a diversity of
voices, even the dissenters, in their company culture.

The House of Data and Data Stewardship with Dr. James Barker

“The House of Data is loosely based on Toyota’s House of Quality, which was a
hot topic when I got my master’s degree,” Barker began. “When I was at
Honeywell getting their first data governance council going, we had a diagram
that included things such as master data management (MDM), data quality,
standards, and enforcement as part of it, but it really wasn’t resonating with
people. Then I saw an example of a pillar diagram at a conference and took it
back to my team to apply to our work.” The original House of Data diagram had
four pillars -- data quality, data security, MDM, and compliance -- with data
architecture as the floor and the governance council itself as the roof. ...
At the primary level, you have your lead data stewards working together to
keep things moving forward, whether aligned around a specific function (such
as finance or manufacturing) or around a line of business. This type of
council works best at large organizations, includes a mix of LOB and
functional representation, and often meets weekly to stay up to date on what’s
working and what’s not.

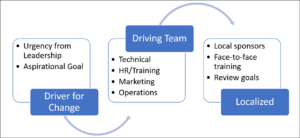

Why IT and Cybersecurity Need Apprenticeships Now More Than Ever

From the apprentice’s perspective, this pathway promises numerous benefits:
acquisition of in-demand skills, paid learning opportunities, valuable
field-specific experience, and networking avenues with potential employers.
Often, apprenticeships culminate in full-time job offers, presenting a clear
trajectory for career advancement. Businesses, in parallel, stand to gain
significantly. Through apprenticeships, they can nurture a workforce tailored
to their unique needs, potentially reducing turnover, diversifying their
teams, and boosting overall morale and productivity. However, hiring
apprentices is not the slam-dunk some government agencies make it out to be.
Although companies that register an apprenticeship program can be reimbursed
for training costs, participation has drawbacks. The application process is
time-consuming, and most states require an Apprenticeship Governance Board to
approve or reject an application. While this process admirably preserves rigor
in programs, it could be streamlined. After successful registration, there
are compliance steps, related training and instruction, and mentor
assignments.

Quote for the day:

“Identify your problems but give your power and energy to solutions.” -- Tony Robbins