Quote for the day:

“Rarely have I seen a situation where doing less than the other guy is a good strategy.” -- Jimmy Spithill

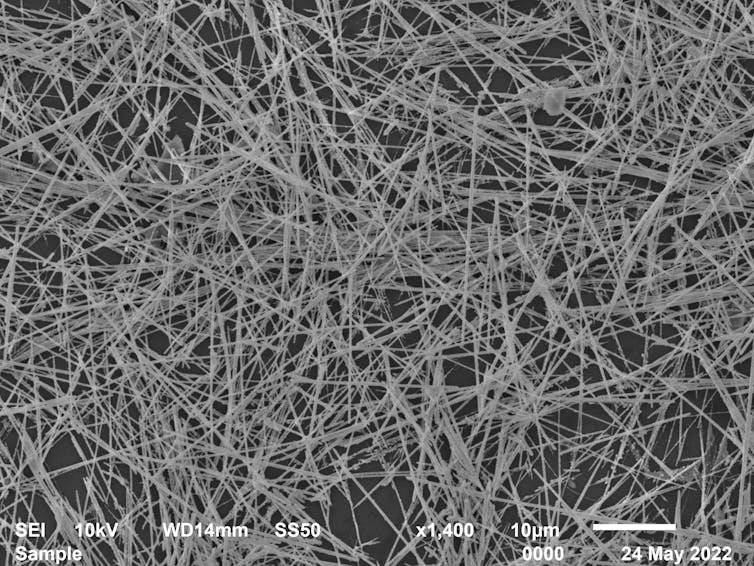

CyberArk: Rise in Machine Identities Poses New Risks

The CyberArk report outlines the substantial business consequences of failing to protect machine identities, leaving organizations vulnerable to costly outages and breaches. Seventy-two percent of organizations experienced at least one certificate-related outage over the past year - a sharp increase compared to prior years. Additionally, 50% reported security incidents or breaches stemming from compromised machine identities. Companies that have experienced non-human identity security breaches include xAI, Uber, Schneider Electric, Cloudflare and BeyondTrust, among others. "Machine identities of all kinds will continue to skyrocket over the next year, bringing not only greater complexity but also increased risks," said Kurt Sand, general manager of machine identity security at CyberArk. "Cybercriminals are increasingly targeting machine identities - from API keys to code-signing certificates - to exploit vulnerabilities, compromise systems and disrupt critical infrastructure, leaving even the most advanced businesses dangerously exposed." ... Fifty percent of security leaders reported security incidents or breaches linked to compromised machine identities in the previous year. These incidents led to delays in application launches for 51% of companies, customer-impacting outages for 44% and unauthorized access to sensitive systems for 43%.

What Can Businesses Do About Ethical Dilemmas Posed by AI?

Digital discrimination is a product of bias incorporated into AI algorithms at various stages of development and deployment. The biases mainly result from the data used to train the large language models (LLMs). If the data reflects previous inequities or underrepresents certain social groups, the algorithm has the potential to learn and perpetuate those inequities. Biases may occasionally culminate in contextual abuse when an algorithm is used beyond the environment or audience for which it was intended or trained. Such a mismatch may result in poor predictions, misclassifications, or unfair treatment of particular groups. Lack of monitoring and transparency merely adds to the problem. In the absence of oversight, biased results are not discovered. ... Human-in-the-loop systems allow intervention in real time whenever AI acts unjustly or unexpectedly, thus minimizing potential harm and reinforcing trust. Human judgment makes choices more inclusive and socially sensitive by including cultural, emotional, or situational elements, which AI lacks. When humans remain in the loop of decision-making, accountability is shared and traceable. This removes ethical blind spots and holds users accountable for consequences.

Beyond the hype: AI disruption in India’s legal practice

The competitive dynamics are stark. When AI can complete a ten-hour task in two hours, firms face a pricing paradox: how to maintain profitability while passing efficiency gains to clients? Traditional hourly billing models become unsustainable when the underlying time economics change dramatically. ... Effective AI integration hinges on a strong technological foundation, encompassing secure data architecture, advanced cybersecurity measures and seamless interoperability between systems and existing platforms. SAM’s centralised Harvey AI approach and CAM’s multi-tool strategy both imply significant investment in these backend capabilities. ... Merely automating existing workflows fails to leverage AI’s transformative potential. To unlock AI’s full transformative value, firms must rethink their legal processes – streamlining tasks, reallocating human resources to higher-order functions and embedding AI at the core of decision-making processes and document production cycles. ... AI enables alternative service models that go beyond the billable hour. Firms that rethink how they price, say by offering subscription-based or outcome-driven services, and position themselves as strategic partners rather than task executors, will be best positioned to capture long-term client value in an AI-first legal economy.

‘Chronodebt’: The lose/lose situation few CIOs can escape

One needn’t be an expert in the field of technical architecture to know that basing a capability as essential as air traffic control on such obviously obsolete technology is a bad idea. Someone should lose their job over this. And yet, nobody has lost their job over this, nor should they have. That’s because the root cause of the FAA’s woes - poor chronodebt management, in case you haven’t been paying attention - is a discipline that’s rarely tracked by reliable metrics and almost-as-rarely budgeted for. Metrics first: While the discipline of IT project estimation is far from reliable, it’s good enough to be useful in estimating chronodebt’s remediation costs - in the FAA’s case, what it would have to spend to fix or replace its integrations and the integration platforms on which those integrations rely. That’s good enough, with no need for precision. Those running the FAA for all these years could, that is, estimate the cost of replacing the programs used to export and update its repositories, and replacing the 3½-inch diskettes and paper strips on which they rely. But, telling you what you already know, good business decisions are based not just on estimated costs, but on benefits netted against those costs. The problem with chronodebt is that there are no clear and obvious ways to quantify the benefits to be had by reducing it.

Can System Initiative fix devops?

System Initiative turns traditional devops on its head. It translates what would normally be infrastructure configuration code into data, creating digital twins that model the infrastructure. Actions like restarting servers or running complex deployments are expressed as functions, then chained together in a dynamic, graphical UI. A living diagram of your infrastructure refreshes with your changes. Digital twins allow the system to automatically infer workflows and changes of state. “We’re modeling the world as it is,” says Jacob. For example, when you connect a Docker container to a new Amazon Elastic Container Service instance, System Initiative recognizes the relationship and updates the model accordingly. Developers can turn workflows - like deploying a container on AWS - into reusable models with just a few clicks, improving speed. The GUI-driven platform auto-generates API calls to cloud infrastructure under the hood. ... An abstraction like System Initiative could embrace this flexibility while bringing uniformity to how infrastructure is modeled and operated across clouds. The multicloud implications are especially intriguing, given the rise in adoption of multiple clouds and the scarcity of strong cross-cloud management tools. A visual model of the environment makes it easier for devops teams to collaborate based on a shared understanding, says Jacob, removing bottlenecks, speeding feedback loops, and accelerating time to value.
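To make the "infrastructure as data, actions as functions" idea in the excerpt above more concrete, here is a minimal Python sketch. It is not System Initiative's API: the `Component` and `Model` classes, the `plan_deploy` and `plan_restart` functions, and the rule that a service inherits the image of a connected container are all hypothetical, chosen only to illustrate how a model can update itself when two components are connected and how actions can be chained over it.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Component:
    """Hypothetical 'digital twin': infrastructure state held as plain data."""
    name: str
    kind: str
    props: Dict[str, str] = field(default_factory=dict)
    links: List[str] = field(default_factory=list)


class Model:
    """In-memory model of components and the connections between them."""

    def __init__(self) -> None:
        self.components: Dict[str, Component] = {}

    def add(self, component: Component) -> Component:
        self.components[component.name] = component
        return component

    def connect(self, source: str, target: str) -> None:
        """Record a relationship and propagate data along it."""
        src, dst = self.components[source], self.components[target]
        src.links.append(target)
        # Illustrative inference rule (an assumption, not a real product rule):
        # a service learns the image of any container connected to it.
        if src.kind == "container" and dst.kind == "service":
            dst.props["image"] = src.props["image"]


# Actions are ordinary functions over the model, so they can be chained.
Action = Callable[[Model], List[str]]


def plan_deploy(model: Model) -> List[str]:
    """Return simulated API calls that would deploy each configured service."""
    return [
        f"deploy {c.name} image={c.props['image']}"
        for c in model.components.values()
        if c.kind == "service" and "image" in c.props
    ]


def plan_restart(model: Model) -> List[str]:
    """Return simulated API calls that would restart every service."""
    return [
        f"restart {c.name}"
        for c in model.components.values()
        if c.kind == "service"
    ]


def run(model: Model, actions: List[Action]) -> List[str]:
    """Chain actions in order and collect the calls they generate."""
    calls: List[str] = []
    for action in actions:
        calls.extend(action(model))
    return calls


model = Model()
model.add(Component("web", "container", {"image": "nginx:1.27"}))
model.add(Component("web-svc", "service"))
model.connect("web", "web-svc")  # the model updates itself on connection
calls = run(model, [plan_deploy, plan_restart])
print(calls)
```

Because the state lives in data rather than in configuration code, the same model can feed a diagram, a validation step, or a call generator; the sketch only prints the calls it would make and touches no real cloud API.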

An exodus evolves: The new digital infrastructure market

Regulatory pressures have crystallised around concerns over reliance on a small number of US-based cloud providers. With some hyperscalers openly admitting that they cannot guarantee data stays within a jurisdiction during transfer, other types of infrastructure make it easier to maintain compliance with UK and EU regulations. This is a clear strategy to avoid future financial and reputational damage. ... 2025 is a pivotal year for digital infrastructure. Public cloud will remain an essential part of the IT landscape. But the future of data strategy lies in making informed, strategic decisions, leveraging the right mix of infrastructure solutions for specific workloads and business needs. As part of our research, we assessed the shape of this hybrid market. ... With one eye to the future, UK-based cloud providers must be positioned as a strategic advantage, offering benefits such as data sovereignty, regulatory compliance, and reduced latency. Businesses will need to situate themselves ever more precisely on the spectrum of digital infrastructure. Their location will reflect how they embrace a hybrid model that balances public cloud, private cloud, colocation and on-premise options. This approach will not only optimise performance and costs but also provide long-term resilience in an evolving digital economy.

How Trump's Cyber Cuts Dismantle Federal Information Sharing

"The budget cuts, personnel reductions and other policy changes have decreased the volume and frequency of CISA's information sharing activities in both formal and informal channels," Daniel told ISMG. While sector-specific ISACs still share information, threat sharing efforts tied to federal funding - such as the Multi-State ISAC, which supports state and local governments - "have been negatively affected," he said. One former CISA staffer who recently accepted the administration's deferred resignation offer told ISMG the agency's information-sharing efforts "were among the first to take a hit" from the administration's cuts, with many feeling pressured into silence. ... Analysts have also warned that cuts to cyber staff across federal agencies and risks to initiatives including the National Vulnerability Database and Common Vulnerabilities and Exposures program could harm cybersecurity far beyond U.S. borders. The CVE program is dealing with backlogs and a recent threat to shut down funding over a federal contracting issue. Failure of the CVE Program "would have wide impacts on vulnerability management efficiency and effectiveness globally," said John Banghart, senior director for cybersecurity services at Venable and a key architect of the Obama administration's cybersecurity policy as a former director for federal cybersecurity for the National Security Council.

Securing vehicles as they become platforms for code and data

Recently, security researchers have demonstrated real-world attacks against connected cars, such as wireless brake manipulation on heavy trucks by spoofing J-bus diagnostic packets. Another very recent example is the successful attacks against autonomous car LIDAR systems. As EVs and advanced cars become more pervasive across our society, we expect these types of attacks and methods to continue to grow in complexity. That makes a continuous, real-time approach to securing the entire ecosystem (from charger, to car, to driver) even more important. ... Over-the-air (OTA) update hijacking is very real and often enabled by poor security design, such as lack of encryption, improper authentication between the car and backend, and lack of integrity or checksum validation. Attack vectors that the traditional computer industry has dealt with for years are now becoming a harsh reality in the automotive sector. Luckily, many of the same approaches used to mitigate these risks in IT can also apply here ... When we look at just the automobile, we have a variety of connected systems that typically all come from different manufacturers (Android Automotive or QNX, for example), which increases the potential for supply chain abuse. We also have devices that the driver introduces, which interact with the car’s APIs, creating new entry points for attackers.

Strategizing with AI: How leaders can upgrade strategic planning with multi-agent platforms

Building resiliency and optionality into a strategic plan challenges humans’ cognitive (and financial) bandwidth. The seemingly endless array of future scenarios, coupled with our own human biases, conspires to anchor our understanding of the future in what we’ve seen in the past. Generative AI (GenAI) can help overcome this common organizational tendency for entrenched thinking, and mitigate the challenges of being human, while exploiting LLMs’ creativity as well as their ability to mirror human behavioral patterns. ... In fact, our argument reflects our own experience using a multi-agent LLM simulation platform built by the BCG Henderson Institute. We’ve used this platform to mirror actual war games and scenario planning sessions we’ve led with clients in the past. As we’ve seen firsthand, what makes an LLM multi-agent simulation so powerful is the possibility of exploiting two unique features of GenAI: its anthropomorphism, or ability to mimic human behavior, and its stochasticity, or creativity. LLMs can role-play in remarkably human-like fashion: Research by Stanford and Google published earlier this year suggests that LLMs are able to simulate individual personalities closely enough to respond to certain types of surveys 85% as accurately as the individuals themselves.

The Network Challenges of IoT Integration

IoT interoperability and compatible security protocols are a particular challenge. Although NIST and ISO, among other organizations, have issued IoT standards, smaller IoT manufacturers don't always have the resources to follow their guidance. This becomes a network problem because companies have to retool these IoT devices before they can be used on their enterprise networks. Moreover, because many IoT gadgets are delivered with default security settings that are easy to undo, each device has to be hand-configured to ensure it meets company security standards. To avoid potential interoperability pitfalls, network staff should evaluate prospective technology before anything is purchased. ... First, to achieve high QoS, every data pipeline on the network must be analyzed -- as well as every single system, application and network device. Once assessed, each component must be hand-calibrated to run at the highest performance levels possible. This is a detailed and specialized job. Most network staff don't have trained QoS technicians on board, so they must seek outside help. Second, which areas of the business get maximum QoS, and which don't? A medical clinic, for example, requires high QoS to support a telehealth application where doctors and patients communicate.
