New Year’s resolutions for cloud pros

We live in a time when cloud skills are defined by specialization. People aren’t

just cloud database experts; they are experts on a specific cloud database on a

specific cloud provider. The same can be said for cloud-based business

intelligence, a specific SaaS provider, or cloud operations focused on a

specific OS configuration. We seem to fall into niches. This limits your options

if your specific cloud technology becomes less popular. It’s better to have a

skill waiting in the wings than to learn one at the last minute. Look at job

sites to see what skills are most in demand that are somewhat related to your

current skills and obtain the basic chops that will allow you to talk your way

into a new gig if needed. For instance, if you’re focused just on a single cloud

object database, perhaps learn about one or two other object databases on

another cloud provider. This should be a relatively easy transition given that

the concepts are much the same. You can diversify even more, such as

learning about cloud-native development if you’re currently a cloud developer.

The one real problem with synthetic media

Synthetic media promises a very near future in which advertisements are custom

generated for each customer, super realistic AI customer service agents answer

the phone even at small and medium-sized companies, and all marketing,

advertising and business imagery is generated by AI, rather than human

photographers and graphics people. The technology promises AI that writes

software, handles SEO, and posts on social media without human intervention.

Great, right? The trouble is that few are thinking about the legal

ramifications. Let’s say you want your company’s leadership to be presented on

an “About Us” page on your website. Companies now are pumping existing selfies

into an AI tool, choosing a style, then generating fake photos that all look

like photos taken in the same studio with the same lighting, or painted by the

same artist with the same style and palette of colors. But the styles are often

“learned” by the AI by processing (in legal terms) the intellectual property

of specific photographers or artists.

The Curious Case of Linux: It’s for Everyone, but Nobody Uses it

There are three main reasons that users shy away from using Linux. The first

is the perceived unintuitiveness of the OS, which is the biggest fear of new

users. The second is the lack of support for applications, games, and devices

– a problem that has plagued Linux forever. The third, and most questionable,

is the toxic fanbase associated with the operating system, which commonly

undermines the efforts of newcomers to the ecosystem. Command line interface

nightmares are the most-quoted reason newcomers give for avoiding the ecosystem. In

addition to this, software developers rarely optimise applications for use in

Linux, making compatibility a nightmare for creators and power users. To

combat this, the community has come up with distros that inherently require

less technical know-how than others. One of the best examples of this is

Pop!_OS. ... Another major problem that average users have with Linux is not

only the lack of software but also the lack of support for games.

Building Security Champions

A Security Champion is a team member who takes on the responsibility of

acting as the primary advocate for security within the team and acting as the

first line of defense for security issues within the team. Or, more plainly:

The person who is most excited about security on a team. They want to read the

book, fix the bug, or ask security questions. Every time. Security champions

are your communicators. They deliver security messages to each dev team,

teaching, sharing, and helping. They are your point of contact, delivering

messages to and from the security team and keeping you up to date on what

matters to your team. They are your advocate. They perform security work, for

their dev team, with your help. They also advocate for security, asking

questions in situations you would have been left out of. Raising concerns you

might have missed. They are a peer for everyone on their team and can

influence in ways that you yourself cannot. In the next few paragraphs, we

will cover how to build an amazing security champions program!

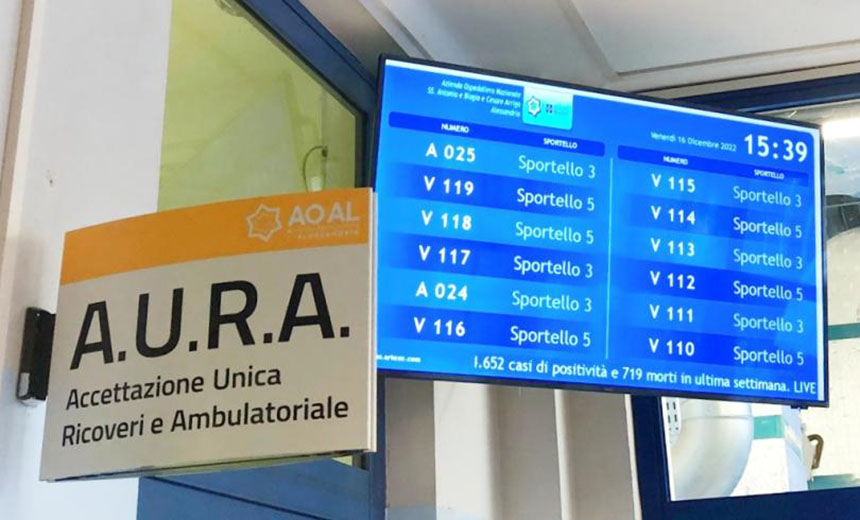

Italian Healthcare Group Targeted in Data-Leaking Shakedown

The criminals claim they reached out directly to hospital staff: "We has

also ask some of employees during phone calls about the incident but they

answered that they didn't heard about any breach. So, they were asked to

review the evidence in Live Chat and we have repeatedly tried to make it

clear that hundreds of thousands of personal data have been compromised due

to their negligence." The criminals add: "Our advise is to replace the

entire IT staff and have them undergo proficiency tests and check them for

budget wasting as well." Take all such posturing and self-serving

announcements with a big grain of salt, says Brett Callow, a threat analyst

at security firm Emsisoft who closely tracks ransomware groups' activities.

... "Why do they do this? It's all about PR and branding. They think that

organizations may be less likely to want to hand money to the type of evil

criminals who are happy to put lives at risk by carrying out financially

motivated attacks on hospitals."

Workplace Trends You Need to Know for 2023

As we near the end of 2022, a shift is happening — for the better. The U.S.

Surgeon General reported that 71% of employees believe their employer is

more concerned about their mental health and wellbeing than ever before.

This is a huge step forward and one we must grasp and run with. In response,

the U.S. Surgeon General released a framework that aims to support

workplaces in improving the mental health and wellbeing of their

employees. This includes ensuring there is an opportunity for growth,

valuing employee contributions, enhancing social connections in the

workplace and focusing on achieving better work-life integration. We're

likely to see more mental wellbeing initiatives and strategies employed

across businesses that deliver meaningful and practical help to their

employees — from self-care days off once a month to increased wellbeing

benefits, mental health first aid training and even adaptations to the

workplace.

US Congress funds cybersecurity initiatives in FY2023 spending bill

The bill stipulates that no government agency may use their funds to buy

telecom equipment from Chinese tech giants Huawei or ZTE for “high or

moderate impact information systems,” as determined by the National

Institute of Standards and Technology (NIST). It further states that

agencies cannot use any of their funds for technology, including

biotechnology, digital, telecommunications, and cyber, developed by the

People’s Republic of China unless the secretary of state, in consultation

with the USAID administrator and the heads of other federal agencies, as

appropriate, determines that such use does not adversely impact the national

security of the United States. Moreover, no agency can spend funds on

entities owned, directed, or subsidized by China, Iran, North Korea, or

Russia unless the FBI or other appropriate federal entity has assessed any

risk of cyber espionage or sabotage associated with acquisitions from these

entities. ... Finally, the bill amends the Federal Food, Drug, and Cosmetic

Act to make medical device makers meet specific cybersecurity

standards.

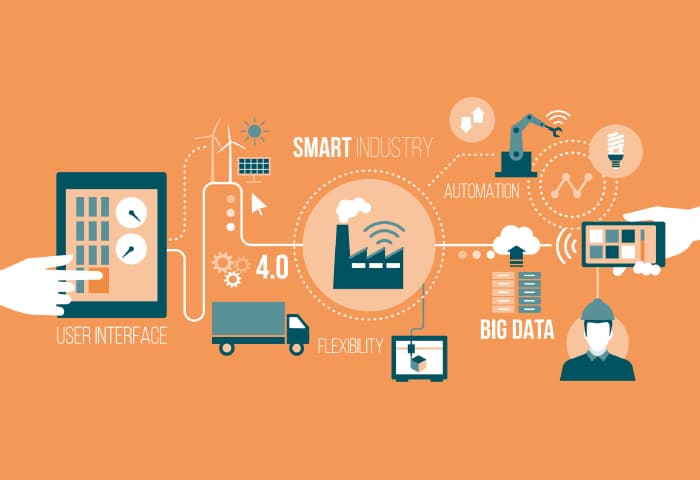

Cloud Adoption Plans Accelerate, Highlighting Need for Qualified IT

As organizations transition to providing digital solutions in a digital

workplace, public, multi, and hybrid cloud adoption is on the rise. Farid

Roshan, global head of digital enablement practice at Altimetrik, says the

traditional data center mindset leads to high sunk costs for procuring

appliances and difficulty in attaining talent to support data center

maintenance activities. “Organizations lose precious time and energy

focusing on managing infrastructure vs. building products that bring value

to their customers,” he says. From his perspective, public cloud platforms

provide IT teams the ability to focus on creating innovative solutions and

attracting highly skilled talent to develop products that drive business

growth, while reducing overall IT cost of ownership. Roshan adds that cloud

adoption can lead to unexpected delays and failure in transforming

organizations if the cloud strategy is not well understood across the

organization. “Understanding the goals for moving to the cloud as well as

implementing an executive cloud strategy, defining a roadmap and OKRs, will

allow for business and IT groups to align their annual and quarterly goals,”

he says.

What is the role of the data manager?

The data manager’s function is essentially to oversee the value chain and

ensure data is delivered effectively, says Carruthers. “This means helping

create data which is accessible, usable and safe. Information can then be

delivered to the right place and in a good condition so it can be used in

the most effective way possible.” Carruthers compares the role of data

manager to the conductor in an orchestra. “The manager is there to oversee

the whole data team, rather than frantically trying to play every instrument

themselves. As the orchestra analogy suggests, it is a data manager’s role

to ensure the song sheet is followed by every team member. This means

managing the use of data to ensure it goes through the correct value chain.”

The data manager role is not just about being “good with data”. It involves

a combination of technical and interpersonal skills, says Andy Bell, vice

president of global data product management at data integrity specialist

Precisely. As well as technical skills, he says data managers need to have

“a thorough understanding about the application of technology”.

Cybercriminals create new methods to evade legacy DDoS defenses

Attackers will continue to make their mark in 2023 by trying to develop new

ways to evade legacy DDoS defenses. We saw carpet-bomb attacks rear their

heads in 2022 by leveraging the aggregate power of multiple small attacks,

designed specifically to circumvent legacy detect-and-redirect DDoS

protections or neutralize ‘black hole’ sacrifice-the-victim mitigation

tactics. This kind of cunning will be on display as DDoS attackers look for

new ways of wreaking havoc across the internet and attempt to outsmart

existing thinking around DDoS protection. In 2023, the cyberwarfare that we

have witnessed with the conflict in Ukraine will undoubtedly continue. DDoS

will continue to be a key weapon in the Ukrainian and other conflicts both to

paralyse key services and to drive political propaganda objectives. DDoS

attack numbers rose significantly after the Russian invasion in February 2022 and

DDoS continues to be used as an asymmetric weapon in the ongoing struggle.

Quote for the day:

"If you don't demonstrate leadership

character, your skills and your results will be discounted, if not

dismissed." -- Mark Miller