Prepping for 2023: What’s Ahead for Frontend Developers

WebAssembly will work alongside JavaScript, not replace it, Gardner said. If you

don’t know one of the languages used with WebAssembly, which acts as a compilation target,

Rust might be a good one to learn because it’s new and Gardner said it’s gaining

the most traction. Another route to explore: Blending JavaScript with

WebAssembly. “Rust to WebAssembly is one of the most mature paths because

there’s a lot of overlap between the communities, a lot of people are interested

in both Rust and WebAssembly at the same time,” he said. “Plus, it’s possible to

blend WebAssembly with JavaScript so it’s not an either-or situation

necessarily.” That in turn will yield new high-performing applications running

on the web and mobile, Gardner added. “You’re not going to see necessarily

a ‘Made with WebAssembly’ banner show up on websites, or anything along those

lines, but you are going to see some very high-performing applications running

on the web and then also on mobile, built off of WebAssembly,” he said. ...

“Organizations are trying to automate and improve their test automation, and

part of that shift to shipping faster means, you have to find ways to optimize

what you’re doing,” DeSanto said.

What is FinOps? Your guide to cloud cost management

“FinOps brings financial accountability — including financial control and

predictability — to the variable spend model of cloud,” says J.R. Storment,

executive director of the FinOps Foundation. “This is increasingly important as

cloud spending makes up ever more of IT budgets.” It also enables organizations

to make informed trade-offs between speed, cost, and quality in their cloud

architecture and investment decisions, Storment says. “And organizations get

maximum business value by helping engineering, finance, technology, and business

teams collaborate on data-driven spending decisions,” he says. Aside from

bringing together the key people who can help an organization gain better

control of its cloud spending, FinOps can help reduce cloud waste, which IDC

estimates at between 10% and 30% for organizations today. “Moving from show-back

cloud accounting, where IT still pays and budgets for cloud spending, to a

charge-back model, where individual departments are accountable for cloud

spending in their budget, is key to accelerating savings and ensuring only

necessary cloud projects are implemented,” Jensen says.

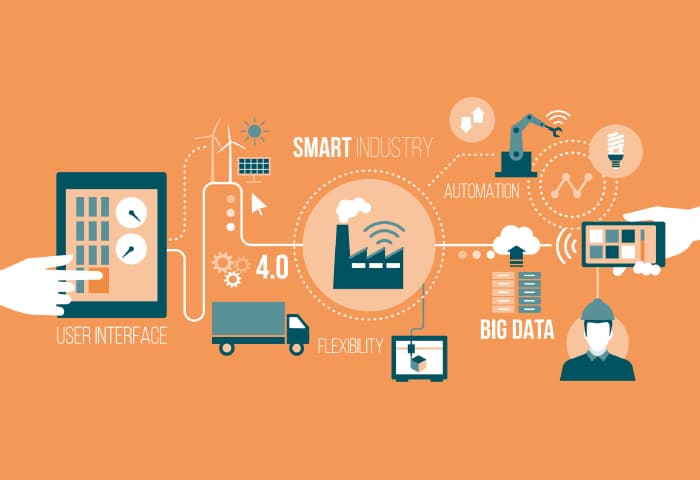

IoT Analytics: Making Sense of Big Data

The principles that guide enterprises in the way they approach IoT analytics

data are: Data is an asset: Data is an asset that has a specific and measurable

value for the enterprise; Data is shared: Data must be shared across the

enterprise and its business units. Users have access to the data that is

necessary to perform their activities; Data trustees: Each data element has

trustees accountable for data quality; Common vocabulary and data definitions:

Data definition is consistent, and the taxonomy is understandable throughout the

enterprise; Data security: Data must be protected from unauthorised users and

disclosure; Data privacy: Privacy and data protection are considered throughout

the life cycle of a Big Data project. All data sharing conforms to the relevant

regulatory and business requirements; and Data integrity and the

transparency of processes: Each party to a Big Data analytics project must be

aware of and abide by their responsibilities regarding the provision of source

data and the obligation to establish and maintain adequate controls over the use

of personal or other sensitive data.

Reframing our understanding of remote work

The remote and hybrid work trend is the most disruptive change in how businesses

work since the introduction of the personal computer and mobile devices. Then,

like now, the conversation was lost in the weeds. Should we allow PCs? Should we

allow employees to bring their own devices? Should we issue pagers, feature

phones, then smartphones to employees or let them use their own? In hindsight,

it's clear that all these concerns were utterly pointless. The PC revolution was

a tsunami of certainty that would wash away old ways of doing everything. So the

only question should have been: How do we ensure these devices are empowering,

secure, and usable? All focus should have been on the massive learning curve by

organizations (what's the best way to deploy, update, secure, provision,

purchase, and network these devices for maximum benefit) and by end users. In

other words, while everyone gnashed their teeth over whether to allow devices —

or what kind or level of devices to allow — the energy could have been much

better spent realizing the entire issue was about skills and knowledge.

Developing Successful Data Products at Regions Bank

Misra said that there are a few especially important components involved in the

success of the data product partner role and the discipline of product

management for analytics and AI initiatives. One is to ensure that the partner

role is strategic, proactive, and focused on critical business needs, and not

simply an on-demand service within the company. All data products should address

a critical business priority for partners and, when deployed, should deliver

substantial incremental value to the business. The teams that work on the

products should employ agile methods and include data scientists, data managers,

data visualization experts, user interface designers, and platform and

infrastructure developers. Misra is a fan of software engineering disciplines —

systematic techniques for the analysis, design, implementation, testing, and

maintenance of software programs — and believes that they should be employed in

data science and data products as well. This product orientation also requires

that there’s a big-picture focus, not just by the data product partners but by

everyone on the product development teams.

Amplified security trends to watch out for in 2023

Cybercriminals target employees across different industries to surreptitiously

recruit them as insiders, offering them financial enticements to hand over

company credentials and access to systems where sensitive information is stored.

This approach isn’t new, but it is gaining popularity. A decentralized work

environment makes it easier for criminals to target employees through private

social channels, as the employee does not feel that they are being watched as

closely as they would in a busy office setting. Aside from monitoring user

behavior and threat patterns, it’s important to be aware of and be sensitive

about the conditions that could make employees vulnerable to this kind of

outreach – for example, the announcement of a massive corporate restructuring or

a round of layoffs. Not every employee affected by a restructuring suddenly

becomes a bad guy, but security leaders should work with Human Resources or

People Operations and people managers to make them aware of this type of

criminal scheme, so that they can take the necessary steps to offer support to

employees who could be affected by such organizational or personal

matters.

What is the Best Cloud Strategy for Cost Optimization?

More often than not, some resources are underutilized. This usually stems from

overbudgeting for certain processes. For instance, a cloud computing instance

may be underutilized to the point that it uses less than 5% of its CPU. Note

that with cloud services, you pay for the storage and computing power, rather

than the space. In the instance highlighted above, it’s clear that there’s a

case of significant waste. In your bid to optimize costs, it’s best to

identify these idle instances and consolidate the workload into fewer cloud

instances. It can be difficult to understand how much power the system uses

without adequate visualization. Heat maps are highly useful in cloud cost

optimization. This kind of visualization highlights the high and low points of

computing demand and consumption. This data can be useful in establishing

stop and start times for cost reduction. Visual tools like heat maps can help

you identify clogged-up sections before they become problematic. When a system

load becomes one-directional, you know it’s time to adjust and balance it

before it disrupts your processes.
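
As a rough illustration of the two ideas above (spotting idle instances and finding low-demand hours), here is a minimal Python sketch. The sample data, instance names, and function names are hypothetical and not taken from any cloud provider's API; the 5% threshold simply mirrors the example in the text. Real utilization figures would come from your provider's monitoring service.

```python
from collections import defaultdict
from statistics import mean

# Percent CPU; mirrors the "less than 5%" example in the text (an assumption,
# not a recommended value).
IDLE_CPU_THRESHOLD = 5.0


def find_idle_instances(samples, threshold=IDLE_CPU_THRESHOLD):
    """Return sorted instance IDs whose mean CPU utilization is below threshold.

    samples: iterable of (instance_id, hour_of_day, cpu_percent) tuples.
    """
    by_instance = defaultdict(list)
    for instance_id, _hour, cpu in samples:
        by_instance[instance_id].append(cpu)
    return sorted(i for i, vals in by_instance.items() if mean(vals) < threshold)


def hourly_heat_map(samples):
    """Return {hour_of_day: mean CPU percent across all instances}.

    These per-hour averages are the raw numbers a heat map would color.
    """
    by_hour = defaultdict(list)
    for _instance_id, hour, cpu in samples:
        by_hour[hour].append(cpu)
    return {hour: mean(vals) for hour, vals in sorted(by_hour.items())}


# Hypothetical sample data, for illustration only.
SAMPLES = [
    ("web-1", 9, 62.0), ("web-1", 14, 71.0), ("web-1", 2, 8.0),
    ("batch-7", 9, 3.0), ("batch-7", 14, 4.5), ("batch-7", 2, 2.5),
]

if __name__ == "__main__":
    print("Idle instances:", find_idle_instances(SAMPLES))
    print("Mean CPU by hour:", hourly_heat_map(SAMPLES))
```

Instances flagged as idle are candidates for consolidation, and hours with consistently low averages are candidates for scheduled stop times. Both are starting points for review rather than automatic decisions.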

Server supply chain undergoes shift due to geopolitical risks

Adding to the motivation to exit China and Taiwan was the saber rattling and

increasingly bellicose tone from Beijing to Taiwan, along with fairly severe

sanctions on semiconductor sales from the US Department of Commerce. This

has led some US-based cloud service providers, such as Google, AWS, Meta, and

Microsoft, to look at adding server production lines outside Taiwan as a

precautionary measure, according to TrendForce. There have been a number of

other moves as well. In the US, Intel is spending $20 billion on an Arizona

fab and another $20 billion on fabs in Ohio. TSMC is spending $40 billion on

fabs in Arizona as well, and Apple is moving production to the US, Mexico,

India, and Vietnam. TrendForce also noted a phenomenon it calls

“fragmentation” as an emerging model in the management of the server supply

chain. It used to be that server production and the assembly process were

handled entirely by ODMs. In the future, the assembly task of a server project

will be given to not only an ODM partner but also a system integrator.

What’s the Difference Between Kubernetes and OpenShift?

Red Hat provides automated installation and upgrades for most common public

and private clouds, allowing you to update on your own schedule and without

disrupting operations. This process is perhaps one of the biggest

differentiations between OpenShift and the standard Kubernetes environment, as

it provides a runbook for updates and uses this to avoid disruption. If you’re

running a cluster of OpenShift servers, you will be able to upgrade while

applications continue to run, with OpenShift’s orchestration tools moving

nodes and containers as required. When it comes to managed on-premises

Kubernetes, OpenShift is perhaps best compared with Microsoft’s Azure Arc

tooling, which brings Azure’s managed Kubernetes to on-premises, using the

Azure Portal as a management tool, or VMware’s Tanzu. They are all based on

certified Kubernetes, adding their own management tooling and access control.

OpenShift is more a sign of Kubernetes’ importance to enterprise application

development than anything else.

CISO Budget Constraints Drive Consolidation of Security Tools

Piyush Pandey, CEO at Pathlock, a provider of unified access orchestration,

says budget constraints will affect not only solution purchases but also

potentially the staff required to run them. “This will likely drive the

consolidation of solutions that span across multiple organizations, such as

access, compliance, and security tools,” he says. “This consolidation into

platforms will help organizations prioritize their resources -- time, money,

and people.” He says organizations that focus on comprehensive solutions can

drive more synergies across different departments to be compliant. “This won't

just be about cost savings, however -- it will also help reduce the complexity

of their infrastructure, eliminating multiple standalone tools and solutions,”

Pandey adds. Mike Parkin, senior technical engineer at Vulcan Cyber, a

provider of SaaS for enterprise cyber risk remediation, explains the global

financial downturn has hit multiple sectors, which means budgets are short

overall. “The challenge will be keeping cybersecurity postures strong, even in

the face of budget cuts,” he says.

Quote for the day:

"Leadership development is a lifetime

journey, not a quick trip." -- John Maxwell
