5 Reasons Why IT Security Tools Don't Work For OT

While IT and OT both seek to ensure confidentiality (the protection of sensitive

data and assets), integrity (the fidelity of data over its lifecycle), and

availability (the accessibility and responsiveness of resources and

infrastructure), they prioritize different pieces of this CIA triad. IT's highest

priority is confidentiality. IT deals in data, and the stakeholders of IT

concern themselves with protecting that data — from trade secrets to the

personal information of users and customers. OT's highest priority is

availability. OT processes operate heavy-duty equipment in the physical realm,

and for them, availability means safety. Downtime is simply untenable when

shutting off a blast furnace or industrial boiler tank. For the sake of

availability and responsiveness, most OT components weren't built to accommodate

security implementations at all. ... Almost all IT-based tools require downtime

for installation, updates, and patching. These activities are generally a

non-starter for industrial environments, no matter how significant a

vulnerability may be. Again, downtime for OT systems means putting safety at

risk.

Oshkosh CIO Anu Khare on IT’s pursuit of value

VSP stands for value, strategic fit, and passionate sponsor. The framework ties

to my fundamental philosophy of letting cost, value, and the customer decide

what is valuable and what is not valuable for our customers. We didn’t start

with VSP, but it evolved as a guiding framework, as we looked at our portfolio

enablement process and asked ourselves, what’s the simplest way to approach

project portfolio management? First, we decided to focus on the value. We

started working with the business sponsors to articulate where and what impact

the technology will have on the business. We then validate with finance, and if

it has a hard savings, it gets No. 1 priority in terms of investment. The

relentless focus on value also leads to the second point, which is strategic

fit. The project may be valuable, but in any organization, the list of things

the organization can do is always bigger than what the organization can afford

or should afford. This is a capital allocation discussion. So we focus on the

strategic fit.

Cisco spotlights generative AI in security, collaboration

Security and IT administrators will be able to describe granular security

policies and the assistant will evaluate how to best implement them across

different aspects of their security infrastructure, Patel said. At the Live!

event, Cisco demoed how a generative Cisco Policy Assistant can reason with the

existing set of firewall policy rules to implement and simplify them within the

Cisco Secure Firewall Management Center. Cisco says it is the first of many

examples of how generative AI can reimagine policy management across the Cisco

Security Cloud. ... In addition, he said the security assistant will let

customers describe and contextualize events across email, the web, endpoints,

and the network to tell security operations center (SOC) analysts exactly what

happened, the impact, and best next steps to take to remediate problems and set

new policies. The SOC Assistant will provide a comprehensive situation analysis

for analysts, correlating intel across the Cisco Security Cloud, relaying

potential impacts, and providing recommended actions with the goal of reducing

the time needed for SOC teams to respond to potential threats, he said.

How WASM (and Rust) Unlocks the Mysteries of Quantum Computing

Rather than picking from fixed specs, quantum programming can require you to

define the setup of your quantum hardware, describing the quantum circuit that

will be formed by the qubits as well as the algorithm that will run on it —

and error-correcting the qubits while the job is running — with a language like

OpenQASM; that’s rather like controlling an FPGA with a hardware description

language like Verilog. You can’t measure a qubit to check for errors directly

while it’s working or you’d end the computation too soon, but you can measure an

extra qubit and extrapolate the state of the working qubit from that. What you

get is a pattern of measurements called a syndrome. In medicine, a syndrome is a

pattern of symptoms used to diagnose a complicated medical condition like

fibromyalgia. In quantum computing, you have to “diagnose” or decode qubit

errors from the pattern of measurements, using an algorithm that can also decide

what needs to be done to reverse the errors and stop the quantum information in

the qubits from decohering before the quantum computer finishes running the

program.
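
The decoding step described above can be illustrated with a small classical toy model. This is only a sketch under stated assumptions: it uses the three-bit repetition code for bit-flip errors, which the article does not name, and it replaces ancilla-qubit measurements with ordinary parity checks, so no quantum state is simulated. The names `SYNDROME_TABLE`, `encode`, `measure_syndrome`, and `decode` are hypothetical, chosen for illustration.

```python
# Classical toy model of the three-bit repetition code for bit-flip errors.
# Assumption: parity checks stand in for the "extra qubit" measurements;
# a real decoder works on quantum hardware and handles far richer error models.

# Map each syndrome (parity of bits 0 and 1, parity of bits 1 and 2)
# to the index of the bit that should be flipped back, or None if no error.
SYNDROME_TABLE = {
    (0, 0): None,
    (1, 0): 0,
    (1, 1): 1,
    (0, 1): 2,
}


def encode(bit):
    """Encode one logical bit as three identical physical bits."""
    return [bit, bit, bit]


def measure_syndrome(codeword):
    """Return the two parity checks without reading any data bit directly."""
    return (codeword[0] ^ codeword[1], codeword[1] ^ codeword[2])


def decode(codeword):
    """Diagnose a single bit-flip from the syndrome and reverse it."""
    syndrome = measure_syndrome(codeword)
    flipped = SYNDROME_TABLE[syndrome]
    corrected = list(codeword)
    if flipped is not None:
        corrected[flipped] ^= 1
    return corrected, syndrome


if __name__ == "__main__":
    noisy = encode(1)
    noisy[2] ^= 1  # inject a single bit-flip error on the third bit
    corrected, syndrome = decode(noisy)
    print(syndrome, corrected)
```

The lookup table plays the role of the decoding algorithm: the pattern of parity results identifies which bit went wrong without inspecting the encoded value itself. This toy version can only correct one flipped bit per codeword.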

Energy security needs a secure IoT

The IoT has a central role to play as governments and industries work to reduce

dependence on fossil fuels, establish new forms of energy generation and

implement sufficient means of storing, managing and distributing energy. ... IoT

connected devices and systems can contribute carbon tracking and smart-meter

energy monitoring; they can enable data exchange for microgrids and support

mechanisms for selling energy directly back into the network. These solutions

will transmit data so that energy companies can monitor devices and conditions,

control devices in remote locations, track performance to predict maintenance

cycles and act on alerts. They will be able to monitor energy consumption for

smart metering through connected meters and sensors for load balancing on the

grid. In this way, connectivity is part of the intelligent, efficient, renewable

energy model; however, it must be cybersecure. As new and additional devices are

deployed, they could present more pathways for potential cyberattacks. That is a

significant risk and safeguards are therefore needed to protect against

unauthorised access to devices, networks, management platforms and cloud

infrastructure.

How to Get Unstuck From Stress and Find Solutions Inside Yourself

The balance of sympathetic and parasympathetic states is critical both for our

well-being and for the cultivation of presence. Neither state is superior to the

other. They are opposite and equal in their importance. Both are needed to

dynamically maintain the homeostasis of the body. (Remember, a state of polarity

is the ability to go from one state to the other in alternation, as needed.) As

with any ecosystem, complementary forces are necessary to preserve harmony. The

trouble is that our regular thinking and doing in the world of business are

sympathetically activating. It is not possible to use only the mind to become

relaxed and restore balance to the nervous system. We need to counterbalance our

SNS (sympathetic nervous system) activation through feeling and being. This is a

whole new mode that many high-powered leaders are less familiar with and may not

entirely trust. The good news, however, is that when we are in a relaxed,

parasympathetic state, we can access the capabilities of our higher intelligence

that we need for presence and collaboration, such as visualization and

spontaneous generative creativity.

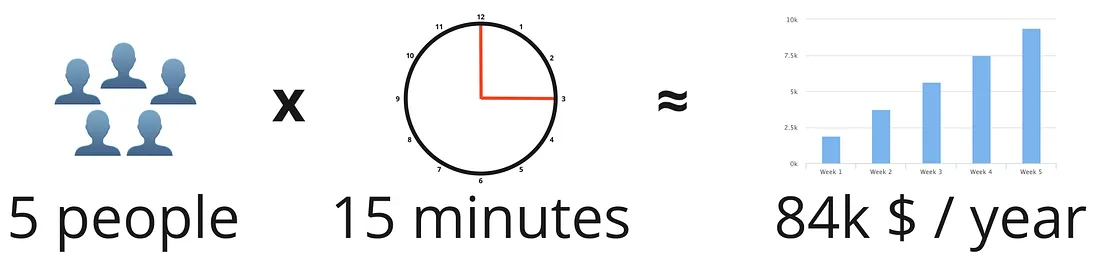

Daily Standups May Not Improve Your Team’s Agility

To make sure every team member gets the support they need, I highly recommend

holding a longer team meeting at least once per week, something we call “team

time”. This meeting should be 30–45 min long and ensure there is enough time to

really get to the bottom of a problem and find a solution. Every team member can

propose a topic and the team discusses it together. If there are no challenges

to discuss, this is also a great forum for other kinds of knowledge sharing. When

you are summing up these costs, you will be in a similar or even more expensive

range than daily standups, but those meetings are actually helpful since they

allow the team to solve problems and share knowledge and, with that, replace

other meetings and make work more efficient. The social aspect is something that

is rarely stated as a need for daily standups. But, for me, this is a

misconception. A healthy and social team will always be an efficient team.

Developing a proper team atmosphere and spirit should be key and in the interest

of everyone.

Everything Is Connected: Five IoT Trends Moving Forward

In what sounds like old news at this point, cybersecurity will continue to be at

the forefront of business decision making. What is different this year is the

rise of artificial intelligence (AI) and ML. AI and ML are making malicious

actors more efficient and potentially more effective when carrying out attacks.

Natural Language Models such as ChatGPT have opened new directions of attack as

well as lowering the overall threshold for creating effective malicious code.

Additionally, the changing legislative landscape around privacy will spur

companies to take a hard look at the way that they collect, use, and retain

sensitive personal data. This may require a complete redesign of products,

procedures, or in fact, entire business models. ... Finally, it is no secret

that the tech labor market is in a state of upheaval. Many companies are

reducing or restricting their workforces as they seek efficiency or profits.

This exodus of talented tech professionals has created severe knowledge gaps

that must be addressed.

API Management Is a Commodity: What’s Next?

As API management software unbundles the gateway and adapts to the multi-gateway

world, new and emerging software vendors are looking to fill the resulting

requirement gaps for API design and development, security, analytics, portals,

and marketplaces. Alex Walling, field CTO for Rapid, sees that developers

need a layer of abstraction on top of their existing API gateways, such as those

from WSO2, Kong, and Apigee, so that they can find APIs easily and check whether

someone has already developed an API for what they need. Moreover, Derric

Gilling, CEO of Moesif, said he believes that API Gateways will become just one

of the specialized pieces of the API stack developers and organizations will

need to assemble to meet the growing adoption of APIs. He sees business models

for APIs evolving beyond simply charging for API invocation counts, and the need

for a specialized analytics solution to keep pace. Along with the continued

explosion of interest in APIs, especially as organizations use more third-party

APIs, the development and testing process becomes more complex and

time-consuming.

AI: Interpreting regulation and implementing good practice

Emerging standards, guidance and regulation for AI are being created worldwide,

and it will be important to align these and create a common understanding for

producers and consumers. Organizations such as ETSI, ENISA, ISO and NIST are

creating helpful cross-referenced frameworks for us to follow, and regional

regulators, such as the EU, are considering how to penalize bad practices. In

addition to being consistent, however, the principles of regulation should be

flexible, both to cater for the speed of technological development and to enable

businesses to apply appropriate requirements to their capabilities and risk

profile. An experimental mindset, as demonstrated by the Singapore Land

Transport Authority’s testing of autonomous vehicles, can allow academia,

industry and regulators to develop appropriate measures. These fields need to

come together now to explore AI systems’ safe use and development. Cooperation,

rather than competition, will enable safer use of this technology more

quickly.

Quote for the day:

"Men who are in earnest are not afraid

of consequences." -- Marcus Garvey
