The bottom line is that ICOs are being constructed with serious holes in them. Worse still, as the numbers from EY show, cyber criminals are taking advantage. Companies running ICOs are drawing huge sums of money in a very narrow window of time. If something goes wrong once the ICO is live, there is little room for manoeuvring and precious little legal recourse that can realistically be taken. These are the perfect conditions for cyber criminals to exploit. There is a high financial motivation and they’ve been drawn to ICOs like sharks drawn to chum in the water. The consequence of an attack? Well, there are two parties that could be affected: the ICO organisers and the investors. Just one vulnerability is enough for attackers to steal investors’ money and do irreparable damage to the corporate reputation of the ICO organiser. The need to patch these holes is apparent, but organisations are working on short time frames and might not realise where they are most vulnerable. So what are the main points of weakness?

Rolls-Royce Is Building Cockroach-Like Robots to Fix Plane Engines

Rolls-Royce believes these tiny insect-inspired robots will save engineers time by serving as their eyes and hands within the tight confines of an airplane’s engine. According to a report by The Next Web, the company plans to mount a camera on each bot to allow engineers to see what’s going on inside an engine without having to take it apart. Rolls-Royce thinks it could even train its cockroach-like robots to complete repairs. “They could go off scuttling around reaching all different parts of the combustion chamber,” Rolls-Royce technology specialist James Cell said at the airshow, according to CNBC. “If we did it conventionally it would take us five hours; with these little robots, who knows, it might take five minutes.” Rolls-Royce has already created prototypes of the little bot with the help of robotics experts from Harvard University and University of Nottingham. But they are still too large for the company’s intended use. The goal is to scale the roach-like robots down to stand about half-an-inch tall and weigh just a few ounces, which a Rolls-Royce representative told TNW should be possible within the next couple of years.

While tight integration is desirable, these systems have many of the same networking challenges as other data center deployments, including requirements for scalability, automation, security and management of traffic flows. Additionally, they need to link to other data center resources inside the data center, at remote data centers and in the cloud. Software-defined networking architecture can ease some of the scaling, automation, security and connectivity challenges of hyper-converged system deployments. Hyper-converged systems integrate storage, computing and networking into a single system -- a box or pod -- in order to reduce data center complexity and ease deployment challenges associated with traditional data center architectures. A hyper-converged system comprises a hypervisor, software-defined storage and internal networking, all of which are managed as a single entity. Multiple pods can be networked together to create pools of shared compute and storage.

Big Tech is Throwing Money and Talent at Robots for the Home

Whether or not the robots catch on with consumers right away is almost beside the point because they’ll give these deep-pocketed companies bragging rights and a leg up in the race to build truly useful automatons. “Robots are the next big thing,” said Gene Munster, co-founder of Loup Ventures, who expects the U.S. market for home robots to quadruple to more than $4 billion by 2025. “You know it will be a big deal because the companies with the biggest balance sheets are entering the game.” Many companies have attempted to build domestic robots before. Nolan Bushnell, a co-founder of Atari, introduced the 3-foot-tall, snowman-shaped Topo Robot back in 1983. Though it could be programmed to move around by an Apple II computer, it did little else and sold poorly. Subsequent efforts to produce useful robotic assistants in the U.S., Japan and China have performed only marginally better. IRobot Corp.’s Roomba is the most successful, having sold more than 20 million units since 2002, but it only does one thing: vacuum.

“Enterprise Architecture As A Service” - How To Reach For The Stars

Educate those implementing your value chain in best open practices. To deliver EA As A Service, one would do well to ensure services are delivered through best practices that are open, because this enables an organization to train easily, hire selectively, and produce consistently. Of course, one might ask about differentiation – the secret sauce for differentiation will be in your proven ability to deliver fast and on target! Apply the best-in-class tools proven to improve production capability. Similar to the above, deciding upon and utilizing a consistent set of best-in-class tools helps ensure that deliverables are consistent among clients and enables reuse, which can improve speed and quality of delivery. Tools that support the best open practices add even more. Collaborate with partners to evolve the best open practices. Keeping in mind that differentiation comes in how well you deliver EA As A Service, collaboration on the best open practices provides an avenue to improve the best practices based on real experiences, improves market perception, and helps keep the bar raised for the industry.

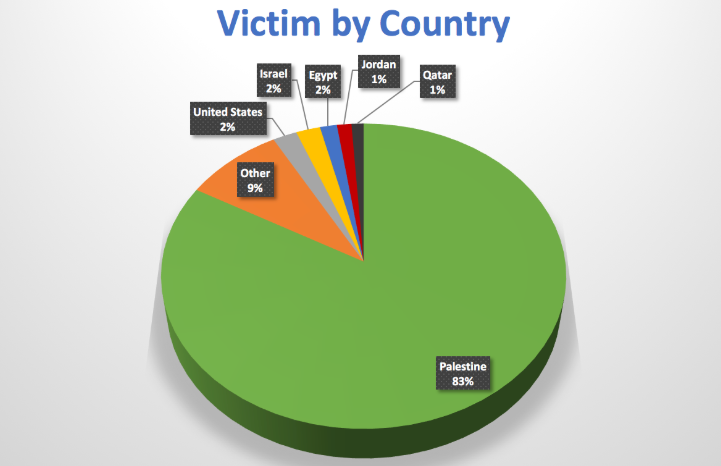

Micropsia Malware

Controlled by Micropsia operators, the malware is able to register to an event of USB volume insertion to detect new connected USB flash drives. This functionality is detailed in an old blog post. Once an event is triggered, Micropsia executes an RAR tool to recursively archive files based on a predefined list of file extensions ... Most of the malware capabilities mentioned above have outputs written to the file system which are later uploaded to the C2 server. Each module writes its own output in a different format, but surprisingly in a non-compressed and non-encrypted fashion. Micropsia’s developers decided to solve these issues by implementing an archiver component that executes the WinRAR tool. The malware first looks for an already installed WinRAR tool on the victim’s machine, searching in specific locations. In the event a WinRAR tool is not found, Micropsia drops the RAR tool found in its Windows Portable Executable (PE) resource section to the file system.

FinTech’s road to financial wellness

It’s one thing to build up a pot of money (saving), but it’s also vital to make that money work hard for you (investing). Investment platforms like Moneybox and Nutmeg are giving everyday people the ability to make their money go further. Robo-advice in particular is making it considerably easier for consumers to invest their money in a way that matches their circumstances and attitude to risk. A key benefit of these start-ups is that they often have low minimum investment limits, which has led to younger generations and those with small savings pots being able to invest. ... A recent report found the insurance sector lags only behind the utilities sector when it comes to disappointing customers with a poor online customer experience. These bad experiences are causing consumers to be put off dealing with insurance and insurers, meaning those consumers often aren’t financially protected. InsurTech companies like Lemonade, however, are using behavioural economics and new technology to create aligned incentives between the insurer and the customer.

Securing Our Interconnected Infrastructure

While it's encouraging that the House is leaning forward on industrial cybersecurity and committed to authorizing and equipping the Department of Homeland Security to protect our critical infrastructure, this still remains largely a private sector problem. After all, over 80% of America's critical infrastructure is privately owned and the owners and operators of these assets are best positioned to address their risks. In doing so, one of the questions companies are asking themselves is how to reconcile the risks and rewards of the interconnected world. Should we simply retreat into technological isolationism and eschew the benefits of connectivity in the interest of security, or is there a better way to manage the risk? The former is gaining a growing chorus, especially among security researchers. The latest call comes from Andy Bochman of the Department of Energy's Idaho National Labs. Bochman argued this past May in Harvard Business Review that the best way to address the cyber-risk to critical infrastructure is "to reduce, if not eliminate, the dependency of critical functions on digital technologies and their connections to the Internet."

The race to build the best blockchain


Things move incredibly fast in the blockchain world. Ethereum is three years old. Projects like Cardano and EOS, sometimes called "blockchain 2.0" projects, are already considered to be giants in the space. They have a combined token market cap of roughly $11.8 billion despite barely being operational. Cardano, which focuses on a slow and steady approach, with every iteration of the software being peer reviewed by scientists, is promising, but it hasn't fully launched its smart contract platform yet. EOS, an incredibly well-funded startup that launched in June, is another huge contender. However, EOS has a complicated governance process which caused a fair amount of trouble right after the launch, together with a slew of freshly discovered bugs. With an estimated $4 billion in pocket, EOS has the means to do big things, but it will take some time to see whether it can live up to the promise. But there's already a new breed of blockchain startups coming. They've been working, often in the shadows, to develop new concepts and technologies that may make the promise of a fast, decentralized app platform a reality.

Serverless vs. containers: What's best for event-driven apps?

Event processing is very different from typical transaction processing. An event is a signal that something is happening, and it often requires only a simple response rather than complex edits and updates. Transactions are also fairly predictable since they come from specific sources in modest numbers. Events, however, can originate anywhere, and the frequency of events can range from nothing at all to tens of thousands per second. These important differences between transactions and events launched the serverless trend and also precipitated the strategy called functional programming. Functional programming is pretty simple. A function -- or lambda, as it is often called -- is a software component that produces outputs based only on its input. If Y is a function of X, then Y varies only as X does. For practical reasons, functions don't internally store data that could change their outputs. Therefore, any copy of a function can process the same input and produce the same output. This facilitates highly resilient and scalable applications.
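To make the "output depends only on input" idea concrete, here is a minimal Python sketch of a stateless event handler. The event shape, the `handle_event` name and the `THRESHOLD_C` value are illustrative assumptions, not taken from the article or from any particular serverless platform.

```python
from typing import Any, Dict, Mapping

# Hypothetical alert threshold: a constant, so it cannot change between calls.
THRESHOLD_C = 80.0


def handle_event(event: Mapping[str, Any]) -> Dict[str, Any]:
    """Stateless handler: the result is computed only from the event passed in.

    Nothing is read from or written to module-level mutable state, so any
    copy of this function gives the same answer for the same event.
    """
    return {
        "sensor_id": event["sensor_id"],
        "alert": event["temperature_c"] > THRESHOLD_C,
    }


# Two "copies" processing the same event produce identical results.
event = {"sensor_id": "s-1", "temperature_c": 91.5}
first = handle_event(event)
second = handle_event(dict(event))
print(first == second)
```

Because the handler keeps no internal state, a platform could run as many copies as the event rate demands, or restart a failed copy, without coordinating between them.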

Quote for the day:

"Rarely have I seen a situation where doing less than the other guy is a good strategy." -- Jimmy Spithill