Quote for the day:

"The ability to summon positive emotions during periods of intense stress lies at the heart of effective leadership." -- Jim Loehr

Weak cyber defenses are exposing critical infrastructure — how enterprises can proactively thwart cunning attackers to protect us all

Weak cybersecurity isn’t merely a corporate issue; it’s a national security risk. The 2021 Colonial Pipeline attack disrupted energy supplies and exposed vulnerabilities in critical industries. Rising geopolitical tensions, especially with China, amplify these risks. Recent breaches attributed to state-sponsored actors have exploited outdated telecommunications equipment and other legacy systems, revealing how complacency in updating technology can put national security in danger. For instance, last year’s hack of U.S. and international telecommunications companies exposed phone lines used by top officials and compromised data from systems that handle surveillance requests. Weak cybersecurity at these companies carries long-term costs, allowing state-sponsored actors to access sensitive information, influence political decisions and disrupt intelligence efforts. ... No company can face today’s cyber threats on its own. Collaboration between private businesses and government agencies is more than helpful; it’s imperative. Sharing threat intelligence in real time allows organizations to respond faster and stay ahead of emerging risks. Public-private partnerships can also level the playing field by giving smaller companies access to resources, such as funding and advanced security tools, that they might not otherwise afford.

Evaluating the CISO

Delegation skills are an essential component and should be evaluated separately in this area. Effective delegation keeps the CISO from becoming a bottleneck; micromanagement is unsuited to the role. Delegating complex tasks not only lightens your load but also builds the team’s overall competence. Without strong delegation skills, CISOs cannot rate themselves highly in their relationship with the internal security team. ... A CISO is hired to lead, manage, and support specific projects or programs, such as migrating to a cloud or hybrid infrastructure, implementing zero-trust principles, launching security awareness initiatives, or assessing risks and creating a roadmap for post-quantum cryptography implementation. The success of these initiatives ultimately falls under the CISO’s responsibility. To execute these programs effectively, the CISO relies heavily on their team and internal organizational peers. As such, building strong relationships with both is essential for delivering projects successfully. ... A CISO must have responsibility for the information security budget, which includes funding for the team, tools, and services. Without direct control over the budget, it becomes challenging to rate the relationship with management highly, as budget ownership is a critical aspect of the CISO’s role.

Unraveling Large Language Model Hallucinations

You might have seen model hallucinations. These are instances where LLMs generate incorrect, misleading, or entirely fabricated information that nonetheless appears plausible. Hallucinations happen because LLMs do not “know” facts the way humans do; instead, they predict words based on patterns in their training data. ... Supervised fine-tuning (SFT) makes the model capable. However, even a well-trained model can generate misleading, biased, or unhelpful responses. Therefore, Reinforcement Learning from Human Feedback (RLHF) is needed to align it with human expectations. We start with the assistant model trained by SFT. For a given prompt, we generate multiple model outputs. Human labelers rank or score these outputs based on quality, safety, and alignment with human preferences. We use this data to train a separate neural network that we call a reward model. The reward model imitates human scores; it is a simulator of human preferences. It probably also has a transformer architecture, but it is not a language model in the sense of generating diverse language. It’s just a scoring model.
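To make the reward-model step concrete, here is a toy sketch. The article says labelers "rank or score" outputs but does not say how the reward model is trained on them. This sketch therefore assumes a pairwise logistic (Bradley-Terry-style) loss, a common choice in published RLHF work: for each pair where labelers preferred response A over response B, minimize -log σ(r(A) − r(B)). To keep it runnable with the standard library, the transformer is replaced by a hypothetical linear scorer over hand-made features. The features and preference pairs are invented for illustration.

```python
import math

def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)

def score(weights: list[float], features: list[float]) -> float:
    """The reward model's output: a single scalar, not generated text."""
    return sum(w * f for w, f in zip(weights, features))

def pairwise_loss(weights: list[float], chosen: list[float], rejected: list[float]) -> float:
    """Pairwise logistic loss: -log sigmoid(r(chosen) - r(rejected))."""
    return -math.log(sigmoid(score(weights, chosen) - score(weights, rejected)))

def train_reward_model(pairs, dim: int, lr: float = 0.1, epochs: int = 200) -> list[float]:
    """Per-pair gradient descent on the pairwise loss over (chosen, rejected) feature pairs."""
    weights = [0.0] * dim
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = score(weights, chosen) - score(weights, rejected)
            # d(loss)/d(w_i) = -(1 - sigmoid(margin)) * (chosen_i - rejected_i);
            # stepping against the gradient raises the preferred response's score.
            g = 1.0 - sigmoid(margin)
            for i in range(dim):
                weights[i] += lr * g * (chosen[i] - rejected[i])
    return weights

# Hypothetical features per response: [answers_question, contains_fabrication, polite]
# Each pair is (response the labeler preferred, response the labeler rejected).
pairs = [
    ([1.0, 0.0, 1.0], [1.0, 1.0, 1.0]),  # identical except the rejected one fabricates a fact
    ([1.0, 0.0, 0.0], [0.0, 0.0, 0.0]),  # the preferred one actually answers the question
    ([0.0, 0.0, 1.0], [0.0, 1.0, 1.0]),  # fabrication penalized again
]

weights = train_reward_model(pairs, dim=3)
print([round(w, 2) for w in weights])
```

The "polite" feature never differs within a pair, so its weight stays at zero. The reward model only learns what the human rankings actually distinguish, which is why it is described as a simulator of human preferences rather than a source of facts.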

How to Communicate the Business Value of Master Data Management

In an ideal scenario, master data management (MDM) is integral to a broader data and analytics (D&A) strategy that shows how D&A supports the organization's strategic goals. That strategy aligns with these goals, prioritizes the business outcomes it will support, and details what is needed to achieve them. Therefore, leaders must first understand and prioritize the explicit business outcomes that MDM will support before creating an MDM strategy. In other words, "improving decision-making" is not good enough; "Increase customer service levels by 5% by end of December 2025" is the level of detail required. D&A leaders may recognize that master data is causing a problem or limiting an opportunity, which is where MDM comes in. If so, those leaders should ask questions that help identify the problem, the relevant KPIs, and the key stakeholders. These questions help surface potential business outcomes that MDM could support. Figure 1 provides a worksheet to build this initial picture and facilitate stakeholder discussions. The worksheet maps high-level goals onto a run-grow-transform framework, which could also be represented as three columns for the primary business value drivers: risk, revenue, and cost.

4 ways to get your business ready for the agentic AI revolution

Agents could be used eventually, but only once a partnership approach identifies the right opportunities. "Agents are becoming a big part of how generative AI and machine learning are used in business today. The way agents will be used in travel will be fascinating to watch. I think this technology will certainly be a part of the mix," he said. "The process for Hyatt will be to find the right technologies -- and we'll do that in close partnership with our business leaders and the technology teams that run the applications. We'll then provide the AI services to drive those transitions for the business." ... Keith Woolley, chief digital and information officer at the University of Bristol, is another digital leader who sees the potential benefits of agents. However, he said these advantages will materialize over the longer term. "We are looking at agentic AI, but we're not implementing it yet," he said. "We sit as a management team and ask questions like, 'Should we do our admissions process using agentic AI? What would be the advantage?'" Woolley told ZDNET he could envision a situation in which AI and automation help assess candidates worldwide and keep them informed about the status of their applications.

Cloud Giants Collaborate on New Kubernetes Resource Management Tool

The core innovation of kro (Kube Resource Orchestrator) is the ResourceGraphDefinition custom resource. kro encapsulates a Kubernetes deployment and its dependencies into a single API, enabling custom end-user interfaces that expose only the parameters relevant to a non-platform engineer. This abstraction hides Kubernetes and cloud-provider API details that are not useful in a deployment context. ... kro works with the existing cloud provider Kubernetes extensions for managing cloud resources from Kubernetes: AWS Controllers for Kubernetes (ACK), Google's Config Connector (KCC), and Azure Service Operator (ASO). kro enables standardised, reusable service templates that promote consistency across projects and environments, with the benefit of being entirely Kubernetes-native. It is still in the early stages of development. "As an early-stage project, kro is not yet ready for production use, but we still encourage you to test it out in your own Kubernetes development environments," the post states. ... Most significantly for the Crossplane community, Farcic questioned kro's purpose given its functional overlap with existing tools. "kro is serving more or less the same function as other tools created a while ago without any compelling improvement," he observed.
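The sketch below illustrates the idea of collapsing a deployment and its dependencies behind one narrow interface. It is not kro's API or the ResourceGraphDefinition schema. The function names `expand_web_app` and `creation_order`, the `depends_on` bookkeeping, the exposed parameters (`name`, `image`, `replicas`), and placeholder values such as `LOG_LEVEL` and port 8080 are all hypothetical. The sketch expands three user-facing parameters into plain Kubernetes-style manifests (as dictionaries). It then orders them by dependency with the standard library's `graphlib.TopologicalSorter`, the kind of ordering an orchestrator has to work out before creating resources.

```python
from graphlib import TopologicalSorter  # Python 3.9+

def expand_web_app(name: str, image: str, replicas: int = 1) -> dict[str, dict]:
    """Expand three end-user parameters into full manifests (hypothetical abstraction).

    Each entry holds the manifest plus the IDs of the resources it depends on.
    """
    labels = {"app": name}
    config_name = f"{name}-config"
    return {
        "config": {
            "depends_on": [],
            "manifest": {
                "apiVersion": "v1",
                "kind": "ConfigMap",
                "metadata": {"name": config_name},
                "data": {"LOG_LEVEL": "info"},  # placeholder setting
            },
        },
        "deployment": {
            "depends_on": ["config"],  # containers read env vars from the ConfigMap
            "manifest": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "metadata": {"name": name, "labels": labels},
                "spec": {
                    "replicas": replicas,
                    "selector": {"matchLabels": labels},
                    "template": {
                        "metadata": {"labels": labels},
                        "spec": {
                            "containers": [{
                                "name": name,
                                "image": image,
                                "envFrom": [{"configMapRef": {"name": config_name}}],
                            }],
                        },
                    },
                },
            },
        },
        "service": {
            "depends_on": ["deployment"],  # modeled as depending on the Pods it selects
            "manifest": {
                "apiVersion": "v1",
                "kind": "Service",
                "metadata": {"name": name},
                "spec": {"selector": labels, "ports": [{"port": 80, "targetPort": 8080}]},
            },
        },
    }

def creation_order(resources: dict[str, dict]) -> list[str]:
    """Order resource IDs so every dependency comes before its dependents."""
    graph = {rid: set(entry["depends_on"]) for rid, entry in resources.items()}
    return list(TopologicalSorter(graph).static_order())

resources = expand_web_app("shop", "registry.example.com/shop:1.0", replicas=3)
for rid in creation_order(resources):
    manifest = resources[rid]["manifest"]
    print(manifest["kind"], manifest["metadata"]["name"])
# ConfigMap shop-config
# Deployment shop
# Service shop
```

In this sketch a platform team would own `expand_web_app`, while the end user only ever sets `name`, `image`, and `replicas`. That mirrors the masking the excerpt describes. In real Kubernetes, a Service can be created before its Pods exist; the Service-to-Deployment edge is there only to make the ordering visible.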

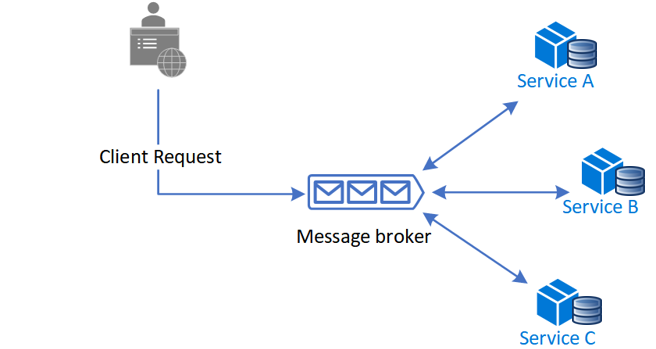

Why a different approach to AIOps is needed for SD-WAN

AIOps tools enhance efficiency by integrating with IT management tools, enabling proactive issue identification and streamlining IT management processes. Beyond that, they optimize an organization’s network by improving the performance, efficiency, and dependability of its network resources to ensure an optimal user experience. On the infrastructure side, many organizations now rely on SD-WAN (software-defined wide area network) to manage and optimize data traffic efficiently across different types of networks. SD-WAN is an effective way to connect the organization and give users access to applications. It helps businesses improve network performance, cut costs, and gain flexibility by easily connecting to various network types. ... AIOps tools use the information extracted from SD-WAN systems to resolve issues autonomously, without human intervention. To do so, they use predictive analytics to forecast future events or outcomes related to network operations. This makes the whole system run more smoothly and reliably, while machine learning algorithms can use historical network data to make predictions and proactively improve the performance of critical applications.

AI-Driven Threat Detection and the Need for Precision

AI algorithms, particularly those based on machine learning, excel at sifting through massive datasets and identifying patterns that would be nearly impossible for us mere humans to spot. For example, an AI system might analyze network traffic patterns to identify unusual data flows that could indicate a data exfiltration attempt. Alternatively, it could scan email attachments for malicious code that traditional antivirus software might miss. Ultimately, AI feeds on context and content. The effectiveness of these systems in protecting your security posture is inextricably linked to the quality of the data they are trained on and the precision of their algorithms. ... Finally, AI-driven threat detection is unlikely to eliminate the need for human expertise. Skilled security professionals should still oversee AI systems and make informed decisions based on their own contextual expertise and experience. Human oversight is needed to validate the AI's findings, and threat detection algorithms may never fully replace the critical thinking and intuition of human analysts. There may come a time when human professionals exist in AI's shadow. For now, though, combining the power of AI with human knowledge and a commitment to continuous learning can form the building blocks of a sophisticated defense program.
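The exfiltration scenario described in this excerpt can be sketched with a simple statistical baseline in place of a trained model. The sketch compares each host's outbound volume today with that host's own history and flags large upward z-scores. It is a minimal stand-in, not how any particular product works. The host names, traffic figures, and the 3-standard-deviation threshold are hypothetical. In keeping with the point about human oversight, the function only surfaces leads for an analyst to review; it issues no verdicts.

```python
from statistics import mean, stdev

def flag_unusual_outbound(history: dict[str, list[float]],
                          today: dict[str, float],
                          z_threshold: float = 3.0) -> list[tuple[str, float]]:
    """Return (host, z-score) pairs for hosts sending far more data than their own baseline."""
    alerts = []
    for host, past in history.items():
        if host not in today or len(past) < 2:
            continue  # stdev needs at least two historical data points
        mu = mean(past)
        sigma = max(stdev(past), 1.0)  # floor avoids division by zero on a perfectly flat history
        z = (today[host] - mu) / sigma
        if z > z_threshold:  # only upward spikes matter for exfiltration
            alerts.append((host, z))
    # Strongest anomalies first, so analysts triage them before the rest.
    return sorted(alerts, key=lambda item: item[1], reverse=True)

# Hypothetical daily outbound traffic per host, in MB.
history = {
    "db-01": [120, 130, 125, 118, 127],
    "web-01": [300, 310, 290, 305, 295],
}
today = {"db-01": 900, "web-01": 308}

for host, z in flag_unusual_outbound(history, today):
    print(f"review {host}: outbound volume {z:.1f} standard deviations above baseline")
```

Scoring each host against its own history, rather than a global average, keeps a normally busy web server from drowning out a quiet database server that suddenly starts sending large volumes of data.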

From Ambiguity to Accountability: Analyzing Recommender System Audits under the DSA

In these early years of the Digital Services Act (DSA), a range of stakeholders, including online platforms, civil society, the European Commission (EC), and national Digital Services Coordinators (DSCs), must experiment, identify good practices, and share lessons learned. Such iteration is important to ensure an adaptive DSA regime that spurs innovation and responds to shifting technologies, risks, and mitigation strategies. The need for iteration and flexibility, however, should not mean the audits fail to deliver on their potential as vehicles for transparency and accountability. The first round of independent audits of recommender systems reveals clear areas for immediate improvement. Because platforms and auditors developed the core definitions and methodologies independently, significant inconsistencies exist in both risk assessment and audit processes. ... The DSA requires the main parameters of recommender systems to be spelled out in plain and intelligible language. What does this concretely mean in the recommender system context? Is it language free of “acronyms or complex/technical terminology” (Pinterest), language with “straightforward vocabulary and easy to perceive, understand, or interpret” (Snap), or language “written for a general audience with varying technical skill levels, inclusive of all users” (TikTok)? Each framing carries subtly different expectations. These terms don’t need to be defined in a vacuum.

Cybersecurity in retail: What does the future hold?

In the coming year, cybersecurity experts predict that attackers will increasingly target generative AI models used by retailers, creating significant potential for operational disruptions and data breaches. These AI systems, now critical to retail operations, are vulnerable to sophisticated attacks that could degrade customer service and expose critical business vulnerabilities. The core risk lies in the ways attackers can exploit AI’s complex decision-making processes, turning what was once a technological advantage into a potential security liability. Retailers must recognise that their AI systems are not just technological tools but potential entry points for cybercriminal activity. ... The complexity and distribution of digital ecosystems make them prime targets during high-demand periods. For example, as we have seen in the past, cyberattacks that hit supply chains can cause major delays and financial loss. Such incidents underscore the vulnerability of supply chains during peak times of the year. In 2025, expect a rise in supply chain attacks during the holiday season targeting ecommerce platforms and logistics providers, which could disrupt product availability and shipping.