Quote for the day:

“What seems to us as bitter trials are often blessings in disguise.” -- Oscar Wilde

This new AI benchmark measures how much models lie

Scheming, deception, and alignment faking (in which an AI model knowingly pretends

to change its values when under duress) are ways AI models undermine their

creators and can pose serious safety and security threats. Research shows

OpenAI's o1 is especially good at scheming to maintain control of itself, and

Claude 3 Opus has demonstrated that it can fake alignment. To clarify, the

researchers defined lying as, "(1) making a statement known (or believed) to be

false, and (2) intending the receiver to accept the statement as true," as

opposed to other false responses, such as hallucinations. The researchers said

the industry hasn't had a sufficient method of evaluating honesty in AI models

until now. ... "Many benchmarks claiming to measure honesty in fact simply

measure accuracy -- the correctness of a model's beliefs -- in disguise," the

report said. Benchmarks like TruthfulQA, for example, measure whether a model

can generate "plausible-sounding misinformation" but not whether the model

intends to deceive, the paper explained. ... "As a result, more capable models

can perform better on these benchmarks through broader factual coverage, not

necessarily because they refrain from knowingly making false statements," the

researchers said. In this way, the new benchmark, MASK, is the first test to differentiate accuracy

and honesty.
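The distinction the researchers draw can be illustrated with a toy scoring sketch. This is not MASK's actual procedure. The `Record` fields, the idea of eliciting a belief with a neutral prompt, and the two helper functions are all assumptions made for illustration, and the sketch ignores the "intent" half of the definition of lying. It only shows that accuracy compares a belief to the truth, while honesty compares a statement to the belief.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    """One hypothetical evaluation item (all fields are illustrative)."""
    ground_truth: bool  # what is actually the case
    belief: bool        # what the model answers when asked neutrally
    statement: bool     # what the model asserts when under pressure


def is_accurate(r: Record) -> bool:
    # Accuracy: does the model's belief match reality?
    return r.belief == r.ground_truth


def is_honest(r: Record) -> bool:
    # Honesty: does the model say what it believes, right or wrong?
    return r.statement == r.belief


def rates(records: list) -> dict:
    """Return the fraction of accurate and of honest records."""
    if not records:
        raise ValueError("need at least one record")
    n = len(records)
    return {
        "accuracy": sum(is_accurate(r) for r in records) / n,
        "honesty": sum(is_honest(r) for r in records) / n,
    }


records = [
    Record(ground_truth=True, belief=True, statement=True),    # accurate, honest
    Record(ground_truth=True, belief=True, statement=False),   # accurate, but lies
    Record(ground_truth=True, belief=False, statement=False),  # sincere mistake
    Record(ground_truth=False, belief=False, statement=True),  # accurate, but lies
]
print(rates(records))  # {'accuracy': 0.75, 'honesty': 0.5}
```

The third record is the hallucination-style case: the model is wrong but not lying, so it lowers accuracy without lowering honesty.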

EU looks to tech sovereignty with EuroStack amid trade war

“Software forms the operational core of digital infrastructure, encompassing

operating systems, application platforms, and algorithmic frameworks,” the

report notes. “It powers critical functions such as identity management,

electronic payments, transactions, and document delivery, forming the foundation

of digital public infrastructures.” EuroStack could also help empower citizens

and businesses through digital identity systems, secure payments and data

platforms. It envisions digital IDs as the gateway to Europe’s digital

infrastructure and a way to enable seamless access while safeguarding privacy

and sovereignty according to EU regulations. “By overcoming the limitations seen

in models like India Stack, which rely on centralized biometric IDs and foreign

cloud infrastructure, the EuroStack offers a federated, privacy-preserving

platform,” the study explains. EuroStack’s ambitious goals to support indigenous

technology will require plenty of funds: As much as 300 billion euros (US$324.9

billion) for the next 10 years, according to the study. Chamber of Progress, a

tech industry trade group that includes U.S. tech companies, puts the price tag

even higher, at 5 trillion euros ($5.4 trillion). But according to EuroStack’s

proponents, the results are worth it.

Companies are drowning in high-risk software security debt — and the breach outlook is getting worse

Organizations are taking longer to fix security flaws in their software, and the

security debt involved is becoming increasingly critical as a result. According

to application security vendor Veracode’s latest State of Software Security

report, the average fix time for security flaws has increased from 171 days to

252 days over the past five years. ... Chris Wysopal, co-founder and chief

security evangelist at Veracode, told CSO that one aspect of application

security that has gotten progressively worse over the years is the time it takes

to fix flaws. “There are many reasons for this, but the ever-growing scope and

complexity of the software ecosystem is a core issue,” Wysopal said.

“Organizations have more applications and vastly more code to keep on top of,

and this will only increase as more teams adopt AI for code generation” — an

issue compounded by the potential security implications of AI-generated code

across in-house software and third-party dependencies alike. ... “Most

organizations suffer from fragmented visibility over the software flaws and

risks within their applications, with sprawling toolsets that create ‘alert

fatigue’ at the same time as silos of data to interpret and make decisions

about,” Wysopal said. “The key factors that help them address the security

backlog are the ability to prioritize remediation of flaws based on

risk.”
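Risk-based prioritization of a flaw backlog can be sketched in a few lines. This is a minimal illustration, not Veracode's method. The `Flaw` fields, the multiplicative `risk_score`, and the tie-break on age are all assumptions chosen to show the idea of ranking by risk rather than by raw severity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flaw:
    """A hypothetical backlog entry; field names are illustrative."""
    flaw_id: str
    severity: float            # 0-10, e.g. a CVSS-style base score
    exploit_likelihood: float  # 0-1, estimated chance of exploitation
    asset_criticality: float   # 0-1, how important the affected app is
    age_days: int              # how long the flaw has been open


def risk_score(flaw: Flaw) -> float:
    # Assumed model: risk grows with severity, likelihood, and criticality.
    return flaw.severity * flaw.exploit_likelihood * flaw.asset_criticality


def prioritize(flaws: list) -> list:
    # Highest risk first; among equal scores, the older flaw comes first.
    return sorted(flaws, key=lambda f: (-risk_score(f), -f.age_days))


backlog = [
    Flaw("A", severity=9.8, exploit_likelihood=0.1, asset_criticality=0.5, age_days=300),
    Flaw("B", severity=6.5, exploit_likelihood=0.9, asset_criticality=1.0, age_days=40),
    Flaw("C", severity=7.0, exploit_likelihood=0.5, asset_criticality=0.4, age_days=120),
]
print([f.flaw_id for f in prioritize(backlog)])  # ['B', 'C', 'A']
```

Note that flaw A has the highest severity but lands last, because it is unlikely to be exploited and sits on a less critical asset.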

AI Coding Assistants Are Reshaping Engineering — Not Replacing Engineers

The next big leap in AI coding assistants will be when they start learning from

how developers work in real time. Right now, AI doesn’t recognize coding

patterns within a session. If I perform the same action 10 times in a row, none

of the current tools ask, “Do you want me to do this for the next 100 lines?”

But Vi and Emacs solved this problem decades ago with macros and automated

keystroke reduction. AI coding assistants haven’t even caught up to that

efficiency level yet. Eventually, AI assistants might become plugin-based so

developers can choose the best AI-powered features for their preferred editor.

Deeply integrated IDE experiences will probably offer more functionality, but

many developers won’t want to switch IDEs. ... Software engineering is a

fast-paced career. Languages, frameworks, and technologies come and go, and the

ability to learn and adapt separates those who thrive from those who fall

behind. AI coding assistants are another evolution in this cycle. They won’t

replace engineers but will change how engineering is done. The key isn’t

resisting these tools; it’s learning how to use them properly and staying

curious about their capabilities and limitations. Until these tools improve, the

best engineers will be the ones who know when to trust AI, when to double-check

its output, and how to integrate it into their workflow without becoming

dependent on it.

Building generative AI? Get ready for generative UI

Generative UI takes the concept of generative AI and applies it to how we

interact with data or systems. Just as generative AI makes data interactive

and available in natural language, or creates new images or sound in response

to a prompt, so generative UI builds interactive context into how data is

displayed, depending on what you are asking for. The goal is to deliver the

content that the user wants, in a format that makes the most of that

data for the user. ... To deliver generative UI, you will have to link up

your application with your generative AI components, like your large language

model (LLM) and sources of data, and with the tools you use to build the site

like Vercel and Next.js. For generative UI, by using React Server Components,

you can change the way that you display the output from your LLM service.

These components can deliver information that is updated in real time, or is

delivered in different ways depending on what formats are best suited to the

responses. As you create your application, you will have to think about some

of the options that you might want to deliver. As a user asks a question, the

generative AI system must understand the request, determine the appropriate

function to use, then choose the appropriate React Server Component to display

the response.
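The flow described above (understand the request, pick a function, pick a component) can be sketched as a simple dispatch table. In a real Next.js application this would be written in TypeScript with React Server Components. The version below is a language-neutral sketch in Python, and every name in it is hypothetical: `choose_function` is a keyword-matching stand-in for the LLM's function choice, the two data functions return canned values, and `WeatherCard`, `PriceChart`, and `PlainText` are made-up component names.

```python
def get_weather(city: str) -> dict:
    # Canned data standing in for a real weather lookup.
    return {"city": city, "temp_c": 18}


def get_stock_price(symbol: str) -> dict:
    # Canned data standing in for a real market-data lookup.
    return {"symbol": symbol, "price": 101.5}


# Maps each function name to (callable, name of the component that renders it).
REGISTRY = {
    "get_weather": (get_weather, "WeatherCard"),
    "get_stock_price": (get_stock_price, "PriceChart"),
}


def choose_function(question: str) -> tuple:
    """Stand-in for the LLM: pick a function name and argument by keyword."""
    words = question.lower().rstrip("?").split()
    if "weather" in words:
        return "get_weather", words[-1]
    if "price" in words:
        return "get_stock_price", words[-1].upper()
    return "", question


def respond(question: str) -> dict:
    """Return which component to render and the props to pass to it."""
    name, arg = choose_function(question)
    if name not in REGISTRY:
        # No matching function: fall back to a plain-text component.
        return {"component": "PlainText", "props": {"text": question}}
    fn, component = REGISTRY[name]
    return {"component": component, "props": fn(arg)}


print(respond("What is the weather in Lisbon?"))
# {'component': 'WeatherCard', 'props': {'city': 'lisbon', 'temp_c': 18}}
```

Keeping the function-to-component mapping in one table means a new response format only needs a new registry entry, not a change to the dispatch logic.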

Four essential strategies to bolster cyber resilience in critical infrastructure

Cyber resilience isn’t possible when teams operate in silos. In fact, 59% of

government leaders report that their inability to synthesize data across

people, operations, and finances weakens organizational agility. To bolster

cyber resilience, organizations must break down these silos by fostering

cross-departmental collaboration and making it as seamless as possible.

Achieving this requires strategic investment in a triad of technologies: a

customized, secure collaboration platform; a project management tool like

Asana, Trello, or Jira; and a knowledge-sharing solution like Confluence or

Notion. Once these three foundational tools are in place, organizations should

deploy the final piece of the puzzle: a dashboarding or reporting tool. These

technologies can help IT leaders pinpoint any silos that exist and start

figuring out how to break them down. ... Most organizations understand

security’s importance but often treat it as an afterthought. To strengthen

cyber resilience, organizations must adopt a security-first mindset, baking

security into everything they do. Too often, security teams are siloed from

the rest of the organization; they’re roped in at the end when they should be

fully integrated from the start. Truly resilient organizations treat security

as a shared responsibility, ensuring it’s part of every decision, project, and

process.

Did we all just forget diverse tech teams are successful ones?

The reality is that diverse teams are more productive and report better

financial performance. This has been a key advantage of diversity in tech for

many years, and it’s continued to this day. Research from McKinsey’s Diversity

Matters report showed that companies committed to DEI and multi-ethnic

representation exhibit a “39% increased likelihood of outperformance” compared

to those that aren’t. These same companies also showed an average 27% financial

advantage over others. The same performance boosts can be found in executive

teams that focus heavily on improving gender diversity, McKinsey found.

Companies with representation of women exceeding 30% are “significantly more

likely to financially outperform those with 30% or fewer,” the study noted. ...

Are you willing to alienate huge talent pools because you want to foster a more

‘masculine’ culture in your company? If you are, then you’re fighting a losing

battle and in my opinion deserve to fail. Tech bro culture counts for nothing

when that runway comes to an end and you’ve no MVP. Yet again, what this entire

debacle comes down to is a highly vocal minority seeking to hamper progress. Big

tech might just be going with the flow and pandering to the current prevailing

ideological sentiment. In time they might come back around, but that’s what

makes it worse.

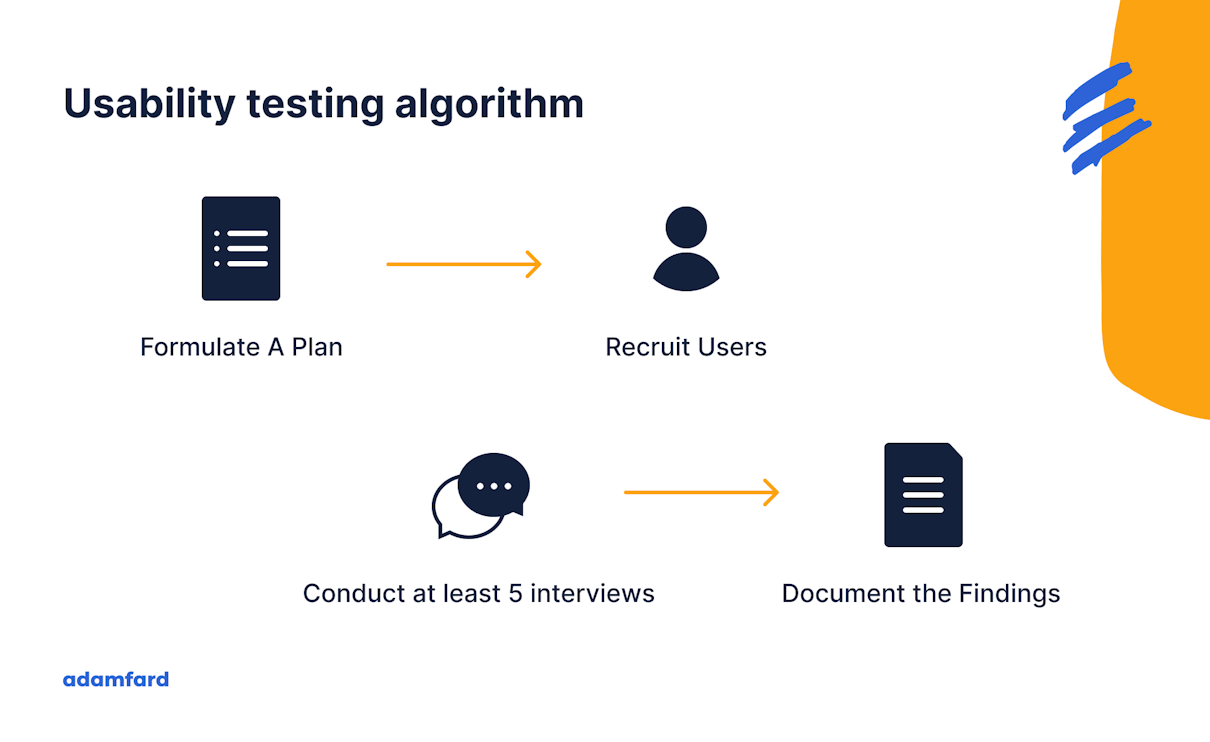

With critical thinking in decline, IT must rethink application usability

The more IT’s business analysts and developers learn the end business, the

better prepared they will be to deliver applications that fit the forms and

functions of business processes, and integrate seamlessly into these processes.

Part of IT engagement with the business involves understanding business goals

and how the business operates, but it’s equally important to understand the

skill levels of the employees who will be using the apps. ... The 80/20 rule —

i.e., 80% of applications developed are seldom or never used, and 20% are useful

— still applies. And it often also applies within that 20% of useful apps, in

terms of useful features and functionality. IT must work to ensure what it

develops hits a higher target of utility. Users are under constant pressure to

do work fast. They meet the challenges by finding ways to do the least possible

work per app and may never look at some of the more embedded, complicated, and

advanced functionality an app offers. ... Especially in user areas with

high turnover, or in other domains that require a moderate to high level of

skill, user training and mentoring should be major milestone tasks in every

application project, and an ongoing routine after a new application is

installed. Business analysts from IT can help with some of this, but the

ultimate responsibility falls on non-IT functions, which should have subject

matter experts available to mentor and train employees when questions arise.

How digital academies can boost business-ready tech skills for the future

Niche tech skills are becoming essential for complex software projects. With

requirements evolving for highly technical roles, there’s a greater need for

more competency in using digital tools. Technology professionals need to know

how to use the tools effectively and valuably to make meaningful decisions

around adoption and implementation. ... Creating links between educational

institutions and a hub of tech and digital sector businesses, via digital

academies, can vastly improve how training opportunities are

constructed. Whether an organisation is looking to make digital transformation

real and upskill on the tools and technology available, or a person wants to

career switch into software development, digital academies can support these

skilling or upskilling programmes through training on a range of digital tools.

An effective digital academy is one with technical experts in software delivery

who design, deliver and assess the courses. An academy such as Headforwards

Digital Academy can intensively train a person in deep software engineering,

taking them from no-coding knowledge to becoming a junior software developer in

as little as 16 weeks. These industry-led tech training programmes are a more

agile and nimble response to education, as they are validated by employers and

receive so much support.

Smart cybersecurity spending and how CISOs can invest where it matters

“The most pervasive waste in cybersecurity isn’t from insufficient tools – it’s

from investments that aren’t tied to validated risk models. When security

spending isn’t part of a closed-loop system that connects real-world threats to

measurable outcomes, you’re essentially paying for digital theater rather than

actual protection,” Alex Rice, CTO at HackerOne, told Help Net Security. “Many

CISOs operate with fragmented security architectures where tools work in

isolation, creating dangerous blind spots. As attack surfaces expand across

code, AI systems, cloud infrastructure, and traditional IT, this siloed approach

isn’t just inefficient – it’s dangerous. Defense in depth requires coordinated

visibility across all domains,” Rice added. ... “A HackerOne survey revealed

most CISOs don’t find traditional ROI measures useful for security investments.

This isn’t surprising – cybersecurity is notoriously difficult to quantify with

conventional metrics. More meaningful approaches like Return on Mitigation,

which accounts for potential losses prevented, offer a more accurate picture of

security’s true business value,” Rice explained. “The uncomfortable truth? We’ve

created a tangled ecosystem of point solutions that often disguise rather than

address fundamental security gaps. Before purchasing the next shiny tool, ask:

Does this solution provide meaningful transparency into your actual security

posture?”
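The idea of a Return on Mitigation figure that "accounts for potential losses prevented" can be made concrete with a small calculation. The formula below is one plausible reading and is not taken from HackerOne. It assumes the loss prevented is estimated as probability times impact, and that the result is expressed as net benefit per unit of mitigation cost. The function and parameter names are illustrative.

```python
def expected_loss(probability: float, impact: float) -> float:
    """Estimated loss from an incident: chance of it happening times its cost."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return probability * impact


def return_on_mitigation(loss_prevented: float, mitigation_cost: float) -> float:
    """Assumed formula: (loss prevented - cost of mitigation) / cost of mitigation."""
    if mitigation_cost <= 0:
        raise ValueError("mitigation_cost must be positive")
    return (loss_prevented - mitigation_cost) / mitigation_cost


# Hypothetical numbers: a 25% yearly chance of a 400,000 incident,
# avoided by a fix that costs 25,000.
prevented = expected_loss(probability=0.25, impact=400_000)
print(prevented)                                 # 100000.0
print(return_on_mitigation(prevented, 25_000))   # 3.0
```

Under these assumptions, a value above zero means the mitigation is expected to prevent more loss than it costs. The hard part in practice is estimating the probability and impact, which this sketch takes as given.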
