Quote for the day:

“Being responsible sometimes means pissing people off.” -- Colin Powell

The new paradigm for raising up secure software engineers

CISOs were already struggling to help developers keep up with secure code principles at the speed of DevOps. Now, with AI-assisted development reshaping how code gets written and shipped, the challenge is rapidly intensifying. ... What needs to be thrown out are traditional training methods. Consensus among security leaders is that dev training needs to be bite-sized, hands-on, and mostly embedded in developer tool chains. ... Rather than focus on preparing developers for line-by-line code review, the emphasis moves toward evaluating whether their features and functions behave securely in the context of deployment conditions, says Hasan Yasar ... Developers need to recognize when AI-generated code introduces unsafe assumptions, insecure defaults, or integrations that can scale vulnerabilities across systems. And with more security enforcement built into automated engineering pipelines, developers should ideally also be trained to understand what automated gates catch, and what still requires human judgment. “Security awareness in engineering has shifted to a system-level approach rather than focusing on individual vulnerabilities,” Pinna says. ... The data from guardrails and controls being triggered can be used by the AppSec team to drive creation and delivery of more in-depth, but targeted education. When the same vulnerability or integration pattern pops up again and again, that’s a signal for focused training on a subject.
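As a rough illustration of that last point, here is a minimal sketch of how an AppSec team might tally guardrail triggers to spot a recurring pattern. The event fields (`rule`, `repo`), the sample events, and the threshold of 3 are assumptions made up for this example; none of them come from the article.

```python
from collections import Counter

# Hypothetical guardrail events; field names and values are illustrative only.
events = [
    {"rule": "hardcoded-secret", "repo": "payments"},
    {"rule": "sql-injection", "repo": "orders"},
    {"rule": "hardcoded-secret", "repo": "orders"},
    {"rule": "hardcoded-secret", "repo": "search"},
    {"rule": "insecure-default", "repo": "search"},
]


def recurring_rules(events, threshold=3):
    """Return (rule, count) pairs triggered at least `threshold` times."""
    counts = Counter(event["rule"] for event in events)
    # most_common() sorts by descending count, so the worst offender is first.
    return [(rule, n) for rule, n in counts.most_common() if n >= threshold]


training_topics = recurring_rules(events)
print(training_topics)
```

With this sample data only `hardcoded-secret` crosses the threshold, so it would be the candidate for a focused training module.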

New agent framework matches human-engineered AI systems — and adds zero inference cost to deploy

In experiments on complex coding and software engineering tasks, GEA substantially outperformed existing self-improving frameworks. Perhaps most notably for enterprise decision-makers, the system autonomously evolved agents that matched or exceeded the performance of frameworks painstakingly designed by human experts. ... Unlike traditional systems where an agent only learns from its direct parent, GEA creates a shared pool of collective experience. This pool contains the evolutionary traces from all members of the parent group, including code modifications, successful solutions to tasks, and tool invocation histories. Every agent in the group gains access to this collective history, allowing them to learn from the breakthroughs and mistakes of their peers. ... The results demonstrated a massive leap in capability without increasing the number of agents used. This collaborative approach also makes the system more robust against failure. In their experiments, the researchers intentionally broke agents by manually injecting bugs into their implementations. GEA was able to repair these critical bugs in an average of 1.4 iterations, while the baseline took 5 iterations. The system effectively leverages the "healthy" members of the group to diagnose and patch the compromised ones. ... The success of GEA stems largely from its ability to consolidate improvements. The researchers tracked specific innovations invented by the agents during the evolutionary process.
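To make the shared-pool idea concrete, here is a toy sketch of the difference between learning from a direct parent only and reading a group-wide pool. It is not GEA's actual implementation: the class names, fields, and sample traces are hypothetical, chosen only to mirror the three kinds of trace the excerpt lists.

```python
from dataclasses import dataclass, field


@dataclass
class Trace:
    """One agent's evolutionary trace (hypothetical structure)."""
    agent_id: str
    code_modifications: list[str] = field(default_factory=list)
    solved_tasks: list[str] = field(default_factory=list)
    tool_calls: list[str] = field(default_factory=list)


@dataclass
class SharedPool:
    traces: list[Trace] = field(default_factory=list)

    def add(self, trace: Trace) -> None:
        self.traces.append(trace)

    def parent_only(self, parent_id: str) -> list[Trace]:
        # Traditional setup: a child sees only its direct parent's trace.
        return [t for t in self.traces if t.agent_id == parent_id]

    def collective(self) -> list[Trace]:
        # Group setup: every agent sees traces from all parent-group members.
        return list(self.traces)


pool = SharedPool()
pool.add(Trace("a1", code_modifications=["retry on tool timeout"]))
pool.add(Trace("a2", solved_tasks=["task-17"]))
pool.add(Trace("a3", tool_calls=["run_tests"]))

print(len(pool.parent_only("a1")), len(pool.collective()))
```

In this toy version a child of `a1` would see one trace under the parent-only rule and three under the collective rule, which is the extra experience the excerpt credits for faster repair.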

GitHub readies agents to automate repository maintenance

In order to help developers and enterprises manage the operational drag of maintaining repositories, GitHub is previewing Agentic Workflows, a new feature that uses AI to automate most routine tasks associated with repository hygiene. It won’t solve maintenance problems all by itself, though. Developers will still have to describe the automation workflows in natural language that agents can follow, storing the instructions as Markdown files in the repo created either from the terminal via the GitHub CLI or inside an editor such as Visual Studio Code. ... “Mid-sized engineering teams gain immediate productivity benefits because they struggle most with repetitive maintenance work like triage and documentation drift,” said Dion Hinchcliffe ... Patel also warned that beyond precision and signal-to-noise concerns, there is a more prosaic risk teams may underestimate at first: As agentic workflows scale across repositories and run more frequently, the underlying compute and model-inference costs can quietly compound, turning what looks like a productivity boost into a growing operational line item if left unchecked. This can become a boardroom issue for engineering heads and CIOs because they must justify return on investment, especially at a time when they are grappling with what it really means to let software agents operate inside production workflows, Patel added.
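A back-of-the-envelope sketch of how that compounding can look. Every number here (repository counts, run frequency, tokens per run, price per million tokens) is an invented placeholder rather than GitHub pricing or a figure from the article; the point is only that cost scales with the product of all of them.

```python
def monthly_inference_cost(repos, runs_per_day, tokens_per_run,
                           usd_per_million_tokens, days=30):
    """Estimate monthly model spend in USD for scheduled agent runs."""
    total_tokens = repos * runs_per_day * tokens_per_run * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical pilot: a handful of repos, a few runs a day.
pilot = monthly_inference_cost(repos=5, runs_per_day=4,
                               tokens_per_run=20_000,
                               usd_per_million_tokens=5.0)

# Hypothetical rollout: same workflow, more repos, hourly runs.
rollout = monthly_inference_cost(repos=200, runs_per_day=24,
                                 tokens_per_run=20_000,
                                 usd_per_million_tokens=5.0)

print(f"pilot: ${pilot:,.2f}/month, rollout: ${rollout:,.2f}/month")
```

Under these assumed inputs the pilot costs $60 a month and the rollout $14,400, a 240-fold increase from scaling repositories 40 times and run frequency 6 times.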

One stolen credential is all it takes to compromise everything

Identity-based compromise dominated incident response activity in 2025. Identity weaknesses played a material role in almost 90% of investigations. Initial access was driven by identity-based techniques in 65% of cases, including phishing, stolen credentials, brute force attempts, and insider activity. ... Rubin said the growing dominance of identity attacks reflects how enterprise environments have changed over the past few years, creating more opportunities for adversaries to quietly slip in through legitimate access pathways. “The increasing role of identity as the main attack vector is a result of a fundamental change in the enterprise environment,” Rubin said. “This dynamic is driven by two key factors.” He said the first driver is the rapid expansion of SaaS adoption, cloud infrastructure, and machine identities, which in many organizations now outnumber human accounts. That shift has created what he described as a “massive, unmanaged shadow estate,” where each integration represents “a new, potentially unmonitored, path into the network.” ... The time window for defenders is shrinking. The fastest 25% of intrusions reached data exfiltration in 72 minutes in 2025. The same metric was 285 minutes in 2024. A separate simulation described an AI-assisted attack that reached exfiltration in 25 minutes. Threat actors also began automating extortion operations. Unit 42 negotiators observed consistent tone and cadence in ransom communications, suggesting partial automation or AI-assisted negotiation messaging.
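To put the two time-to-exfiltration figures side by side, a quick calculation using only the 2024 and 2025 numbers quoted above (the variable names are ours):

```python
minutes_2024 = 285  # fastest quartile of intrusions, 2024
minutes_2025 = 72   # same metric, 2025

# Fraction of the defenders' window that disappeared year over year.
reduction = (minutes_2024 - minutes_2025) / minutes_2024

# How many times faster attackers reached exfiltration.
speedup = minutes_2024 / minutes_2025

print(f"{reduction:.1%} less time, {speedup:.1f}x faster")
```

That works out to roughly three quarters of the response window gone in a year, or attackers moving about four times faster.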

The emerging enterprise AI stack is missing a trust layer

This is not simply a technology problem. It is an architectural one. Today’s enterprise AI stack is built around compute, data and models, but it is missing its most critical component: a dedicated trust layer. As AI systems move from suggesting answers to taking actions, this gap is becoming the single biggest barrier to scale. ... Our ability to generate AI outputs is scaling exponentially, while our ability to understand, govern and trust those outputs remains manual, retrospective and fragmented across point solutions. ... This layer isn’t a single tool; it’s a governance plane. I often think of it as the avionics system in a modern aircraft. It doesn’t make the plane fly faster, but it continuously measures conditions and makes adjustments to keep the flight within safe parameters. Without it, you’re flying blind — especially at scale. ... Agentic systems collapse the distance between recommendation and action. When decisions are automated, there is far less tolerance for opacity or after-the-fact explanations. If an AI-driven action cannot be reconstructed, justified and owned, the risk is no longer theoretical — it is operational. This is why trust is becoming a prerequisite for autonomy. Governance models built for dashboards and quarterly reviews are not sufficient when systems act in real time. CIOs need architectures that assume scrutiny, not exception handling and that treat accountability as a design constraint rather than a policy requirement.
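One way to read "reconstructed, justified and owned" as a design constraint is to require every agent action to carry those three things before it is recorded. The sketch below is an assumption-laden illustration, not a description of any product: the `ActionRecord` fields, the `record_action` helper, and the sample refund are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ActionRecord:
    action: str      # what the agent did
    inputs: dict     # data needed to reconstruct the decision
    rationale: str   # why the agent did it (justification)
    owner: str       # accountable human or team
    timestamp: str   # when it happened, in UTC


def record_action(action, inputs, rationale, owner):
    """Build an audit record; reject actions with no rationale or owner."""
    if not rationale or not owner:
        raise ValueError("action needs a rationale and an accountable owner")
    now = datetime.now(timezone.utc).isoformat()
    return ActionRecord(action, inputs, rationale, owner, now)


rec = record_action(
    "refund_order",
    {"order_id": "A-1001", "amount": 42.0},
    "duplicate charge detected",
    "payments-team",
)
print(json.dumps(asdict(rec), indent=2))
```

Making the record immutable and refusing to create it without an owner is one simple way to treat accountability as part of the architecture rather than as a policy checked afterward.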

India Is Not a Back Office — It’s a Core Engine of Our Global Innovation

We have a very clear data and AI strategy. We are running multiple proof-of-concept initiatives across the organisation to ensure AI becomes more than just a buzzword. The key question is: how does AI create real value for Volvo Cars? It helps us become more agile and faster, whether in product development, improving internal process efficiency, or enhancing decision-making quality. India plays a crucial role here. We have a large team working on data analytics, intelligent automation, and AI, supporting these initiatives and shaping our agenda. ... It’s not just access to talent, it’s also the mindset. Indian society is highly adaptable. You often face unforeseen situations and must find solutions quickly. That agility and ability to always have a “Plan B” drive innovation, creativity, and speed. ... Data protection is a global priority. Many regions have introduced regulations: India’s Data Privacy Act, GDPR in the European Union, and similar laws in China. For global organisations, managing how data is transferred and processed across borders is a significant challenge. For example, certain data, like Chinese customer data, may need to remain within that country. Beyond regulatory compliance, cybersecurity threats are constant. Like most organisations, we experience attempted attacks on our networks. We have a robust cybersecurity team working continuously to secure both data and infrastructure.

AI likely to put a major strain on global networks—are enterprises ready?

Retrieval-heavy architecture types such as retrieval augmented generation—an AI framework that boosts large language models by first retrieving relevant, current information from external sources—create significant network traffic because data is moving across regions, object stores, and vector indexes, Kale says. “Agent-like, multi-step workflows further amplify this by triggering an additional set of retrievals and evaluations at each step,” Kale says. “All of these patterns create fast and unpredictable bursts of network traffic that today’s networks were never designed to handle. These trends will not abate, as enterprises transition from piloting AI services to running them continually.” ... In 2026, “we will see significant disruption from accelerated appetite for all things AI,” research firm Forrester noted in a late-year predictions post. “Business demands of AI systems, network connectivity, AI for IT operations, the conversational AI-powered service desk, and more are driving substantial changes that tech leaders must enable within their organizations.” ... “Inference workloads in particular create continuous, high-intensity, globally distributed traffic patterns,” Barrow says. “A single AI feature can trigger millions of additional requests per hour, and those requests are heavier—higher bandwidth, higher concurrency, and GPU-accelerated compute on the other side of the network.”

Retrieval-heavy architecture types such as retrieval augmented generation—an

AI framework that boosts large language models by first retrieving relevant,

current information from external sources—create significant network traffic

because data is moving across regions, object stores, and vector indexes, Kale

says. “Agent-like, multi-step workflows further amplify this by triggering an

additional set of retrievals and evaluations at each step,” Kale says. “All of

these patterns create fast and unpredictable bursts of network traffic that

today’s networks were never designed to handle. These trends will not abate,

as enterprises transition from piloting AI services to running them

continually.” ... In 2026, “we will see significant disruption from

accelerated appetite for all things AI,” research firm Forrester noted in a

late-year predictions post. “Business demands of AI systems, network

connectivity, AI for IT operations, the conversational AI-powered service

desk, and more are driving substantial changes that tech leaders must enable

within their organizations.” ... “Inference workloads in particular create

continuous, high-intensity, globally distributed traffic patterns,” Barrow

says. “A single AI feature can trigger millions of additional requests per

hour, and those requests are heavier—higher bandwidth, higher concurrency, and

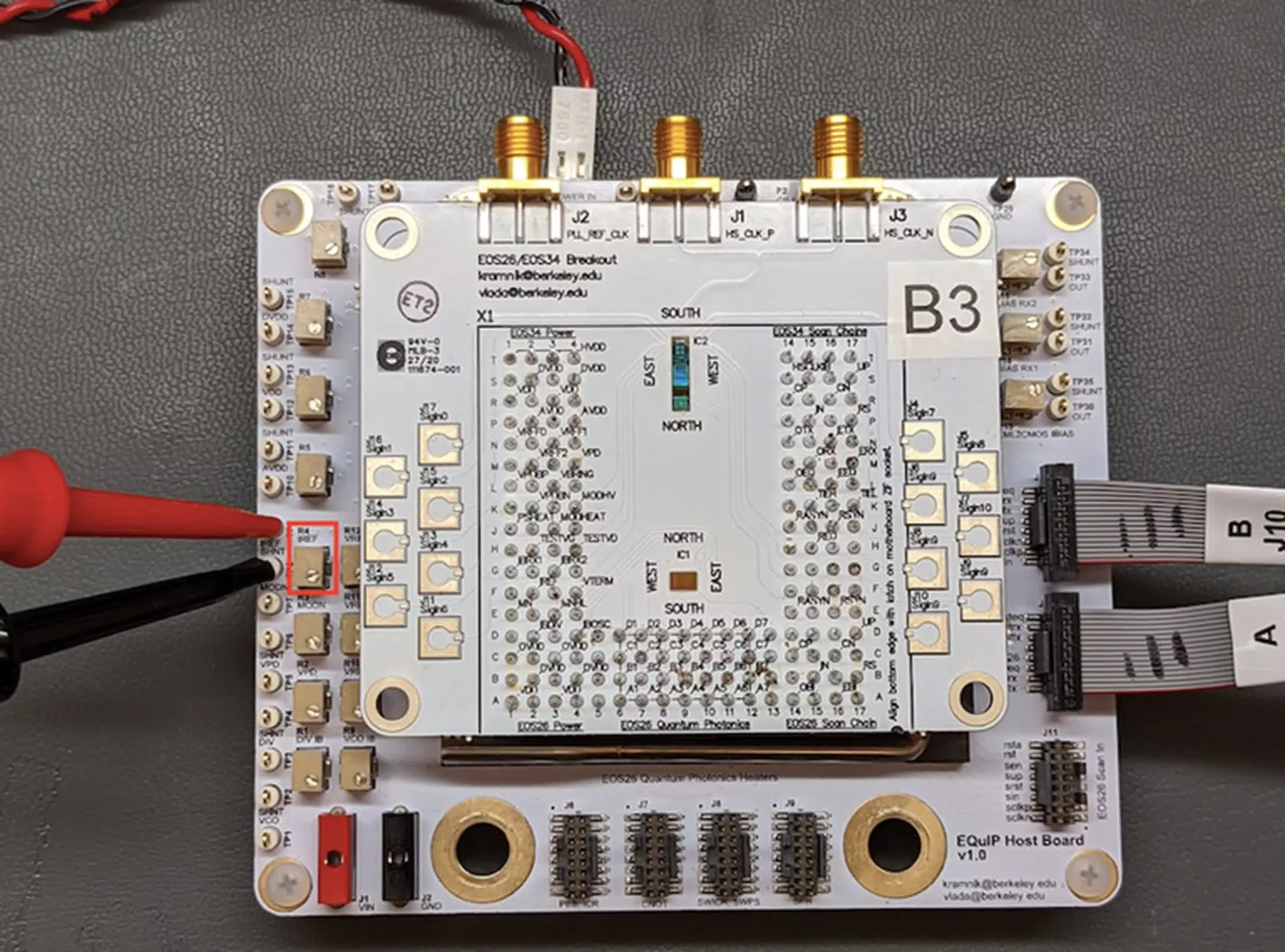

GPU-accelerated compute on the other side of the network.”Quantum Scientists Publish Manifesto Opposing Military Use of Quantum Research

The scientists’ primary goals include: to express a unified rejection of military uses of quantum research; to open debate within the quantum community about ethical implications; to create a forum for researchers concerned about militarization; and to advocate for a public database listing all research projects at public universities funded by military or defense agencies. Quantum technologies rely on the behavior of matter and light at the smallest scales, enabling ultra-secure communication, highly sensitive sensors and powerful computing systems. According to the manifesto, these capabilities are increasingly being folded into defense strategies worldwide. ... The manifesto places these developments in the context of rising defense budgets, particularly in Europe following Russia’s invasion of Ukraine. The scientists write in the manifesto that the research and development sector is not exempt from the broader rearmament trend and that dual-use technologies — those that can serve both civilian and military ends — are increasingly prioritized in policy documents. The scientists acknowledge that quantum technologies are not inherently military tools. However, according to the manifesto, once such systems are developed, their applications may be difficult to control. The scientists argue that closer institutional ties between universities and defense agencies risk undermining academic independence.

From pilot purgatory to productive failure: Fixing AI's broken learning loop

"Model performance can drift with data changes, user behavior, and policy updates, so a 'set it and forget it' KPI can reward the wrong thing, too late," Manos said. The penalty for CIOs, however, comes from the time lag between the misread KPI signal and the CIO's moves to correct it. Timing is everything, and "by the time a quarterly metric flags a problem, the root cause has already compounded across workflows," Manos said. ... Waiting until the end of a POC to figure out why a concept doesn't scale is clearly too late, but neither is it prudent to abandon a "trial, observation, and refine" cycle entirely, Alex Tyrrell, head of advanced technologies at Wolters Kluwer and CTO at Wolters Kluwer Health, said. Instead, Tyrrell argues for refining the interaction process itself to detect issues earlier in a safe setting, particularly in regulated, high-trust environments like healthcare. He recommends pairing each iteration with both predictive and diagnostic signals, so IT teams can intervene before the error ripples down to the customer level. ... AI pilots fail for the same non-technical reasons that have always plagued technology performance, such as a governance vacuum, organizational unreadiness, low usage rates, or "measurement theater," which is when tech performance can't be tied to a specific business value, explained Baker.