Quote for the day:

"The only way to do great work is to love what you do." -- Steve Jobs

Amazon AI coding agent hacked to inject data-wiping commands

The hacker gained access to Amazon’s repository after submitting a pull

request from a random account, likely due to workflow misconfiguration or

inadequate permission management by the project maintainers. ... On July 23,

Amazon received reports from security researchers that something was wrong

with the extension, and the company started to investigate. The next day, AWS

released a clean version, Q 1.85.0, which removed the unapproved code. “AWS is

aware of and has addressed an issue in the Amazon Q Developer Extension for

Visual Studio Code (VSC). Security researchers reported a potential for

unapproved code modification,” reads the security bulletin. “AWS Security

subsequently identified a code commit through a deeper forensic analysis in

the open-source VSC extension that targeted Q Developer CLI command

execution.” “After which, we immediately revoked and replaced the credentials,

removed the unapproved code from the codebase, and subsequently released

Amazon Q Developer Extension version 1.85.0 to the marketplace.” AWS assured

users that there was no risk from the previous release because the malicious

code was incorrectly formatted and wouldn’t run in their environments.

How to migrate enterprise databases and data to the cloud

Migrating data is only part of the challenge; database structures, stored

procedures, triggers and other code must also be moved. In this part of the

process, IT leaders must identify and select migration tools that address the

specific needs of the enterprise, especially if they’re moving between

different database technologies (heterogeneous migration). Factors to consider

include compatibility, transformation requirements and the

ability to automate repetitive tasks. ... During migration, especially

for large or critical systems, IT leaders should keep their on-premises and

cloud databases synchronized to avoid downtime and data loss. To help

facilitate this, select synchronization tools that can handle the data change

rates and business requirements. And be sure to test these tools in advance:

High rates of change or complex data relationships can overwhelm some

solutions, making parallel runs or phased cutovers infeasible. ... Testing is

a safety net. IT leaders should develop comprehensive test plans that cover

not just technical functionality, but also performance, data integrity and

user acceptance. Leaders should also plan for parallel runs, operating both

on-premises and cloud systems in tandem, to validate that everything works as

expected before the final cutover. They should engage end users early in the

process in order to ensure the migrated environment meets business needs.
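One data-integrity check from such a test plan can be sketched as follows. This is a minimal sketch, assuming Python's built-in `sqlite3` module stands in for both the on-premises and the cloud database: it compares each table's row count and a SHA-256 fingerprint of its rows read in primary-key order. The helper names and the `customers` table are hypothetical. In a heterogeneous migration, values would first need normalizing to common types, since different drivers can return different Python types for the same column.

```python
import hashlib
import sqlite3


def table_fingerprint(conn, table, key):
    """Return (row_count, sha256 hex digest) for `table`, reading rows in
    `key` order so both databases hash them in the same sequence."""
    # Identifiers cannot be bound as SQL parameters; pass trusted names only.
    rows = conn.execute(f"SELECT * FROM {table} ORDER BY {key}").fetchall()
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def compare_tables(source, target, tables):
    """List the tables whose count or fingerprint differs between databases."""
    return [
        table
        for table, key in tables.items()
        if table_fingerprint(source, table, key) != table_fingerprint(target, table, key)
    ]


def make_db(rows):
    # Hypothetical in-memory stand-in for one side of the migration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO customers VALUES (?, ?)", rows)
    return conn


on_prem = make_db([(1, "Ada"), (2, "Grace")])
cloud = make_db([(1, "Ada"), (2, "Grace")])
print(compare_tables(on_prem, cloud, {"customers": "id"}))  # []

# Simulate a row that drifted during synchronization.
cloud.execute("UPDATE customers SET name = 'Grce' WHERE id = 2")
print(compare_tables(on_prem, cloud, {"customers": "id"}))  # ['customers']
```

Hashing in key order keeps the check cheap to run repeatedly during a parallel run; a mismatch only says *which* table drifted, so a real plan would follow up with a row-level diff.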

Migrating data is only part of the challenge; database structures, stored

procedures, triggers and other code must also be moved. In this part of the

process, IT leaders must identify and select migration tools that address the

specific needs of the enterprise, especially if they’re moving between

different database technologies (heterogeneous migration). Some things they’ll

need to consider are: compatibility, transformation requirements and the

ability to automate repetitive tasks. ... During migration, especially

for large or critical systems, IT leaders should keep their on-premises and

cloud databases synchronized to avoid downtime and data loss. To help

facilitate this, select synchronization tools that can handle the data change

rates and business requirements. And be sure to test these tools in advance:

High rates of change or complex data relationships can overwhelm some

solutions, making parallel runs or phased cutovers unfeasible. ... Testing is

a safety net. IT leaders should develop comprehensive test plans that cover

not just technical functionality, but also performance, data integrity and

user acceptance. Leaders should also plan for parallel runs, operating both

on-premises and cloud systems in tandem, to validate that everything works as

expected before the final cutover. They should engage end users early in the

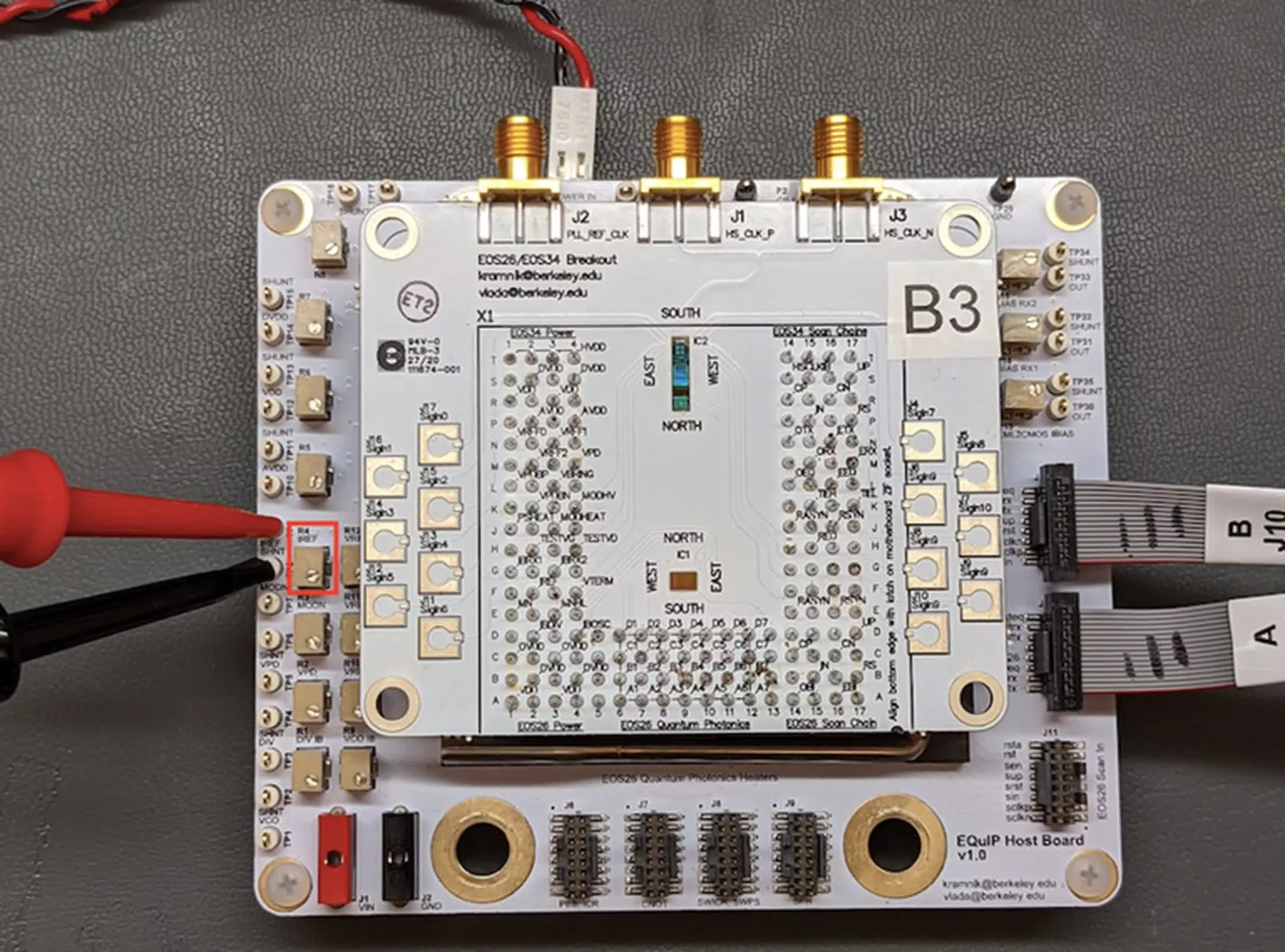

process in order to ensure the migrated environment meets business needs.Researchers build first chip combining electronics, photonics, and quantum light

The new chip integrates quantum light sources and electronic controllers using a

standard 45-nanometer semiconductor process. This approach paves the way for

scaling up quantum systems in computing, communication, and sensing, fields that

have traditionally relied on hand-built devices confined to laboratory settings.

"Quantum computing, communication, and sensing are on a decades-long path from

concept to reality," said Miloš Popović, associate professor of electrical and

computer engineering at Boston University and a senior author of the study.

"This is a small step on that path – but an important one, because it shows we

can build repeatable, controllable quantum systems in commercial semiconductor

foundries." ... "What excites me most is that we embedded the control

directly on-chip – stabilizing a quantum process in real time," says Anirudh

Ramesh, a PhD student at Northwestern who led the quantum measurements. "That's

a critical step toward scalable quantum systems." This focus on stabilization is

essential to ensure that each light source performs reliably under varying

conditions. Imbert Wang, a doctoral student at Boston University specializing in

photonic device design, highlighted the technical complexity.

Product Manager vs. Product Owner: Why Teams Get These Roles Wrong

While PMs work on the strategic plane, Product Owners anchor delivery. The PO is

the guardian of the backlog. They translate the product strategy into epics and

user stories, groom the backlog, and support the development team during

sprints. They don’t just manage the “what” — they deeply understand the “how.”

They answer developer questions, clarify scope, and constantly re-evaluate

priorities based on real-time feedback. In Agile teams, they play a central role

in turning strategic vision into working software. Where PMs answer to the

business, POs are embedded with the dev team. They make trade-offs, adjust

scope, and ensure the product is built right. ... Some products need to grow

fast. That’s where Growth PMs come in. They focus on the entire user lifecycle,

often structured using the PIRAT funnel: Problem, Insight, Reach, Activation,

and Trust (a modern take on the traditional AARRR Pirate Metrics: Acquisition,

Activation, Retention, Referral, and Revenue). This model guides Growth PMs in

identifying where user friction occurs and what levers to pull for meaningful

impact. They conduct experiments, optimize funnels, and collaborate closely with

marketing and data science teams to drive user growth.
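The friction-finding step described above can be sketched as a stage-to-stage conversion calculation. This is a minimal sketch, assuming the funnel is tracked as user counts per stage: it computes the conversion rate between each adjacent pair of stages and flags the lowest one. The stage names follow the traditional Pirate Metrics listed above; the counts and function names are hypothetical.

```python
def stage_conversions(funnel):
    """Return (from_stage, to_stage, conversion_rate) for each adjacent pair."""
    results = []
    for (prev_name, prev_count), (name, count) in zip(funnel, funnel[1:]):
        rate = count / prev_count if prev_count else 0.0
        results.append((prev_name, name, rate))
    return results


def biggest_drop_off(funnel):
    """The adjacent step with the lowest conversion: the likeliest friction point."""
    return min(stage_conversions(funnel), key=lambda step: step[2])


# Hypothetical weekly user counts, not real data.
funnel = [
    ("Acquisition", 10_000),
    ("Activation", 4_000),
    ("Retention", 1_000),
    ("Referral", 600),
    ("Revenue", 300),
]

for src, dst, rate in stage_conversions(funnel):
    print(f"{src} -> {dst}: {rate:.0%}")
print("Biggest drop-off:", biggest_drop_off(funnel))  # ('Activation', 'Retention', 0.25)
```

Comparing relative conversion rather than raw counts keeps a large top-of-funnel stage from masking where users actually stall; the flagged step is where an experiment would be aimed first.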

Ransomware payments to be banned – the unanswered questions

With thresholds in place, businesses and organisations may choose to operate

differently so that they aren’t covered by the ban, such as lowering turnover or

number of employees. That said, rules like this could help build a clearer

picture of the ransomware threat in the UK. Arda

Büyükkaya, senior cyber threat intelligence analyst at EclecticIQ, explains

more: “As attackers evolve their tactics and exploit vulnerabilities across

sectors, timely intelligence-sharing becomes critical to mounting an effective

defence. Encouraging businesses to report incidents more consistently will help

build a stronger national threat intelligence picture, something that’s important

as these attacks grow more frequent and become sophisticated. To spare any

confusion, sector-specific guidance should be provided by government on how

resources should be implemented, making resources clear and accessible.” “Many

victims still hesitate to come forward due to concerns around reputational

damage, legal exposure, or regulatory fallout,” said Büyükkaya. “Without

mechanisms that protect and support victims, underreporting will remain a

barrier to national cyber resilience.” Especially in the early days of the

legislation, organisations may still feel pressured to pay in order to keep

operations running, even if they’re banned from doing so.

AI Unleashed: Shaping the Future of Cyber Threats

AI optimizes reconnaissance and targeting, giving hackers the tools to scour

public sources, leaked and publicly available breach data, and social media to

build detailed profiles of potential targets in minutes. This enhanced data

gathering lets attackers identify high-value victims and network vulnerabilities

with unprecedented speed and accuracy. AI has also supercharged phishing

campaigns by automatically crafting phishing emails and messages that mimic an

organization’s formatting and reference real projects or colleagues, making them

nearly indistinguishable from genuine human-originated communications. ... AI is

also being weaponized to write and adapt malicious code. AI-powered malware can

autonomously modify itself to slip past signature-based antivirus defenses,

probe for weaknesses, select optimal exploits, and manage its own

command-and-control decisions. Security experts note that AI accelerates the

malware development cycle, reducing the time from concept to deployment.

... AI presents more than external threats. It has exposed a new category

of targets and vulnerabilities, as many organizations now rely on AI models for

critical functions, such as authentication systems and network monitoring. These

AI systems themselves can be manipulated or sabotaged by adversaries if proper

safeguards have not been implemented.
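Why self-modifying code can slip past signature-based defenses is easiest to see with the simplest kind of signature: an exact cryptographic hash of a known-bad file. This is a minimal sketch, assuming a toy signature database built with Python's `hashlib`. Real antivirus engines also use byte patterns, heuristics and behavioral analysis, so this is a simplification. The sample bytes and helper names are hypothetical. Changing a single byte produces a completely different SHA-256 digest, so the modified sample no longer matches.

```python
import hashlib


def sha256_signature(sample: bytes) -> str:
    """Exact-match 'signature': the SHA-256 digest of the file's bytes."""
    return hashlib.sha256(sample).hexdigest()


# Hypothetical stand-in for a file already known to be malicious.
known_bad_sample = b"pretend this is a known malicious payload"
signature_db = {sha256_signature(known_bad_sample)}


def is_flagged(sample: bytes) -> bool:
    """Flag a sample only if its hash exactly matches a stored signature."""
    return sha256_signature(sample) in signature_db


# Appending one byte changes the hash entirely, even if behavior is unchanged.
variant = known_bad_sample + b" "

print(is_flagged(known_bad_sample))  # True
print(is_flagged(variant))           # False
```

Exact-match signatures are cheap and produce no false positives, but every automatically generated variant needs a new signature, which is the gap the paragraph above says adaptive malware exploits.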

Agile and Quality Engineering: Building a Culture of Excellence Through a Holistic Approach

Could Metasurfaces Be the Next Quantum Information Processors?

Broadly speaking, the work embodies metasurface-based quantum optics which,

beyond carving a path toward room-temperature quantum computers and networks,

could also benefit quantum sensing or offer “lab-on-a-chip” capabilities for

fundamental science. Designing a single metasurface that can finely control

properties like brightness, phase, and polarization presented unique challenges

because of the mathematical complexity that arises once the number of photons

and therefore the number of qubits begins to increase. Every additional photon

introduces many new interference pathways, which in a conventional setup would

require a rapidly growing number of beam splitters and output ports. To bring

order to the complexity, the researchers leaned on a branch of mathematics

called graph theory, which uses points and lines to represent connections and

relationships. By representing entangled photon states as many connected lines

and points, they were able to visually determine how photons interfere with each

other, and to predict their effects in experiments. Graph theory is also used in

certain types of quantum computing and quantum error correction but is not

typically considered in the context of metasurfaces, including their design and

operation. The resulting paper was a collaboration with the lab of Marko Loncar,

whose team specializes in quantum optics and integrated photonics and provided

needed expertise and equipment.
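The claim that every additional photon adds many new interference pathways can be made concrete with one widely used graph picture of multiphoton experiments. The source does not say which graph formalism the researchers used, so treat this as an assumption. In this picture each vertex is an output path that must receive exactly one photon, and each edge is a source that emits a photon pair into its two endpoints. Every perfect matching of the graph is then one way for all outputs to fire at once, that is, one pathway that can interfere with the others. The minimal sketch below counts those matchings for a fully connected graph; the function names are hypothetical.

```python
def perfect_matchings(vertices):
    """Yield each perfect matching of the complete graph on `vertices`
    as a list of (u, v) edges."""
    if not vertices:
        yield []
        return
    first, rest = vertices[0], vertices[1:]
    for i, partner in enumerate(rest):
        # Pair `first` with `partner`, then match whatever vertices remain.
        remaining = rest[:i] + rest[i + 1:]
        for matching in perfect_matchings(remaining):
            yield [(first, partner)] + matching


def count_pathways(num_outputs):
    """Perfect matchings of a fully connected graph with `num_outputs`
    vertices: (num_outputs - 1)!! for even counts, 0 for odd counts."""
    return sum(1 for _ in perfect_matchings(list(range(num_outputs))))


for n in (2, 4, 6, 8):
    print(n, "outputs:", count_pathways(n), "pathways")
# 2 outputs: 1, 4: 3, 6: 15, 8: 105
```

The double-factorial growth is why a conventional setup needs a rapidly growing number of beam splitters and ports, and why a compact graph description is useful for reasoning about which pathways interfere.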

New AI architecture delivers 100x faster reasoning than LLMs with just 1,000 training examples

When faced with a complex problem, current LLMs largely rely on chain-of-thought

(CoT) prompting, breaking down problems into intermediate text-based steps,

essentially forcing the model to “think out loud” as it works toward a

solution. While CoT has improved the reasoning abilities of LLMs, it has

fundamental limitations. In their paper, researchers at Sapient Intelligence

argue that “CoT for reasoning is a crutch, not a satisfactory solution. It

relies on brittle, human-defined decompositions where a single misstep or a

misorder of the steps can derail the reasoning process entirely.” ... To move

beyond CoT, the researchers explored “latent reasoning,” where instead of

generating “thinking tokens,” the model reasons in its internal, abstract

representation of the problem. This is more aligned with how humans think; as

the paper states, “the brain sustains lengthy, coherent chains of reasoning with

remarkable efficiency in a latent space, without constant translation back to

language.” However, achieving this level of deep, internal reasoning in AI is

challenging. Simply stacking more layers in a deep learning model often leads to

a “vanishing gradient” problem, where learning signals weaken across layers,

making training ineffective.
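The vanishing-gradient problem mentioned above follows from the chain rule. Backpropagating through a stack of layers multiplies together one local derivative per layer, and when those derivatives are below 1 the product shrinks exponentially with depth. This is a minimal sketch, assuming a toy network of scalar sigmoid layers with every weight fixed at 1. Real models use weight matrices, other activations and remedies such as residual connections, and this is not the Sapient Intelligence architecture. Because the sigmoid's derivative never exceeds 0.25, the gradient through n such layers is at most 0.25^n. The function name is hypothetical.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def gradient_through_stack(depth, x=0.0, weight=1.0):
    """Forward x through `depth` scalar layers h = sigmoid(weight * h), then
    backpropagate d(output)/d(input) with the chain rule."""
    activations = []
    h = x
    for _ in range(depth):
        h = sigmoid(weight * h)
        activations.append(h)
    grad = 1.0
    for a in reversed(activations):
        # sigmoid'(z) = s(z) * (1 - s(z)), which is at most 0.25.
        grad *= weight * a * (1.0 - a)
    return grad


for depth in (1, 5, 10, 20):
    print(depth, gradient_through_stack(depth))
# depth 1 gives exactly 0.25; each extra layer multiplies by a factor <= 0.25
```

With only 20 layers the learning signal reaching the input is already far smaller than 0.25^20, which is why simply stacking layers does not buy deeper reasoning.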

For the love of all things holy, please stop treating RAID storage as a backup

Although RAID stores your data redundantly, in practice a backup doesn't look

anything like a RAID array. That's because an ideal backup is offsite. It's not

on your computer, and ideally, it's not even in the same physical location.

Remember, RAID is a warranty, and a backup is insurance. RAID protects you from

inevitable failure, while a backup protects you from unforeseen failure.

Eventually, your drives will fail, and you'll need to replace disks in your RAID

array. This is part of routine maintenance, and if you're operating an array for

long enough, you should probably schedule drive replacements every few years

to keep everything operating smoothly. A backup will protect you from everything

else. Maybe multiple drives fail at once. A backup will protect you.

Lord forbid you fall victim to a fire, flood, or other natural disaster and your

RAID array is lost or damaged in the process. A backup still protects you. It

doesn't need to be a fire or flood for you to get use out of a backup. There are

small issues that could put your data at risk, such as your PC being infected

with malware, or corrupted data being written (and replicated across the array). You can dream

up just about any situation where data loss is a risk, and a backup will be able

to get your data back in situations where RAID can't.
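The difference between redundancy and a backup can be sketched with a toy model, assuming a two-disk RAID 1 mirror and a separate point-in-time snapshot store. Both classes are hypothetical illustrations, not a real storage API. The mirror survives a single disk failure, but because every write goes to all healthy disks, it faithfully replicates corrupted data. Only the earlier snapshot still holds the good copy.

```python
class Raid1Mirror:
    """Toy RAID 1: every write is copied to each healthy disk."""

    def __init__(self):
        self.disks = [{}, {}]
        self.failed = [False, False]

    def write(self, name, data):
        # Mirroring copies the bytes as-is, whether they are good or corrupted.
        for i, disk in enumerate(self.disks):
            if not self.failed[i]:
                disk[name] = data

    def _healthy_disk(self):
        for i, disk in enumerate(self.disks):
            if not self.failed[i]:
                return disk
        raise OSError("every disk in the mirror has failed")

    def read(self, name):
        return self._healthy_disk()[name]

    def contents(self):
        return dict(self._healthy_disk())

    def fail_disk(self, index):
        # A dead drive loses everything stored on it.
        self.failed[index] = True
        self.disks[index] = {}

    def replace_disk(self, index):
        # Rebuild the new, empty drive from the surviving mirror.
        self.disks[index] = self.contents()
        self.failed[index] = False


class SnapshotBackup:
    """Toy backup: point-in-time copies kept apart from the array."""

    def __init__(self):
        self.snapshots = []

    def snapshot(self, array):
        self.snapshots.append(array.contents())

    def restore(self, name, version=-1):
        return self.snapshots[version][name]


array = Raid1Mirror()
backup = SnapshotBackup()
array.write("report.txt", b"quarterly numbers")
backup.snapshot(array)

# A drive dies: the surviving mirror keeps the file readable, then we rebuild.
array.fail_disk(0)
print(array.read("report.txt"))  # b'quarterly numbers'
array.replace_disk(0)

# Corrupted data is written: RAID copies it to both disks like any other write...
array.write("report.txt", b"\x00\x00garbage")
print([disk["report.txt"] for disk in array.disks])
# ...while the earlier snapshot still holds the good copy.
print(backup.restore("report.txt"))  # b'quarterly numbers'
```

In this model the mirror handles the "inevitable" failure of one drive, while only the separately stored snapshot covers corruption; keeping that snapshot offsite would extend the same protection to fire, flood or theft.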
