Why Qualcomm believes its new always-on camera for phones isn’t a security risk

Judd Heap, VP of Product Management at Qualcomm’s Camera, Computer Vision and
Video departments, told TechRadar, “The always-on aspect is frankly going to
scare some people so we wanted to do this responsibly. The low power aspect
where the camera is always looking for a face happens without ever leaving the
Sensing Hub. All of the AI and the image processing is done in that block, and
that data is not even exportable to DRAM. We took great care to make sure that
no-one can grab that data and so someone can’t watch you through your phone.”
This means the data from the always-on camera won’t be usable by other apps on
your phone or sent to the cloud. It should stick in this one area of the phone’s
chipset - that’s what Heap is referring to as the Sensing Hub - for detecting
your face. Heap continues, “We added this specific hardware to the Sensing Hub
as we believe it’s the next step in the always-on body of functions that need to
be on the chip. We’re already listening, so we thought the camera would be the
next logical step.”

The HaloDoc Chaos Engineering Journey

The platform is composed of several microservices hosted across hybrid
infrastructure elements, mainly on a managed Kubernetes cloud, with an
intricately designed communication framework. We also leverage AWS cloud
services such as RDS, Lambda and S3, and consume a significant suite of open
source tooling, especially from the Cloud Native Computing Foundation landscape,
to support the core services. As the architect and manager of site reliability
engineering (SRE) at HaloDoc, ensuring smooth functioning of these services is
my core responsibility. In this post, I’d like to provide a quick snapshot of
why and how we use chaos engineering as one of the means to maintain resilience.
While operating a platform of such scale and churn (newer services are onboarded
quite frequently), one is bound to encounter some jittery situations. We had a
few incidents with newly added services going down that, despite being
immediately mitigated, caused concern for our team. In a system with the kind of
dependencies we had, it was necessary to test and measure service availability
across a host of failure scenarios.

Zero trust, cloud security pushing CISA to rethink its approach to cyber services

“When agencies hear the IG say something about how things are going with FISMA,
they really pay attention. If we’re in a position to help influence that in a
positive way, it’s absolutely critical that we do so,” he said. “We’ve got to
pare down what we’re spending on IT and really focus on those things that
matter. We have to adjust to a risk management approach in terms of how we apply
architecture and capabilities across the enterprise to support the varying
degrees of risk that we can absorb or manage within a given agency
network. That’s like a huge part of what we need to continue to advocate for.
But, to me, that is a significant element of the culture shift that needs to
happen.” One way CISA is going to drive some of the culture and technology
changes to help agencies achieve a zero trust environment is through the
continuous diagnostics and mitigation program. CISA released a request for
information for endpoint detection and response capabilities in October that
vendors under the CDM program will implement for agencies.

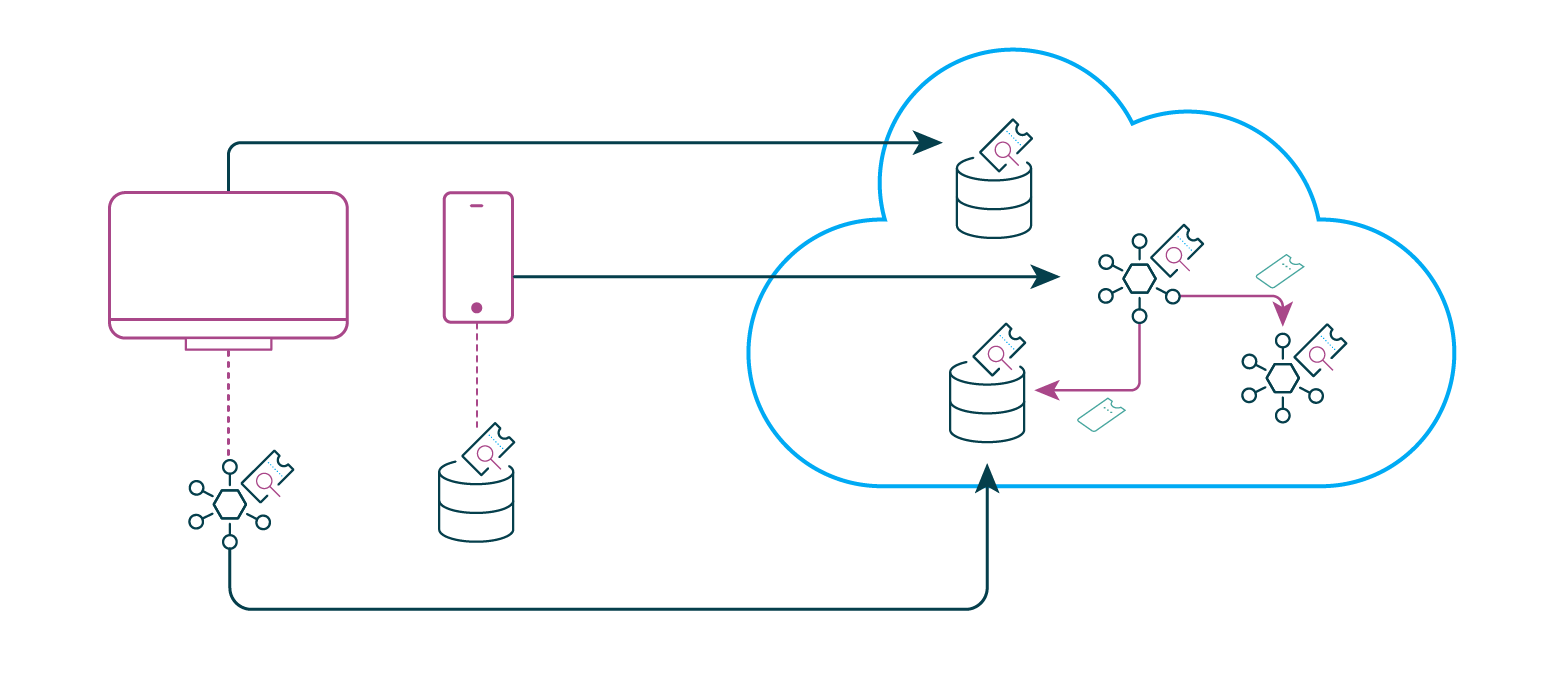

DeFi’s Decentralization Is an Illusion: BIS Quarterly Review

“The decentralised nature of DeFi raises the question of how to implement any
policy provisions,” the report said. “We argue that full decentralisation in
DeFi is an illusion.” One element that could break this illusion is DeFi’s
governance tokens, which are cryptocurrencies that represent voting power in
decentralized systems, according to the report. Governance-token holders can
influence a DeFi project by voting on proposals or changes to the governance
system. These governing bodies are called decentralised autonomous organizations
(DAOs) and each one can oversee multiple DeFi projects. “This element of
centralisation can serve as the basis for recognising DeFi platforms as legal
entities similar to corporations,” the report said. It gave an example of how
DAOs can register as limited liability companies in the state of Wyoming. “These
groups, and the governance protocols on which their interactions are based, are
the natural entry points for policymakers,” the report said. During Monday’s
briefing, Shin explained that there are three areas regulators could address
through these centralized organizational bodies.

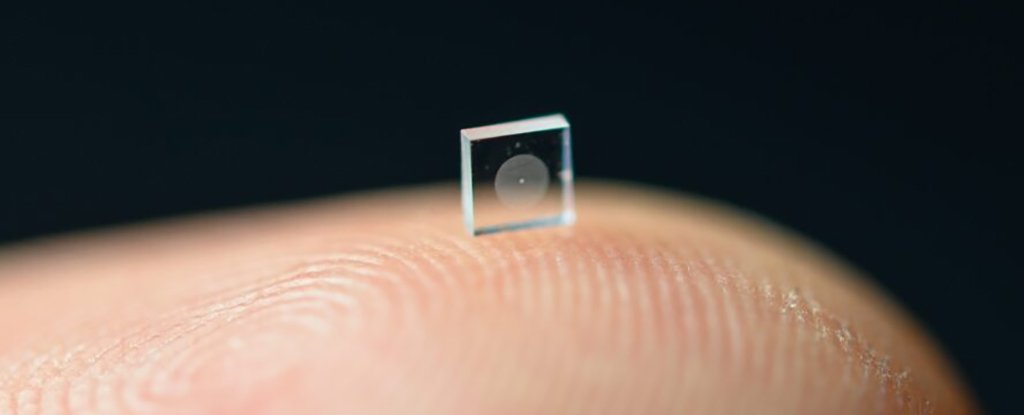

This New Ultra-Compact Camera Is The Size of a Grain of Salt And Takes Stunning Photos

Using a technology known as a metasurface, which is covered with 1.6 million
cylindrical posts, the camera is able to capture full-color photos that are as
good as images snapped by conventional lenses some half a million times bigger
than this particular camera. And the super-small contraption has the potential
to be helpful in a whole range of scenarios, from helping miniature soft robots
explore the world, to giving experts a better idea of what's going on deep
inside the human body. "It's been a challenge to design and configure these
little microstructures to do what you want," says computer scientist Ethan Tseng
from Princeton University in New Jersey. ... One of the camera's special tricks
is the way it combines hardware with computational processing to improve the
captured image: Signal processing algorithms use machine learning techniques to
reduce blur and other distortions that otherwise occur with cameras this size.
The camera effectively uses software to improve its vision.

Top Internet of Things (IoT) Trends for 2022: The Future of IoT

Hyperconnectivity and ultra-low latency are necessary to power successful IoT
solutions. 5G is the connectivity that will make more widespread IoT access
possible. Currently, cellular companies and other enterprises are working to
make 5G technology available in their areas to support further IoT development.
Bjorn Andersson, senior director of global IoT marketing at Hitachi Vantara, an
IT service management and top-performing IoT company, explained why the next
wave of wider 5G access will make all the difference for new IoT use cases and
efficiencies. “With commercial 5G networks already live worldwide, the next wave
of 5G expansion will allow organizations to digitalize with more mobility,
flexibility, reliability, and security,” Andersson said. “Manufacturing plants
today must often hardwire all their machines, as Wi-Fi lacks the necessary
reliability, bandwidth, or security. 5G delivers the best of two worlds: the
flexibility of wireless with the reliability, performance, and security of
wires. 5G is creating a tipping point.”

Zero Trust: Time to Get Rid of Your VPN

OAuth and OpenID Connect (OIDC) are standards that enable a token-based
architecture, a pattern that fits exceptionally well with a ZTA. In fact, you
could argue that zero trust architecture is a token-based architecture. So, how
does a token-based architecture work? First, it determines who the user is or
what system or service is requesting access. Then, it issues an access token.
The token itself will contain different claims, depending on the resource that
is being requested as well as contextual information. The claims given in the
token can, for example, be determined by a policy engine such as Open Policy
Agent (OPA). A policy describes the allowed access and which claims are needed
to access certain resources. In the context of the access request, the token
service can issue a token with appropriate claims based on that defined policy.
Resources that are being accessed need to verify the identity. In modern
architectures, this is typically some type of API. When the request to the API
is received, the API validates the access token sent with the request.

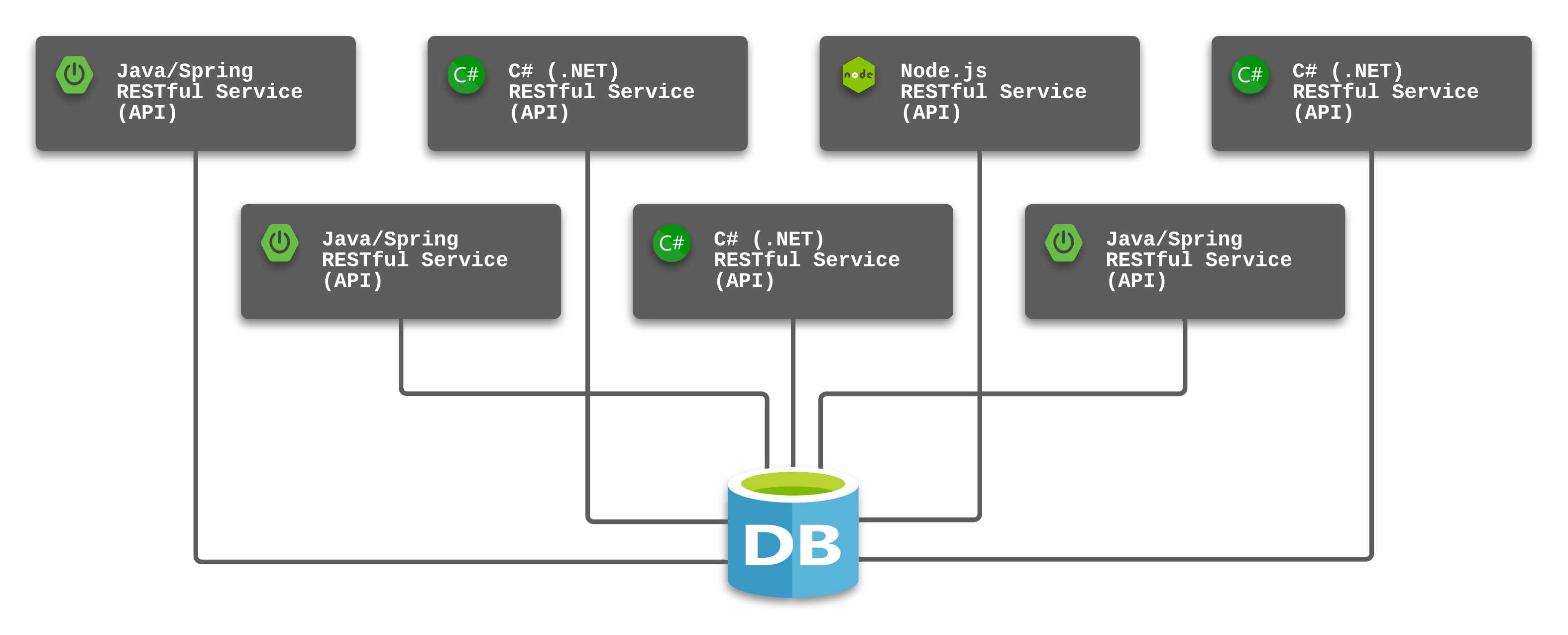
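
The issue-then-validate flow described above can be sketched in a few lines. This is only an illustration under stated assumptions: it uses a simplified HMAC-signed token rather than a real OAuth or OIDC library, and the `POLICY` table, the `SECRET` key, and the function names `issue_token` and `authorize` are hypothetical stand-ins for a policy engine, a token service, and an API.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

SECRET = b"demo-signing-key"  # hypothetical shared key, for illustration only

# Hypothetical policy: the scope claim a caller needs for each resource.
POLICY = {"/orders": "orders:read", "/payments": "payments:write"}


def _sign(payload: bytes) -> str:
    """Return a hex HMAC-SHA256 signature over the encoded claims."""
    return hmac.new(SECRET, payload, hashlib.sha256).hexdigest()


def issue_token(subject: str, scopes: List[str], ttl_seconds: int = 300,
                now: Optional[float] = None) -> str:
    """Token service: embed the claims in the token and sign them."""
    issued_at = time.time() if now is None else now
    claims = {"sub": subject, "scope": scopes, "exp": issued_at + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    return payload.decode() + "." + _sign(payload)


def authorize(token: str, resource: str, now: Optional[float] = None) -> bool:
    """API side: check signature, expiry, and the claim the policy requires."""
    current = time.time() if now is None else now
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return False  # not in "<payload>.<signature>" form
    if not hmac.compare_digest(signature, _sign(payload.encode())):
        return False  # token was altered or signed with another key
    claims = json.loads(base64.urlsafe_b64decode(payload.encode()))
    if claims["exp"] <= current:
        return False  # token has expired
    required = POLICY.get(resource)
    return required is not None and required in claims["scope"]
```

A token issued with only the `orders:read` scope would pass `authorize(token, "/orders")` and fail for `/payments`. In a production setting the signing, claim formats, and policy evaluation would come from standard OAuth/OIDC tooling and a policy engine rather than hand-rolled code like this.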

Breaking Up a Monolithic Database with Kong

The RESTful API software style provides an easy manner for client applications
to gain access to the resources (data) they need to meet business needs. In
fact, it did not take long for JavaScript-based frameworks like Angular, React,
and Vue to rely on RESTful APIs and lead the market for web-based applications.
This pattern of RESTful service APIs and frontend JavaScript frameworks sparked
a desire for many organizations to fund projects migrating away from monolithic
or outdated applications. The RESTful API pattern also provided a much-needed
boost in the technology economy which was still recovering from the impact of
the Great Recession. ... My recommended approach is to isolate a given
microservice with a dedicated database. This allows the count and size of the
related components to match user demand while avoiding additional costs for
elements that do not have the same levels of demand. Database administrators are
quick to defend the single-database design by noting the benefits that
constraints and relationships can provide when all of the elements of the
application reside in a single database.

Securing identities for the digital supply chain

As the world becomes more connected, governing and securing digital certificates
is a business essential. As certificates’ lifespans continue to shrink,
enterprises need to deploy ever more into their digital infrastructure. With
greater numbers of certificates entering an organisation’s cyber space, there is
more room for dangerous expirations to go unnoticed. From business-ending
outages to crippling cyber attacks, the potential downside to bad management of
this vital utility is huge. Unfortunately, digital certificates are still
woefully mismanaged by businesses and governments worldwide. The volume of
certificates being used to secure digital identities is growing exponentially,
and businesses are faced with new management challenges that can’t be solved
with legacy certificate automation models or outdated on-premises solutions. ...
Today’s digital-first enterprise requires a modern approach to managing the
exponential growth of certificates, regardless of the issuing certificate
authority (CA), and one built to work within today’s complex zero trust IT
infrastructure.
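
To make the "expirations going unnoticed" risk concrete, here is a minimal sketch of the kind of check a certificate inventory could run. It is an assumption-laden illustration, not a description of any product mentioned in the article: the `Certificate` record, the `expiring_soon` helper, and the 30-day default window are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List


@dataclass
class Certificate:
    """Hypothetical inventory record; a real one would come from a scan or CA export."""
    common_name: str
    issuer: str
    not_after: datetime


def expiring_soon(certs: List[Certificate], now: datetime,
                  window_days: int = 30) -> List[Certificate]:
    """Return certificates already expired or expiring within the window, soonest first."""
    cutoff = now + timedelta(days=window_days)
    flagged = [cert for cert in certs if cert.not_after <= cutoff]
    return sorted(flagged, key=lambda cert: cert.not_after)


if __name__ == "__main__":
    today = datetime(2021, 12, 7, tzinfo=timezone.utc)
    inventory = [
        Certificate("api.example.com", "CA One", today + timedelta(days=10)),
        Certificate("www.example.com", "CA Two", today + timedelta(days=200)),
        Certificate("old.example.com", "CA One", today - timedelta(days=3)),
    ]
    for cert in expiring_soon(inventory, today):
        print(cert.common_name, cert.issuer, cert.not_after.date())
```

The check deliberately ignores which CA issued each certificate, mirroring the article's point that management has to work regardless of the issuing authority.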

Lightweight External Business Rules

Traditional rule engines that enable domain experts to author rule sets and
behaviors outside the codebase are highly useful for a large and complex
business landscape. But for smaller and less complex systems, they often turn
out to be overkill and remain underutilised given the recurring cost of the
on-premises or cloud infrastructure they run on, license costs, etc. For a small
team, adding any component requiring an additional skill set is a waste of its
bandwidth. Some of the commercial rule engines have steep learning curves. In
this article, we attempt to illustrate how we succeeded in maintaining rules
outside the source code for a medium-scale system running on a Java tech stack
like Spring Boot, making it easier for other users to customize these rules.
This approach is suitable for a team that cannot afford a dedicated rule engine,
its infrastructure, maintenance, recurring costs, etc., and whose domain experts
have a foundation in software or whose members wear multiple hats.
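
The general idea of keeping rules outside the source code can be sketched as follows. This is not the article's implementation, which targets a Java stack such as Spring Boot; it is a small Python illustration in which the rule format, the `RULES_JSON` text (standing in for an external file that domain experts edit), and the `load_rules` and `evaluate` helpers are all hypothetical.

```python
import json
import operator
from typing import Any, Dict, List

# Comparison names that rule authors may use; anything else raises KeyError.
OPERATORS = {
    "eq": operator.eq,
    "gt": operator.gt,
    "gte": operator.ge,
    "lt": operator.lt,
    "lte": operator.le,
}

# Stand-in for an external rules file maintained outside the codebase.
RULES_JSON = """
[
  {"name": "bulk-discount", "field": "quantity", "op": "gte",
   "value": 100, "action": "apply_discount"},
  {"name": "manual-review", "field": "amount", "op": "gt",
   "value": 5000, "action": "flag_for_review"}
]
"""


def load_rules(text: str) -> List[Dict[str, Any]]:
    """Parse the externally maintained rule definitions."""
    return json.loads(text)


def evaluate(rules: List[Dict[str, Any]], record: Dict[str, Any]) -> List[str]:
    """Return the actions of every rule whose condition matches the record."""
    actions = []
    for rule in rules:
        compare = OPERATORS[rule["op"]]
        field = rule["field"]
        if field in record and compare(record[field], rule["value"]):
            actions.append(rule["action"])
    return actions


if __name__ == "__main__":
    rules = load_rules(RULES_JSON)
    print(evaluate(rules, {"quantity": 150, "amount": 1200}))
```

Changing a threshold or adding a rule then means editing the rules text, not redeploying code, which is the trade-off the article weighs against running a full rule engine.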

Quote for the day:

"Coaching is unlocking a person's potential to maximize their own performance.
It is helping them to learn rather than teaching them." -- John Whitmore
