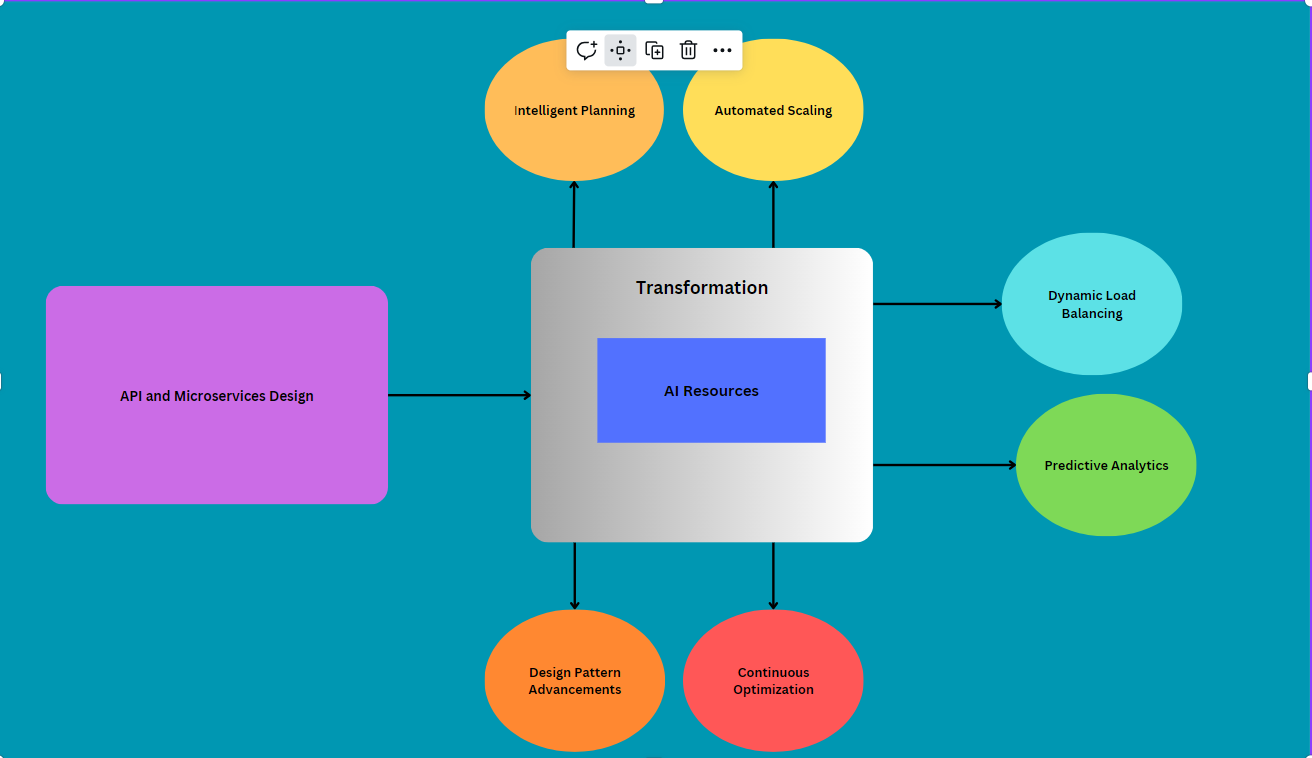

Optimize AI at Scale With Platform Engineering for MLOps

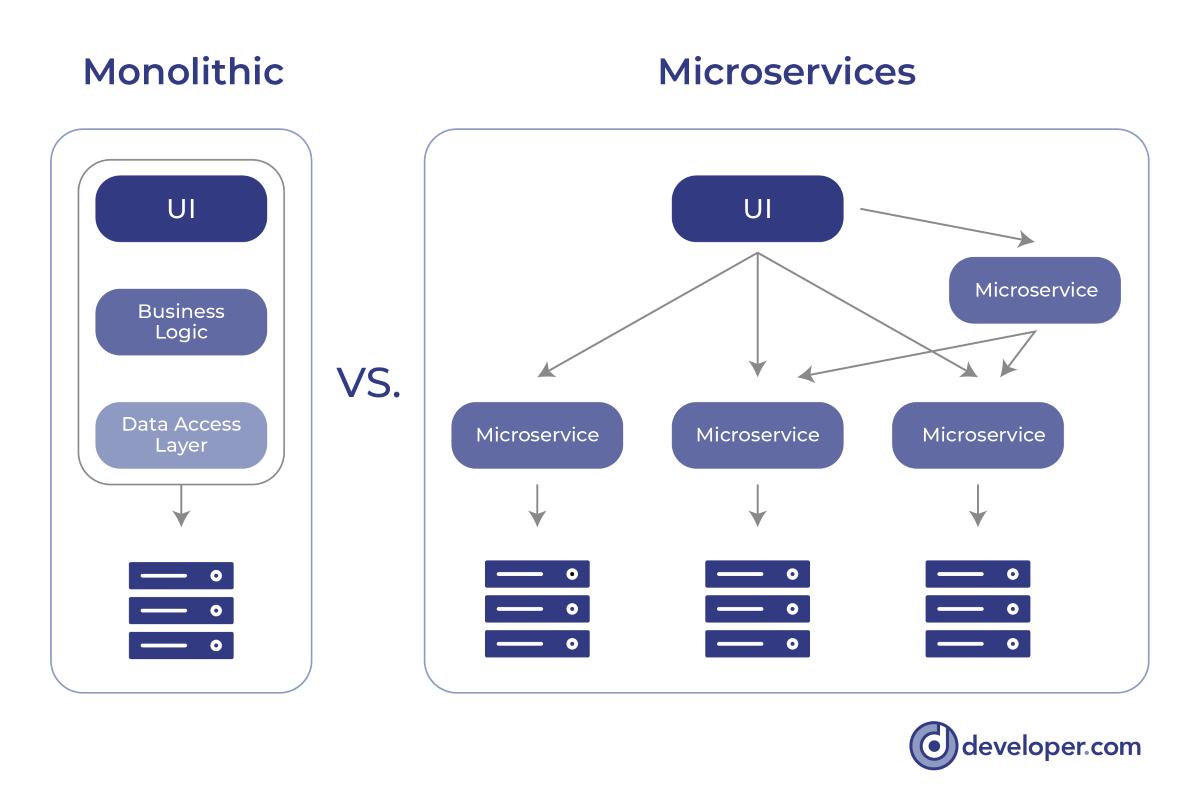

Just as platform engineering emerged from the DevOps movement to streamline app

development workflows, so too must platform engineering streamline the workflows

of MLOps. To achieve this, one must first recognize the fundamental differences

between DevOps and MLOps. Only then can one produce an effective platform

engineering solution for ML engineers. To enable AI at scale, enterprises must

commit to developing, deploying and maintaining platform engineering solutions

that are purpose-built for MLOps. Whether due to data governance requirements or

practical concerns about moving vast volumes of data over significant

geographical distances, MLOps at scale requires enterprises to utilize a

spoke-and-wheel approach. Model development and training occur centrally,

trained models are distributed to edge locations for fine-tuning on local data,

and fine-tuned models are deployed close to where end users interact with them

and the AI applications they leverage. ... Enterprises should hire engineers

with MLOps experience to fill platform engineering roles appropriately.

According to research from the World Economic Forum, AI is projected to create

around 97 million new jobs by 2025.

The Blockchain Integrity Act: Latest Attempt to Restrict Financial Privacy

In short, the Blockchain Integrity Act would first establish a two‐year

moratorium that prohibits financial institutions from going anywhere near

cryptocurrency that has been routed through a mixer. With that two‐year

moratorium in place, the Blockchain Integrity Act would then require the

Department of the Treasury to study how people use mixers and other

privacy‐enhancing technology. ... The second half of the legislation—the

request for a study—is less concerning if it’s considered alone and without the

surrounding context. The request seeks information regarding different types of

privacy‐enhancing technology, illicit and legitimate use history, and an

analysis of what the government’s role might be here. Those are all reasonable

inquiries. Again, without additional context, it’s an encouraging sign that

Representative Casten is interested in learning more about how this technology

is used for both better and worse. Yet what isn’t encouraging is that

Representative Casten introduced the bill saying that “until we’ve studied

[privacy enhancing technologies like mixers] and have a good audit trail, the

presumption should be that these are money laundering channels.”

Some strategies for CISOs freaked out by the specter of federal indictments

“Some CISOs feel like they’re the frog that’s in the water that’s starting to

boil, and they don’t like that feeling, and they want to make sure that

they’re doing the right things to navigate that heat,” Sullivan said during a

panel discussion, “CISOs Under Indictment: Case Studies, Lessons Learned, and

What’s Next,” at this year’s RSA Conference. The panel of current and former

CISOs emphasized that in this environment, CISOs need to document their roles

and responsibilities, involve the right people in incident response and

decision-making processes, and have the courage to stand up for their

convictions to minimize the risk that they will face the same fates as

Sullivan and Brown. ... “The heat is up because the reality is you’ve got

these entities in government who are responding to a huge rise in cybercrime

in a way that no one can hide. It’s not like in the old days when if an

incident happened, most people wouldn’t notice when stuff happens. Today, the

whole world notices,” he said. Blauner’s bottom-line advice to CISOs to

protect themselves is to “take a look at every governance document you’ve got

and really make sure that it’s crystal clear about roles and responsibilities,

especially around who makes risk management decisions.”

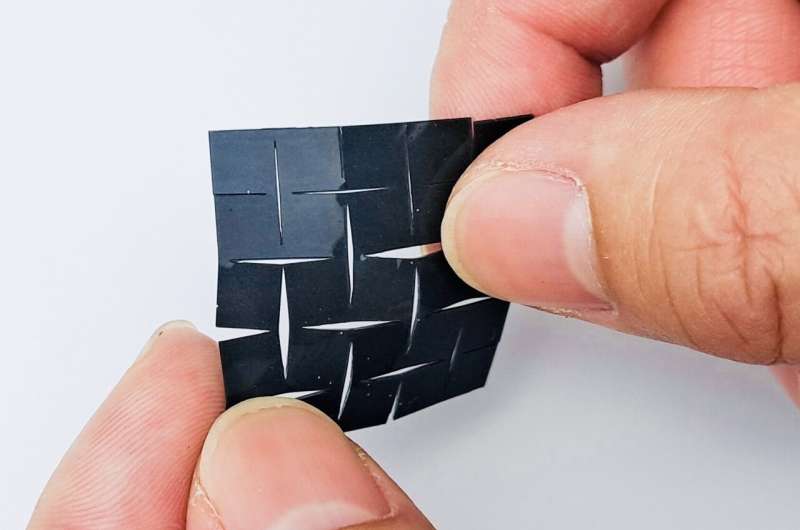

Wearable devices can now harvest our brain data. Australia needs urgent privacy reforms

In a background paper published earlier this year, the Australian Human Rights

Commission identified several risks to human rights that neurotechnology may

pose, including rights to privacy and non-discrimination. Legal scholars,

policymakers, lawmakers and the public need to pay serious attention to the

issue. The extent to which tech companies can harvest cognitive and neural

data is particularly concerning when that data comes from children. This is

because children fall outside of the protection provided by Australia’s

privacy legislation, as it doesn’t specify an age when a person can make their

own privacy decisions. The government and relevant industry associations

should conduct a candid inquiry to investigate the extent to which

neurotechnology companies collect and retain this data from children in

Australia. The private data collected through such devices is also

increasingly fed into AI algorithms, raising additional concerns. These

algorithms rely on machine learning, which can manipulate datasets in ways

unlikely to align with any consent given by a user.

Cloud environments beyond the Big Three

The resurgence and innovation in edge computing and on-premises technology

further support the trend toward diversification as data generation and

consumption locations continue to spread geographically. ... Edge computing

addresses these limitations by processing data closer to where it is

generated. This drastically reduces latency and enhances the user experience

in applications such as IoT, retail tech, and smart manufacturing. Although

many consider edge computing to be small devices, it also includes entire data

centers and smaller server installations that exist to serve a specific

business location. Many enterprises don’t see the wisdom of sending their data

on a 2,000-mile round trip to the point of presence for a public cloud

provider, which happens more often than we understand. Additionally, although

the cloud offers good scalability and flexibility, concerns over data

sovereignty and security continue to push certain industries towards

on-premises solutions. Sensitive data and critical applications in sectors

such as finance, government, and healthcare often necessitate keeping data

in-house under strict regulatory frameworks.

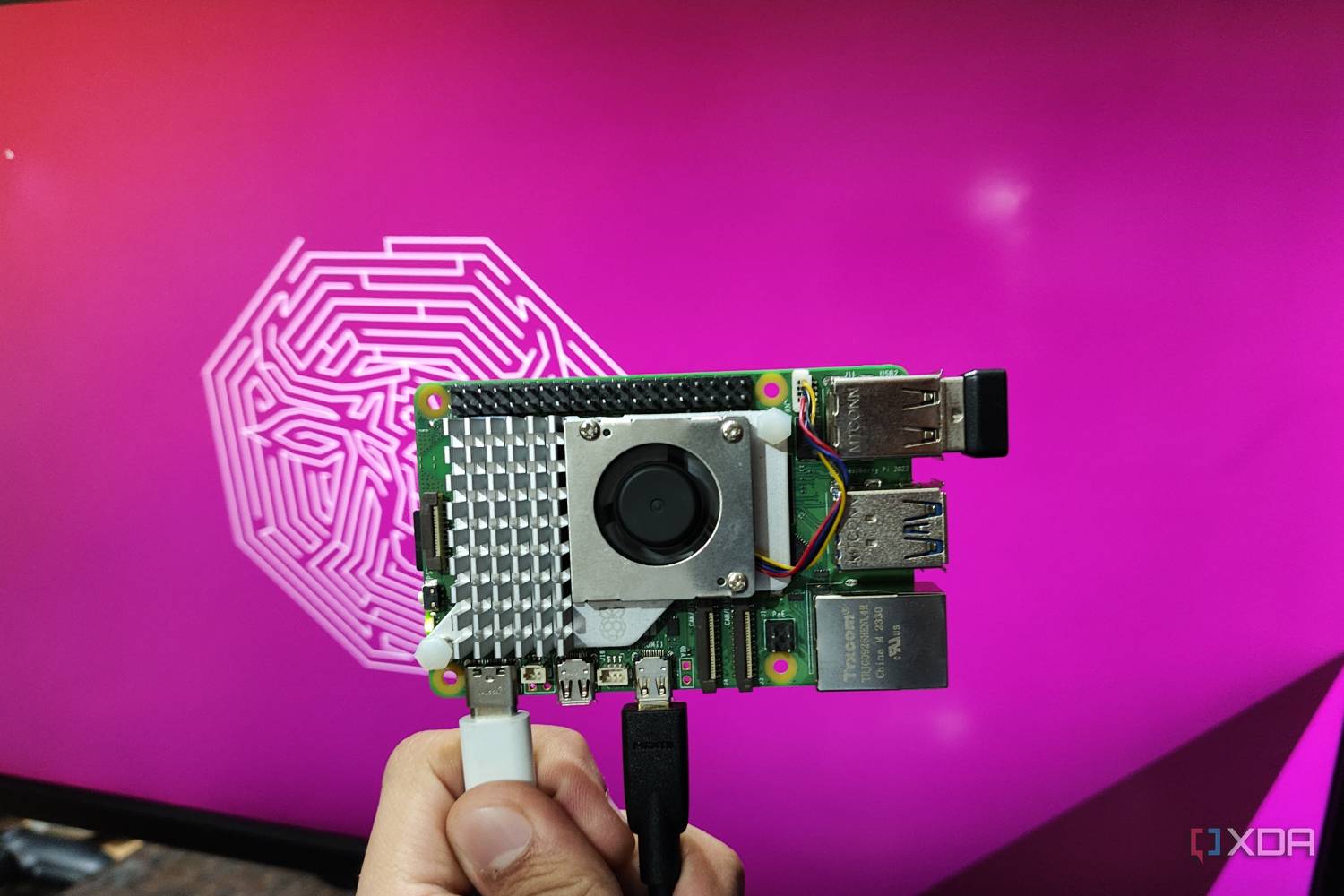

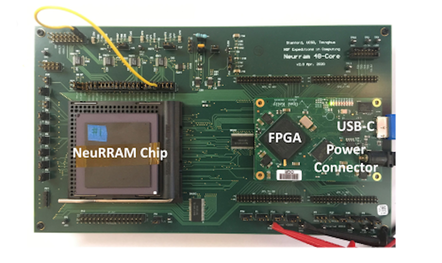

Controlling chaos using edge computing hardware: Digital twin models promise advances in computing

Using machine learning tools to create a digital twin (a virtual copy) of an

electronic circuit that exhibits chaotic behavior, researchers found that they

were successful at predicting how it would behave and at using that

information to control it. Many everyday devices, like thermostats and cruise

control, utilize linear controllers—which use simple rules to direct a system

to a desired value. Thermostats, for example, employ such rules to determine

how much to heat or cool a space based on the difference between the current

and desired temperatures. Yet because of how straightforward these algorithms

are, they struggle to control systems that display complex behavior, like

chaos. As a result, advanced devices like self-driving cars and aircraft often

rely on machine learning-based controllers, which use intricate networks to

learn the optimal control algorithm needed to operate efficiently. However,

these algorithms have significant drawbacks, the most demanding of which is

that they can be extremely challenging and computationally expensive to

implement.
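
The thermostat rule described above, where the output depends on the difference between the current and desired temperatures, can be illustrated with a minimal proportional-control sketch. This is not the researchers' method or any real thermostat's firmware. The function names, the `gain` value, and the toy room model (where the temperature changes by exactly the commanded amount each step) are all assumptions made for illustration.

```python
from typing import List


def proportional_control(current_temp: float, target_temp: float, gain: float = 0.5) -> float:
    """Return a heating (+) or cooling (-) command proportional to the error.

    `gain` is a hypothetical tuning constant chosen for this sketch.
    """
    error = target_temp - current_temp
    return gain * error


def simulate(start_temp: float, target_temp: float, steps: int = 20, gain: float = 0.5) -> List[float]:
    """Apply the linear rule repeatedly to a toy room.

    Assumption: the room temperature changes by exactly the commanded
    amount each step, which real rooms do not do.
    """
    temps = [start_temp]
    for _ in range(steps):
        command = proportional_control(temps[-1], target_temp, gain)
        temps.append(temps[-1] + command)
    return temps


if __name__ == "__main__":
    history = simulate(start_temp=15.0, target_temp=21.0)
    print(f"final temperature: {history[-1]:.3f}")
```

In this toy model the error shrinks by a fixed fraction each step, so the temperature settles smoothly at the target. A rule this simple has no way to anticipate the sensitive, rapidly diverging behavior of a chaotic system, which is the limitation the article points to.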

Digital recreations of dead people need urgent regulation, AI ethicists say

Such services, which are already technically possible to create and legally

permissible, could let users upload their conversations with dead relatives to

“bring grandma back to life” in the form of a chatbot, researchers from the

University of Cambridge suggest. They may be marketed at parents with terminal

diseases who want to leave something behind for their child to interact with,

or simply sold to still-healthy people who want to catalogue their entire life

and create an interactive legacy. But in each case, unscrupulous companies and

thoughtless business practices could cause lasting psychological harm and

fundamentally disrespect the rights of the deceased, the paper argues. “Rapid

advancements in generative AI mean that nearly anyone with internet access and

some basic knowhow can revive a deceased loved one,” said Dr Katarzyna

Nowaczyk-Basińska, one of the study’s co-authors at Cambridge’s Leverhulme

centre for the future of intelligence (LCFI). “This area of AI is an ethical

minefield. It’s important to prioritise the dignity of the deceased, and

ensure that this isn’t encroached on by financial motives of digital afterlife

services, for example.”

How To Take The A-I-M Approach To Leadership

I like to break down the concept of taking aim into three components, which I

call the A-I-M approach: appreciation, imagination and motivation. The common

thread across all three of these principles is communication—and leaders

cannot be effective without it. Showing genuine gratitude is a foundational

aspect of effective leadership. Expressing heartfelt encouragement

demonstrates empathy and humility. And this simple show of appreciation

directly benefits the organization by motivating employees to continue

contributing to the company’s success and nurturing their loyalty. ... A

leader’s job is not to be the author of all ideas but to inspire team members

to tap into their imaginations and present fresh approaches to solving

problems, delivering solutions and communicating with clients. ... One

of the responsibilities of a leader is to understand what moves their teams

into action. As author and leadership coach John Maxwell famously wrote, “A

leader is great not because of his or her power, but because of his or her

ability to empower others.” I call that motivation.

Colorado AI legislation further complicates compliance equation

CIOs might struggle with the bill’s language because the focus is on whether

AI — in any form — helps make “consequential decisions” that could impact

Colorado residents. The bill defines consequential decision as being any

decision “that has a material legal or similarly significant effect on the

provision or denial to any consumer,” which includes educational enrollment,

employment or employment opportunity, financial or lending service, healthcare

services, housing, insurance, or a legal service. ... Another provision could

prove onerous for CIOs who do not have full knowledge of every AI

implementation in use in their environment, as it requires companies to make

“a publicly available statement summarizing the types of high-risk systems

that the deployer currently deploys, how the deployer manages any known or

reasonably foreseeable risks of algorithmic discrimination that may arise from

deployment of each of these high-risk systems and the nature, source, and

extent of the information collected and used.” ... One especially dicey area

in the legislation that should concern CIOs is when AI — especially generative

AI — acts on its own.

AI's Game-Changing Role in Finance and Audit Processes

Auditors can face several risks when using AI. These risks include

over-reliance on AI-generated insights, potential biases and quality issues

from incomplete or poor-quality data, and cybersecurity threats such as

the hacking of confidential data from AI

websites. Thus, it is necessary to ensure compliance and implement

safeguarding measures. Following are some of the possible measures that can be

implemented to mitigate the above-mentioned risks. Human judgement: While AI

is a great tool for helping auditors

and organisations streamline their existing processes, it works on standard

algorithms that can’t be customised on a case-by-case basis. Therefore, to ensure

the accuracy of the results, a human review step can be put in place to

check and validate the output. Updating back-end

algorithms: The better the algorithms, the better the results. Regular updates

to the back-end algorithms can yield more accurate and improved outputs,

adapting to changing scenarios and data formats, ultimately mitigating the

risk of incorrect or inaccurate results.

Quote for the day:

"Don't find fault, find a remedy." -- Henry Ford
