Quote for the day:

"Give whatever you are doing and whoever you are with the gift of your attention." -- Jim Rohn

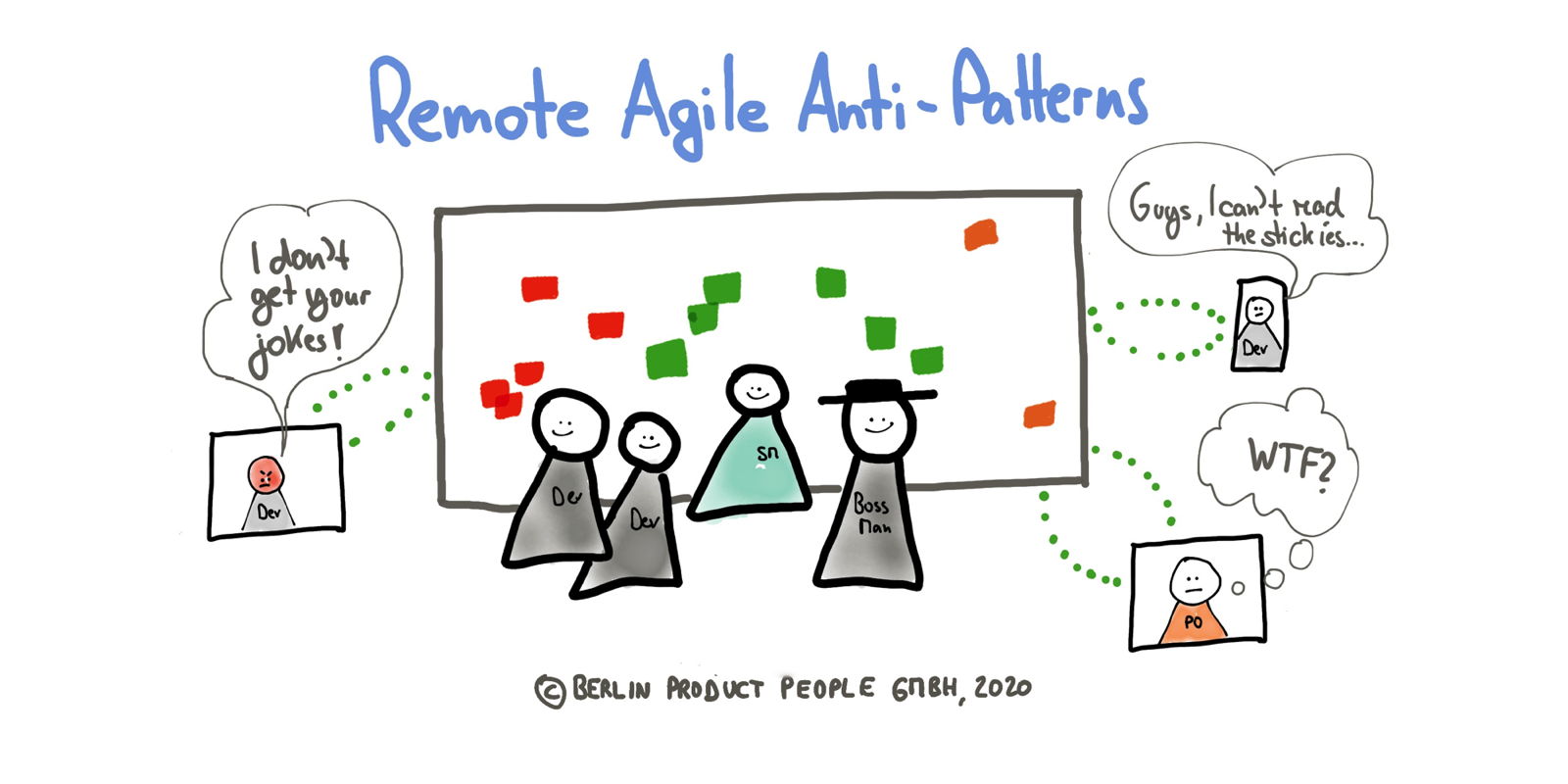

The incredible shrinking shelf life of IT skills

IT workers have seen the half-life of IT skills compressed even more dramatically, with researchers saying some skills today go from hot to not in less than two years, sometimes in mere months. That is putting a lot of pressure on IT teams. As Anand says, “Technology is developing faster than tech workers can upskill.” The ever-quickening churn in the IT skills market is upending more than individuals’ career plans, too. It is affecting the entire IT function and the organization as a whole. That, in turn, is forcing CIOs, HR leaders, and other executives to devise strategies that create an environment where workers are capable of reinvention at a rapid clip. ... CIOs and IT advisers also say the shortening shelf life of skills is not experienced universally, as some organizations still have a lot of legacy tech in place. Data from the 2025 Tech Salary Report from Dice, a job-search platform for tech professionals, hints at these dual realities. ... “Certain skills will come up very quickly and then go away very quickly, so now that person has to be seen as someone who can build up skills quickly,” he adds. Info-Tech Research Group’s Leier-Murray says CIOs must free up time for their staffers to upskill and provide more coaching so that team members keep pace with the work demands of a modern IT shop. She and others advise CIOs to hire workers who have a growth mindset, or to cultivate one in existing staffers. ... “The way that everybody is working is continuously being redefined,” Jones says.

Are Organizations Overinvesting in an AI Bubble? - Part 1

Demand for generative AI reasoning is driving investment, said Arun Chandrasekaran, distinguished vice president analyst at Gartner. "These partnerships signal the model providers' insatiable need for compute to satisfy the enormous growth and usage, mainly in the consumer AI space." When asked whether AI is in a bubble, Chandrasekaran said, "It is hard to predict if there is a bubble and when it will burst. But we'll likely see a correction and shake-out among players that can't deliver value to users and build profitable growth strategies." Continuously pouring large sums into AI companies at high valuations "is unsustainable," Umesh Padval, investor, entrepreneur and former managing director of Thomvest Ventures, told Information Security Media Group. ... "Enterprises are excited about gen AI's speed of delivery. However, the punitively high cost of maintaining, fixing or replacing AI-generated artifacts such as code, content and design can erode gen AI's promised return on investments," Chandrasekaran said. "By establishing clear standards for reviewing and documenting AI-generated assets and tracking technical debt metrics in IT dashboards, enterprises can take proactive steps to prevent costly disruptions." Chandrasekaran warns against overinvesting without first determining the "value path." He said organizations should realize that the expected payoff, including ROI, is much longer-term, which can create risk.
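
Chandrasekaran's advice to document AI-generated assets and track technical debt metrics can be pictured as a small inventory rollup feeding a dashboard. The sketch below is only an illustration under assumptions: the `AiArtifact` record, its fields, and the three metrics are hypothetical choices, not a Gartner-defined standard or any dashboard product's schema.

```typescript
// Hypothetical inventory record for an AI-generated artifact.
type ArtifactKind = "code" | "content" | "design";

interface AiArtifact {
  id: string;
  kind: ArtifactKind;
  reviewed: boolean;   // reviewed by a person against the organization's standard
  documented: boolean; // provenance recorded (tool, prompt, owner)
  reworkHours: number; // hours spent maintaining, fixing or replacing it so far
}

// Dashboard-ready metrics derived from the inventory (an illustrative choice of metrics).
interface DebtMetrics {
  total: number;
  unreviewedShare: number;
  undocumentedShare: number;
  reworkHoursByKind: Record<ArtifactKind, number>;
}

function computeDebtMetrics(artifacts: AiArtifact[]): DebtMetrics {
  const reworkHoursByKind: Record<ArtifactKind, number> = { code: 0, content: 0, design: 0 };
  let unreviewed = 0;
  let undocumented = 0;
  for (const a of artifacts) {
    if (!a.reviewed) unreviewed += 1;
    if (!a.documented) undocumented += 1;
    reworkHoursByKind[a.kind] += a.reworkHours;
  }
  const total = artifacts.length;
  return {
    total,
    // Report 0 rather than NaN for an empty inventory.
    unreviewedShare: total === 0 ? 0 : unreviewed / total,
    undocumentedShare: total === 0 ? 0 : undocumented / total,
    reworkHoursByKind,
  };
}

const inventory: AiArtifact[] = [
  { id: "auth-refactor", kind: "code", reviewed: true, documented: true, reworkHours: 6 },
  { id: "faq-page", kind: "content", reviewed: false, documented: true, reworkHours: 2 },
  { id: "billing-helper", kind: "code", reviewed: false, documented: false, reworkHours: 11 },
  { id: "landing-mockup", kind: "design", reviewed: true, documented: false, reworkHours: 0 },
];

const debt = computeDebtMetrics(inventory);
console.log(debt.unreviewedShare, debt.reworkHoursByKind.code); // 0.5 17
```

Tracking these figures over time is one way to make the maintenance cost of AI-generated artifacts visible before it erodes ROI; which metrics actually matter is an organizational decision.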

The CISO’s greatest risk? Department leaders quitting

The trend of talented and dedicated functional security leaders quietly eyeing the exit is not an anomaly; it’s a predictable outcome of systemic issues that have been building within the profession for years, says Brandyn Fisher, V-CISO capability lead at Centric Consulting. “As CISOs, we are seeing our most critical layer of management, our directors and senior managers, burn out,” Fisher says. “This isn’t happening in a vacuum. It’s the result of a dangerous convergence of unrealistic expectations, resource starvation, and a fundamentally broken career model.” Security leaders operate on an unsustainable premise, Fisher says. “We expect our leaders to be right every single time, while an attacker only needs to be right once. This creates a culture of hyper-vigilance that is simply not sustainable 24/7/365.” ... Another issue is tool creep, with 40-plus poorly integrated security tools handling the same alerts, Malik says. There is also “role overload and context switching” on projects, as well as relentless audit cycles, reviews, and meetings, which Malik says leave little time for career development. “Many organizations have a CISO plus a flat layer of ‘heads of X’” who don’t always have a clear path to moving into higher levels, she says. And CISOs are constantly asking their leaders to do more with less, Fisher adds. “As cybersecurity is still widely viewed as a cost center rather than a business enabler, budgets are the first to be slashed while the threat landscape grows exponentially,” he says.

Preparing for the Next Wave of AI: Agentic Workflows

Agentic AI blends intelligence and automation into a single operational layer that can manage outcomes rather than just execute steps. Instead of relying on humans to define every possible rule, agentic systems understand goals and context. They can reason through multiple inputs, choose the best path forward, and adapt as conditions change. ... Optimizing for agentic AI isn’t just about adding smarter tools; it begins with re-architecting the environment those tools inhabit. Organizations that thrive will have integrated, high-quality data foundations and unified workflows. Fragmented systems or poor data hygiene can cripple an AI agent’s ability to reason effectively. For many enterprises, this means modernizing the systems of record (CRMs, ERPs, and HR platforms) that make up their digital operations. Equally important is the need for well-defined guardrails. Businesses must define what good decisions look like, the limits of an agent’s autonomy, and the ethical or compliance constraints that must be followed. This balance between freedom and control is critical: with too many restrictions, the AI can’t act usefully, but with too few, it risks acting outside the organization’s intentions. ... On the flip side, unclear use cases or business value was the top answer for other respondents. While both groups cited risk and compliance concerns as a top challenge, it’s clear there’s a divide on where employees fit into the agentic AI puzzle.

The privacy tension driving the medical data shift nobody wants to talk about

Current frameworks lock data into silos. These isolated systems make it difficult to combine information across hospitals, labs, and research groups. This limits what can be learned from real-world evidence, which is especially important for improving treatments, studying outcomes, and reducing costs. ... Outdated rules can worsen inequities by limiting access to new tools and restricting research to well-funded institutions. This contradicts the principle of justice, which is meant to promote fairness and access. The authors emphasize that privacy still matters. They write that “privacy protections exist for many reasons, addressing risks to individual patients as well as the public at large.” But they argue that privacy cannot stand alone as the primary value in a system where data powers both scientific progress and new forms of risk. ... The most significant proposal in the research is a gradual move toward an open data model. In this approach, healthcare data would be treated as a shared resource rather than locked property. Access would come with responsibilities and consequences for misuse instead of blanket restrictions on legitimate use. ... A key argument is that penalties should target bad behavior rather than access. Current rules assume data must be kept behind walls to prevent harm, even though perfect anonymization is no longer possible. The researchers argue that the system should focus on preventing malicious reidentification and unethical use. This approach, they say, is more realistic and leaves room for innovation.

The expanding role of the CISO

New research from HackerOne has revealed that 84 per cent of CISOs are now responsible for AI security, while 82 per cent are charged with protecting data privacy. The result is an already burdened CISO being asked to monitor and secure technologies that are evolving at breakneck speed. New technology is constantly being implemented across businesses, and when complex technologies such as AI are adopted by 78 per cent of organisations (a 23 per cent increase from the previous year), the scale and intensity of the task become clear. This rapid adoption, often driven by different parts of the business eager for a competitive edge, creates entirely new attack surfaces that must remain under constant surveillance so that no security risks go unnoticed. For a CISO, this task can seem insurmountable: even the most skilled internal teams will struggle if they lack specialised knowledge. Faced with a variety of unique vulnerabilities, CISOs will need the right tools and support to keep the business safe. ... Unfortunately, the lack of talent and resources is a significant barrier to adopting this full-scale offensive security programme, with 39 per cent of CISOs citing the shortage of skilled personnel as a major challenge. Globally, the cybersecurity industry urgently needs around four million more professionals to fill the current gap in key roles. However, taking a crowdsourced security approach offers a powerful, scalable solution for businesses to tackle this problem.

A Day in the Life of a Connected Patient: How Real-Time Data Is Powering Smarter Care

Health data arrives in bursts and fragments. It comes from different tools, moves at different speeds, and rarely follows the same format. Making sense of it all takes more than storage; it takes design that expects disorder and knows how to organize it. Data pipelines help bridge this complexity. They link together systems such as EHRs, insurance claims, wearables, and diagnostic tools so that information can move securely and consistently. Standards like HL7 and FHIR help make these handoffs work, even across aging platforms. As the data moves, it’s shaped into something usable: behind the scenes, it’s cleaned, structured, and enriched before reaching analytics teams or clinical systems. The work happens in moments, but its impact is lasting. ... Discharge no longer means disconnection. For patients managing chronic conditions, remote care programs have changed what happens after they leave the hospital. One such initiative pulled continuous data from wearables, implants, and diagnostic devices into a secure cloud system. Care teams could monitor trends, identify risks early, and step in before issues got worse. In patients with chronic conditions, timely support made a measurable difference: readmissions dropped by almost 40%. Simple check-ins and reminders helped people stay on course, not through pressure but with steady, well-timed guidance. At scale, the results were even clearer: for every 10,000 patients, the program saved more than USD 1 million a year.
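
The pipeline steps described above (taking data from differently shaped sources, cleaning it, and structuring it into one consistent record) can be sketched in a few functions, followed by the kind of simple trend check a remote-monitoring team might run on the result. This is a minimal illustration under stated assumptions: the two input formats, the `Observation` shape (loosely inspired by FHIR's Observation resource but not FHIR-conformant), and the window-and-threshold trend rule are hypothetical, are not taken from the program described, and carry no clinical meaning.

```typescript
// Two hypothetical raw formats arriving from different sources.
interface WearableReading {
  patientRef: string;
  hr: number;       // heart rate in beats per minute
  tsMillis: number; // epoch milliseconds
}

interface EhrVital {
  patient: string;
  code: "HR" | "SPO2";
  value: string;    // assumed to arrive as text
  takenAt: string;  // ISO-8601 timestamp
}

// Simplified common record (loosely modelled on a FHIR Observation; not FHIR-conformant).
interface Observation {
  patientId: string;
  metric: "heart_rate" | "spo2";
  value: number;
  unit: "bpm" | "%";
  time: string;     // ISO-8601 in UTC, e.g. "2025-01-01T08:00:00.000Z"
  source: "wearable" | "ehr";
}

function fromWearable(r: WearableReading): Observation {
  return {
    patientId: r.patientRef,
    metric: "heart_rate",
    value: r.hr,
    unit: "bpm",
    time: new Date(r.tsMillis).toISOString(),
    source: "wearable",
  };
}

// Cleaning step: returns null when the value is non-numeric or the timestamp is unparseable.
function fromEhr(v: EhrVital): Observation | null {
  const value = parseFloat(v.value);
  const t = Date.parse(v.takenAt);
  if (isNaN(value) || isNaN(t)) return null;
  const isHr = v.code === "HR";
  return {
    patientId: v.patient,
    metric: isHr ? "heart_rate" : "spo2",
    value,
    unit: isHr ? "bpm" : "%",
    time: new Date(t).toISOString(),
    source: "ehr",
  };
}

// Merge both feeds into one chronologically ordered stream.
function normalize(wearables: WearableReading[], vitals: EhrVital[]): Observation[] {
  const out: Observation[] = wearables.map(fromWearable);
  for (const v of vitals) {
    const o = fromEhr(v);
    if (o !== null) out.push(o);
  }
  // toISOString() output has a fixed format, so text order equals time order.
  return out.sort((a, b) => (a.time < b.time ? -1 : a.time > b.time ? 1 : 0));
}

// Flag a rise when the mean of the latest `windowSize` readings exceeds the mean of
// the preceding `windowSize` readings by more than `threshold` (hypothetical rule).
function risingTrend(obs: Observation[], patientId: string, metric: Observation["metric"],
                     windowSize: number, threshold: number): boolean {
  const series = obs.filter((o) => o.patientId === patientId && o.metric === metric).map((o) => o.value);
  if (series.length < 2 * windowSize) return false; // not enough data yet
  const mean = (xs: number[]) => xs.reduce((s, x) => s + x, 0) / xs.length;
  const recent = series.slice(-windowSize);
  const previous = series.slice(-2 * windowSize, -windowSize);
  return mean(recent) - mean(previous) > threshold;
}

const t0 = Date.UTC(2025, 0, 1, 8); // 2025-01-01T08:00Z
const hour = 3600 * 1000;
const stream = normalize(
  [
    { patientRef: "p1", hr: 70, tsMillis: t0 },
    { patientRef: "p1", hr: 72, tsMillis: t0 + 2 * hour },
    { patientRef: "p1", hr: 88, tsMillis: t0 + 4 * hour },
    { patientRef: "p1", hr: 92, tsMillis: t0 + 6 * hour }
  ],
  [
    { patient: "p1", code: "HR", value: "71", takenAt: "2025-01-01T09:00:00Z" },
    { patient: "p1", code: "SPO2", value: "97", takenAt: "2025-01-01T09:00:00Z" },
    { patient: "p1", code: "HR", value: "n/a", takenAt: "2025-01-01T10:30:00Z" } // dropped by cleaning
  ]
);
console.log(stream.length); // 6
console.log(risingTrend(stream, "p1", "heart_rate", 2, 10)); // true
```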

Micro-Frontends: A Sociotechnical Journey Toward a Modern Frontend Architecture

As organisations demand faster delivery, greater autonomy, and continuous modernisation, our frontend architectures must evolve in step with our teams. The distributed frontend era is here, but it’s not defined by new frameworks or fancy tooling. It’s defined by the way we align people, processes, and architecture around a shared goal: delivering value faster without losing control. ... Micro-frontends are often introduced as a technical pattern: a way to break a large frontend into smaller, independently deployable pieces. But that framing misses the point. Micro-frontends are not a new stack; they are a new way of structuring work. They represent a sociotechnical shift, one that mirrors Conway’s Law, which tells us that system design reflects communication structures. When teams are forced to coordinate through a single release train, decision-making slows. When every change requires syncing across multiple domains, creativity fades. The result is not just technical debt but organisational inertia. Micro-frontends reverse that dynamic. They allow teams to own slices of the product end-to-end (domain, design, delivery) without waiting for centralised approval. ... But micro-frontends are not a silver bullet. For small teams or products with limited complexity, the overhead might outweigh the benefits. The goal is not to adopt a pattern for its own sake but to solve concrete problems: delivery bottlenecks, scaling limits, and the inability to modernise safely.
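
The article stresses that micro-frontends are about structuring work rather than tooling, but the idea of "independently deployable pieces" still rests on a small technical contract between a host shell and each team's slice. The sketch below is a hypothetical illustration of that contract: the `MicroFrontend` interface, the `Shell` class, the team names, and the route prefixes are assumptions. The loading step, which real setups commonly handle with techniques such as Webpack Module Federation, import maps, or web components, is deliberately left out, and a plain `Container` object stands in for a DOM element so the example runs outside a browser.

```typescript
// Stand-in for a DOM element so the sketch runs outside a browser (assumption).
interface Container {
  html: string;
}

// Hypothetical contract each independently deployed slice agrees to expose.
interface MicroFrontend {
  name: string;
  team: string;    // owning team, mirroring the communication structure
  version: string; // each slice ships on its own release cadence
  mount(target: Container): void;
  unmount(target: Container): void;
}

// The host shell only routes and composes; it knows nothing about each slice's internals.
class Shell {
  private routes: Array<{ prefix: string; mfe: MicroFrontend }> = [];
  private active: MicroFrontend | null = null;

  register(prefix: string, mfe: MicroFrontend): void {
    this.routes.push({ prefix, mfe });
  }

  // Naive longest-prefix match: "/catalog/search" wins over "/catalog".
  resolve(path: string): MicroFrontend | null {
    let best: MicroFrontend | null = null;
    let bestLen = -1;
    for (const r of this.routes) {
      if (path.indexOf(r.prefix) === 0 && r.prefix.length > bestLen) {
        best = r.mfe;
        bestLen = r.prefix.length;
      }
    }
    return best;
  }

  // Unmount the current slice if it changes, then mount the slice that owns the path.
  navigate(path: string, target: Container): string | null {
    const next = this.resolve(path);
    if (this.active !== null && this.active !== next) this.active.unmount(target);
    if (next !== null && next !== this.active) next.mount(target);
    this.active = next;
    return next !== null ? next.name : null;
  }
}

// Toy slice that renders a labelled section into the container.
function fragment(name: string, team: string, version: string): MicroFrontend {
  return {
    name,
    team,
    version,
    mount: (t: Container) => {
      t.html = `<section data-mfe="${name}@${version}">${name}</section>`;
    },
    unmount: (t: Container) => {
      t.html = "";
    },
  };
}

const shell = new Shell();
shell.register("/checkout", fragment("checkout", "payments-team", "3.2.0"));
shell.register("/catalog", fragment("catalog", "discovery-team", "1.8.1"));
shell.register("/catalog/search", fragment("search", "search-team", "0.9.4"));

const root: Container = { html: "" };
console.log(shell.navigate("/catalog/search?q=shoes", root)); // search
console.log(root.html); // <section data-mfe="search@0.9.4">search</section>
```

Because each slice carries its own `team` and `version`, the search team could ship `0.9.5` without a shared release train. The prefix matching is intentionally simplistic (for example, `/catalogue` would also match `/catalog`).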

Software Testing in the AI Era - Evolving Beyond the Pyramid

The rise (and fall?) of shadow AI

“The security surface extends far beyond traditional concerns. For AI systems, the model and data become the primary attack vectors,” said Meerah Rajavel, chief information officer at Palo Alto Networks, on the company’s own blog. “While frontier models from providers like Google and OpenAI carry lower risk due to extensive testing, most AI applications incorporate multiple specialised models.” ... “Organisations must scan models for vulnerabilities, manage permissions appropriately and protect data access. Runtime security becomes critical because prompts function like code and the LLM acts as an operating system. That has to be protected like a software supply chain,” said Rajavel.
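
Rajavel's point that prompts behave like code suggests treating every model-initiated action as a privileged call that must pass an explicit permission check at runtime. The following is a minimal deny-by-default sketch under assumptions: the `ToolCall` and `AppPolicy` shapes, the tool names, and the prefix-based data scopes are all hypothetical and do not correspond to Palo Alto Networks' products or to any specific LLM framework's API.

```typescript
// Hypothetical description of an action an LLM-backed application asks to perform.
interface ToolCall {
  app: string;       // which AI application issued the call
  tool: string;      // e.g. "search_docs"
  dataScope: string; // data the call would touch, e.g. "finance/invoices"
}

// Hypothetical per-application policy.
interface AppPolicy {
  allowedTools: string[];
  allowedScopes: string[]; // prefix match: "finance/" covers "finance/invoices"
}

type Decision = { allowed: true } | { allowed: false; reason: string };

// Deny by default: unknown apps, unlisted tools and out-of-scope data are all refused.
function authorize(call: ToolCall, policies: { [app: string]: AppPolicy }): Decision {
  const policy = policies[call.app];
  if (!policy) {
    return { allowed: false, reason: "unknown app: " + call.app };
  }
  if (policy.allowedTools.indexOf(call.tool) === -1) {
    return { allowed: false, reason: "tool not permitted: " + call.tool };
  }
  const inScope = policy.allowedScopes.some((s) => call.dataScope.indexOf(s) === 0);
  if (!inScope) {
    return { allowed: false, reason: "data scope not permitted: " + call.dataScope };
  }
  return { allowed: true };
}

const policies: { [app: string]: AppPolicy } = {
  "support-bot": { allowedTools: ["search_docs"], allowedScopes: ["kb/"] },
  "finance-assistant": { allowedTools: ["search_docs", "read_invoice"], allowedScopes: ["finance/"] },
};

console.log(authorize({ app: "support-bot", tool: "search_docs", dataScope: "kb/vpn-setup" }, policies));
console.log(authorize({ app: "finance-assistant", tool: "read_invoice", dataScope: "hr/salaries" }, policies));
```

Refusing anything not explicitly listed keeps a manipulated prompt from widening what the application can touch, at the cost of maintaining the policy table.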

... Shadow AI detection and control is a growing marketplace. Other vendors operating here include Netskope, whose Netskope One platform includes AI security capabilities to detect shadow AI usage. Not exactly a like-for-like competitor, but still in the same core operational arena, Zylo’s SaaS management toolset is built to help organisations discover and manage all their SaaS applications, including unauthorised AI tools, by centralising data, risk scores and usage. “To address the risk [of shadow AI], CIOs should define clear enterprise-wide policies for AI tool usage, conduct regular audits for shadow AI activity and incorporate GenAI risk evaluation into their SaaS assessment processes,” said Arun Chandrasekaran at magical analyst house Gartner.
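
Chandrasekaran's recommendation to "conduct regular audits for shadow AI activity" can be approximated with a periodic pass over egress or proxy logs. This is a hypothetical sketch and not how Netskope One or Zylo work: the log entry format, the host lists (placeholder `.example` domains), and the ranking rule are assumptions, and exact host matching ignores subdomains for brevity.

```typescript
// Hypothetical proxy/egress log entry.
interface ProxyLogEntry {
  user: string;
  department: string;
  host: string;
}

// Illustrative lists only; a real audit would draw on a maintained catalogue of AI services.
const KNOWN_AI_HOSTS: string[] = ["chat.ai-vendor-a.example", "api.ai-vendor-b.example", "notes-ai.example"];
const SANCTIONED_AI_HOSTS: string[] = ["api.ai-vendor-b.example"];

interface ShadowAiFinding {
  host: string;
  users: string[];
  departments: string[];
  requests: number;
}

function auditShadowAi(entries: ProxyLogEntry[]): ShadowAiFinding[] {
  const byHost: { [host: string]: ShadowAiFinding } = {};
  for (const e of entries) {
    const isAi = KNOWN_AI_HOSTS.indexOf(e.host) !== -1;
    const sanctioned = SANCTIONED_AI_HOSTS.indexOf(e.host) !== -1;
    if (!isAi || sanctioned) continue; // only unsanctioned AI traffic is of interest
    const f = byHost[e.host] || (byHost[e.host] = { host: e.host, users: [], departments: [], requests: 0 });
    f.requests += 1;
    if (f.users.indexOf(e.user) === -1) f.users.push(e.user);
    if (f.departments.indexOf(e.department) === -1) f.departments.push(e.department);
  }
  // Most-used unsanctioned tools first, so the review starts with the largest exposure.
  return Object.keys(byHost).map((h) => byHost[h]).sort((a, b) => b.requests - a.requests);
}

const sampleLog: ProxyLogEntry[] = [
  { user: "ana", department: "marketing", host: "chat.ai-vendor-a.example" },
  { user: "ben", department: "finance", host: "chat.ai-vendor-a.example" },
  { user: "ana", department: "marketing", host: "chat.ai-vendor-a.example" },
  { user: "cho", department: "engineering", host: "api.ai-vendor-b.example" }, // sanctioned
  { user: "dev", department: "legal", host: "notes-ai.example" },
  { user: "eve", department: "sales", host: "intranet.example" }, // not an AI service
];

const findings = auditShadowAi(sampleLog);
console.log(findings.map((f) => f.host + " x" + f.requests).join(", "));
// chat.ai-vendor-a.example x3, notes-ai.example x1
```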
