AI vs humans: Why soft skills are your secret weapon

AI can certainly assist with some aspects of the creative process, but true creativity is something only humans can achieve, for several reasons. Firstly, it often involves intuition, emotion and empathy, as well as thinking outside the box and making connections between seemingly unrelated concepts. Creativity is often shaped by personal experiences and cultural background, making every individual’s creative work unique. ... Leadership and strategic management will continue to be driven by humans. When making decisions, people are able to consider various factors such as personal relationships or company culture. General awareness, intuition, understanding of broader contexts that lie beyond data and effective communication skills are all human traits. ... Humans possess a crucial trait that AI is unable to replicate (although it’s definitely coming closer): Empathy. AI can’t communicate with your team members at the same level, provide solutions to their problems or offer a listening ear when necessary. Managing a team means talking to people, listening and understanding their needs and motivations. The human touch is essential to make sure that everyone is on the same page.

How to Avoid Pitfalls and Mistakes When Coding for Quality

"Code bloat" occurs when a code base grows so large that redundancies emerge. An abundance of unnecessary code can degrade the site's performance and make the code too complex to maintain. To address redundancy, it is crucial to modularize code as it is written, breaking it down into smaller components with proper encapsulation and extraction. Modularized code promotes reuse, simplifies maintenance, and keeps the size of the code base in check. ... There is a tendency to "reinvent the wheel" when writing code. A more practical solution is to reuse libraries whenever possible, because they can be used across different parts of the code. Sometimes, code bloat stems from a historically bloated code base with no easy path to modularization, extraction, or library reuse. In this case, the most effective strategy is code refactoring: refactor regularly, eliminate unnecessary or duplicate logic, and improve the overall structure of the repository over time.
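As a rough illustration of extracting duplicate logic, the sketch below assumes two hypothetical functions, `register_user` and `subscribe_newsletter`, that each used to clean up an email address inline. The names and the cleanup rule are invented for this example and are not taken from the article; the point is only that the shared step now lives in one helper.

```python
def normalize_email(raw: str) -> str:
    """Shared helper (hypothetical): trim whitespace and lowercase an email."""
    return raw.strip().lower()


def register_user(email: str) -> dict:
    # Previously this function would have repeated the strip/lower logic inline.
    return {"email": normalize_email(email), "status": "registered"}


def subscribe_newsletter(email: str) -> dict:
    # Reuses the same helper instead of carrying a second copy of the logic.
    return {"email": normalize_email(email), "status": "subscribed"}
```

If the cleanup rule changes later, only `normalize_email` needs to be edited, which is the maintenance benefit the passage describes.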

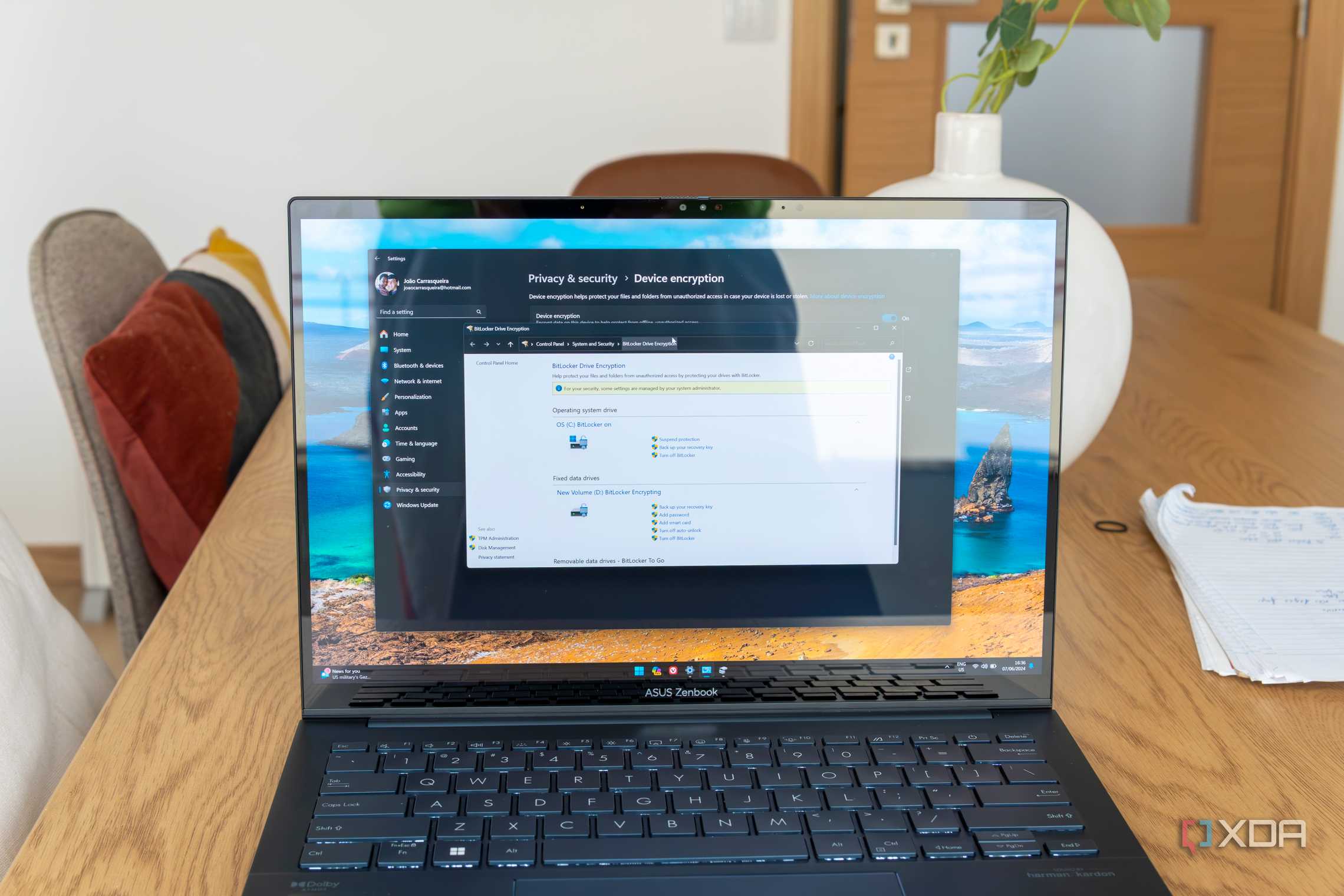

The BEC battleground: Why zero trust and employee education are your best line of defence

Even with extensive employee training, some BEC scams can bypass human vigilance. Comprehensive security processes are essential to minimize their impact. The zero-trust security model is crucial here. It assumes no inherent trust for anyone, inside or outside the network. With zero trust, every user and device must be continuously authenticated before accessing any resources. This makes it much harder for attackers. Even if they steal a login credential, they can’t automatically access the entire system. A key component of zero trust is multi-factor authentication (MFA), which acts as multiple locks on every access point. Just like a physical security system requiring multiple forms of identification, MFA requires not just a username and password, but an additional verification factor like a code from a phone app or fingerprint scan. This makes unauthorised entry, including through BEC scams, much harder. So, any IT infrastructure implemented must have zero trust and MFA at its core. A complement to zero trust is the principle of least privilege access: granting users only the minimum level of access required to perform their jobs.
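The combination of checks described above can be sketched as a single access decision. This is a minimal illustration only: the role names, permission strings, and the `AccessRequest` fields are hypothetical, and a real deployment would delegate authentication to an identity provider rather than pass booleans around.

```python
from dataclasses import dataclass

# Hypothetical role-to-permission map: each role gets only what the job needs.
ROLE_PERMISSIONS = {
    "accounts_payable": {"invoice:read", "invoice:submit"},
    "finance_manager": {"invoice:read", "invoice:approve", "payment:release"},
}


@dataclass(frozen=True)
class AccessRequest:
    user_role: str
    password_verified: bool  # first factor
    mfa_verified: bool       # second factor, e.g. a phone-app code
    device_trusted: bool     # the device is authenticated as well as the user
    permission: str          # what the caller is trying to do


def is_allowed(request: AccessRequest) -> bool:
    """Evaluate every request from scratch; nothing is trusted by default."""
    # A stolen password alone fails here because the other checks are missing.
    if not (request.password_verified and request.mfa_verified and request.device_trusted):
        return False
    # Least privilege: the permission must be explicitly granted to the role.
    return request.permission in ROLE_PERMISSIONS.get(request.user_role, set())
```

In this sketch, an attacker who has phished an accounts-payable password but cannot pass MFA is denied, and even a fully authenticated accounts-payable user cannot release a payment.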

Why CISOs need to build cyber fault tolerance into their business

For a rapidly evolving technology like GenAI, it is impossible to prevent all attacks at all times. The ability to adapt to, respond to, and recover from inevitable issues is critical for organizations to explore GenAI successfully. Therefore, effective CISOs are complementing their prevention-oriented guidance for GenAI with effective response and recovery playbooks. Regarding third-party cybersecurity risk management, no matter the cybersecurity function’s best efforts, organizations will continue to work with risky third parties. Cybersecurity’s real impact lies not in asking more due diligence questions, but in ensuring the business has documented and tested third-party-specific business continuity plans in place. “CISOs should be guiding the sponsors of third-party partners to create a formal third-party contingency plan, including things like an exit strategy, alternative suppliers list, and incident response playbooks,” said Mixter. “CISOs tabletop everything else. It’s time to bring tabletop exercises to third-party cyber risk management.”

AI system poisoning is a growing threat — is your security regime ready?

CISOs shouldn’t breathe a sigh of relief, McGladrey says, as their organizations could be impacted by those attacks if they are using the vendor-supplied corrupted AI systems. ... Security experts and CISOs themselves say many organizations are not prepared to detect and respond to poisoning attacks. “We’re a long way off from having truly robust security around AI because it’s evolving so quickly,” Stevenson says. He points to the Protiviti client that suffered a suspected poisoning attack, noting that workers at that company identified the possible attack because its “data was not synching up, and when they dived into it, they identified the issue. [The company did not find it because] a security tool had its bells and whistles going off.” He adds: “I don’t think many companies are set up to detect and respond to these kinds of attacks.” ... “The average CISO isn’t skilled in AI development and doesn’t have AI skills as a core competency,” says Jon France, CISO with ISC2. Even if they were AI experts, they would likely face challenges in determining whether a hacker had launched a successful poisoning attack.

Accelerate Transformation Through Agile Growth

The problem is that when you start the next calendar year in January, you get a false sense of confidence because December is still 12 months away: all the time in the world, or so it seems, to execute your annual strategic plan. But then by April, after the first quarter has ended, chances are you’ll have started to feel a bit behind. You won’t be overly worried, however; you know you still have plenty of time to catch up. But then you’ll get to September and hit the 100-day sprint, which typically comes right after Labor Day in the United States. Now, panic will set in as you race to the end of the year desperately trying to hit those annual goals that were established all the way back in January. In growth cycles longer than 90 days, we tend to get off track. But it doesn’t have to be this way. You can use the 90-Day Growth Method to bring your team together every quarter to review and celebrate your progress over the past 90 days, refocus on goals and actions, and renew your commitment to achieving them. Soon, you and your team will feel re-energized and ready to move forward with courage and confidence for the next 90 days.

We need a Red Hat for AI

To be successful, we need to move beyond the confusing hype and help enterprises make sense of AI. In other words, we need more trust (open models) and fewer moving parts ... OpenAI, however popular it may be today, is not the solution. It just keeps compounding the problem with proliferating models. OpenAI throws more and more of your data into its LLMs, making them better but not any easier for enterprises to use in production. Nor is it alone. Google, Anthropic, Mistral, etc., etc., all have LLMs they want you to use, and each seems to be bigger/better/faster than the last, but no clearer for the average enterprise. ... You’d expect the cloud vendors to fill this role, but they’ve kept to their preexisting playbooks for the most part. AWS, for example, has built a $100 billion run-rate business by saving customers from the “undifferentiated heavy lifting” of managing databases, operating systems, etc. Head to the AWS generative AI page and you’ll see they’re lining up to offer similar services for customers with AI. But LLMs aren’t operating systems or databases or some other known element in enterprise computing. They’re still pixie dust and magic.

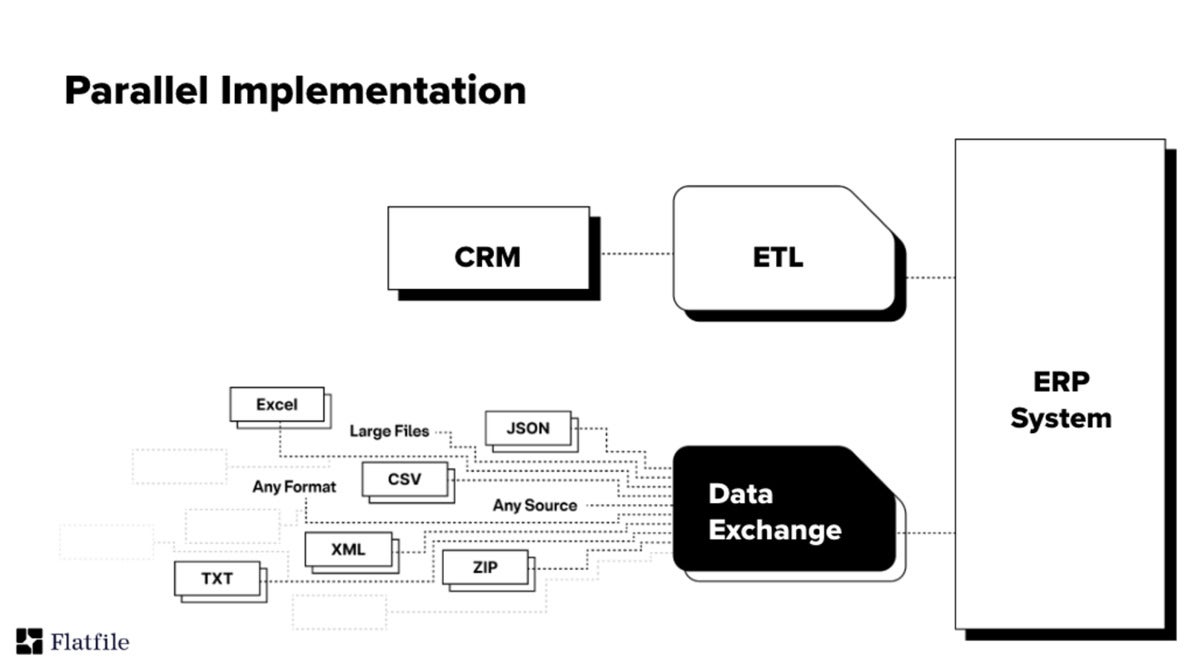

How Data Integration Is Evolving Beyond ETL

From an overall trend perspective, with the explosive growth of global data, the emergence of large models, and the proliferation of data engines for various scenarios, the rise of real-time data has brought data integration back to the forefront of the data field. If data is considered a new energy source, then data integration is like the pipeline of this new energy. The more data engines there are, the higher the efficiency, data source compatibility, and usability requirements of the pipeline will be. Although data integration will eventually face challenges from Zero ETL, data virtualization, and DataFabric, for the foreseeable future the performance, accuracy, and ROI of these technologies have yet to reach the level needed to rival the popularity of data integration. Otherwise, the most popular data engines in the United States would not be Snowflake or Delta Lake but TrinoDB. Of course, I believe that in the next 10 years, under the circumstances of DataFabric x large models, virtualization + EtLT + data routing may be the ultimate solution for data integration. In short, as long as data volume grows, the pipelines between data will always exist.

Protecting your digital transformation from value erosion

The first form of value erosion pertains to cost increases within your project without an equivalent increase in the value or activities being delivered. With project delays, for example, there are usually additional costs incurred related to resource carryover because of the timeline increase. In this instance, the absence of additional work being delivered, or future work being pulled forward to offset the additional costs, is a prime illustration of value erosion. ... Decrease in value without decreased costs: A second form occurs when there’s a decrease in value without a cost adjustment. This can happen due to changing business priorities or project delays, especially within the build phase. As an alternative to extending the project timeline, organizations may decide to prioritize and reduce features to meet deadlines. ... Failure to identify and plan for potential risks leaves projects vulnerable to unforeseen complications and budgetary concerns. Large variances in initial SI responses can be attributed to different assumptions on scope and service levels provided.

Ask a Data Ethicist: What Is Data Sovereignty?

Put simply, data sovereignty relates to who has the power to govern data. It determines who is legally empowered to make decisions about the collection and use of data. We can think about this in the context of two governments negotiating with each other, each having sovereign powers of self-determination. Indigenous governments are claiming their sovereign rights to their people’s data. On the one hand, this is a response to the atrocities that have taken place with respect to data gathered and taken beyond the control of Indigenous communities by researchers, governments, and other non-Indigenous parties. Yet, as data becomes increasingly important, many countries are seeking to set regulatory standards for data. It makes sense that Indigenous governments would assert similar rights with respect to their people’s data. ... Data sovereignty is an important part of Canada’s Truth and Reconciliation calls to action. The FNIGC governs the relevant processes for those seeking to work with First Nations in Canada to appropriately access data.

Quote for the day:

"The secret to success is good leadership, and good leadership is all about making the lives of your team members or workers better." -- Tony Dungy
