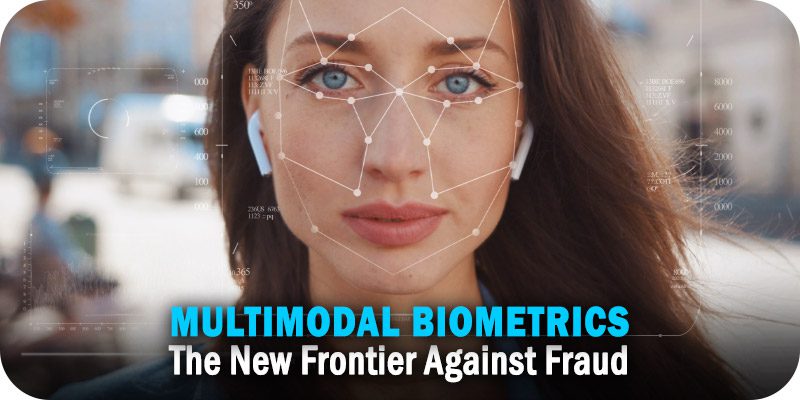

Multimodal Biometrics: The New Frontier Against Fraud

This new category includes instances when online criminals directly target

consumers, often through a text, call, or email, rather than by obtaining a

person’s personal information at the institutional level, a change in tactics in

recent years that has significant consequences for both individuals and the

companies they do business with. The consumer, Javelin says, has become “the

path of least resistance.” Consumers aren’t the only ones affected by this

change in approach. It has significantly altered the advice we give our banking

and financial services customers, as well. ... Identity verification platforms

with multi-modal biometrics and liveness detection offer next-generation levels

of security. Even better, platforms now entering the market combine multi-modal

biometrics and liveness detection with a frictionless, easy-to-use interface.

With some, customers simply look into their phone or laptop cameras and say a

phrase to easily and securely access an online account. This is the conversation

my colleagues and I are having with our banking and financial institution

customers.
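As a rough illustration of how a multi-modal check with liveness detection might be combined, here is a minimal sketch. The class name, field names, weights, and thresholds are all hypothetical and do not come from any vendor's platform; the sketch assumes each modality has already been scored between 0.0 and 1.0 by some upstream component.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BiometricSample:
    """Hypothetical per-attempt scores, each assumed to lie in [0.0, 1.0]."""
    face_score: float      # similarity of the camera frame to the enrolled face
    voice_score: float     # similarity of the spoken phrase to the enrolled voice
    liveness_score: float  # confidence that a live person is in front of the camera


def verify(sample: BiometricSample,
           match_threshold: float = 0.8,
           liveness_threshold: float = 0.9,
           face_weight: float = 0.5) -> bool:
    """Return True if the attempt passes both the liveness gate and the fused match."""
    # Liveness is treated as a hard gate: a replayed photo or recording should
    # fail no matter how well the face and voice happen to match.
    if sample.liveness_score < liveness_threshold:
        return False
    # Score-level fusion: a weighted average of the two modalities (illustrative weights).
    fused = face_weight * sample.face_score + (1.0 - face_weight) * sample.voice_score
    return fused >= match_threshold
```

The design choice here is that a strong score in one modality cannot compensate for a failed liveness check, while face and voice can partially compensate for each other.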

The 5 Most Dangerous Cognitive Biases For Startup Founders

Confirmation bias is the tendency to search for information proving your

already-established worldview, rather than disproving it. It is crucial to

avoid this when constructing your idea or product

validation tests or when talking to customers. Don’t try to defend your

assumptions and decisions. Instead, try to gather unbiased feedback so that you

can have more confidence in the results of your tests. Fake

confirmation of your ideas might make your life easier as it would give you a

scapegoat for your failure. Yet, in the long run, it’s much better to have to

overcome your ego and succeed than to defend it but ultimately fail. Anchoring

bias is the tendency to rely heavily on the first piece of information you have

on a topic.

The anchoring bias is often used in negotiations as a trick to bring the

expectations of the opposing party closer to your desired outcome. In startups,

it is very important not to unwittingly play this trick on yourself. For

example, if you’ve been offering a service for free you might feel reluctant to

raise the price significantly even if it is the right thing to do for your

business.

The rise of metaverse shopping

Even as the metaverse continues to gain popularity, it’s important for retailers

to remember that it is still relatively new, she observed. “The reality is there

are so many other channels for retailers to engage customers, such as web,

mobile, in-store and social, and they need to also focus on strengthening those

experiences,” Estes said. Brands should not be trying to match virtual

experiences with traditional in-store experiences, Mason noted, as they are very

different mediums and have different strengths for connecting with customers.

“The key thing to remember is that metaverse experiences are new and opt-in,” he

said. “They need to be fun and engaging for the user to find something

worthwhile in them. After all, moving to a competing brand’s metaverse

experience is just a click or a hand-wave away. It is important for companies to

consider how their brand will translate to a new medium.” Brands should consider

how their brand representatives will greet consumers. Will they be serious, fun

or edgy? What kind of language and voice will be used, and how will their brand

avatar present itself visually?

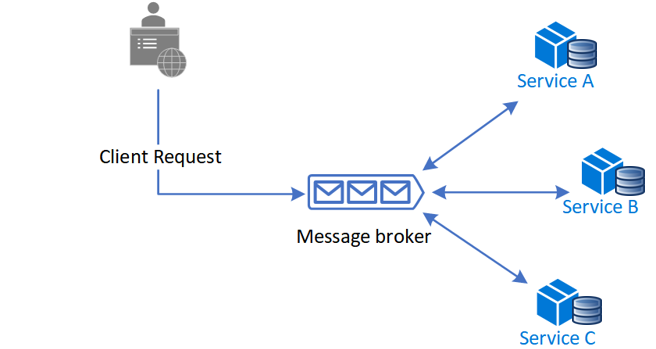

How intelligent automation will change the way we work

As organizations automate their business processes, there are many potential

hazards to avoid. “The main one is ignoring your people and underestimating

that,” Butterfield said. “Although the outcome is driven by using a technology,

everything up to the actual automation of a process is generally very

people-focused. A lack of change management will unfortunately cause many issues

in the long term. Organizations need to keep their people aligned with their

overall goals.” Security, mainly authentication, is also a key concern, Barbin

said. “Any automation, API [application programming interface] or other,

requires some means to pass access credentials,” he said. “If the systems that

automate and contain those credentials are compromised, access to the connected

systems could be too.” To help minimize that risk, Barbin suggests using SAML

2.0 and other technologies that take stored passwords out of the systems.

Another pitfall is selecting only one technology as the automation tool of

choice. Typically organizations need multiple technologies to get the best

results, said Maureen Fleming, program vice president for intelligent process

automation research at IDC.

How can IT leaders address ‘quiet quitting’?

While this is less likely to be an issue if staff are driven by the

organisation’s vision and purpose, as is often the case with tech startups, it

is still “important to look at what the expectations are on both sides, what’s

reasonable and where compromises could be made”, she says. Klotz also suggests

that part of the reason why some IT leaders, among others, have reacted so

negatively to the idea of quiet quitting is over concerns that “paying

extra for everything” could hit profit margins, which in turn could put the

company out of business, particularly in economically difficult times. But he

also points to the dynamic nature of the tech industry, which requires

discretionary working at times simply to deliver on projects. “It’s only if you

ask people to go above and beyond without compensation that it gets exploitative

rather than being part of a healthy functioning relationship,” Klotz says. “But

many companies ask employees to do extra almost as part of the job description,

which is partly why they provide amazing benefits and such good compensation –

people know what they’re getting into and are rewarded for it.”

Applying Enterprise Risk Management to Cyberrisk

Both the reality of cyberthreats and regulatory changes should make it clear to

boards, owners and management that there is a need for better management of

cybersecurity. Enterprise risk management (ERM) is a tool that management and

the board can use to help manage risk across the enterprise, including

cyberrisk. The Committee of Sponsoring Organizations of the Treadway Commission

(COSO) ERM framework and International Organization for Standardization (ISO)

31000 are two prominent frameworks for ERM. Both frameworks emphasize that for

effective ERM, an organization needs to have oversight from senior management,

organizational structure to support ERM and qualified staff. These and other

capabilities that are needed to support ERM are also necessary to support

cybersecurity and manage cyberrisk; therefore, the contents of both frameworks

are easily and aptly applied to cybersecurity. Organizations can learn about the

consequences of ineffective enterprise management of cybersecurity from many

examples around the world including the 2021 ransomware attack on Ireland’s

Health Service Executive (HSE).

Why to Rethink and Update Approaches to Payment Security Management

“CISOs are increasingly challenged in their efforts to secure payment security

compliance, and in convincing board members and other stakeholders of the

importance and significance of securing strategic support and resources,” Hanson

explains. The 2022 Payment Security Report points out that CISOs often use

outdated methods to secure support, and that all stakeholders need to change

their approach. “Rather than taking a check-the-box approach to

compliance, CISOs and other security leaders need to take an out-of-the box,

thinker’s approach that involves implementing frameworks and models,” Hanson

says. “This is especially true for those taking the Customized approach to

compliance.” MacLeod says there are several key stakeholders in organizations

who ensure payment security compliance, from the CEO and CIO across to the CISO

and CFO -- and these roles are changing as the payments industry evolves.

... As a result, stakeholders such as the CIO and CISO are playing an

increasingly important role in ensuring payment security compliance.

Five defence-in-depth layers to implement for business security success

Businesses have many wonderful applications at their fingertips, with the

average user having access to 5-10+ high-value business apps. These contain

sensitive resources such as customer information, intellectual property, and

financial data, making them a key target for attackers. Unfortunately, 80 per

cent of businesses have faced users misusing or abusing these apps in the last

year. Simply requiring a login is not enough to keep them safe – the moment a

user steps away from their screen while still logged in, all of that valuable

data is exposed. The defensive layer: a login only verifies a user’s identity at

one point – so effective security controls here will continue to monitor,

record, and audit user actions after authentication. Enhancing the visibility

available to security teams offers many benefits, including being able to

identify the source of a security incident (and therefore respond) much quicker.

... Almost all businesses benefit from using third-party tools, but they pose

risks too, as integration often requires creating super-user access to clients’

systems.
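To make the idea of monitoring and recording user actions after authentication more concrete, here is a minimal sketch. The `audited` decorator, the in-memory `AUDIT_LOG` list, and the `export_customer_records` function are hypothetical names invented for illustration; a real deployment would write to a tamper-resistant audit store rather than a Python list.

```python
import functools
from datetime import datetime, timezone

# In-memory stand-in for an append-only audit store (an assumption for this sketch).
AUDIT_LOG = []


def audited(action):
    """Record who performed `action`, when, and whether it succeeded."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(user, *args, **kwargs):
            entry = {
                "time": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "action": action,
                "outcome": "ok",
            }
            try:
                return func(user, *args, **kwargs)
            except Exception:
                entry["outcome"] = "error"
                raise
            finally:
                # The entry is appended whether the call succeeded or failed.
                AUDIT_LOG.append(entry)
        return wrapper
    return decorator


@audited("export_customer_records")
def export_customer_records(user, limit):
    """Hypothetical sensitive operation inside a business app."""
    if limit > 1000:
        raise ValueError("export too large")
    return f"{limit} records exported for {user}"
```

Because every call is logged regardless of outcome, a security team reviewing `AUDIT_LOG` could trace an incident back to a specific user and action rather than only to a login event.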

The future of IT: decentralization and collaboration

As the role of IT evolves and collaboration increases, IT leaders are

increasingly working as partners – rather than technology gatekeepers – with

department heads. This collaboration and decentralization of IT across the

enterprise gives employees self-sufficiency and autonomy when making

technology decisions for their departments. They no longer depend on the IT

team for their process automation, tool choices, or technology operations. ...

IT personnel must clarify to all employees which applications are allowed on

the corporate network. Employees should always inform IT personnel about their

use of non-sanctioned applications and devices. If employees are downloading

non-sanctioned apps and using non-sanctioned devices to access the corporate

network, the IT department may have trouble preventing malware from accessing

the network. When employees are open and honest about the devices and

applications they use, it is much easier for IT personnel to mitigate rogue

downloads and keep the network safe. Also, with social engineering efforts on

the rise, IT must teach all other employees about popular attack methods, such

as phishing and business email compromise.

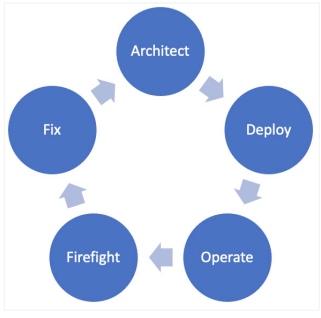

Craftleadership: Craft Your Leadership as Developers Craft Code

There are other common practices in software development that apply to

management. First, organize your budgeting process as a CI/CD pipeline. Make

budget definition something that is easily repeatable, and that fits in your

organization. CI/CD allows you to get rid of tedious tasks by putting them

in a pipeline. Budgeting is one of the most tedious things I have found I

have to do as a manager. Second, master your tools. If MS Excel is the tool

used by the managers in your organization, be an Excel master. Third, try to

be reactive in your decisions, as in reactive programming. Be asynchronous

when making decisions; as much as possible, try to reduce the “commit” phase,

that is, the meetings where everyone must be present to say they agree. In my

case, I think that it is necessary to maintain these meetings where everybody

agrees on different things. Yet, in these meetings, I never address an issue

that I haven’t had the time to discuss thoroughly with everyone beforehand;

this could be through a simple asynchronous email loop where everyone had a

chance to give his or her opinion.

Quote for the day:

"Successful leadership requires positive self-regard fused with optimism about a desired outcome." -- Warren Bennis
