Quote for the day:

"Be willing to make decisions. That's the most important quality in a good leader." -- General George S. Patton, Jr.

Building blocks – what’s required for my business to be SECURE?

Zero Trust Architecture involves a set of rules that will ensure that you will

not let anyone in without proper validation. You will assume there is a breach.

You will reduce privileges to their minimum and activate them only as needed and

you will make sure that devices connecting to your data are protected and

monitored. Enclave is all about aligning your data’s sensitivity with your

cybersecurity requirements. For example, to download a public document, no

authentication is required, but to access your CRM, containing all your

customers’ data, you will require a username, password, an extra factor of

authentication, and to be in the office. You will not be able to download the

data. Two different sensitivities, two experiences. ... The leadership team is

the compass for the rest of the company – their north star. To make the right

decision during a crisis, you must be prepared to face it. And how do you make

sure that you’re not affected by all this adrenaline and stress that is caused

by such an event? Practice. I am not saying that you must restore all your

company’s backups every weekend. I am saying that once a month, the company

executives should run through the plan. ... Most plans that were designed and

rehearsed five years ago are now full of holes.
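
The enclave example above (a public document versus the CRM) can be read as a small policy table: each sensitivity level carries its own set of access conditions. The following is a minimal sketch of that idea, not a real product's API. The names `Sensitivity`, `AccessRequest` and `is_allowed` are hypothetical, and the two levels and their rules are taken only from the example in the text.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical sensitivity levels, one per example in the text."""
    PUBLIC = 0        # e.g. a public document
    CONFIDENTIAL = 1  # e.g. the CRM holding customer data


@dataclass(frozen=True)
class AccessRequest:
    """Facts about the caller that the policy checks (all assumed names)."""
    has_password: bool = False
    has_second_factor: bool = False
    in_office: bool = False
    wants_download: bool = False


def is_allowed(sensitivity: Sensitivity, request: AccessRequest) -> bool:
    """Deny by default; grant only what the data's sensitivity permits."""
    if sensitivity is Sensitivity.PUBLIC:
        # Public documents need no authentication at all.
        return True
    if sensitivity is Sensitivity.CONFIDENTIAL:
        # CRM access: password, extra factor, on-site, and no download.
        return (
            request.has_password
            and request.has_second_factor
            and request.in_office
            and not request.wants_download
        )
    # Unknown levels are refused rather than guessed at.
    return False


# Usage: the same user gets two different experiences.
visitor = AccessRequest()
employee = AccessRequest(has_password=True, has_second_factor=True, in_office=True)
print(is_allowed(Sensitivity.PUBLIC, visitor))
print(is_allowed(Sensitivity.CONFIDENTIAL, employee))
```

Keeping the rules in one function with a deny-by-default fallthrough mirrors the "assume there is a breach" stance: anything the policy does not explicitly recognize is refused.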

Beyond Culture: Addressing Common Security Frustrations

A majority of security respondents (58%) said they have difficulty getting

development to prioritize remediation of vulnerabilities, and 52% reported

that red tape often slows their efforts to quickly fix vulnerabilities. In

addition, security respondents pointed to several specific frustrations

related to their jobs, including difficulty understanding security findings,

excessive false positives and testing happening late in the software

development process. ... If an organization sees many false positives, that

could be a sign that they haven’t done all they can to ensure their security

findings are high fidelity. Organizations should narrow the focus of their

security efforts to what matters. That means traditional static application

security testing (SAST) solutions are likely insufficient. SAST is a powerful

tool, but it loses much of its value if the results are unmanageable or lack

appropriate context. ... Although AI promises to help simplify software

development processes, many organizations still have a long road ahead. In

fact, respondents who are using AI were significantly more likely than those

not using AI to want to consolidate their toolchain, suggesting that the

proliferation of different point solutions running different AI models could

be adding complexity, not taking it away.

Significant Gap Exists in UK Cyber Resilience Efforts

A persistent lack of skilled cybersecurity professionals in the civil service

is one reason for the persistent gap in resilience, parliamentarians wrote.

"Government has been unwilling to pay the salaries necessary to hire the

experienced and skilled people it desperately needs to manage its

cybersecurity effectively." Government figures show the workforce has grown

and there are plans to recruit more experts - but a third of cybersecurity

roles are either vacant "or filled by expensive contractors," the report

states. "Experience suggests government will need to be realistic about how

many of the best people it can recruit and retain." The report also faults

government departments for not taking sufficient ownership over cybersecurity.

The prime minister's office for years relied on departments to perform a

cybersecurity self-assessment, until 2023, when it launched GovAssure, a

program to bring in independent assessors. GovAssure turned the

self-assessments on their head, finding that the departments that ranked

themselves the highest through self-assessment were among the less secure.

Continued reliance on legacy systems has figured heavily in recent critiques

of British government IT, and it does in the parliamentary report, as well.

"It is unacceptable that the center of government does not know how many

legacy IT systems exist in government and therefore cannot manage the

associated cyber risks."

How CIOs Can Boost AI Returns With Smart Partnerships

CIOs face an overwhelming array of possibilities, making prioritization

critical. The CIO Playbook 2025 helps by benchmarking priorities across

markets and disciplines. Despite vast datasets, data challenges persist as

only a small, relevant portion is usable after cleansing. Generative AI helps

uncover correlations humans might miss, but its outputs require rigorous

validation for practical use. Static budgets, growing demands and a shortage

of skilled talent further complicate adoption. Unlike traditional IT, AI

affects sales, marketing and customer service, necessitating

cross-departmental collaboration. For example, Lenovo's AI unifies customer

service channels such as email and WhatsApp, creating seamless interactions.

... First, go slow to go fast. Spend days or months - not years - exploring

innovations through POCs. A customer who builds his or her own LLM faces

pitfalls; using existing solutions is often smarter. Second, prioritize

cross-collaboration, both internally across departments and externally with

the ecosystem. Even Lenovo, operating in 180 markets, relies on partnerships

to address AI's layers - the cloud, models, data, infrastructure and services.

Third, target high-ROI functions such as customer service, where CIOs expect a

3.6-fold return, to build boardroom support for broader adoption.

How to Stop Increasingly Dangerous AI-Generated Phishing Scams

With so many avenues of attack being used by phishing scammers, you need

constant vigilance. AI-powered detection platforms can simultaneously analyze

message content, links, and user behavior patterns. Combined with

sophisticated pattern recognition and anomaly identification techniques, these

systems can spot phishing attempts that would bypass traditional

signature-based approaches. ... Security awareness programs have progressed

from basic modules to dynamic, AI-driven phishing simulations reflecting

real-world scenarios. These simulations adapt to participant responses,

providing customized feedback and improving overall effectiveness. Exposing

team members to various sophisticated phishing techniques in controlled

environments better prepares them for the unpredictable nature of AI-powered

attacks. AI-enhanced incident response represents another promising

development. AI systems can quickly determine an attack's scope and impact by

automating phishing incident analysis, allowing security teams to respond more

efficiently and effectively. This automation not only reduces response time

but also helps prevent attacks from spreading by rapidly isolating compromised

systems.

Immutable Secrets Management: A Zero-Trust Approach to Sensitive Data in Containers

We address the critical vulnerabilities inherent in traditional secrets

management practices, which often rely on mutable secrets and implicit trust.

Our solution, grounded in the principles of Zero-Trust security, immutability,

and DevSecOps, ensures that secrets are inextricably linked to container

images, minimizing the risk of exposure and unauthorized access. We introduce

ChaosSecOps, a novel concept that combines Chaos Engineering with DevSecOps,

specifically focusing on proactively testing and improving the resilience of

secrets management systems. Through a detailed, real-world implementation

scenario using AWS services and common DevOps tools, we demonstrate the

practical application and tangible benefits of this approach. The e-commerce

platform case study showcases how immutable secrets management leads to

improved security posture, enhanced compliance, faster time-to-market, reduced

downtime, and increased developer productivity. Key metrics demonstrate a

significant reduction in secrets-related incidents and faster deployment

times. The solution directly addresses all criteria outlined for the Global

Tech Awards in the DevOps Technology category, highlighting innovation,

collaboration, scalability, continuous improvement, automation, cultural

transformation, measurable outcomes, technical excellence, and community

contribution.

The Network Impact of Cloud Security and Operations

Network security and monitoring also change. With cloud-based networks, the

network staff no longer has all its management software under its direct

control. It now must work with its various cloud providers on security. In

this environment, some small company network staff opt to outsource security

and network management to their cloud providers. Larger companies that want

more direct control might prefer to upskill their network staff on the

different security and configuration toolsets that each cloud provider makes

available. ... The move of applications and systems to more cloud services is

in part fueled by the growth of citizen IT. This is when end users in

departments have mini IT budgets and subscribe to new IT cloud services, of

which IT and network groups aren't always aware. This creates potential

security vulnerabilities, and it forces more network groups to segment

networks into smaller units for greater control. They should also implement

zero-trust networks that can immediately detect any IT resource, such as a

cloud service, that a user adds, subtracts or changes on the network. ...

Network managers are also discovering that they need to rewrite their disaster

recovery plans for cloud. The strategies and operations that were developed

for the internal network are still relevant.
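
The detection idea mentioned above, spotting a cloud service that a user adds, removes or changes, comes down at its simplest to comparing two inventory snapshots. The sketch below assumes each snapshot is a plain mapping from a service name to some configuration fingerprint; the function name `diff_inventory` and the sample data are hypothetical, and how a real network would collect such snapshots is outside its scope.

```python
def diff_inventory(
    previous: dict[str, str], current: dict[str, str]
) -> dict[str, list[str]]:
    """Compare two snapshots of {service name: config fingerprint}."""
    # Services seen now but not before.
    added = sorted(current.keys() - previous.keys())
    # Services seen before but no longer present.
    removed = sorted(previous.keys() - current.keys())
    # Services present in both whose fingerprint differs.
    changed = sorted(
        name
        for name in previous.keys() & current.keys()
        if previous[name] != current[name]
    )
    return {"added": added, "removed": removed, "changed": changed}


# Usage with made-up service names and fingerprints.
last_week = {"crm": "cfg-a", "mail": "cfg-a"}
today = {"crm": "cfg-b", "chat": "cfg-a"}
print(diff_inventory(last_week, today))
```

Anything reported under `added` that IT did not approve is a candidate citizen-IT subscription to review.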

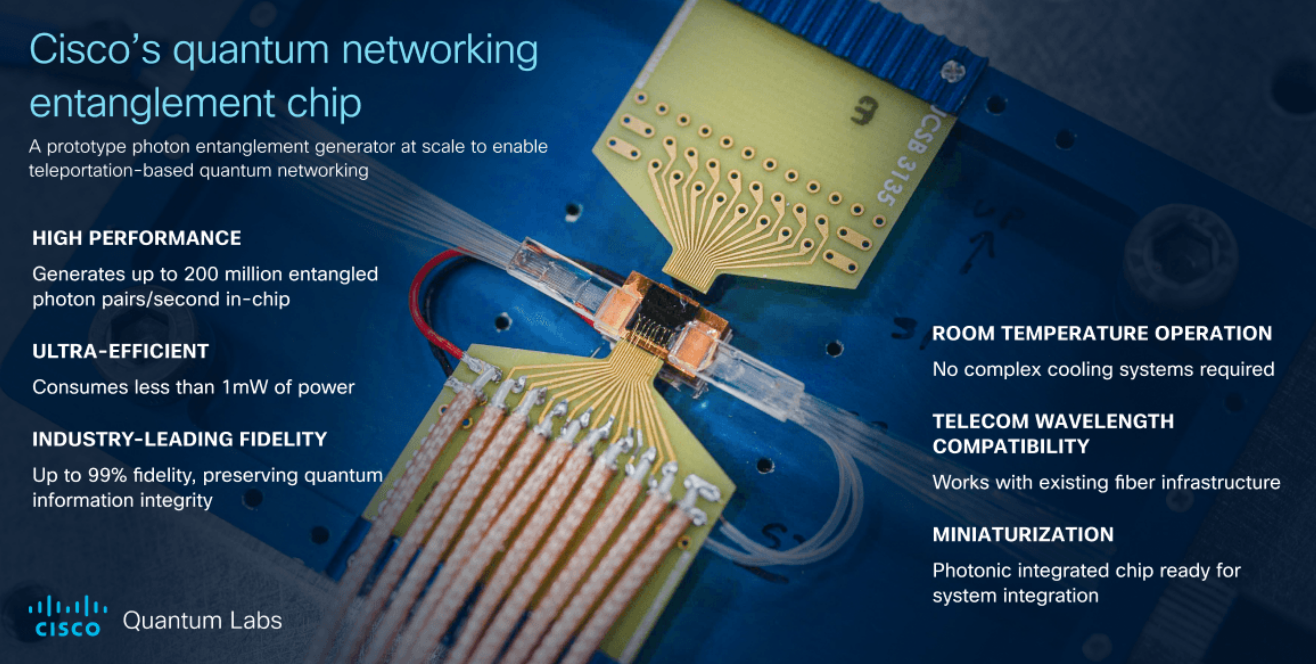

Three steps to integrate quantum computing into your data center or HPC facility

Just as QPU hardware has yet to become commoditized, the quantum computing

stack remains in development, with relatively little consistency in how

machines are accessed and programmed. Savvy buyers will have an informed

opinion on how to leverage software abstraction to accomplish their key goals.

With the right software abstractions, you can begin to transform quantum

processors from fragile, research-grade tools into reliable infrastructure for

solving real-world problems. Here are three critical layers of abstraction

that make this possible. First, there’s hardware management. Quantum devices

need constant tuning to stay in working shape, and achieving that manually

takes serious time and expertise. Intelligent autonomy provided by specialist

vendors can now handle the heavy lifting – booting, calibrating, and keeping

things stable – without someone standing by to babysit the machine. Then

there’s workload execution. Running a program on a quantum computer isn’t just

plug-and-play. You usually have to translate your high-level algorithm into

something that works with the quirks of the specific QPU being used, and

address errors along the way. Now, software can take care of that translation

and optimization behind the scenes, so users can just focus on building

quantum algorithms and workloads that address key research or business

needs.

Where Apple falls short for enterprise IT

First, enterprise tools in many ways could be considered a niche area of

software. As a result, enterprise functionality doesn’t get the same attention

as more mainstream features. This can be especially obvious when Apple tries to

bring consumer features into enterprise use cases — like managed Apple Accounts

and their intended integration with things like Continuity and iCloud, for

example — and things like MDM controls for new features such as Apple

Intelligence and low-level enterprise-specific functions like Declarative Device

Management. The second reason is obvious: any piece of software that isn’t ready

for prime time — and still makes it into a general release — is a potential

support ticket when a business user encounters problems. ... Deployment might be

where the lack of automation is clearest, but the issue runs through most

aspects of Apple device and user onboarding and management. Apple Business

Manager doesn’t offer any APIs that vendors or IT departments can tap into to

automate routine tasks. This can be anything from redeploying older devices,

onboarding new employees, assigning app licenses or managing user groups and

privileges. Although Apple Business Manager is a great tool and it functions as

a nexus for device management and identity management, it still requires more

manual lifting than it should.

Getting Started with Data Quality

Any process to establish or update a DQ program charter must be adaptable. For

example, a specific project management team or a local office could start the initial

DQ offering. As other teams see the program’s value, they would show initiative.

In the meantime, the charter tenets change to meet the situation. So, any DQ

charter documentation must have the flexibility to transform into what is

currently needed. Companies must keep track of any charter amendments or

additions to provide transparency and accountability. Expect that various teams

will have overlapping or conflicting needs in a DQ program. These people will

need to work together to find a solution. They will need to know the discussion

rules to consistently advocate for the DQ they need and express their

challenges. Ambiguity will heighten dissent. So, charter discussions and

documentation must come from a well-defined methodology. As the white paper

notes, clarity, consistency, and alignment sit at the charter’s core. While

getting there can seem challenging, an expertly structured charter template can

prompt critical information to show the way. ... The best practices documented

by the charter stem from clarity, consistency, and alignment. They need to cover

the DQ objectives mentioned above and ground DQ discussions.