Quantum utility: The next milestone on the road to quantum advantage

“Quantum utility is a term that has only been coined recently, in the last 12 months or so. On the timeline that I’ve just described, there is a milestone that sits between where we are now and the beginning of this quantum advantage era. And that is this quantum utility concept. It’s basically where quantum computers are able to demonstrate, or in this case, in recent demonstrations, simulate a problem beyond the capabilities of just brute-force classical computation using sufficiently large quantum computational devices. So, in this case, devices with more than 100 qubits,” she says. ... “It’s really an indication of how close we are to demonstrating quantum advantage, and where we can hopefully begin to see quantum computers serving as a scientific tool to explore a new scale of problems beyond brute-force classical simulation. So, it’s an indication of how close we are to quantum advantage and ideally, we’ll be hoping to see some demonstration of that in the next few years. No one really knows exactly when, but the idea is that those who are able to harness this era of quantum utility will also be among the first to achieve real quantum advantage as well.”
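A rough back-of-the-envelope estimate helps show why “more than 100 qubits” puts these devices beyond brute-force classical simulation. It assumes a naive full state-vector simulation that stores each of the 2^n complex amplitudes of an n-qubit state in double precision (16 bytes per amplitude). Cleverer methods, such as tensor-network simulation, can handle some specific circuits far more cheaply, so this is only the cost of the brute-force approach.

```latex
\begin{aligned}
M(n) &= 2^{n} \times 16\ \text{bytes} \\
M(50) &= 2^{50} \times 16 \approx 1.8 \times 10^{16}\ \text{bytes} \approx 18\ \text{PB} \\
M(100) &= 2^{100} \times 16 \approx 2.0 \times 10^{31}\ \text{bytes}
\end{aligned}
```

Each added qubit doubles the memory, so going from 50 to 100 qubits multiplies the requirement by roughly 10^15.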

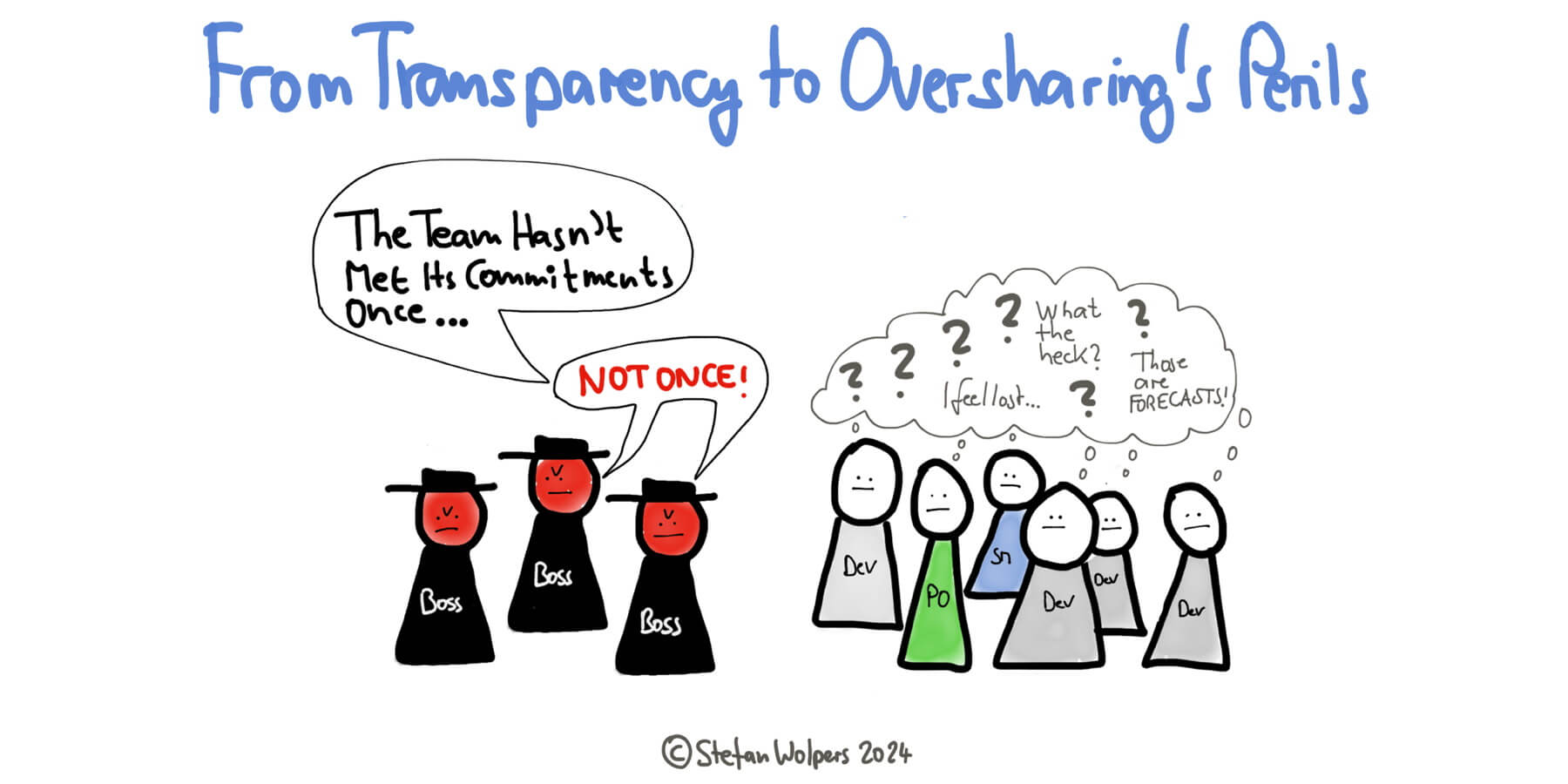

5 tips for switching to skills-based hiring

Skills come in a variety of forms, such as hard skills, which comprise the technical skills necessary to complete tasks; soft skills, which center around a person’s interpersonal skills; and cognitive skills, which include problem-solving, decision-making, and logical reasoning, among other skills. Before embarking on a skills-based hiring strategy, it’s vital to have clear insight into the skills your organization already has internally, in addition to all the skills needed to complete projects and reach business goals. As you identify and categorize skills, it’s important to review job descriptions as well to ensure they’re up-to-date and don’t include any unnecessary skills or vague requirements. It’s also crucial to evaluate how your job descriptions are written to ensure you’re drawing in the right talent for open roles. Wording job descriptions can be especially tricky when it comes to soft skills. For example, if your organization values someone who’s humble or savvy, you’ll need to identify how that translates to a skill you can list on a job description and, eventually, verify, says Hannah Johnson, senior VP for strategy and market development at IT trade association CompTIA.

Could California's AI Bill Be a Blueprint for Future AI Regulation?

“If approved, legislation in an influential state like California could help to establish industry best practices and norms for the safe and responsible use of AI,” Ashley Casovan, managing director of the AI Governance Center at the nonprofit International Association of Privacy Professionals (IAPP), says in an email interview. California is hardly the only place with AI regulation on its radar. The EU AI Act passed earlier this year. The US federal government released an AI Bill of Rights, though this serves as guidance rather than regulation. Colorado and Utah have enacted laws governing the use of AI systems. “I expect that there will be more domain-specific or technology-specific legislation for AI emerging from all of the states in the coming year,” says Casovan. However quickly new AI legislation, and the debate that accompanies it, seems to pop up, AI moves faster. “The biggest challenge here…is that the law has to be broad enough, because if it's too specific, maybe by the time it passes, it is already not relevant,” says Ruzzi. Another big part of the AI regulation challenge is agreeing on what safety in AI even means. “What safety means is…very multifaceted and ill-defined right now,” says Vartak.

Why and How to Secure GenAI Investments From Day Zero

Because GenAI remains a relatively novel concept that many companies are officially using only in limited contexts, it can be tempting for business decision-makers to ignore or downplay the security stakes of GenAI for the time being. They assume there will be time to figure out how to secure large language models (LLMs) and mitigate data privacy risks later, once they’ve established basic GenAI use cases and strategies. Unfortunately, this attitude toward GenAI is a huge mistake, to put it mildly. It’s like learning to pilot a ship without thinking about what you’ll do if the ship sinks, or taking up a high-intensity sport without figuring out how to protect yourself from injury until you’ve already broken a bone. A healthier approach to GenAI is one in which organizations build security protections in from the start. Here’s why, along with tips on how to integrate security into your organization’s GenAI strategy from day zero. ... GenAI security and data privacy challenges exist regardless of the extent to which an organization has adopted GenAI or which types of use cases it’s targeting. They don’t only matter for companies making heavy use of AI or using AI in domains where special security, privacy, or compliance risks apply.
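One concrete day-zero protection is to strip obvious personal data from prompts before they ever reach an external LLM. The sketch below is illustrative only: the `PII_PATTERNS` table and the `redact` function are hypothetical, and the regular expressions catch only a few simple formats (emails, US-style phone numbers and Social Security numbers). A production control would need a far broader, vetted detector.

```python
import re

# Hypothetical, deliberately small pattern set; real deployments need far more
# (names, addresses, account numbers, API keys, and so on).
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each PII match with a [LABEL] placeholder and count the hits."""
    counts = {}
    for label, pattern in PII_PATTERNS.items():
        prompt, n = pattern.subn(f"[{label}]", prompt)
        counts[label] = n
    return prompt, counts


if __name__ == "__main__":
    text, counts = redact("Contact jane.doe@example.com or 555-123-4567.")
    print(text)    # Contact [EMAIL] or [PHONE].
    print(counts)
```

The returned counts can feed logging or a policy decision (for example, blocking a prompt outright if it contains an SSN) without recording the sensitive values themselves.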

US, UK and EU sign on to the Council of Europe’s high-level AI safety treaty

The high-level treaty sets out to focus on how AI intersects with three main areas: human rights, which includes protecting against data misuse and discrimination, and ensuring privacy; protecting democracy; and protecting the “rule of law.” Essentially, the third of these commits signing countries to setting up regulators to protect against “AI risks.” The more specific aim of the treaty is as lofty as the areas it hopes to address. “The treaty provides a legal framework covering the entire lifecycle of AI systems,” the COE notes. “It promotes AI progress and innovation, while managing the risks it may pose to human rights, democracy and the rule of law. To stand the test of time, it is technology-neutral.” ... The idea seems to be that if AI does represent a mammoth change to how the world operates, not all of those changes may turn out for the best unless they are watched carefully, so it’s important to be proactive. However, there is also clearly nervousness among regulators about overstepping the mark and being accused of crimping innovation by acting too early or applying too broad a brush. AI companies have also jumped in early to proclaim that they, too, are just as interested in what’s come to be described as AI Safety.

Fight Against Ransomware and Data Threats

Ransomware as a Service (RaaS) is becoming a massive industry. The tools to create ransomware attacks are readily available online, and it’s becoming easier for people, even those with limited technical skills, to launch attacks. We have the largest pool of software developers in the world, and unfortunately, a small portion of them see ransomware as a way to make easy money. There are even reports of recruitment drives in certain states to hire engineers or tech-savvy individuals to develop ransomware. ... The industries most affected by ransomware tend to be those that are heavily regulated, such as BFSI (banking, financial services, and insurance) and healthcare. These industries deal with highly valuable, critical data, which makes them prime targets for attackers. Because of the sensitive nature of the data they handle, these organizations are often willing to pay the ransom to get it back. The reason these industries are so heavily regulated is that they’re dealing with data that is more critical than in other industries. Healthcare companies, for example, are regulated by agencies like the FDA in the U.S. and its Indian equivalent. In India, financial services are regulated by the RBI or SEBI.

Cloud Security Assurance: Is Automation Changing the Game?

For cloud workloads, security assurance teams must assess and gather evidence for each component’s adherence to security standards, including for components and configurations the cloud provider runs. Luckily, cloud providers offer downloadable assurance and compliance certificates. These certificates and reports are essential for the cloud providers’ business: larger customers, especially, work only with vendors that adhere to the standards relevant to them. The exact standards vary by the customers’ jurisdiction and industry. Figure 3 illustrates, using Azure as an example, the extensive range of global, country-specific, and industry-specific standards for which a provider offers downloadable reports to its customers and prospects. ... These cloud security assurance reports cover the infrastructure layer and the security of the cloud provider’s IaaS, PaaS, and SaaS services. They do not cover customer-specific configurations, patching, or operations, such as securing AWS S3 buckets against unauthorized access or patching VMs (Figure 4). Whether customers configure these services securely and combine them appropriately is in the customers’ hands, and the customer security assurance team must validate that.
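As a small example of the customer-side validation described above, the sketch below checks S3 buckets for disabled public access block settings. It works on plain dictionaries shaped like the response of boto3’s `get_public_access_block` call (key `PublicAccessBlockConfiguration` with four boolean flags); fetching those responses is left out so the sketch runs offline, and `public_access_findings` is a hypothetical helper name. A `None` value stands in for a bucket with no configuration recorded. This is one check among many: a real assurance review would also examine bucket policies, ACLs, and account-level settings.

```python
# The four boolean flags of an S3 Public Access Block configuration.
REQUIRED_FLAGS = (
    "BlockPublicAcls",
    "IgnorePublicAcls",
    "BlockPublicPolicy",
    "RestrictPublicBuckets",
)


def public_access_findings(bucket_configs: dict) -> dict:
    """Return, per bucket, the public access block flags that are not enabled.

    A missing configuration (None) is treated as all four flags disabled.
    """
    findings = {}
    for bucket, response in bucket_configs.items():
        block = (response or {}).get("PublicAccessBlockConfiguration", {})
        missing = [flag for flag in REQUIRED_FLAGS if not block.get(flag, False)]
        if missing:
            findings[bucket] = missing
    return findings


if __name__ == "__main__":
    # Hypothetical bucket names and settings, for illustration only.
    configs = {
        "logs": {"PublicAccessBlockConfiguration": {f: True for f in REQUIRED_FLAGS}},
        "scratch": None,
    }
    print(public_access_findings(configs))
```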

The Road from Chatbots and Co-Pilots to LAMs and AI Agents

“We are beginning an evolution from knowledge-based, gen-AI-powered tools (say, chatbots that answer questions and generate content) to gen-AI-enabled ‘agents’ that use foundation models to execute complex, multistep workflows across a digital world,” analysts with the consulting giant write. “In short, the technology is moving from thought to action.” AI agents, McKinsey says, will be able to automate “complex and open-ended use cases” thanks to three characteristics they possess: the capability to manage multiplicity, the capability to be directed by natural language, and the capability to work with existing software tools and platforms. ... “Although agent technology is quite nascent, increasing investments in these tools could result in agentic systems achieving notable milestones and being deployed at scale over the next few years,” the company writes. PC acknowledges that there are some challenges to building automated applications with the LAM (large action model) architecture at this point. LLMs are probabilistic and can sometimes go off the rails, so it’s important to keep them on track by combining them with classical programming that uses deterministic techniques.
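One common way to pair a probabilistic model with deterministic code is to treat the model’s output as untrusted input: parse it, check it against an allow-list, retry a bounded number of times, and fall back to a safe default. The sketch below assumes the model is asked to reply with JSON such as `{"action": ..., "args": {...}}`; `call_llm`, the action names, and the `escalate_to_human` fallback are all hypothetical placeholders, not part of any particular LAM or agent framework.

```python
import json
from typing import Callable, Optional

# Hypothetical allow-list of actions the agent is permitted to take.
ALLOWED_ACTIONS = {"create_ticket", "send_email", "lookup_order"}


def parse_action(raw: str) -> Optional[dict]:
    """Deterministically validate model output; return None if it is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return None
    if not isinstance(data.get("args"), dict):
        return None
    return data


def plan_step(call_llm: Callable[[str], str], prompt: str, max_attempts: int = 3) -> dict:
    """Ask the model for an action, retrying until its output passes validation."""
    for _ in range(max_attempts):
        action = parse_action(call_llm(prompt))
        if action is not None:
            return action
    # Deterministic fallback: never execute an action that failed validation.
    return {"action": "escalate_to_human", "args": {"prompt": prompt}}
```

The model still decides *what* to do, but only ordinary code decides whether that decision is well-formed and permitted, which bounds how far the system can go off the rails.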

Are you ready for data hyperaggregation?

Data hyperaggregation is not simply a technological advancement. It’s a strategic initiative that aligns with the broader trend of digital transformation. Its ability to provide a unified view of disparate data sources empowers organizations to harness their data effectively, driving innovation and creating competitive advantages in the digital landscape. As the field continues to evolve, the fusion of data hyperaggregation with cutting-edge technologies will undoubtedly shape the future of cloud computing and enterprise data strategies. The problems and solutions related to enterprise data aggregation are familiar. Indeed, I wrote books about it in the 1990s. In 2024, we still can’t get it right. The problems have actually gotten much worse with the addition of cloud providers and the unwillingness to break down data silos within enterprises. Things didn’t get simpler; they got more complex. Now, AI needs access to most data sources that enterprises maintain. Because universal access methodologies still don’t exist, we invented a new buzzword, “data hyperaggregation.” If this iteration of data gathering catches on, we get to solve the disparate data problem for more reasons than just AI. I hold out hope. Am I naive? We’ll see.
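The source does not describe a specific hyperaggregation product or API, so the following is only a generic sketch of what a “unified view of disparate data sources” involves at its simplest: merging records from several sources on a shared key while keeping track of where each field came from and where sources disagree. The source names (`crm`, `billing`), the `customer_id` key, and the first-source-wins rule are hypothetical choices for illustration.

```python
from collections import defaultdict


def unify(sources: dict, key: str) -> dict:
    """Merge records from several sources into one entry per key value.

    The first source to supply a field wins; later disagreements are
    recorded as (field, winning_source, conflicting_source) tuples.
    """
    unified = defaultdict(lambda: {"fields": {}, "sources": {}, "conflicts": []})
    for source_name, records in sources.items():
        for record in records:
            entity = unified[record[key]]
            for field, value in record.items():
                if field == key:
                    continue
                if field not in entity["fields"]:
                    entity["fields"][field] = value
                    entity["sources"][field] = source_name
                elif entity["fields"][field] != value:
                    entity["conflicts"].append((field, entity["sources"][field], source_name))
    return dict(unified)


if __name__ == "__main__":
    sources = {
        "crm": [{"customer_id": 1, "name": "Acme", "region": "EU"}],
        "billing": [{"customer_id": 1, "plan": "pro", "region": "US"}],
    }
    print(unify(sources, "customer_id"))
```

Recording provenance and conflicts, rather than silently overwriting, matters when the unified view feeds AI workloads: a model consuming the merged record cannot tell that two systems disagreed unless that fact is preserved.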

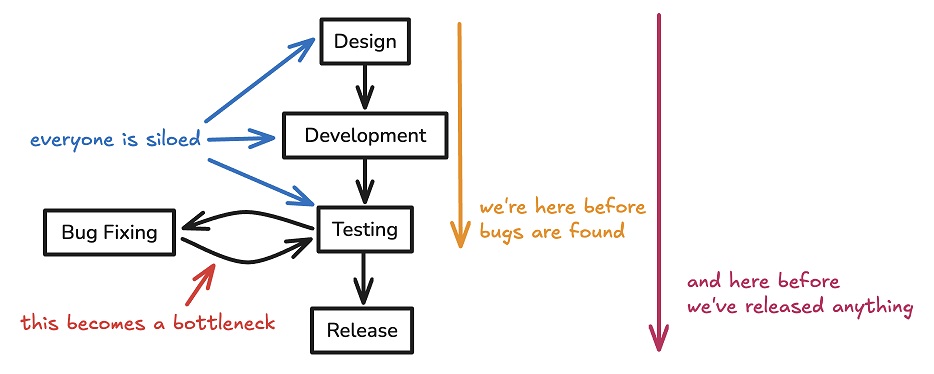

Unlock Business Value Through Effective DevOps Infrastructure Management

Whatever mix of architectures an organization uses, however, the best strategy is rooted in its specific needs, with a focus on profitability and customer satisfaction. Overly complex systems not only cost more but also reduce return on investment (ROI) and efficiency. Innovation delivers services to customers faster and more efficiently than before. With the plethora of technologies available today, it's imperative for organizations to be clear about what provides real value so they can reduce the cost and time spent on infrastructure issues. ... Adopting DevOps infrastructure management practices encourages the use of solutions like infrastructure as code (IaC), making deployments more repeatable, scalable, and reliable. Automation and continuous monitoring free up resources to focus on a broader range of priorities, including security, developer experience, and time to market. Robust documentation processes are critical to preserving this culture of continuous improvement, efficiency, and productivity over time. Should a project be handed to a new team, documentation helps maintain continuity and can reveal historical inefficiencies or issues.
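Much of IaC’s repeatability comes from a declarative model: you describe the desired state, a tool compares it with what actually exists, and only the difference is applied, so running the same deployment twice changes nothing the second time. The sketch below shows that plan-and-converge idea in miniature. It is not the API of Terraform or any other tool; `plan`, `apply`, and the resource names and specs are hypothetical, and real tools also handle dependencies, provider calls, and partial failures.

```python
def plan(desired: dict, actual: dict) -> dict:
    """Compare declared resources with what exists and list the changes needed."""
    return {
        "create": sorted(n for n in desired if n not in actual),
        "update": sorted(n for n in desired if n in actual and desired[n] != actual[n]),
        "delete": sorted(n for n in actual if n not in desired),
    }


def apply(desired: dict, actual: dict) -> dict:
    """Converge a copy of the actual state on the desired state and return it."""
    state = {name: dict(spec) for name, spec in actual.items()}
    changes = plan(desired, state)
    for name in changes["create"] + changes["update"]:
        state[name] = dict(desired[name])
    for name in changes["delete"]:
        del state[name]
    return state


if __name__ == "__main__":
    desired = {"web-vm": {"size": "m", "count": 2}, "db": {"size": "l"}}
    actual = {"web-vm": {"size": "s", "count": 2}, "old-cache": {"size": "s"}}
    print(plan(desired, actual))
    state = apply(desired, actual)
    print(plan(desired, state))  # empty plan: a second run is a no-op
```

Because the desired state lives in version-controlled files, the same files double as part of the documentation a new team inherits.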

Quote for the day:

“People are not lazy. They simply have impotent goals – that is, goals that do not inspire them.” -- Tony Robbins