Quote for the day:

"If you care enough for a result, you will most certainly attain it." -- William James

The data center gold rush is warping reality

The real impact isn’t people—it’s power, land, transmission capacity, and

water. When you drop 10 massive facilities into a small grid, demand spikes

don’t just happen inside the fence line. They ripple outward. Utilities must

upgrade substations, reinforce transmission lines, procure new-generation

equipment, and finance these investments. ... Here’s the part we don’t say out

loud often enough: High-tech companies are spending massive amounts of money

on data centers because the market rewards them for doing so. Capital

expenditures have become a kind of corporate signaling mechanism. On earnings

calls, “We’re investing aggressively” has become synonymous with “We’re

winning,” even when the investment is built on forecasts that are, at best,

optimistic and, at worst, indistinguishable from wishful thinking. ... The bet

is straightforward: When demand spikes, prices and utilization rise, and those

who built first make bank. Build the capacity, fill the capacity, charge a

premium for the scarce resource, and ride the next decade of digital

expansion. It’s the same playbook we’ve seen before in other infrastructure

booms, except this time the infrastructure is made of silicon and electrons,

and the pitch is wrapped in the language of transformation. ... Then there’s

the cost reality. AI systems, especially those that deliver meaningful,

production-grade outcomes, often cost five to ten times as much as traditional

systems once you account for compute, data movement, storage, tools, and the

people required to run them responsibly.

Chip-processing method could assist cryptography schemes to keep data secure

Just like each person has unique fingerprints, every CMOS chip has a

distinctive “fingerprint” caused by tiny, random manufacturing variations.

Engineers can leverage this unforgeable ID for authentication, to safeguard a

device from attackers trying to steal private data. But these cryptographic

schemes typically require secret information about a chip’s fingerprint to be

stored on a third-party server. This creates security vulnerabilities and

requires additional memory and computation. ... “The biggest advantage of this

security method is that we don’t need to store any information. All the

secrets will always remain safe inside the silicon. This can give a higher

level of security. As long as you have this digital key, you can always unlock

the door,” says Eunseok Lee, an electrical engineering and computer science

(EECS) graduate student and lead author of a paper on this security method.

... A chip’s PUF can be used to provide security just like the human

fingerprint identification system on a laptop or door panel. For

authentication, a server sends a request to the device, which responds with a

secret key based on its unique physical structure. If the key matches an

expected value, the server authenticates the device. But the PUF

authentication data must be registered and stored in a server for access

later, creating a potential security vulnerability.
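
The conventional challenge-response flow described above can be sketched roughly as follows. This is a simulation under stated assumptions, not the researchers' method: a real PUF derives its response from physical manufacturing variation, which is stood in for here by a random per-device secret passed through HMAC-SHA256. The names `SimulatedPUF`, `Server`, `enroll`, and `authenticate` are hypothetical.

```python
import hashlib
import hmac
import secrets


class SimulatedPUF:
    """Stand-in for a chip's physical fingerprint (assumption: a random secret)."""

    def __init__(self):
        # In real silicon this comes from manufacturing variation, not storage.
        self._fingerprint = secrets.token_bytes(32)

    def respond(self, challenge):
        # The response depends on the challenge and the device-unique fingerprint.
        return hmac.new(self._fingerprint, challenge, hashlib.sha256).digest()


class Server:
    """Conventional verifier that stores challenge-response pairs at enrollment."""

    def __init__(self):
        self._crp_table = {}  # device_id -> list of (challenge, expected_response)

    def enroll(self, device_id, puf, n_pairs=4):
        pairs = []
        for _ in range(n_pairs):
            challenge = secrets.token_bytes(16)
            pairs.append((challenge, puf.respond(challenge)))
        self._crp_table[device_id] = pairs

    def authenticate(self, device_id, puf):
        pairs = self._crp_table.get(device_id)
        if not pairs:
            return False
        challenge, expected = pairs.pop()  # use each pair once to resist replay
        return hmac.compare_digest(puf.respond(challenge), expected)


genuine = SimulatedPUF()
clone = SimulatedPUF()  # a different chip, so a different fingerprint
server = Server()
server.enroll("device-1", genuine)
genuine_ok = server.authenticate("device-1", genuine)
clone_ok = server.authenticate("device-1", clone)
```

In this sketch the server's `_crp_table` is the registered authentication data the excerpt identifies as the weak point: anyone who reads it can answer the stored challenges.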

What MCP Can and Cannot Do for Project Managers Today

The most mature MCPs for PM are official connectors from the platforms

themselves. Atlassian’s Rovo MCP Server connects Jira and Confluence,

generally available since late 2025. Wrike has its own MCP server for

real-time work management. Dart exposes task creation, updates, and querying

through MCP. ClickUp does not have an official MCP server, but multiple

community implementations wrap its API for task management, comments, docs,

and time tracking. ... Most PM work is human and stays human. No LLM replaces

the conversation where you talk a frustrated team member through a scope

change, or the negotiation where you push back on an unrealistic deadline from

the sponsor. No LLM runs a planning workshop or navigates the politics of

resource allocation. But woven through all of that is documentation. Every

conversation, every decision, every planning session produces written output.

The charter that captures what was agreed. ... Beyond documentation,

scheduling is where I expected MCP to add the most computational value. This

is where the investigation got interesting. Every PM builds schedules. The

standard method is CPM: define tasks, set dependencies, estimate durations,

calculate the critical path. MS Project does this. Primavera does this. A

spreadsheet with formulas does this. CPM is well understood and universally

used. CPM does exactly what it says: it calculates the critical path given

dependencies and durations.
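
The CPM steps listed above (tasks, dependencies, durations, critical path) can be sketched in a few lines. The task names and durations are invented for illustration, `critical_path` is a hypothetical helper rather than the API of MS Project or Primavera, and every dependency is assumed to be finish-to-start with no lag.

```python
from graphlib import TopologicalSorter


def critical_path(durations, predecessors):
    """Return (project_duration, critical_tasks) via a forward and backward pass."""
    graph = {task: set(predecessors.get(task, ())) for task in durations}
    order = list(TopologicalSorter(graph).static_order())  # predecessors first

    # Forward pass: earliest start and finish for each task.
    early_start, early_finish = {}, {}
    for task in order:
        early_start[task] = max((early_finish[p] for p in graph[task]), default=0)
        early_finish[task] = early_start[task] + durations[task]
    project_duration = max(early_finish.values())

    # Backward pass: latest start and finish that do not delay the project.
    successors = {task: [] for task in durations}
    for task, preds in graph.items():
        for p in preds:
            successors[p].append(task)
    late_start, late_finish = {}, {}
    for task in reversed(order):
        late_finish[task] = min(
            (late_start[s] for s in successors[task]), default=project_duration
        )
        late_start[task] = late_finish[task] - durations[task]

    # Tasks with zero total float form the critical path.
    critical = [t for t in order if late_start[t] == early_start[t]]
    return project_duration, critical


durations = {"charter": 2, "design": 5, "build": 8, "docs": 3, "launch": 1}
predecessors = {
    "design": ["charter"],
    "build": ["design"],
    "docs": ["design"],
    "launch": ["build", "docs"],
}
duration, path = critical_path(durations, predecessors)
print(duration, path)  # 16 ['charter', 'design', 'build', 'launch']
```

Here `docs` has five days of float, so it stays off the critical path even though `launch` depends on it.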

How to Write a Good Spec for AI Agents

Instead of overengineering upfront, begin with a clear goal statement and a

few core requirements. Treat this as a “product brief” and let the agent

generate a more elaborate spec from it. This leverages the AI’s strength in

elaboration while you maintain control of the direction. This works well

unless you already feel you have very specific technical requirements that

must be met from the start. ... Many developers using a strong model do

exactly this. The spec file persists between sessions, anchoring the AI

whenever work resumes on the project. This mitigates the forgetfulness that

can happen when the conversation history gets too long or when you have to

restart an agent. It’s akin to how one would use a product requirements

document (PRD) in a team: a reference that everyone (human or AI) can consult

to stay on track. ... Treat specs as “executable artifacts” tied to version

control and CI/CD. The GitHub Spec Kit uses a four-phase gated workflow that

makes your specification the center of your engineering process. Instead

of writing a spec and setting it aside, the spec drives the implementation,

checklists, and task breakdowns. Your primary role is to steer; the coding

agent does the bulk of the writing. ... Experienced AI engineers have learned

that trying to stuff the entire project into a single prompt or agent message

is a recipe for confusion. Not only do you risk hitting token limits; you also

risk the model losing focus due to the “curse of instructions”—too many

directives causing it to follow none of them well.

NIST’s Quantum Breakthrough: Single Photons Produced on a Chip

The arrival of quantum computing is in the future, but the threat is current.

Commercial and federal organizations need to protect against quantum computing

decryption now. Various new mathematical approaches have been developed for

PQC, but while they may be theoretically secure, they are not provably secure.

Ultimately, the only provably secure key distribution must be based on physics

rather than math. ... While this basic approach is secure, it is neither

efficient nor cheap. “Quantum key distribution is an expensive solution for

people that have really sensitive information,” continues Bruggeman. “So,

think military primarily, and some government agencies where nuclear weapons

and national security are involved.” Current implementations tend to use

available dark fiber that still has leasing costs. ... “The big advance from

NIST is they are able to provide single photons at a time, as opposed to

sending multiple photons,” continues Bruggeman. Single photons aren’t new, but

in the past, they’ve usually been photons in a stream of photons. “So, they

encode the key information on those strings, and that leads to replication.

And in cryptography, you don’t want to have replication of data.” There is

currently a comfort level in this redundancy, since if one photon in the

stream fails, the next one might succeed. But NIST has separately developed

Superconducting Nanowire Single-Photon Detectors (SNSPDs) which would allow

single photons to be reliably sent and received over longer distances – up to

600 miles.

Quantum security is turning into a supply chain problem

The core issue is timing. Sensitive supplier and contract data has a long shelf

life, and adversaries have already started collecting encrypted traffic for

future decryption. This is the “harvest now, decrypt later” model, where

encrypted records are stolen and stored until quantum computing becomes capable

of breaking current public-key encryption. That creates a practical security

problem for cybersecurity teams supporting procurement, third-party risk, and

supply chain operations. ... There’s growing pressure to adopt post-quantum

cryptography (PQC), including partner expectations, insurance scrutiny, and

regulatory direction. The researchers argue that PQC adoption is increasingly being driven

through procurement requirements, especially from large enterprises and

public-sector organizations. Vendors without a PQC roadmap may face longer

audits or disqualification during sourcing decisions. ... Beyond cryptographic

threats, the researchers argue that quantum computing may eventually improve

supply chain risk management by addressing complex optimization problems that

overwhelm classical systems. They describe supply chain risk as a “wicked

problem,” where variables shift continuously and disruptions propagate in

unpredictable ways. ... Quantum readiness spans both cybersecurity and supply

chain management. For cybersecurity professionals, the near-term work focuses on

long-term encryption durability across vendor ecosystems, along with

cryptographic migration planning and third-party dependencies.

CEOs aren't seeing any AI productivity gains, yet some tech industry leaders are still convinced AI will destroy white collar work within two years

Most companies are yet to record any AI productivity gains despite widespread

adoption of the technology. That's according to a massive survey by the US

National Bureau of Economic Research (NBER), which asked 6,000 executives from a

range of firms across the US, UK, Germany, and Australia how they use AI. The

study found 70% of companies actively use AI, but the picture is different among

execs themselves. Among top executives – including CFOs and CEOs – a quarter

don't use the technology at all, while two-thirds say they use it for 1.5 hours

a week at most. ... "The most commonly cited uses are ‘text generation using

large language models’ followed by ‘visual content creation’ and ‘data

processing using machine learning’," the survey added. When it comes to

employment savings, 90% of execs said they'd seen no impact from AI over the

last three years, with 89% saying they saw no productivity boost, either. The

report noted that previous studies have found large productivity gains in

specific settings – in particular customer support and writing tasks. ...

Despite the lack of impact to date, business leaders still predict AI will start

to boost productivity and reduce the number of employees needed in the coming

years. Respondents predict a 1.4% productivity boost and 0.8% increase in output

thanks to the technology over the next three years, for example. Yet the NBER

survey also reveals a "sizable gap in expectations", with senior execs saying AI

would cut employment by 0.7% over the next three years — which the report said

would mean 1.75 million fewer jobs.
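
The two figures in that last sentence imply a workforce base that the excerpt does not state. A quick check, assuming the 1.75 million figure is simply 0.7% of total employment across the surveyed economies (the variable names are illustrative):

```python
# Figures quoted in the excerpt.
projected_cut_share = 0.007       # 0.7% employment reduction over three years
projected_job_losses = 1_750_000  # "1.75 million fewer jobs"

# Implied employment base, assuming losses = share * base.
implied_base = round(projected_job_losses / projected_cut_share)
print(f"{implied_base:,}")  # 250,000,000
```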

Observability Without Cost Telemetry Is Broken Engineering

Cost isn't an operational afterthought. It's a signal as essential as CPU saturation or memory pressure, yet we've architected it out of the feedback loop engineers actually use. ... Engineers started evaluating architectural choices through a cost lens without needing MBA training. “Should we cache this aggressively?” became answerable with data: cache infrastructure costs $X/month, API calls saved cost $Y/month, net impact is measurable, not theoretical. ... The anti-pattern I see most often is siloed visibility. Finance gets billing dashboards. SREs get operational dashboards. Developers get APM traces. Nobody sees the intersection where cost and performance influence each other. You debug a performance issue, say, slow database queries. The fix is to add an index. Query time drops from 800 ms to 40 ms. Victory. Except the database is now using 30% more storage for that index, and your storage tier bills by the gigabyte-month. If you're on a flat-rate hosting plan, maybe that cost is absorbed. If you're on Aurora or Cosmos DB with per-IOPS pricing, you've just traded latency for dollars. Without cost telemetry, you won't notice until the bill arrives. ... Alerting without cost dimensions misses failure modes. Your error rate is fine. Latency is stable. But egress costs just doubled because a misconfigured service is downloading the same 200 GB dataset on every request instead of caching it.

A New Way To Read the “Unreadable” Qubit Could Transform Quantum Technology

“Our work is pioneering because we demonstrate that we can access the

information stored in Majorana qubits using a new technique called quantum

capacitance,” continues the scientist, who explains that this technique “acts as

a global probe sensitive to the overall state of the system.” ... To better

understand this achievement, Aguado explains that topological qubits are “like

safe boxes for quantum information,” only that, instead of storing data in a

specific location, “they distribute it non-locally across a pair of special

states, known as Majorana zero modes.” That unusual structure is what makes them

attractive for quantum computing. “They are inherently robust against local

noise that produces decoherence, since to corrupt the information, a failure

would have to affect the system globally.” In other words, small disturbances

are unlikely to disrupt the stored information. Yet this strength has also

created a major experimental challenge. As Aguado notes, “this same virtue had

become their experimental Achilles’ heel: how do you ‘read’ or ‘detect’ a

property that doesn’t reside at any specific point?” ... The project

brings together an advanced experimental platform developed primarily at Delft

University of Technology and theoretical work carried out by ICMM-CSIC.

According to the authors, this theoretical input was “crucial for understanding

this highly sophisticated experiment,” highlighting the importance of close

collaboration between theory and experiment in pushing quantum technology

forward.

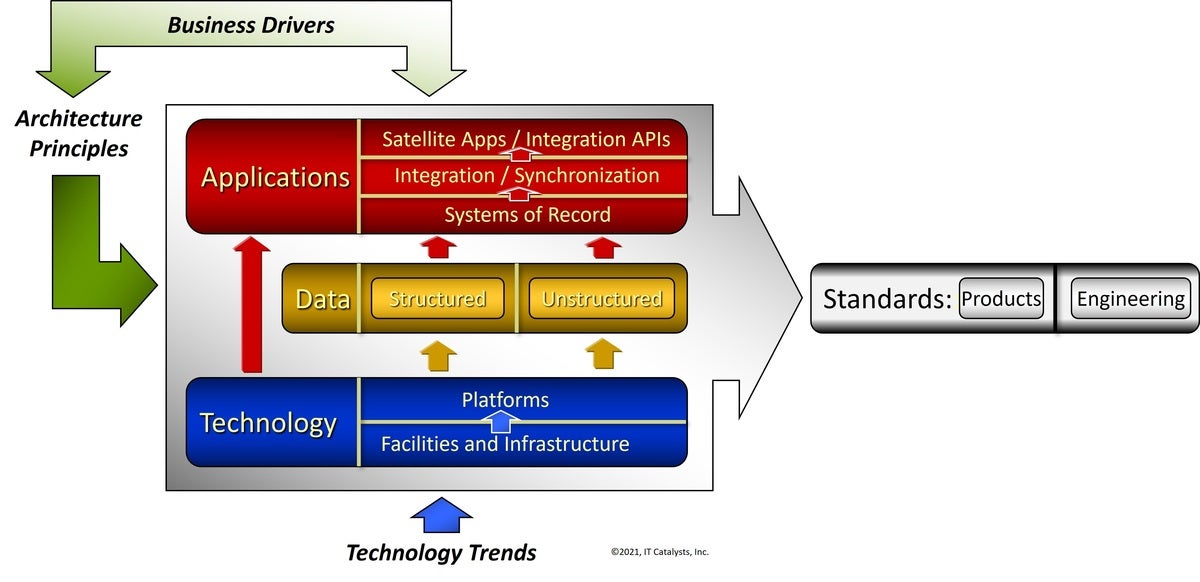

When Excellent Technology Architecture Fails to Deliver Business Results

Industry research consistently shows that most large-scale transformations fail

to achieve their expected business outcomes, even when the underlying technology

decisions are considered sound. This suggests that the issue is not technical

quality. It is structural. ... The real divergence begins later, in day-to-day

decision-making. Under delivery pressure, teams make choices driven by

deadlines, budget constraints, and individual accountability. Temporary

workarounds are accepted. Deviations are justified as exceptions. Risks are

taken implicitly rather than explicitly assessed. Architecture is often aware of

these decisions, but it is not structurally embedded in the moment where choices

are made. As a result, architecture remains correct, but unused. ... When

architecture cannot explain the economic and operational consequences of a

decision, it loses relevance. Statements such as “this violates architectural

principles” carry little weight if they are not translated into impact on cost

of change, delivery speed, or operational risk. ... What is critical is that

these compromises are rarely tracked, assessed cumulatively, or reintroduced

into management discussions. Architecture may be aware of them, but without a

mechanism to record and govern them, their impact remains invisible until

flexibility is lost and change becomes expensive. Architecture debt, in this

sense, is not a technical failure. It is a governance outcome. When decision

trade-offs remain unmanaged, architecture is blamed for consequences it was

never empowered to influence.
