Quote for the day:

"Little minds are tamed and subdued by misfortune; but great minds rise above it." -- Washington Irving

New Research Highlights Growing Digital Trust Crisis as AI Accelerates Online Threats

A recent report reveals that organizations are facing a mounting crisis of

digital trust as cyber threats increasingly move beyond traditional security

perimeters. Instead of merely attacking internal networks, attackers are now

targeting the public internet, focusing heavily on brand reputation, employee

identities, and customer relationships. The study found that while most

companies have experienced a significant security incident in the past year,

very few consider their defense programs mature enough to handle such incidents. The

rapid advancement of artificial intelligence is accelerating this shift.

Attackers are using AI tools to create highly convincing deepfakes, voice

clones, and impersonation campaigns, making it much harder for people to spot

fraud through simple errors like poor grammar. Furthermore, as businesses

adopt AI agents to automate everyday tasks, they expose themselves to new

risks. Malicious instructions can be cleverly hidden in external content,

tricking these automated systems into taking unintended actions at speeds

faster than humans can intervene. To counter these evolving threats,

organizations must move beyond protecting only top executives and begin

defending their entire workforce. Over the next few years, businesses that

apply the same strict oversight to their artificial intelligence systems as

they do to their standard access controls will be in a much stronger position

to protect their operations and maintain public confidence.
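One way to picture "the same strict oversight as standard access controls" for AI agents is a small action gate, sketched below in Python. This is an illustration only, not something described in the report: the `AUTO_APPROVED` set, the `ProposedAction` type, and the `decide` function are hypothetical names, and the policy itself is an assumption.

```python
from dataclasses import dataclass

# Hypothetical policy: low-risk actions an agent may take on its own.
AUTO_APPROVED = {"read_page", "summarize"}


@dataclass(frozen=True)
class ProposedAction:
    """An action the agent wants to take (illustrative fields only)."""
    name: str
    triggered_by_external_content: bool


def decide(action: ProposedAction) -> str:
    """Gate agent actions the way an access-control check would."""
    if action.name in AUTO_APPROVED:
        return "allow"
    # Instructions found in fetched pages, emails, or files are treated
    # as untrusted data, so they can never trigger a sensitive action.
    if action.triggered_by_external_content:
        return "block"
    # Everything else waits for a person, which keeps the agent from
    # acting faster than a human can intervene.
    return "needs_human_approval"
```

Under these assumptions, a payment request that originated in external content is blocked outright, while the same request from an internal workflow is held for human approval.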

The Invisible Invoice: The Cost of Building Software Without Understanding It

The software industry typically measures success by delivery speed and whether

an application works on launch day, but it rarely tracks the ongoing expense

of keeping it running years later. When teams build software without deeply

understanding the core business problem, they often rely on heavy, complicated

frameworks to speed up initial development. While these shortcuts might save a

few weeks upfront, they create an invisible invoice of hidden costs. Over

time, maintaining this code through security patches, version upgrades, and

changing requirements becomes incredibly expensive and drains precious time.

Because there is no alternative version of the same software to compare it

against, companies usually write off these escalating costs as unavoidable

technical debt or standard enterprise complexity. Building software is

ultimately a learning process where the true needs of the business are

discovered along the way. To avoid the invisible invoice trap, developers must

separate the strict rules of the business from the optional technical

plumbing. The primary goal should be to translate essential business logic

into a clear structure that both domain experts and programmers can easily

read and understand. By focusing intensely on the actual purpose of the

application rather than default technical conventions, teams can build

adaptable systems that evolve over time instead of rigid platforms that must

eventually be discarded.
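As a rough illustration of separating business rules from technical plumbing, the Python sketch below keeps one rule in a pure function and pushes storage behind a small interface. The domain (a late-payment fee), the 2% rate, and the names `Invoice`, `late_fee`, `InvoiceStore`, `InMemoryStore`, and `fee_for` are all hypothetical and do not come from the article.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import Protocol


@dataclass(frozen=True)
class Invoice:
    """Hypothetical domain object; fields are illustrative only."""
    amount: Decimal
    due: date


def late_fee(invoice: Invoice, today: date) -> Decimal:
    """Business rule (assumed for illustration): 2% of the amount once overdue.

    Pure function with no database, framework, or network dependency,
    so a domain expert can read it and a test can call it directly.
    """
    if today <= invoice.due:
        return Decimal("0.00")
    return (invoice.amount * Decimal("0.02")).quantize(Decimal("0.01"))


class InvoiceStore(Protocol):
    """Plumbing boundary: any storage technology can satisfy this."""
    def load(self, invoice_id: str) -> Invoice: ...


class InMemoryStore:
    """Replaceable plumbing; a real system might use a database instead."""
    def __init__(self, rows: dict[str, Invoice]) -> None:
        self._rows = rows

    def load(self, invoice_id: str) -> Invoice:
        return self._rows[invoice_id]


def fee_for(store: InvoiceStore, invoice_id: str, today: date) -> Decimal:
    """Thin glue: fetch through the plumbing, decide with the business rule."""
    return late_fee(store.load(invoice_id), today)
```

Because `late_fee` knows nothing about storage, swapping `InMemoryStore` for another backend would leave the rule untouched, which is the kind of adaptability the article argues for.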

The Scalable Innovation Playbook: Architecture Patterns, Governance, and Platforms

To successfully drive innovation at scale, organizations need a structured

approach that moves beyond temporary projects and isolated teams. The core of

this strategy relies on establishing flexible architecture patterns, practical

governance, and reliable internal platforms. Modern architecture patterns,

such as modular designs, allow development teams to build and modify

applications quickly without disrupting the entire system. However, this

flexibility requires clear governance to prevent operational chaos across the

business. Good governance acts as a set of helpful guardrails rather than a

rigid roadblock, ensuring that different teams follow consistent security

standards and reliable data practices without sacrificing their creative

independence. Supporting this critical balance are internal developer

platforms, which provide ready tools and infrastructure so engineers can focus

directly on solving core business problems instead of constantly setting up

basic software environments. By treating these platforms as internal products

built specifically for their own developers, companies greatly reduce wasted

effort and significantly speed up delivery times. Ultimately, scaling

innovation is not simply about adopting the newest technology trends, but

rather about creating a sustainable environment where technical teams have the

freedom to experiment safely. When architecture, governance, and platforms

work together smoothly, businesses can adapt to market changes and build new

solutions with predictable success and stability.

When Adopting AI-Powered Cyber Tools, Proceed With Caution

As cyber threats evolve to become faster and more sophisticated, organizations

increasingly need intelligent defensive systems to protect their networks.

Hackers are now using automated technology to find and exploit unseen

vulnerabilities rapidly, meaning manual patching and traditional security

measures are no longer enough to keep up. While it is necessary to deploy

intelligent countermeasures to detect and respond to these attacks,

organizations must proceed with careful planning rather than rushing into

blind implementation. A thoughtful adoption strategy involves three practical

steps. First, security teams must analyze their environment and identify the

most critical assets. Less vital systems, like standard employee workstations,

can be updated first with proper review, while highly sensitive infrastructure

requires a more cautious approach. Second, before allowing automated systems

to make live configuration changes, organizations should run simulations to

understand the potential impact on user access and business operations.

Finally, frequent backups and system snapshots must be scheduled early in the

deployment process. If a newly integrated security tool makes an unintended or

unauthorized change, these backups ensure teams can immediately restore their

systems to a secure baseline. Ultimately, keeping enterprise environments

secure requires strict technical limits and strong access controls. By

implementing these practical safeguards, organizations can safely integrate

modern defensive tools without jeopardizing their core operations.
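The three steps above (rank assets, simulate first, keep a restore point) can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the article's method: the `Tier` levels, the `Asset` type, and the functions `plan_rollout`, `simulate`, and `apply_with_rollback` are hypothetical, and a plain dictionary stands in for real system configuration.

```python
import copy
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Callable


class Tier(IntEnum):
    """Hypothetical criticality tiers; a lower value means less critical."""
    WORKSTATION = 1
    SERVER = 2
    CRITICAL = 3


@dataclass
class Asset:
    name: str
    tier: Tier
    config: dict = field(default_factory=dict)


def plan_rollout(assets: list[Asset]) -> list[Asset]:
    """Step 1: order assets so the least critical are changed first."""
    return sorted(assets, key=lambda a: a.tier)


def simulate(asset: Asset, change: dict) -> dict:
    """Step 2: preview the resulting config without touching the asset."""
    preview = copy.deepcopy(asset.config)
    preview.update(change)
    return preview


def apply_with_rollback(asset: Asset, change: dict,
                        verify: Callable[[Asset], bool]) -> bool:
    """Step 3: snapshot, apply, and restore the baseline if checks fail."""
    snapshot = copy.deepcopy(asset.config)  # restore point
    asset.config.update(change)
    if verify(asset):
        return True
    asset.config = snapshot  # back to the known-good baseline
    return False
```

In practice the `verify` callback would check user access and business operations after the change; here it is left to the caller because those checks are environment-specific.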

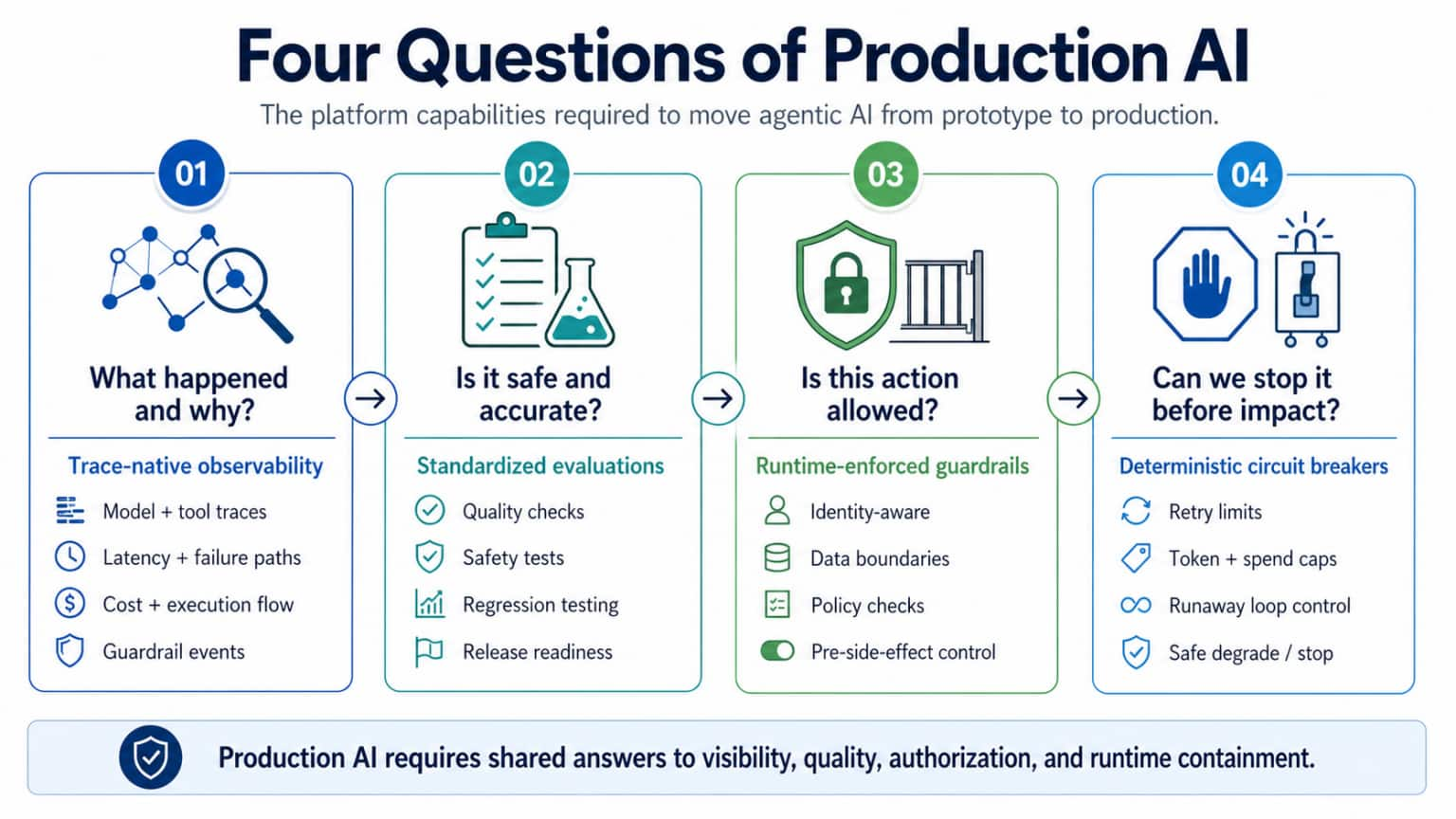

The Rise of the AI Development Life Cycle

Artificial intelligence is fundamentally changing how companies build

software, moving beyond simple coding assistants to a fully integrated AI

development life cycle. Initially, organizations saw modest productivity gains

by using AI to automate specific tasks like writing code or drafting tests.

Now, expectations are shifting toward a model where hybrid teams of humans and

AI handle entire workflows, potentially multiplying productivity several times

over. This evolution breaks down the traditional barriers between designing a

product and building it. Instead of moving in rigid, sequential steps, teams

can continuously define, develop, test, and refine software together. However,

many early efforts stall because companies focus too narrowly on isolated

tasks without updating their broader processes. To succeed, organizations must

undergo a complete structural change. This means adjusting team roles, such as

developers transitioning to orchestrators of AI tools, and establishing new

ways of working that prioritize clear instructions, continuous feedback, and

strict security rules. Furthermore, measuring success requires moving past

basic speed metrics. Companies must track system-wide outcomes, defect rates,

and overall risk to ensure that faster development does not introduce hidden

problems. Ultimately, adapting to this new era of software creation is not

simply a technology upgrade, but a comprehensive redesign of how a business

operates and delivers value.

House Subcommittee on Cybersecurity and Infrastructure Protection Hosts Hearing on AI Security

During a recent House Subcommittee hearing, lawmakers and industry experts gathered to discuss how artificial intelligence is changing national cybersecurity and the resilience of critical infrastructure. The primary focus was the dual nature of advanced AI models. While these tools offer practical defensive benefits by finding and fixing software vulnerabilities quickly, they also provide malicious actors with the ability to discover and exploit weaknesses faster than human teams can patch them. Representative Andy Ogles highlighted the specific risk of foreign adversaries, particularly China, distributing inexpensive, open models that lack safety controls and could become the global standard, introducing serious security and censorship risks. Sandra Joyce, an executive at Google Threat Intelligence, confirmed that cybercriminals have already begun using AI to build novel digital exploits. To counter these accelerating threats, experts advised that traditional, reactive security measures are no longer sufficient. Organizations must transition to an automated, continuous process of scanning and repairing vulnerabilities before attackers can take advantage of them. The hearing underscored the practical need for a cohesive national strategy that prioritizes building security into software from the very beginning. This approach will be essential for ensuring the United States maintains a defensive advantage against increasingly autonomous cyber threats.

The article examines Europe's vulnerable position within the global

"sovereignty triangle," a difficult balancing act dominated by the United

States and China. As modern infrastructure becomes deeply tied to national

security and economic health, Europe finds itself heavily reliant on foreign

products, particularly American cloud networks and Asian computer chips. The

piece argues that to avoid remaining a mere consumer of foreign tools, the

European Union must move past simply writing rules and regulations, such as

data privacy laws, and start actively building its own core technologies. This

shift requires overcoming divisions between member countries and committing to

serious financial investments in vital areas like artificial intelligence,

hardware manufacturing, and secure digital networks. True independence is not

about isolating from the world or closing borders, but having the practical

ability to make independent choices without being pressured by outside powers.

The text points out that Europe's best path forward involves smart

partnerships and industrial plans that encourage local development. By

creating solid alternatives and keeping strong alliances, Europe can protect

its political and economic freedom. Ultimately, this shared effort is

necessary to ensure the continent remains an equal player in shaping the

future, rather than just a rule maker caught between two massive powers.

How Capital Allocation Changes When Agents Run the Stack

As businesses increasingly adopt autonomous artificial intelligence for their

daily operations, chief information officers face a complex challenge in

managing shifting costs and maintaining accountability. According to Arun

Ramchandran, CEO at QBurst, true autonomous commerce is not just an advanced

rules engine; it represents a sophisticated system capable of handling complex

goals, research, and execution without constant human intervention. However,

many leaders mistakenly treat this transition purely as a technology project

rather than a fundamental organizational design overhaul. Deploying these

systems successfully requires addressing three major areas of complexity.

First, organizations need clean, deeply contextual data, which often means

capturing the unrecorded institutional knowledge that employees hold. Second,

a strict governance structure is necessary to define accountability when

different systems interact and to prevent runaway operational costs from

endless processing loops. Finally, companies must carefully design the handoff

between human workers and autonomous systems, ensuring humans remain

appropriately involved when needed. Evaluating the total cost of ownership for

these systems also proves uniquely difficult. Because processing costs are

dropping while usage is soaring, a financial model built on current

transaction rates quickly becomes unreliable. Ultimately,

building a reliable infrastructure for autonomous operations demands a highly

thoughtful approach to data management, clear governance, and well-designed

integration with human teams.
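The governance point about runaway costs from endless processing loops, together with the human handoff, can be sketched as a per-run budget in Python. This is a minimal sketch under stated assumptions: `RunBudget`, `BudgetExceeded`, and `run_agent` are hypothetical names, the limits are placeholders, and `step` stands in for whatever a real agent does on each iteration.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


class BudgetExceeded(RuntimeError):
    """Raised when an agent run crosses a configured limit."""


@dataclass
class RunBudget:
    """Hypothetical per-run limits; the numbers are placeholders."""
    max_steps: int
    max_cost: float
    steps: int = 0
    cost: float = 0.0

    def charge(self, step_cost: float) -> None:
        """Record one agent step and stop the run if a limit is crossed."""
        self.steps += 1
        self.cost += step_cost
        if self.steps > self.max_steps:
            raise BudgetExceeded(f"step limit {self.max_steps} exceeded")
        if self.cost > self.max_cost:
            raise BudgetExceeded(f"cost limit {self.max_cost} exceeded")


def run_agent(step: Callable[[], Tuple[bool, float]], budget: RunBudget) -> str:
    """Call step() until it reports done, or hand off to a human."""
    try:
        while True:
            done, step_cost = step()  # step returns (finished?, cost of this step)
            budget.charge(step_cost)
            if done:
                return "completed"
    except BudgetExceeded:
        # The handoff point: a person takes over instead of the loop continuing.
        return "escalated_to_human"
```

With a step function that never finishes, `run_agent(step, RunBudget(max_steps=5, max_cost=1.0))` returns `"escalated_to_human"` on the sixth step rather than looping indefinitely.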

How CIOs Can Prove the Value of Technology in the Age of AI

In today's fast-moving business landscape, technology leaders face increasing

pressure to justify their investments, especially as artificial intelligence

initiatives require significant capital. To successfully prove the value of

tech in the age of AI, Chief Information Officers must shift their focus from

traditional cost metrics to clear business outcomes. This means stepping away

from technical jargon and measuring success by how well technology improves

operational efficiency, drives revenue, or enhances the overall customer

experience. Instead of treating AI as a standalone project, technology leaders

should embed these tools directly into everyday business processes, ensuring

they solve real problems rather than just serving as interesting experiments.

Furthermore, proving value requires a strong partnership between the IT

department and other business units. CIOs need to collaborate closely with

finance and operations teams to establish shared goals and transparent

reporting frameworks. Building this trust also involves prioritizing human

elements, such as training employees to use new AI systems confidently, safely,

and effectively. This strategic alignment turns abstract concepts into

practical benefits. By connecting technology directly to core business

objectives and fostering a culture of cross-functional teamwork, CIOs can

demonstrate that their AI and technology investments are not merely expensive

operational costs, but essential drivers of long-term corporate growth and

sustainability.

CMMC Is Here, But AI Changes The Compliance Conversation

The integration of artificial intelligence into the defense sector offers

significant speed and convenience, but it also introduces serious compliance

risks under the Cybersecurity Maturity Model Certification (CMMC). As defense

contractors increasingly rely on coding assistants and chatbots to summarize

requirements or draft responses, they inadvertently create new, unmanaged data

environments. CMMC regulations demand strict accountability for sensitive

information, and these rules apply equally whether data is mishandled through

a traditional file share or a modern AI tool. Simply put, convenience is not

an acceptable security control. When employees upload technical notes or

contract details into an AI system, that information often becomes part of the

model's history, raising questions about data retention, access, and proper

handling. This exposure is especially critical across the supply chain, as a

single subcontractor using unauthorized AI can put an entire project at risk.

To navigate this safely, organizations must recognize that AI adoption

currently outpaces security maturity. They need to establish clear rules for

which AI tools are permissible and how they can be used. A responsible

approach requires implementing data classification guidelines, mandating human

reviews for AI-generated outputs, enforcing security standards across all

suppliers, and maintaining continuous oversight to ensure sensitive defense

information remains fully protected.
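Two of the controls listed above, an approved-tool list tied to data classification and mandatory human review of AI output, can be sketched in Python. Everything here is illustrative rather than a CMMC requirement: the `Label` levels, the tool names in `APPROVED_TOOLS`, and the functions `may_submit` and `release_output` are hypothetical.

```python
from enum import IntEnum
from typing import Optional


class Label(IntEnum):
    """Hypothetical classification levels, ordered by sensitivity."""
    PUBLIC = 0
    INTERNAL = 1
    CUI = 2  # Controlled Unclassified Information


# Hypothetical allow-list: the most sensitive label each tool may receive.
APPROVED_TOOLS = {
    "public-chatbot": Label.PUBLIC,
    "enclave-assistant": Label.CUI,
}


def may_submit(tool: str, label: Label) -> bool:
    """Allow a submission only to an approved tool cleared for the label."""
    ceiling = APPROVED_TOOLS.get(tool)
    return ceiling is not None and label <= ceiling


def release_output(text: str, reviewed_by: Optional[str]) -> str:
    """Require a named human reviewer before AI-generated output is used."""
    if not reviewed_by:
        raise PermissionError("AI-generated output needs human review")
    return text
```

Under these assumptions, an unlisted tool is refused even for public data, so a new AI tool has to be explicitly approved before anyone can use it.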