Quote for the day:

"You've got to get up every morning with determination if you're going to go to bed with satisfaction." -- George Lorimer

Cloud strategies have become more complicated than ever

Managing enterprise cloud infrastructure has shifted from simple migrations to

navigating a complex web of cost, regulation, and technical demands. While IT

leaders once felt they had cloud setups under control, the sudden rush to adopt

artificial intelligence has upended traditional architecture models, requiring

massive compute power and driving up expenses. Beyond the strain of artificial

intelligence, companies are trying to figure out exactly where workloads should

live, whether that means using public servers, private platforms, or returning

some systems back to local data centers. Budgeting has also turned into a

significant headache, as intricate vendor pricing structures can cause

unexpected spikes in monthly bills. This has forced technology and accounting

teams to work together much more closely to continually monitor spending rather

than reviewing it after the fact. Meanwhile, strict international data

sovereignty laws add more friction, forcing organizations to carefully track

where information is stored and processed to meet local legal requirements.

Experts suggest that instead of chasing every new technical trend, leaders

should focus on stable infrastructure planning, clear internal rules, and

building flexible teams that can pivot when conditions change. Ultimately, the

primary goal is no longer just about moving to the cloud, but learning how to

run it efficiently and sustainably over the long term.

Digital identity must be built for interoperability from day one, says Margins CEO

At the ID4Africa 2026 conference, Moses Kwesi Baiden Jnr., the chief executive

of Margins ID Group, explained why countries should design national digital

identity systems to work together across different sectors right from the

start. He noted that older, disconnected identity programs often lead to

isolated databases that cannot communicate with one another. This

fragmentation slows down digital commerce and hurts ordinary people, who face

slow public services and higher costs due to administrative inefficiencies. To

fix this, Baiden suggested that governments focus on building a single, highly

trusted legal identity instead of trying to link separate systems later.

According to him, this process is less about the underlying technology and

more about creating a clear legal and operational framework that matches a

country's constitution. As a practical example, he pointed to the Ghana Card

system, which his company developed. The system has enrolled over nineteen

million people into a unified database, allowing both public agencies and

private businesses to verify identities safely without duplicating data

collection. This central registry tracks individuals accurately and reduces

the weaknesses that usually appear when people must register multiple times

across different offices. By integrating multiple applications into one

physical and digital tool, this approach lowers administrative costs and makes

it easier for citizens to access everyday services securely.

7 tabletop exercise mistakes that sabotage incident response

Tabletop exercises are excellent for refining incident response strategies,

provided you avoid common pitfalls that compromise their value. The most

frequent misstep is running simulations without clear, measurable goals.

Without specific targets, exercises drift into vague discussions rather than

testing critical processes like legal notifications or executive decision

rights. Another error is relying on familiar scenarios with obvious solutions.

Real incidents are messy and ambiguous, so providing incomplete information

helps teams practice decision-making under uncertainty instead of just

recalling a playbook. Similarly, failing to design business-relevant hazards

can make the exercise feel like a chore. Simulations must reflect your actual

environment and industry threats, and include all relevant stakeholders to be

effective. If scenarios lack plausible technical details, participants may

dismiss them as a waste of time. You should also avoid guiding teams down a

predefined happy path, as this emphasizes simple recall rather than true

problem-solving. Furthermore, keeping exercises too conceptual ignores the

friction points that happen during real crises, such as figuring out who has

the authority to isolate critical systems. Finally, overlooking internal

dependencies builds false confidence. To ensure actual readiness, you need to

test the specific handoffs and communication chains unique to your business

rather than relying on a generic blueprint.

Europe’s sovereign cloud has a blind spot

Europe is spending billions to build a digital sovereign cloud, introducing

rigorous security certifications like France’s SecNumCloud to shield regional

data from U.S. legal reach. However, these efforts completely overlook a

critical hardware vulnerability. Almost all of this certified cloud

infrastructure runs on Intel or AMD processors, which feature hidden built-in

management engines that operate entirely outside the control of standard

operating systems or firewalls. Because recent U.S. surveillance laws now

explicitly cover hardware manufacturers, companies like Intel and AMD can be

legally forced to grant American intelligence agencies access to these

systems, regardless of where the servers are located or who manages them.

Since these embedded engines function autonomously with their own memory and

network connections, they bypass the software and organizational safeguards

that European certifications rely on. Security experts warn that this creates

a fundamental blind spot, as any traffic they generate is practically

invisible to normal monitoring tools. While some argue that strict network

isolation can limit this exposure, others emphasize that motivated

nation-states could easily bypass these defenses. Ultimately, until

competitive open-source hardware alternatives like RISC-V become a reality,

Europe is attempting to build an independent, sovereign cloud infrastructure

on top of hardware foundations it does not truly control.

Why AI Will Move to the Endpoint

Artificial intelligence is gradually transitioning from remote cloud servers

directly to local devices, driven by the need to resolve high processing costs

and significant privacy concerns. Currently, running models in the cloud

requires sending sensitive data outside a company network, which introduces

risk and steep operating expenses. However, hardware advances are making local

processing practical. Modern computers now include specialized processors

capable of handling smaller, optimized language models directly on the device.

Moving artificial intelligence to user devices provides concrete benefits,

including offline functionality, faster response times, and stronger security,

as data never leaves the local machine. It also allows the software to adapt

more closely to an individual's specific work habits, improving overall

efficiency and reducing the burden on technical support teams. While setting

up these local systems manually remains complex today, organizations can

overcome this by adopting an integrated management approach. A structured

setup would include components for handling data, managing the lifecycle of

the models, and enforcing strict security controls. By establishing this

coordinated architecture, companies can avoid hidden or uncontrolled software

usage. Ultimately, adopting local artificial intelligence eliminates recurring

cloud fees and keeps sensitive information secure, giving teams a practical

way to safely apply these tools to their daily work.

Better Than the Truth: From AI Hallucinations to Imaginations

While artificial intelligence hallucinations are widely viewed as problematic

errors that can damage professional reputations and spread false information,

they might actually hold practical value. When a system generates plausible

but incorrect responses, it usually stems from limited data and a design that

prioritizes coherent answers over exact facts. Naturally, this causes

frustration in fields requiring strict accuracy, such as law and medicine.

However, these unintended inventions can sometimes spark genuine creativity.

Rather than simply dismissing them as mistakes, we can view them as a form of

automated imagination. For example, when artificial intelligence fabricates a

trend or invents a realistic book title based on a writer's background, it can

inspire researchers to explore ideas they might not have considered otherwise.

This suggests a potential future where software offers a deliberate

imagination feature alongside traditional factual searches. If developers

separate functions that search for facts from creative generation, users could

intentionally ask systems to invent alternate histories, draft narratives from

past events, or predict unconventional future scenarios. By doing so, the flaw

of generating false data becomes a useful tool. Instead of restricting

artificial intelligence strictly to established facts, allowing it to imagine

could help people see the world from different perspectives and enrich their

own thinking.

Why Firms Struggle With Vendor Security After They Sign

A recent study by the research firm KLAS shows that while healthcare

organizations are improving at vetting third-party vendors before signing

contracts, they still struggle significantly to monitor those partners'

security over the long term. This lack of continuous oversight represents a

major safety flaw, especially since a prior survey revealed that three out of

four healthcare organizations suffered a vendor-related data breach within a

brief two-year window. The study indicates that companies pour substantial

resources into initial evaluations but frequently neglect checking on partners

after the deal is done. Consequently, unexpected risks crop up later through

regular software updates, business disruptions, or shifting safety rules.

Security experts point to several common internal issues causing this

disconnect, including a lack of executive leadership support, an absence of

organized systems to prioritize high-risk partners, and insufficient tracking

of sensitive patient records. Furthermore, many organizations fail to strictly

mandate or enforce standard technical protections like multifactor

authentication and data encryption. These oversight gaps are particularly

severe for smaller healthcare providers, which generally have fewer resources

but often serve as easy entry points for digital attackers trying to reach

larger networks. Ultimately, the report emphasizes that senior

executives and boards of directors hold full responsibility for addressing

these ongoing vendor threats.

The Hidden Knowledge Debt Behind QA Outsourcing

In an article for Software Testing Magazine, Ann-Sofie Ollikainen outlines the

hidden risks companies face when they outsource software quality assurance

solely to lower operational costs. While third-party providers often promise

guaranteed quality based on predefined test cases and standardized metrics,

this transactional approach creates an invisible liability known as knowledge

debt. By shifting testing to external teams, organizations lose the deep

product context and historical understanding that internal teams develop

through long-term exposure to a system. External testers can technically

fulfill their contract requirements by running standard tests, yet they

frequently miss complex, structural defects because they do not understand why

specific features were built a certain way. This systemic loss of context

eventually leads to costly consequences, including repeated software

regressions, delayed product releases, slow problem-solving, and consumer

frustration. The author notes that organizations do not need to abandon

outsourcing entirely, but they must stop treating software testing as a mere

checkbox at the end of a project. Instead, sustainable software quality

requires a careful balance between immediate cost savings and long-term

product stability, ensuring that testing remains deeply connected to the

overall development process, business requirements, and product evolution over

time.

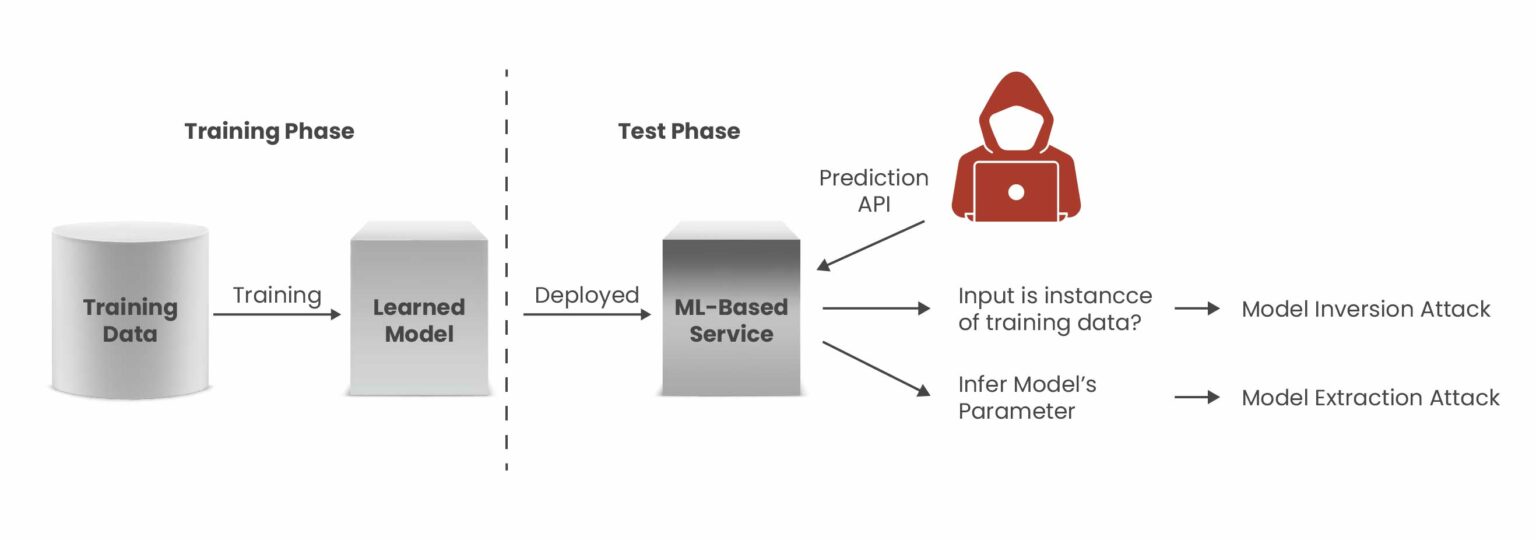

AI is shrinking attack windows, and it’s forcing a complete rethink of cyber resilience

The ITPro article outlines how the rapid acceleration of AI is reshaping

corporate cybersecurity by significantly shortening remediation windows.

Advanced models are discovering system vulnerabilities at an unprecedented

rate, enabling threat actors to automate and launch exploits almost instantly.

Security experts argue that this dramatic collapse in traditional response

times makes cyber resilience a fundamental daily operational requirement

rather than a plan used only after an incident occurs. To navigate this

changing threat landscape securely, organizations are advised to implement a

structured resilience framework based on four distinct steps. First, companies

should evaluate their recovery risks by thoroughly analyzing how existing

continuity plans hold up under rapid digital disruption. Second, isolating

critical backups from main corporate networks ensures clean fallback options

if defensive patching routines cannot keep pace. Third, teams must establish

strict recovery priorities for business critical services, taking care to map

out modern infrastructure components like data pipelines and machine learning

repositories. Finally, automating threat scanning and system restoration helps

reduce human delay while maintaining thorough, regular testing schedules. By

adopting these pragmatic, continuous validation measures, businesses can

confidently secure their essential operations and handle the complexities of

evolving software tools without overwhelming their defensive capabilities.

Why Vector Search Alone Isn't Enough: Hybrid Retrieval for RAG

When building internal search systems using Retrieval-Augmented Generation,

many engineering teams rely entirely on vector search. While vector embeddings

are excellent at finding general themes and similar concepts, they often

struggle with precision. Because embeddings function as approximation engines,

they cannot easily distinguish between exact details like version numbers,

error codes, or specific operational commands. For example, a search for a

runbook to enable a feature might return a document on how to disable it,

simply because the texts are semantically similar and occupy nearly the exact

same space in the embedding model. To solve this problem, developers need to

implement a hybrid retrieval stack. Rather than discarding vector search, you

pair it with traditional keyword matching functions like BM25. This ranking

function provides the specific precision that embeddings lack by weighting

rare distinguishing terms and adjusting for document length. By combining both

methods, you achieve strong conceptual relevance and exact term matching. To

merge these two different scoring systems without complex score normalization,

you can use Reciprocal Rank Fusion, which evaluates results based purely on

their rank positions. A mature retrieval architecture layers these approaches,

often followed by a final reranking stage to ensure the most accurate context

reaches the language model.
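
The fusion step described above can be sketched in a few lines of Python. This is a minimal illustration and not the article's implementation: the function name, the document IDs, and the two result lists are hypothetical, and the constant k=60 is a commonly used default assumed here, not a value the article states. Each document earns 1 / (k + rank) from every list it appears in, so only rank positions matter and the raw BM25 and embedding scores never need to be normalized.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document scores the sum of 1 / (k + rank) over the lists it
    appears in, with rank starting at 1. Raw retriever scores are ignored.
    k=60 is an assumed, conventional default.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so output is deterministic.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for a query such as "runbook to enable feature X".
# The vector retriever ranks the semantically similar "disable" runbook first;
# the keyword retriever ranks the exact-term match first.
vector_hits = ["disable-runbook", "enable-runbook", "overview"]
bm25_hits = ["enable-runbook", "faq", "disable-runbook"]

fused = reciprocal_rank_fusion([vector_hits, bm25_hits])
print(fused)
```

In this toy case the "enable" runbook moves to the top because it ranks well in both lists, while the "disable" runbook drops to second. A production stack would typically pass the fused list on to the reranking stage mentioned above.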
