Quote for the day:

“Make sure you don’t start seeing yourself through the eyes of those who don’t value you.” -- Anonymous


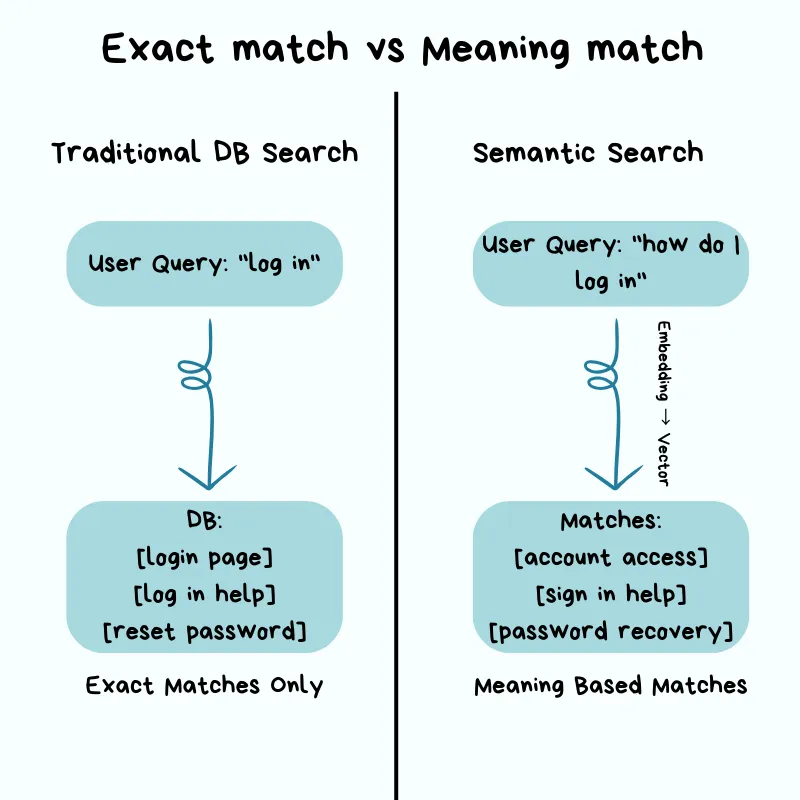

AI observability: How CIOs can see past their org blind spots

The article discusses AI observability, highlighting how traditional IT monitoring tools are insufficient for evaluating artificial intelligence performance. As AI applications expand across modern businesses, CIOs frequently struggle with deep blind spots regarding system usage, model drift, performance degradation, and unauthorized "shadow AI" tools. Unlike standard software that relies on predictable metrics like uptime, AI systems operate probabilistically, meaning the exact same inputs can yield wildly varying outcomes. This inherent unpredictability creates compounding risks, especially as enterprises connect multiple autonomous agents into complex workflows where minor data issues can quietly corrupt downstream results for weeks before finally breaking. To address these organizational vulnerabilities, experts suggest shifting from front-loaded risk assessments to continuous, full-stack visibility. This comprehensive approach involves setting up automated guardrails for model outputs, maintaining a clear catalog of active systems, and establishing an integrated control plane. By compiling system telemetry, semantic mapping, and risk thresholds into a single shared interface, different corporate stakeholders, such as finance, human resources, and security teams, can easily monitor the metrics relevant to their own departments. Ultimately, treating observability as a core design principle rather than an afterthought enables leadership to safely scale their AI initiatives, manage ballooning costs, and build lasting organizational trust.
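
As a rough illustration of what "automated guardrails for model outputs" and "risk thresholds" might look like in practice, here is a minimal Python sketch. It is not taken from the article: the function names (`check_output`, `drift_alert`), the confidence threshold, the banned-term list, and the drift tolerance are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class GuardrailResult:
    allowed: bool
    reasons: list[str]


def check_output(text: str, confidence: float, *,
                 min_confidence: float = 0.7,
                 banned_terms: tuple[str, ...] = ("ssn", "password")) -> GuardrailResult:
    """Apply two simple, hypothetical guardrails to one model output."""
    reasons = []
    # Guardrail 1: block answers the model itself scored as low confidence.
    if confidence < min_confidence:
        reasons.append("low confidence")
    # Guardrail 2: block answers that mention any banned term.
    lowered = text.lower()
    for term in banned_terms:
        if term in lowered:
            reasons.append(f"banned term: {term}")
    return GuardrailResult(allowed=not reasons, reasons=reasons)


def drift_alert(baseline: list[float], recent: list[float],
                tolerance: float = 0.1) -> bool:
    """Flag drift when the recent mean quality score moves more than
    `tolerance` away from the baseline mean (an assumed, simplistic rule)."""
    return abs(mean(recent) - mean(baseline)) > tolerance
```

A control plane of the kind the article describes would presumably record each `GuardrailResult` alongside cost and usage telemetry, so that each stakeholder can filter for the reasons relevant to their department.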

The Validation Gap Is Costing You More Than You Think

According to a report on software delivery, development teams are writing more code than ever, but less of it is actually reaching production. Analysis of millions of workflows reveals that while development throughput has spiked, main branch success rates have fallen to a five-year low of roughly seventy percent. This drop stems from a gap in how software is validated. Traditional continuous integration systems were designed for humans who commit code gradually. Today, automated artificial intelligence tools generate code at a rapid pace that completely overwhelms traditional review processes. When errors are caught late in the shared integration system, the result is expensive compute costs, wasted time, and broken focus, because the automated tools have already moved on to other tasks. To solve this dilemma, engineering teams must shift testing much earlier into the initial writing phase. By running smaller, targeted tests while the automated code generator is still actively focused on a task, teams can fix errors immediately without draining infrastructure resources. When this early testing stage and the final integration pipeline share historical information, the entire delivery system becomes smarter and more efficient. Ultimately, addressing this validation imbalance helps organizations safely increase their software output without absorbing downstream failures.
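
One way to picture "smaller, targeted tests" is a selector that maps changed files to only the tests known to exercise them. The sketch below is an assumption-laden illustration, not the report's method: `select_tests` and `test_index` are hypothetical names, and the index stands in for the historical information that the early stage and the integration pipeline would share.

```python
def select_tests(changed_files: list[str],
                 test_index: dict[str, list[str]]) -> tuple[list[str], list[str]]:
    """Pick the tests mapped to each changed file.

    `test_index` is a hypothetical mapping from source path to test paths;
    a real pipeline might derive it from coverage data or past CI runs.
    Returns (tests_to_run, unmapped_files). Unmapped files have no known
    tests, so a caller might fall back to the full suite for them.
    """
    selected: set[str] = set()
    unmapped: list[str] = []
    for path in changed_files:
        tests = test_index.get(path)
        if tests:
            selected.update(tests)
        else:
            unmapped.append(path)
    return sorted(selected), unmapped


# Usage with made-up paths:
index = {
    "src/billing.py": ["tests/test_billing.py", "tests/test_invoices.py"],
    "src/auth.py": ["tests/test_auth.py"],
}
tests, unmapped = select_tests(["src/billing.py", "src/new_module.py"], index)
```

Running only `tests` while the code generator is still working on the change keeps the feedback loop short; anything listed in `unmapped` is a signal that the shared index needs updating.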

Why Attack Surface Management Breaks in OT (and What Actually Works)

Traditional Attack Surface Management (ASM) fails in Operational Technology (OT) environments because industrial infrastructure operates on fundamentally different principles than standard enterprise IT systems. Many legacy industrial protocols, such as Modbus, DNP3, and BACnet, were created decades ago without built-in encryption, session management, or authentication mechanisms. Consequently, their lack of security is an inherent property of the system design rather than a simple configuration mistake that can easily be patched. Furthermore, the active interrogation techniques standard in IT security can severely disrupt operational networks; sending aggressive probes often overwhelms the limited network stacks of Programmable Logic Controllers (PLCs), causing critical physical machinery to misbehave or shut down entirely. Because these industrial environments do not support software agents or standard diagnostic queries, establishing a reliable asset inventory is remarkably difficult. To mitigate risks effectively, security teams must reverse their usual enterprise instincts by defaulting to passive network monitoring and treating active probing as a tightly managed privilege. Utilizing passive internet search data allows analysts to map exposed external components safely without introducing disruptive traffic to live plants. Ultimately, embedding clear safety workflows and strict rate limits into automated security tools ensures that scanning efforts do not cause unintended physical operational downtime.
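
The idea of "treating active probing as a tightly managed privilege" with "strict rate limits" can be sketched as a token bucket that refuses any probe lacking explicit approval. This is a minimal illustration under stated assumptions: the class name `ProbeBudget`, the `approved` flag, and the specific rate are hypothetical and not drawn from the article or from any particular scanning tool.

```python
import time


class ProbeBudget:
    """Token bucket that gates active probes against OT devices.

    Passive monitoring is the default. An active probe is permitted only
    when it has been explicitly approved AND a token is available.
    """

    def __init__(self, rate_per_sec: float, burst: int, clock=time.monotonic):
        self.rate = rate_per_sec      # tokens refilled per second
        self.capacity = burst         # maximum tokens held at once
        self.tokens = float(burst)
        self.clock = clock            # injectable clock, useful for testing
        self.last = clock()

    def allow(self, approved: bool) -> bool:
        if not approved:
            return False              # no approval: stay passive
        now = self.clock()
        # Refill tokens for the elapsed time, capped at the burst size.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

With `ProbeBudget(rate_per_sec=0.5, burst=1)`, a scanner could send at most one approved probe every two seconds, which is the kind of conservative ceiling a fragile PLC network stack might need. The right numbers depend on the devices involved and would have to be agreed with plant operators.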

Backup and recovery architecture best practices for UK SMEs

Challenging AI Assumptions

In his Forbes article, John Werner encourages readers to reconsider common assumptions about artificial intelligence that might limit our ability to effectively navigate the future. He notes that early technology milestones, such as the IBM Watson era, conditioned the public to view machine intelligence as a centralized database focused entirely on factual recall, rapid calculation, and deterministic logic. However, as the field quickly moves toward a future centered on autonomous software agents, Werner argues that continuing to rely on these old centralized frameworks is a foundational mistake. Drawing from insights shared at a recent MIT-linked conference, he suggests that the true development of artificial intelligence will ultimately mirror biological organisms and complex economic networks rather than centralized computer hardware. Because the long-term impact of this technology on global society is frequently compared to foundational discoveries like fire or electricity, our structural approach must evolve accordingly. Instead of designing isolated, top-down systems, we should foster collaborative, decentralized, and biologically inspired ecosystems of digital agents. By shifting our perspective away from rigid central control, human society can establish cooperative frameworks that allow these increasingly autonomous systems to be integrated smoothly, sustainably, and safely into everyday life.

The Architecture Questions I Ask Before an Initiative Starts

Building a Quantum-Safe Foundation: WWT and Cisco Accelerate Post-Quantum Readiness

The Next Wow Factor: A Conversation with Sidney Lu, Chairman and CEO, Foxconn Interconnect Technology (FIT)

In this interview, Sidney Lu, the chairman and chief executive officer of Foxconn Interconnect Technology, reflects on his forty-year career and personal leadership philosophy. He oversees a large global workforce that manufactures vital electrical parts, such as connectors and cables, for common electronics like smartphones, electric vehicles, and computer servers. Lu credits his way of leading to a balance of Eastern discipline and Western workplace confidence, which he gained while studying and working in the United States. A foundational lesson from his mother taught him to take full responsibility, avoid self-pity, and quickly move past mistakes, a clear mindset he later applied to difficult engineering problems. As a leader, Lu strongly emphasizes supporting his employees by taking personal blame for business setbacks rather than shifting it downward to others. To stay relevant and avoid falling behind, he consistently challenges his team to deliver an unexpected, fresh product or advancement every three years. Under his quiet guidance, the company has expanded significantly while building long-lasting relationships with clients based on deep trust. Ultimately, Lu attributes his steady motivation to a simple, genuine enjoyment of his daily work and a constant curiosity about what comes next.

Post-quantum cryptography is not the future. It is your current reality

The article explains that post-quantum cryptography is an immediate operational necessity rather than a distant concern. Major tech companies and governments are already deploying these new algorithms because waiting for a functional quantum computer introduces severe, immediate risks to digital infrastructure. Chief among these is the "Harvest Now, Decrypt Later" strategy, where adversaries actively intercept and store encrypted network traffic today with the intention of decrypting it once advanced quantum hardware becomes available. Additionally, existing digital signatures and root certificates face future retroactive forgery, threatening the core authenticity of secure software supply chains. Successfully upgrading an enterprise is rarely an issue of funding or algorithm selection; the real challenge is an absolute lack of visibility. Modern corporate networks contain countless forgotten encryption points hidden within legacy software, cloud environments, and device firmware. To address this, organizations must establish a continuous inventory, known as a Cryptography Bill of Materials, to locate and evaluate their vulnerable assets. Once an organization maps these internal elements, it can cultivate true cryptographic agility, enabling systems to swap underlying protocols smoothly without disrupting daily operations or breaking system compatibility. Rather than delaying, companies must prioritize data based on its overall longevity and methodically adapt to finalized standards, securing their systems before the available implementation runway runs out entirely.
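
To make the inventory-then-prioritize step concrete, here is a small Python sketch of a Cryptography Bill of Materials reduced to its simplest form. The record layout (`CryptoAsset`), the function `migration_queue`, and the sample assets are hypothetical and chosen only for illustration; real CBOM formats carry far more detail. The only factual assumption is that the listed public-key algorithms are the ones generally considered breakable by a sufficiently large quantum computer running Shor's algorithm.

```python
from dataclasses import dataclass

# Public-key algorithms generally considered vulnerable to Shor's algorithm.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH", "DSA"}


@dataclass
class CryptoAsset:
    name: str                 # hypothetical asset label
    algorithm: str            # algorithm found during inventory
    data_lifetime_years: int  # how long the protected data must stay secret


def migration_queue(assets: list[CryptoAsset]) -> list[CryptoAsset]:
    """Return quantum-vulnerable assets, longest-lived data first.

    Long-lived data is most exposed to "Harvest Now, Decrypt Later",
    so it goes to the front of the queue.
    """
    vulnerable = [a for a in assets
                  if a.algorithm.upper() in QUANTUM_VULNERABLE]
    return sorted(vulnerable,
                  key=lambda a: a.data_lifetime_years, reverse=True)


# Usage with made-up inventory entries:
inventory = [
    CryptoAsset("vpn-gateway", "ECDH", 10),
    CryptoAsset("backup-vault", "AES-256", 25),
    CryptoAsset("hr-archive", "RSA", 30),
    CryptoAsset("code-signing", "ecdsa", 5),
]
queue = [a.name for a in migration_queue(inventory)]
```

Sorting purely by data lifetime is a deliberate simplification. An actual prioritization would likely also weigh exposure, migration effort, and how soon a replacement standard is available for each protocol.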

