Quote for the day:

"Few things can help an individual more than to place responsibility on him, and to let him know that you trust him." -- Booker T. Washington

Identity security risks are skyrocketing, and enterprises can’t keep up

According to recent studies from Sophos and Palo Alto Networks, identity security has become the primary attack surface in modern cybersecurity, and many enterprises are struggling to keep pace. The research indicates that 71% of organizations suffered at least one identity-related breach in 2025, and that victims experienced an average of three separate incidents. These breaches often have severe consequences, including data theft, ransomware, and financial loss; the mean cost of recovering from a ransomware attack reached $1.64 million. A major driver of this escalating risk is the explosion of non-human identities: machine identities and AI agents now outnumber human users by a ratio of 100 to one. Despite the mounting threats, enterprises face significant visibility challenges. Only a quarter of organizations continuously monitor for unusual login attempts, and many rely on fragmented security tools that create dangerous blind spots. Businesses that find compliance difficult are also disproportionately targeted and suffer breaches at higher rates. To address these vulnerabilities, experts argue that security leaders must move beyond manual processes and adopt end-to-end automation combined with unified governance. Failing to secure these rapidly proliferating AI-driven identities could open increasingly costly gaps that traditional security controls are ill-equipped to close, which makes robust identity management more critical than ever.

The Dashboard Delusion: Why Data-Rich Organizations Still Struggle to Make Decisions

The article "The Dashboard Delusion" explores why modern organizations, despite having access to unprecedented amounts of data, frequently struggle to make effective business decisions. It argues that many companies fall into the trap of believing that sleek, colorful dashboards equate to actionable insight, a phenomenon it calls the "dashboard delusion." While these visual tools excel at presenting historical data and backward-looking metrics, they often fail to provide the context needed to understand current drivers or anticipate future outcomes. The core problem is the disconnect between data visualization and actual decision-making: the "last mile" of the data journey. Dashboards frequently overwhelm users with "vanity metrics" and noise, obscuring the signal needed for strategic pivots. To overcome this, the article recommends shifting from a narrow focus on data visualization to "Decision Intelligence," which prioritizes the "why" behind the numbers. This requires a cultural shift in which data is used not just to report what happened but to model potential scenarios and guide specific actions. Ultimately, the piece emphasizes that technology alone cannot bridge the gap; organizations must foster a data culture that values contextual understanding and aligns analytical outputs with concrete business objectives to turn information into genuine competitive advantage.

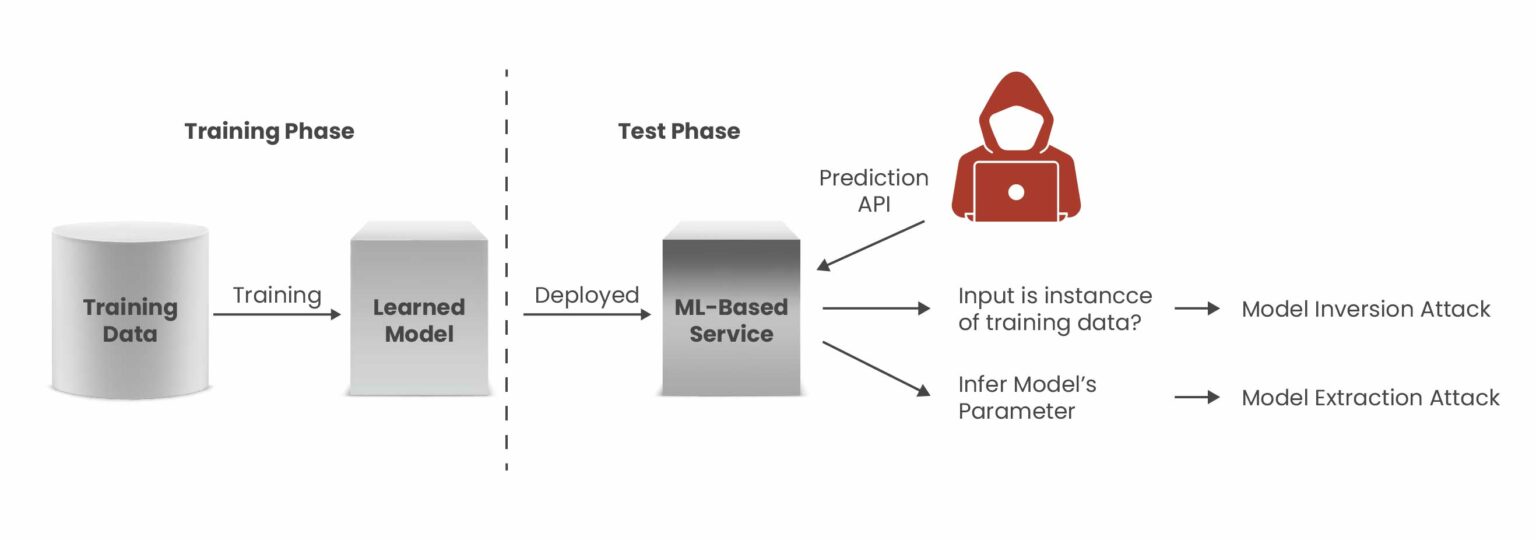

The Critical Cyber Skills Every Security Team Still Needs

In the Forbes Technology Council article, industry experts outline the cybersecurity skills organizations must preserve as technology roles evolve and specialize. A primary focus is bridging the gap between technical findings and business objectives. Security professionals must be able to translate complex risks into tangible business impacts, such as revenue protection and regulatory compliance, so that stakeholders prioritize the necessary investments. The council also stresses the importance of foundational technical knowledge, specifically core networking fundamentals and institutional knowledge of specific systems. As automated tools increasingly abstract away daily tasks, teams must still understand the underlying protocols and know where data resides so they can manage incidents when dashboards fail. Beyond technical prowess, a human-centered approach remains vital: practitioners should view security through the eyes of non-technical employees to reduce human error and foster a culture of collective responsibility. The contributors also highlight the need for “security invariants” (clear, plain-language rules defining what a system must never allow) and a culture of healthy skepticism that routinely questions aging configurations. By combining these soft skills with a deep understanding of architecture, security teams can move beyond tool-based detection toward holistic remediation and resilience. This blend of business acumen, fundamental expertise, and an understanding of human psychology ensures that cybersecurity remains an agile, business-aligned function rather than a siloed technical burden.
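
The notion of “security invariants” lends itself to a small illustration. The Python sketch that follows is not from the article: it assumes a hypothetical `Config` snapshot and three example rules, and pairs each plain-language statement with a predicate so that an aging configuration can be checked against what the system must never allow.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Config:
    """Hypothetical snapshot of a system's configuration (fields are illustrative)."""
    public_buckets: list[str] = field(default_factory=list)
    admin_mfa_enabled: bool = True
    open_ports: set[int] = field(default_factory=set)


@dataclass(frozen=True)
class Invariant:
    statement: str                   # plain-language rule: what the system must never allow
    holds: Callable[[Config], bool]  # returns True when the config respects the rule


# Example invariants only; a real list would come from the team's own architecture.
INVARIANTS = [
    Invariant("No storage bucket may be publicly readable.",
              lambda c: not c.public_buckets),
    Invariant("Admin accounts must never sign in without MFA.",
              lambda c: c.admin_mfa_enabled),
    Invariant("Remote Desktop (TCP 3389) must never be exposed.",
              lambda c: 3389 not in c.open_ports),
]


def violations(config: Config) -> list[str]:
    """Return the statement of every invariant the configuration breaks."""
    return [inv.statement for inv in INVARIANTS if not inv.holds(config)]


if __name__ == "__main__":
    # An "aging" configuration that has drifted away from the rules.
    legacy = Config(public_buckets=["old-reports"], open_ports={22, 3389})
    for v in violations(legacy):
        print("VIOLATION:", v)
```

Keeping the human-readable statement next to its check is one way to let non-specialists review the rules. In practice the predicates would read from real configuration sources rather than a hand-built object.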

Building bankable, resilient data centers: From site to operation

The article "Building Bankable, Resilient Data Centers: From Site to Operation" emphasizes that long-term project viability in the digital infrastructure sector requires a comprehensive, lifecycle-focused approach to risk management. Creating a facility that is both "bankable" and "resilient" begins with strategic site selection, which shapes the project's trajectory with respect to access to power, regulatory hurdles, and physical exposure to natural catastrophes. Early risk engineering and stakeholder alignment are critical for securing the massive capital that modern data centers require, especially as asset values soar. The industry currently faces several significant constraints, including extreme power dependency driven by the AI boom, unprecedented speed-to-market demands, and severe supply chain bottlenecks for critical equipment such as transformers and generators. Moreover, the concentrated value of these mega-scale campuses often exceeds traditional insurance limits, which calls for more sophisticated risk modeling and innovative coverage structures. These specialized programs must bridge the dangerous "gray zones" that often emerge during the complex transition from phased construction to full-scale operations. By integrating meticulous risk planning from the initial feasibility stage through daily operations, developers can navigate sustainability mandates and persistent grid constraints. This proactive alignment ensures that data centers remain not only insurable but also capable of delivering the continuous uptime the global digital economy requires.

Outage Report: AI Boom Threatens Years of Data Center Resiliency Gains

The "2026 Data Center Outage Analysis" from Uptime Institute highlights a critical juncture for industry resilience: although overall outage rates have declined for five consecutive years, the rapid proliferation of artificial intelligence (AI) threatens to reverse these gains. Power-related failures involving UPS systems and generators remain the leading cause of downtime, and one in five incidents now costs more than $1 million. However, the report warns that AI-specific facilities introduce unprecedented risks because of their massive scale and extreme energy intensity. Their high-density workloads create "spiky" power demands that can strain regional grids and damage on-site infrastructure. To meet these demands, operators are increasingly turning to behind-the-meter power sources, such as gas turbines and large-scale battery arrays, which bring a new class of operational complexity. The adoption of nascent technologies like liquid cooling and higher-voltage distribution adds further variables to the reliability equation. And as AI training sites prioritize scale over traditional redundancy to manage costs, the systemic likelihood of failure appears to be rising. The industry must therefore balance the relentless demand for AI capacity against the foundational need for stable, resilient infrastructure to prevent a significant resurgence of severe and costly service disruptions.

Why resilience matters as much as innovation in NBFCs

In an interview with Express Computer, Mathew Panat, CTO of HDB Financial Services, argues that while innovation through AI, cloud computing, and analytics is essential for Non-Banking Financial Companies (NBFCs), operational resilience and governance are equally vital for long-term sustainability. Panat explains that a robust digital infrastructure, including cloud-based data lakes and advanced cybersecurity, is the necessary foundation for scaling diverse lending portfolios. Unlike fintech startups, which often prioritize speed to market, regulated NBFCs must balance technological agility with security and strict regulatory compliance. HDB’s strategy deploys AI across multiple areas, such as collections, sales, and multilingual customer onboarding, while taking a cautious approach to credit decisioning. By favoring AI-assisted rather than fully autonomous underwriting, the organization preserves explainability and accountability within a complex regulatory landscape. Centralized data intelligence also enables proactive risk management through early-warning systems that track borrower behavior. In addition, the company takes part in ideathons with startups to challenge institutional inertia and explore unconventional ideas. Looking ahead, the focus is on predictability and scalability through edge computing and privacy-first practices such as compliance with India's Digital Personal Data Protection (DPDP) Act. Ultimately, combining cutting-edge technology with institutional resilience allows NBFCs to offer a seamless, secure customer experience while navigating an evolving financial ecosystem.

Using continuous purple teaming to protect fast-paced enterprise environments

Modern enterprise environments are changing rapidly through cloud adoption and automated delivery pipelines, which makes traditional periodic security testing insufficient. To close this gap, continuous purple teaming has emerged as a strategy that integrates offensive and defensive operations into a single, ongoing workflow. By using real-time threat intelligence mapped to the MITRE ATT&CK framework, organizations can move from generic simulations to validating their defenses against the specific adversaries they face today. The model operationalizes security validation through both atomic tests of individual techniques and chain-based simulations of full attack paths, so that detection and response capabilities are exercised across the entire kill chain. Central to this approach are automated infrastructure and dedicated cyber ranges that mirror production environments, allowing teams to refine logging strategies and response playbooks safely without disrupting operations. Continuous purple teaming also prepares enterprises for the next generation of AI-enabled threats by enabling controlled experimentation with emerging attack vectors. Ultimately, this collaborative methodology fosters shared knowledge between red and blue teams, turning security from a series of isolated assessments into a dynamic, measurable part of daily operations that sustains resilience in a constantly shifting digital landscape.
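
To make the atomic versus chain-based distinction concrete, here is a minimal Python sketch. It assumes each purple-team run is recorded as an ATT&CK technique ID plus a detected/not-detected flag. The `RunResult` record, the 100% default threshold, and the sample results are illustrative assumptions, not anything the article prescribes. The technique IDs are real ATT&CK sub-techniques (PowerShell, LSASS memory credential dumping, Remote Desktop Protocol).

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RunResult:
    technique_id: str  # MITRE ATT&CK technique ID, e.g. "T1059.001"
    detected: bool     # did the defenders' tooling raise an alert for this run?


def coverage(results: list[RunResult]) -> dict[str, float]:
    """Atomic view: fraction of test runs detected, per technique."""
    runs: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for r in results:
        runs[r.technique_id] += 1
        hits[r.technique_id] += int(r.detected)
    return {t: hits[t] / runs[t] for t in sorted(runs)}


def chain_gaps(chain: list[str], cov: dict[str, float],
               threshold: float = 1.0) -> list[str]:
    """Chain view: steps of an attack path whose detection rate is below threshold.

    A technique that was never tested counts as 0.0, i.e. always a gap.
    """
    return [t for t in chain if cov.get(t, 0.0) < threshold]


if __name__ == "__main__":
    results = [
        RunResult("T1059.001", True),   # PowerShell execution
        RunResult("T1059.001", True),
        RunResult("T1003.001", False),  # LSASS memory credential dumping
        RunResult("T1003.001", True),
    ]
    cov = coverage(results)
    print(cov)  # {'T1003.001': 0.5, 'T1059.001': 1.0}
    # Hypothetical path: execution -> credential access -> lateral movement over RDP
    print(chain_gaps(["T1059.001", "T1003.001", "T1021.001"], cov))
    # ['T1003.001', 'T1021.001']
```

Treating an untested technique as zero coverage is a deliberately conservative choice. A chain step that nobody has exercised is reported as a gap instead of being silently assumed safe.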

Water and Cybersecurity: Digital Threats to Our Most Critical Resource

In the article "Water and Cybersecurity: Digital Threats to Our Most Critical Resource," Peter Fletcher examines the growing digital vulnerabilities facing the global water supply, a resource fundamental to human survival. Unlike other critical sectors such as telecommunications or energy, water carries a unique risk profile because it is directly ingested, which makes protecting it an existential necessity. The author points to recent EPA advisories about cyberattacks by state-sponsored actors, such as those affiliated with the Iranian government, who have already targeted and disrupted domestic process control systems. A significant challenge is the technological disparity across the sector: while large utilities in regions like Silicon Valley maintain robust defenses, countless smaller, under-resourced facilities remain dangerously exposed. Fletcher also notes that current security frameworks are often too generic, leaving many providers without prescriptive guidance for their specific operational technology. To address these gaps, the piece champions collective action through initiatives like Project Franklin, which pairs volunteer ethical hackers with rural utilities to shore up their defenses. Ultimately, the article argues that the water community must move beyond isolated security postures toward a culture of radical transparency and shared expertise to safeguard this most vital resource against increasingly sophisticated global adversaries.

AI Drives Cybersecurity Investments, Widening 'Valley of Death'

The cybersecurity industry is undergoing a radical transformation driven by a massive influx of capital into artificial intelligence, according to recent reporting from Dark Reading. In the first quarter of 2026, financing volume for AI-native startups reached $3.8 billion, surpassing M&A activity for only the fourth time in history. While this investment surge signals robust industry growth and job creation, it has also widened the "valley of death" for traditional security firms struggling to pivot. This perilous phase, in which companies have exhausted their initial funding but lack sustainable revenue, is becoming harder to survive as investors favor cutting-edge AI technologies over legacy solutions. Experts note that advanced frontier models, such as Anthropic’s Mythos, are disrupting established segments like vulnerability management and rendering some existing vendors virtually obsolete. This shift is accelerating a "Darwinian" consolidation wave in which an overcrowded market of overlapping players will eventually be winnowed down. As major acquisitions become the primary exit strategy for successful AI startups, the average enterprise will likely consolidate its security stack from dozens of disparate tools to a few integrated, AI-driven platforms. While AI acts as "gasoline on a bonfire" for innovation, it also demands that organizations adapt rapidly or risk irrelevance in an increasingly AI-centric landscape.

How AI Hallucinations Are Creating Real Security Risks

The article "How AI Hallucinations Are Creating Real Security Risks," published by The Hacker News in May 2026, explores the growing dangers posed by generative AI in critical infrastructure and cybersecurity operations. As AI models increasingly assist in complex decision-making, their inherent tendency to produce "hallucinations" (plausible-sounding but factually incorrect outputs) presents a unique and systemic vulnerability. These errors occur because large language models lack internal mechanisms for factual verification; they generate statistically likely text based on patterns in their training data. As a result, models may confidently present fabricated data or non-existent research as authoritative. The security implications take three main forms: missed threats, where genuine anomalies are overlooked; fabricated threats, which lead to operational "alert fatigue"; and incorrect remediation advice that could inadvertently weaken critical system defenses. The article stresses that hallucinations become real-world risks mainly when AI systems have excessive autonomous access or when human operators skip rigorous manual verification. To mitigate these threats, the piece advocates a strict "human-in-the-loop" approach, comprehensive data governance to avoid "model collapse" caused by training on recycled synthetic data, and least-privilege access for all AI agents. Ultimately, treating AI outputs as potential vulnerabilities is essential for maintaining robust organizational security.
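
The least-privilege and human-in-the-loop recommendations can be sketched as a gate between what a model proposes and what actually executes. This minimal Python example is one possible policy shape, not the article's design. The agent names, tool names, and approval flag are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    agent: str   # identity of the AI agent proposing the action (hypothetical)
    tool: str    # tool the model wants to call, e.g. "read_logs" or "block_ip"
    target: str  # what the action would touch


# Least privilege: each agent gets an explicit allowlist of tools (illustrative names).
AGENT_PERMISSIONS: dict[str, frozenset[str]] = {
    "triage-bot": frozenset({"read_logs", "open_ticket"}),
    "response-bot": frozenset({"read_logs", "block_ip"}),
}

# Tools that change system state always need a human to confirm them.
REQUIRES_HUMAN_APPROVAL = frozenset({"block_ip"})


def authorize(action: ProposedAction, human_approved: bool = False) -> str:
    """Return 'allow', 'needs_approval', or 'deny' for a model-proposed action."""
    allowed = AGENT_PERMISSIONS.get(action.agent, frozenset())
    if action.tool not in allowed:
        return "deny"            # outside the agent's privileges, whatever the model claims
    if action.tool in REQUIRES_HUMAN_APPROVAL and not human_approved:
        return "needs_approval"  # human-in-the-loop checkpoint
    return "allow"


if __name__ == "__main__":
    proposal = ProposedAction("response-bot", "block_ip", "10.0.0.5")
    print(authorize(proposal))                       # needs_approval
    print(authorize(proposal, human_approved=True))  # allow
```

Because the check runs outside the model, a hallucinated justification cannot widen an agent's privileges. An out-of-scope tool is denied regardless of how confident the model's output sounds.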
