Quote for the day:

"Leadership seems mystical. It's actually methodical. The method is learnable and repeatable — and when followed, produces results that feel magical." -- Gordon Tredgold

🎧 Listen to this digest on YouTube Music

▶ Play Audio Digest

Duration: 21 mins • Perfect for listening on the go.

The ghost in the machine: Why AI ROI dies at the human finish line

In "The Ghost in the Machine," Andrew Hallinson argues that the primary barrier to achieving a return on investment for artificial intelligence is not technical inadequacy but human psychological resistance. Despite multimillion-dollar investments in advanced data stacks, many organizations suffer from what Hallinson terms an "aversion tax": the significant loss of potential value caused by low adoption rates and human friction. This resistance stems from three psychological barriers: the "black box paradox," where lack of transparency breeds distrust; "identity threat," where employees feel the technology undermines their professional intuition and autonomy; and the "perfection trap," which involves holding algorithms to much higher standards than human peers. Hallinson illustrates a solution through his experience at ADP, where success was achieved by shifting the focus from restrictive data governance to empowering data democratization. By treating employees as strategic partners and behavioral architects rather than just data processors, leaders can overcome these hurdles. Ultimately, the article posits that technical excellence is wasted if cultural integration is ignored. For executives, the mandate is clear: building an AI-ready culture is just as critical as the engineering itself, as ignoring the human element transforms expensive AI tools into mere "shelfware" that fails to deliver on its mathematical promise.

AI Finds Code Vulnerabilities – Fixing Them Is the Real Challenge

The article "AI Finds Code Vulnerabilities – Fixing Them is the Real Challenge," published on DevOps Digest, explores the double-edged sword of utilizing artificial intelligence in software security. While AI-driven tools have revolutionized the ability to scan vast codebases and identify potential security flaws with unprecedented speed, the author argues that the industry's bottleneck has shifted from detection to remediation. Automated scanners often generate an overwhelming volume of alerts, many of which are false positives or lack the necessary context for immediate action. This "security debt" places a significant burden on development teams who must manually verify and patch each issue. Furthermore, the piece highlights that while AI can identify a problem, it often struggles to understand the complex business logic required to fix it without breaking existing functionality. The real challenge lies in integrating AI into the developer's workflow in a way that provides actionable, verified suggestions rather than just a list of problems. The article concludes that for AI to truly enhance cybersecurity, organizations must focus on automating the "fix" phase through sophisticated generative AI and better developer-security collaboration, ensuring that the speed of remediation finally matches the efficiency of automated detection.

Data Replication Strategies: Enterprise Resilience Guide

The article "Data Replication Strategies: Enterprise Resilience Guide" from Scality explores the critical methodologies for ensuring data durability and availability across physical systems. At its core, the guide highlights the fundamental tradeoff between consistency and availability, a tension that dictates how organizations architect their storage infrastructure. Synchronous replication is presented as the gold standard for zero-data-loss scenarios (RPO of zero) because it requires all replicas to acknowledge a write before completion; however, this introduces significant write latency. Conversely, asynchronous replication optimizes for performance and long-distance fault tolerance by propagating changes in the background, which decouples write speed from network latency but risks losing data not yet synchronized. Beyond timing, the content details architectural models like active-passive, where one primary site handles writes, and active-active, where multiple sites simultaneously serve traffic. The article also addresses consistency models such as strong, causal, and session consistency, emphasizing that the choice depends on specific application requirements. By aligning replication strategies with Recovery Time Objectives (RTO) and Recovery Point Objectives (RPO), the guide argues that organizations can build a resilient infrastructure capable of surviving data center failures while balancing cost, bandwidth, and performance.
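
To make the synchronous versus asynchronous tradeoff concrete, here is a minimal in-memory sketch. It is not taken from the Scality guide: the `Replica` and `Primary` classes and their methods are hypothetical names invented for illustration, and a real system would replicate over a network rather than through direct method calls.

```python
from collections import deque


class Replica:
    """Hypothetical stand-in for a remote copy of the data."""

    def __init__(self):
        self.data = {}

    def apply(self, key, value):
        self.data[key] = value


class Primary:
    """Hypothetical primary site that replicates writes to its replicas."""

    def __init__(self, replicas, synchronous):
        self.data = {}
        self.replicas = replicas
        self.synchronous = synchronous
        self.backlog = deque()  # writes accepted locally but not yet replicated

    def write(self, key, value):
        self.data[key] = value
        if self.synchronous:
            # Synchronous: every replica applies the write before we acknowledge,
            # so the caller pays the replication latency on each write.
            for replica in self.replicas:
                replica.apply(key, value)
        else:
            # Asynchronous: acknowledge immediately and replicate later.
            self.backlog.append((key, value))
        return "ack"

    def pending(self):
        """Number of writes that would be lost if the primary failed now."""
        return len(self.backlog)

    def flush(self):
        """Background propagation step for asynchronous mode."""
        while self.backlog:
            key, value = self.backlog.popleft()
            for replica in self.replicas:
                replica.apply(key, value)


sync_replica = Replica()
sync_primary = Primary([sync_replica], synchronous=True)
sync_primary.write("order-1", "paid")

async_replica = Replica()
async_primary = Primary([async_replica], synchronous=False)
async_primary.write("order-1", "paid")
at_risk = async_primary.pending()
lagging = "order-1" not in async_replica.data
async_primary.flush()
```

In this sketch, `at_risk` counts the acknowledged writes that exist only on the primary, which is the exposure an RPO target is meant to bound. The synchronous primary never has such a backlog, at the cost of waiting on every replica.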

When Should a DevOps Agent Act Without Human Approval?

The article titled "When Should a DevOps Agent Act Without Human Approval?" by Bala Priya C. outlines a comprehensive framework for navigating the transition from manual oversight to autonomous operations in DevOps. Central to this transition is a six-level autonomy spectrum, ranging from basic observation at Level 0 to full autonomy at Level 5. The author highlights that determining the appropriate level of independence for an agent depends on four critical factors: the reversibility of the action, the potential blast radius, the quality of incoming signals, and time sensitivity. For most organizations, the author suggests maintaining agents within Levels 1 through 3, where humans remain primary decision-makers or provide explicit approval for suggested actions. Level 4, which involves agents executing tasks and then notifying humans with a defined override window, should be reserved for narrowly defined, low-risk activities. Full Level 5 autonomy is only recommended after an agent has established a consistent, documented track record of success at lower levels. To manage these shifts safely, the article emphasizes the necessity of robust guardrails, including progressive rollouts, granular approval gates, and high signal-quality thresholds. This structured approach ensures that automation enhances operational efficiency without compromising the security or stability of the production environment, ultimately allowing engineers to focus on higher-value strategic and development work.
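
One way to picture how the four factors could cap an agent's autonomy is the sketch below. The thresholds, field names, and the exact mapping to levels are assumptions made for illustration, not rules from the article; only the factors themselves and the broad meaning of Levels 0, 3, 4, and 5 follow the summary above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """Hypothetical description of an action an agent wants to take."""

    reversible: bool
    blast_radius: int          # assumed unit: number of services affected
    signal_confidence: float   # assumed scale: 0.0 (noise) to 1.0 (certain)
    time_sensitive: bool
    proven_track_record: bool = False


def max_autonomy_level(action: Action) -> int:
    """Return the highest autonomy level this sketch would allow.

    0 = observe only, 3 = act only with explicit human approval,
    4 = act, then notify with an override window, 5 = full autonomy.
    All cut-off values are illustrative assumptions.
    """
    if action.signal_confidence < 0.5:
        return 0  # signals too weak to recommend anything
    low_risk = action.reversible and action.blast_radius <= 1
    if not low_risk or action.signal_confidence < 0.9:
        return 3  # a human must approve
    if action.proven_track_record:
        return 5  # only after documented success at lower levels
    # Acting first is justified only when waiting for approval has a cost.
    return 4 if action.time_sensitive else 3


restart_pod = Action(reversible=True, blast_radius=1,
                     signal_confidence=0.95, time_sensitive=True)
drop_table = Action(reversible=False, blast_radius=1,
                    signal_confidence=0.95, time_sensitive=True)
noisy_alert = Action(reversible=True, blast_radius=1,
                     signal_confidence=0.3, time_sensitive=True)

levels = [max_autonomy_level(a) for a in (restart_pod, drop_table, noisy_alert)]
```

The design choice here is that any single unfavorable factor pulls the ceiling down, so an irreversible action stays behind an approval gate no matter how confident the signal is.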

8 guiding principles for reskilling the SOC for agentic AI

The article "8 guiding principles for reskilling the SOC for agentic AI" outlines a strategic roadmap for Security Operations Centers (SOCs) transitioning toward an AI-driven future. The first principle, embracing the agentic imperative, highlights that moving at "machine speed" is essential to counter advanced adversaries effectively. Leadership plays a critical role by setting a tone of rapid experimentation and "failing fast" to foster internal innovation. While cultural resistance, particularly fear of job displacement, is common, the article suggests addressing this by redefining roles around high-value tasks such as AI safety and governance. Hands-on training in secure sandboxes is vital for building practitioner confidence and "model intuition," allowing analysts to recognize when AI outputs are structurally flawed. Crucially, the "human-in-the-loop" principle ensures that non-deterministic AI remains under human oversight through clear escalation paths and audit trails. Beyond technology, the shift requires rethinking organizational structures to move from siloed disciplines to holistic, outcome-based orchestration. Ultimately, fostering collaboration between humans and machines allows analysts to relocate from "inside the process" to a supervisory position above it. By reimagining the operating model, CISOs can transform chaotic environments into calm, efficient hubs where agentic AI handles automated triage while humans provide strategic judgment and long-term accountability.

New DORA Report Claims Strong Engineering Foundations Drive AI RoI

The May 2026 InfoQ article summarizes Google Cloud's DORA report, "ROI of AI-Assisted Software Development," which offers a structured framework for calculating financial returns from AI adoption. The research argues that AI acts primarily as an amplifier; rather than repairing flawed processes, it magnifies existing organizational strengths and weaknesses. Consequently, achieving sustainable ROI necessitates robust engineering foundations, including quality internal platforms, disciplined version control, and clear workflows. A central concept introduced is the "J-Curve of value realization," where organizations typically face a temporary productivity dip due to the "tuition cost of transformation," which covers learning curves, verification taxes for AI-generated code, and essential process adaptations. Despite this initial drop, the report models a substantial first-year ROI of 39% for a typical 500-person organization, with a payback period of approximately eight months. However, leaders are cautioned against an "instability tax," as increased delivery speed may overwhelm manual review gates and elevate failure rates if not balanced with automated testing and continuous integration. Looking ahead, the research predicts compounding gains in years two and three, potentially reaching a 727% return as teams transition toward autonomous agentic workflows. Ultimately, the report emphasizes that AI’s true value lies in clearing systemic bottlenecks and unlocking latent human creativity, rather than pursuing simple headcount reduction.
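
For readers who want to see how an ROI figure and a payback period fall out of a J-curve, the sketch below uses the standard definitions: ROI as net gain divided by cost, and payback as the first month in which cumulative net cash flow turns non-negative. The monthly cost and benefit figures are invented for illustration and are not taken from the DORA report, so the results do not reproduce its 39% or eight-month estimates.

```python
from itertools import accumulate
from typing import Optional, Sequence


def roi(total_benefit: float, total_cost: float) -> float:
    """Return on investment as a fraction: net gain divided by cost."""
    return (total_benefit - total_cost) / total_cost


def payback_month(monthly_net: Sequence[float]) -> Optional[int]:
    """First month (1-based) where cumulative net flow is non-negative."""
    for month, cumulative in enumerate(accumulate(monthly_net), start=1):
        if cumulative >= 0:
            return month
    return None  # investment not recovered within the period


# Hypothetical figures in arbitrary currency units. Costs are front-loaded
# (licences, training, review overhead); benefits ramp up as teams adapt,
# which produces the early dip of a J-curve.
costs = [100, 40, 30, 20, 20, 20, 20, 20, 20, 20, 20, 20]
benefits = [0, 10, 20, 40, 60, 70, 80, 80, 80, 80, 80, 80]

monthly_net = [b - c for b, c in zip(benefits, costs)]
cumulative = list(accumulate(monthly_net))

first_year_roi = roi(sum(benefits), sum(costs))
months_to_payback = payback_month(monthly_net)
deepest_dip = min(cumulative)
```

With these made-up inputs the cumulative position bottoms out in month three before recovering, which is the shape the "tuition cost of transformation" describes.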

Compliance Without Chaos In Modern Delivery

The article "Compliance Without Chaos In Modern Delivery" emphasizes transforming compliance from a disruptive, quarterly hurdle into a seamless, integrated component of the software delivery lifecycle. Rather than treating audits as high-stakes oral exams, the author advocates for building automated controls directly into existing engineering workflows. This "Policy as Code" approach effectively eliminates the ambiguity of "folklore" policies by enforcing rules through CI/CD gates, such as mandatory pull request reviews, automated testing, and artifact traceability. To maintain a state of continuous readiness, teams should implement automated evidence collection, ensuring that audit trails for changes, access, and security checks are generated as a natural byproduct of daily development work. The piece also highlights the importance of robust access management, favoring short-lived privileges and group-based permissions over static, high-risk credentials. Furthermore, continuous monitoring is described as essential for identifying silent failures in critical areas like encryption, log retention, and vulnerability status before they escalate into major incidents. By maintaining an updated evidence map and an "audit-ready pack" year-round, organizations can achieve a "boring" compliance posture. Ultimately, the goal is to shift from reactive manual efforts to a disciplined, automated machine that consistently proves security and regulatory adherence without sacrificing delivery speed or engineering focus.
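
A "Policy as Code" gate that also produces its own audit evidence might look roughly like the following. This is a sketch under assumptions: the `Change` fields, the three checks, and the evidence layout are hypothetical and do not correspond to any particular CI/CD product or to code from the article.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Change:
    """Hypothetical metadata a pipeline might hold about a change."""

    commit: str
    approvals: int         # number of approving pull request reviews
    tests_passed: bool
    artifact_digest: str   # empty string means the build is not traceable


def evaluate(change: Change) -> dict:
    """Apply the policy and return a record that doubles as audit evidence."""
    checks = {
        "pull_request_reviewed": change.approvals >= 1,
        "automated_tests_passed": change.tests_passed,
        "artifact_traceable": bool(change.artifact_digest),
    }
    return {
        "change": asdict(change),
        "checks": checks,
        "allowed": all(checks.values()),
    }


reviewed = evaluate(Change("abc123", approvals=1, tests_passed=True,
                           artifact_digest="sha256:0f3a"))
unreviewed = evaluate(Change("def456", approvals=0, tests_passed=True,
                             artifact_digest="sha256:9c1e"))

# Storing this JSON on every run is what makes the audit trail a byproduct
# of normal delivery rather than a separate collection exercise.
evidence_json = json.dumps(unreviewed, indent=2, sort_keys=True)
print(evidence_json)
```

Because the rule lives in one function, the policy is no longer "folklore": a reviewer can read exactly what blocks a release, and the same function call yields the evidence an auditor would ask for.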

Ask a Data Ethicist: What Are the Legal and Ethical Issues in Summarizing Text with an AI Tool?

The use of AI tools for text summarization introduces significant legal and ethical challenges that organizations must navigate carefully. Legally, the primary concern revolves around copyright infringement, as these tools are often trained on large datasets containing proprietary data without explicit consent, potentially leading to complex intellectual property disputes. Furthermore, privacy risks emerge when users input sensitive or personally identifiable information into external AI systems, potentially violating strict regulations like the GDPR or CCPA. From an ethical standpoint, the article highlights the danger of algorithmic bias, where AI might inadvertently emphasize or distort certain viewpoints based on inherent flaws in its training data. Hallucinations represent another critical ethical risk, as AI can generate plausible-looking but factually incorrect summaries, leading to the spread of misinformation. To mitigate these systemic issues, the author emphasizes the importance of implementing robust data governance frameworks and maintaining a consistent "human-in-the-loop" approach. This ensures that summaries are rigorously reviewed for accuracy and fairness before being utilized in professional decision-making processes. Transparency regarding the use of automated tools is also paramount to maintaining public and stakeholder trust. Ultimately, while AI summarization offers immense efficiency, its deployment requires a balanced strategy that prioritizes legal compliance and ethical integrity.

UK chief executives make AI priority but delay plans

A recent report from Dataiku, based on a Harris Poll survey of 900 global chief executives, indicates that UK leaders are positioning artificial intelligence as a paramount corporate priority while simultaneously exercising significant caution in its implementation. The study, which focused on organizations with annual revenues exceeding $500 million, revealed that 81% of UK CEOs rank AI strategy as a top or high priority, a figure that notably surpasses the global average of 73%. However, this high level of ambition is tempered by a growing fear of financial waste; 77% of British respondents expressed greater concern about over-investing in the technology than under-investing, compared to 65% of their international peers. This fiscal wariness has led to tangible delays in project rollouts across the country. Specifically, 51% of UK executives admitted to postponing AI initiatives due to regulatory uncertainty, a sharp increase from 26% just one year prior. As questions regarding return on investment and governance persist, a widening gap has emerged between boardroom aspirations and practical execution. UK leaders are increasingly weighing their expenditures more carefully, shifting from rapid adoption toward a more calculated approach that prioritizes oversight and navigates the evolving legislative landscape to avoid costly mistakes.

A recent report from Dataiku, based on a Harris Poll survey of nine hundred

global chief executives, indicates that UK leaders are positioning artificial

intelligence as a paramount corporate priority while simultaneously exercising

significant caution in its implementation. The study, which focused on

organizations with annual revenues exceeding five hundred million dollars,

revealed that eighty-one percent of UK CEOs rank AI strategy as a top or high

priority, a figure that notably surpasses the global average of seventy-three

percent. However, this high level of ambition is tempered by a growing fear of

financial waste; seventy-seven percent of British respondents expressed

greater concern about over-investing in the technology than under-investing,

compared to sixty-five percent of their international peers. This fiscal

wariness has led to tangible delays in project rollouts across the country.

Specifically, fifty-one percent of UK executives admitted to postponing AI

initiatives due to regulatory uncertainty, a sharp increase from twenty-six

percent just one year prior. As questions regarding return on investment and

governance persist, a widening gap has emerged between boardroom aspirations

and practical execution. UK leaders are increasingly weighing their

expenditures more carefully, shifting from rapid adoption toward a more

calculated approach that prioritizes oversight and navigates the evolving

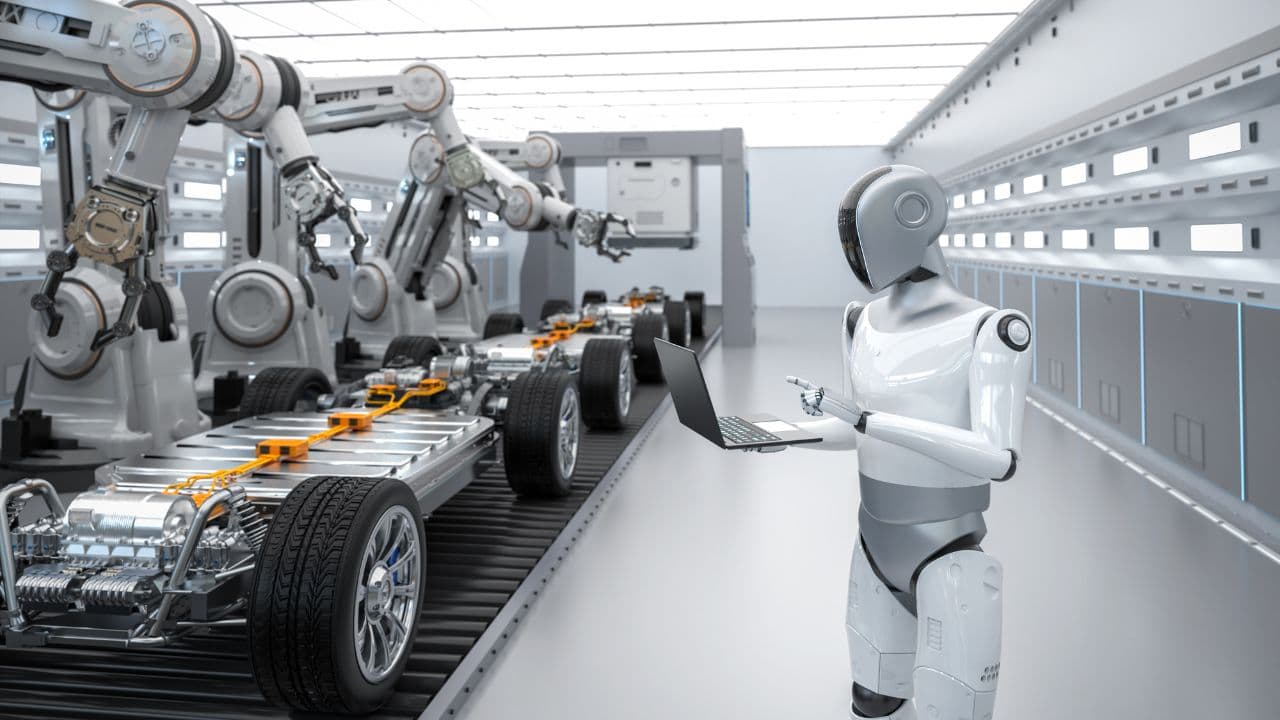

legislative landscape to avoid costly mistakes.Open Innovation and AI will define the next generation of manufacturing: Annika Olme, CTO, SKF

Annika Olme, the CTO of SKF, emphasizes that the future of manufacturing lies at the intersection of open innovation and advanced technology like Artificial Intelligence. She highlights how SKF is transitioning from being a traditional bearing manufacturer to a digital-first, data-driven leader. By fostering a culture of deep collaboration with startups, academia, and technology partners, the company accelerates the development of smart solutions that optimize industrial processes globally. AI and machine learning are central to this evolution, particularly in predictive maintenance, which allows customers to anticipate failures and reduce downtime significantly. Olme also underscores the critical role of sustainability, noting that digital transformation is intrinsically linked to circularity and energy efficiency. By leveraging sensors and real-time data analysis, SKF helps various industries minimize waste and lower their carbon footprint. The “Smart Factory” vision involves integrating these technologies into every stage of the product lifecycle, from design to end-of-use recycling. Ultimately, the goal is to create a seamless synergy between human ingenuity and machine intelligence, ensuring that manufacturing remains both competitive and environmentally responsible. This holistic approach to innovation not only boosts productivity but also redefines how global industrial leaders address modern challenges like climate change, resource scarcity, and supply chain volatility.
