HR Leaders’ strategies for elevating employee engagement in global organisations

In the age of AI, HR technologies have emerged as powerful tools for enhancing employee engagement by streamlining HR processes, improving communication, and personalising the employee experience. Sreedhara added, “By embracing HR tech, we can enhance the employee experience by reducing administrative burdens, improving access to information, and enabling employees to focus on more meaningful aspects of their work. Moreover, these technologies can contribute to greater employee engagement. Enhancing the employee experience via HR tech and tools can improve efficiency and empower employees to take more control of their work-related tasks. We have also enabled self-service technologies such as an employee portal that handles all HR-related tasks and provides access to policies and processes across the employee life cycle (onboarding, performance management, benefits enrolment, and expense management); employee feedback and surveys; and a databank for predictive analysis (early warning systems) to manage employee engagement.”

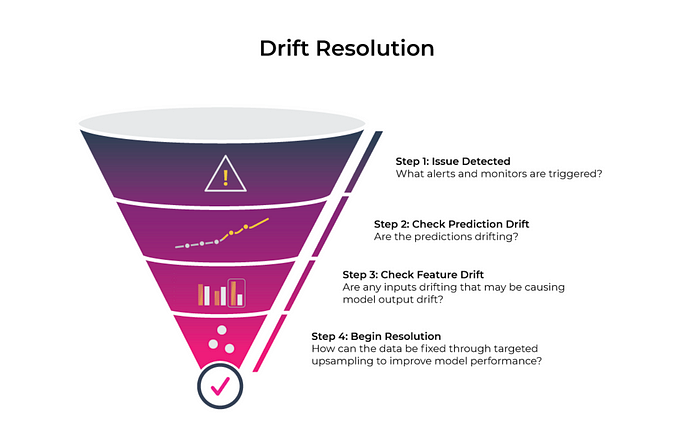

Bolstering enterprise LLMs with machine learning operations foundations

Risk mitigation is paramount throughout the entire lifecycle of the model. Observability, logging, and tracing are core components of MLOps processes, helping teams monitor models for accuracy, performance, data quality, and drift after release. This is critical for LLMs too, but there are additional infrastructure layers to consider. LLMs can “hallucinate,” occasionally producing false information. Organizations need proper guardrails (controls that enforce a specific format or policy) to ensure that LLMs in production return acceptable responses. Traditional ML models rely on quantitative, statistical approaches for root cause analysis of model inaccuracy and drift in production. With LLMs, this is more subjective: it may involve qualitatively scoring the LLM’s outputs, then running them against an API with preset guardrails to ensure an acceptable answer. Governance of enterprise LLMs will be both an art and a science, and many organizations are still working out how to codify it into actionable risk thresholds.
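To make the idea of a guardrail more concrete, here is a minimal Python sketch of a check that could sit between an LLM and its callers. It assumes a hypothetical policy: the model must reply with a JSON object containing `answer` and `sources` keys, and certain patterns (an SSN-like number, an "internal use only" marker) must never be returned. The names `check_response`, `REQUIRED_KEYS` and `BLOCKED_PATTERNS` are illustrative only and are not taken from any particular guardrail product.

```python
import json
import re
from dataclasses import dataclass, field
from typing import List

# Hypothetical format policy: every reply must be a JSON object with these keys.
REQUIRED_KEYS = {"answer", "sources"}

# Hypothetical content policy: patterns that must never reach end users.
BLOCKED_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # US Social Security number format
    re.compile(r"internal use only", re.IGNORECASE),
]


@dataclass
class GuardrailResult:
    accepted: bool
    reasons: List[str] = field(default_factory=list)


def check_response(raw: str) -> GuardrailResult:
    """Apply a format guardrail, then a content guardrail, to raw LLM output."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return GuardrailResult(False, ["response is not valid JSON"])
    if not isinstance(payload, dict):
        return GuardrailResult(False, ["response is not a JSON object"])

    reasons = []
    # Format check: all required keys must be present.
    missing = REQUIRED_KEYS - set(payload)
    if missing:
        reasons.append(f"missing keys: {sorted(missing)}")

    # Policy check: the answer text must not match any blocked pattern.
    answer = str(payload.get("answer", ""))
    for pattern in BLOCKED_PATTERNS:
        if pattern.search(answer):
            reasons.append(f"blocked pattern: {pattern.pattern}")

    return GuardrailResult(accepted=not reasons, reasons=reasons)


print(check_response('{"answer": "Revenue grew 4%.", "sources": ["Q3 report"]}'))  # accepted
print(check_response('{"answer": "See file (internal use only)"}'))  # rejected for two reasons
```

A rejected response could then be regenerated, routed to human review, or replaced with a safe fallback. Which of these is appropriate is the kind of risk threshold organizations are still learning to codify.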

Reimagining Application Development with AI: A New Paradigm

AI-assisted pair programming is a collaborative coding approach in which an AI system, such as GitHub Copilot or TestPilot, assists developers as they code. It’s an increasingly common approach that significantly impacts developer productivity. In fact, GitHub Copilot is now behind an average of 46 percent of developers’ code, and users are seeing 55 percent faster task completion on average. For new software developers, or those interested in learning new skills, AI-assisted pair programming acts as training wheels for coding. With code snippet suggestions, developers can avoid struggling with beginner pitfalls such as language syntax. Tools like ChatGPT can act as a personal, on-demand tutor: answering questions, generating code samples, and explaining complex code syntax and logic. These tools can dramatically speed up the learning process and help developers gain confidence in their coding abilities. Building applications with AI tools can hasten development and help produce more robust code.

Don't Let AI Frenzy Lead to Overlooking Security Risks

"Everybody is talking about prompt injection or backporting models because it is so cool and hot. But most people are still struggling with the basics when it comes to security, and these basics continue to be wrong," said John Stone, whose title at Google Cloud is "chaos coordinator," while speaking at Information Security Media Group's London Cybersecurity Summit. Successful AI implementation requires a secure foundation, meaning that firms should focus on remediating vulnerabilities in the supply chain, source code, and broader IT infrastructure, Stone said. "There are always new things to think about. But the older security risks are still going to happen. You still have infrastructure. You still have your software supply chain and source code to think about." Andy Chakraborty, head of technology platforms at Santander U.K., told the audience that highly regulated sectors such as banking and finance must exercise particular caution when deploying AI solutions that are trained on public data sets.

The second coming of Microsoft's do-it-all laptop is more functional than ever

Microsoft's Surface Laptop Studio 2 is really unlike any other laptop on the market right now. The screen is held up by a tiltable hinge that lets it switch from what I'll call "regular laptop mode" to stage mode (the display is angled like the image above) to studio mode (the display is laid flat, screen-side up, like a tablet). The closest thing I can think of is, well, the previous Laptop Studio model, which has the same shape-shifting form factor. But from today, if you're in the market for Microsoft's screen-tilting Surface device, your eyes will be on the latest model, not the old one. That's a good thing, because unlike its predecessor, the new Surface Laptop Studio 2 features an improved 13th Gen Intel Core H-class processor, NVIDIA's latest RTX 4050/4060 GPUs, and an Intel NPU for video-calling optimizations on Windows (which never hurts to have). Every Microsoft expert on the demo floor made it clear to me that gaming and content creation workflows are still the focus of the Studio laptop, so the changes under the hood make sense.

Why more security doesn’t mean more effective compliance

Worse, the more tools there are to manage, the harder it might be to prove compliance with an evolving patchwork of global cybersecurity rules and regulations. That’s especially true of legislation like the EU’s Digital Operational Resilience Act (DORA), which focuses less on prescriptive technology controls and more on evidence of why policies were put in place, how they’re evolving, and how organizations can prove they’re delivering the intended outcomes. In fact, DORA explicitly states that security and IT tools must be continuously monitored and controlled to minimize risk. This is a challenge when organizations rely on manual evidence gathering. Panaseer research reveals that while 82% of respondents are confident they can meet compliance deadlines, 49% rely mostly or solely on manual, point-in-time audits. This simply isn’t sustainable for IT teams, given the number of security controls they must manage, the volume of data those controls generate, and the demands of continuous, risk-based compliance. They need a more automated way to continuously measure and evidence KPIs and metrics across all security controls.
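As an illustration of continuous, automated evidence gathering, the following Python sketch turns per-control coverage counts into timestamped KPI records. It is a minimal sketch under stated assumptions. The control names, asset counts, and targets are hypothetical. The counts are assumed to come from each tool's own inventory. Nothing here reflects how Panaseer or any other vendor actually implements continuous controls monitoring.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ControlKpi:
    control: str   # hypothetical control name
    covered: int   # assets where the control is verified as working
    in_scope: int  # assets the control is supposed to cover
    target: float  # minimum acceptable coverage ratio (0.0 to 1.0)

    @property
    def coverage(self) -> float:
        # An empty scope is treated as "no evidence" rather than 100% coverage.
        return self.covered / self.in_scope if self.in_scope else 0.0


def evidence_snapshot(kpis, now: Optional[datetime] = None) -> list:
    """Return one timestamped evidence record per control."""
    measured_at = (now or datetime.now(timezone.utc)).isoformat()
    return [
        {
            "control": k.control,
            "coverage": round(k.coverage, 3),
            "target": k.target,
            "passing": k.coverage >= k.target,
            "measured_at": measured_at,
        }
        for k in kpis
    ]


kpis = [
    ControlKpi("endpoint protection", covered=960, in_scope=1000, target=0.95),
    ControlKpi("patching within SLA", covered=870, in_scope=1000, target=0.90),
]
for record in evidence_snapshot(kpis):
    print(record)
```

Running this on a schedule and storing each snapshot would produce a time series of evidence, not a single point-in-time audit.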

EU Chips Act comes into force to ensure supply chain resilience

“With the entry into force today of the European Chips Act, Europe takes a decisive step forward in determining its own destiny. Investment is already happening, coupled with considerable public funding and a robust regulatory framework,” said Thierry Breton, the EU’s commissioner for the Internal Market, in comments posted alongside the announcement. “We are becoming an industrial powerhouse in the markets of the future — capable of supplying ourselves and the world with both mature and advanced semiconductors. Semiconductors that are essential building blocks of the technologies that will shape our future, our industry, and our defense base,” he said. The European Union’s Chips Act is not the only government-backed plan aimed at shoring up domestic chip manufacturing in the wake of the supply chain crisis that has plagued the semiconductor industry in recent years. In the past year, the US, UK, Chinese, Taiwanese, South Korean, and Japanese governments have all announced similar plans.

Microsoft Copilot Brings AI to Windows 11, Works Across Multiple Apps and Your Phone

With Copilot, it's possible to ask the AI to write a summary of a book in the middle of a Word document, or to select an image and have the AI remove the background. In one example, Microsoft showed a long email and demonstrated that when you highlight the text, Copilot appears so you can ask it questions about the email. That information can also be cross-referenced with information found online, for example by asking Copilot for nearby lunch spots based on the email's content. Copilot will be available on the Windows 11 desktop taskbar, making it accessible with a single click. Microsoft says that whether you're using Word, PowerPoint, or Edge, you can call on Copilot to assist you with various tasks. It can also be invoked by voice. Copilot can connect to your phone, so, for example, you can ask it when your next flight is and it will look through your text messages to find the necessary information. Edge, Microsoft's web browser, will also have Copilot integrations.

What Are the Biggest Lessons from the MGM Ransomware Attack?

Ransomware groups increasingly focus on branding and reputation, according to Ferhat Dikbiyik, head of research at third-party risk management software company Black Kite. “When ransomware first made its appearance, the attacks were relatively unsophisticated. Over the years, we have observed a marked elevation in their capabilities and tactics,” he tells InformationWeek in a phone interview. ... The group also called out: “The rumors about teenagers from the US and UK breaking into this organization are still just that -- rumors. We are waiting for these ostensibly respected cybersecurity firms who continue to make this claim to start providing solid evidence to support it.” Dikbiyik also notes that ransomware groups’ more nuanced selection of targets is an indication of increased professionalism. “These groups are doing their homework. They have resources. They acquire intelligence tools…they try to learn their targets,” he says. While ransomware is lucrative, money isn’t the only goal. Selecting high-profile targets, such as MGM, helps these groups to build a reputation, according to Dikbiyik.

A Dimensional Modeling Primer with Mark Peco

“Dimensional models are made up of two elements: facts and dimensions,” Peco explained. “A fact quantifies a property (e.g., a process cost or efficiency score) and is a measurement that can be captured at a point in time. It’s essentially just a number. A dimension provides the context for that number (e.g., when it was measured, who was the customer, what was the product).” It is by combining facts and dimensions that we create information that can be used to answer business questions, especially those related to process improvement or business performance, Peco said. He went on to say that one of the biggest challenges he sees with companies using dimensional models is integrating the potentially huge number of models into one coherent picture of the business. “A company has many, many processes,” he said, “and each requires its own dimensional model, so there has to be some way of joining these models together to give a complete picture of the organization.”
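The facts-and-dimensions split maps naturally onto a star schema. The sketch below uses Python's built-in `sqlite3` module with invented tables and data. `fact_sales` holds the numbers, while `dim_date`, `dim_product`, and `dim_customer` supply the context. A second process, `fact_returns`, reuses the same date and product dimensions, so the two models can be combined on the shared product key. Sharing ("conforming") dimensions across models is one common technique from the Kimball tradition for the integration problem Peco describes. It is offered here as an assumption, not as his stated method.

```python
import sqlite3


def build_demo() -> sqlite3.Connection:
    """Create an in-memory star schema with two fact tables sharing dimensions."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        -- Dimensions: the context for each measurement.
        CREATE TABLE dim_date     (date_key INTEGER PRIMARY KEY, month TEXT);
        CREATE TABLE dim_product  (product_key INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, name TEXT);

        -- Facts for the sales process: a number plus keys into the dimensions.
        CREATE TABLE fact_sales (
            date_key     INTEGER REFERENCES dim_date,
            product_key  INTEGER REFERENCES dim_product,
            customer_key INTEGER REFERENCES dim_customer,
            amount       REAL
        );
        -- Facts for the returns process, reusing the same date and product dimensions.
        CREATE TABLE fact_returns (
            date_key    INTEGER REFERENCES dim_date,
            product_key INTEGER REFERENCES dim_product,
            refund      REAL
        );

        INSERT INTO dim_date VALUES (20240101, '2024-01'), (20240201, '2024-02');
        INSERT INTO dim_product VALUES (1, 'Widget'), (2, 'Gadget');
        INSERT INTO dim_customer VALUES (10, 'Acme');
        INSERT INTO fact_sales VALUES
            (20240101, 1, 10, 100.0),
            (20240101, 2, 10, 50.0),
            (20240201, 1, 10, 80.0);
        INSERT INTO fact_returns VALUES (20240101, 1, 20.0), (20240201, 1, 5.0);
    """)
    return conn


def sales_by_month(conn):
    """Single model: total sales per month (fact joined to the date dimension)."""
    return conn.execute("""
        SELECT d.month, SUM(f.amount)
        FROM fact_sales f JOIN dim_date d ON d.date_key = f.date_key
        GROUP BY d.month ORDER BY d.month
    """).fetchall()


def sales_vs_returns_by_product(conn):
    """Two models combined: aggregate each fact table, then join on the shared dimension."""
    return conn.execute("""
        WITH s AS (SELECT product_key, SUM(amount) AS sales
                   FROM fact_sales GROUP BY product_key),
             r AS (SELECT product_key, SUM(refund) AS refunds
                   FROM fact_returns GROUP BY product_key)
        SELECT p.name, s.sales, COALESCE(r.refunds, 0.0)
        FROM dim_product p
        JOIN s ON s.product_key = p.product_key
        LEFT JOIN r ON r.product_key = p.product_key
        ORDER BY p.name
    """).fetchall()


conn = build_demo()
print(sales_by_month(conn))
print(sales_vs_returns_by_product(conn))
```

Each fact table is aggregated to the shared grain (product) before the join. Joining the raw fact rows directly would multiply sales rows by return rows and double-count the amounts.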

Quote for the day:

"Things work out best for those who make the best of how things work out." -- John Wooden
