Quote for the day:

"Always remember, your focus determines your reality." -- George Lucas

Agentic AI and Solution Architects

Agentic AI tools are intelligent systems designed to operate with

autonomy, agency, and authority—three foundational concepts that define their

ability to act independently, pursue goals on behalf of users, and make

impactful decisions within defined boundaries. These systems are often built

using a multi-agent architecture, where multiple specialized or generalist

agents collaborate, either in centralized or decentralized environments. ...

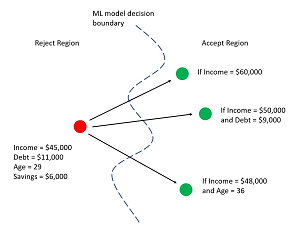

As (IT) architects we drive change that creates business

opportunities through technical innovation. One of the key activities of a

Solution Architect is to design solutions by applying methods and techniques

combined with technical and business expertise. The actual solution design

process will follow a similar pattern to that of a creative technology design

process. An architect will combine and group the different components together

according to stakeholder group and will, over several sessions, develop

concept views related to key architectural components, establishing different

options. Deciding the “right” option will mean balancing the various

criteria like functionality, value for money, compliance, quality, and

sustainability. IT architecture design involves complex decision-making,

planning, and problem-solving that require human expertise and experience.

That is where most of the architect’s work is focused on – using knowledge and

experience to research a particular subject, to apply design thinking and to

solve problems to establish a solution.

Agentic AI tools are intelligent systems designed to operate with

autonomy, agency, and authority—three foundational concepts that define their

ability to act independently, pursue goals on behalf of users, and make

impactful decisions within defined boundaries. These systems are often built

using a multi-agent architecture, where multiple specialized or generalist

agents collaborate, either in centralized or decentralized environments. ...

As (IT) architects we drive change that creates business

opportunities through technical innovation. One of the key activities of a

Solution Architect is to design solutions by applying methods and techniques

combined with technical and business expertise. The actual solution design

process will follow a similar pattern to that of a creative technology design

process. An architect will combine and group the different components together

according to stakeholder group and will, over several sessions, develop

concept views related to key architectural components, establishing different

options. Deciding the “right” option will mean balancing the various

criteria like functionality, value for money, compliance, quality, and

sustainability. IT architecture design involves complex decision-making,

planning, and problem-solving that require human expertise and experience.

That is where most of the architect’s work is focused on – using knowledge and

experience to research a particular subject, to apply design thinking and to

solve problems to establish a solution. Shadow AI risk: Navigating the growing threat of ungoverned AI adoption

Only half (52%) of global organizations claim to have comprehensive controls in

place, with smaller companies lagging even further behind. This lack of robust

governance and visibility leaves organizations vulnerable to data breaches,

compliance failures, and security risks. For many organizations, AI controls are

lacking. ... As AI systems become more autonomous and capable of acting on

behalf of users, the risks grow even more complex. The rise of agentic AI, which

can make decisions and take independent action within systems, amplifies the

impact of weak identity security controls. As these advanced AI systems are

given more control over critical systems and data, the potential risk of

security breaches and compliance failures grows exponentially. To keep pace,

security teams must evolve their identity security strategies to include these

emerging machine entities, treating them with the same rigor as human

identities. ... To effectively mitigate the risks associated with shadow AI and

ungoverned AI adoption, organizations need to start with a solid foundation of

governance and visibility. That means implementing clear acceptable use

guidelines, access controls, activity logging and auditing, and identity

governance for AI entities. By treating AI entities as identities that are

subject to the same authentication, authorization, and monitoring as human

users, organizations can safely harness the benefits of AI without compromising

security.

Only half (52%) of global organizations claim to have comprehensive controls in

place, with smaller companies lagging even further behind. This lack of robust

governance and visibility leaves organizations vulnerable to data breaches,

compliance failures, and security risks. For many organizations, AI controls are

lacking. ... As AI systems become more autonomous and capable of acting on

behalf of users, the risks grow even more complex. The rise of agentic AI, which

can make decisions and take independent action within systems, amplifies the

impact of weak identity security controls. As these advanced AI systems are

given more control over critical systems and data, the potential risk of

security breaches and compliance failures grows exponentially. To keep pace,

security teams must evolve their identity security strategies to include these

emerging machine entities, treating them with the same rigor as human

identities. ... To effectively mitigate the risks associated with shadow AI and

ungoverned AI adoption, organizations need to start with a solid foundation of

governance and visibility. That means implementing clear acceptable use

guidelines, access controls, activity logging and auditing, and identity

governance for AI entities. By treating AI entities as identities that are

subject to the same authentication, authorization, and monitoring as human

users, organizations can safely harness the benefits of AI without compromising

security.Secure Product Development Framework: More Than Just Compliance

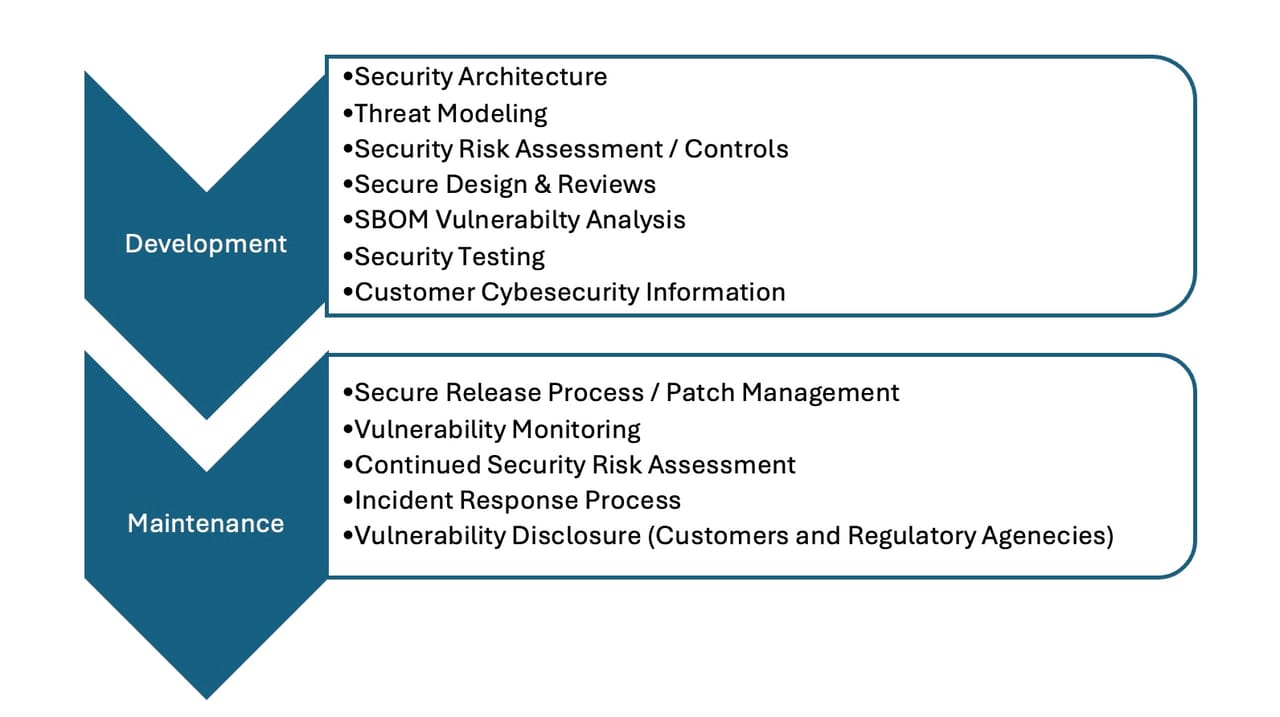

Security risk assessment is a key SPDF activity that starts early in development

and continues throughout the product life cycle through on-market support and

eventual product retirement. FDA guidance references AAMI SW96, “Standard for

medical device security - Security risk management for device manufacturers,” as

a recommended standard for a security risk assessment process. Security risk

assessment considers both safety and business security risks ... Implementing a

clear and consistent security risk assessment process within the SPDF can also

save time (and money). Focus can be placed on those areas of the design with the

highest security risk, instead of on design areas with little to no security

risk. Decisions on whether patches need to be applied in the field are easier to

make when based on security risk. Leveraging the same security risk process

across products and business areas allows teams to focus on execution rather

than designing a new process. Once a product is launched, an SPDF can assist

with managing that product. Postmarket SPDF activities include vulnerability

monitoring/disclosure, patch management, and incident response. A critical

component of vulnerability monitoring is the maintenance and continuous use of a

software bill of materials (SBOM). The SBOM provides a machine-readable

inventory of all custom, commercial, open-source, and third-party software

components within the device.

Security risk assessment is a key SPDF activity that starts early in development

and continues throughout the product life cycle through on-market support and

eventual product retirement. FDA guidance references AAMI SW96, “Standard for

medical device security - Security risk management for device manufacturers,” as

a recommended standard for a security risk assessment process. Security risk

assessment considers both safety and business security risks ... Implementing a

clear and consistent security risk assessment process within the SPDF can also

save time (and money). Focus can be placed on those areas of the design with the

highest security risk, instead of on design areas with little to no security

risk. Decisions on whether patches need to be applied in the field are easier to

make when based on security risk. Leveraging the same security risk process

across products and business areas allows teams to focus on execution rather

than designing a new process. Once a product is launched, an SPDF can assist

with managing that product. Postmarket SPDF activities include vulnerability

monitoring/disclosure, patch management, and incident response. A critical

component of vulnerability monitoring is the maintenance and continuous use of a

software bill of materials (SBOM). The SBOM provides a machine-readable

inventory of all custom, commercial, open-source, and third-party software

components within the device.

Vibe Coding Can Create Unseen Vulnerabilities

Vibe coding does accelerate app prototyping and makes software collaboration

easier, but it also has several shortcomings. Security is a serious concern.

Large language models (LLMs) are inherently vulnerable to security risks when

used by those without sufficient security experience. Moreover, the risk is

amplified by the fact that AI is so flexible that it’s impossible to give out

simple, universal rules on how to make AI write secure code for you. LLMs may

use outdated libraries, lack input validation, or fail to follow secure

practices. AI code generators also lack an understanding of trust boundaries and

system architectures. When using vibe coding, programmer oversight and review

are necessary to prevent these issues from entering production code. Working

with black-box code also makes it difficult to provide context about the app.

For example, improper configurations may expose internal logic by sending

sensitive code snippets to external APIs. This can be a real problem in highly

regulated industries with strict rules about code handling. Vibe coding also

tends to add technical debt, accumulating unreviewed or unexplained blocks of

code. Over time, these code blocks proliferate, creating a glut and making code

maintenance more difficult. Since less experienced developers tend to use vibe

coding, they can overlook security issues. Consider the recent Tea Dating Advice

hack. A hacker was able to access 72,000 images stored in a public

Vibe coding does accelerate app prototyping and makes software collaboration

easier, but it also has several shortcomings. Security is a serious concern.

Large language models (LLMs) are inherently vulnerable to security risks when

used by those without sufficient security experience. Moreover, the risk is

amplified by the fact that AI is so flexible that it’s impossible to give out

simple, universal rules on how to make AI write secure code for you. LLMs may

use outdated libraries, lack input validation, or fail to follow secure

practices. AI code generators also lack an understanding of trust boundaries and

system architectures. When using vibe coding, programmer oversight and review

are necessary to prevent these issues from entering production code. Working

with black-box code also makes it difficult to provide context about the app.

For example, improper configurations may expose internal logic by sending

sensitive code snippets to external APIs. This can be a real problem in highly

regulated industries with strict rules about code handling. Vibe coding also

tends to add technical debt, accumulating unreviewed or unexplained blocks of

code. Over time, these code blocks proliferate, creating a glut and making code

maintenance more difficult. Since less experienced developers tend to use vibe

coding, they can overlook security issues. Consider the recent Tea Dating Advice

hack. A hacker was able to access 72,000 images stored in a public The state of cloud-native computing in 2025

“We’ve reached a level of maturity in the cloud-native ecosystem that people

might think that things are now a bit boring. While AI is a natural extension of

Kubernetes and cloud-native architectures, there are changes required in the

architecture to support AI workloads compared to previous workloads. Platform

engineering continues to have strong customer interest… and new AI enhancements

allow for even greater productivity for developers and operators. ...” said

Miniman ... “However, runaway complexity and cost threaten to derail mass

enterprise success. The modern observability stack has become an exorbitant

black hole, delivering insufficient value for its exorbitant cost and demands a

fundamental rethink of data management. Simultaneously, the data lakehouse

gamble failed, proving too complex and expensive. The imperative is clear and

necessitates pulling workloads back from the brink with democratized data

management to pull workloads back onto central platforms,” said Zilka. ... “The

focus has shifted from how quickly I can deploy, to how I can get a handle on

costs and how resilient my platform is to changes or outages like we saw

recently with AWS. Teams are recognising the overhead these technologies have

introduced for developers and are centralising that work. We’re seeing more

platform teams set best practices, use tooling to enforce them and move from

“adoption mode” to “operational excellence,” said Rajabi.

“We’ve reached a level of maturity in the cloud-native ecosystem that people

might think that things are now a bit boring. While AI is a natural extension of

Kubernetes and cloud-native architectures, there are changes required in the

architecture to support AI workloads compared to previous workloads. Platform

engineering continues to have strong customer interest… and new AI enhancements

allow for even greater productivity for developers and operators. ...” said

Miniman ... “However, runaway complexity and cost threaten to derail mass

enterprise success. The modern observability stack has become an exorbitant

black hole, delivering insufficient value for its exorbitant cost and demands a

fundamental rethink of data management. Simultaneously, the data lakehouse

gamble failed, proving too complex and expensive. The imperative is clear and

necessitates pulling workloads back from the brink with democratized data

management to pull workloads back onto central platforms,” said Zilka. ... “The

focus has shifted from how quickly I can deploy, to how I can get a handle on

costs and how resilient my platform is to changes or outages like we saw

recently with AWS. Teams are recognising the overhead these technologies have

introduced for developers and are centralising that work. We’re seeing more

platform teams set best practices, use tooling to enforce them and move from

“adoption mode” to “operational excellence,” said Rajabi.

Insurability now a core test for boardroom AI & climate strategy

Organisations face growing threats from data poisoning and cyber-attacks,

prompting insurers to play a more decisive role in risk management. Levent

Ergin, Chief Climate, Sustainability & AI Strategist at Informatica,

highlighted the increasing scrutiny on what businesses can insure against. ...

AI is now a fixture at board meetings due to its direct impact on company

valuation. However, he observes a gap between current boardroom discussions and

the transformative potential of AI. "AI is now a standing item in every board

meeting because it directly shapes valuation. Investors see it as a signal of

how forward-thinking a company really is. But many boards are still asking the

wrong question: 'How can we use AI to automate or augment our existing

processes?' when they should be asking 'What's possible?' It's not just about

automating what already exists; it's about reimagining how things are done. ..."

said Hanson. ... "Too many businesses still treat AI projects like any other

investment, where the return has to be quantified against a specific outcome. In

truth, they should be budgeting for failure. The best innovators plan for things

not to work first time, just as pharmaceutical companies or tech giants do,

because even a 98% failure rate can still produce world-changing results. The

moment we stop fearing failure and start funding it, we'll see genuine AI

innovation break through," said Hanson.

Organisations face growing threats from data poisoning and cyber-attacks,

prompting insurers to play a more decisive role in risk management. Levent

Ergin, Chief Climate, Sustainability & AI Strategist at Informatica,

highlighted the increasing scrutiny on what businesses can insure against. ...

AI is now a fixture at board meetings due to its direct impact on company

valuation. However, he observes a gap between current boardroom discussions and

the transformative potential of AI. "AI is now a standing item in every board

meeting because it directly shapes valuation. Investors see it as a signal of

how forward-thinking a company really is. But many boards are still asking the

wrong question: 'How can we use AI to automate or augment our existing

processes?' when they should be asking 'What's possible?' It's not just about

automating what already exists; it's about reimagining how things are done. ..."

said Hanson. ... "Too many businesses still treat AI projects like any other

investment, where the return has to be quantified against a specific outcome. In

truth, they should be budgeting for failure. The best innovators plan for things

not to work first time, just as pharmaceutical companies or tech giants do,

because even a 98% failure rate can still produce world-changing results. The

moment we stop fearing failure and start funding it, we'll see genuine AI

innovation break through," said Hanson.

Are we in a cyber awareness crisis?

To improve cyber awareness, organizations need to move beyond box-ticking

exercises and build engagement through relevance and creativity. This is the

advice of Simon Backwell, a member of the Emerging Trends Working Group at

professional association ISACA, and head of information security at software

company Benefex. He advocates for interactive, rather than static training,

where employees can explore why something was suspicious, as they learn by

doing, rather than guessing the right answer and moving on. ... Not only does AI

present new risks from its use within the business, but also from the way

criminals are using it. “Email phishing attacks frequently use gen AI chatbots,

and vishing attacks, such as robocall scams, now use deepfakes,” notes Candrick.

“AI puts social engineering on steroids, yet cybersecurity leaders are still

using the same awareness measures that were already insufficient.” While

regulatory pressure will play a role in improving AI-related cybersecurity,

regulations will always struggle to keep pace, especially in the UK where the

process takes time. For example, the EU’s AI Act and Data Act is only now

filtering through, much like GDPR did back in 2018, says Backwell. But with how

fast AI is advancing – almost weekly – these rules risk becoming outdated as

soon as they’re released. ... “As board alignment weakens, CISOs have to work

harder to translate cyber risk into business impact, because boards now rank

business valuation as their top post-incident concern,” says Cooke.

To improve cyber awareness, organizations need to move beyond box-ticking

exercises and build engagement through relevance and creativity. This is the

advice of Simon Backwell, a member of the Emerging Trends Working Group at

professional association ISACA, and head of information security at software

company Benefex. He advocates for interactive, rather than static training,

where employees can explore why something was suspicious, as they learn by

doing, rather than guessing the right answer and moving on. ... Not only does AI

present new risks from its use within the business, but also from the way

criminals are using it. “Email phishing attacks frequently use gen AI chatbots,

and vishing attacks, such as robocall scams, now use deepfakes,” notes Candrick.

“AI puts social engineering on steroids, yet cybersecurity leaders are still

using the same awareness measures that were already insufficient.” While

regulatory pressure will play a role in improving AI-related cybersecurity,

regulations will always struggle to keep pace, especially in the UK where the

process takes time. For example, the EU’s AI Act and Data Act is only now

filtering through, much like GDPR did back in 2018, says Backwell. But with how

fast AI is advancing – almost weekly – these rules risk becoming outdated as

soon as they’re released. ... “As board alignment weakens, CISOs have to work

harder to translate cyber risk into business impact, because boards now rank

business valuation as their top post-incident concern,” says Cooke.

How to build a supercomputer

When it comes to Hunter’s architecture, Utz-Uwe Haus, head of HPC/AI EMEA research lab, at HPE, describes the Cray EX design as “the architecture that HPE, with its great heritage, builds for the top systems.” A single cabinet in an EX4000 system can hold up to 64 compute blades – high-density modular servers that share power, cooling, and network resources – within eight compute chassis, all of which are cooled by direct-attached liquid-cooled cold plates supported by a cooling distribution unit (CDU). “It's super integrated," he says. “The back part, which is the whole network infrastructure (HPE Slingshot), matches the front part, which contains the blades.” For Hunter, HLRS has selected AMD hardware, but Haus explains that with Cray EX systems, customers can, more or less, select their processing unit of choice from whichever vendor they want, and the compute infrastructure can be slotted into the system without the need to total reconfiguration. “Should HLRS decide at some point to swap [Hunter’s] AMD plates for the next generation, or use another competitor’s, the rest of the system stays the same. They could have also decided not to use our network – keep the plates and put a different network in, if we have that in the form factor. [HPE Cray EX architecture] is really tightly matched, but at the same time, it’s flexible," he says. Hunter itself is intended as a transitional system to the Herder exascale supercomputer, which is due to go online in 2027.The AI Reskilling Imperative: Bridging India's talent and gender gap

Policies should shift from less general policies to specific interventions.

Initiatives such as Digital India and Skill India need to be bolstered with

AI-specific courses available online in the local language. The government can:

Sponsor and encourage scholarships and mentorships for Women in AI. Develop

financial reward systems for companies reaching gender diversity in their AI

teams. Introduce AI literacy and ethics into the national education system,

beginning at the secondary school level. ... As the main consumer of AI talent,

the private sector should be at the forefront. The first one is the skills-first

approach to hiring, but reskilling as an ongoing investment is not an option.

Companies should: Devote a huge proportion of CSR budgets to simple AI and

digital literacy efforts, especially among women in low-income and rural

communities; Launch internal reskilling programs to shift existing workers out

of positions at risk of automation (e.g., manual software testing, simple data

entry) and into new roles, such as AI integrators or data annotators; Embrace

explicit ethical standards for the application of AI, including a workforce

transition and support strategy. ... The universities will be obliged to

redesign courses that incorporate AI's technical wisdom and infuse them with

morals, critical thinking, and subject knowledge. Collaboration between industry

and academia is important to ensure courses are practical and incorporate

real-world projects.

Policies should shift from less general policies to specific interventions.

Initiatives such as Digital India and Skill India need to be bolstered with

AI-specific courses available online in the local language. The government can:

Sponsor and encourage scholarships and mentorships for Women in AI. Develop

financial reward systems for companies reaching gender diversity in their AI

teams. Introduce AI literacy and ethics into the national education system,

beginning at the secondary school level. ... As the main consumer of AI talent,

the private sector should be at the forefront. The first one is the skills-first

approach to hiring, but reskilling as an ongoing investment is not an option.

Companies should: Devote a huge proportion of CSR budgets to simple AI and

digital literacy efforts, especially among women in low-income and rural

communities; Launch internal reskilling programs to shift existing workers out

of positions at risk of automation (e.g., manual software testing, simple data

entry) and into new roles, such as AI integrators or data annotators; Embrace

explicit ethical standards for the application of AI, including a workforce

transition and support strategy. ... The universities will be obliged to

redesign courses that incorporate AI's technical wisdom and infuse them with

morals, critical thinking, and subject knowledge. Collaboration between industry

and academia is important to ensure courses are practical and incorporate

real-world projects.Enterprises to focus AI spend on cost savings & data control

"CIOs will move from experimenting with AI to orchestrating it, governing

outcomes, agents, and data. AI leadership will evolve from pilots to

performance. CIOs will be accountable for tangible business outcomes, defining

clear frameworks that connect AI investments to enterprise KPIs and ROI. That

means managing a new hybrid workforce of humans and digital agents, complete

with job descriptions, correlated KPIs and measurement standards, and

governance guardrails. Yet none of this will succeed without secure

information management, ensuring that the data fueling and training these

agents is accurate, compliant, and trustworthy. Simply put, good data results

in good AI outcomes. As AI accelerates, traditional network and security

operations will be reimagined for an always-on, agent-driven enterprise, where

value is derived as much from data discipline as from innovation itself," said

Bell. ... "A Major brand fallout will force AI accountability. In the next

year, we'll likely see a major brand face real damage from AI misuse. It won't

be a cyberattack in the traditional sense but something more subtle, like a

plain text prompt injection that manipulates a model into acting against

intent. These attacks can force hallucinations, expose proprietary or

sensitive information, or break customer trust in seconds. Enterprises will

need to verify AI behavior the same way they secure their networks, by

checking every input and output. The companies that build AI systems with

accountability and transparency at the core will be those that keep their

reputations intact," said Berry.

"CIOs will move from experimenting with AI to orchestrating it, governing

outcomes, agents, and data. AI leadership will evolve from pilots to

performance. CIOs will be accountable for tangible business outcomes, defining

clear frameworks that connect AI investments to enterprise KPIs and ROI. That

means managing a new hybrid workforce of humans and digital agents, complete

with job descriptions, correlated KPIs and measurement standards, and

governance guardrails. Yet none of this will succeed without secure

information management, ensuring that the data fueling and training these

agents is accurate, compliant, and trustworthy. Simply put, good data results

in good AI outcomes. As AI accelerates, traditional network and security

operations will be reimagined for an always-on, agent-driven enterprise, where

value is derived as much from data discipline as from innovation itself," said

Bell. ... "A Major brand fallout will force AI accountability. In the next

year, we'll likely see a major brand face real damage from AI misuse. It won't

be a cyberattack in the traditional sense but something more subtle, like a

plain text prompt injection that manipulates a model into acting against

intent. These attacks can force hallucinations, expose proprietary or

sensitive information, or break customer trust in seconds. Enterprises will

need to verify AI behavior the same way they secure their networks, by

checking every input and output. The companies that build AI systems with

accountability and transparency at the core will be those that keep their

reputations intact," said Berry.

/articles/shadow-table-strategy-data-migration/en/smallimage/Shadow-Table-Strategy-for-Seamless-Service-Extractions-and-Data%20Migrations-thumbnail-1744019310168.jpg)