DevSecOps: Building security into each stage of development

While open-source code is important and becoming invaluable, it’s difficult to

know how well it has been maintained, Faus noted. A developer might incorporate

third-party code and inadvertently introduce a vulnerability. DevSecOps allows

security teams to flag that vulnerability and work with the development team to

identify whether the code should be written differently or if the vulnerability

is even dangerous. Ultimately, all parties can be assured that they did everything

they could to produce the most secure code possible. In both DevOps and

DevSecOps, “the two primary principles are collaboration and transparency,” Faus

said. Another core tenet is automation, which creates repeatability and reuse.

If a developer knows how to resolve a specific vulnerability, they can reuse that fix

across every other project with that same vulnerability. ... One of the biggest

challenges in implementing security throughout the development cycle is the

legacy mindset in how security is treated, Faus pointed out. Organizations must

be willing to embrace cultural change and be open, transparent, and

collaborative about fixing security issues. Another challenge lies in building

in the right type of automation. “One of the first things is to make security a

requirement for every new project,” Faus said.
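
As a rough illustration of the automation described above, a pipeline step could compare pinned third-party dependencies against a list of known-vulnerable versions and report the fix to apply. This is a minimal sketch, not a real scanner: the `Advisory` type, the `examplelib` package, and the advisory data are all hypothetical, and a real pipeline would load advisories from a vulnerability feed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Advisory:
    package: str
    vulnerable_versions: frozenset
    fixed_version: str


# Hypothetical advisory data, for illustration only.
ADVISORIES = [
    Advisory("examplelib", frozenset({"1.0.0", "1.0.1"}), "1.0.2"),
]


def parse_requirements(text):
    """Parse 'name==version' lines, skipping blanks and comments."""
    pins = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line or "==" not in line:
            continue
        name, version = line.split("==", 1)
        pins[name.strip().lower()] = version.strip()
    return pins


def find_vulnerable(pins, advisories):
    """Return (package, pinned_version, fixed_version) for each flagged pin."""
    findings = []
    for adv in advisories:
        version = pins.get(adv.package)
        if version in adv.vulnerable_versions:
            findings.append((adv.package, version, adv.fixed_version))
    return findings
```

Because the check is just a function over a requirements file, the same step can be reused in every project that might pin the same vulnerable version.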

Curb Your Hallucination: Open Source Vector Search for AI

Vector search—especially implementing a RAG approach utilizing vector data

stores—is a stark alternative. Instead of relying on a traditional search engine

approach, vector search uses numerical vector embeddings of the data to resolve

queries. Therefore, searches examine a limited data set of more contextually

relevant data. The results include improved performance, earned by efficiently

utilizing massive data sets, and greatly decreased risk of AI hallucinations. At

the same time, the more accurate answers that AI applications provide when

backed by vector search enhance the outcomes and value delivered by those

solutions. Combining both vector and traditional search methods into hybrid

queries will give you the best of both worlds. Hybrid search ensures you cover

all semantically related context, and traditional search can provide the

specificity required for critical components ... Several open source

technologies offer an easy on-ramp to building vector search capabilities and a

path free from proprietary expenses, inflexibility, and vendor lock-in risks. To

offer specific examples, Apache Cassandra 5.0, PostgreSQL (with pgvector), and

OpenSearch are all open source data technologies that now offer enterprise-ready

vector search capabilities and underlying data infrastructure well-suited for AI

projects at scale.
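
To make the hybrid idea concrete, here is a minimal in-memory sketch that blends a vector similarity score with a simple keyword-overlap score. It does not use Cassandra, pgvector, or OpenSearch; the `DOCS` list, the three-dimensional embeddings, and the `alpha` weighting are assumptions chosen for illustration, and a real system would get embeddings from a model and delegate scoring to the data store.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def keyword_score(query, text):
    """Fraction of query terms that appear verbatim in the text."""
    terms = set(query.lower().split())
    if not terms:
        return 0.0
    words = set(text.lower().split())
    return len(terms & words) / len(terms)


def hybrid_search(query, query_vec, docs, alpha=0.5, top_k=3):
    """Rank docs by alpha * vector score + (1 - alpha) * keyword score."""
    scored = []
    for doc in docs:
        score = (alpha * cosine_similarity(query_vec, doc["embedding"])
                 + (1 - alpha) * keyword_score(query, doc["text"]))
        scored.append((score, doc["id"]))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc_id for _, doc_id in scored[:top_k]]


# Toy corpus with made-up embeddings (hypothetical values).
DOCS = [
    {"id": "a", "text": "reset your account password", "embedding": [0.9, 0.1, 0.0]},
    {"id": "b", "text": "update billing address", "embedding": [0.1, 0.9, 0.0]},
    {"id": "c", "text": "recover login credentials", "embedding": [0.8, 0.2, 0.1]},
]
```

In this toy corpus, document "c" shares no keywords with the query "password reset" but still ranks above "b" because its embedding is close to the query vector, while "a" ranks first because it matches on both signals.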

Driving Serverless Productivity: More Responsibility for Developers

First, there are proactive controls, which prevent deployment of non-compliant

resources by instilling best practices from the get-go. Second, there are detective

controls, which identify violations that are already deployed and then provide

remediation steps. It’s important to recognize these controls must not be

static. They need to evolve over time, just as your organization, processes, and

production environments evolve. Think of them as checks that place more

responsibility on developers to meet high standards, and also make it far easier

for them to do so. Going further, a key -- and often overlooked -- part of any

governance approach is its notification and supporting messaging system. As your

policies mature over time, it is vitally important to have a sense of lineage.

If we’re pushing for developers to take on more responsibility, and we’ve

established that the controls are constantly evolving and changing,

notifications cannot feel arbitrary or unsupported. Developers need to be able

to understand the source of the standard driving the control and the symptoms of

what they’re observing.
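
One way to picture the two kinds of controls, and the lineage their notifications should carry, is the sketch below. Everything in it is hypothetical: the `Control` type, the control IDs, the "PS-12" and "PS-07" standards, and the resource fields are invented for illustration and do not correspond to any particular cloud provider or policy tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Control:
    control_id: str
    source_standard: str  # lineage: the standard that drives this control
    remediation: str
    is_compliant: Callable[[dict], bool]


# Hypothetical controls and standards, for illustration only.
CONTROLS = [
    Control(
        "FN-TIMEOUT",
        "Platform standard PS-12: functions must time out within 30 seconds",
        "Set 'timeout_seconds' to a value between 1 and 30.",
        lambda r: 0 < r.get("timeout_seconds", 0) <= 30,
    ),
    Control(
        "FN-TRACING",
        "Platform standard PS-07: tracing must be enabled",
        "Set 'tracing' to true.",
        lambda r: r.get("tracing") is True,
    ),
]


def evaluate(resource, controls):
    """Return one notification per violated control, including its lineage."""
    return [
        {
            "resource": resource["name"],
            "control": c.control_id,
            "source": c.source_standard,
            "remediation": c.remediation,
        }
        for c in controls
        if not c.is_compliant(resource)
    ]


def proactive_gate(planned_resources):
    """Proactive control: run before deployment and block on any violation."""
    violations = [v for r in planned_resources for v in evaluate(r, CONTROLS)]
    return len(violations) == 0, violations


def detective_scan(deployed_resources):
    """Detective control: report violations in what is already deployed."""
    return [v for r in deployed_resources for v in evaluate(r, CONTROLS)]
```

Both paths share the same control definitions, so when a standard changes, the gate, the scan, and the message developers see all change together.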

Mastering Observability: Unlocking Customer Insights

If we do something and the behavior of our users changes in a negative way, if

they start doing things slower, less efficiently, then we're not delivering

value to the market. We're actually damaging the value we're delivering to the

market. We're disrupting our users' flows. So a really good way to think about

whether we are creating value or not is how is the behavior of our users, of our

stakeholders or our customers changing as a result of us shipping things out?

And this kind of behavior change is interesting because it is a measurement of

whether we are solving the problem, not whether we're delivering a solution. And

from that perspective, I can then offer five different solutions for the same

behavior change. I can say, "Well, if that's the behavior change we want to

create, this thing you proposed is going to cost five man-millennia to make, but

I can do it with a shell script and it's going to be done tomorrow. Or we can do

it with an Excel export or we can do it with a PDF or we can do it through a

mobile website not building a completely new app". And all of these things can

address the same behavior change.

AI-generated code is causing major security headaches and slowing down development processes

The main priorities for DevSecOps in terms of security testing were the

sensitivity of the information being handled, industry best practice, and easing

the complexity of testing configuration through automation, all cited by around

a third. Most survey respondents (85%) said they had at least some measures in

place to address the challenges posed by AI-generated code, such as potential

IP, copyright, and license issues that an AI tool may introduce into proprietary

software. However, fewer than a quarter said they were ‘very confident’ in their

policies and processes for testing this code. ... The big conflict here appears

to be security versus speed considerations, with around six-in-ten reporting

that security testing significantly slows development. Half of respondents also

said that most projects are still being added manually. Another major hurdle for

teams is the dizzying number of security tools in use, the study noted. More

than eight-in-ten organizations said they're using between six and 20 different

security testing tools. This growing array of tools makes it harder to integrate

and correlate results across platforms and pipelines, respondents noted, and is

making it harder to distinguish between genuine issues and false positives.
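
A small sketch can show what correlating results across tools might involve: merge findings that point at the same location and weakness, then rank those reported by more than one tool first. The tool names and finding fields here are hypothetical, and the sketch assumes each tool's output has already been normalized to a file, a line, and a CWE identifier, which is often the hard part in practice.

```python
def correlate(findings):
    """Merge findings that share file, line, and CWE across tools.

    Findings reported by more tools sort first, on the assumption that
    agreement between independent tools makes a false positive less likely.
    """
    merged = {}
    for f in findings:
        key = (f["file"], f["line"], f["cwe"])
        merged.setdefault(key, set()).add(f["tool"])
    results = [
        {"file": file, "line": line, "cwe": cwe, "tools": sorted(tools)}
        for (file, line, cwe), tools in merged.items()
    ]
    results.sort(key=lambda r: (-len(r["tools"]), r["file"], r["line"]))
    return results
```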

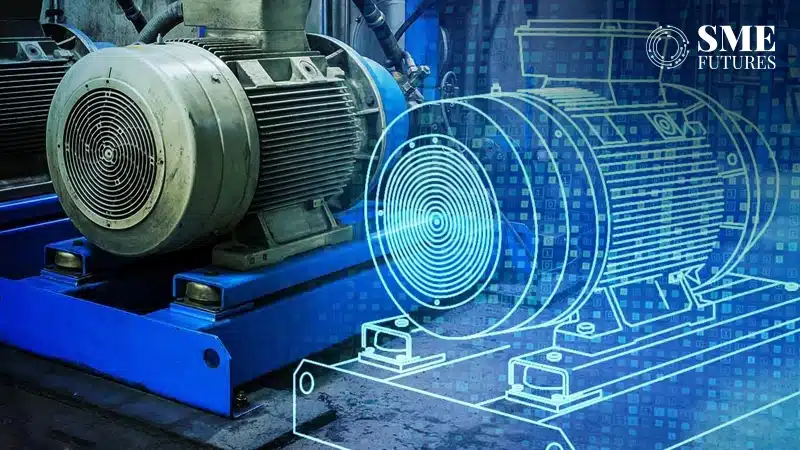

How digital twins are revolutionising real-time decision making across industries

Despite the promise of digital twins, Bhonsle acknowledges that there are

challenges to adoption. “Creating and maintaining a digital twin requires

substantial investments in infrastructure, including sensors, IoT devices, and

AI capabilities,” he points out. Security is another concern, particularly in

industries like healthcare and energy, where compromised data streams could lead

to life-threatening consequences. However, Bhonsle emphasises that the rewards

far outweigh the risks. “As digital twin technology matures, it will become more

accessible, even to smaller organisations, offering them a competitive edge

through optimised operations and data-driven decisions.” ... Digital twins are

transforming how businesses operate by providing real-time insights that drive

smarter decisions. From manufacturing floors to operating rooms, and from energy

grids to smart cities, this technology is reshaping industries in unprecedented

ways. As Bhonsle aptly puts it, “The rise of digital twins signals a new era of

efficiency and agility—an era where decisions are no longer based on assumptions

but driven by data in real time.” As organisations embrace this evolving

technology, they unlock new opportunities to optimise performance and stay ahead

in a fast-changing world.

AI and tech in banking: Half the industry lags behind

Gupta emphasised that a superficial approach to digitalisation—what he called

“putting lipstick on a pig”—is common in many institutions. These banks often

adopt digital tools without rethinking the processes behind them, resulting in

inefficiencies and missed opportunities for transformation. In addition, the

culture of risk aversion in many financial institutions makes them slow to

experiment with new technologies. According to a Deloitte survey, 62% of banking

executives cited corporate culture as the biggest barrier to successful digital

transformation. A fear of regulatory hurdles and data privacy issues also

compounds this reluctance to fully embrace AI. ... The rise of fintech companies

is also reshaping the financial landscape. Digital-first challengers like

Revolut and Monzo are making waves by offering streamlined, customer-centric

services that appeal to tech-savvy users. These companies, unencumbered by

legacy systems, have been able to rapidly adopt AI, providing highly

personalised products and services through their digital platforms. The UK

fintech sector alone saw record investment in 2021, with $11.6 billion pouring

into the industry, according to Innovate Finance. This influx of capital has

enabled fintech firms to invest in AI technologies, providing stiff competition

to traditional banks that are slower to adapt.

The Era Of Core Banking Transformation Trade-offs Is Finally Over

There must be a better way than forcing banks to choose their compromises.

Banking today needs a next-generation solution that blends the best of

configuration neo cores – speed to market, lower cost, derisked migration – and

combines it with the benefits of framework neo cores – full customization of

products and even the core, with massive scale built in as standard. If banks

and financial services aren’t forced to compromise because of their choice of

cloud-native core solution, they can accelerate their innovation. Our research

reveals that, while AI remains front of mind for many IT decision makers in

banking and financial services, only one in three (32%) have so far integrated

AI into their core solution. This is concerning. According to McKinsey,

banking is one of the top four sectors set to capitalize on that market

opportunity – but that forecast will remain a pipe dream if banks can’t

integrate AI efficiently, securely and at massive scale. ... One thing is

certain: whether configuration or framework, neo cores are not the final

destination for banking. They have been a helpful stepping stone over the last

decade to cloud-native technology, but banks and financial services now need a

next-generation core technology that doesn’t demand so many

compromises.

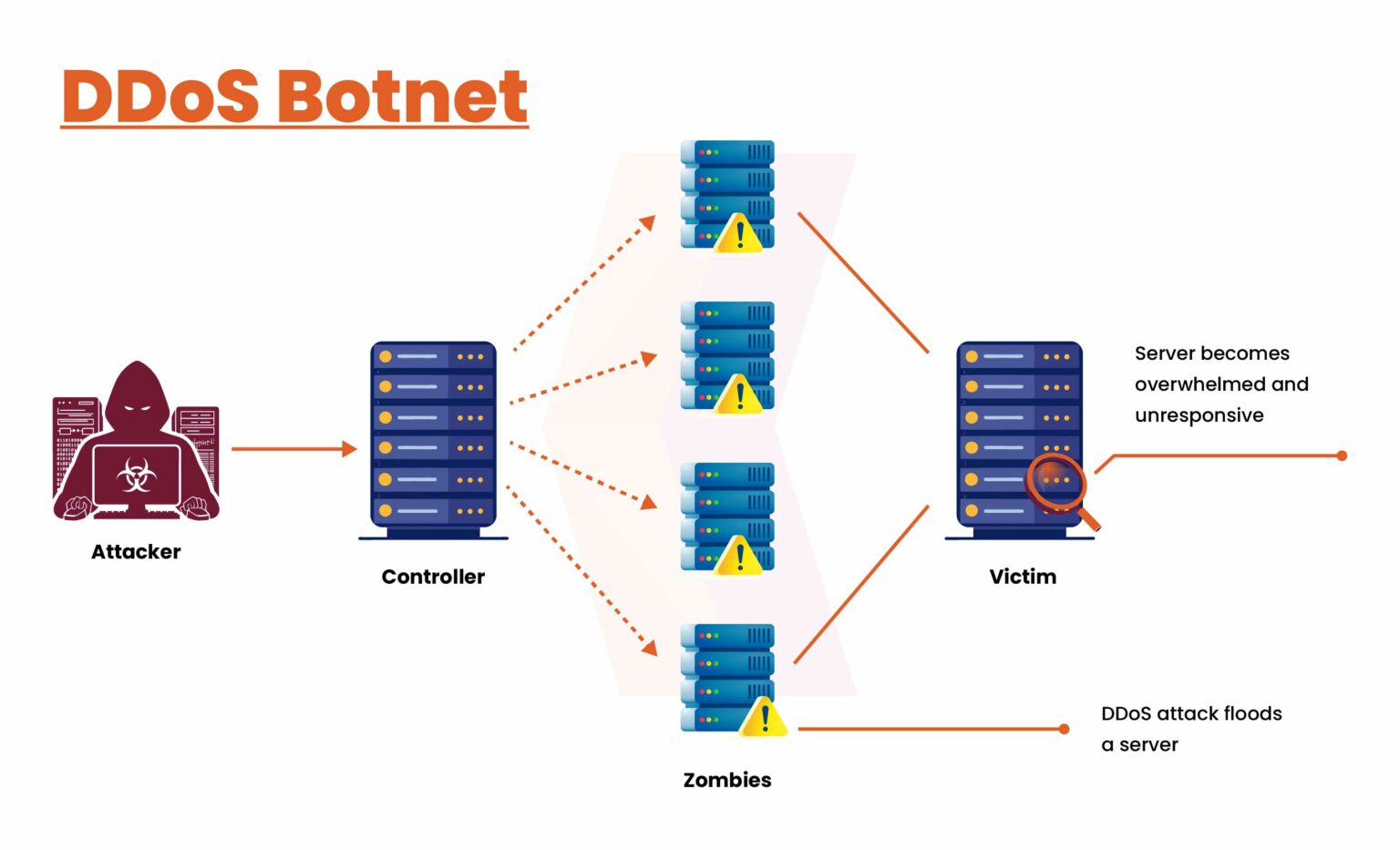

10 Risks of IoT Data Management

IoT data management faces significant security risks due to the large attack

surface created by interconnected devices. Each device presents a potential

entry point for cyberattacks, including data breaches and malware injections.

Attackers may exploit vulnerabilities in device firmware, weak authentication

methods, or unsecured network protocols. To mitigate these risks, implementing

end-to-end encryption, device authentication, and secure communication channels

is essential. ... IoT devices often collect sensitive personal information,

which raises concerns about user privacy. The lack of transparency in how data

is collected, processed, and shared can erode user trust, especially when data

is shared with third parties without explicit consent. Addressing privacy

concerns requires the anonymization and pseudonymization of personal data. ...

The massive influx of data generated by IoT devices can overwhelm traditional

storage systems, leading to data overload. Managing this data efficiently is a

challenge, as continuous data generation requires significant storage capacity

and processing power. To solve this, organizations can adopt edge computing,

which processes data closer to the source, reducing the need for centralized

storage.
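
Two of the mitigations mentioned above, pseudonymization and edge processing, can be sketched together: a gateway replaces raw device identifiers with keyed hashes and forwards only a per-device summary instead of every reading. The reading format, field names, and key handling are assumptions made for this example; a real deployment would also need key management and rotation, which are out of scope here.

```python
import hashlib
import hmac
from statistics import mean


def pseudonymize(device_id, secret_key):
    """Replace an identifier with a keyed SHA-256 digest (HMAC).

    The same ID and key always give the same pseudonym, so records can still
    be joined, but the raw ID is not recoverable without the key.
    """
    return hmac.new(secret_key, device_id.encode("utf-8"), hashlib.sha256).hexdigest()


def summarize_at_edge(readings, secret_key):
    """Reduce raw readings to one summary record per device before upload."""
    by_device = {}
    for r in readings:
        by_device.setdefault(r["device_id"], []).append(r["value"])
    return [
        {
            "device": pseudonymize(device_id, secret_key),
            "count": len(values),
            "min": min(values),
            "max": max(values),
            "mean": mean(values),
        }
        for device_id, values in by_device.items()
    ]
```

With this approach, the central store receives one small record per device per window rather than the full stream, which is the storage reduction the edge computing argument relies on.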

Managing bank IT spending: Five questions for tech leaders

The demand for development resources and the need to manage tech debt are only

expected to increase. Tech talent has never been cheap, and inflation is pushing

up salaries. Cybersecurity threats are also becoming more urgent, demanding

greater funds to address them. And figuring out how to integrate generative AI

takes time, personnel, and money. Despite these competing priorities and

challenges, bank IT leaders have an opportunity to make their mark on their

organizations and position themselves as central to their success—if they can

address some key problems. ... In our experience, IT leaders should never

underestimate the importance of controlling and reducing tech debt whenever

possible. Actions to correct course could include conducting frequent

assessments to determine which areas are accumulating tech debt and developing

plans to reduce it as much as possible. More than many other industries, banking

is a hotbed of new app development. Leaders who address these key questions can

ensure they are directing their talent and resources to game-changing app

development that directly contributes to their bank’s bottom line.

Quote for the day:

“It's failure that gives you the proper

perspective on success.” -- Ellen DeGeneres