ConfusedPilot Attack Can Manipulate RAG-Based AI Systems

In a ConfusedPilot attack, a threat actor could introduce an innocuous document

that contains specifically crafted strings into the target’s environment. "This

could be achieved by any identity with access to save documents or data to an

environment indexed by the AI copilot," Mandy wrote. The attack flow that

follows from the user's perspective is this: When a user makes a relevant query,

the RAG system retrieves the document containing these strings. The malicious

document contains strings that could act as instructions to the AI system that

introduce a variety of malicious scenarios. These include: content suppression,

in which the malicious instructions cause the AI to disregard other relevant,

legitimate content; misinformation generation, in which the AI generates a

response using only the corrupted information; and false attribution, in which

the response may be falsely attributed to legitimate sources, increasing its

perceived credibility. Moreover, even if the malicious document is later

removed, the corrupted information may persist in the system’s responses for a

period of time because the AI system retains the instructions, the researchers

noted.

Open source package entry points could be used for command jacking

“Entry point attacks, while requiring user interaction, offer attackers a more

stealthy and persistent method of compromising systems [than other tactics],

potentially bypassing traditional security checks,” the report warns. Over the

past two years, many researchers have warned that open source package managers

are places where threat actors deposit malicious copies of legitimate tools or

libraries that developers want, often mimicking or copying the names of these

tools – a technique called typosquatting — to fool unsuspecting developers. ...

The tactic the researchers call command jacking involves using entry points to

masquerade as widely-used third-party tools. “This tactic is particularly

effective against developers who frequently use these tools in their workflows,”

the report notes. For instance, an attacker might create a package with a

malicious ‘aws’ entry point. When unsuspecting developers who regularly use AWS

services install this package and later execute the aws command, the fake ‘aws’

command could exfiltrate their AWS access keys and secrets. “This attack could

be devastating in CI/CD [continuous integration/continuous delivery]

environments, where AWS credentials are often stored for automated deployments,”

says the report

The Compelling Case for a Digital Transformation Revolution

A successful revolution of the Digital Transformation industry would result in

the following characteristics:DX initiatives would deliver a solution within the

time and budget constraints of the original estimate used to calculate the

Return on Investment (ROI) DX initiatives would measurably enhance the

transformed company’s ability to meet their stated business objectives DX

initiatives would be maintained and supported by the transformed company without

an indefinite dependence on consultants ... There is a need to adopt a set of

principles and corresponding values which, when followed, will lead to

successful outcomes in digital transformation. In today’s virtual world we have

the opportunity to call together DX practitioners from around the world to

participate in drafting those principles and values. If you have experience in

leading successful DX initiatives, I invite you to join me in this endeavor to

revolutionize DX. Following in the footsteps of the Agile Alliance, I have

decided to propose four sets of values and 12 principles upon which those values

are based. These values mirror the wording used by the Agile Alliance, but have

been updated to apply to digital transformation projects rather than software

development.

Leadership with a Purpose: The Transformative Impact of Corporate Retreats

Our retreats are carefully designed to balance introspection, relaxation, and

rejuvenation. We create bespoke itineraries tailored to the specific goals and

needs of the leadership team, with activities focused on mental clarity,

emotional well-being, and mindful leadership—critical for long-term

effectiveness in today’s high-pressure corporate world. Unlike conventional

retreats, Ekaanta is not just about unwinding; it's about equipping leaders with

tools (such as Super Brain Yog) that enable them to become more resilient and

purpose-driven when they return to work. What truly sets us apart is the blend

of ancient Eastern practices with modern scientific approaches to well-being. At

Ekaanta, leaders are not merely participants but learners. Each module provides

deep insights, helping them recalibrate their personal and professional lives.

Our setting by the Ganges, combined with nature-based practices like

Shinrin-Yoku (forest bathing), offers holistic rejuvenation that can’t be

replicated in traditional settings. We also offer Cognitive Flow Workshops,

integrating neuroscience with mindfulness to enhance decision-making, and

Leadership Circles, where participants engage in meaningful discussions on

leadership challenges and growth.

In with the new: how banking systems can use data more effectively

Technologies that help businesses capture and analyse their data can also help

to automate traditional back-office processes, such as those in trade finance

operations. “The work that Microsoft is doing in trade finance focuses on data,”

says Hazou. “In the current environment, trade finance documentation is

processed manually. There are said to be four billion pieces of paper in

circulation for trade finance every year. This is because it follows an old

business model that dates back to the House of Medici, an Italian banking family

in the 15th century. A lot of the documents – such as bills of lading and

exchange, invoices and certificates of inspections – have been mandated to be in

paper form due to pre-existing legislation.” In 2022, the International Chamber

of Commerce estimated that digitising trade documents could generate $25 billion

in new economic growth by 2024, and the industry is already making significant

changes to digitise and automate bank processing, paving the way for

increased efficiency globally. “There have been changes to the regulations for

trade paper,” says Hazou. ... “Users can ask simple natural language questions

and the copilot will transform them into queries about the business and respond

with the answers that they need,” says Martin McCann, CEO of Trade

Ledger.

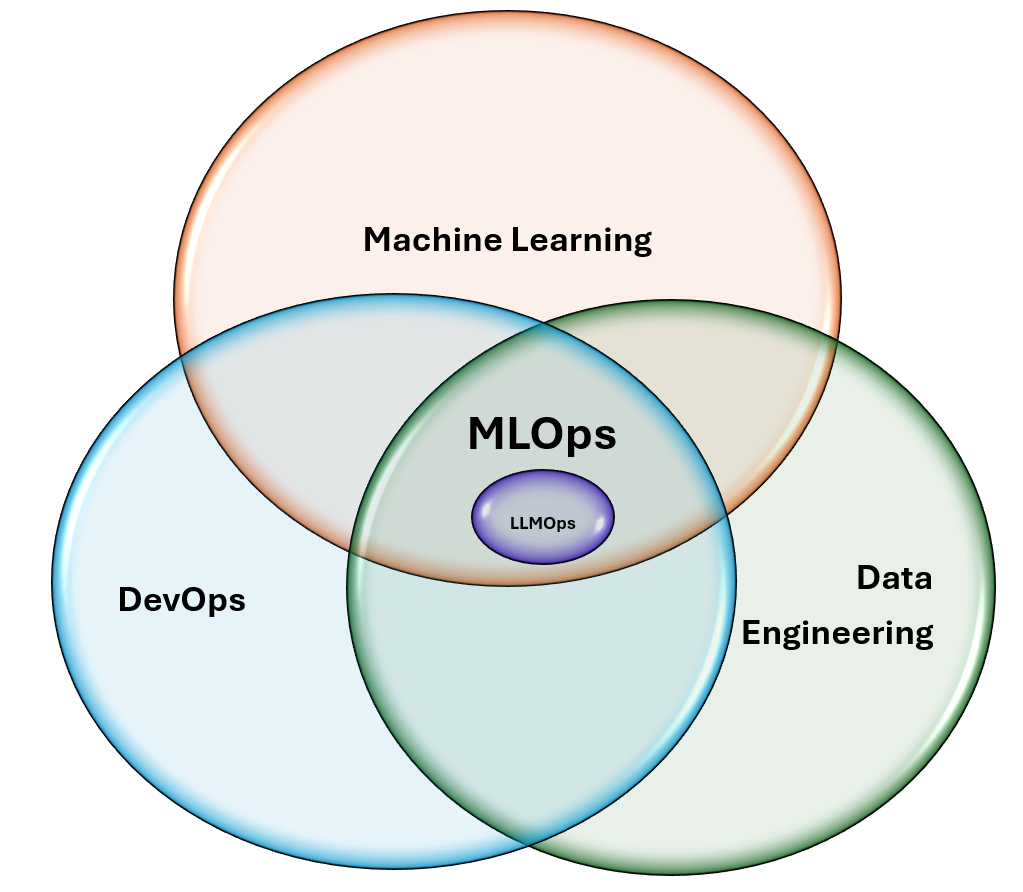

A Deep-Dive Into CodeOps or DevOps for Code

CodeOps is a relatively new concept within the context of DevOps that

addresses the challenges related to code automation and management. Its goal

is to speed up the development process by improving how code is written,

verified, released and maintained. By leveraging CodeOps, your code will

become more streamlined, effective, and coherent with your business

requirements. ... In recent times, DevOps emerged to modernize Agile software

development, enabling teams to not only build but also deploy the software

products and solutions as quickly as they can build them. This resulted in an

unprecedented surge in software creation worldwide. Several frameworks, such

as DevSecOps, MLOps, AIOps, DataOps, CloudOps and GitOps, have also emerged.

Each framework addresses specific engineering disciplines to enhance

operational efficiency. However, several challenges in software development

remain unaddressed. ... CodeOps leverages generative AI to drive innovation,

accelerate software development through reusable code and promote business

growth. Today’s businesses are implementing CodeOps as a revolutionary concept

for developing digital products. As a result, organizations can overcome

challenges, innovate and build as well as deploy software quickly.

Microservices Testing: Feature Flags vs. Preview Environments

In traditional monolithic applications, testing a new feature often involves

verifying the entire application as a whole. In microservices, each service is

developed, deployed and tested independently, making it harder to predict how

changes in one service might affect others. For example, a small change to an

authentication service could unexpectedly break the payment processor if their

interaction isn’t tested thoroughly. To ensure that such issues are caught

early and before they impact users, testing strategies must evolve. This is

where feature flags and preview environments come into play. Feature flags

provide a dynamic way to manage feature rollouts by decoupling deployment from

release. ... Effective microservices testing requires balancing speed and

reliability. Feature flags enable real-time testing in production but often

lack isolation for complex integration issues. Preview environments offer

isolation for premerge testing but can be resource-intensive and may not fully

replicate production traffic. The best approach? Combine both. Use preview

environments to catch bugs early, and then deploy with feature flags to

control the release in production. This ensures speed without sacrificing

quality.

The quantum dilemma: Game-changer or game-ender

Experts predict that a quantum computer can use the Shor algorithm to easily

crack encryption methods such as the RSA (Rivest-Shamir-Adleman), which is the

strongest and most common encryption method on the internet. Imagine if a

quantum computer could decrypt internet communications: it would enable

adversaries and rogue nations to gain access to sensitive and classified

information, posing a major threat to national and organizational security.

Cybersecurity experts believe that some threat actors and rogue nations may

have already kicked off a “harvest now, decrypt later” strategy, so that when

these quantum tools do arrive, they can immediately operationalize them for

malicious and strategic purposes. ... Quantum computing is a type of

breakthrough where government interference might be extremely high.

Organizations could find themselves cut off from quantum’s supercharged

processing power, because it may well be developed by a government for its own

ends, or restricted to protect national interests. Pending regulations could

also create uncertainty across industries, stifling innovation as companies

are forced to navigate the complexities of compliance and adjust their

strategies to meet new legal requirements.

Regulation with reward: How DORA can enhance businesses

Everyone now lives in an environment where what they do is either in the cloud

or attached to some kind of dedicated internet access service. If you have

just one internet connection and that goes down, you no longer have

operational resilience. That’s what DORA is trying to mitigate and where the

network operators get involved ahead of time to provide redundancy. This is

just one part of a series of regulations either introduced or coming down the

tracks. The likes of GDPR, NISD, and NIS2 are all working with essentially the

same goal in mind as DORA. Companies are being required to take ownership of

their security policies in the C-suite and ensure effective measures have been

taken. DORA addresses one of the pillars around operational resilience,

specifically on ensuring that connectivity aspect is maintained. Any

organisation working in the financial sector, including ICT providers, needs

to step up and meet the standards being set by DORA. The majority of

monitoring and threat awareness is now managed through the cloud. That

requires a resilient internet connection to ensure constant visibility and

observation of the regulations.

7 signs you may not be a transformational CIO

Functional CIOs “often lack the vision to reimagine business models and focus

too narrowly on maintaining existing systems rather than driving innovation,”

says Dr. Ina Sebastian, a research scientist at the MIT Center for Information

Systems Research (CISR), and co-author of the book Future Ready: Four Pathways

to Capturing Digital Value. “These CIOs might not prioritize aligning

technology investments with customer needs, creating a common framework and

language for discussing and prioritizing digital strategies, or developing a

clear strategy for navigating the complexities of digital transformation,”

Sebastian says. If a CIO can’t articulate a clear vision of how technology

will transform the business, it is unlikely they will inspire their staff.

Some CIOs are reluctant to invest in emerging technologies such as AI or

machine learning, viewing them as experimental rather than tools for gaining

competitive advantage. There’s also a tendency to focus on short-term gains

rather than long-term strategic goals. Another indicator is a lack of

engagement with other departments to understand their needs and challenges,

which can result in siloed operations and missed opportunities to foster

innovation.

Quote for the day:

"Your first and foremost job as a

leader is to take charge of your own energy and then help to orchestrate the

energy of those around you." -- Peter F. Drucker