Quote for the day:

"Risk management is a culture, not a cult. It only works if everyone lives it, not if it’s practiced by a few high priests." -- Tom Wilson

Reengineering AML in the Era of Instant Payments

The transition to high-value instant payments, underscored by the Federal Reserve’s decision to raise FedNow transaction limits to $10 million, necessitates a fundamental reengineering of Anti-Money Laundering (AML) frameworks. Traditional monitoring systems, plagued by a 95% false-positive rate and designed for retrospective reviews, are increasingly inadequate for real-time rails where compliance decisions must occur within seconds. Consequently, financial institutions are shifting their controls upstream, prioritizing pre-settlement checks, robust customer due diligence, and behavioral profiling. This evolution moves AML from a reactive back-end function to a preventive, intelligence-led process integrated throughout the customer life cycle. Enhanced data standards like ISO 20022 further enable nuanced, risk-based decisioning by providing richer transaction context. While industry experts argue that AI-powered tools can reconcile the perceived conflict between processing speed and rigorous control, the pace of adoption remains uneven across the sector. Larger institutions are aggressively modernizing their architectures, whereas smaller firms often struggle with legacy system constraints and vendor dependencies. Ultimately, the industry is moving toward a converged model where fraud and AML functions merge to address financial crime holistically. This strategic shift ensures that security does not come at the expense of the frictionless experience demanded by modern corporate treasury and retail sectors.
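
To make the idea of a pre-settlement check driven by behavioral profiling concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `Payment` fields, the `pre_settlement_decision` function, the z-score rule, and the threshold of 3.0 do not come from the article or from any real AML product.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Payment:
    """Hypothetical payment record; field names are illustrative only."""
    customer_id: str
    amount: float
    beneficiary_is_new: bool


def pre_settlement_decision(payment, history, z_threshold=3.0):
    """Return "release" or "hold" before the payment settles.

    `history` is the customer's past payment amounts (assumed input).
    """
    if len(history) < 2:
        # Too little behavior to build a profile: be conservative.
        return "hold"
    avg = mean(history)
    spread = pstdev(history) or 1.0  # fall back to 1.0 if all amounts are equal
    z_score = (payment.amount - avg) / spread
    # Hold only when the amount is unusual AND the beneficiary is new,
    # which is one simple way to cut down on false positives.
    if z_score > z_threshold and payment.beneficiary_is_new:
        return "hold"
    return "release"
```

Because the decision needs only the payment and a precomputed profile, a check of this shape can run inside the seconds-long window that instant rails allow; a production control would combine many more signals.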

Inconsistent Privacy Labels Don't Tell Users What They Are Getting

The Dark Reading article "Inconsistent Privacy Labels Don't Tell Users What They Are Getting" critiques the current effectiveness of mobile app privacy labels, such as those found on Apple’s App Store and Google Play. While originally designed to offer consumers transparency regarding data collection practices, researcher Lorrie Cranor highlights that these labels remain largely inaccurate and "not at all useful" in their present state. According to recent studies, the discrepancies between an app’s actual data handling and its public label often stem from developer misunderstandings and honest technical mistakes rather than malicious intent. However, this inconsistency creates a deceptive environment where companies appear to be prioritizing user privacy without actually doing so. To address these failings, experts advocate for the standardization of privacy reporting across platforms and the implementation of automated verification tools to assist developers. Furthermore, placing these labels more prominently within app store listings would ensure users can make informed decisions before downloading software. Ultimately, without rigorous verification and clearer presentation, the current privacy label system serves as more of a performative gesture than a functional security tool, failing to provide the level of protection and clarity that modern smartphone users require and expect from major digital marketplaces.

Cybersecurity and Operational Resilience: A Board-Level Imperative

In today's digital landscape, cybersecurity and operational resilience have evolved into critical boardroom imperatives, driven by a sophisticated threat environment and rigorous global regulations. The article highlights how sector-agnostic attacks, exemplified by the massive disruption at Change Healthcare, underscore the systemic risks posed to essential services. Contributing factors include the widespread monetization of "ransomware-as-a-service" and the emergence of AI-driven threats like deepfakes and automated phishing. Consequently, regulators in the EU and U.S. have introduced stringent frameworks, such as the NIS 2 Directive, the Digital Operational Resilience Act (DORA), and updated SEC rules, that demand proactive oversight, timely incident disclosure, and direct accountability from management bodies. Beyond mere legal compliance, boards are increasingly targeted by activist investors leveraging governance lapses as a catalyst for change. To navigate these challenges, the article advises directors to cultivate cyber expertise, rigorously oversee internal controls, and integrate AI governance into their broader strategic frameworks. Ultimately, organizations must shift from a reactive posture to a proactive, enterprise-wide resilience strategy to protect shareholders and ensure long-term stability amidst rapid technological shifts, quantum computing risks, and escalating financial losses associated with cyber breaches. This requires not only monitoring vulnerabilities but also investing in talent and technical controls that can withstand the dual pressures of legal liability and operational disruption.

Biometric data sharing infrastructure matures as border control expectations evolve

The article outlines significant advancements and challenges in the global biometric landscape as of April 2026, emphasizing the maturation of data-sharing infrastructures and evolving border control expectations. A primary focus is the centralization of digital trust, exemplified by Apple’s mandatory age verification in the UK and EU, which shifts identity assurance to the device level. Meanwhile, international travel is being streamlined by ICAO’s updated Public Key Directory, allowing airports and airlines to authenticate documents remotely via passenger smartphones. NIST has further modernized these systems by transitioning biometric data exchange standards to fully machine-readable formats. Despite these technical leaps, practical hurdles remain, such as recurring delays in implementing Entry/Exit System checks at major UK-EU borders. On a national level, digital identity programs are expanding, with Niger launching biometric cards for regional integration and Spain granting full legal status to its digital identity. Conversely, market pressures led to the closure of Australia Post's Digital iD. Finally, the rise of AI agents has sparked a debate over "proof of personhood," highlighting the urgent need for robust digital frameworks to differentiate between human users and automated entities within an increasingly complex and interconnected global digital ecosystem.

Learning to manage the cloud without losing control

In this opinion piece, Vera Shulman, CEO of ProfiSea, addresses the critical challenges organizations face as they integrate generative artificial intelligence into their operations, specifically highlighting the surge in cloud spending. Shulman argues that while product teams focus on model capabilities, leadership often overlooks the strategic blind spot of runaway infrastructure costs. To prevent the estimated thirty percent of generative AI projects from failing after the proof-of-concept stage due to financial instability, she proposes a framework built on three fundamental pillars of cloud governance. First, she emphasizes token economics, suggesting that businesses must meticulously monitor token consumption and utilize retrieval-augmented generation to minimize data transfer costs. Second, Shulman advocates for a robust multi-cloud strategy to avoid vendor lock-in and provide the flexibility to route tasks to the most cost-efficient models. Finally, she stresses the necessity of automated financial management tools that can allocate resources in real time and detect usage anomalies. Ultimately, the transition of artificial intelligence from a significant budget burden into a powerful strategic asset depends on intentionally designing cloud infrastructure around efficiency and governance. Decision-makers must shift their focus from mere model performance to ensuring their underlying systems are truly prepared for AI-centric business operations.

Multi-Agent AI Patterns for Developers: Pick the Right Pattern for the Right Problem

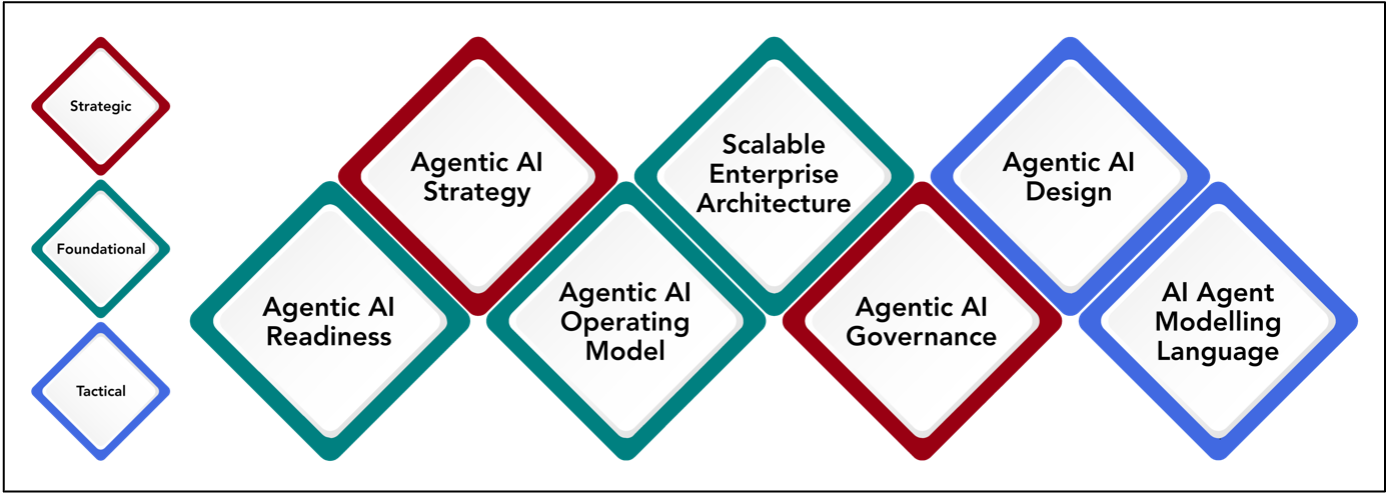

In "Multi-agent AI Patterns for Developers," the author examines the transition from basic prompt engineering to sophisticated agentic architectures designed for production-level reliability. The article outlines several fundamental patterns, starting with the Router, which uses a classifier to direct queries to specialized agents, and the Sequential Chain, which is ideal for linear, multi-step processes. It emphasizes the Orchestrator-Workers model for complex tasks requiring dynamic planning and delegation, alongside the Parallel/Voting pattern for achieving consensus across multiple agent outputs. A significant portion of the text is dedicated to the Evaluator-Optimizer loop, a pattern where one agent refines work based on the critical feedback of another to ensure high-quality results. By selecting patterns based on specific constraints, such as latency, cost, and reasoning depth, developers can move beyond monolithic LLM calls toward systems that handle error recovery and specialized tool usage effectively. Ultimately, the guide suggests that the future of AI development lies in these modular, collaborative frameworks, which provide the transparency and control necessary to execute intricate business logic. This strategic selection of architectures bridges the gap between experimental prototypes and robust, autonomous AI agents capable of operating within complex real-world environments.
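
Two of the patterns named above, the Router and the Evaluator-Optimizer loop, can be sketched in a few lines of Python. This is an illustrative sketch, not code from the article: the agents are plain functions standing in for model calls, and every name here (`classify`, `route`, `evaluator_optimizer`, the toy agents) is hypothetical.

```python
from typing import Callable, Dict

Agent = Callable[[str], str]


def billing_agent(query: str) -> str:
    return f"[billing] handling: {query}"


def tech_agent(query: str) -> str:
    return f"[tech] handling: {query}"


AGENTS: Dict[str, Agent] = {"billing": billing_agent, "tech": tech_agent}


def classify(query: str) -> str:
    """Toy keyword classifier; a real router might call a model here."""
    return "billing" if "invoice" in query.lower() else "tech"


def route(query: str, agents: Dict[str, Agent]) -> str:
    """Router pattern: classify once, then delegate to one specialist."""
    return agents[classify(query)](query)


def toy_generate(task: str, feedback: str) -> str:
    """Stand-in generator: revises the draft only when given feedback."""
    return task if not feedback else f"{task} (with sources)"


def toy_evaluate(draft: str) -> str:
    """Stand-in evaluator: returns feedback, or "" when satisfied."""
    return "" if "sources" in draft else "add sources"


def evaluator_optimizer(task, generate, evaluate, max_rounds=3):
    """Evaluator-Optimizer loop: revise until the evaluator has no
    feedback, or until a bounded number of rounds has been used."""
    draft = generate(task, "")
    for _ in range(max_rounds):
        feedback = evaluate(draft)
        if not feedback:
            break
        draft = generate(task, feedback)
    return draft
```

The `max_rounds` bound is the design choice worth noting: without it, a generator and evaluator that never agree would loop indefinitely, which is exactly the latency and cost trade-off the pattern selection is meant to manage.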

How digital twins are redefining visibility and control in supply chain and logistics

Digital twins are revolutionizing supply chain and logistics by bridging the gap between physical operations and digital data. This technology creates a granular, real-time mirror of reality, enabling businesses to move beyond simple tracking to deep operational intelligence. By integrating warehouse and transport management systems with IoT sensors, digital twins provide a unified data backbone that identifies process risks and SLA breaches before they impact customers. This transformation shifts supply chains from reactive systems to intelligent, anticipatory ones that offer predictive insights and prescriptive models. The practical benefits include accelerated decision-making, optimized resource utilization, and significant cost reductions through smarter labor planning and routing. Furthermore, digital twins enhance service quality by providing early warning signals for potential delivery failures. However, successful implementation demands rigorous data governance and automated anomaly detection to ensure accuracy. As these models evolve, they progress toward autonomous orchestration, recommending strategic actions like inventory rebalancing and order reallocation. Ultimately, treating the digital twin as a strategic asset allows companies to achieve unprecedented precision and reliability. By fostering a shared operational truth across departments, organizations can compress planning cycles and set new benchmarks for excellence in an increasingly competitive market where customer experience is paramount.
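
As a rough illustration of the early-warning idea, the Python sketch below projects each shipment's arrival from its latest state and flags those likely to miss their SLA. The `ShipmentState` fields, the `projected_breaches` function, and the half-hour buffer are assumptions made for this example and are not taken from the article.

```python
from dataclasses import dataclass


@dataclass
class ShipmentState:
    """Hypothetical snapshot of one shipment in the twin."""
    shipment_id: str
    km_remaining: float
    avg_speed_kmh: float
    hours_until_sla: float


def projected_breaches(states, buffer_hours=0.5):
    """Return IDs of shipments whose projected arrival misses the SLA."""
    at_risk = []
    for s in states:
        if s.avg_speed_kmh <= 0:
            # Stalled vehicle: no arrival time can be projected.
            at_risk.append(s.shipment_id)
            continue
        eta_hours = s.km_remaining / s.avg_speed_kmh
        # Flag early by adding a safety buffer to the projected ETA.
        if eta_hours + buffer_hours > s.hours_until_sla:
            at_risk.append(s.shipment_id)
    return at_risk
```

Run against a feed of sensor updates, a check like this turns tracking data into a warning issued before the delivery window closes rather than a report after it has been missed.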

Without controls, an AI agent can cost more than an employee

The article "Without controls, an AI agent can cost more than an employee" explores the financial risks of deploying AI agents without rigorous oversight. Industry experts, including Jason Calacanis and Chamath Palihapitiya, note that uncontrolled API usage, particularly for complex tasks like coding, can drive agent costs to $300 daily, effectively rivaling a $100,000 annual salary. This "sloppy" deployment often occurs when organizations use frontier models for broad, unmonitored tasks, leading to excessive token consumption that may only replace a fraction of human labor. Furthermore, experts emphasize that while agents can ship high-impact features, blindly trusting them with code leads to significant quality and security concerns. To mitigate these expenses, IT leaders must transition from treating AI as a fixed utility to managing it as a variable-cost resource. Key strategies include implementing hard spending caps, assigning unique API keys to teams, and utilizing smaller, fine-tuned models for specific, bounded tasks. While AI agents offer significant productivity gains, their economic viability depends on benchmarking inference costs against actual labor value. Ultimately, successful integration requires clear governance, where agents are treated with the same accountability and budgetary controls as any other department asset to ensure they remain a cost-effective tool.
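
The arithmetic behind the salary comparison, and the idea of a hard spending cap, can be sketched in Python. At $300 a day, an agent costs about $109,500 over 365 days, or $78,000 over 260 working days; both day counts are assumptions, since the article does not say which it uses. The `SpendingCap` class and the per-token prices in the usage lines are likewise hypothetical and not tied to any provider.

```python
def annualized_cost(daily_cost: float, days_per_year: int = 365) -> float:
    """Scale a daily agent cost to a yearly figure."""
    return daily_cost * days_per_year


class BudgetExceeded(Exception):
    """Raised when a call would push spend past the daily cap."""


class SpendingCap:
    """Hard daily cap for one team or API key (illustrative only)."""

    def __init__(self, daily_cap_usd: float):
        self.daily_cap_usd = daily_cap_usd
        self.spent_usd = 0.0

    def charge(self, input_tokens, output_tokens, usd_per_1k_in, usd_per_1k_out):
        """Record the cost of one call, refusing it if the cap would be exceeded."""
        cost = (input_tokens / 1000 * usd_per_1k_in
                + output_tokens / 1000 * usd_per_1k_out)
        if self.spent_usd + cost > self.daily_cap_usd:
            raise BudgetExceeded(
                f"call costing ${cost:.2f} would exceed cap of ${self.daily_cap_usd:.2f}"
            )
        self.spent_usd += cost
        return cost


# Usage with made-up prices: the first call fits, a second would not.
cap = SpendingCap(daily_cap_usd=1.0)
cap.charge(1000, 1000, usd_per_1k_in=0.25, usd_per_1k_out=0.5)
```

Checking the cap before the call is made, rather than reconciling spend afterwards, is what makes it a hard limit in the sense the article describes.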
