3 Steps To Include AI In Your Future Strategic Plans

AI is complex and multifaceted, so adopting it is not as simple as replacing legacy systems with new technology. Leaders need to dig deeper to uncover barriers and opportunities. This can involve inviting external experts to discuss AI's benefits and challenges, hosting workshops where team members can explore different case studies, or creating internal discussion groups focused on various aspects of AI technology and potential barriers to adoption. ... A strong strategic plan should clearly link prospective investments to the organization's purpose and mission. For example, if customer centricity is central to the mission, any investment in new technology should directly connect to improving customer outcomes. ... A strategic plan should not only outline planned AI initiatives but also provide a clear roadmap for implementation. Given that AI is still evolving, it's crucial not to create that roadmap in isolation from ever-changing business challenges, market dynamics, or technological advancements. ... In this context, an AI strategy roadmap should be emergent: grounded in key strategic intentions, yet flexible enough to adapt to unforeseen events or black swan occurrences that require rethinking and adjustment.

Can Pure Scrum Actually Work?

“Pure Scrum,” as described in the Scrum Guide, is an idiosyncratic framework that helps create customer value in a complex environment. However, five main issues challenge its general corporate application:

- Pure Scrum focuses on delivery: How can we avoid running in the wrong direction by building things that do not solve our customers’ problems? Pure Scrum ignores product discovery in particular and product management in general. If you think of the Double Diamond, to use a popular picture, Scrum is focused on the right side, the develop-and-deliver diamond.
- Pure Scrum is designed around one team focused on supporting one product or service.
- Pure Scrum does not address portfolio management. It is not designed to align and manage multiple product initiatives or projects to achieve strategic business objectives.
- Pure Scrum is based on far-reaching team autonomy: The Product Owner decides what to build, the Developers decide how to build it, and the Scrum team self-manages.

... At its core, pure Scrum is less a project management framework and more a reflection of an organization’s fundamental approach to creating value. It requires a profound shift from seeing work as a series of prescribed steps to viewing it as a continuous journey of discovery and adaptation.

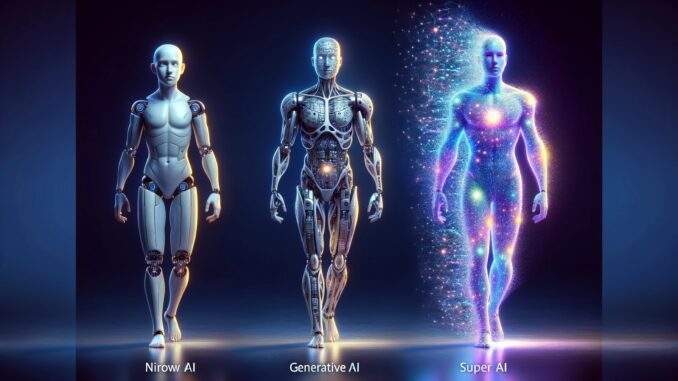

The Rise of Agentic AI: How Hyper-Automation is Reshaping Cybersecurity and the Workforce

As AI advances, concerns about job displacement grow louder. For years, organizations have reassured employees that AI will “enhance, not replace” human roles. Smith offered a more nuanced perspective: “AI will replace tasks, not people—at least in the near term. Human oversight remains critical because we still don’t fully understand AI behavior.” In cybersecurity, AI acts as a force multiplier, streamlining tedious tasks like data analysis and incident documentation while enabling humans to focus on strategic decisions. This collaboration allows professionals to do more with less, amplifying productivity without eliminating the need for human expertise. However, Smith acknowledged long-term challenges. ... The rise of agentic AI marks a transformative moment for cybersecurity and the workforce. As organizations move beyond static workflows and embrace dynamic, autonomous systems, they gain the ability to respond to threats faster and more efficiently than ever before. However, this evolution demands a strategic approach, one that balances automation with human oversight, strengthens defenses against AI-driven attacks, and prepares for the societal shifts AI will bring.
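To make the balance between automation and human oversight concrete, here is a minimal sketch of a human-in-the-loop gate: an automated agent proposes response actions, low-risk ones run automatically, and anything at or above a risk threshold is queued for an analyst to approve. The `ProposedAction` and `OversightGate` names, the 0.0 to 1.0 risk score, and the 0.5 threshold are illustrative assumptions, not something Smith or any particular product describes.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedAction:
    # Hypothetical action proposed by an automated (agentic) system.
    name: str
    risk: float  # assumed scale: 0.0 (harmless) to 1.0 (highly disruptive)


@dataclass
class OversightGate:
    # Actions at or above this illustrative threshold need human approval.
    threshold: float = 0.5
    executed: list = field(default_factory=list)
    pending_review: list = field(default_factory=list)

    def submit(self, action: ProposedAction) -> str:
        """Auto-run low-risk actions; escalate the rest to a human queue."""
        if action.risk < self.threshold:
            self.executed.append(action.name)
            return "executed"
        self.pending_review.append(action.name)
        return "escalated"

    def approve(self, name: str) -> None:
        """Record a human decision to run an escalated action."""
        self.pending_review.remove(name)
        self.executed.append(name)


gate = OversightGate()
print(gate.submit(ProposedAction("summarize incident log", 0.1)))  # executed
print(gate.submit(ProposedAction("isolate production host", 0.8)))  # escalated
gate.approve("isolate production host")
print(gate.executed)
```

The design choice is deliberately conservative: the agent handles the tedious, reversible work on its own, while disruptive actions wait for a person, which matches the "replace tasks, not people" framing.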

If ChatGPT produces AI-generated code for your app, who does it really belong to?

From a contractual point of view, Santalesa contends that most companies producing AI-generated code will, "as with all of their other IP, deem their provided materials -- including AI-generated code -- as their property." OpenAI (the company behind ChatGPT) does not claim ownership of generated content. According to its terms of service, "OpenAI hereby assigns to you all its right, title, and interest in and to Output." Clearly, though, if you're creating an application that uses code written by an AI, you'll need to carefully investigate who owns (or claims to own) what. For a view of code ownership outside the US, ZDNET turned to Robert Piasentin, a Vancouver-based partner in the Technology Group at McMillan LLP, a Canadian business law firm. He says that ownership, as it pertains to AI-generated works, is still an "unsettled area of the law." ... Piasentin says there may already be some UK case law precedent, based not on AI but on video game litigation. A case before the High Court (roughly analogous to a US federal district court) determined that images produced in a video game were the property of the game developer, not the player -- even though the player manipulated the game to produce a unique arrangement of game assets on the screen.

Supply Chain Risk Mitigation Must Be a Priority in 2025


Implementing impactful supply chain protections is far easier said than done, due to the complexity, scale, and integration of modern supply chain ecosystems. While there isn't a silver bullet for eradicating threats entirely, focusing on effective supply chain risk management principles in 2025 is a critical place to start. It will require an optimal balance of rigorous supplier validation, purposeful data exposure, and meticulous preparation. ... As supply chain attacks accelerate, organizations must operate under the assumption that a breach isn't just possible but probable. This "assumption of breach" mindset will drive more meticulous preparation through comprehensive supply chain incident response and risk mitigation. Preparation should begin with developing, and regularly updating, agile incident response processes that specifically address third-party and supply chain risks. To be effective, these processes need to be well documented and frequently practiced through realistic simulations and tabletop exercises. Such drills help identify gaps in the response strategy and ensure that all team members understand their roles and responsibilities during a crisis.
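Supplier validation covers many things (questionnaires, audits, contract terms). For software suppliers, one narrow piece that can be automated is rejecting any delivered artifact whose digest does not match a value pinned when the release was vetted. A minimal sketch, assuming a hypothetical `PINNED` dictionary of SHA-256 digests; real pipelines typically take these from lock files or signed manifests.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the hex SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(name: str, data: bytes, pinned: dict) -> bool:
    """Accept an artifact only if it is pinned and its digest matches."""
    expected = pinned.get(name)
    if expected is None:
        # Unknown artifacts are rejected rather than trusted by default,
        # in keeping with an "assumption of breach" posture.
        return False
    return sha256_hex(data) == expected


# Hypothetical pin list, recorded when the supplier release was vetted.
release = b"vendor-library-1.2.3 contents"
PINNED = {"vendor-lib-1.2.3.tar.gz": sha256_hex(release)}

print(verify_artifact("vendor-lib-1.2.3.tar.gz", release, PINNED))         # True
print(verify_artifact("vendor-lib-1.2.3.tar.gz", release + b"!", PINNED))  # False
print(verify_artifact("unknown.tar.gz", release, PINNED))                  # False
```

A tampered or unexpected artifact fails closed, which is the behavior tabletop exercises can then rehearse responding to.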

The End of Bureaucracy — How Leadership Must Evolve in the Age of Artificial Intelligence

AI doesn't just optimize; it transforms. It flattens hierarchies, demands transparency and dismantles traditional power structures. For managers who thrive on gatekeeping, AI represents a fundamental threat, eliminating barriers they've spent careers building. Consider this: AI thrives on efficiency, speed and clarity. Tasks that once consumed hours of human effort, like vetting vendor contracts or managing customer service inquiries, are now handled instantly by AI systems. Employees can experiment with bold ideas without wading through endless committee approvals. But the true power of AI lies in decentralizing decision-making. By analyzing vast datasets, AI equips frontline employees with actionable insights that previously required executive oversight. This creates organizations that are faster, more agile and less dependent on gatekeepers. ... In an AI-first world, hierarchies will begin to collapse as real-time data eliminates the need for multiple layers of oversight, enabling faster and more efficient decision-making. At the same time, workflows will be reimagined as leaders take on the critical task of redesigning processes to seamlessly integrate AI, ensuring organizations can adapt quickly and effectively.

GAO report says DHS, other agencies need to up their game in AI risk assessment

The GAO said it is “recommending that DHS act quickly to update its guidance and template for AI risk assessments to address the remaining gaps identified in this report.” DHS, it said, “agreed with our recommendation and stated it plans to provide agencies with additional guidance that addresses gaps in the report including identifying potential risks and evaluating the level of risk.” ... AI, he said, “is being pushed out to businesses and consumers by organizations that profit from doing so, and assessing and addressing the potential harm it may cause has until recently been an afterthought. We are now seeing more focus on these potential negative effects, but efforts to contain them, let alone prevent them, will always be far behind the steamroller of new innovations in the AI realm.” Thomas Randall, research lead at Info-Tech Research Group, said, “it is interesting that the DHS had no assessments that evaluated the level of risk for AI use and implementation, but had largely identified mitigation strategies. What this may mean is the DHS is taking a precautionary approach in the time it was given to complete this assessment.” Some risks, he said, “may be identified as significant enough to warrant mitigation regardless of precise quantification of that risk.”
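"Evaluating the level of risk" is often done with a simple likelihood-times-impact score. The sketch below is not the DHS template; the 1 to 5 scales, the band cut-offs, and the example risks are assumptions for illustration. It also encodes the precautionary rule Randall describes: a risk with severe impact is flagged even before its likelihood has been quantified.

```python
from typing import Optional


def risk_level(likelihood: Optional[int], impact: int) -> str:
    """Classify a risk on assumed 1-5 likelihood and impact scales."""
    if not 1 <= impact <= 5:
        raise ValueError("impact must be between 1 and 5")
    if likelihood is None:
        # Likelihood not yet quantified: mitigate severe-impact risks anyway.
        return "high" if impact >= 4 else "unrated"
    if not 1 <= likelihood <= 5:
        raise ValueError("likelihood must be between 1 and 5")
    score = likelihood * impact  # ranges from 1 to 25
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"


# Hypothetical AI-use risks: (likelihood, impact)
risks = {
    "biased eligibility decisions": (3, 5),
    "chatbot gives outdated policy info": (4, 2),
    "model output leaks PII": (None, 5),
    "minor UI mislabeling": (1, 1),
}
for name, (lik, imp) in risks.items():
    print(f"{name}: {risk_level(lik, imp)}")
```

Separating "unrated" from "low" keeps unquantified risks visible instead of letting them silently default to the lowest band.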

How CI/CD Helps Minimize Technical Debt in Software Projects

One of the foundational principles of CI/CD is the enforcement of automated testing. Automated tests, such as unit tests, integration tests, and end-to-end tests, ensure that code changes do not break existing functionality. By integrating testing into the CI pipeline, developers are alerted to issues immediately after they commit code. ... CI/CD pipelines facilitate incremental and iterative development by encouraging small, frequent code commits. Large, monolithic changes often introduce complexity and technical debt because they are harder to test, debug, and review effectively. ... Technical debt often arises from manual processes that are error-prone and time-consuming. CI/CD eliminates many of these inefficiencies by automating repetitive tasks, such as building, testing, and deploying applications. Automation ensures that these steps are performed consistently and accurately, reducing the risk of human error. ... Code reviews are a critical component of maintaining high-quality software. CI/CD tools enhance the code review process by providing automated feedback on every commit. This feedback loop fosters a culture of accountability and continuous improvement among developers.
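A minimal sketch of the automated-testing gate, assuming a Python project: the hypothetical `apply_discount` function has unit tests, and `run_ci_gate` returns a non-zero exit code when any test fails, which is the signal most CI systems use to fail a build and block the change. The function, tests, and gate names are illustrative only.

```python
import sys
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Hypothetical business function under test."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTests(unittest.TestCase):
    def test_regular_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_keeps_price(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_rejects_invalid_percent(self):
        with self.assertRaises(ValueError):
            apply_discount(10.0, 150)


def run_ci_gate() -> int:
    """Run the suite and return a process exit code (0 means all passed)."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTests)
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    return 0 if result.wasSuccessful() else 1


def main() -> None:
    # In a CI job, a non-zero exit code fails the build and blocks the merge.
    sys.exit(run_ci_gate())
```

A pipeline step would call `main()` (for example from an `if __name__ == "__main__":` guard) on every commit, so a regression surfaces minutes after it is pushed rather than accumulating as debt.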

Cost-conscious repatriation strategies

First, this is not a pushback on cloud technology as a concept; cloud works and has worked for the past 15 years. This repatriation trend highlights concerns about the unexpectedly high costs of cloud services, especially when enterprises feel they were promised lower IT expenses during the earlier “cloud-only” revolutions. Leaders must adopt a more strategic perspective on their cloud architecture. It’s no longer just about lifting and shifting workloads into the cloud; it’s about effectively tailoring applications to leverage cloud-native capabilities, a lesson GEICO learned too late. A holistic approach to data management and technology strategies that aligns with an organization’s unique needs is the path to success and lower bills.

Organizations are now exploring hybrid environments that blend public cloud capabilities with private infrastructure. A dual approach, which is nothing new, allows for greater data control, reduced storage and processing costs, and improved service reliability. Weekly noted that there are ways to manage capital expenditures in an operational expense model through on-premises solutions. On-prem systems tend to be more predictable and cost-effective over time.
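The cost argument for repatriation comes down to arithmetic: an up-front capital outlay plus lower running costs versus a recurring cloud bill. This sketch finds the first month in which cumulative on-premises spend is no higher than cumulative cloud spend. All figures are hypothetical placeholders, not numbers from GEICO or Weekly, and a real comparison would also need staffing, depreciation, egress fees, and hardware refresh cycles.

```python
import math
from typing import Optional


def break_even_month(cloud_monthly: float, onprem_capex: float,
                     onprem_monthly: float) -> Optional[int]:
    """First month where cumulative on-prem cost <= cumulative cloud cost.

    Cumulative costs after m months:
        cloud  = cloud_monthly * m
        onprem = onprem_capex + onprem_monthly * m
    Returns None if on-prem running costs never undercut the cloud bill.
    """
    monthly_saving = cloud_monthly - onprem_monthly
    if monthly_saving <= 0:
        return None
    return math.ceil(onprem_capex / monthly_saving)


# Hypothetical figures, same currency, per month
month = break_even_month(cloud_monthly=50_000, onprem_capex=900_000,
                         onprem_monthly=20_000)
print(month)  # 30
```

With these placeholder numbers, the 900,000 outlay is recovered at 30,000 per month of savings, so both cumulative totals reach 1,500,000 in month 30; any longer planned lifetime favors on-prem, any shorter one favors cloud.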

Cyber Resilience: Adapting to Threats in the Cloud Era


Use cloud-native security solutions that offer automated threat detection, incident response, and monitoring. These technologies should be flexible enough to adjust to changes in the cloud environment and defend against new risks as they arise. ... Effective cyber resilience plans enable businesses to recover quickly from emergencies by reducing downtime and maintaining continuous service delivery. Businesses that put flexibility first can weather emergencies with less disruption, which helps them keep the confidence and trust of their clients. Cyber resilience strongly emphasizes flexibility, enabling companies to address new risks in the ever-evolving digital environment. Businesses can lower financial losses and safeguard their reputation by concentrating on data protection and breach remediation. Finding and fixing common configuration mistakes in cloud systems that could lead to security issues and data breaches calls for Cloud Security Posture Management (CSPM) tools. ... Because criminals frequently exploit these configuration errors to cause data breaches and security incidents, it is essential to identify them. By using CSPM solutions, organizations can monitor their cloud environments and ensure that settings follow security best practices and regulations.
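CSPM products differ widely in scope, but their core loop is comparing the actual configuration of each cloud resource against a set of rules. A minimal sketch of that idea follows; the resource inventory format, the resource types, and the three rules are hypothetical and far simpler than any real CSPM tool or cloud provider API.

```python
from typing import Callable, Dict, List, Tuple

Resource = Dict[str, object]

# Each rule: (rule id, predicate that returns True when a resource is misconfigured)
RULES: List[Tuple[str, Callable[[Resource], bool]]] = [
    ("storage-public-access",
     lambda r: r.get("type") == "bucket" and r.get("public") is True),
    ("storage-unencrypted",
     lambda r: r.get("type") == "bucket" and not r.get("encrypted", False)),
    ("ssh-open-to-internet",
     lambda r: r.get("type") == "firewall_rule"
     and r.get("port") == 22 and r.get("source") == "0.0.0.0/0"),
]


def scan(inventory: List[Resource]) -> List[Tuple[str, str]]:
    """Return (resource id, rule id) for every rule a resource violates."""
    findings = []
    for resource in inventory:
        for rule_id, is_violation in RULES:
            if is_violation(resource):
                findings.append((str(resource["id"]), rule_id))
    return findings


# Hypothetical snapshot of a cloud environment
inventory = [
    {"id": "logs-bucket", "type": "bucket", "public": False, "encrypted": True},
    {"id": "media-bucket", "type": "bucket", "public": True, "encrypted": False},
    {"id": "fw-admin", "type": "firewall_rule", "port": 22, "source": "0.0.0.0/0"},
]
for resource_id, rule_id in scan(inventory):
    print(f"{resource_id}: {rule_id}")
```

Running the scan on a schedule, rather than once, is what turns it into the continuous monitoring described above, since configurations drift as teams change them.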

Quote for the day:

"Listen with curiosity, speak with honesty, act with integrity." -- Roy T. Bennett
