6 best practices to develop a corporate use policy for generative AI

The first step in crafting your corporate use policy is to consider the scope. For

example, will this cover all forms of AI or just generative AI? Focusing on

generative AI may be a useful approach since it addresses large language models

(LLMs), including ChatGPT, without having to boil the ocean across the AI

universe. ... Involve all relevant stakeholders across your organization – This

may include HR, legal, sales, marketing, business development, operations, and

IT. Each group may see different use cases and different ramifications of how

the content may be used or misused. Involving IT and innovation groups can help

show that the policy isn’t just a clamp-down from a risk management perspective,

but a balanced set of recommendations that seek to maximize productive use and

business benefit while at the same time managing business risk. Consider how

generative AI is used now and may be used in the future – Working with all

stakeholders, itemize all your internal and external use cases that are being

applied today, and those envisioned for the future.

There Is No Resilience without Chaos

Chaos engineering has emerged as an increasingly essential process to maintain

reliability for applications, not only in cloud native but in any IT

environment. Unlike pre-production testing, chaos engineering involves

determining when and how software might break in production by testing it in a

non-production scenario. In this way, chaos engineering becomes an essential way

to prevent outages long before they happen. ... Chaos engineering, when done

properly, requires observability. Problems and issues that can cause outages and

degrade performance can be detected well ahead of time as bugs, poor

performance, security vulnerabilities, etc. become manifest during a proper

chaos engineering experiment. Once these bugs and kinks that can potentially

lead to outages if left unheeded are detected and resolved, true continued

resiliency in DevOps can be achieved. In the event of a failure, the SRE or

operations person seeking the source of error is often overloaded with

information.
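
To make the idea of a chaos experiment concrete, here is a minimal sketch in Python. It is an illustration only, not taken from the article: `FlakyDependency`, `fetch_price`, `get_price_with_fallback`, and `run_experiment` are hypothetical names, and the "service" is a plain function. The sketch injects failures into a dependency at a chosen rate and counts outcomes, which stands in for the observability the excerpt says a proper experiment requires.

```python
import random


class FlakyDependency:
    """Wraps a callable and makes a configurable fraction of calls fail."""

    def __init__(self, func, failure_rate, seed=0):
        self.func = func
        self.failure_rate = failure_rate
        self.rng = random.Random(seed)  # seeded so the experiment is repeatable

    def __call__(self, *args, **kwargs):
        if self.rng.random() < self.failure_rate:
            raise ConnectionError("injected fault")
        return self.func(*args, **kwargs)


def fetch_price(item):
    # Hypothetical downstream service call.
    return {"widget": 10}[item]


def get_price_with_fallback(dependency, item, metrics):
    """Code under test: degrades to None on failure and records the outcome."""
    try:
        value = dependency(item)
        metrics["ok"] += 1
        return value
    except ConnectionError:
        metrics["fallback"] += 1
        return None


def run_experiment(failure_rate, calls=1000, seed=42):
    """Run the code under test against a faulty dependency and return the counts."""
    metrics = {"ok": 0, "fallback": 0, "unhandled": 0}
    dependency = FlakyDependency(fetch_price, failure_rate, seed)
    for _ in range(calls):
        try:
            get_price_with_fallback(dependency, "widget", metrics)
        except Exception:
            # Anything that escapes the fallback path is a resilience gap.
            metrics["unhandled"] += 1
    return metrics


if __name__ == "__main__":
    print(run_experiment(failure_rate=0.3))
```

A non-zero `unhandled` count would be the kind of finding such an experiment exists to surface before it causes an outage.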

Data Governance: Simple and Practical

Purpose-driven data governance programs narrow their focus to deliver urgent

business needs and defer much of the rest, with a couple of caveats. First, data

governance programs are doomed to fail without senior executive buy-in and

continuous engagement of key stakeholders. Without them, no purpose can be

fulfilled. Second, data governance programs must identify and gain commitment

from relevant (but perhaps not all) data owners and stewards, but that doesn’t

necessarily mean roles and responsibilities need to be fully fleshed out right

away. Identify the primary purpose then focus on it – sounds like a simple

formula, but it’s not obvious. Many data governance leaders are quick to

define and pursue their three practices or five pillars or seven elements, and

why shouldn’t they? They need those capabilities, but wanting it all comes at

the sacrifice of getting it now. Generate business value with your primary

purpose before expanding. ... An insurer explained to me their dashboards

weren’t always refreshed, and when they were, wide fluctuations in values made

it impossible to make informed decisions.

What is platform engineering? Evolving devops

The developer portal is the main mechanism and expression of platform

engineering. Its main purpose is to gather together the developer's tooling,

documentation, and interactivity in one place. It is a kind of front end to

the organization's developer infrastructure. Developer portals (aka internal

developer platforms) have evolved out of several needs and trends. This primer

on developer portals delineates these tools into three types: universal

service catalog, API catalog tied to API gateway, and microservices catalog.

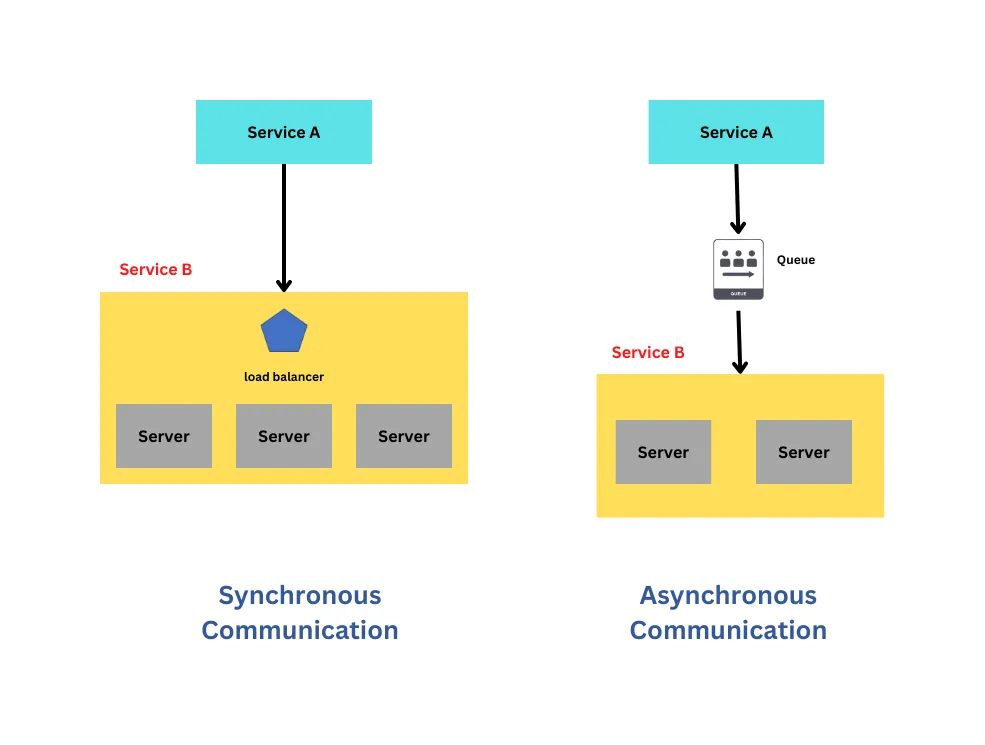

APIs figure large in platform engineering because the uptake of microservices

architecture has caused a great deal of increased complexity for modern

software teams. Orchestrating microservices in a large organization can be

very challenging. Just understanding what microservices are involved in a

given use case can be difficult. A developer portal offers a unified view into

the overall web of microservices. Another aspect of the developer portal is

offering a standard framework to combine the tools used by the

organization.
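
As a rough illustration of the "unified view" described above, a service catalog can be treated as a dependency graph and walked to list every microservice behind a given use case. The sketch below is an assumption-laden toy, not any particular portal's data model: the `CATALOG` contents, service names, team names, and the functions `services_involved` and `owners_involved` are all hypothetical.

```python
from collections import deque

# Hypothetical catalog: each entry records an owner and the services it calls directly.
CATALOG = {
    "checkout": {"owner": "payments-team", "depends_on": ["cart", "billing"]},
    "cart": {"owner": "storefront-team", "depends_on": ["inventory"]},
    "billing": {"owner": "payments-team", "depends_on": ["ledger"]},
    "inventory": {"owner": "supply-team", "depends_on": []},
    "ledger": {"owner": "finance-team", "depends_on": []},
    "search": {"owner": "storefront-team", "depends_on": ["inventory"]},
}


def services_involved(catalog, entry_point):
    """Return every service reachable from entry_point, in breadth-first order."""
    seen = {entry_point}
    queue = deque([entry_point])
    order = []
    while queue:
        name = queue.popleft()
        order.append(name)
        for dep in catalog[name]["depends_on"]:
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return order


def owners_involved(catalog, entry_point):
    """Return the sorted set of teams that own any service in the call chain."""
    return sorted({catalog[s]["owner"] for s in services_involved(catalog, entry_point)})


if __name__ == "__main__":
    print(services_involved(CATALOG, "checkout"))
    print(owners_involved(CATALOG, "checkout"))
```

Keeping ownership next to the dependency data is one design choice among many; it lets the same traversal answer both "what is involved?" and "who do I talk to?".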

EU privacy regulators to create task force to investigate ChatGPT

In a statement posted on its website, the EDPB said the task force was

intended to “foster cooperation and to exchange information on possible

enforcement actions conducted by data protection authorities.” Last month,

Italy’s data privacy regulator issued a temporary ban against ChatGPT over

alleged privacy violations relating to the chatbot’s collection and storage of

personal data. Italy's guarantor for the protection of personal data ordered

the temporary halt on the processing of Italian users’ data by ChatGPT’s

parent firm OpenAI, unless it complied with EU privacy laws. In order to have

the service reinstated, the Italian guarantor outlined a list of data

protection requirements that OpenAI must comply with, including increased

transparency into how ChatGPT processes data, the right for nonusers to opt

out of having their data processed, and an age-gating system for signing up to

the service. In the wake of the ban, OpenAI CEO Sam Altman tweeted: “We of

course defer to the Italian government and have ceased offering ChatGPT in

Italy (though we think we are following all privacy laws).”

The mechanics of entrepreneurship

Lidow codifies this innovative shove, arguing that entrepreneurs invent and

create enduring change in one of three ways: by scaling supply, scaling

demand, or scaling simplicity. The first category includes those who scaled up

their supply by devising an efficient system and then repeating it. In the

late 1700s, the enterprising coin-maker Matthew Boulton, for example,

leveraged his superior knowledge of metalworking to create a new process for

producing coins quickly and uniformly—spawning countless societal changes.

This category also includes the swarm of entrepreneurs in the early- to mid-1800s who

conceived the modern railway. Titans of the second category, scaling demand,

include cultivators of desire like Wedgwood, Selfridge, and the American PR

pioneer Edward Bernays, who coined the phrase “public relations” and created

the industry. Through carefully cultivated propaganda campaigns, Bernays

convinced wide swaths of folks in the US to support the country’s efforts in

World War I and, later, stimulated broad demand for products such as bacon and

tobacco.

3 IT leadership mistakes to avoid

The first exercise we undertook was brainstorming and agreeing on a set of

operating principles, such as all ideas would be respected regardless of which

side they came from; facts and data—not emotion—would drive decision-making;

and creating a positive client experience would be our collective North Star.

These principles became our rallying cry and helped lead the team to a very

successful client conversion. Contrast that with leaders who set rigid rules

for their teams to follow. Leading by a set of hard rules will limit

innovation, hinder individual and team development, and create a constant need

to add or modify the rules as situations change. ... There is no such thing as

a perfect organizational structure—there’s only an array of alternatives, each

with its own respective strengths and weaknesses. The only way to make an

inherently flawed organizational structure work is to have individuals

collaborate under a common strategy, purpose, and shared goals. Great teams

also take individuals who are willing to sacrifice for the good of the

whole.

Data leader Tejasvi Addagada on the value of data governance

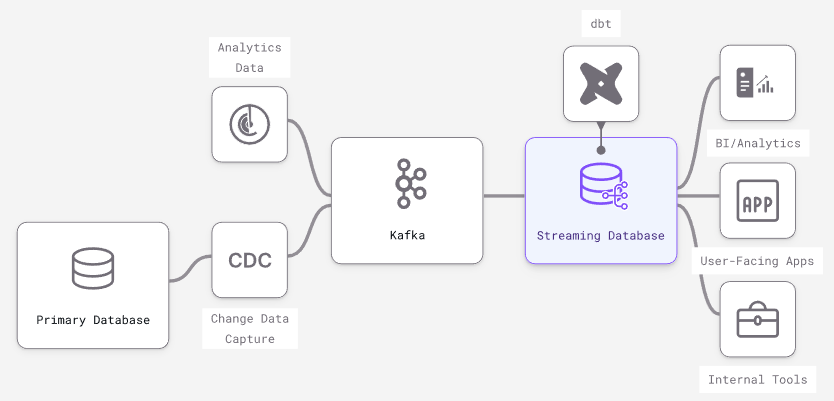

If data is siloed, it cannot be used for developing insights and products. For

an organization that is yet to invest in managing its data and thinks

centralization is costly or a bottleneck, a data mesh architecture offers a

decentralized approach at its core, with each domain team ingesting its

operational and analytical data and developing data products. ... From the

initial concept of corporate governance, IT governance has evolved into the

recent concept of data governance. Globally, the adoption of cloud services,

the evolution of modern data stacks, and improved data literacy have led to a

greater interest in governing data over the past years. Implementing data

governance is necessary to get sustainable value from data. A subfunction can

be formalized as an authorized provisioning service. It can support activities

that help ensure that a data element can be rightfully sourced from a

designated provisioning point. In addition, it can have the domain team

express their trust in certifying data as a system of record as well as

authorized to provision.

Google Cloud Unveils AI Tools to Streamline Preauthorizations

“The Claims Acceleration Suite’s Claims Data Activator uses Document AI,

Healthcare Natural Language API, and Healthcare API to convert this

unstructured data to structured data and establish data interoperability,”

Waldron says. “This speeds up the process, and significantly reduces

administrative burdens and costs, enabling experts to make faster, more

informed decisions that improve patient care.” A quick prior authorization

process is essential to speeding up care for a patient who may need

approval for transportation to an important medical procedure such as a

colonoscopy, according to Waldron. Patients also seek prior authorizations to

use a digital device as part of weight management or a care management plan

for conditions such as diabetes. A goal of Google’s Claims Data Activator is

to make healthcare prior authorization data more interoperable, or accessible

for all parties.

Data sharing between public and private is the answer to cybersecurity

Businesses and governments are already interlinked in their attempts to keep

ahead of cybercriminals. You only need to look at examples of the recent Royal

Mail attack, which saw the NCSC and the business working together to reduce

its impact. And across the Pond, Biden’s newly announced Cybersecurity

Strategy will focus on ensuring closer collaboration on cyber between

government and industry. Whilst all of this is moving in the right direction,

there’s more work to be done to create more intentional and

systematic cross-sharing and learning from one another. To kickstart the open

flow of knowledge in the industry, both public and private organizations could

sponsor a wider peer network for security experts that streamlines

intelligence from private to public or vice versa and offers support. Gartner

offers a Peer Connect network of business leaders that encourages the open

discussion of trends and ideas, critical to business decision-making.

Quote for the day:

"A leader's dynamic does not come from

special powers. It comes from a strong belief in a purpose and a willingness

to express that conviction." -- Kouzes & Posner