14 lessons CISOs learned in 2022

Ransomware attacks have increased in 2022, with companies and government

entities among the most prominent targets. Nvidia, Toyota, SpiceJet, Optus,

Medibank, the city of Palermo, Italy, and government agencies in Costa Rica,

Argentina, and the Dominican Republic were among the victims in 2022, a year in

which the lines between financially and politically motivated ransomware groups

continued to be blurred. A critical piece of any organization's defense strategy

should be employee awareness and training because "employees continue to be

targeted in threat actor strategies through phishing and other social

engineering means," says Gary Brickhouse, CISO at GuidePoint Security. ...

Organizations should also do more to keep up with vulnerabilities in both open-

and closed-source software. However, this is no easy task since thousands of

bugs surface yearly. Vulnerability management tools can help identify and

prioritize vulnerabilities found in operating systems and applications.

Grow your own CIO: Building leadership and succession plans

To ensure the long-term health of the company, tech chiefs must focus on

building up that middle tier of IT leaders, a reality many CIOs are only now

recognizing the need to address. “There are not enough people out there — you

have to develop your own people,” says Roberts, who estimates that only 10%

to 20% of companies are “being intentional about doing formal development

programs.” Mike Eichenwald, a senior client partner at Korn Ferry Consulting,

agrees that it’s important to elevate individuals from vertical leadership

roles within the pillars of infrastructure, engineering, product, and security

to enterprise leadership roles. With technology converging in all aspects of

the business, doing so will help organizations leverage the diversity of

experience those midlevel managers have under their belts, and their learning

curve and degree of risk will be minimized, Eichenwald says. “Unfortunately,

organizations miss an opportunity to cultivate that talent internally and

often find themselves needing to reach out to the [external] market to bring

it in,” he adds.

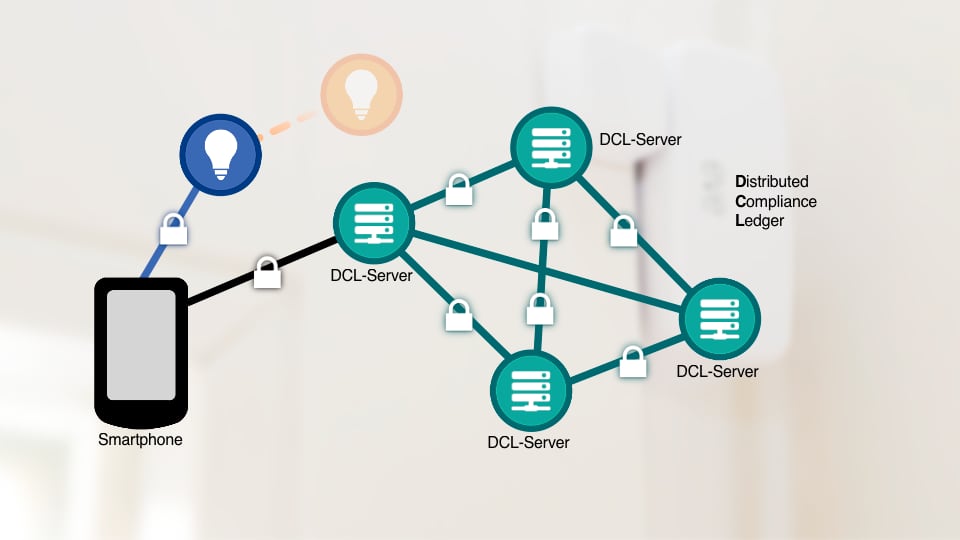

Open source security fought back in 2022

Anyone paying attention to open source for the past 20 years—or even the past

two—will not be surprised to see commercial interests start to flourish around

these popular open source technologies. As has become standard, that commercial

success is usually spelled c-l-o-u-d. Here's one prominent example: On December

8, 2022, Chainguard, the company whose founders cocreated Sigstore while at

Google, released Chainguard Enforce Signing, which enables customers to use

Sigstore-as-a-service to generate digital signatures for software artifacts

inside their own organization using their individual identities and one-time-use

keys. This new capability helps organizations ensure the integrity of container

images, code commits, and other artifacts with private signatures that can be

validated at any point an artifact needs to be verified. It also draws a

dividing line: open source software artifacts are signed in the open in a

public transparency log, while enterprises can sign their own software with

the same flow, but with private versions that aren’t in the public log.
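
The idea of a log that records what was signed, and that can be checked later for tampering, can be sketched in a few lines. This is a simplified illustration only: it uses a plain hash chain, performs no actual cryptographic signing, and is not how Sigstore or Chainguard Enforce Signing is implemented. The class and field names (`TransparencyLog`, `signer`, and so on) are hypothetical.

```python
import hashlib
import json


def _entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + body).hexdigest()


class TransparencyLog:
    """Toy append-only log: each entry commits to the one before it."""

    GENESIS = "0" * 64  # placeholder hash that precedes the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, artifact: bytes, signer: str) -> dict:
        """Record an artifact digest and the identity that claims it."""
        prev = self.entries[-1]["entry_hash"] if self.entries else self.GENESIS
        payload = {
            "artifact_sha256": hashlib.sha256(artifact).hexdigest(),
            "signer": signer,
        }
        entry = {**payload, "prev_hash": prev, "entry_hash": _entry_hash(prev, payload)}
        self.entries.append(entry)
        return entry

    def verify_chain(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev = self.GENESIS
        for e in self.entries:
            payload = {"artifact_sha256": e["artifact_sha256"], "signer": e["signer"]}
            if e["prev_hash"] != prev or e["entry_hash"] != _entry_hash(prev, payload):
                return False
            prev = e["entry_hash"]
        return True

    def contains(self, artifact: bytes) -> bool:
        """Check whether an artifact's digest was ever logged."""
        digest = hashlib.sha256(artifact).hexdigest()
        return any(e["artifact_sha256"] == digest for e in self.entries)


if __name__ == "__main__":
    log = TransparencyLog()
    log.append(b"container-image-bytes", "dev@example.com")
    log.append(b"commit-bytes", "ci@example.com")
    print(log.verify_chain(), log.contains(b"commit-bytes"))
```

An organization could keep one such log public for open source artifacts and a second, private instance for internal software, which mirrors the dividing line described above.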

Turning the vision of a utopic smart city into reality

It’s critical to consider what success looks like, and this can be measured by

how user-friendly and efficient a service is, as well as by cost efficiencies. For

instance, parking apps that indicate free spaces and process payment can cut the

time to find a parking space in a new city from an hour to just a few minutes.

It’s almost impossible to consider smart cities without

thinking about the efficient energy management benefits of smart buildings.

Sustainable initiatives such as integrated workplace management systems already

have the capability to monitor over 50,000 data points per second, analyse data,

and send it to mobile apps. This could see millions of users saving energy. With

a long-term vision for smart city platforms to become unified or standardised,

one solution can potentially work seamlessly anywhere in the world. Platforms

could integrate city infrastructure and navigation, and access to emergency and

city services. Transformation will be driven by users empowered with the right

data, perhaps even according to their user type of student, tourist, or city

resident.

Can real-time data visualisation deliver trust and opportunity?

What is interesting is that so much of this is driven through an ecosystem of

partners. No one organisation can deliver the breadth and depth of data and

tools needed to make such projects work and there is much to learn from that.

Collaborations and partnerships can elevate and enhance real-time data

visualisation and value. For many organisations, however, real-time data is still

virgin territory and real-time visualisation is one of those technologies where

reality cannot hope to match expectation, at least according to Jaco Vermeulen,

CTO of tech consultancy BML Digital. “Almost every customer says they want

real-time visualisation, but then nine out of 10 can’t qualify why they need it,

especially when it comes to what decisions or actions it will enable,” says

Vermeulen. “This is usually because they start from the belief that the data is

always available and therefore should be immediately understandable and yield

profound insight. The truth is a bit more challenging.” ... “It is the real-time

decisions that create impact,” he says. “Optimising supply chains, reducing

waste and pollution, optimising operations, and informing and satisfying

consumers.”

IBM’s Krishnan Talks Finding the Right Balance for AI Governance

The challenge comes essentially from not knowing how the sausage was made. One

client, for instance, had built 700 models but had no idea how they were

constructed or what stages the models were in, Krishnan said. “They had no

automated way to even see what was going on.” The models had been built with

each engineer’s tool of choice with no way to know further details. As a result,

the client could not make decisions fast enough, Krishnan said, or move the

models into production. She said it is important to think about explainability

and transparency for the entire life cycle rather than fall into the tendency to

focus on models already in production. Krishnan suggested that organizations

should ask whether the right data is being used even before something gets

built. They should also ask if they have the right kind of model and if there is

bias in the models. Further, she said automation needs to scale as more data and

models come in. The second trend Krishnan cited was the increased responsible use

of AI to manage risk and reputation to instill and maintain confidence in the

organization.
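
A minimal sketch of the kind of automated visibility the client lacked might look like the inventory below. It assumes a hypothetical set of lifecycle stages and review flags (`Stage`, `training_data_reviewed`, `bias_checked`); none of these names come from IBM or from Krishnan's remarks.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    """Hypothetical lifecycle stages for a model."""
    DATA_REVIEW = "data_review"
    DEVELOPMENT = "development"
    VALIDATION = "validation"
    PRODUCTION = "production"


@dataclass
class ModelRecord:
    name: str
    owner: str
    tool: str                      # whichever tool the engineer chose
    stage: Stage
    training_data_reviewed: bool = False
    bias_checked: bool = False


class ModelInventory:
    """Single place to see what models exist and where each one stands."""

    def __init__(self) -> None:
        self._records: dict[str, ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        self._records[record.name] = record

    def stage_summary(self) -> dict[str, int]:
        """Count models per lifecycle stage."""
        return dict(Counter(r.stage.value for r in self._records.values()))

    def blocked_from_production(self) -> list[str]:
        """Pre-production models still missing a data or bias review."""
        return sorted(
            r.name
            for r in self._records.values()
            if r.stage is not Stage.PRODUCTION
            and not (r.training_data_reviewed and r.bias_checked)
        )


if __name__ == "__main__":
    inventory = ModelInventory()
    inventory.register(ModelRecord("churn", "ana", "notebook", Stage.VALIDATION, True, True))
    inventory.register(ModelRecord("fraud", "raj", "automl", Stage.DEVELOPMENT, True, False))
    inventory.register(ModelRecord("pricing", "li", "custom", Stage.PRODUCTION, True, True))
    print(inventory.stage_summary())
    print(inventory.blocked_from_production())
```

Recording the data and bias checks alongside the stage keeps the questions Krishnan raises attached to every model from the start, not only to those already in production.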

13 tech predictions for 2023

“Different edges are implemented for different purposes. Edge servers and

gateways may aggregate multiple servers and devices in a distributed location,

such as a manufacturing plant. An end-user premises edge might look more like

a traditional remote/branch office (ROBO) configuration, often consisting of a

rack of blade servers. Telecommunications providers have their own

architectures that break down into a provider far edge, a provider access

edge, and a provider aggregation edge.” ... As we enter 2023, CIOs have earned

a seat among the decision-makers and are now at the helm of company-wide

technology decision-making. Amid a volatile economic climate, IT leaders must

prioritize reducing costs, but they are finding themselves pulled between

contrasting concerns of managing spend, dealing with security risks, and

fostering innovation. As they navigate an uncertain market, CIOs will need to

analyze company usage, along with their previous experience, to rethink

business approaches and make decisions. The goal is to identify ways to reduce

spend across the company, but not at the expense of key areas like

cybersecurity and innovation.

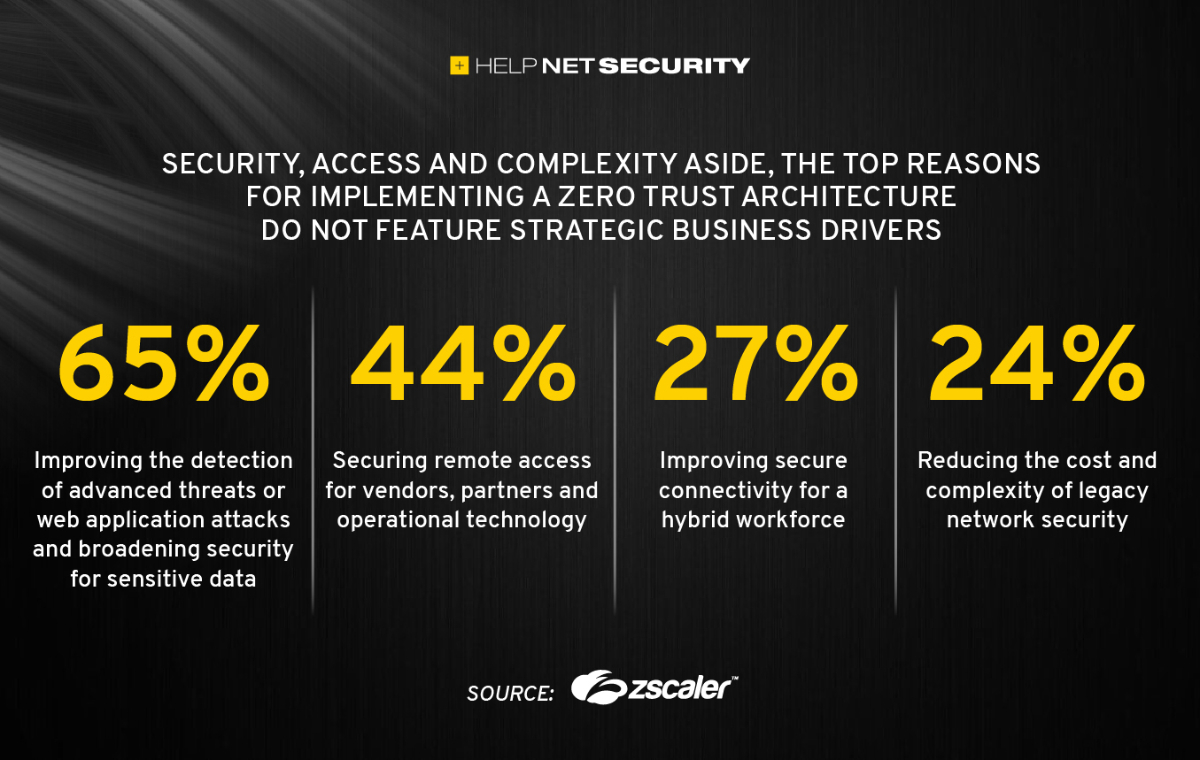

Preventing a ransomware attack with intelligence: Strategies for CISOs

One of the most effective ways to stop a ransomware attack is to deny attackers

access in the first place; without access, there is no attack. The adversary

only needs one route of access, and yet the defender has to be aware of and

protect all entry points into a network. Various types of intelligence can

illuminate risk across the pre-attack chain—and help organizations monitor and

defend their attack surfaces before they’re targeted by attackers. The best

vulnerability intelligence should be robust and actionable. For instance, with

vulnerability intelligence that includes exploit availability, attack type,

impact, disclosure patterns, and other characteristics, vulnerability

management teams can predict the likelihood that a vulnerability could be used in

a ransomware attack. With this information in hand, vulnerability management

teams, who are often under-resourced, can prioritize patching and preemptively

defend against vulnerabilities that could lead to a ransomware attack. Having

a deep and active understanding of the illicit online communities where

ransomware groups operate can also help inform methodology and prevent

compromise.
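
The prioritization step described above can be sketched as a simple weighted score. This is an illustration under stated assumptions, not a vendor's method: the record fields, the weights, and the identifiers such as `VULN-A` are all hypothetical, and a real team would calibrate them against its own intelligence feeds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VulnIntel:
    """Hypothetical intelligence record for one vulnerability."""
    vuln_id: str
    exploit_available: bool               # public exploit code exists
    remote_attack: bool                   # attack type: remotely exploitable
    impact: float                         # 0.0 (negligible) to 1.0 (full compromise)
    discussed_by_ransomware_groups: bool  # seen in illicit online communities


# Illustrative weights only; assumptions, not calibrated values.
WEIGHTS = {
    "exploit_available": 0.35,
    "remote_attack": 0.20,
    "impact": 0.25,
    "discussed_by_ransomware_groups": 0.20,
}


def ransomware_likelihood(v: VulnIntel) -> float:
    """Return a heuristic score in [0, 1]; higher means patch sooner."""
    return (
        WEIGHTS["exploit_available"] * v.exploit_available
        + WEIGHTS["remote_attack"] * v.remote_attack
        + WEIGHTS["impact"] * v.impact
        + WEIGHTS["discussed_by_ransomware_groups"] * v.discussed_by_ransomware_groups
    )


def prioritize(vulns: list[VulnIntel]) -> list[VulnIntel]:
    """Order the patch backlog so the highest-scoring items come first."""
    return sorted(vulns, key=ransomware_likelihood, reverse=True)


if __name__ == "__main__":
    backlog = [
        VulnIntel("VULN-A", False, False, 0.8, False),
        VulnIntel("VULN-B", True, True, 0.8, True),
        VulnIntel("VULN-C", True, False, 0.4, False),
    ]
    for v in prioritize(backlog):
        print(v.vuln_id, f"{ransomware_likelihood(v):.2f}")
```

Even a rough ordering like this gives an under-resourced team a defensible place to start, because a high-impact bug with no known exploit ranks below a moderate one that attackers are already using.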

What to do when your devops team is downsized

If you lead teams or manage people, your first thought must be how they feel

or how they are personally impacted by the layoffs. Some will be angry if

they’ve seen friends and confidants let go; others may be fearful they’re

next. Even when leadership does a reasonable job at communication (which is

all too often not the case), chances are your teams and colleagues will have

unanswered questions. Your first task after layoffs are announced is to open a

dialogue, ask people how they feel, and dial up your active listening skills.

Other steps to help teammates feel safe include building empathy for personal

situations, energizing everyone around a mission, and thanking team members

for the smallest wins. Use your listening skills to identify the people who

have greater concerns and fears or who may be flight risks. You’ll want to

talk to them individually and find ways to help them through their anxieties

or recognize when they need professional help. You should also give people and

teams time to reflect and adjust. Asking everyone to get back to their sprint

commitments and IT tickets is insensitive and unrealistic, especially if the

company laid off many people.

Our ChatGPT Interview Shows AI Future in Banking Is Scary-Good

ChatGPT is a large, advanced language processing model that is trained using a

technique called generative pre-trained transformer, or GPT. This allows

ChatGPT to generate human-like responses to questions and statements in a

conversation, making it a powerful tool for a wide range of applications.

Compared to traditional chatbots, which are often limited in their ability to

understand and generate natural language, ChatGPT has the advantage of being

able to provide more accurate and detailed responses. Additionally, because it

is trained using a large amount of data, ChatGPT is able to learn and adapt to

different conversational styles and contexts, making it more versatile and

capable of handling a wider range of scenarios. ... The banking industry can

use ChatGPT technology in a number of ways to improve their operations and

provide better service to their customers. For example, ChatGPT can be used to

automate customer service tasks, such as answering frequently asked questions

or providing detailed information about products and services. This can free

up customer service representatives to focus on more complex or high-value

tasks, improving overall efficiency and customer satisfaction.

Quote for the day:

"Strong leaders encourage you to do

things for your own benefit, not just theirs." -- Tim Tebow