Navigating the digital economy: Innovation, risk, and opportunity

As we move towards the era of Industry 5.0, the digital economy needs to adopt a

Human-Centred Design (HCD) approach in which technology layers revolve around the human

at the core. By 2030, Organoid Intelligence (OI) is envisaged to dominate

the digital economy space, with superintelligent capabilities and potential across

multiple disciplines. This capability should democratize digital economy

services across sectors in a seamless manner. This rapid technology adoption

exposes the system to cyber risks, which calls for advanced, forward-looking security

solutions such as quantum security embedded in digital currencies such as

the e-Rupee and cryptocurrencies. The e-Rupee, a virtual equivalent of cash stored in

a digital wallet, offers anonymity in payments. ... Indian banks are already

piloting blockchain for issuing Letters of Credit, and integrating UPI with

blockchain could combine the strengths of both systems, ensuring greater

security, ease of use, and instant transactions. Such cybersecurity threats

also create an opportunity for Bitcoin and other cryptocurrencies to expand from their

current offerings into sectors such as gaming.

From DevOps to Platform Engineering: Powering Business Success

Platform engineering provides a solution with the tools and frameworks needed to

scale software delivery processes, ensuring that organizations can handle

increasing workloads without sacrificing quality or speed. It also leads to

improved consistency and reliability. By standardizing workflows and automating

processes, platform engineering reduces the variability and risk associated with

manual interventions. This leads to more consistent and reliable deployments,

enhancing the overall stability of applications in production. Further

productivity comes from the efficiency it offers developers themselves.

Developers are most productive when they can focus on writing code and solving

business problems. Platform engineering removes the friction associated with

provisioning resources, managing environments, and handling operational tasks,

allowing developers to concentrate on what they do best. It also provides the

infrastructure and tools needed to experiment, iterate, and deploy new features

rapidly, enabling organizations to stay ahead of the curve.

Scaling Databases To Meet Enterprise GenAI Demands

A hybrid approach combines vertical and horizontal scalability, providing

flexibility and maximizing resource utilization. Organizations can begin with

vertical scaling to enhance the performance of individual nodes and then

transition to horizontal scaling as data volumes and processing demands

increase. This strategy allows businesses to leverage their existing

infrastructure while preparing for future growth — for example, initially

upgrading servers to improve performance and then distributing the database

across multiple nodes as the application scales. ... Data partitioning and

sharding involve dividing large datasets into smaller, more manageable pieces

distributed across multiple servers. This approach is particularly beneficial

for vector databases, where partitioning data improves query performance and

reduces the load on individual nodes. Sharding allows a vector database to

handle large-scale data more efficiently by distributing the data across

different nodes based on a predefined shard key. This ensures that each node

only processes a subset of the data, optimizing performance and

scalability.
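
The shard-key routing described above can be illustrated with a minimal sketch. It assumes simple hash-based sharding over in-memory dictionaries; the names `shard_for` and `ShardedStore` are hypothetical and do not correspond to any particular vector database's API. Real systems typically add replication, rebalancing, and approximate nearest-neighbour indexes on each shard.

```python
import hashlib


def shard_for(key: str, num_shards: int) -> int:
    """Map a shard key to a shard index using a stable hash.

    hashlib is used instead of the built-in hash() because the latter is
    randomized per process for strings, which would break routing across
    restarts.
    """
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


class ShardedStore:
    """Toy key-to-vector store split across a fixed number of shards."""

    def __init__(self, num_shards: int) -> None:
        self.num_shards = num_shards
        # Each dict stands in for a separate node (assumption for illustration).
        self.shards = [dict() for _ in range(num_shards)]

    def put(self, key: str, vector: list) -> None:
        # Only the shard that owns the key stores the vector.
        self.shards[shard_for(key, self.num_shards)][key] = vector

    def get(self, key: str):
        # A lookup touches one shard only, never the whole dataset.
        return self.shards[shard_for(key, self.num_shards)].get(key)


store = ShardedStore(num_shards=4)
for i in range(100):
    store.put(f"doc-{i}", [float(i), 0.0])
```

Because every key maps deterministically to one shard, each node only handles its own subset of the data. A fixed modulo like this forces most keys to move when the shard count changes, which is one reason production systems often prefer consistent hashing or range-based partitioning.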

Safeguarding Expanding Networks: The Role of NDR in Cybersecurity

NDR plays a crucial role in risk management by continuously monitoring the

network for any unusual activities or anomalies. This real-time detection

allows security teams to catch potential breaches early, often before they can

cause serious damage. By tracking lateral movements within the network, NDR

helps to contain threats, preventing them from spreading. Plus, it offers deep

insights into how an attack occurred, making it easier to respond effectively

and reduce the impact. ... When it comes to NDR, key stakeholders who benefit

from its implementation include Security Operations Centre (SOC) teams, IT

security leaders, and executives responsible for risk management. SOC teams

gain comprehensive visibility into network traffic, which reduces false

positives and allows them to focus on real threats, ultimately lowering stress

and improving their efficiency. IT security leaders benefit from a more robust

defence mechanism that ensures complete network coverage, especially in hybrid

environments where both managed and unmanaged devices need protection.
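
As a rough illustration of the anomaly monitoring described in this excerpt, the sketch below flags hosts whose traffic volume deviates sharply from a baseline. It assumes a simple z-score test on bytes transferred; the function name `flag_anomalies`, the threshold, and the sample figures are all hypothetical, and commercial NDR products rely on far richer behavioural models than this.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, current, threshold=3.0):
    """Return hosts whose current traffic is far above the baseline.

    baseline: list of historical per-interval byte counts (at least two values).
    current: dict mapping host name to its byte count for the latest interval.
    threshold: number of standard deviations above the mean that counts as unusual.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    flagged = {}
    for host, value in current.items():
        if sigma == 0:
            # Flat baseline: anything above it is treated as infinitely unusual.
            z = float("inf") if value > mu else 0.0
        else:
            z = (value - mu) / sigma
        if z > threshold:
            flagged[host] = z
    return flagged


# Hypothetical sample data, in kilobytes per interval.
history = [100, 110, 90, 105, 95]
latest = {"workstation-a": 102, "workstation-b": 500}
suspicious = flag_anomalies(history, latest)
```

Only hosts that exceed the threshold are returned, which mirrors the goal of cutting false positives so that analysts can focus on the traffic that genuinely stands out.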

Application detection and response is the gap-bridging technology we need

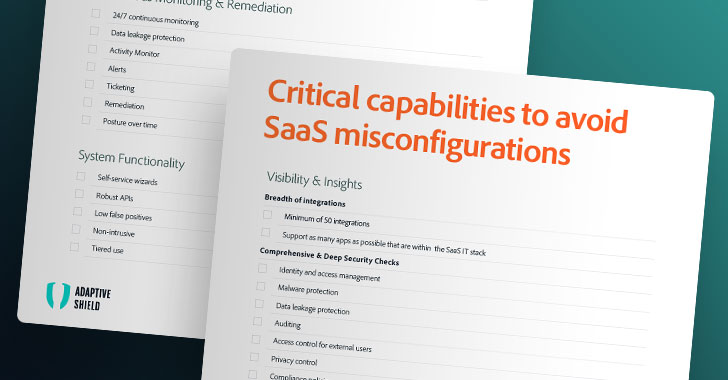

In the shared-responsibility model, not only is there the underlying cloud

service provider (CSP) to consider, but there are external SaaS integrations

and internal development and platform teams, as well as autonomous teams

across the organization, often leading to opaque systems with a lack of clarity

around where responsibilities begin and end. On top of that, there are

considerations around third-party dependencies, components, and

vulnerabilities to address. Taking that further, the modern distributed nature

of systems creates more opportunities for exploitation and abuse. One example

is modern authentication and identity providers, each of which is a potential

attack vector over which you have limited visibility due to not owning the

underlying infrastructure and logging. Finally, there’s the reality that we’re

dealing with an ever-increasing velocity of change. As the industry continues

further adoption of DevOps and automation, software delivery cycles continue

to accelerate. That trend is only likely to increase with the use of

genAI-driven copilots.

Data Is King. It Is Also Often Unlicensed or Faulty

A report published in the Nature Machine Intelligence journal presents a

large-scale audit of dataset licensing and attribution in AI, analyzing over

1,800 datasets used in training AI models on platforms such as Hugging Face.

The study revealed widespread miscategorization, with over 70% of datasets

omitting licensing information and over 50% containing errors. In 66% of the

cases, the licensing category was more permissive than intended by the

authors. The report cautions against a "crisis in misattribution and informed

use of popular datasets" that is driving recent AI breakthroughs but also

raising serious legal risks. "Data that includes private information should be

used with care because it is possible that this information will be reproduced

in a model output," said Robert Mahari, co-author of the report and JD-PhD at

MIT and Harvard Law School. In the vast ocean of data, licensing defines the

legal boundaries of how data can be used. ... "The rise in restrictive data

licensing has already caused legal battles and will continue to plague AI

development with uncertainty," said Shayne Longpre, co-author of the report

and research Ph.D. candidate at MIT.

AI interest is driving mainframe modernization projects

AI and generative AI promise to transform the mainframe environment by

delivering insights into complex unstructured data, augmenting human action

with advances in speed, efficiency and error reduction, while helping to

understand and modernize existing applications. Generative AI also has the

potential to illuminate the inner workings of monolithic applications, Kyndryl

stated. “Enterprises clearly see the potential with 86% of respondents

confirmed they are deploying, or planning to deploy, generative AI tools and

applications in their mainframe environments, while 71% say that they are

already implementing generative AI-driven insights as part of their mainframe

modernization strategy,” Kyndryl stated. ... While AI will likely shape the

future for mainframes, a familiar subject remains a key driver for mainframe

investments: security. “Given the ongoing threat from cyberattacks, increasing

regulatory pressures, and an uptick in exposure to IT risk, security remains a

key focus for respondents this year with almost half (49%) of the survey

respondents cited security as the number one driver of their mainframe

modernization investments in the year ahead,” Kyndryl stated.

How AI Is Propelling Data Visualization Techniques

AI has improved data processing and cleaning. AI identifies missing data and

inconsistencies, which means we end up with more reliable datasets for

effective visualization. Personalization is yet another benefit AI has

brought. AI-powered tools can tailor visualizations based on set goals,

context, and preferences. For example, a user can provide their business

requirements, and AI will provide a customized chart and information layout

based on these requirements. This saves time and can also be helpful when

creativity isn’t flowing as well as we’d like. ... It’s useful for geographic

data visualization in particular. While traditional maps provide a top-down

perspective, AR mapping systems use existing mapping technologies, such as

GPS, satellite images, and 3D models, and combine them with real-time data.

For example, Google’s Lens in Maps feature uses AI and AR to help users

navigate their surroundings by lifting their phones and getting instant

feedback about the nearest points of interest. Business users will appreciate

how AI automates insights with natural language generation (NLG).

Framing The Role Of The Board Around Cybersecurity Is No Longer About Risk

Having set an unequivocal level of accountability with one executive for

cybersecurity, the Board may want to revisit the history of the firm with

regard to cyber protection, to ensure that mistakes are not repeated, that

funding is sufficient and, overall, that the right timeframes are set and

respected, in particular over the mid to long-term horizon if large scale

transformative efforts are required around cybersecurity. We start to see a

list of topics emerging, broadly matching my earlier pieces, around the “key

questions the Board should ask”, but more than ever, executive accountability

is key in the face of current threats to start building up a meaningful and

powerful top-down dialogue around cybersecurity. Readers may notice that I

have not used the word “risk” even once in this article. Ultimately, risk is

about things that may or may not happen: In the face of the “when-not-if”

paradigm around cyber threats – and increasingly other threats as well – it is

essential for the Board to frame and own business protection as a topic rooted

in the reality of the world we live in, not some hypothetical matter which

could be somehow mitigated, transferred or accepted.

Embracing First-Party Data in a Cookie-Alternative World

Unfortunately, the transition away from third-party cookies presents

significant challenges that extend beyond shifting customer interactions. Many

businesses are particularly concerned about the implications for data security

and privacy. When looking into alternative data sources, businesses may

inadvertently expose themselves to increased security risks. The shift to

first-party data collection methods requires careful evaluation and

implementation of advanced security measures to protect against data breaches

and fraud. It is also crucial to ensure the transition is secure and compliant

with evolving data privacy regulations. To ensure the data is secure,

businesses should go beyond standard encryption practices and adopt advanced

security measures such as tokenization for sensitive data fields, which

minimizes the risk of exposing real data in the event of a breach.

Additionally, regular security audits are crucial. Organizations should

leverage automated tools for continuous security monitoring and compliance

checks that can provide real-time alerts on suspicious activities, helping to

preempt potential security incidents.
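
The tokenization of sensitive fields mentioned above can be sketched as follows. This is a minimal, in-memory illustration, assuming a vault-style design in which random tokens stand in for real values; the class name `TokenVault` and the `tok_` prefix are hypothetical. A production system would keep the mapping in a hardened, access-controlled store rather than in process memory.

```python
import secrets


class TokenVault:
    """Toy vault that swaps sensitive values for random, meaningless tokens."""

    def __init__(self) -> None:
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so the same value always maps to one token.
        if value in self._value_to_token:
            return self._value_to_token[value]
        # The token is random, so it reveals nothing about the original value.
        token = "tok_" + secrets.token_urlsafe(16)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        # Only code with access to the vault can recover the real value.
        return self._token_to_value[token]


vault = TokenVault()
# Hypothetical customer record; only the token is stored downstream.
email_token = vault.tokenize("jane.doe@example.com")
record = {"customer_id": 42, "email": email_token}
```

If the downstream `record` store is breached, the attacker sees only tokens; the real values stay in the vault, which can be isolated and audited separately.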

Quote for the day:

“It's not about who wants you. It's

about who values and respects you.” -- Unknown