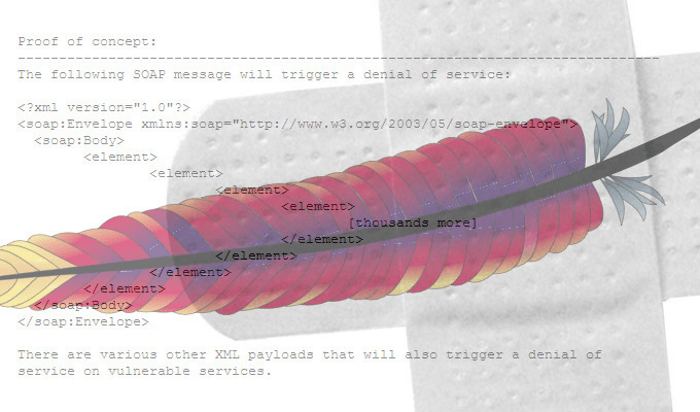

TrickBot Ravages Customers of Amazon, PayPal and Other Top Brands

The TrickBot malware was originally a banking trojan, but it has evolved well

beyond those humble beginnings to become a wide-ranging credential-stealer and

initial-access threat, often responsible for fetching second-stage binaries such

as ransomware. Since the well-publicized law-enforcement takedown of its

infrastructure in October 2020, the threat has clawed its way back, now sporting

more than 20 different modules that can be downloaded and executed on demand. It

typically spreads via emails, though the latest campaign adds self-propagation

via the EternalRomance vulnerability. “Such modules allow the execution of all

kinds of malicious activities and pose great danger to the customers of 60

high-profile financial (including cryptocurrency) and technology companies,” CPR

researchers warned. “We see that the malware is very selective in how it chooses

its targets.” It has also been seen working in concert with a similar malware,

Emotet, which suffered its own takedown in January 2021.

‘Ice phishing’ on the blockchain

There are multiple types of phishing attacks in the web3 world. The technology

is still nascent, and new types of attacks may emerge. Some attacks look similar

to traditional credential phishing attacks observed on web2, but some are unique

to web3. One aspect that the immutable and public blockchain enables is complete

transparency, so an attack can be observed and studied after it occurred. It

also allows assessment of the financial impact of attacks, which is challenging

in traditional web2 phishing attacks. Recall that with the cryptographic keys

(usually stored in a wallet), you hold the key to your cryptocurrency coins.

Disclose that key to an unauthorized party and your funds may be moved without

your consent. Stealing these keys is analogous to stealing credentials to web2

accounts. Web2 credentials are usually stolen by directing users to an

illegitimate web site through a set of phishing emails. While attackers can

utilize a similar tactic on web3 to get to your private key, given the current

adoption, the likelihood of an email landing in the inbox of a cryptocurrency

user is relatively low.

Cloud Security Alliance publishes guidelines to bridge compliance and DevOps

As for tooling, CSA called for organisations to embrace infrastructure-as-code

to eliminate manual provisioning of infrastructure. They can do so through

services such as AWS CloudFormation or capabilities from the likes of Chef,

Ansible and Terraform, paving the way for automation, version control and

governance. Organisations can also establish guardrails to constantly monitor

software deployments to ensure alignment with their goals and objectives,

including compliance. These guardrails can be represented as high-level rules

with detective and preventive policies. Guardrails may be implemented as a

means of compliance reporting, such as the number of machines running approved

operating systems (OSes), or as remedies to non-compliance, such as shutting

down machines running unapproved OSes. With a tendency to address risk

directly through tooling, organisations can easily overlook the importance of

having the appropriate mindset in DevSecOps transformation. CSA defines

mindset as the ways to bring security teams and software developers closer

together.
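
The two kinds of guardrail described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Machine` record, the `APPROVED_OSES` allow-list and both function names are hypothetical, and the "shutdown" merely flips a flag where a real guardrail would call a cloud provider's API.

```python
from dataclasses import dataclass

# Hypothetical allow-list; a real one would come from the organisation's policy.
APPROVED_OSES = {"ubuntu-22.04", "rhel-9"}


@dataclass
class Machine:
    name: str
    os: str
    running: bool = True


def compliance_report(machines):
    """Reporting guardrail: count machines on approved vs unapproved OSes."""
    compliant = sum(1 for m in machines if m.os in APPROVED_OSES)
    return {"compliant": compliant, "non_compliant": len(machines) - compliant}


def remediate(machines):
    """Remediation guardrail: stop running machines on unapproved OSes."""
    stopped = []
    for m in machines:
        if m.running and m.os not in APPROVED_OSES:
            m.running = False  # stand-in for a real shutdown call
            stopped.append(m.name)
    return stopped
```

Calling `compliance_report` leaves the fleet untouched, while `remediate` changes state and returns the names of the machines it stopped, so the same policy can run in report-only mode before it is allowed to enforce anything.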

Use of Artificial Intelligence in the Banking World 2022

Chatbots are one of the most-used applications of artificial intelligence, not

only in banking but across the spectrum. Once deployed, AI chatbots can work

24/7 to be available for customers. In fact, in several surveys and market

research studies, it has been found that people actually prefer interacting

with bots instead of humans. This can be attributed to the use of natural

language processing for AI chatbots. With NLP, AI chatbots are better able to

understand user queries and communicate in a seemingly human way. An example

of AI chatbots in banking can be seen in the Bank of America with Erica, the

virtual assistant. Erica handled 50 million client requests in 2019 and can

handle requests including card security updates and credit card debt

reduction. Digital-savvy banking customers today need more than what

traditional banking can offer. With AI, banks can deliver the personalized

solutions that customers are seeking. An Accenture survey suggested that 54%

of banking customers wanted an automated tool to help monitor budgets and

suggest real-time spending adjustments.

Data democratisation and AI—The superpowers to augment customer experience in 2022

With the powerful combination of data and AI at their fingertips, teams can

gain deeper insights into their customers. Such technologies can also provide

recommendations for the next best action. Critical decisions such as the right

message, right channel, and right time can be optimised to boost efficiency as well

as delight consumers. For example, ecommerce brands can identify customers who

buy from a specific luxury brand and personalise offers. Banks can determine

customers who have not completed the onboarding journey and eliminate

roadblocks to help them move towards completion. Music streaming apps can

create custom playlists for each listener based on their preferred music and

artists. In the past, these insights were gathered from multiple platforms,

most times with the help of technology or data teams running Big Data queries.

The time required to run these queries, draw insights, and then apply them was

often long, which meant brands could not go to market quickly.

Federated Machine Learning and Edge Systems


It helps to look at a practical use case. We're going to look at Federated

Learning of Cohorts, or FLoCs, also developed by Google. It's essentially a

proposal to do away with third-party cookies, because they're awful and nobody

likes them, and they are a privacy nightmare. Many browsers are removing

functionality for third-party cookies or automatically blocking third-party

cookies. But what should we use in order to do targeted, personalized

advertising if we don't have third-party cookies? That's what Google proposed

FLoCs would do. Their idea for FLoCs is that you get assigned a cohort based

on something you like, your browsing history, and so on. In the diagram below,

we have two different cohorts: a group that likes plums and a group that likes

oranges. Perhaps, if you were a fruit seller, you might want to target the

plum cohort with plum ads and the orange cohort with orange ads, for example.

The goal was to resolve the privacy problems and the poor user experience of

online targeted ads, where sometimes a user would click on something and it

would follow them for days.
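
To make the cohort idea concrete, here is a minimal sketch of how a browser might turn a browsing history into a cohort ID locally. It assumes a SimHash-style locality-sensitive hash, which is the approach Chrome's FLoC trial was reported to use; the function name `simhash_cohort`, the 8-bit cohort size and the use of SHA-256 are illustrative choices, not Google's implementation.

```python
import hashlib


def simhash_cohort(domains, bits=8):
    """Map a set of visited domains to a cohort ID in [0, 2**bits)."""
    counts = [0] * bits
    for domain in set(domains):
        # Hash each domain to a stable 64-bit integer.
        digest = hashlib.sha256(domain.encode("utf-8")).digest()
        h = int.from_bytes(digest[:8], "big")
        # Each domain votes +1 or -1 on every output bit.
        for i in range(bits):
            counts[i] += 1 if (h >> i) & 1 else -1
    # A bit is set when the votes for it are net positive.
    return sum(1 << i for i, c in enumerate(counts) if c > 0)
```

Because every domain only nudges the bit votes, histories that share most of their domains tend to land in the same cohort, and only the small cohort ID, never the history itself, would need to leave the device.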

Inside Look at an Ugly Alleged Insider Data Breach Dispute

The Premier lawsuit alleges that changes to the company's security controls -

including disabling endpoint security - allowed Sohail's continued access to

Premier "trade secrets" and other sensitive information after his resignation

as CIO. It says that Sohail "colluded with or coerced" Pakistan-based Sajid

Fiaz, who served as Premier's IT administrator while also being employed at

Wiseman Innovations as an IT infrastructure manager and HIPAA officer. Premier

alleges that Fiaz's actions related to its data security provided Sohail

"unfettered access to the master password for endpoint security that enabled

that data theft and misuse through USB drives connected to secure IT systems."

It says Sohail had unrestricted access to copying data to and from the company

laptops and that a forensic report showed that he retained and accessed .PST

files of emails from Premier after resigning as CIO. ".PST files are an

aggregated archive of all emails sent to and from an email address including

all attachments," Premier says in court documents.

How challenging is corporate data protection?

When employees quit their jobs, there is a 37% chance an organization will

lose IP. With 96% of companies noting they experience challenges in protecting

corporate data from insider risk, it’s clear insider risk must be prioritized.

However, ownership of the problem remains vaguely defined. Only 21% of

companies’ cybersecurity budgets have a dedicated component to mitigate

insider risk, and 91% of senior cybersecurity leaders still believe that their

companies’ Board requires better understanding of insider risk. “With employee

turnover and the shift to remote and collaborative work, security teams are

struggling to protect IP, source code and customer information. This research

highlights that the challenge is even more acute when a third of employees who

quit take IP with them when they leave. On top of that, three-quarters of

security teams admit that they don’t know what data is leaving when employees

depart their organizations,” said Joe Payne, Code42 president and CEO.

“Companies must fundamentally shift to a modern data protection approach –

insider risk management (IRM) – that aligns with today’s cloud-based,

hybrid-remote work environment and can protect the data that fuels their

innovation, market differentiation and growth.”

High-Severity RCE Bug Found in Popular Apache Cassandra Database

John Bambenek, principal threat hunter at the digital IT and security

operations company Netenrich, told Threatpost on Wednesday that he suspects

that the non-default settings are “common in many applications around the

world.” The situation isn’t looking as bad as

Log4j, but it could still potentially be widespread, and it’s going to be a chore

to dig out vulnerable installations, Bambenek said via email. “Unfortunately,

there is no way to know exactly how many installations are vulnerable, and

this is likely the kind of vulnerability that will be missed by automated

vulnerability scanners,” he said. “Enterprises will have to go into the

configuration files of every Cassandra instance to determine what their risk

is.” Casey Bisson, head of product and developer relations at code-security

solutions provider BluBracket, told Threatpost that the issue could have “a

broad impact with very serious consequences,” as in, “Threat actors may be

able to read or manipulate sensitive data in vulnerable configurations.”
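
Since the check comes down to reading configuration files, a small script can do a first pass. The sketch below assumes the three `cassandra.yaml` settings named in the public advisory for this bug (CVE-2021-44521); they are not listed in the excerpt above, so treat them as an assumption to verify against the advisory. The helper names are hypothetical, the parser only reads simple top-level `key: value` lines, and it ignores whether the installed version is already patched.

```python
import re

# Setting values that, taken together, were reported to expose the bug.
# Assumption: taken from the public advisory, not from the excerpt above.
RISKY = {
    "enable_user_defined_functions": "true",
    "enable_scripted_user_defined_functions": "true",
    "enable_user_defined_functions_threads": "false",
}

# Matches uncommented, unindented "key: value" lines.
_LINE = re.compile(r"^([A-Za-z_]+)\s*:\s*([^#\s]+)")


def read_flags(yaml_text):
    """Extract the settings of interest from cassandra.yaml text."""
    flags = {}
    for line in yaml_text.splitlines():
        match = _LINE.match(line)
        if match and match.group(1) in RISKY:
            flags[match.group(1)] = match.group(2).strip("'\"").lower()
    return flags


def looks_vulnerable(yaml_text):
    """True only if all three risky values are set explicitly."""
    flags = read_flags(yaml_text)
    return all(flags.get(key) == value for key, value in RISKY.items())
```

A missing key is treated as safe here because, as the article notes, the risky combination is a non-default configuration. Run over the text of each instance's `cassandra.yaml`, this gives a quick list of hosts to inspect by hand.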

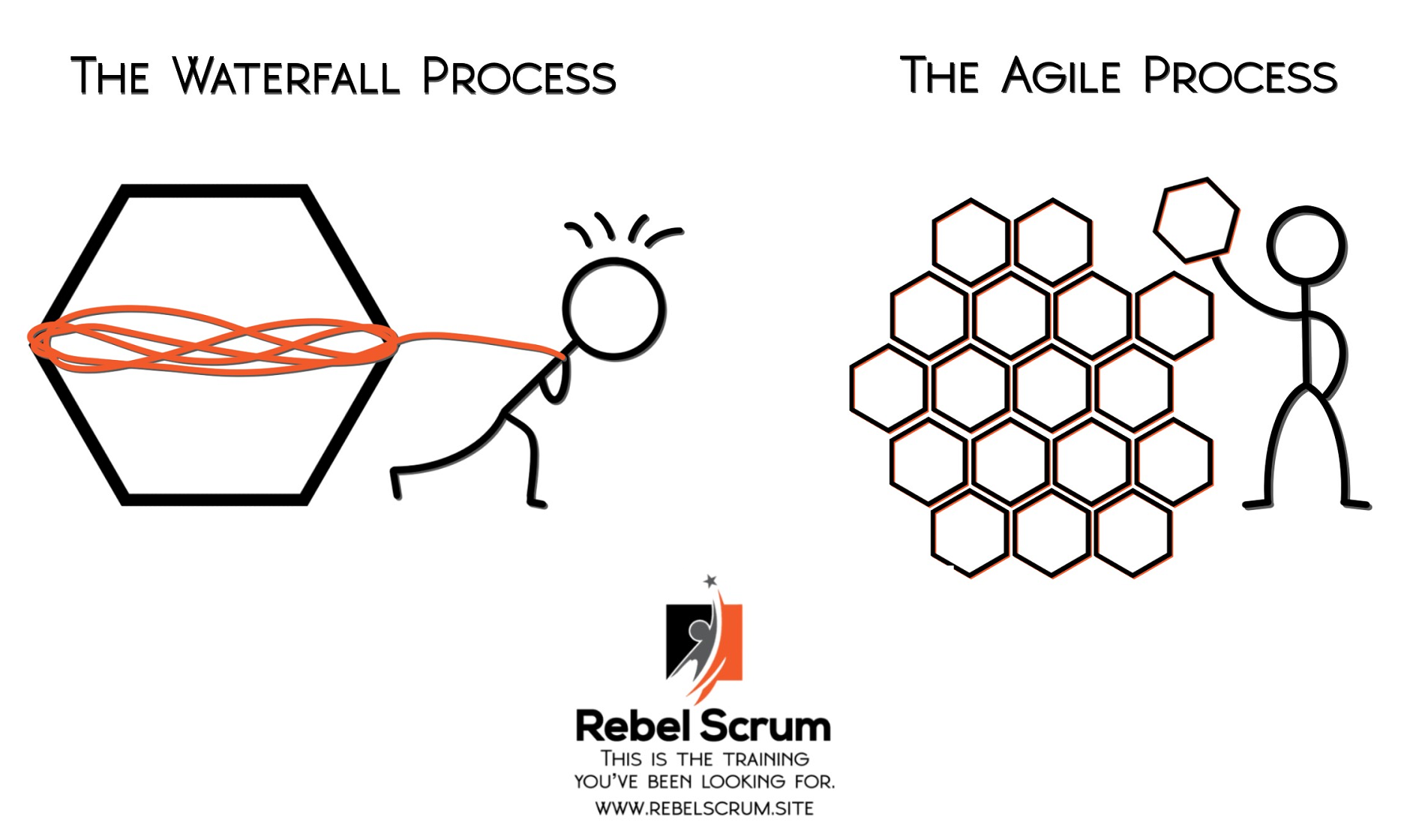

Top tips for entering an IT partnership for the first time

For businesses looking to strike up an IT partnership, it is crucial to ensure

that potential partners are on the same page and are both working towards

similar outcomes. Mutual visions lead to increased understanding, trust, and

judgement throughout the project. Therefore, it is highly recommended for

organisations to spend sufficient time when selecting their partner to

understand their exact values, ideas, goals and ambitions. In order to

accomplish this, it is recommended to physically visit potential partners,

understand their culture and apply a human-to-human approach – whilst

understanding that this is not a one-time project but an ongoing process to

improve and build a solid relationship. Businesses must ensure the relevancy

of the partner to the project by matching the usefulness of their skills,

ideas, and experience to the client’s project. Establishing a partnership

contrary to client needs will not only lead to customer dissatisfaction, but

also to the value of the partnership being overlooked.

Quote for the day:

"A leader should demonstrate his

thoughts and opinions through his actions, not through his words." --

Jack Weatherford
