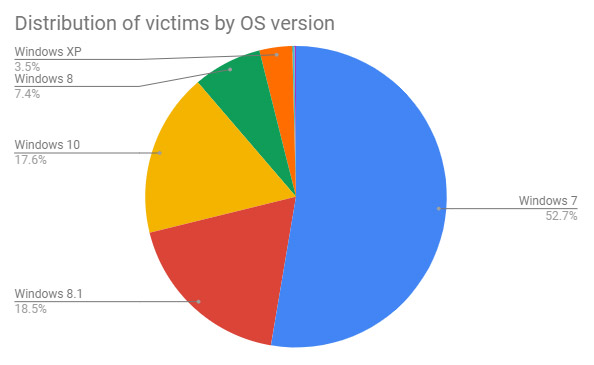

CERT-In cyber security norms bar use of Anydesk, Teamviewer by govt dept

Cyber security watchdog CERT-In has barred the use of remote desktop software

like Anydesk and Teamviewer in government departments under new security

guidelines released on Friday. The guidelines prescribe government departments

use virtual private networks (VPN) for accessing network resources from remote

locations and enable multi-factor authentication (MFA) for VPN accounts.

"Ensure to block access to any remote desktop applications, such as Anydesk,

Teamviewer, Ammyy admin etc," Guidelines on Information Security Practices for

Government Entities said. CERT-In (Indian Computer Emergency Response Team)

said the purpose of these guidelines is to establish a prioritised baseline

for cyber security measures and controls within government organisations and

their associated organisations. Minister of State for Electronics and IT

Rajeev Chandrasekhar in an official statement said the government has taken

several initiatives to ensure an open, safe, trusted and accountable

digital space.

Navigating Product Owner Accountability in Scrum: Debunking Myths and Overcoming Anti-Patterns

In a misguided attempt to ‘help’ Product Owners with their important

responsibilities, some organizations establish two Product Owners for a single

Product. However, while this may seem, at first, to be helpful, this actually

causes a lot of problems for both Product Owners involved. When multiple

Product Owners exist, conflicting ideas and visions may arise, diluting the

product's direction and impeding progress. ... Instead, the Product Owner can

delegate tasks such as creating Product Backlog items, maintaining the

roadmap, or gathering metrics to Developers on the Scrum team. However, it is

important to note that while the Product Owner may delegate as needed, the

Product Owner ultimately remains accountable for items in the Product Backlog

as well as the product forecast or roadmap, thus ensuring that there is a

single, unifying vision and goal for the product and that the Product Backlog

is in alignment with that vision. If the Product Owner is delegating the

creation of Product Backlog items to Developers, what does that mean?

Cisco firewall upgrade boosts visibility into encrypted traffic

“What our competitors are saying is ‘just decrypt everything.’ But we know in

the real world, customers refrain from doing that due to data privacy concerns

and to meet legal/compliance requirements. Furthermore, decrypting and

re-encrypting data requires technical prowess not everyone has, increases the

attack surface, and also causes severe performance challenges,” Miles said.

EVE works by extracting two primary types of data features from the initial

packet of a network connection, according to a blog written by Blake Anderson,

a software engineer in Cisco’s advanced security research group. First,

information about the client is represented by the Network Protocol

Fingerprint (NPF), which extracts sequences of bytes from the initial packet

and is indicative of the process, library, and/or operating system that

initiated the connection. Second, it extracts information about the server

such as its IP address, port, and domain name (for example a TLS server_name

or HTTP Host).
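
The two feature types described above can be pictured with a small sketch. This is not Cisco's NPF format or EVE's implementation; the `toy_fingerprint` function, the `ConnectionFeatures` record, and the fingerprint string layout are all hypothetical, and the sketch assumes the relevant fields of the initial packet have already been parsed out. It only illustrates pairing a client-side byte fingerprint with server-side context such as IP address, port, and domain name.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ConnectionFeatures:
    """Hypothetical record holding both feature types for one connection."""
    fingerprint: str   # client side: derived from bytes of the initial packet
    dest_ip: str       # server side: destination IP address
    dest_port: int     # server side: destination port
    server_name: str   # server side: e.g. TLS server_name or HTTP Host


def toy_fingerprint(version: bytes,
                    cipher_suites: Sequence[bytes],
                    extension_types: Sequence[bytes]) -> str:
    """Join selected byte sequences from the initial packet into one string.

    The layout is invented for illustration. The idea is that the order and
    content of these bytes tend to reflect the client process, library, or
    operating system that opened the connection.
    """
    ciphers = "".join(c.hex() for c in cipher_suites)
    extensions = "".join(e.hex() for e in extension_types)
    return f"tls/({version.hex()})({ciphers})({extensions})"


# Made-up values standing in for fields parsed from an initial packet.
features = ConnectionFeatures(
    fingerprint=toy_fingerprint(
        b"\x03\x03",
        [b"\x13\x01", b"\x13\x02"],
        [b"\x00\x00", b"\x00\x0a"],
    ),
    dest_ip="203.0.113.10",
    dest_port=443,
    server_name="example.com",
)
print(features)
```

Note that nothing in the sketch touches the encrypted payload: every field comes from the unencrypted start of the connection, which is why this kind of analysis does not require decryption.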

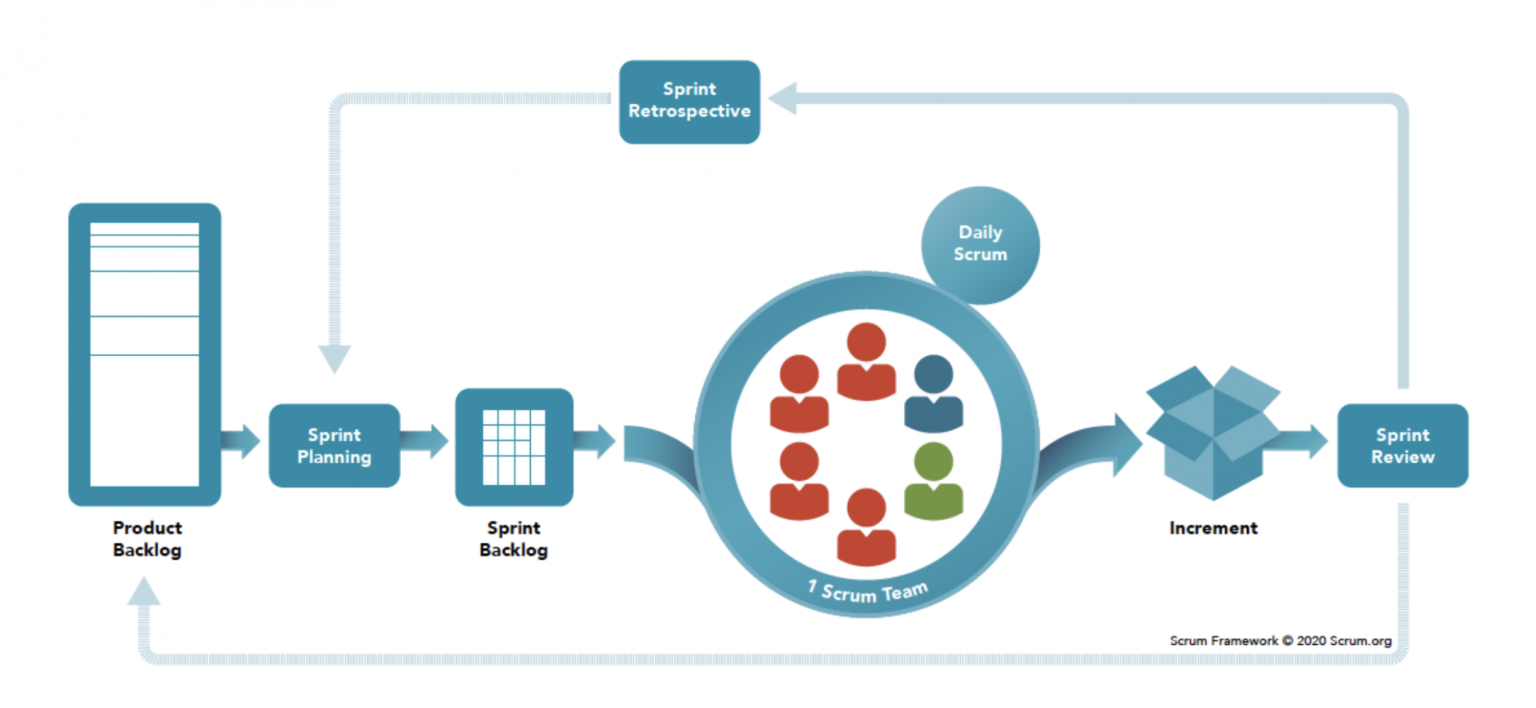

Scrum vs. Kanban vs. Lean: Choosing Your Path in Agile Development

While Scrum is commonly associated with software development teams, its

principles and lessons have broad applicability across various domains of

teamwork. This versatility is one of the key factors contributing to the

widespread popularity of Scrum. Scrum is founded upon the concept of

time-boxed iterations called sprints, which are designed to enhance team

efficiency within iterative development cycles. ... Kanban is well-suited for

organizations seeking to embrace the benefits of agility while minimizing

drastic workflow changes. It is particularly suitable for projects where

priorities frequently shift, and ad hoc tasks can arise anytime. Kanban is a

flexible methodology that can be applied to various domains and teams beyond

software development. ... Lean methodology strongly emphasizes market

validation and creating successful products that provide value to users. It

is particularly well-suited for new product development teams or startups

operating in emerging niches where a finished product may not yet exist, and

resources are limited.

3 Ways to Build a More Skilled Cybersecurity Workforce

In addition to insights around highly sought-after skill sets and job

titles, OECD's report also reveals that demand for cybersecurity

professionals has spread beyond the confines of major urban centers. It

calls for a more decentralized workforce to meet demand in underserved

areas. ... If companies are to close the skills gap and meet the current

demand for cybersecurity workers, they will need to broaden their horizons

to account for more nontraditional cybersecurity career paths. In doing so,

they will enhance the industry with a broader range of unique experiences

and life skills. Recruiting more diverse candidates also allows companies to

approach security challenges from different angles and identify solutions

that may not have been considered otherwise. When a workforce is as diverse

as the cybersecurity threats an organization faces, it can pull from a

broader range of professional and personal experiences to more effectively

and inclusively protect itself and its end users.

AI's Teachable Moment: How ChatGPT Is Transforming the Classroom

"Teachers could say, 'Hey my students are really interested in TikTok,' then

feed that to the AI," says Liu. "An AI could come up with three analogies

related to TikTok that connect students to their needs and interests." Liu

believes we absolutely need to acknowledge the immediate threats surrounding

AI and its initial impact on teachers, particularly around skills

assessments and cheating. One approach he takes is to speak openly with

students and acknowledge that AI is the new thing and that we're all

learning about it – what it can do, where it might lead. The more open

conversations educators have with students, he says, the better. In the near

term, students are going to cheat. That's impossible to avoid. YouTube and

TikTok are bulging at the seams with tricks to help students avoid

plagiarism trackers. In the medium term, Liu believes, we need to reevaluate

what it means to grade students. Does that mean allowing students to use AI

in assessments? Or changing how to teach topics? Liu isn't 100% sure.

Top 5 Benefits Of Blockchain Technology

Transparency within the Blockchain ecosystem refers to the open visibility

of transactions, enabling all participants to validate and verify the

recorded data. Unlike traditional systems that rely on centralized

authorities, Blockchain operates on a decentralized network, where each

transaction is recorded on a public ledger known as the Blockchain. ...

Immutability is a cornerstone of Blockchain technology. It guarantees that

once a transaction is recorded on the Blockchain, it becomes virtually

impossible to alter or tamper with the data. This is achieved through a

combination of cryptographic techniques and consensus mechanisms. Blockchain

achieves data immutability by using cryptographic hashing. Each transaction

is assigned a unique cryptographic hash, which is essentially a digital

fingerprint. This hash is created by applying complex mathematical

algorithms to the transaction data, resulting in a fixed-length string of

characters. Furthermore, Blockchain relies on consensus mechanisms such as

Proof of Work (PoW) or Proof of Stake (PoS) to validate and verify

transactions.
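
The hashing step described above can be illustrated with a minimal sketch. It assumes SHA-256 as the hash function and a JSON-serialized dictionary as the transaction format; neither is specified in the article, and the `transaction_hash` helper and the sample fields are hypothetical. The sketch shows two properties the passage relies on: the digest has a fixed length regardless of input, and any change to the transaction data produces a different digest.

```python
import hashlib
import json


def transaction_hash(tx: dict) -> str:
    """Return a hex digest that acts as the transaction's fingerprint.

    Keys are sorted so that the same transaction always serializes to the
    same bytes and therefore to the same hash.
    """
    payload = json.dumps(tx, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


# Made-up transaction used only for illustration.
tx = {"sender": "alice", "recipient": "bob", "amount": 10}

original = transaction_hash(tx)
tampered = transaction_hash({**tx, "amount": 1000})

print(len(original))         # SHA-256 hex digests are always 64 characters
print(original == tampered)  # False: changing the amount changes the hash
```

Hashing alone only makes tampering detectable. In a real Blockchain, the consensus mechanism and the linking of each block to the hash of the previous one are what make rewriting recorded data impractical.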

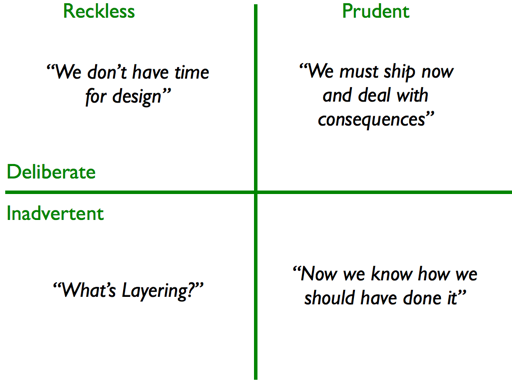

Technical Debt tracking supports projects to “do it right”

For decades, there have been logs of outstanding bugs found in testing but

not corrected before the project is implemented. The term technical debt

adds the concept that there are consequences to those decisions, and that

there are strong reasons to prioritize the follow-up to fix things and clear

that list. Most of us are aware of workarounds that were left in place

permanently and eventually cost too much. We may have seen a system with

poor performance that slowed the work of key workers and/or was missing

functionality that impacted the customer experience. All of these are

important reasons that technical debt should be cleared up. There are other

reasons too. Generally, people do not purposefully create poor designs or

code with bugs. ... One of the interesting concepts that has been

offered by Martin Fowler is the Technical Debt Quadrant that talks about the

prudent but inadvertent technical debt that is created as we learn during a

project and realize how the project should have been done.

Successful digital transformation requires simplistic thinking

While organizations are aggressively pursuing transformation goals, Chaudhry

warned that antiquated mindsets and a range of internal factors can

seriously inhibit innovation and prevent businesses from achieving their

goals. Most notable among these is a complacent culture among some IT

leaders who are stuck in a loop of traditional, outdated practices. “IT

plays the most important role in driving transformation. You play the most

important role, but you also need to act fast to drive change,” he said.

“You can’t sit back and say ‘this is how things have been done for the last

30 years, so let’s keep doing so’.” ... Inertia, as he puts it, is a

powerful inhibitor that locks IT leaders and organizations into an outdated

mindset which prevents them from embracing change. “Inertia is powerful, and

it holds you back because we are comfortable with what we’ve been doing for

the last 10, 20 years or so,” he said. Research has often identified inertia

as a common inhibitor in digital transformation, whereby teams are reluctant

- or unwilling - to accept change.

Strategies to drive the Data Mesh cultural transformation

It’s important to have consistent and clear communication to ensure that

everyone understands the reasons and the effects of change. Leaders must

communicate the vision and benefits of Data Mesh. They also need to give guidance on

how the new ways of working are going to be adopted through well-defined

structures, roles and responsibilities for the new data product teams. To

ensure data product ownership and accountability, defining clear KPIs and

metrics for each data product team to measure success and track progress is

critical. ... Rather than trying to adopt Data Mesh all at once,

organizations can start with small pilot projects and gradually expand. This

approach can help understand how processes defined in vitro work in real

life. It also comes with lessons learned which help followers avoid the

initial mistakes. ... This ensures that everyone in the

organization understands the new concepts and ways of working. It could

include training sessions and coaching on Data Mesh, product thinking,

design, user research, agile methodologies, cross-functional team

collaboration, and data product ownership.

Quote for the day:

"Leaders need to strike a balance between action and patience." --

Doug Smith