Apache Pulsar: A Unified Queueing and Streaming Platform

While streaming systems like Apache Kafka were capable of scaling (albeit with a
lot of manual effort around data rebalancing), their streaming APIs were not
always the right fit. Developers had to work around the limitations of a pure
streaming model and also learn a new way of thinking about and designing
systems, which made adoption for messaging use cases more difficult. With
Pulsar, the situation is different: developers can use a familiar messaging API
that behaves in a familiar way, while gaining more scalability and the
capabilities of a streaming system. Supporting both scalable messaging and
streaming is a challenge my team at Instructure faced, and in the effort to
solve it we discovered Pulsar. At Instructure, we were dealing with high-scale
workloads that needed messaging at greater scale. Initially, we tried to build
this by re-architecting around streaming technology. Then we found that Apache
Pulsar was the perfect fit: it gave teams the capabilities they needed without
the complexity of re-architecting around a streaming-based model.
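
To illustrate the difference between the two consumption styles, here is a
minimal in-memory sketch in Python. It is a toy model, not the Pulsar client API:
SharedQueue loosely mirrors queue-style consumption (roughly what Pulsar calls a
Shared subscription with individual acknowledgement), where each message goes to
one consumer and is acknowledged or re-queued on its own, while StreamLog models
a pure streaming consumer that tracks a single committed offset. All class and
method names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class SharedQueue:
    """Hypothetical toy queue: each message is handed to one consumer and
    acknowledged (or re-queued) individually, in any order."""
    pending: deque = field(default_factory=deque)
    in_flight: dict = field(default_factory=dict)
    next_id: int = 0

    def publish(self, payload):
        self.pending.append((self.next_id, payload))
        self.next_id += 1

    def receive(self, consumer):
        # Returns None when nothing is waiting; otherwise the oldest message.
        if not self.pending:
            return None
        msg_id, payload = self.pending.popleft()
        self.in_flight[msg_id] = (consumer, payload)
        return msg_id, payload

    def ack(self, msg_id):
        # Per-message ack: only this message is marked done.
        del self.in_flight[msg_id]

    def nack(self, msg_id):
        # A failed message goes back to the front of the queue for redelivery.
        _, payload = self.in_flight.pop(msg_id)
        self.pending.appendleft((msg_id, payload))


@dataclass
class StreamLog:
    """Hypothetical toy stream: an append-only log plus one committed
    offset for the consumer group."""
    log: list = field(default_factory=list)
    committed: int = 0

    def publish(self, payload):
        self.log.append(payload)

    def poll(self, max_records):
        # Reads always start at the committed offset, in log order.
        return self.log[self.committed:self.committed + max_records]

    def commit(self, offset):
        # Committing offset N implicitly marks every record before N as done.
        self.committed = offset
```

In the stream model, if record "a" fails but "b" succeeds, the consumer cannot
acknowledge "b" alone: committing offset 2 would also mark "a" as processed. That
is one example of the kind of limitation a pure streaming model forces
developers to work around, whereas the queue model can simply nack "a" and ack
"b".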

Thriving in the Complexity of Software Development Using Open Sociotechnical Systems Design

How, then, can companies make headway in such a turbulent environment? And how
can agile, and the pipe dream of a true product team, become reality? Let us
once more look at the theoretical underpinnings of STSD, especially the OST
extension developed by Fred Emery during the Norwegian Industrial Democracy
Program in 1967. Emery identified what he called the two genotypical
organisational design principles, simply named DP1 and DP2 (DP stands for design
principle), which are defined by the way organisations build in redundancy in
order to operate efficiently. In DP1 there is redundancy of parts: each part is
so simple that it can easily and cheaply be replaced. The simpler the parts, the
better, but that also means they must be coordinated or supervised in order to
complete a whole task. This is what we all know as the classical bureaucratic
hierarchy with maximum division of labour. The critical feature of DP1 is that
responsibility for coordination and control sits at least one level above where
the action is being performed.

Learn how Microsoft strengthens IoT and OT security with Zero Trust

The practice of adopting multiple tools to monitor different tiers of suppliers
increases complexity, which in turn increases the odds that a cyberattack can
produce a significant return for your adversary. Silos create additional
problems: different teams have different priorities, which may lead to
inconsistent risk priorities and practices. This inconsistency can cause
duplicated effort and gaps in risk analysis. Suppliers’ personnel are also a top
concern. Organizations want to know who has access to their data so they can
protect themselves from human liability, shadow IT, and other insider threats.
Supplier risk management needs an always-on, automated, integrated approach, but
current processes aren’t well suited to the task. To secure your supply chain,
it’s important to have a repeatable process that will scale as your organization
innovates. ... With the prevalence of cloud connectivity, IoT and OT have become
another part of your network. And because IoT and OT devices are typically
deployed in diverse environments, from inside factories or office buildings to
remote worksites or critical infrastructure, they’re exposed in ways that can
make them easy targets.

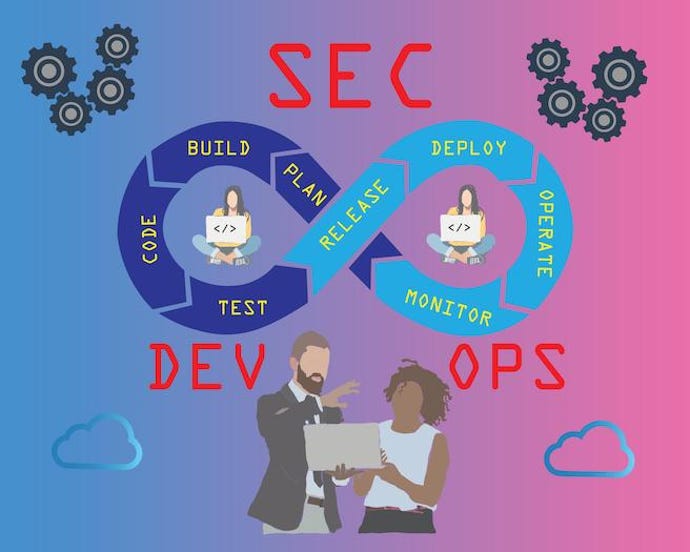

Humanizing hackers: Entering the minds of those behind the attacks

Developers are usually specialists in only one area, such as the front end, API
development, or databases. Their ability to perceive the entire system as a
whole is constrained both by their role in the organization and by the limits of
their systemic understanding. Typically, developers identify a problem and look
for the simplest and fastest possible solution (a patch-by-patch approach)
without having the full context. A developer’s primary focus is on user
experience or the quality of the application. If the immediate customer is
satisfied by delivered functionality rather than by security, the company is
unconcerned. Furthermore, developers are not always trained in security and
compliance, and security officers have little input on protocol or policy.
Security teams review applications and ecosystem security only retroactively,
once systems are already in production, and by that time it is too late. What
should be ingrained in the company DNA has become an after-the-fact
consideration. If a boat has an endless number of holes, it will eventually
sink; that’s why companies are becoming obvious targets for hackers.

Dridex Banking Malware Turns Up in Mexico

Metabase Q noticed three Dridex campaigns in Mexico starting in April of this
year, writes José Zorrilla of Metabase Q's Offensive Security Team, Ocelot, in a
blog post. The hosting and distribution point for Dridex was the website of
Odette Carolina Lastra García, a representative for the Green Party (Partido
Verde Ecologista de México) in the Congress of the state of Tabasco. Her website
may have been vulnerable to compromise and was used to distribute Dridex,
Zorrilla writes; the site was suspended around mid-October. In the first
campaign, in April, phishing emails sent around the world led to a version of
Dridex hosted on Lastra García's website. In the second, in August, deceptive
SMS messages purporting to come from the bank Citibanamex made the rounds; they
contained a link that redirected to Lastra García's infected website. The third
ruse used the SocGholish framework, which relies on several kinds of social
engineering to entice people into downloading a bogus software update that is
actually a remote access Trojan.

How leaders can help teams fight fatigue: 7 practical tips

Perhaps the most novel idea is to get ahead of fatigue: name it, point to it as
something people (managers in particular) should be on the lookout for, and
encourage known prevention practices before it becomes a problem that needs to
be solved. More importantly, encourage everyone to look out for each other,
because caring for others is proven to help stave off your own burnout. We coach
people to look for the signs rather than necessarily asking about them; it can
be hard for people to self-diagnose burnout, but easier for others to observe
changes in their behavior. We look for cynicism, dissatisfaction, lack of
motivation, irritability, impatience, and tiredness. We ask how they have
experienced changes in their feelings, thinking, and behavior, and even whether
they are aware of the known signs of burnout. When the earliest signs are
spotted, we want to get ahead of burnout with overt encouragement. We use
team-wide Slack conversations to demonstrate and celebrate self-care, in order
to remove the stigma and the sense that time spent on self-care must be taken
offline or kept undisclosed.

How an as-a-service model lends itself to achieving climate change goals

More importantly, a circular economy also requires a shift in the way we do
business: from purchasing and installing huge amounts of equipment that is
underutilised to an as-needed model. Adopting a “product-as-a-service” business
model is one of the most impactful changes we can make today. It not only makes
our economy more circular by breaking established patterns of mismatched supply
and demand; it also has the potential to generate significant growth
opportunities for any industry. As-a-service is a radical departure from a
commoditised business model in which companies sell a product and consider their
job done. Instead, the producer retains ownership of, and responsibility for,
the product throughout its entire life cycle. The customer has full use of the
product for as long as it is needed, paying only for the time it is actually
used rather than for the product itself or its upkeep. The producer, in turn, is
responsible for building a quality product that lasts and is energy- and
material-efficient. It is also the producer’s role to take the product back and
prepare it (or its components) for reuse.

NFT is enough: why digital art is much more than copy and paste

NFTs aren’t just a buyers’ game, though; far from it. The creation of digital
artwork and its sale using blockchain technology has opened up a whole new
marketplace for budding artists and is blurring geographical boundaries. While
artists creating physical work are often tied to their local markets or to the
specific galleries displaying their pieces, NFTs and the internet give those
producing digital pieces a global audience at their fingertips. The role
blockchain plays in providing proof of ownership and authenticity is vital for
these artists too. Without a large existing following, many artists, including
those from remote parts of the world, may struggle to prove their credibility.
However appealing the art itself, art lovers are unlikely to purchase an item
without concrete proof of its authenticity. NFTs essentially level the playing
field and create opportunities for millions of artists to get their pieces
recognised worldwide. While reputation will still, as one would expect, be a
contributing factor, NFTs let the artwork speak for itself.

The New Enterprise Risk Management Strategy

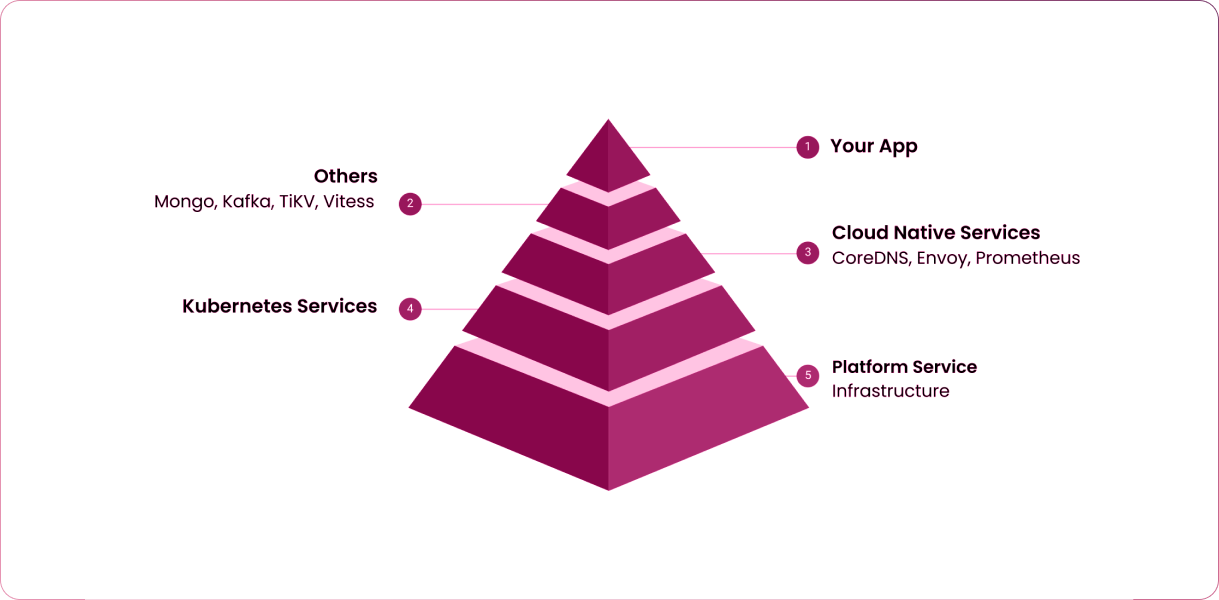

As more applications, systems and infrastructure are designed and built to be
highly distributed and always available, they become highly resilient, fault
tolerant, elastic and scalable, whether in the cloud, on-premises, or both. This
helps address the availability aspect of the C.I.A. (confidentiality, integrity,
availability) triad. Because the applications, systems, and infrastructure are
created to be immutable, even small changes are detected very easily, which
makes integrity far easier to maintain. Integrity problems occur when changes,
whether intentional or unintentional, can be made in ways that are very hard to
detect; immutability therefore addresses the integrity aspect of the C.I.A.
triad. ... According to Rinehart, the co-founder and CTO of Verica, Security
Chaos Engineering is a way to approach security differently. The idea is to test
the resiliency of security controls continuously and automatically in the face
of chaos (simulated real-life events on real production systems, introduced in a
controlled manner) without affecting other systems. This helps security
practitioners build confidence in those controls and, over time, learn about and
improve their resiliency and effectiveness.
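
To make the integrity and chaos-engineering ideas concrete, here is a minimal
Python sketch under a hypothetical setup: each deployed artifact's configuration
is treated as immutable, and its SHA-256 fingerprint is recorded at deploy time.
The integrity control flags any hash mismatch; the chaos experiment injects a
simulated unauthorized change into a copy of the configuration and reports
whether the control caught it. The names fingerprint, drift_detected and
chaos_experiment are illustrative only and are not part of Verica's or any other
tool's API. A real Security Chaos Engineering experiment would run against
production systems under controlled conditions, not against an in-memory copy.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    # Hypothetical helper: canonical JSON (sorted keys) so that key order
    # does not change the hash, only actual content does.
    canonical = json.dumps(config, sort_keys=True).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def drift_detected(baseline_hash: str, live_config: dict) -> bool:
    # Integrity control: any change to an immutable artifact shows up as a
    # mismatch against the hash recorded at deploy time.
    return fingerprint(live_config) != baseline_hash


def chaos_experiment(baseline: dict, mutation: dict) -> bool:
    # Hypothetical experiment: apply a simulated unauthorized change to a
    # shallow copy (the baseline dict itself is not modified) and return
    # True if the integrity control detects it.
    baseline_hash = fingerprint(baseline)
    tampered = {**baseline, **mutation}
    return drift_detected(baseline_hash, tampered)
```

Running chaos_experiment repeatedly with different mutations (disabling TLS,
widening an allowed network range, and so on) is one way to build the kind of
ongoing confidence in a control that the paragraph describes; a mutation that
leaves the configuration unchanged should, correctly, not trigger detection.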

How to manage endpoint security in a hybrid work environment

The issue with remote working is that lots of employees leave their work devices
at the office (or don’t have any at all) and end up using their own personal
electronics for work purposes. Often, these devices are insecure and put
business data at risk. Moore says employees can access secure office-based
machines from home through virtual desktop infrastructure (VDI), but they still
need to use their own computer to do so. He warns: “Endpoint security may not be
the first thought on employees’ minds, which can cause issues when data is
transferred to these devices not owned by the company. Even with regulations
drawn up, employees are able to transfer data relatively easily.” Hybrid working
can also exacerbate the risk of illicit data transfers by people within an
organisation. “This can be where the employee is in the early stages of exiting
a company and considering taking company information with them,” says Moore.
“Furthermore, there is a threat of the employee who wants to damage the company
by stealing sensitive data, which is made much harder to police when remote
working.”

Quote for the day:

"It is the capacity to develop and improve their skills that distinguishes
leaders from followers." -- Warren G. Bennis