All your serverless are belong to us

Though serverless has been enabled by the clouds, serverless functions aren’t
simply a big cloud game. As Vercel CEO (and Next.js founder) Guillermo Rauch
details in the Datadog report, “Two years ago, Next.js introduced first-class
support for serverless functions, which helps power dynamic server-side
rendering (SSR) and API routes. Since then, we’ve seen incredible growth in
serverless adoption among Vercel users, with invocations going from 262 million
a month to 7.4 billion a month, a 28x increase.” From such examples, and many
others (including ever shorter function invocation times, which indicate that
enterprises are becoming more proficient with functions), it’s clear that
serverless computing has taken off. Vendors will continue to press the “no
lock-in” marketing button, but customers don’t seem to care. Rather, they may
care about lock-in, but they care much more about accelerating their time to
customer value. In enterprise computing, as in life, there are always
trade-offs. The cost of a perfectly lock-in-free existence is
lowest-common-denominator code that is generic across hardware/cloud platforms.

5 Things To Remember When Upgrading Your Legacy Solution

Contrary to popular belief, a legacy framework is not necessarily old. The real
problem with these frameworks is that they are still in use even though they
frequently fail to meet critical demands and to support core business
operations as intended. So, let’s face it: when there is legacy software you
cannot replace, you should at least modernize it.
"Legacy systems are not that safe," says Daniela Sawyer, Founder and Business
Development Strategist of FindPeopleFast.net. "This happens because, being the
older technology, they are not usually supported by the company or the vendor
who created it in the first place. Also, it lacks having regular updates and
patches to maintain the pace with the modern world. So the new update should
ensure the security aspect precisely." Although it might appear as though you're
saving costs when you don't spend money updating your digital product, that
decision might cost you much more over the long haul.

The role of visibility and analytics in zero trust architectures

There are three NIST architecture approaches for ZTA that have network
visibility implications. The first is enhanced identity governance, which (for
example) means using the identity of users to allow access to specific
resources only once that identity is verified. The second is
micro-segmentation, e.g., dividing cloud or data center assets or workloads
and segmenting their traffic from other traffic to contain breaches and
prevent lateral movement. The third is network infrastructure and
software-defined perimeters, such as zero trust network access (ZTNA), which
for example allows remote workers to connect only to specific resources. NIST
also describes monitoring of ZTA deployments, outlining that network
performance monitoring will need security capabilities for visibility. This
means that traffic should be inspected and logged on the network (and analyzed
to identify and react to potential attacks), including asset logs, network
traffic and resource access actions. Furthermore, NIST expresses concern about
the inability to access all relevant and encrypted traffic, which may
originate from non-enterprise-owned assets or from applications and/or
services that are resistant to passive monitoring.
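To make the identity-governance and logging points concrete, here is a minimal sketch in Python. It is not taken from the NIST guidance: the `POLICY` table, the `AccessRequest` type and the `is_allowed` function are hypothetical names invented for illustration, and the `verified` flag stands in for an identity check assumed to happen elsewhere. The idea it shows is deny-by-default access per resource, with every decision logged so it can be analyzed later.

```python
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("zta.access")

# Hypothetical policy: which identities may reach which resources.
POLICY = {
    "alice": {"payroll-db"},
    "bob": {"build-server", "wiki"},
}


@dataclass(frozen=True)
class AccessRequest:
    user: str
    verified: bool  # outcome of an identity check performed elsewhere (assumed)
    resource: str


def is_allowed(req: AccessRequest) -> bool:
    """Deny by default; allow only verified users on explicitly granted resources."""
    allowed = req.verified and req.resource in POLICY.get(req.user, set())
    # Log every resource access decision, allowed or not, for later analysis.
    log.info(
        "user=%s resource=%s verified=%s allowed=%s",
        req.user, req.resource, req.verified, allowed,
    )
    return allowed
```

Calling `is_allowed(AccessRequest("alice", True, "wiki"))` is denied in this sketch because the resource was never granted to that user, even though the identity is verified; a real deployment would enforce this in a policy engine rather than in application code.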

How business and IT can overcome the data governance challenge

Business heads and their teams, after all, are the ones who have the
knowledge about the data: what it is, what it means, who and what processes
use it and why, as well as what rules and policies should apply to it.
Without their perspective and participation in data governance, the
enterprise’s ability to intelligently lock down risks and enable growth will
be seriously compromised. However, with their engagement, sustainable
payback will be achieved and the case for continuing commitment by the
enterprise to data governance will be easier to justify. It is vital,
however, that modern data governance is a strategic initiative. A data
governance strategy is the foundation upon which to build a muscular
data-driven organization. Appropriately implemented (with business data
stakeholders driving alignment between data governance and strategic
enterprise goals, and IT handling the technical mechanics of data
management), it opens the door to trusting data and using it effectively.
Data definitions can be reconciled and understood across business divisions,
knowledge base quality can be guaranteed, and security and compliance do not
have to be sacrificed even as information accessibility expands.

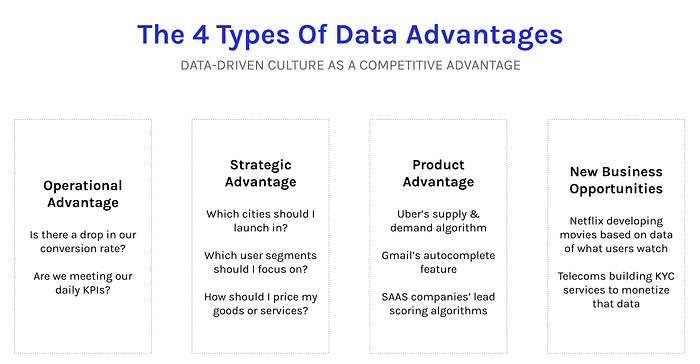

Data Advantage Matrix: A New Way to Think About Data Strategy

For SaaS companies, the funnel is everything. Optimizing metrics at every
stage of the funnel is what accelerates SaaS companies from average to
exponential growth. So for any SaaS founder, if you don’t have basic
operational analytics set up on day one, you’re probably doing something
wrong. This fictional SaaS startup would start at the top left of the matrix
with basic operational analytics. These analytics don’t have to be
complicated. At Stage 1, it’s all about getting the basics right: measuring
the number of leads per day, users converting on the site, users signing up
on the product, free trials that end up paying, etc. Given the importance of
operational analytics, it would make sense for this startup to move to Stage
2 pretty quickly, converting its basic analytics into something more
scalable like a centralized intelligence engine. This would include
investing in a data warehouse that brings all data into one place,
adding a BI tool, and hiring the first analysts to drive data-driven
decisions where it matters most.
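As a rough illustration of how simple Stage 1 analytics can be, the sketch below computes the conversion rate between consecutive funnel stages. The stage names and daily counts are made up for the example, and `stage_conversion_rates` is a hypothetical helper, not part of any analytics product.

```python
# Hypothetical daily counts for each funnel stage, listed in funnel order.
funnel = [
    ("leads", 1000),
    ("site_conversions", 200),
    ("signups", 100),
    ("paid_after_trial", 25),
]


def stage_conversion_rates(stages):
    """Return (from_stage, to_stage, rate) for each consecutive pair of stages."""
    rates = []
    for (prev_name, prev_count), (name, count) in zip(stages, stages[1:]):
        # Guard against an empty upstream stage to avoid dividing by zero.
        rate = count / prev_count if prev_count else 0.0
        rates.append((prev_name, name, rate))
    return rates


rates = stage_conversion_rates(funnel)
for src, dst, rate in rates:
    print(f"{src} -> {dst}: {rate:.1%}")
```

Tracking these few ratios day over day is already enough to see which stage of the funnel leaks the most, which is the kind of question a Stage 2 warehouse and BI tool would later answer at scale.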

Fintech has a gender diversity problem — here’s how we tackle it

Diversity can both empower individuals and spark feelings of inclusion
across society. It encourages different perspectives and promotes tolerance
and understanding within workplaces. And in business, quite rightly, the
topic has entered the mainstream. Take the engineering industry as an
example, where gender equality figures are showing an encouraging, steady
upward trajectory. In law, female representation across the world is also
respectable. Both show gender equality is slowly, but surely, moving in the
right direction, but sadly in financial technology (Fintech), the same is
yet to be realised. A report by Innovate Finance found women still account
for less than 30% of the Fintech workforce, with less than 20% in executive
positions. By 2026, the industry is estimated to grow by 20%, hitting the
$324 billion mark in value, meaning that the gender gap will soon widen even
further. But how can Fintech continue to progress and thrive if it isn’t a
desirable industry for all? Clearly, the industry needs to do more. The
question is, how?

The IT Talent Crisis: 2 Ways to Hire and Retain

While CompTIA’s research notes that money is the top reason for workers to
leave, another factor is the lack of opportunity. “Our research indicates
that a top reason tech workers consider leaving is a lack of career growth
opportunities, a telling message to employers not to underestimate the value
of investing in staff training and professional development,” said Tim
Herbert, executive VP for research and market intelligence at CompTIA, in a
press release. Investing in employee training during a labor crunch can also
have downsides if employees take advantage of training and then use those
added skills to parlay their way into a new opportunity elsewhere. But if
employees are leaving, they are also going somewhere. Pyle recommends
that organizations not only look carefully at their compensation package
offers but also consider casting a wider net for candidates by looking
outside of their usual geography. “The hybrid work environment works,” he
says. “People can work from anywhere. If we are bringing in the right talent
we can bring them in from anywhere, as long as they can do the job.”

CIO role: How to move from gatekeeper to advisor

Historically, the role of the CIO focused on identifying, implementing, and
maintaining business IT systems, with budget set aside to explore and drive
innovation within the organization. Moving into the 2000s and 2010s, CIOs
were tasked with spearheading digital transformation and the journey to the
cloud. Today’s CIO must work as an advisor and partner to departments across
the organization, seeking to understand the needs of the wider business and
ensuring that those needs are met in a way that works both for the
individual and the wider business aims. However, this distributed approach
brings challenges: How, for example, does IT respond to a vulnerability in a
piece of software that IT did not know was running? Many IT leaders tell us
that the barrier between shadow IT and business-led IT is becoming more and
more blurred and is forcing tradeoffs around risk vs. flexibility. It is
IT’s role to not only facilitate business needs but also ensure compliance
and security. This creates tension between offering advice vs. imposing
governance.

Hackers Disrupt Canadian Healthcare and Steal Medical Data

The attack has resulted in ongoing disruptions to care in addition to
exposed data. The province comprises four regional health authorities,
although data was not stolen from all of them: Western Health - no data
believed to have been stolen; Central Health - data exposure unclear;
Eastern Health - 14 years of data exposed; and Labrador-Grenfell - 9 years
of data exposed. Officials say they're attempting to restore systems from
backups, and that the process is not yet complete. On Thursday, for example,
public broadcaster CBC reported that while the Health Sciences Center
hospital in the city of St. John's had restored its Meditech system, which
handles patient health information and financial details, the restored
system only included information from before the attack. Each health
authority has been publishing its own updates on the disruptions it
continues to face. Through at least Wednesday, for example, Western Health
noted that only some appointments would be proceeding, including
chemotherapy appointments "at a reduced capacity."

The Renaissance of Code Documentation: Introducing Code Walkthrough

Because inline comments describe only the specific code area they are
attached to, without a broader scope, they are always limited. High-level
documents, for their part, can indeed provide the big picture, but they lack
the details that developers need for their work. For example, in the
documentation about extending git’s source code, you can definitely describe
something like the general process of creating a new git command in a
high-level document. However, you won’t be able to do so effectively without
getting into specific details and giving examples from the code itself. ...
Code-Walkthrough Documentation takes the reader on a “walk” made up of at
least two stations within the code. These documents describe flows and
interactions, and they may rely on incorporating code snippets or tokens to
do so. In other words, they are code-coupled, in accordance with the
principles of Continuous Documentation. This kind of document provides an
experience similar to getting familiar with a codebase with the help of an
experienced contributor who walks you through the code.
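One way to picture what "code-coupled" could mean in practice is a check that the snippets quoted in a walkthrough still appear in the code. The sketch below is only an illustration under that assumption; it is not how any particular documentation tool works, and `find_stale_snippets`, the station names and the file contents are all hypothetical.

```python
def find_stale_snippets(doc_snippets, source_files):
    """Return (station, path) for snippets that no longer appear verbatim.

    doc_snippets: list of (station_name, path, snippet) tuples quoted by the doc.
    source_files: dict mapping path -> current file contents.
    """
    stale = []
    for station, path, snippet in doc_snippets:
        # A missing file is treated the same as a file without the snippet.
        if snippet not in source_files.get(path, ""):
            stale.append((station, path))
    return stale


# Hypothetical two-station walkthrough and an in-memory stand-in for the code.
walkthrough = [
    ("register command", "commands.py", "def register(name, handler):"),
    ("dispatch command", "main.py", "handler = COMMANDS[name]"),
]
sources = {
    "commands.py": "COMMANDS = {}\n\ndef register(name, handler):\n    COMMANDS[name] = handler\n",
    "main.py": "def run(name):\n    fn = COMMANDS[name]\n    return fn()\n",
}

stale = find_stale_snippets(walkthrough, sources)
print(stale)
```

Here the second station is reported as stale because the variable it quotes was renamed in `main.py`, which is exactly the kind of drift a code-coupled document is meant to surface.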

Quote for the day:

"You can't be a leader if you can't influence others to act." -- Dale E. Zand
