Cyber Security In Cars

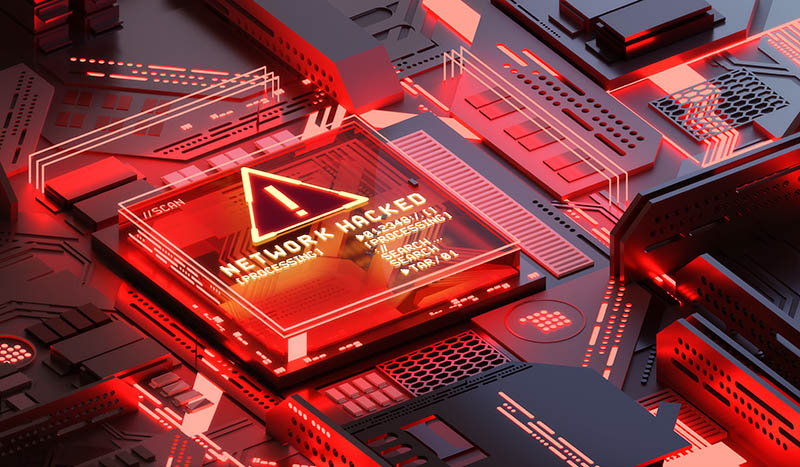

ISO/SAE 21434, Road vehicles – Cybersecurity engineering, addresses the

cybersecurity perspective in engineering of electrical and electronic (E/E)

systems within road vehicles. It will help manufacturers keep abreast of

changing technologies and cyber-attack methods, and defines the vocabulary,

objectives, requirements and guidelines related to cybersecurity engineering for

a common understanding throughout the supply chain. The standard, developed in

collaboration with SAE International, a global association of engineers and a

key ISO partner, draws on the recommendations detailed in SAE J3061,

Cybersecurity guidebook for cyber-physical vehicle systems, offering more

comprehensive guidance and the input of experts all around the world. Dr Gido

Scharfenberger-Fabian, Convenor of the group of ISO experts that developed the

standard, said it will enable organizations to define cybersecurity policies and

processes, manage cybersecurity risk and foster a cybersecurity culture.

“ISO/SAE 21434 will help consider cybersecurity issues at every stage of the

development process and in the field, increasing the vehicle’s own cybersecurity

defences and mitigating the risk of potential vulnerabilities for every

component,” he said.

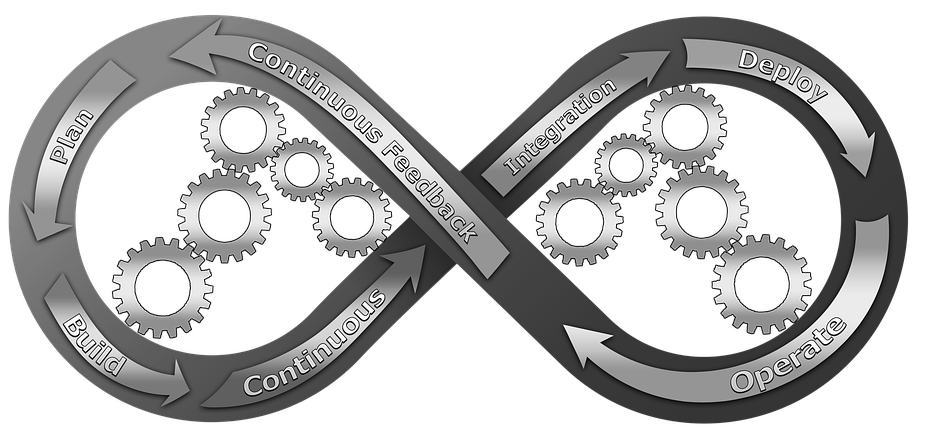

Ultimate Guide to Becoming a DevOps Engineer

The job title DevOps Engineer is thrown around a lot and it means different

things to different people. Some people claim that the title DevOps

Engineer shouldn’t exist, because DevOps is ‘a culture’ or ‘a way of

working’—not a role. The same people would argue that creating an additional

silo defeats the purpose of overlapping responsibilities and having different

teams working together. These arguments are not wrong. In fact, some companies

that understand and do DevOps engineering very well don’t even have a role with

that name (like Google!). The truth is that whenever you see DevOps Engineer

jobs advertised, the ad might actually be for an infrastructure engineer, a

systems reliability engineer (SRE), a CI/CD engineer, a sysadmin, etc. So the

definition for DevOps engineer is rather broad. One thing that’s certain, though,

is that to be a DevOps engineer, you must have a solid understanding of the DevOps

culture and practices and you should be able to bridge any communication gaps

between teams in order to achieve software delivery velocity.

WhatsApp fined a record 225 mln euro by Ireland over privacy

A WhatsApp spokesperson said in a statement the issues in question related to

policies in place in 2018 and the company had provided comprehensive

information. "We disagree with the decision today regarding the transparency

we provided to people in 2018 and the penalties are entirely

disproportionate," the spokesperson said. EU privacy watchdog the European

Data Protection Board said it had given several pointers to the Irish agency

in July to address criticism from its peers for taking too long to decide in

cases involving tech giants and for not fining them enough for any breaches.

It said a WhatsApp fine should take into account Facebook's turnover and that

the company should be given three months instead of six months to comply.

Europe's landmark privacy rules, known as GDPR, are finally showing some teeth

even if the lead regulator for some tech giants appears otherwise, said Ulrich

Kelber, Germany's federal commissioner for data protection and freedom of

information. "What is important now is that the many other open cases on

WhatsApp in Ireland are finally decided on so that we can take faster and

longer strides towards the uniform enforcement of data protection law in

Europe," he told Reuters.

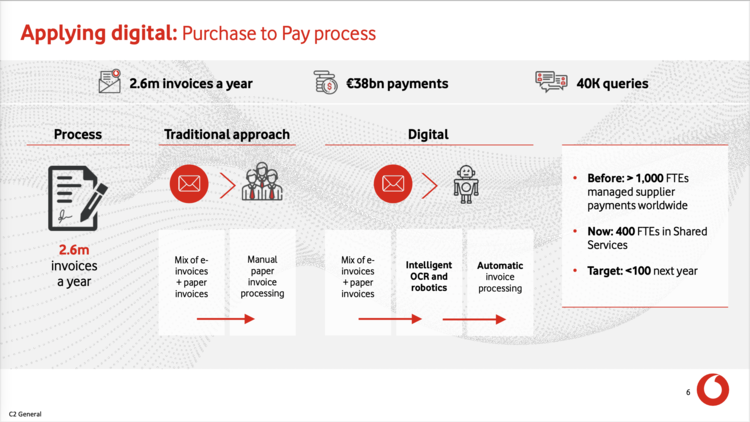

DevOps, Low-Code and RPA: Pros and Cons

RPA programs enable companies to automate repetitive tasks by creating

software scripts using a recorder. For those of us who remember using the

macro recorder in Microsoft Excel, it’s a similar concept. Once the script is

created, users can then use a visual editor to modify, reorder and edit its

steps. Speaking to the growing popularity of these solutions was the UiPath

IPO on April 21, 2021, which ended up being one of the largest software IPOs

in history. The use cases for RPA programs are unlimited—any repetitive task

done via a UI is a candidate. RPA is an area where we’ve seen an intersection

of business-user designed apps (UiPath and Blue Prism) with more traditional

DevOps tools specifically in the test automation space (Tricentis, Worksoft,

and Eggplant) and new conversational-based solutions like Krista. In the case

of test automation, a lightweight recorder is given to a business user who can

then record a business process. The recording is then fed to the automation

team, which creates a hardened test case that in turn is fed into a CI/CD

system.

IBM quantum computing: From healthcare to automotive to energy, real use cases are in play

Quantum computers are better at that than classical computers, Utz said.

Anthem is running different models on IBM's quantum cloud. Right now, company

officials are building a roadmap around how Anthem wants to deliver its

platform using quantum technology, so "I can't say quantum is ready for

primetime yet," Utz said. "The plan is to get there over the next year or so

and have something working in production." A good place to start with anomaly

detection is in finding fraud, he said. "Classical computers will tap out at

some point and can't get to the same place as quantum computers." Other use

cases are around longitudinal population health modeling, meaning that as

Anthem looks at providing more of a digital platform for health, one of the

challenges is that there is "almost an infinite number of relationships," he

said. This includes different health conditions, providers patients see,

outcomes and figuring out where there are outliers, he said. "There's only so

much a classical system can do there, so we're looking for more opportunities

to improve healthcare for our members and the population at large," and the

ability to proactively predict risk, Utz said.

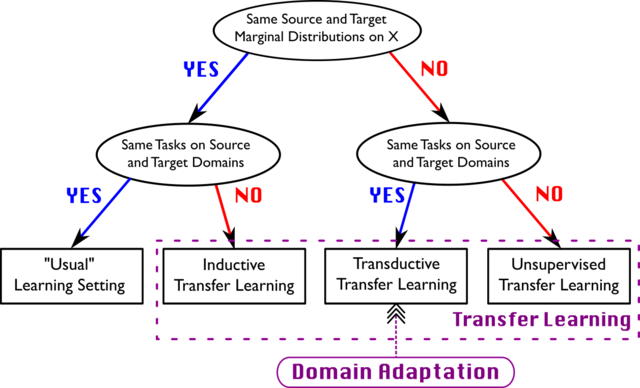

How to Implement Domain-Driven Design (DDD) in Golang

Domain-Driven Design is a way of structuring and modeling the software after

the Domain it belongs to. What this means is that a domain first has to be

considered for the software that is written. The domain is the topic or

problem that the software intends to work on. The software should be written

to reflect the domain. DDD advocates that the engineering team meet with the

Subject Matter Experts (SMEs), the experts inside the domain. The reason is

that the SMEs hold the knowledge about the domain, and that knowledge should

be reflected in the software. This makes sense when you think about it: if I

were to build a stock trading platform, do I as an engineer know the domain

well enough to build a good one? The platform would probably be a lot better

off if I had a few sessions with Warren Buffett about the domain. The

architecture in the code should also reflect the domain.
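
As a rough illustration of the idea above, the sketch below models a tiny slice of a stock trading domain in Go. Every name in it (`Ticker`, `Money`, `Order`, `NewOrder`, `ErrInvalidOrder`) is hypothetical and chosen only for this example, and the validation rule is an assumption standing in for what an SME would actually tell you; it is not taken from the original article.

```go
package main

import (
	"errors"
	"fmt"
)

// Ticker is a value object: it has no identity and is compared by value.
type Ticker string

// Money is a value object. Amounts are kept in cents to avoid float rounding.
type Money struct {
	Cents    int64
	Currency string
}

// Order is an entity: two orders with identical fields are still different
// orders, so each one carries its own ID.
type Order struct {
	ID       string
	Ticker   Ticker
	Quantity int
	Limit    Money
}

// ErrInvalidOrder is returned when an order breaks the (assumed) domain rule.
var ErrInvalidOrder = errors.New("order needs a ticker and a positive quantity")

// NewOrder is a factory that keeps the domain rule in one place, so the rest
// of the code never sees an invalid Order.
func NewOrder(id string, t Ticker, qty int, limit Money) (Order, error) {
	if t == "" || qty <= 0 {
		return Order{}, ErrInvalidOrder
	}
	return Order{ID: id, Ticker: t, Quantity: qty, Limit: limit}, nil
}

// Total returns the maximum cost of the order at its limit price.
func (o Order) Total() Money {
	return Money{Cents: o.Limit.Cents * int64(o.Quantity), Currency: o.Limit.Currency}
}

func main() {
	order, err := NewOrder("ord-1", "ACME", 10, Money{Cents: 1250, Currency: "USD"})
	if err != nil {
		fmt.Println("rejected:", err)
		return
	}
	fmt.Printf("%s: %d x %s, max cost %d cents\n",
		order.ID, order.Quantity, order.Ticker, order.Total().Cents)
}
```

The point of the sketch is that the types use the vocabulary of the domain (orders, tickers, limits) rather than generic technical terms, so a conversation with a domain expert maps directly onto the code.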

China’s Personal Information Protection Law and Its Global Impact

The law’s restrictions on cross-border data transfers may not affect

retailers that operate domestically, and hence have no need to transfer

information abroad. However, the story is vastly different for two types of

companies: those in possession of large amounts of personal information and

those in possession of information on critical infrastructure. Moreover,

PIPL declares that the authority of domestic regulators supersedes that of

international treaties. PIPL will help foreign companies operating in China

without cross-border data transfers to develop privacy policies in

compliance with the law. Before PIPL, the lack of a domestic PI protection

law led to the broad adoption of the EU’s GDPR as a privacy policy among

foreign companies. However, the GDPR’s decision-making is based on

agreements among EU member states, which does not apply in the case of

China. Since PIPL will come into effect in November 2021, foreign firms in

China will need to revise their privacy policies to fit the requirements of

the new law.

10 Characteristics of an AI-Powered Enterprise

Digital transformation makes the inclusion of AI as part of the business

strategy even more important than it would be otherwise because digital

organizations are software companies. Since commercial applications and

tools are increasingly taking advantage of AI, the logical development by

extension is AI embedded in enterprise-built applications. After all,

businesses are moving more data and compute to the cloud and their new

applications are being designed as cloud-first applications. Of course, AI

and machine learning tooling is also available in the cloud, so developers

have what they need to build “intelligent” applications. AI and machine

learning don't just work, however. They require testing and monitoring.

“Losing trust in AI-infused applications is a high risk for AI-based

innovation,” said Diego Lo Giudice, VP and principal analyst at Forrester,

in a blog post. “Forrester Analytics data shows that 73% of enterprises

claim to be adopting AI for building new solutions in 2021, up from 68% in

2020, and testing those AI-infused applications becomes even more critical.”

Trust and safety are things that need to be proven through testing.

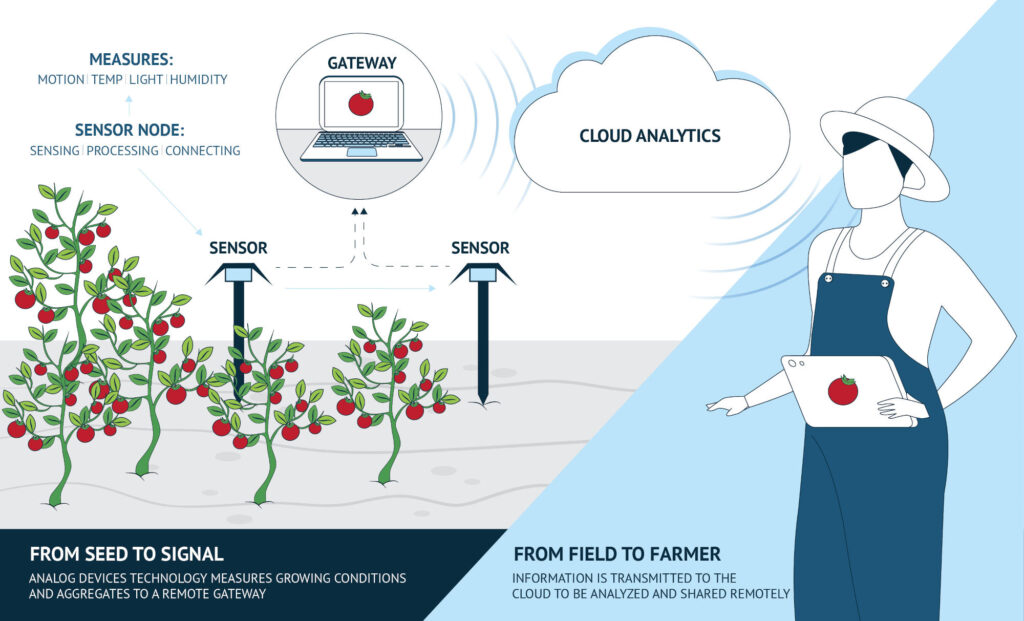

Why Rust is the best language for IoT development

Internet of Things (IoT) technology is rapidly terraforming the landscape of

modern society right in front of our very eyes, and propelling us all into

the future. It does this by providing solutions to everything from tracking

your daily personal fitness goals with an Apple watch, to completely

revolutionising the entire transport sector. These devices connect to each

other and form the great network required for something like a digital twin;

they are constantly collating data in real time from the surrounding

environment which means that the system is always using entirely current

information. As amazing and powerful as this technology is, it is slightly

held back by the fact that, by their very nature, IoT devices have far less

processing power than your average piece of equipment. This requires much

more efficient code to be written to fully take advantage of its raw

potential without affecting the device’s performance. This is where Rust

comes into the picture as one of the very few languages that can provide a

faster runtime for IoT technology.

Are Tesla’s Dojo supercomputer claims valid?

The D1, according to Tesla, features 362 teraFLOPS of processing power. This

means it can perform 362 trillion floating-point operations per second

(FLOPS), Tesla says. Now imagine harnessing the processing power of 25 D1

chips into a training tile, and then linking together 120 training tiles

through multiple servers. That’s what Tesla is doing with the Dojo

supercomputer for its autonomous cars. And with each training tile

containing 9 PFLOPS of computing power, Dojo has (by my possibly inaccurate

calculations) 1.08 exaFLOPS of power under its hood (Tesla calls it

1.1 EFLOPS). That kind of horsepower would make Dojo more than twice as fast

as the currently acknowledged fastest supercomputer in the world, Fugaku.

Built by Fujitsu, this supercomputer reaches speeds of 442 PFLOPS.

Supercomputers already are being used to accelerate medical research and

drug development because they are capable of quickly processing massive

amounts of data. Indeed, researchers have relied on supercomputers to power

COVID-19 research since the pandemic began in early 2020.
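
The Dojo arithmetic quoted above can be checked with a few lines of Go. This is only a sanity check that takes the quoted figures at face value (362 teraFLOPS per D1 chip, 25 chips and 9 PFLOPS per tile, 120 tiles, 442 PFLOPS for Fugaku); the constant and function names are made up for this sketch.

```go
package main

import "fmt"

// Figures as quoted in the text above; nothing here is independently measured.
const (
	chipTFLOPS   = 362.0 // per D1 chip
	chipsPerTile = 25
	tilePFLOPS   = 9.0 // per training tile, as quoted (rounded)
	tiles        = 120
	fugakuPFLOPS = 442.0
)

// TileFromChipsPFLOPS derives a tile's throughput from its chips,
// converting teraFLOPS to petaFLOPS.
func TileFromChipsPFLOPS() float64 {
	return chipsPerTile * chipTFLOPS / 1000
}

// DojoPFLOPS multiplies the quoted per-tile figure by the tile count.
func DojoPFLOPS() float64 {
	return tiles * tilePFLOPS
}

func main() {
	fmt.Printf("one tile from 25 chips: %.2f PFLOPS\n", TileFromChipsPFLOPS())
	fmt.Printf("Dojo total: %.2f EFLOPS\n", DojoPFLOPS()/1000)
	fmt.Printf("ratio to Fugaku: %.2f\n", DojoPFLOPS()/fugakuPFLOPS)
}
```

With these inputs, 25 chips give 9.05 PFLOPS per tile (quoted as 9), 120 tiles give 1,080 PFLOPS or 1.08 EFLOPS, and the ratio to Fugaku's quoted 442 PFLOPS is about 2.4, which matches the "more than twice as fast" claim.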

Quote for the day:

"Great leaders go forward without

stopping, remain firm without tiring and remain enthusiastic while

growing." -- Reed Markham
