Cloud Applications Are The Major Catalysts For Cyber Attacks

Cybersecurity threats have risen substantially in recent years because

criminals have built lucrative businesses from stealing data and nation-states

have come to see cybercrime as an opportunity to acquire information, influence,

and advantage over their rivals. This has paved the way for potentially catastrophic

attacks such as the WannaCrypt ransomware campaign, which featured in

recent headlines. This evolving threat landscape has begun to change the way

customers view the cloud. “It was only a few years ago when most of my customer

conversations started with, ‘I can’t go to the cloud because of security. It’s

not possible,’” said Julia White, Microsoft’s corporate vice president for Azure

and security. “And now I have people, more often than not, saying, ‘I need to go

to the cloud because of security.’” It’s not an exaggeration to say that cloud

computing is completely changing our society. It’s upending major industries such

as the retail sector, enabling the type of mathematical computation that is

fueling an artificial intelligence revolution and even having a profound

impact on how we communicate with friends, family, and colleagues.

Intel AI chief Wei Li: Someone has to bring today's AI supercomputing to the masses

As is often the case in technology, everything old is new again. Suddenly, says

Li, everything in deep learning is coming back to the innovations of compilers

back in the day. "Compilers had become irrelevant" in recent years, he said, an

area of computer science viewed as largely settled. "But because of deep

learning, the compiler is coming back," he said. "We are in the middle of that

transition." In his PhD dissertation at Cornell, Li developed a computer

framework for processing code in very large systems with what are called

"non-uniform memory access," or NUMA. His program refashioned code loops for the

greatest possible degree of parallel processing. But it also did something else

particularly important: it decided which code should run depending on which

memories the code needed to access at any given time. Today, says Li, deep

learning is approaching the point where those same problems dominate. Deep

learning's potential is mostly gated not by how many matrix multiplications can

be computed but by how efficiently the program can access memory and

bandwidth.
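
One common way to reason about the memory-versus-compute limit described above is the roofline model, in which attainable throughput is the smaller of peak compute and memory bandwidth multiplied by arithmetic intensity. The sketch below is illustrative only: the hardware figures are hypothetical, and nothing here is taken from Li's work or from any Intel product.

```python
def attainable_flops(peak_flops: float,
                     memory_bandwidth: float,
                     arithmetic_intensity: float) -> float:
    """Roofline estimate of attainable FLOP/s.

    peak_flops: peak compute rate in FLOP/s
    memory_bandwidth: memory bandwidth in bytes/s
    arithmetic_intensity: FLOPs performed per byte moved
    """
    return min(peak_flops, memory_bandwidth * arithmetic_intensity)


# Hypothetical accelerator: 100 TFLOP/s peak, 1 TB/s memory bandwidth.
PEAK = 100e12
BANDWIDTH = 1e12

# A kernel doing 10 FLOPs per byte is limited by memory traffic.
memory_bound = attainable_flops(PEAK, BANDWIDTH, 10.0)

# A kernel doing 200 FLOPs per byte is limited by peak compute.
compute_bound = attainable_flops(PEAK, BANDWIDTH, 200.0)
```

Under these assumed numbers, the low-intensity kernel reaches only a tenth of peak, which is why how a program accesses memory can matter more than raw multiply throughput.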

Event Streaming and Event Sourcing: The Key Differences

Event streaming employs the pub-sub approach to enable more accessible

communication between systems. In the pub-sub architectural pattern, consumers

subscribe to a topic or event, and producers post to these topics for consumers’

consumption. The pub-sub design decouples the publisher and subscriber systems,

making it easier to scale each system individually. The publisher and subscriber

systems communicate through a message broker like Apache Pulsar. When a state

changes or an event occurs, the producer sends the data (data sources include

web apps, social media and IoT devices) to the broker, after which the broker

relays the event to the subscriber, who then consumes the event. Event

streaming involves the continuous flow of data from sources like applications,

databases, sensors and IoT devices. Event streams employ stream processing, in

which data undergoes processing and analysis during generation. This quick

processing translates to faster results, which is valuable for businesses with a

limited time window for taking action, as with any real-time application.
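
The pub-sub flow described above can be sketched with a toy in-memory broker. This is a minimal illustration, not the Apache Pulsar API: the `InMemoryBroker` class, the topic names, and the event fields are all hypothetical, and a real broker would add persistence, asynchronous delivery, and networking.

```python
from collections import defaultdict
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Toy stand-in for a message broker (hypothetical, not a real client)."""

    def __init__(self) -> None:
        # Maps each topic name to the handlers subscribed to it.
        self._subscribers: Dict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a consumer callback for a topic."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Relay an event to every subscriber; return how many received it."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


broker = InMemoryBroker()
received: List[dict] = []

# The consumer subscribes to a topic; it never references the producer.
broker.subscribe("sensor.temperature", received.append)

# The producer posts to topics; it never references the consumer.
delivered = broker.publish("sensor.temperature", {"device": "iot-1", "celsius": 21.5})
ignored = broker.publish("sensor.humidity", {"device": "iot-1", "percent": 40})
```

Because producer and consumer only share a topic name, either side can be scaled or replaced without changing the other, which is the decoupling the pattern is meant to provide.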

Big cloud rivals hit back over Microsoft licensing changes

In a nutshell, the changes that come into effect from October allow customers

with Software Assurance or subscription licenses to use these existing licenses

"to install software on any outsourcers' infrastructure" of their choice. But as

The Register noted at the time, this specifically excludes "Listed Providers", a

group that just happens to include Microsoft's biggest cloud rivals – AWS,

Google and Alibaba – as well as Microsoft's own Azure cloud, in a bid to steer

customers to Microsoft's partner network. ... These criticisms are not entirely

new, and some in the cloud sector made similar points following Microsoft's

disclosure of some of the licensing changes it intended to make back in May. One

cloud operator who requested anonymity told The Register in June that Redmond's

proposed changes fail to "move the needle" and ignore the company's "other

problematic practices." Another AWS exec, Matt Garman, posted on LinkedIn in

July that Microsoft's proposed changes did not represent fair licensing practice

and were not what customers wanted.

Machine learning at the edge: The AI chip company challenging Nvidia and Qualcomm

Built on 16nm technology, the MLSoC’s processing system consists of computer

vision processors for image pre- and post-processing, coupled with dedicated ML

acceleration and high-performance application processors. Surrounding the

real-time intelligent video processing are memory interfaces, communication

interfaces, and system management — all connected via a network-on-chip (NoC).

The MLSoC features low operating power and high ML processing capacity, making

it ideal as a standalone edge-based system controller, or to add an ML-offload

accelerator for processors, ASICs and other devices. The software-first approach

includes carefully-defined intermediate representations (including the TVM Relay

IR), along with novel compiler-optimization techniques. ... Many ML startups are

focused on building only pure ML accelerators and not an SoC that has a

computer-vision processor, applications processors, CODECs, and external memory

interfaces that enable the MLSoC to be used as a stand-alone solution not

needing to connect to a host processor. Other solutions usually lack network

flexibility, performance per watt, and push-button efficiency – all of which are

required to make ML effortless for the embedded edge.

Why CIOs Need to Be Even More Dominant in the C-Suite Right Now

“Now more than ever, we’re seeing a pressing demand for CIOs to deliver digital

transformation that enables business growth to energize the top line or optimize

operations to eliminate cost and help the bottom line,” says Savio Lobo, CIO of

Ensono. This requires the CIO to have a deep understanding of the business and

surface decisions that may influence these objectives. Large-scale digital

solutions and capabilities, however, often cannot be implemented simultaneously,

especially when they require significant change in how customers and staff

engage with people and processes. This means ruthless prioritization decisions

may need to be made that include what is moving forward at any given time and,

equally importantly, what is not. “While executing a large initiative, there

will also be people, process and technology choices to be made and these need to

be made in a timely manner,” Lobo adds. This may look unique for every

organization but should include collaboration on the discovery and

implementation and an open feedback loop for how systems and processes are

working or not working in each stakeholder’s favor.

Ensuring security of data systems in the wake of rogue AI

A ‘Trusted Computing’ model, like the one developed by the Trusted Computing

Group (TCG), can be easily

applied to all four of these AI elements in order to fully secure them against a rogue AI.

Considering the data set element of an AI, a Trusted Platform Module (TPM) can

be used to sign and verify that data has come from a trusted source. A hardware

Root of Trust, such as the Device Identifier Composition Engine (DICE), can make

sure that sensors and other connected devices maintain high levels of integrity

and continue to provide accurate data. Boot layers within a system each receive

a DICE secret, which combines the preceding secret on the previous layer with

the measurement of the current one. This ensures that when there is a successful

exploit, the exposed layer’s measurements and secrets will be different,

securing data and protecting itself from any data disclosure. DICE also

automatically re-keys the device if a flaw is unearthed within the device

firmware. The strong attestation offered by the hardware makes it a great tool

to discover any vulnerabilities in any required updates.
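
The layered secret derivation described above can be sketched as follows. This is a simplified illustration under stated assumptions: it uses HMAC-SHA256 as the one-way combining function and SHA-256 as the measurement, and the function names and firmware strings are hypothetical. It is not the TCG specification's exact procedure or any vendor's implementation.

```python
import hashlib
import hmac
from typing import List


def measure(firmware_image: bytes) -> bytes:
    """Measurement of a boot layer (assumed here to be a SHA-256 digest)."""
    return hashlib.sha256(firmware_image).digest()


def derive_layer_secret(previous_secret: bytes, measurement: bytes) -> bytes:
    """Combine the previous layer's secret with the current layer's measurement."""
    return hmac.new(previous_secret, measurement, hashlib.sha256).digest()


def boot_chain(device_secret: bytes, layers: List[bytes]) -> List[bytes]:
    """Return the secret each boot layer would receive, in boot order."""
    secrets = []
    current = device_secret
    for image in layers:
        current = derive_layer_secret(current, measure(image))
        secrets.append(current)
    return secrets


# Hypothetical device secret and firmware images, for illustration only.
device_secret = b"hypothetical-unique-device-secret"
good = boot_chain(device_secret, [b"bootloader v1", b"kernel v1", b"app v1"])
tampered = boot_chain(device_secret, [b"bootloader v1", b"kernel v1-modified", b"app v1"])
```

In this sketch, modifying the second layer changes its secret and every secret derived after it, while the first layer's secret is unaffected. That is the property the paragraph relies on: a compromised layer cannot reproduce the secrets of the genuine one.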

The Implication of Feedback Loops for Value Streams

The practical implication for software engineering management is to first

address feedback loops that generate a lot of bugs/issues to get your capacity

back. For example, if you have a fragile architecture or code of low

maintainability that requires a lot of rework after any new change

implementation, it is obvious that refactoring is necessary to regain

engineering productivity; otherwise, engineering team capacity will be low. The

last observation is that the lead time will depend on the simulation duration:

the longer you run the value stream, the higher the number of lead time

variants you will get. Such behavior is the direct implication of the value

stream structure with the redo feedback loop and its probability distribution

between the output queue and the redo queue. If you are an engineering manager

who inherited legacy code with significant accumulated debt, it might be

reasonable to consider incremental solution rewriting. Otherwise, the speed of

delivery will be very slow forever, not only for the modernization time. The art

of simplicity; greater complexity yields more variations which increase the

probability of results occurring outside of acceptable parameters.
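
The effect of a redo feedback loop on lead time can be explored with a small simulation. This is a minimal sketch, not the article's model: it assumes a single stage, a fixed redo probability, and lead time counted as the number of passes through that stage. The function name and parameter values are hypothetical.

```python
import random
from typing import List


def simulate_lead_times(items: int, redo_probability: float, seed: int = 0) -> List[int]:
    """Return the number of passes each work item needs before it is done.

    After each pass, an item is sent back to the redo queue with
    probability `redo_probability`; otherwise it moves to the output queue.
    """
    if not 0.0 <= redo_probability < 1.0:
        raise ValueError("redo_probability must be in [0, 1)")
    rng = random.Random(seed)
    lead_times = []
    for _ in range(items):
        passes = 1
        while rng.random() < redo_probability:
            passes += 1  # item went through the redo loop once more
        lead_times.append(passes)
    return lead_times


short_run = simulate_lead_times(items=50, redo_probability=0.3)
long_run = simulate_lead_times(items=5000, redo_probability=0.3)
no_rework = simulate_lead_times(items=50, redo_probability=0.0)

# Distinct lead times seen in each run; a longer run can only add variants.
short_variants = sorted(set(short_run))
long_variants = sorted(set(long_run))
```

With the same seed, the longer run repeats the shorter one and then continues, so its set of lead time variants can only stay the same or grow. With no rework, every item has a lead time of exactly one pass.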

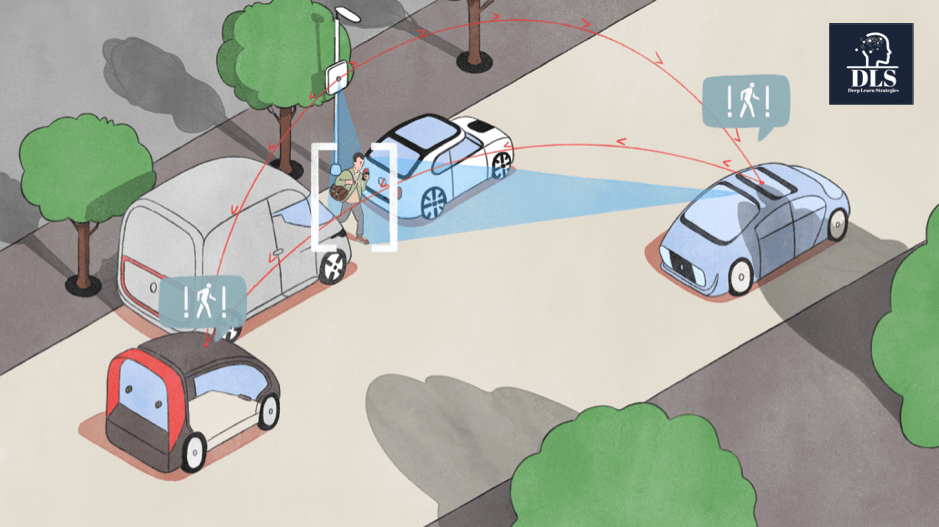

Beat these common edge computing challenges

Realizing the benefits of edge computing depends on a thoughtful strategy and

careful evaluation of your use cases, in part to ensure that the upside will

dwarf the natural complexity of edge environments. “CIOs shouldn’t adopt or

force edge computing just because it’s the trendy thing – there are real

problems that it’s intended to solve, and not all scenarios have those

problems,” says Jeremy Linden, senior director of product management at Asimily.

Part of the intrinsic challenge here is that one of edge computing’s biggest

problem-solution fits – latency – has sweeping appeal. Not many IT leaders are

pining for slower applications. But that doesn’t mean it’s a good idea (or even

feasible) to move everything out of your datacenter or cloud to the edge. “So

for example, an autonomous car may have some of the workload in the cloud, but

it inherently needs to react to events very quickly (to avoid danger) and do so

in situations where internet connectivity may not be available,” Linden says.

“This is a scenario where edge computing makes sense.” In Linden’s own work –

Asimily does IoT security for healthcare and medical devices – optimizing the

cost-benefit evaluation requires a granular look at workloads.

Tenable CEO on What's New in Cyber Exposure Management

Tenable wants to provide customers with more context around what threat actors

are exploiting in the wild to both refine and leverage the analytics

capabilities the company has honed, Yoran says. Tenable must have context

around what's mission-critical in a customer's organization to help clients

truly understand their risk and exposure rather than just add to their cyber

noise, he adds. Tenable has spent more on vulnerability management-focused

R&D over the past half-decade than its two closest competitors combined,

which has allowed the firm to deliver differentiated capabilities, Yoran says.

Unlike competitors who have expanded their offerings to include everything

from logging and SIEM to EDR and managed security services, Yoran says Tenable

has remained laser-focused on risk. "The three primary vulnerability

management vendors have three very different strategies and they've been on

divergent paths for a long time," Yoran says. "For us, the key to success has

been and will continue to be that focus on helping people assess and

understand risk."

Quote for the day:

"Get your facts first, then you can

distort them as you please." -- Mark Twain
