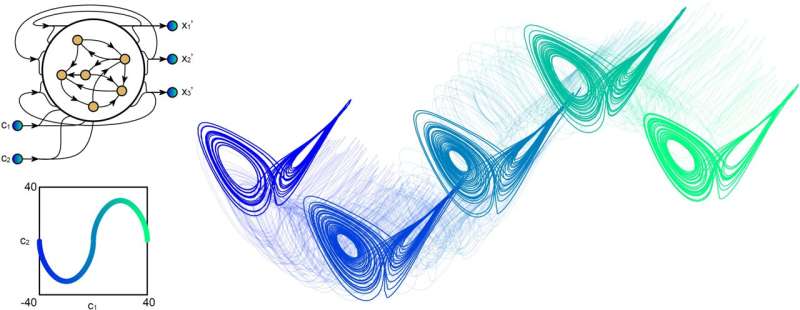

A recurrent neural network that infers the global temporal structure based on local examples

"Every day, we manipulate information about the world to make predictions," Jason Kim, one of the researchers who carried out the study, told TechXplore. "How much longer can I cook this pasta before it becomes soggy? How much later can I leave for work before rush hour? Such information representation and computation broadly fall into the category of working memory. While we can program a computer to build models of pasta texture or commute times, our primary objective was to understand how a neural network learns to build models and make predictions only by observing examples."

Kim, his mentor Danielle S. Bassett and the rest of their team showed that the two key mechanisms through which a neural network learns to make predictions are associations and context. For instance, if they wanted to teach their RNN to change the pitch of a song, they fed it the original song and two other versions of it, one with a slightly higher pitch and the other with a slightly lower pitch. For each shift in pitch, the researchers 'biased' the RNN with a context variable. Subsequently, they trained it to store the original and modified songs within its working memory.

Cybersecurity industry analysis: Another recurring vulnerability we must correct

Security tooling is a must-have, but we need to look wider and restore balance to the people component of security defense. Automation is the future. Why should we care about the human element of cybersecurity? Virtually everything in our lives is powered by software, and it’s true that automation is replacing the human elements that were once present in so many industries. It’s a sign of progress in a world digitizing at warp speed, with AI and machine learning hot topics keeping many organizations future-focused. So, why, then, would a human-focused approach to cybersecurity be anything other than an antiquated solution to a technologically advancing problem? The fact that billions of data records have been stolen in breaches in the past year, including the most recent Facebook breach affecting over half a billion accounts, should indicate that we’re not doing enough (or taking the right approach) to make a serious counter-punch against threat actors. Cybersecurity tooling is a much-needed component of cyber defense, and tools will always have a place. Analysts have been absolutely on point in recommending the latest tools in a risk mitigation approach for enterprises, and that will not change.

Researchers Confront Major Hurdle in Quantum Computing

A time crystal is a strange state of matter in which interactions between the particles that make up the crystal can stabilize oscillations of the system in time indefinitely. Imagine a clock that keeps ticking forever; the pendulum of the clock oscillates in time, much like the oscillating time crystal. By implementing a series of electric-field pulses on electrons, the researchers were able to create a state similar to a time crystal. They found that they could then exploit this state to improve the transfer of an electron’s spin state in a chain of semiconductor quantum dots. “Our work takes the first steps toward showing how strange and exotic states of matter, like time crystals, can potentially be used for quantum information processing applications, such as transferring information between qubits,” Nichol says. “We also theoretically show how this scenario can implement other single- and multi-qubit operations that could be used to improve the performance of quantum computers.” Both AQT and time crystals, while different, could be used simultaneously with quantum computing systems to improve performance.

How Ethical Hackers Play An Important Role In Protecting Enterprise Data

Data is an essential asset in the current dynamic setting. The value of data has made big organizations more vulnerable to cyberattacks. But it is wrong to believe that only a big company can suffer a data breach. In reality, no one is immune to data theft, whether you’re an individual, an SME, a large enterprise, or even a state. A surer way for organizations to protect themselves against an effective malicious attack is to engage competent, ethical hackers. It helps if your organization has someone who understands how malicious hackers think, and in such scenarios it makes sense to enlist ethical hackers. Ethical hacking in cybersecurity is grounded in data protection. Unlike cybercriminals, ethical hackers operate with the consent of the client. They use the same tools and techniques as malicious attackers. However, because they can think like the bad guys, cybersecurity and ethical hacking experts aim to protect and secure your network. They can quickly discover your system vulnerabilities and suggest how you can resolve them before they are exploited.

Microsoft, GPT-3, and the future of OpenAI

There’s a clear line between academic research and commercial product development. In academic AI research, the goal is to push the boundaries of science. This is exactly what GPT-3 did. OpenAI’s researchers showed that with enough parameters and training data, a single deep learning model could perform several tasks without the need for retraining. And they have tested the model on several popular natural language processing benchmarks. But in commercial product development, you’re not running against benchmarks such as GLUE and SQuAD. You must solve a specific problem, solve it ten times better than the incumbents, and be able to run it at scale and in a cost-effective manner. Therefore, if you have a large and expensive deep learning model that can perform ten different tasks at 90 percent accuracy, it’s a great scientific achievement. But when there are already ten lighter neural networks that perform each of those tasks at 99 percent accuracy and a fraction of the price, then your jack-of-all-trades model will not be able to compete in a profit-driven market.

Has DevOps killed the BA/QA/DBA Roles?

As the industry continues towards DevOps and Cloud, these fields will thin out. Each of the roles will trend towards more of a specialization, especially the DBA, since the operational overhead of maintaining a DB is rapidly decreasing. They’ll last longer at big companies, but the tolerance for lower performers will drastically decline. However, simultaneously the demand for data expertise will keep accelerating as shown in the forecast below. Growth in warehousing and data science should ensure data specialization remains lucrative, and DBAs are well-poised to transition. Of the three, the BA role seems safest. The average software developer simply does not have the time (nor, often, the capabilities) to maintain the social network of a strong BA. However, as more companies migrate to DevOps/Agile, the feedback barrier between users and developers will continue to shrink. As it does, BAs who are not technically competent will be pushed out. The QA role is the hardest to predict. As automation improves, demand for QA staff to run manual scripts and “catch bugs” will disappear.

How to Get a Cybersecurity Job in 2021

There are a bunch of certifications, from CompTIA’s Security+ to others, that will help signal your readiness for cybersecurity jobs. Some are more entry-level and require IT competencies such as the A+. But some will require you to have job experience in cybersecurity (such as the CISSP). There’s a bit of a chicken and egg situation and you might wonder: how can you get job experience if you need job experience to get the job in the first place? Adjacent job experience can often make a difference here. Many people transition into cybersecurity from IT roles, such as network administration, system administration, or working on an IT helpdesk, which is an entry-level role. You can gain experience here and transition over. There are also programs tailored for veterans and people with law enforcement backgrounds to get into cybersecurity. Lastly, there are many cybersecurity internships being offered to bridge this gap, though with the right backing, training, and experience, you can skip ahead to junior-level analyst roles. SOC analyst roles are a good way to break into the cybersecurity industry. Security operations centers need analysts to parse through different threats.

Making A Case For Serverless Machine Learning

The first benefit of serverless machine learning is that it is very scalable. It can scale up to 10k simultaneous requests without anyone having to write additional logic. It doesn’t take extra time to scale, which makes it well suited to handling random high loads. Secondly, with the pay-as-you-go architecture of serverless machine learning, a person doesn’t have to pay for unused server time. It can save an enormous amount of money. For example, a user with 50k requests a month pays only for those 50k requests. Thirdly, infrastructure management becomes very easy: a user doesn’t have to hire a dedicated person to look after it, because a backend developer can handle it. For instance, AWS Lambda is one of the most popular serverless cloud services that has these advantages. It lets users run code without managing servers. It obviates the need for developers to explicitly configure, deploy, and manage long-term computing units. Training in Serverless Machine Learning does not require extensive programming knowledge. Basic knowledge of Python, Machine Learning, Linux, and Terminal along with an AWS account is enough to get one started.
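To make the "run code without managing servers" idea concrete, here is a minimal sketch of what a prediction function for AWS Lambda might look like in Python. It is an illustration only, not code from the article: the `(event, context)` handler form is the one Lambda uses for Python functions, but the weights, the `predict` helper, the `features` field, and the HTTP-style response shape are all assumptions made for this example.

```python
import json

# Hypothetical weights standing in for a trained model. A real function would
# typically load its model once at import time so warm invocations reuse it.
WEIGHTS = [0.5, -0.25]
BIAS = 0.1


def predict(features):
    """Toy linear model (assumption): bias plus a weighted sum of the inputs."""
    return BIAS + sum(w * x for w, x in zip(WEIGHTS, features))


def bad_request(message):
    """Build an HTTP-style 400 response (response shape is an assumption)."""
    return {"statusCode": 400, "body": json.dumps({"error": message})}


def lambda_handler(event, context):
    """Handler in the (event, context) form Lambda calls for Python code."""
    try:
        payload = json.loads(event.get("body") or "{}")
        features = [float(x) for x in payload["features"]]
    except (KeyError, TypeError, ValueError):
        return bad_request("expected a JSON body with a 'features' list")
    if len(features) != len(WEIGHTS):
        return bad_request("wrong number of features")
    return {
        "statusCode": 200,
        "body": json.dumps({"prediction": predict(features)}),
    }
```

The function holds no server state and does not reference a host or port, so the platform can run as many copies as the load requires. Calling `lambda_handler({"body": '{"features": [1.0, 2.0]}'}, None)` locally is enough to exercise it before deployment.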

How Blockchain Technology Can Benefit the Internet of Things

The distributed aspect of blockchain means that data are replicated across several computers. This makes hacking more challenging, since there are now several target devices. The redundancy in storage brought by blockchain technology brings extra security and enhances data access, since users in IoT ecosystems can submit to and retrieve their data from different devices, Carvahlo said. Continuing with this example, say the burglar is captured and claims in court that the recorded video is forged evidence. The immutable nature of blockchain technology means that any change to the stored data can be easily detected. Thus, the burglar’s claim can be verified by looking at attempts to tamper with the data, he said. However, the decentralization aspect of blockchain technology can be a major issue when storing data from IoT devices, according to Carvahlo. “Decentralization means that the computers used to store data [in a distributed fashion] might belong to different entities,” he said. “In other words, if not implemented appropriately, there is a risk that users’ sensitive data can now be by default stored by and available to third parties.”
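The claim that any change to stored data can be easily detected rests on hash linking: each block records a hash of the previous one, so editing an old record breaks every later link. The sketch below illustrates only that idea, using Python's standard `hashlib`. It is not a real blockchain (there is no consensus and no replication across nodes), and the block layout, function names, and sample records are assumptions made for the example.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, data, prev_hash):
    """SHA-256 over a block's contents and the hash of the block before it."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def build_chain(records):
    """Link records (e.g. hypothetical camera frame IDs) into a hash chain."""
    chain = []
    prev_hash = GENESIS_HASH
    for index, data in enumerate(records):
        current = block_hash(index, data, prev_hash)
        chain.append(
            {"index": index, "data": data, "prev_hash": prev_hash, "hash": current}
        )
        prev_hash = current
    return chain


def first_tampered_block(chain):
    """Return the index of the first block that fails verification, else None."""
    prev_hash = GENESIS_HASH
    for block in chain:
        expected = block_hash(block["index"], block["data"], prev_hash)
        if block["prev_hash"] != prev_hash or block["hash"] != expected:
            return block["index"]
        prev_hash = block["hash"]
    return None
```

For example, after `chain = build_chain(["frame-1", "frame-2", "frame-3"])`, changing `chain[1]["data"]` makes `first_tampered_block(chain)` report block 1, because the stored hash no longer matches the recomputed one.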

Software Engineering at Google: Practices, Tools, Values, and Culture

The skills required for developing good software are not the same skills that were required (at one point) to mass produce automobiles, etc. We need engineers to respond creatively, and to continually learn, not do one thing over and over. If they don’t have creative freedom, they will not be able to evolve with the industry as it, too, rapidly changes. To foster that creativity, we have to allow people to be human, and to foster a team climate of trust, humility, and respect. Trust to do the right thing. Humility to realize you can’t do it alone and can make mistakes. ... Building with a diverse team is, in our belief, critical to making sure that the needs of a more diverse user base are met. We see that historically: first-generation airbags were terribly dangerous for anyone who wasn’t built like the people on the engineering teams designing those safety systems. Crash test dummies were built for the average man, and the results were bad for women and children, for instance. In other words, we’re not just working to build for everyone, we’re working to build with everyone. It takes a lot of institutional support and local energy to really build multicultural capacity in an organization. We need allies, training, and support structures.

Quote for the day:

"Nothing so conclusively proves a man's ability to lead others as what he does from day to day to lead himself." -- Thomas J. Watson
