Can behavioural banking drive financial literacy and inclusion?

In good times, the need to improve financial literacy is widely accepted by

banking industry leaders and consumers alike. This important topic is

regularly discussed by experts at the World Economic Forum and built into

initiatives sponsored by the United Nations. Regarded as an economic good,

financial literacy is critical to achieving financial inclusion. What about

now, in decidedly less-than-good times? How are banks prepared to promote

financial literacy for millennials and especially Gen Z, as they face a world

in financial turmoil? ... The right systems helped the bank get up and running

just 18 months after its initial launch announcement. Powerful, reliable

technology also helped the company create a customer onboarding application

that can open a new account within just five minutes. “The technology is

extremely important for us,” says Frey. “It has to be fast, agile, and robust.

We needed a solid workhorse with a huge amount of flexibility at the

configuration level.” In 2020, Discovery will begin looking for ways to

incorporate rapidly developing technologies such as artificial intelligence

and machine learning into its solutions. Most important, however, is listening

to customers and ensuring that the bank delivers the most pleasant, rewarding

experience possible.

With DevOps, security is everybody’s responsibility. OK, so what’s next?

DevSecOps solutions are by nature designed to be preventative. The idea is to

remove complexity by baking robust security methodologies into software

development from the earliest stages. Get it right from the outset, and

reactive firefighting is greatly reduced. Conveniently, this model – “shifting

security left” to the coder rather than the expert in a fixed hierarchy – also

makes sense when developing on cloud platforms that assume rapid deployment

and collaboration. There is no development team, security team, or IT

deployment team because they are one and the same person. In theory, that’s

how security misconfigurations can be caught before they do harm. However,

when it comes to cloud development, “shift left” is more talked about than

practised. This situation has crept up on organisations that haven’t realised

how programming culture has changed rapidly in the cloud era. “There is a lack

of control in this model. With the shift into cloud development and the fact

that coders can always get a better answer off Stack Overflow and GitHub, it’s

become practically impossible to track the supply chain. It’s a governance

problem,” says Guy Eisenkot

Surface Duo: Microsoft's $1,400 dual-screen Android phone coming September 10

Microsoft is counting on users seeing the Duo as filling an untapped niche.

But for people used to thinking about carrying no more than two devices --

usually a PC/tablet and a phone -- where does the Duo fit? In its first

iteration, with a seemingly mediocre 11 MP camera, an older Snapdragon 855

processor and a relatively heavy form factor (about half a pound), the Duo is

not going to replace my Pixel 3XL Android phone. And with a total screen size

when open of 8.1 inches, the Duo is just too small to replace my PC. Panay and

team are touting the Duo as a device that will give people a better way to get

things done, to create and to connect. As was the case with the currently

postponed, Windows 10X-based Surface Neo device, Microsoft's contention is two

separate screens connected via a hinge help people work smarter and faster

than they could with a single screen of any size. Officials say they've got

research and years of work that back up this claim. I do think more screen is

better for almost everything, but for now, I am having trouble buying the idea

that a hinge/division in the middle of two screens is going to make any kind

of magic happen in my brain.

The clear Sky strategy

You need to have your eyes to the horizon and your feet on the floor. At all

times. And it’s quite a discipline to do that. You see a lot of people who are

consumed about managing the now, and then if you look at the last few months,

there’s not been a lot of forward thinking. Then you also see other people

who, perhaps the longer they are in their roles, spend more and more time

thinking about the future horizon. That’s all very alluring and appealing, but

they disconnect with the immediacy of what’s important today. You must try to

think of both of those things and also encourage everybody else to think of

their own role in that way. So, if you’re in broadcast technology today and

you’re running that function or department, how do you get your colleagues to

look at the future broadcast technologies and at the same time equip people to

shoot with their iPhones and get the news out quickly? What you end up with is

this networked brain. Everybody in Sky should be thinking about where the

company should go, but also “How do I personally make sure I’m doing what is

needed?”

Did Intel fail to protect proprietary secrets, or misconfigure servers? Lessons from the leak

Regardless of the circumstances, there are key takeaways from the incident.

First and foremost, the unauthorized disclosure of source code and other

sensitive intellectual property could potentially be a boon for those seeking

to steal corporate secrets. “Intel’s technology is almost ubiquitous, and the

leaked device designs and firmware source code can put businesses and

individuals at risk,” said Ilia Sotnikov, VP of product management at Netwrix.

“Hackers and Intel’s own security research team are probably racing now to

identify flaws in the leaked source code that can be exploited. Companies

should take steps to identify what technology may be impacted and stay tuned

for advisory and hotfix announcements from Intel.” “While we often think of

data breaches in the context of customer data lost and potential PII leakage,

it is very important that we also consider the value of intellectual property,

especially for very innovative organizations and organizations with a large

market share,” said Erich Kron, security awareness advocate at KnowBe4. “This

intellectual property can be very valuable to potential competitors, and even

nation states, who often hope to capitalize on the research and development

done by others.”

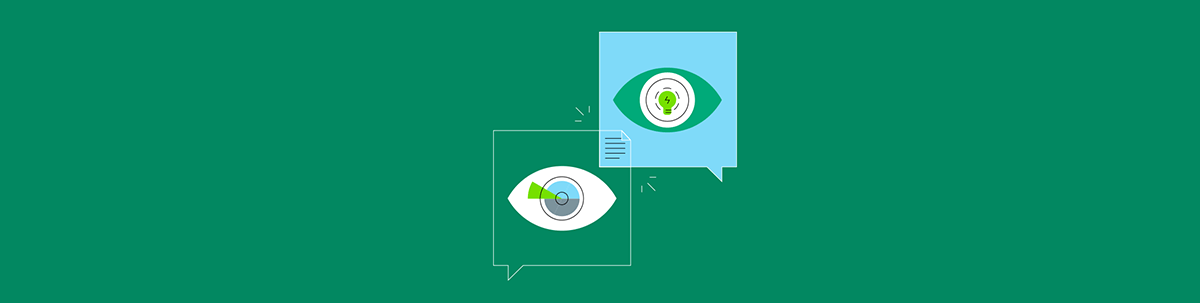

Researchers Trick Facial-Recognition Systems

The model then continuously created and tested fake images of the two

individuals by blending the facial features of both subjects. Over hundreds of

training loops, the machine-learning model eventually got to a point where it

was generating images that looked like a valid passport photo of one of the

individuals, even as the facial recognition system identified the photo as the

other person. Povolny says the passport-verification system attack scenario —

though not the primary focus of the research — is theoretically possible to

carry out. Because digital passport photos are now accepted, an attacker can

produce a fake image of an accomplice, submit a passport application, and have

the image saved in the passport database. So if a live photo of the attacker

later gets taken at an airport — at an automated passport-verification kiosk,

for instance — the image would be identified as that of the accomplice. "This

does not require the attacker to have any access at all to the passport

system; simply that the passport-system database contains the photo of the

accomplice submitted when they apply for the passport," he says.

The problems AI has today go back centuries

The ties between algorithmic discrimination and colonial racism are perhaps

the most obvious: algorithms built to automate procedures and trained on data

within a racially unjust society end up replicating those racist outcomes in

their results. But much of the scholarship on this type of harm from AI

focuses on examples in the US. Examining it in the context of coloniality

allows for a global perspective: America isn’t the only place with social

inequities. “There are always groups that are identified and subjected,” Isaac

says. The phenomenon of ghost work, the invisible data labor required to

support AI innovation, neatly extends the historical economic relationship

between colonizer and colonized. Many former US and UK colonies—the

Philippines, Kenya, and India—have become ghost-working hubs for US and UK

companies. The countries’ cheap, English-speaking labor forces, which make

them a natural fit for data work, exist because of their colonial histories.

AI systems are sometimes tried out on more vulnerable groups before being

implemented for “real” users. Cambridge Analytica, for example, beta-tested

its algorithms on the 2015

The State of AI-Driven Digital Transformation

Governments are transforming service delivery through AI as well. In China, a

number of AI pilot programmes are rolling out across the court system,

including an “AI robot” that can answer legal questions in real time, tools to

automate evidence analysis and the automated transcribing of court proceedings

that would remove the need for judicial clerks to double as stenographers.

These technological developments point to a future in which routine court

procedures are mostly handled by machines, so that judges can reserve their

attention for more complex and demanding cases. The other major use of AI

would be in the areas of security and data privacy. In fact, the Forrester

study found that 61 percent of firms in APAC are already enhancing or

implementing their data privacy and security-related capabilities using AI.

For example, financial services giant AXA IT has been leveraging machine

learning and AI to thwart online security threats. They’ve partnered with

cybersecurity firm Darktrace whose Enterprise Immune System learns how normal

users behave so as to detect dangerous anomalies with the help of AI. Data lie

at the heart of AI. The success of AI-driven digital transformation,

therefore, relies greatly on the ability to draw insights from big data.

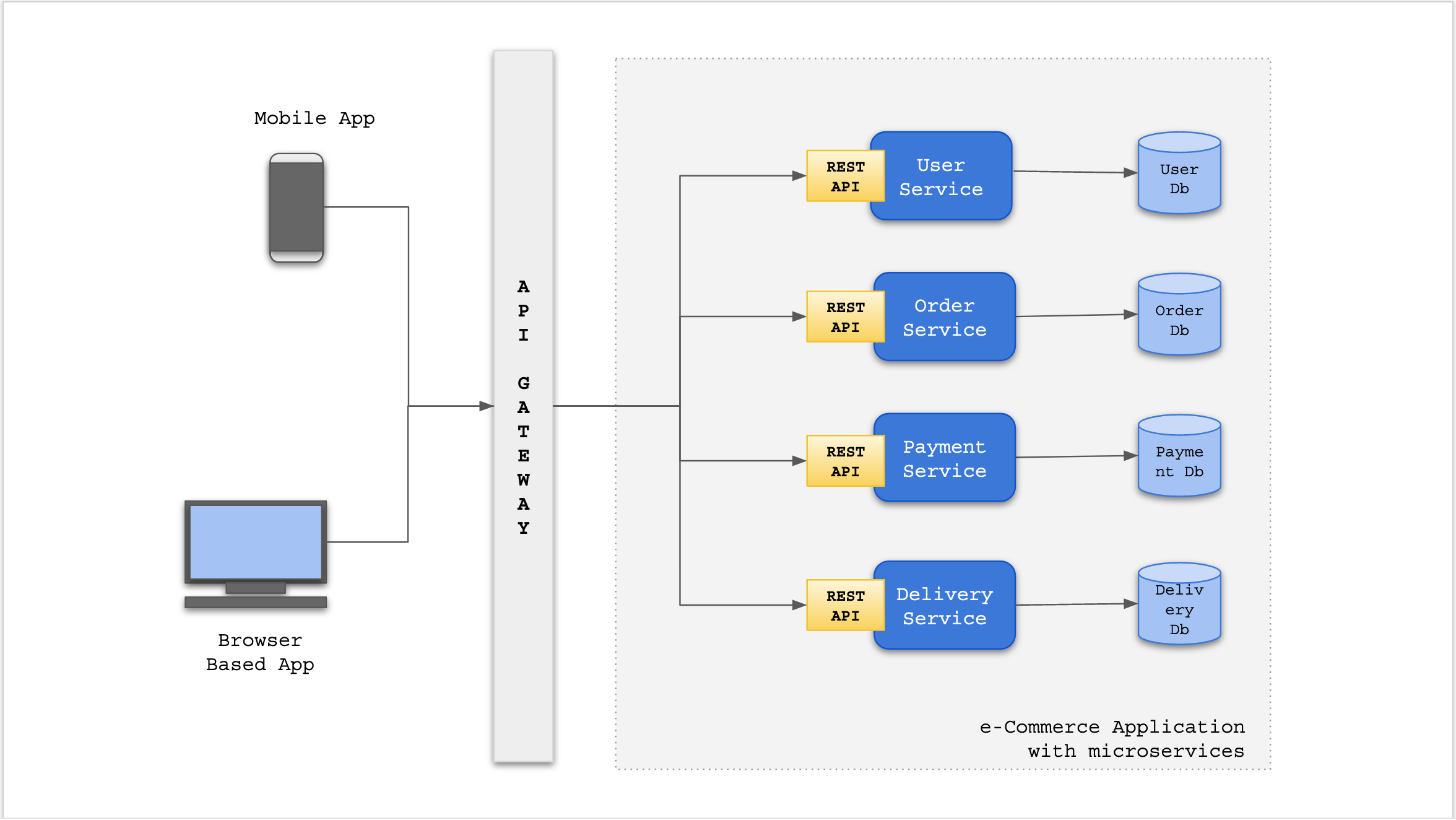

How to Keep APIs Secure From Bot Attacks

Many APIs do not check authentication status when the request comes from a

genuine user. Attackers exploit such flaws in different ways, such as session

hijacking and account aggregation, to imitate genuine API calls. Attackers

also reverse engineer mobile applications to discover how APIs are invoked. If

API keys are embedded into the application, an API breach may occur. API keys

should not be used for user authentication. Cybercriminals also perform

credential stuffing attacks to take over user accounts. ... Many APIs lack

robust encryption between the API client and server. Attackers exploit

vulnerabilities through man-in-the-middle attacks. Attackers intercept

unencrypted or poorly protected API transactions to steal sensitive

information or alter transaction data. Also, the ubiquitous use of mobile

devices, cloud systems and microservice patterns further complicates API

security because multiple gateways are now involved in facilitating

interoperability among diverse web applications. The encryption of data

flowing through all these channels is paramount. ... APIs are vulnerable to

business logic abuse. This is exactly why a dedicated bot management solution

is required and why applying detection heuristics that are good for both web

and mobile apps can generate many errors — false positives and false

negatives.
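
As a rough illustration of the credential stuffing point above, the sketch below counts failed logins per client inside a sliding time window and flags any client that exceeds a threshold. It is not from the article: the class name `FailedLoginMonitor`, its parameters, and the default thresholds are all hypothetical. A simple heuristic like this is exactly the kind of check that can produce false positives and false negatives (for example, an attack spread across many client addresses would slip past it), so treat it as a teaching aid and not as a substitute for a dedicated bot management solution.

```python
from collections import defaultdict, deque


class FailedLoginMonitor:
    """Hypothetical helper: flags a client whose failed logins exceed
    max_failures within a sliding window of window_seconds."""

    def __init__(self, max_failures=5, window_seconds=60.0):
        # Both thresholds are illustrative assumptions, not recommendations.
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        # Maps a client identifier to timestamps of its recent failures.
        self._failures = defaultdict(deque)

    def record_failure(self, client_id, now):
        """Record a failed login at time `now` (in seconds).

        Returns True if the client has exceeded the allowed number of
        failures inside the window and should be flagged for review.
        """
        window = self._failures[client_id]
        window.append(now)
        # Discard failures that have aged out of the sliding window.
        while window and now - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_failures


if __name__ == "__main__":
    monitor = FailedLoginMonitor(max_failures=3, window_seconds=10.0)
    for t in range(5):
        flagged = monitor.record_failure("203.0.113.7", float(t))
        print(t, flagged)
```

In a real service the timestamp would typically come from a monotonic clock and the counters would live in shared storage, since several gateways may handle the same client.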

Blazor vs Angular

Blazor is also a framework that enables you to build client web applications

that run in the browser, but using C# instead of TypeScript. When you create a

new Blazor app it arrives with a few carefully selected packages (the

essentials needed to make everything work) and you can install additional

packages using NuGet. From here, you build your app as a series of components,

using the Razor markup language, with your UI logic written using C#. The

browser can't run C# code directly, so just like the Angular AOT approach

you'll lean on the C# compiler to compile your C# and Razor code into a series

of .dll files. To publish your app, you can use .NET's built-in publish

command, which bundles up your application into a number of files (HTML, CSS,

JavaScript and DLLs), which can then be published to any web server that can

serve static files. When a user accesses your Blazor WASM application, a

Blazor JavaScript file takes over, which downloads the .NET runtime, your

application and its dependencies before running your app using WebAssembly.

Blazor then takes care of updating the DOM, rendering elements and forwarding

events (such as button clicks) to your application code.

AI company pivots to helping people who lost their job find a new source of health insurance

In addition to making health insurance somewhat easier to get, the Affordable

Care Act funded navigators who helped individuals choose the right insurance

plan. The Trump administration cut funding for the navigators from $63 million

in 2016 to $10 million in 2018. During the 2019 open enrollment period for the

federal ACA health insurance marketplace, overall enrollment dropped by 306,000

people. "While that may not seem like a lot, the average annual medical expense

is around $3,000 per person, and a shortfall of covered patients could represent

over $900,000,000 of medical expenses that will not be paid by health insurance,"

Showalter said. When states banned elective medical procedures temporarily

during the early months of the pandemic, this cut off an important revenue

stream for hospitals and many laid off workers. Some of these layoffs included

patient navigators who helped patients enroll in health insurance, particularly

Medicaid. Showalter said that all Jvion customers have had at least a few

navigators on staff but not enough to reach every patient in need of assistance.
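
The figures quoted above can be checked with a few lines of arithmetic. The sketch below uses only the numbers given in the excerpt (an enrollment drop of 306,000 people, roughly $3,000 in average annual medical expenses per person, and navigator funding falling from $63 million to $10 million). The function names are illustrative, and the $3,000 average is itself an approximation, so the result is an estimate.

```python
def uncovered_expenses(people, avg_annual_expense):
    """Estimated medical expenses not covered by insurance, in dollars."""
    return people * avg_annual_expense


def percent_cut(before, after):
    """Percentage reduction from `before` to `after`."""
    return (before - after) / before * 100


if __name__ == "__main__":
    # 306,000 fewer enrollees at about $3,000 each.
    shortfall = uncovered_expenses(306_000, 3_000)
    print(f"${shortfall:,}")  # $918,000,000

    # Navigator funding: $63 million (2016) down to $10 million (2018).
    print(f"{percent_cut(63, 10):.1f}%")  # 84.1%
```

The product comes to $918 million, which is consistent with the "over $900,000,000" figure in the quote.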

Quote for the day:

"A good general not only sees the way to victory; he also knows when victory is impossible." -- Polybius