Quote for the day:

"Success is what happens after you have survived all of your mistakes." -- Anonymous

3 Stages of Building Self-Healing IT Systems With Multiagent AI

Multiagent AI systems can enable significant improvements to existing processes across the operations management lifecycle. From intelligent ticketing and triage to autonomous debugging and proactive infrastructure maintenance, these systems can pave the way for IT environments that are largely self-healing. ... When an incident is detected, AI agents can attempt to debug issues with known fixes using past incident information. When multiple agents are combined within a network, they can work out alternative solutions if the initial remediation effort doesn’t work, while keeping engineers informed of their progress. Keeping a human in the loop (HITL) is vital to verifying the outputs of an AI model, but agents must also be trusted to work autonomously within a system, identifying fixes and then reporting them back to engineers. ... The most important step in creating a self-healing system is training AI agents to learn from each incident, as well as from each other, so they can become truly autonomous. For this to happen, AI agents cannot be siloed into incident response. Instead, they must be incorporated into an organization’s wider system and communicate with third-party agents, which allows them to draw correlations from each action taken to resolve each incident. In this way, each organization’s incident history becomes the training data for its AI agents, ensuring that the actions they take are organization-specific and relevant.
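The remediation flow described above (try known fixes from past incidents, fall back to alternatives, keep engineers informed, learn from the outcome) can be sketched as a single-agent loop. This is a minimal illustration under stated assumptions, not a multiagent framework: `RemediationAgent`, the incident signature strings and the fix functions are all hypothetical, and a real system would run fixes against live infrastructure and draw on far richer incident data.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical: a "fix" takes an incident signature and returns True if it resolved it.
Fix = Callable[[str], bool]


@dataclass
class RemediationAgent:
    # Incident history: signature -> fix names, most recently successful first.
    history: Dict[str, List[str]] = field(default_factory=dict)
    # Every fix this agent is allowed to run, keyed by name.
    fixes: Dict[str, Fix] = field(default_factory=dict)
    # Messages that would be sent to engineers (human in the loop).
    log: List[str] = field(default_factory=list)

    def notify(self, message: str) -> None:
        # Stand-in for a chat or paging integration.
        self.log.append(message)

    def handle(self, signature: str) -> bool:
        # Known fixes from past incidents first, then any other available fix as an alternative.
        known = [name for name in self.history.get(signature, []) if name in self.fixes]
        alternatives = [name for name in self.fixes if name not in known]
        for name in known + alternatives:
            self.notify(f"{signature}: trying {name}")
            if self.fixes[name](signature):
                self.record_success(signature, name)
                self.notify(f"{signature}: resolved by {name}")
                return True
        self.notify(f"{signature}: no fix worked, escalating to an engineer")
        return False

    def record_success(self, signature: str, name: str) -> None:
        # "Learning" here is only re-ranking: the fix that worked moves to the front.
        others = [n for n in self.history.get(signature, []) if n != name]
        self.history[signature] = [name] + others


agent = RemediationAgent(
    history={"disk_full": ["rotate_logs"]},
    fixes={
        "rotate_logs": lambda sig: False,   # the usual fix fails this time
        "expand_volume": lambda sig: True,  # an alternative succeeds
    },
)
agent.handle("disk_full")
print(agent.history["disk_full"])
print(agent.log)
```

Re-ranking past fixes is about the simplest possible form of learning from incidents; agents that draw correlations across incidents and across other agents, as the article envisions, would need considerably more than this.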

The three refactorings every developer needs most

If I had to rely on only one refactoring, it would be Extract Method, because it is the best weapon against creating a big ball of mud. The single best thing you can do for your code is to never let methods grow beyond 10 or 15 lines. The mess created when you have nested if statements with big chunks of code between the curly braces is almost always ripe for extracting methods. One could even make the case that an if statement should contain only a single method call. ... It’s a common refrain that naming things is hard. It’s common because it is true. We all know it. We all struggle to name things well, and we all read legacy code with badly named variables, methods, and classes. Often, you name something and you know what the subtleties are, but the next person who comes along does not. Sometimes you name something, and its meaning changes as the code develops. But let’s be honest: most of the time we are moving too fast, and as a result we name things badly. ... In other words, we pass a function result directly into another function as part of a boolean expression. This is… problematic. First, it’s hard to read: you have to stop and think through all the steps. Second, and more importantly, it is hard to debug. If you set a breakpoint on that line, it is hard to know where the code is going to go next.
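The excerpt names only Extract Method; the passages on naming and on nested calls inside boolean expressions suggest the other two are Rename and Extract Variable (also called Introduce Explaining Variable), though that is an assumption. The sketch below applies all three to a small, hypothetical discount function (`Order`, `lookup_tier` and the discount rule are invented for illustration) without changing its behavior.

```python
from dataclasses import dataclass

# Hypothetical customer-tier lookup table.
CUSTOMERS = {1: "gold", 2: "basic"}


@dataclass
class Order:
    customer_id: int
    country: str
    total: float


def lookup_tier(customer_id: int) -> str:
    return CUSTOMERS.get(customer_id, "basic")


def is_premium(tier: str) -> bool:
    return tier == "gold"


# Before: nested ifs, cryptic names (calc, o, t), and one function's result passed
# straight into another inside a boolean expression.
def calc(o: Order) -> float:
    t = o.total
    if o.country == "US":
        if is_premium(lookup_tier(o.customer_id)) and t > 100:
            t = t * 0.9
    return round(t, 2)


# After. Rename: names say what things are.
# Extract Method: the eligibility rule is its own small, testable function.
# Extract Variable: each step has a name, so a breakpoint shows one thing at a time.
def qualifies_for_discount(order: Order) -> bool:
    tier = lookup_tier(order.customer_id)  # side-effect free, so calling it unconditionally is safe
    customer_is_premium = is_premium(tier)
    is_domestic = order.country == "US"
    is_large_order = order.total > 100
    return is_domestic and customer_is_premium and is_large_order


def discounted_total(order: Order) -> float:
    if qualifies_for_discount(order):
        return round(order.total * 0.9, 2)
    return round(order.total, 2)


for order in [Order(1, "US", 200.0), Order(2, "US", 200.0), Order(1, "DE", 50.0)]:
    print(calc(order), discounted_total(order))
```

Each line of `qualifies_for_discount` now does one thing, which is exactly what makes a breakpoint there informative.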

ENISA launches EU Vulnerability Database to strengthen cybersecurity under NIS2 Directive, boost cyber resilience

The EU Vulnerability Database (EUVD) is publicly accessible and serves various stakeholders, including the general public seeking information on vulnerabilities affecting IT products and services, suppliers of network and information systems, and organizations that rely on those systems and services. ... To meet the requirements of the NIS2 Directive, ENISA initiated cooperation with different EU and international organisations, including MITRE’s CVE Programme. ENISA is in contact with MITRE to understand the impact and next steps following the announcement regarding funding for the Common Vulnerabilities and Exposures (CVE) Program. CVE data, data provided by Information and Communication Technology (ICT) vendors disclosing vulnerability information through advisories, and other relevant information, such as CISA’s Known Exploited Vulnerabilities Catalog, are automatically transferred into the EU Vulnerability Database. This will also be achieved with the support of member states, which have established national Coordinated Vulnerability Disclosure (CVD) policies and designated one of their CSIRTs as the coordinator, ultimately making the EUVD a trusted source for enhanced situational awareness in the EU.
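The EUVD’s actual ingestion pipeline and data model aren’t described here. As a rough illustration of what merging CVE data, vendor advisories and a known-exploited list involves, the sketch below keys every source on the CVE ID. The feed shapes, field names and the `aggregate` function are assumptions for illustration only, not ENISA’s implementation or API.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set


@dataclass
class VulnRecord:
    cve_id: str
    sources: Set[str] = field(default_factory=set)       # which feeds mentioned this ID
    advisories: List[str] = field(default_factory=list)  # vendor advisory links
    known_exploited: bool = False


def aggregate(
    cve_ids: Iterable[str],
    vendor_advisories: Dict[str, List[str]],
    exploited_ids: Iterable[str],
) -> Dict[str, VulnRecord]:
    """Merge several hypothetical feeds into one record per CVE ID."""
    records: Dict[str, VulnRecord] = {}

    def get(cve_id: str) -> VulnRecord:
        # Create the record on first sight so later feeds enrich the same entry.
        if cve_id not in records:
            records[cve_id] = VulnRecord(cve_id)
        return records[cve_id]

    for cve_id in cve_ids:
        get(cve_id).sources.add("cve")
    for cve_id, urls in vendor_advisories.items():
        rec = get(cve_id)
        rec.sources.add("vendor")
        rec.advisories.extend(urls)
    for cve_id in exploited_ids:
        rec = get(cve_id)
        rec.sources.add("kev")
        rec.known_exploited = True
    return records


# Placeholder IDs and URLs; these are not real vulnerabilities.
db = aggregate(
    cve_ids=["CVE-0000-0001", "CVE-0000-0002"],
    vendor_advisories={"CVE-0000-0002": ["https://vendor.example/advisories/42"]},
    exploited_ids=["CVE-0000-0002"],
)
for rec in db.values():
    print(rec)
```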

Welcome to the age of paranoia as deepfakes and scams abound

Welcome to the Age of Paranoia, when someone might ask you to send them an email while you’re mid-conversation on the phone, slide into your Instagram DMs to confirm that the LinkedIn message you sent was really from you, or ask you to text a selfie with a timestamp proving you are who you claim to be. Some colleagues say they even share code words with each other, so they have a way to make sure they’re not being misled if an encounter feels off. ... Ken Schumacher, founder of the recruitment verification service Ropes, says he’s worked with hiring managers who ask job candidates rapid-fire questions about the city where they claim to live on their résumé, such as their favorite coffee shops and places to hang out. If the applicant is actually based in that geographic region, Schumacher says, they should be able to respond quickly with accurate details. Another verification tactic some people use, Schumacher says, is what he calls the “phone camera trick.” If someone suspects the person they’re talking to over video chat is being deceitful, they can ask them to hold up their phone camera to show their laptop. The idea is to check whether the individual is running deepfake technology on their computer to obscure their true identity or surroundings.

CEOs Sound Alarm: C-Suite Behind in AI Savviness

According to the survey, CEOs now see upskilling internal teams as the cornerstone of AI strategy. The top two factors limiting AI's deployment and use, they said, are the inability to hire enough skilled people and the inability to calculate value or outcomes. "CEOs have shifted their view of AI from just a tool to a transformative way of working," said Jennifer Carter, senior principal analyst at Gartner. In contrast to the CEO assessments reported by Gartner, most CIOs view themselves as the key drivers and leaders of their organizations' AI strategies. According to a recent report by CIO.com, 80% of CIOs said they are responsible for researching and evaluating AI products, positioning them as "central figures in their organizations' AI strategies." As CEOs increasingly prioritize AI, customer experience and digital transformation, these agenda items are directly shaping the evolving role and responsibilities of the CIO. But 66% of CEOs say their business models are not fit for AI purposes. Billions continue to be spent on enterprisewide AI use cases, but little has come in the way of returns. Gartner forecasts a 76.4% surge in worldwide spending on gen AI in 2025 compared with 2024, fueled by better foundational models and a global quest for AI-powered everything. But organizations have yet to see consistent results despite the surge in investment.

Dropping the SBOM, why software supply chains are too flaky

“Mounting software supply chain risk is driving organisations to take action. [There is a] 200% increase in organisations making software supply chain security a top priority and growing use of SBOMs,” said Josh Bressers, vice president of security at Anchore. ... “There’s a clear disconnect between security goals and real-world implementation. Since open source code is the backbone of today’s software supply chains, any weakness in dependencies or artifacts can create widespread risk. To effectively reduce these risks, security measures need to be built into the core of artifact management processes, ensuring constant and proactive protection,” said Douglas. If we take anything from these market analysis pieces, it may be that organisations struggle to balance the demand to deliver software at speed against the need to address security vulnerabilities at a level commensurate with the composable interconnectedness of modern cloud-native applications in the Kubernetes universe. ... Alan Carson, Cloudsmith’s CSO and co-founder, remarked, “Without visibility, you can’t control your software supply chain… and without control, there’s no security. When we speak to enterprises, security is high up on their list of most urgent priorities. But security doesn’t have to come at the cost of speed. ...”
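To make “security built into artifact management” a little more concrete, here is a minimal sketch of a gate that reads a CycloneDX-format SBOM (JSON with a top-level `components` array whose entries carry `name`, `version` and `purl`) and flags components that are on a blocklist or have no version at all, a small visibility gap of the kind Carson describes. The blocklist, component names and the `audit_sbom` function are hypothetical; a real pipeline would match against vulnerability data rather than a hand-written set.

```python
import json
from typing import Dict, List, Set, Tuple

# A trimmed CycloneDX-style SBOM; real ones are generated by SBOM tooling and are much larger.
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "example-http", "version": "2.4.0", "purl": "pkg:pypi/example-http@2.4.0"},
    {"type": "library", "name": "example-yaml", "version": "0.9.1", "purl": "pkg:pypi/example-yaml@0.9.1"},
    {"type": "library", "name": "mystery-dep"}
  ]
}
"""


def audit_sbom(sbom: Dict, blocked: Set[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Return blocked name@version pairs found, plus components with no version."""
    findings: Dict[str, List[str]] = {"blocked": [], "unversioned": []}
    for component in sbom.get("components", []):
        name = component.get("name", "<unnamed>")
        version = component.get("version")
        if version is None:
            findings["unversioned"].append(name)  # can't assess what you can't identify
        elif (name, version) in blocked:
            findings["blocked"].append(f"{name}@{version}")
    return findings


sbom = json.loads(SBOM_JSON)
# Hypothetical policy: a version the organisation has decided not to ship.
report = audit_sbom(sbom, blocked={("example-yaml", "0.9.1")})
print(report)
if report["blocked"] or report["unversioned"]:
    print("artifact rejected")
```

Running a check like this wherever artifacts are published, rather than as an occasional audit, is one way to get the “constant and proactive” protection the quote asks for without a separate manual step.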

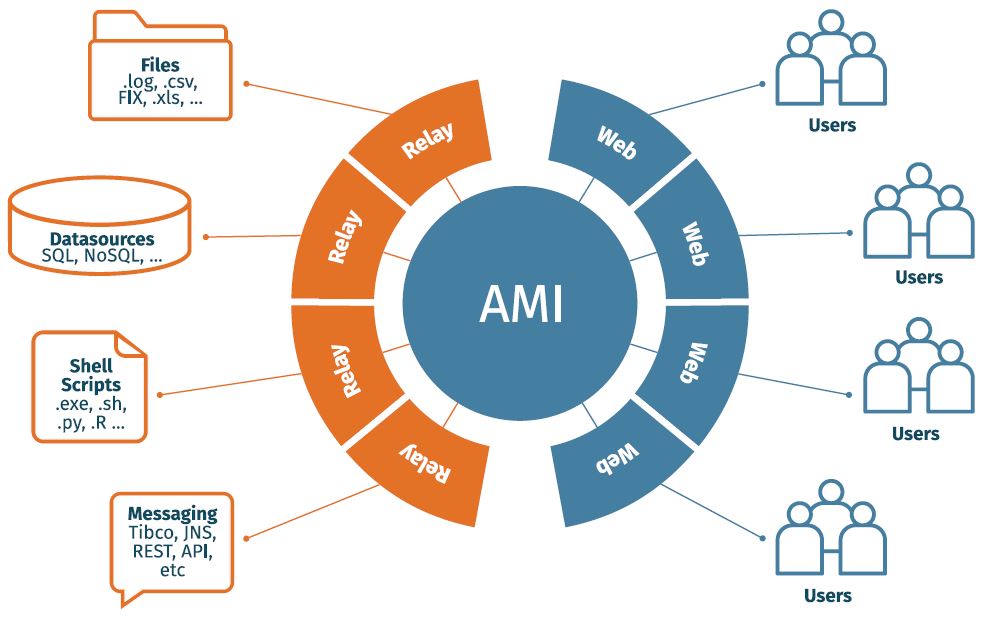

Does agentic AI spell doom for SaaS?

The reason agentic AI is perceived as a threat to SaaS and not to traditional apps is that traditional apps have all but disappeared, replaced by on-demand versions of former client software. But it goes beyond that. AI is considered a potential threat to SaaS for several reasons, mostly because of how it changes who is in control and how software is used. Agentic AI changes how work gets done because agents act on behalf of users, performing tasks across software platforms. If users no longer need to open and use SaaS apps directly because agents are doing it for them, those apps lose engagement and perceived usefulness. That ultimately translates into lost revenue, since SaaS apps typically charge either per user or by usage. An advanced AI agent can automate the workflows of an entire department, which may span multiple SaaS products. So instead of paying for all those subscriptions, you just use an agent to do it all. That can lead to significant savings in software costs. On top of the cost savings are time savings. Jeremiah Stone, CTO of enterprise integration platform vendor SnapLogic, said agents have resulted in a 90% reduction in the time spent on data entry and reporting into the company’s Salesforce system.

Ask a CIO Recruiter: Where Is the ‘I’ in the Modern CIO Role?

First, there are obviously huge opportunities AI can provide the business, whether it’s cost optimization or efficiencies, so there is a lot of pressure from boards, and sometimes from CEOs themselves, asking ‘what are we doing in AI?’ The second side is that there are significant opportunities for AI to enable the business in decision-making. The third leg is that AI is not fully leveraged today; it’s not yet very easy to use. That is coming, and CIOs need to be able to prepare the organization for that change. CIOs need to prepare their teams, as well as business users, and say ‘hey, this is coming, we’ve already experimented with a few things. There are a lot of use cases applied in certain industries; how are we prepared for that?’ ... Just having the vision to see where technology is going and trying to stay ahead of it is important. Not necessarily chasing the shiny new toy, the new technology, but just being ahead of it is the most important skill set. Look around the corner and prepare the organization for the change that will come. Also, if you retrain some of the people, you have to be more analytical, more business-minded. Those are good skills, and they’re not easy to find. A lot of people [who] move into the CIO role are very technical, whether it is coding or heavily on the infrastructure side. That is a commodity today; you need to be beyond that.

Insider risk management needs a human strategy

A technical-only response to insider risk can miss the mark; we need to understand the human side. That means paying attention to patterns, motivations, and culture. Over-monitoring without context can drive good people away and increase risk instead of reducing it. When it comes to workplace monitoring, clarity and openness matter. “Transparency starts with intentional communication,” said Itai Schwartz, CTO of MIND. That means being upfront with employees not just about the fact that monitoring is happening, but about what’s being monitored, why it matters, and how it helps protect both the company and its people. According to Schwartz, organizations often gain employee support when they clearly connect monitoring to security rather than surveillance. “Employees deserve to know that monitoring is about securing data – not surveilling individuals,” he said. If people can see how it benefits them and the business, they’re more likely to support it. Being specific is key. Schwartz advises clearly outlining what kinds of activities, data, or systems are being watched, and explaining how alerts are triggered. ... Ethical monitoring also means drawing boundaries. Schwartz emphasized the importance of proportionality: collecting only what’s relevant and necessary. “Allow employees to understand how their behavior impacts risk, and use that information to guide, not punish,” he said.
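Schwartz’s advice to spell out what is watched and how alerts fire, while collecting only what is necessary, maps naturally onto alert rules that are declared up front, published to employees and limited to the data they need. The sketch below is one hypothetical way to express that; the rule names, thresholds, the `company.example` domain and the event fields are invented, and this is not how MIND or any other product works.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class AlertRule:
    name: str
    fields: Tuple[str, ...]            # the only event fields this rule may read
    explanation: str                   # published to employees as-is
    condition: Callable[[Dict], bool]


RULES: List[AlertRule] = [
    AlertRule(
        name="bulk_download_confidential",
        fields=("action", "classification", "file_count"),
        explanation="More than 100 confidential files downloaded in one session.",
        condition=lambda e: e["action"] == "download"
        and e["classification"] == "confidential"
        and e["file_count"] > 100,
    ),
    AlertRule(
        name="external_share_confidential",
        fields=("action", "classification", "recipient_domain"),
        explanation="A confidential file shared outside the company domain.",
        condition=lambda e: e["action"] == "share"
        and e["classification"] == "confidential"
        and e["recipient_domain"] != "company.example",
    ),
]


def evaluate(event: Dict, rules: List[AlertRule] = RULES) -> List[Dict]:
    alerts = []
    for rule in rules:
        if not all(f in event for f in rule.fields):
            continue  # the event lacks what this rule looks at, so the rule does not apply
        # Proportionality: the rule, and the resulting alert, only see the declared fields.
        minimal = {f: event[f] for f in rule.fields}
        if rule.condition(minimal):
            alerts.append({
                "subject": event.get("user"),
                "rule": rule.name,
                "why": rule.explanation,
                "evidence": minimal,
            })
    return alerts


event = {"user": "u123", "action": "download", "classification": "confidential",
         "file_count": 250, "personal_calendar": "dentist at 3pm"}
for alert in evaluate(event):
    print(alert["rule"], "-", alert["why"], alert["evidence"])
```

Because each alert carries the same plain-language explanation that employees were shown in advance, a flagged person can see exactly why, which supports the “guide, not punish” approach.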

Sharing Intelligence Beyond CTI Teams, Across Wider Functions and Departments

As companies’ digital footprints expand exponentially, so too do their attack surfaces. And since phishing kits sold on cybercrime forums let even the least sophisticated hackers carry out most phishing attacks, it has never been harder for security teams to plug all the holes, let alone for other departments whose online initiatives might leave them vulnerable. Cyber threat intelligence (CTI), digital brand protection and other cyber risk initiatives shouldn’t be used only by security and cyber teams. Think about legal teams looking to protect IP and brand identities, or marketing teams looking to drive website traffic or demand generation campaigns. They might need to implement digital brand protection to safeguard their organization’s online presence against threats like phishing websites, spoofed domains, malicious mobile apps, social engineering, and malware. In fact, deepfakes targeting customers and employees now rank as the most frequently observed threat by banks, according to Accenture’s Cyber Threat Intelligence Research. There have even been instances of hackers tricking large language models into creating malware that can be used to steal customers’ passwords.
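One common building block of digital brand protection is spotting spoofed lookalike domains. A minimal sketch, assuming you already have a list of candidate domains to screen (for example from a newly registered domains feed, which is an assumption here): normalize a few digit-for-letter swaps, then flag names within a small edit distance of your brand or containing it outright. The function names, homoglyph table and threshold are illustrative choices, not a specific vendor’s or Accenture’s method.

```python
from typing import Iterable, List, Tuple

# A few digit-for-letter swaps seen in spoofed domains (illustrative, not exhaustive).
HOMOGLYPHS = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def levenshtein(a: str, b: str) -> int:
    """Classic edit distance via dynamic programming, one row at a time."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # delete ca
                           cur[j - 1] + 1,             # insert cb
                           prev[j - 1] + (ca != cb)))  # substitute (free if equal)
        prev = cur
    return prev[-1]


def first_label(domain: str) -> str:
    # "examp1e-login.com" -> "examp1e-login"; subdomain and IDN tricks are not handled.
    return domain.lower().split(".")[0]


def find_lookalikes(brand_domains: Iterable[str], candidates: Iterable[str],
                    max_distance: int = 2) -> List[Tuple[str, str, int]]:
    own = {d.lower() for d in brand_domains}
    brands = {first_label(d) for d in own}
    hits = []
    for candidate in candidates:
        if candidate.lower() in own:
            continue  # our own domain, not a spoof
        label = first_label(candidate).translate(HOMOGLYPHS)
        for brand in sorted(brands):
            distance = levenshtein(label, brand)
            # Close in spelling, or the brand embedded in a longer name ("brand-login").
            if distance <= max_distance or brand in label:
                hits.append((candidate, brand, distance))
                break
    return hits


candidates = ["examp1e.com", "exarnple.net", "example-login.com", "weather.com", "example.com"]
for hit in find_lookalikes(["example.com"], candidates):
    print(hit)
```

The substring check catches names like `example-login`, but with short brand names it will also produce false positives, so `max_distance=2` is a starting point to tune rather than a recommendation.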
