The firm's Risk in Review study said when risk management is at the top of its game, "leaders have a clear line of sight into threats for informed decision making." The report is based on a global survey of 2,073 CEOs, board members, and professionals in risk management, internal audit, and compliance, conducted in October and November 2018, and described six habits risk functions follow that help their companies set a course for sustainable growth. Digital transformations don't work well in isolation, the report said, because of the many connection points that can be exploited without proper controls. A well-thought-out and communicated digital strategy with growth targets and values anchors a risk culture. As organizations go all-in with transformations, the entire organization should prioritize items such as new technology, while risk functions set controls that map back to the strategy. In another survey recently conducted by the firm, CEOs globally said they expect the artificial intelligence (AI) "revolution to be bigger than the Internet revolution."

UK IoT research centre to tackle cyber risk

The centre’s research focus will be on the opportunities and threats that arise from edge computing, an innovative way to collect and analyse data in machine learning and artificial intelligence (AI) technology. When implemented successfully, edge computing can improve network performance by reducing latency, which is the time taken for data to traverse a system. “The centre’s ultimate aim is, by creating a trustworthy and secure infrastructure for the internet of things, to deliver a step change in socio-economic benefit for the UK with visible improvements for citizen wellbeing and quality of life,” said Jeremy Watson, Petras director and professor at University College London department of science, technology, engineering and public policy (STEaPP). “I expect productivity improvements and cost savings across a range of sectors including healthcare, transport and construction. In bringing together academics, industry technologists and government officials, our research will create accessible and relevant knowledge with clearly visible economic, societal or cultural impact that will help to cement the UK’s position as a world leader in this area.”

5 steps employers can take to retain project managers

Many project managers recognize their impact on the overall morale of the company and understand the need to remain positive and put on a "good face" for their teams, sponsors, and other stakeholders. The trouble is, employers may assume that what they see is what truly exists, and this can create a sense of complacency. As an employer, it's important to keep in regular contact with your project management professionals to ensure that no issues are affecting their job satisfaction. Although your project managers are likely to remain consummate professionals and push through to ensure that their projects are executed successfully, they could have concerns in some areas yet not feel supported enough to say anything. In fact, many project managers feel a great deal of responsibility to put the needs of others ahead of their own, sometimes to their own detriment. Take the time to regularly sit down with your project management professionals and keep up to date with the issues that affect their level of job satisfaction.

Mashreq Bank’s Lean Agile Journey

Snowdon stated that the goal of agile was to work in a more collaborative way, to get decisions closer to the customer, and to provide a better structure so that they could respond more quickly to customer-driven demand, rather than push products/services at customers. Capaldi stated, "I believe in kaizen and kaikaku as central concepts all companies must value; I will therefore only get involved in a transformation if I see these. In this case I was pleasantly surprised by the passion Steve and his team had in wanting to fully understand agile and these concepts were clearly there, and the fact they also come from a lean background just like me helped." "The head of the division was also massively behind the transformation and we very quickly agreed the metrics that would track progress," Capaldi mentioned. He said that agile is a journey; he prefers to challenge his clients by pointing out that they aren’t really trying to be agile, and that it’s ok to start with "fake agile". "Fake it till you make it!" he said.

Mind the overlap between GDPR and ePD, warns privacy lawyer

According to Ustaran and Campion, as the digital economy progresses, European data protection law is likely to lead to a more harmonised approach to its interpretation and enforcement, as reflected by the EDPB’s opinion. However, the situation going forward is far from clear-cut: the ePD was initially intended to be replaced by the proposed European ePrivacy Regulation (ePR) in May 2018, but the regulation was then expected to be implemented at some point in 2019 and now looks likely to take a little longer. “The whole e-Privacy Directive / forthcoming Regulation and GDPR debate is one of the most complex legal conundrums going on at the moment in this space,” Ustaran told Computer Weekly. “The recent EDPB opinion is very helpful in terms of understanding the regulators’ thinking, but where the e-Privacy Regulation fits in is a big missing piece,” he said. According to Ustaran, the e-Privacy Regulation is unlikely to be fully effective before 2020, given that the European Council has not decided on a preferred draft, which will then need to be discussed in detail with the European Parliament and the European Commission before being formally adopted.

Site reliability engineer shift creates IT ops dilemma

In some ways, the transitional struggle described by the SREcon attendee is unavoidable, according to experienced SREs who presented here this week. "If you talk to experienced veterans in the field, they might get a faraway look in their eye and say, 'Oh, yes, I remember that,'" said Jaren Glover, infrastructure ghostwriter at Robinhood, a fintech startup in Palo Alto, Calif. "A bit of this pain is par for the course." There are, unfortunately, no easy solutions to the problem, SREs said, though support from employers to hire new engineers and scale up site reliability engineer teams is crucial. "It's also a matter of prioritization," said Arnaud Lawson, senior infrastructure software engineer at Squarespace, a website creation company in New York, in an interview after his SREcon presentation on service-level objectives. "Even if 80% of the team is dedicated to firefighting, the rest can tap into automation to get rid of tedious work." At large enough companies, such as the professional networking site LinkedIn, SREs are sometimes repurposed from other teams to help those that struggle to meet team performance targets or who are overwhelmed by pager alerts.

Shared learning: Establishing a culture of peers training peers

“After you walk your teammates through how you apply a skill, let them test it out on their own to see whether they can repeat the process you used and achieve the same or a similar result,” he says. With so many organizations relying on technology for training, this hands-on aspect is key. “We’re moving from a world where just watching online tutorials and going to classes was enough to one that emphasizes experiential learning. Just knowing isn’t enough — it’s about doing,” Schawbel says. “If you’re lucky, your organization will give you access to learning, training, educational materials or subscriptions to various resources, but they aren’t actually providing the hands-on, peer-to-peer learning, mentorship, situational and project-based knowledge.” ... Once your coworkers have attempted to complete a task using the skill you taught them, review it, Schawbel says, but understand that nowadays, people don’t even like using the word “feedback,” and prefer “suggestions for improvement.” Here, the key is starting with the positive.

Understanding the role and need of a data protection officer

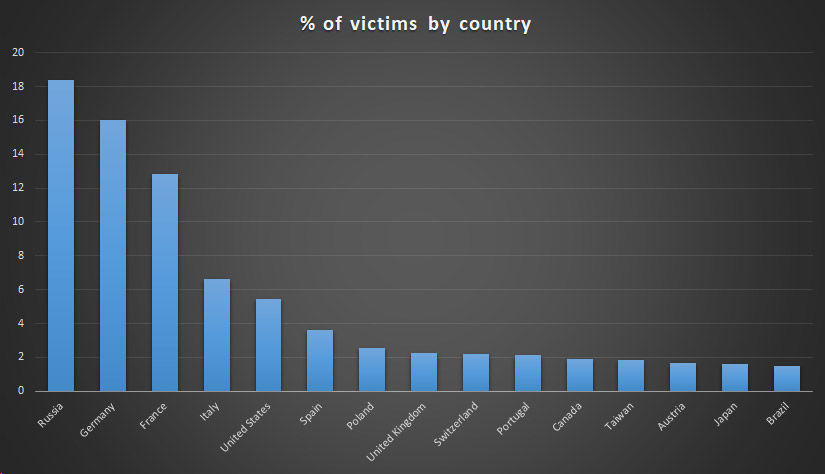

The DPO works alongside the other C-suite officers at your firm and maintains Data Protection Authority rules and regulations. This means that they should be expert or well-versed in the GDPR and all of its requirements, but it also means that the DPO needs to understand other jurisdictional requirements in the places around the world where your business operates. This responsibility is a serious one, and you should review the information available at the International Association of Privacy Professionals (IAPP) for further clarity. The IAPP is the world’s largest information privacy community and provides comprehensive data privacy and regulatory certification training. Because you have gotten this far, you must believe that your business has opportunities to create value through your data and data partnerships. You have also certainly noticed the seemingly daily disastrous headlines about data breaches plaguing companies. There have been hundreds of different data breaches involving more than 30,000 records each; some of these breaches affected hundreds of millions of data subjects.

Identifying exceptional user experience (UX) in IoT platforms

Enterprises should pick IoT platforms with superlative access to on-platform configuration functionality, with an emphasis on declarative interfaces for configuration management. Although many platform administrators are capable of working with RESTful API endpoints, good UX design should not require that platform administrators use third-party tools to automate basic functionality or execute bulk tasks. Some programmatic interfaces, such as SQL syntax for limiting monitoring views or dashboards for setting event processing trigger criteria, are acceptable and expected, although a fully declarative solution that maintains similar functionality is preferred. ... In general, the UX should be focused on providing information immediately required for the execution of day-to-day operational tasks while removing more complex functionality. These platforms should have easy access to well-defined and well-constrained operational functions or data visualization. An effective UX should enable easy creation and modification of data views, graphs, dashboards, and other visualizations by allowing operators to select devices using a declarative interface rather than SQL or other programmatic interfaces.

How IoT can transform four industries this year

"Among providers, IoT enablement will be leveraged toward the triple aim of cost, quality, and population health," Khaled said. Simple, embedded digital tools are already being piloted at large scale to mitigate infection risk around replaceable medical instruments, while smart threads and sticker or patch sensors have improved in their fidelity, tracking everything from cardiac readouts to body chemistry and sleep patterns. Among payers, IoT presents a distinct opportunity to enable smarter population risk management and accompanying reimbursement rate adjustments. IoT-enabled, long-term care facilities will be able to negotiate better rates if their sensor data supports fall risk and infection likelihood mitigation, Khaled said. The growing ecosystem of wearable fitness devices will help insurers recognize members who are (literally) taking steps to actively change their individual risk. IoT technologies supporting patient medication adherence will help both of these groups see major cost-saving and health improvement opportunities.

Quote for the day:

"True success is a silence inner process that can empower the mind, heart and soul through strong aspiration." -- Nur Sakinah Thomas