GPUs are already less commoditized than CPUs, and the huge surge of investment in AI chips suggests that GPUs will ultimately be replaced by something even more specialized. There is a bit of irony here, considering Nvidia came into existence on the premise that Intel’s x86 CPU technology was too generalized to meet the growing demand for graphics-intensive applications. This time, neither Intel nor Nvidia is going to sit on the sidelines and let startups devour this new market. The opportunity is too great. The likely scenario is that we’ll see Nvidia and Intel continue to invest heavily in Volta and Nervana. AMD has been struggling due to interoperability issues but will most likely come up with something usable soon. Microsoft and Google are making moves with Brainwave and the TPU, and a host of other projects; and then there are all the startups. The list seems to grow weekly, and you’d be hard-pressed to find a venture capital fund that hasn’t made a sizable bet on at least one of the players.

Will AI Disrupt Our Financial Systems?

According to the WEF report, “unlocking the full potential of AI requires an extensive network of partnerships.” Financial institutions will become “ecosystem curators” with “massive scale of data and insight.” China-based Ping An’s One Connect provides hundreds of small and medium-sized banks with services built on AI technology. The company has data from “over 880 million users, 70 million businesses and 300 partners” that fuel its suite of applications for finance, insurance, payments, and even telemedicine. Partnerships between financial service institutions and technology companies are on the rise. For example, WEF highlights the recently announced joint venture between Amazon, Berkshire Hathaway and JPMorgan Chase to develop a health plan for employees. The challenges of this new dynamic include protecting proprietary data between institutional partners, selecting the right services that will generate revenue, and regulating third-party services.

TensorFlow 2.0 Is Coming; Here’s What You Should Look Forward To

TensorFlow would soon be holding a series of public design reviews covering the planned changes. “This process will clarify the features that will be part of TensorFlow 2.0, and allow the community to propose changes and voice concerns,” said Wicke. Wicke also added that to ease the transition, they would be creating a conversion tool which would update Python code to use TensorFlow 2.0 compatible APIs, or at least warn the user in cases where such a conversion is not possible automatically. “TensorFlow’s tf.contrib module has grown beyond what can be maintained and supported in a single repository. Larger projects are better maintained separately, while we will incubate smaller extensions along with the main TensorFlow code. Consequently, as part of releasing TensorFlow 2.0, we will stop distributing tf.contrib. We will work with the respective owners on detailed migration plans in the coming months, including how to publicise your TensorFlow extension in our community pages and documentation,” Wicke concluded.
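To make the idea of such a conversion tool concrete, here is a minimal sketch in Python of the two behaviours described above: rewriting calls where a direct replacement exists, and warning where automatic conversion is not possible. This is not the TensorFlow team's implementation. The function name `upgrade_source`, the `RENAMES` table and its two sample entries are assumptions chosen for illustration only.

```python
import re

# Illustrative rename table: sample entries assumed for this sketch,
# not the mapping used by any real conversion tool.
RENAMES = {
    "tf.log": "tf.math.log",
    "tf.random_normal": "tf.random.normal",
}


def upgrade_source(source):
    """Return (converted_source, warnings) for a string of Python code."""
    warnings = []
    out_lines = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for old, new in RENAMES.items():
            # Match the old name only as a complete dotted symbol, so that
            # "tf.log" does not rewrite "tf.logging".
            pattern = r"(?<![\w.])" + re.escape(old) + r"(?![\w.])"
            line = re.sub(pattern, new, line)
        # tf.contrib has no automatic replacement here, so only warn.
        if re.search(r"(?<![\w.])tf\.contrib\b", line):
            warnings.append(
                f"line {lineno}: tf.contrib usage needs manual migration"
            )
        out_lines.append(line)
    return "\n".join(out_lines), warnings


sample = "y = tf.log(x)\nz = tf.contrib.layers.flatten(y)"
converted, notes = upgrade_source(sample)
print(converted)
for note in notes:
    print("WARNING:", note)
```

A text-based rewrite like this is only a rough approximation. A production tool would more plausibly work on the parsed syntax tree so that strings, comments and aliased imports are handled correctly.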

Alibaba, through its subsidiary Lynx International, integrated blockchain technology to track information in its cross-border logistics services. With the successful application of blockchain, Lynx can keep an immutable record of shipment information such as production, transportation, customs, inspection and any third-party verification. “Although the concept of blockchain has only recently started to emerge, it has a very wide range of applications,” said Tang Ren, technical director at Lynx. For a shipping and logistics arm like Lynx, the importance of security and transparency cannot be overemphasized. It’s really no surprise Alibaba looked no further than blockchain. More recently, another of Alibaba’s subsidiaries, T-Mall, in partnership with Cainiao, adopted blockchain technology for its cross-border supply chain. Similar to the Lynx project, blockchain is being used to track information about shipments from over 50 countries.

Is data science a bubble?

Though that sounds like prime bubble, I’m actually pretty optimistic. Growing data means growing opportunities — it all just needs good management. My friend, for example, ended up conquering a lot of his problems by recognizing that the rest of his organization needed training in how to work with data scientists. Since then, his teams have been more thoughtful about how to assign work and great things followed. Training decision-makers in how to make use of data science saved the day!

Check that your decision-makers have the right skills for working with data scientists. If a bubble exists, that might be the root of it. The challenge for today’s data science leaders is to help decision-makers get training like that, creating more people with the skills to point the technical brilliance of data scientists in valuable directions. Once data scientists are able to make themselves useful, keeping them around becomes a no-brainer, rather than a matter of fashion. Will we manage it before their data scientist title falls out of favor and they scramble towards another rebranding?

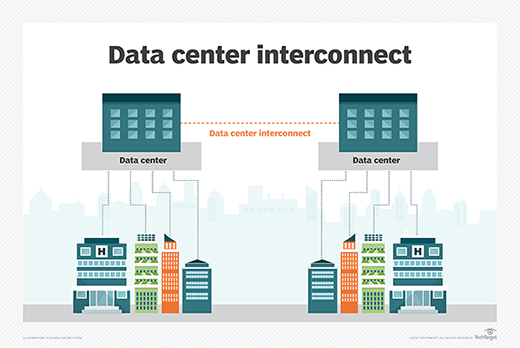

CBP launching blockchain testing

“Data without borders,” a fundamental principle of blockchain technology, “sounds good if you’re only looking at shipping, but you have to take into account that we have importation entry data coming in, and we have 47 agencies ... that aren’t just going to give up their sovereignty of their laws and their rules,” Annunziato said. “So it’s a very interesting time right now, but I think it’s a good time for the government to be involved because we’re starting to really push forward and make sure things are honest and working the way they’re supposed to.” CBP also is working with the Commercial Customs Operations Advisory Committee (COAC) on a proof-of-concept exercise exploring the use of blockchain in the intellectual property environment to identify IP licensees and licensors, Annunziato said. “So if you have a rights holder that is granting licenses to Company A, and then did they also grant the right for Company A to license out? You can now follow generationally what’s going on. So in a way the government’s got a view of that interaction with the company, and we see it as a worthwhile venture for the rights holders,” he said.

How to prevent phishing by studying the psychology behind digital fraud

Two researchers working in Carnegie Mellon University's Department of Social and Decision Sciences decided to look beyond the reasons why users fall for online fraud attacks. "Psychological research on human adversarial behavior is necessary to uncover factors that determine how deception and phishing strategies originally manifest in phishing emails," explain Prashanth Rajivan and Cleotilde Gonzalez in their coauthored paper, Creative Persuasion: A Study on Adversarial Behaviors and Strategies in Phishing Attacks. "Currently, there is a severe lack of work on the psychology of criminal behaviors in cybersecurity." The two decided to change that, looking specifically at the importance of incentives, how much of a role creativity plays, and the effect of adversarial strategies on attack success. To determine the importance of each item above, Rajivan and Gonzalez developed a two-part experiment consisting of these phases.

Google Is Turning Itself Into An AI Company

To maintain its foothold and protect its main source of revenue, Alphabet (Google’s parent company) is positioning itself to dominate adjacent sectors — such as digital commerce, branded hardware products, and content — and attempting to integrate its services into every aspect of the digital user experience. The company is also seeking out new streams of revenue in sectors with large addressable markets, namely on the enterprise side with cloud computing and services. Furthermore, it’s looking at industries ripe for disruption, such as transportation, logistics, and healthcare. Unifying Alphabet’s approach across initiatives is its expertise in AI and machine learning, which the company believes will help it become an all-encompassing service for both consumers and enterprises. In this teardown, we dive into Google’s approach to maintaining its search platform dominance, outlining the strategic investments, acquisitions, and partnerships across its top priorities moving forward.

On Becoming A Scientific HR Function – Learning From Amazon And Google

As Amazon puts it, “We manage HR as a business.” So, rather than simply “aligning with business goals,” the scientific model focuses HR actions and resources so that they produce the maximum direct, measurable impact on business results. The two primary areas where HR can traditionally produce the highest business impacts are increasing the productivity of the workforce and improving the volume and speed of product and process innovation. Under this scientific approach, HR focuses on solving broad strategic business problems (e.g., decreased sales, product development or missed deadlines) rather than tactical HR problems. And finally, under this model, HR problems and results are converted to their dollar impact on revenue (e.g., the retention efforts on salespeople allowed us to maintain $2.5 million in sales revenue). Reporting results in revenue impact dollars allows executives to quickly compare HR’s dollar impacts to those from other business functions.

Hybrid optical-electronic CNN with optimized diffractive optics for image classification

Convolutional neural networks (CNNs) excel in a wide variety of computer vision applications, but their high performance also comes at a high computational cost. Despite efforts to increase efficiency both algorithmically and with specialized hardware, it remains difficult to deploy CNNs in embedded systems due to tight power budgets. Here we explore a complementary strategy that incorporates a layer of optical computing prior to electronic computing, improving performance on image classification tasks while adding minimal electronic computational cost or processing time. We propose a design for an optical convolutional layer based on an optimized diffractive optical element and test our design in two simulations: a learned optical correlator and an optoelectronic two-layer CNN. We demonstrate in simulation and with an optical prototype that the classification accuracies of our optical systems rival those of the analogous electronic implementations, while providing substantial savings on computational cost.

Quote for the day:

"Good leaders make people feel that they're at the very heart of things, not at the periphery." -- Warren G. Bennis