Disentangling The Data Centre ‘Skills Shortage’ Conundrum?

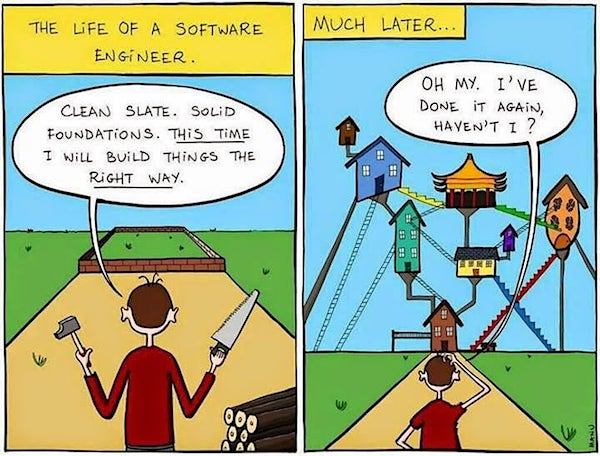

In principle, a skills shortage appears where the capabilities available do not match the roles that are vacant. We certainly have that, but compounding the issue is a physical lack of people. Genuine skills shortages can be resolved by retraining the people available to work in the roles available. It’s a pretty simple equation: find people, train or retrain them, and employ them. Vocational and specific training programs resolve this issue highly effectively and should not be discounted as part of a broader response. This is particularly so where existing labour forces are given the vocational skills to keep up with changes in technology, customer demand or process, for example. Sadly for the data centre sector, we have an underlying labour shortage too. We simply do not have enough people coming into the sector to train into the roles available or to keep up with expected shifts in demand. We have both skills AND labour shortages. Each one demands a different suite of interventions, and this is just where the complexity starts.

What’s the difference between a BCMS and a BCP?

Organisations and regulators don’t often agree on how businesses should be run, but lately both have championed the adoption of business continuity – a method that enables organisations to keep functioning during an incident, and to address the prevention of and response to disruptions. Business continuity has proved essential in the modern landscape, with the number of cyber attacks on the rise and the amount of information being stored by organisations growing rapidly. But for all the agreement over the importance of business continuity, there is one area of disconnect. Some organisations have adopted a BCMS (business continuity management system) and others a BCP (business continuity plan). These might sound like two names for the same thing, but there’s an important difference. ... It’s possible to have a BCP but not a fully fledged BCMS. That’s because there are further steps to a BCMS after the plan is in place – namely: developing, testing and reviewing the BCP. Completing these steps obviously involves a bigger investment in time and resources.

4 Artificial Intelligence Use Cases That Don’t Require A Data Scientist

Today, your IT operations team likely spends a huge amount of time and mental energy tending to performance thresholds—for example, when an application slows down too much, the system generates an alert. But as the application code, the configurations, or the infrastructure change, the ops team must constantly reset and manage those thresholds. The amount of monitoring data generated is also growing significantly, which means the IT ops team is doing a lot of work just managing logs, which provide the data for setting thresholds. A better way is to put all the web, application, and database performance data, the user experience data, and the log data into one cloud-based data platform. Then let that system—using baseline-setting algorithms in machine learning—learn what the thresholds should be. With the baseline established, another technique called anomaly detection can identify when application performance is trending toward these thresholds, and trigger alerts with suggested corrective actions or automatically take corrective action.
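As a rough illustration of the baseline-then-anomaly idea, here is a minimal Python sketch. It assumes the simplest possible baseline (rolling mean plus a multiple of the standard deviation) as a stand-in for whatever algorithms a real monitoring platform uses; the class name, window size and sigma multiplier are all hypothetical choices, not taken from the article.

```python
from collections import deque
from statistics import mean, stdev


class RollingBaseline:
    """Learns an upper threshold from recent samples instead of a hand-set value."""

    def __init__(self, window=30, sigmas=3.0):
        self.samples = deque(maxlen=window)  # only the most recent `window` samples
        self.sigmas = sigmas                 # hypothetical sensitivity setting

    def threshold(self):
        # With fewer than two samples no spread can be estimated yet.
        if len(self.samples) < 2:
            return None
        return mean(self.samples) + self.sigmas * stdev(self.samples)

    def observe(self, value):
        """Return True if `value` exceeds the learned threshold.

        Normal values are added to the baseline; anomalous ones are not,
        so a single spike does not drag the threshold upward.
        """
        limit = self.threshold()
        is_anomaly = limit is not None and value > limit
        if not is_anomaly:
            self.samples.append(value)
        return is_anomaly


def detect(latencies_ms, window=30, sigmas=3.0):
    """Return the indexes of samples flagged as anomalous slow-downs."""
    baseline = RollingBaseline(window, sigmas)
    return [i for i, v in enumerate(latencies_ms) if baseline.observe(v)]


# Made-up response times in milliseconds; the tenth sample is a spike.
latencies = [100, 102, 98, 101, 99, 100, 103, 97, 100, 500, 101]
flagged = detect(latencies)
```

The sketch only flags values above the baseline, since the passage is concerned with applications slowing down. A production system would also need to handle seasonality and gradual drift.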

Raspberry Pi and machine learning: How to get started

Although the relatively low-specced Pi isn't an obvious choice for machine learning, the board's compact size and low power consumption mean it's well suited to building mobile homemade gadgets and robots. Machine learning can help these devices handle new tasks, using image recognition to "see" and speech recognition to "hear". However, there are definite limits to the Pi's ML capabilities. There are two main stages to machine learning: training, during which the model learns how to perform a given task, and inference, when the trained model is used to perform that task. The Pi's limited processing power means it's not suitable for training anything but the simplest machine-learning models. Instead, this stage is typically carried out on a machine with at least a mid- to high-end GPU. However, the Pi is capable of performing inference, of actually running the trained machine-learning model, albeit rather slowly.
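The split between the two stages can be sketched in a few lines of plain Python. This is a toy example under stated assumptions: a tiny perceptron stands in for a real model, JSON stands in for a real model file format, and the function names are hypothetical. A real Pi project would normally use an ML framework rather than hand-written code.

```python
import json


def train_perceptron(samples, labels, epochs=20, lr=1.0):
    """Training stage: learn weights from labelled data (the expensive part)."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            score = sum(w * xi for w, xi in zip(weights, x)) + bias
            error = y - (1 if score > 0 else 0)
            # Nudge the weights toward the correct answer when the guess was wrong.
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return {"weights": weights, "bias": bias}


def predict(model, x):
    """Inference stage: apply already-trained weights (the cheap part)."""
    score = sum(w * xi for w, xi in zip(model["weights"], x)) + model["bias"]
    return 1 if score > 0 else 0


# Train on a toy logical-AND dataset, as if on the more powerful machine...
data = [[0, 0], [0, 1], [1, 0], [1, 1]]
labels = [0, 0, 0, 1]
exported = json.dumps(train_perceptron(data, labels))

# ...then load the exported model and run inference only, as if on the Pi.
model_on_pi = json.loads(exported)
predictions = [predict(model_on_pi, x) for x in data]
```

Inference here is a single weighted sum per input, which is why a low-powered board can run a model it could not practically train.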

How Connected Cars And Insurance Are Influenced By Big Data

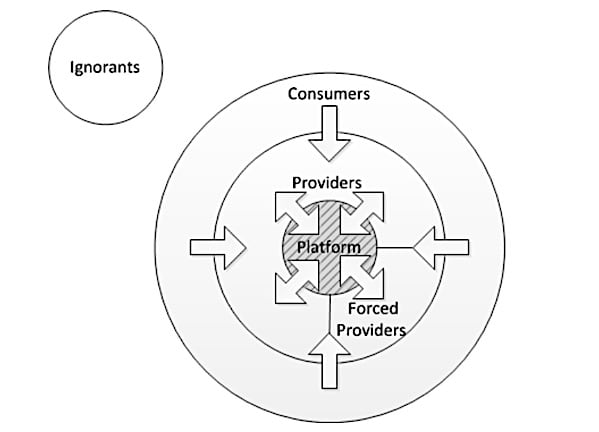

Many insurance carriers use the customer driving patterns they acquire to set insurance premium rates accordingly. Premium discounts based on driving behavior, mileage, and other metrics are slowly becoming realities in this highly innovative arena. There are a host of other evaluation models, such as PAYD, PHYD and MHYD, which are put forward as different versions of the Usage Based Insurance plan. Each of these models targets a specific driving metric, offering insurance premium rates based on an analysis of the quality of the driver concerned. With premiums directly related to driving performance, connected cars can blur the lines between vehicle usage and customer privacy, as anything and everything inside the vehicle can be tracked, rather seamlessly. ... With the applications growing in large numbers, a relatively stronger ecosystem is being created around connected cars. The participants include sensor manufacturers, telecommunication firms, insurance companies, and even the automakers, with each one connected to the others by the threads of Big Data.

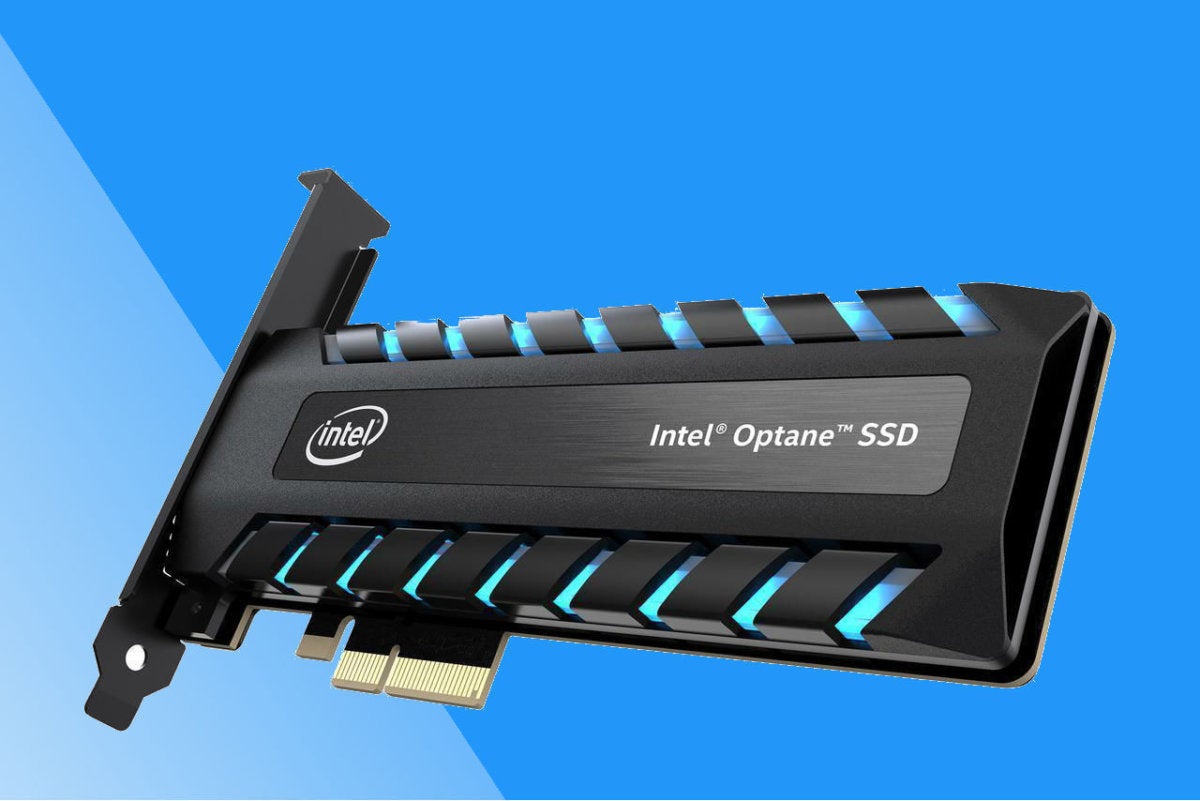

World's first four-bit 4TB SSDs for consumer devices coming this year

The downside of moving up to four bits per memory cell, according to Samsung, is that it makes it harder to maintain a device's performance and speed because the extra density would cause the electrical charge to fall by as much as half. However, Samsung says its new SSDs are on par with the performance of its three-bit SSDs, achieved by using a three-bit SSD controller and its TurboWrite technology, and by boosting capacity with 32 chips based on its 64-layer fourth-gen 1Tb (terabit) V-NAND chip. Samsung boasts that its QLC SSDs will improve efficiency for consumer computing, including in smartphone storage, where the 1Tb four-bit V-NAND chip will allow it to efficiently churn out 128GB memory cards for smartphones. ... Samsung is planning on releasing four-bit consumer SSDs later this year with 1TB, 2TB, and 4TB capacities in the widely used 2.5-inch form factor. As Samsung notes, this is a massive step up from the 32GB one-bit SSD it launched in 2006, followed by its two-bit 512GB SSDs in 2010, and three-bit or triple-level cell SSD in 2012.
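The capacity figures are easier to follow as arithmetic. The sketch below assumes the chip density is one terabit (not one terabyte) and uses the binary convention of 1,024 gigabits per terabit. Under those assumptions, one chip gives the 128GB card and 32 chips give the 4TB drive. The function names are illustrative only.

```python
GBIT_PER_TBIT = 1024  # assumption: binary convention for NAND die density
BITS_PER_BYTE = 8
GB_PER_TB = 1024


def voltage_states(bits_per_cell):
    """Distinct charge levels a cell must hold to store this many bits."""
    return 2 ** bits_per_cell


def chip_capacity_gb(density_tbit):
    """Capacity in gigabytes of one chip of the given density in terabits."""
    return density_tbit * GBIT_PER_TBIT // BITS_PER_BYTE


def drive_capacity_tb(chips, density_tbit):
    """Capacity in terabytes of a drive built from `chips` identical chips."""
    return chips * chip_capacity_gb(density_tbit) / GB_PER_TB


print(voltage_states(3), voltage_states(4))  # 8 16
print(chip_capacity_gb(1))                   # 128
print(drive_capacity_tb(32, 1))              # 4.0
```

Going from three to four bits per cell doubles the number of charge levels from 8 to 16, which leaves less margin between adjacent levels.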

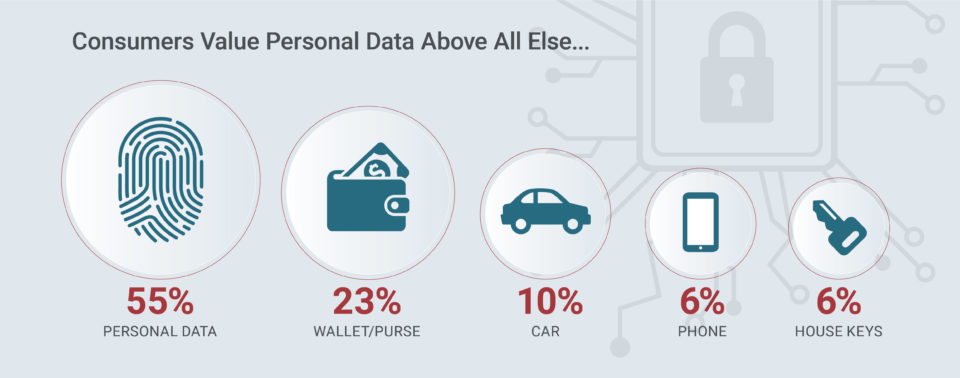

Consumer Sentiments About Cybersecurity and What It Means for Your Organization

While suffering a data breach is never ideal, the survey also shows that honesty, transparency and a timely emergency response plan are critical. Companies must clearly communicate that a breach has occurred, who is likely to be affected, and what remediation actions are planned to address the issue. Organizations that don’t admit to compromised consumer records until long after the breach took place suffer the greatest wrath from consumers. Successful organizations must create a secure climate for customers by embracing technology and cultural change. Security threats and data breaches can seriously impact a customer’s loyalty, thereby damaging the corporate brand, increasing customer churn, and incurring lawsuits. Corporate leaders must recognize the multiple pressures on their organizations to integrate new network technologies, transform their businesses and defend against cyberattacks. Executives who are willing to embrace technology and cultural change and to prioritize cybersecurity will be the ones to win the trust and loyalty of the 21st century consumer.

Adapting Blockchain for GDPR Compliance

Perhaps the most interesting, and most controversial, article related to Blockchain’s applicability to GDPR is Article 25, “Data protection by design and by default,” which addresses pseudonymization techniques for consumers’ stored data. Hashing is Blockchain’s pseudonymization technique, and there are two critical interpretations for the pseudonym linkage using Blockchain relative to Article 25. The first states that because Blockchain hashing accomplishes pseudonymization, but not anonymization, the data linkage is no longer considered personal when it is established, and if this linkage is deleted, it also complies with Article 17. However, the second interpretation is that pseudonymized data, even with all cryptographic hashes, can still be linked back to the original PII data. There still may, however, need to be some mathematical proof that a brute-force cyberattack on the off-chain data linkage using hashing can compromise this assumption.
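The concern behind the second interpretation can be illustrated with a short Python sketch. It assumes a low-entropy identifier (a made-up four-digit phone extension) and compares a plain SHA-256 hash with a keyed hash whose key plays the role of the off-chain linkage. The identifiers, function names and scheme are hypothetical, and nothing here is a statement about what actually satisfies GDPR.

```python
import hashlib
import hmac
import secrets


def plain_hash(pii):
    """Unkeyed pseudonym: anyone can recompute it from a guessed input."""
    return hashlib.sha256(pii.encode("utf-8")).hexdigest()


def keyed_pseudonym(pii, key):
    """Keyed pseudonym: recomputing it requires the secret off-chain key."""
    return hmac.new(key, pii.encode("utf-8"), hashlib.sha256).hexdigest()


def brute_force(target_digest, candidates, hasher):
    """Try every candidate value; return the one whose hash matches, if any."""
    for candidate in candidates:
        if hasher(candidate) == target_digest:
            return candidate
    return None


# Hypothetical small identifier space: 10,000 possible values.
candidates = [f"555-{n:04d}" for n in range(10000)]

# A plain hash stored on-chain is recovered by simple enumeration.
on_chain = plain_hash("555-0142")
recovered = brute_force(on_chain, candidates, plain_hash)

# With a keyed hash, the same enumeration fails without the key.
key = secrets.token_bytes(32)
on_chain_keyed = keyed_pseudonym("555-0142", key)
recovered_keyed = brute_force(on_chain_keyed, candidates, plain_hash)
```

In this sketch `recovered` is the original identifier, while `recovered_keyed` is `None`. Deleting `key` removes the only practical way to link the on-chain value back to the person, which is roughly the scenario the first interpretation relies on.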

BGP hijacking attacks target payment systems

Justin Jett, director of audit and compliance for Plixer, said BGP hijacking attacks are "extremely dangerous because they don't require the attacker to break into the machines of those they want to steal from." "Instead, they poison the DNS cache at the resolver level, which can then be used to deceive the users. When a DNS resolver's cache is poisoned with invalid information, it can take a long time post-attacked to clear the problem. This is because of how DNS TTL works," Jett wrote via email. "As Oracle Dyn mentioned, the TTL of the forged response was set to about five days. This means that once the response has been cached, it will take about five days before it will even check for the updated record, and therefore is how long the problem will remain, even once the BGP hijack has been resolved." Madory was not optimistic about what these BGP hijacking attacks might portend because of how fundamental BGP is to the structure of the internet.
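The TTL effect Jett describes can be shown with a toy resolver cache. This is a simplified sketch, not how any particular DNS resolver is implemented. The class, the hostname, the address (taken from a range reserved for documentation) and the exact five-day TTL are all assumptions made for illustration.

```python
DAY = 24 * 60 * 60  # seconds


class ResolverCache:
    """Toy cache: a record is served until its TTL expires, then dropped."""

    def __init__(self):
        self._records = {}  # name -> (address, expiry time in seconds)

    def store(self, name, address, ttl_seconds, now):
        self._records[name] = (address, now + ttl_seconds)

    def lookup(self, name, now):
        entry = self._records.get(name)
        if entry is None or now >= entry[1]:
            # Expired or missing: only now would the resolver re-query upstream.
            self._records.pop(name, None)
            return None
        return entry[0]


cache = ResolverCache()

# Time 0: during the hijack, a forged answer with a five-day TTL is cached.
cache.store("pay.example", "203.0.113.66", ttl_seconds=5 * DAY, now=0)

# The hijack may be fixed within hours, but the cache keeps answering.
still_poisoned = cache.lookup("pay.example", now=4 * DAY)

# Only once the TTL has elapsed does the forged record drop out.
after_expiry = cache.lookup("pay.example", now=5 * DAY)
```

Here `still_poisoned` is the forged address and `after_expiry` is `None`. Until expiry the cache never asks upstream again, so correcting the route alone does not clear the bad answer.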

The 14 soft skills every IT pro needs

“Great knowledge in a vacuum doesn’t benefit an organization,” says Wilgus. “Every IT project — and position — is going to conclude with a deliverable, for example a design document, presentation, attestation report or updated code base. Without the necessary soft skills, the intended message being expressed in the deliverable could be lost. Candidates that have presented at conferences, or have been published, will have a leg up on other candidates. ... If there are errors in a two-page resume, what’s the likelihood this candidate can produce a formal report of more substantial length? Candidates should expect hiring organizations will ask for a writing sample." ... “Active listening is the process of reflecting back not only what you hear the other person saying but also to validate and verbalize the nontechnical aspects of the conversation,” Adato says. “This is one way to demonstrate emotional intelligence. Leveraging this technique gives the individual speaking the opportunity to clarify, while simultaneously demonstrating that this information matters to you personally.”

Quote for the day:

"It is easy to lead from the front when there are no obstacles before you, the true colors of a leader are exposed when placed under fire." -- Mark W. Boyer