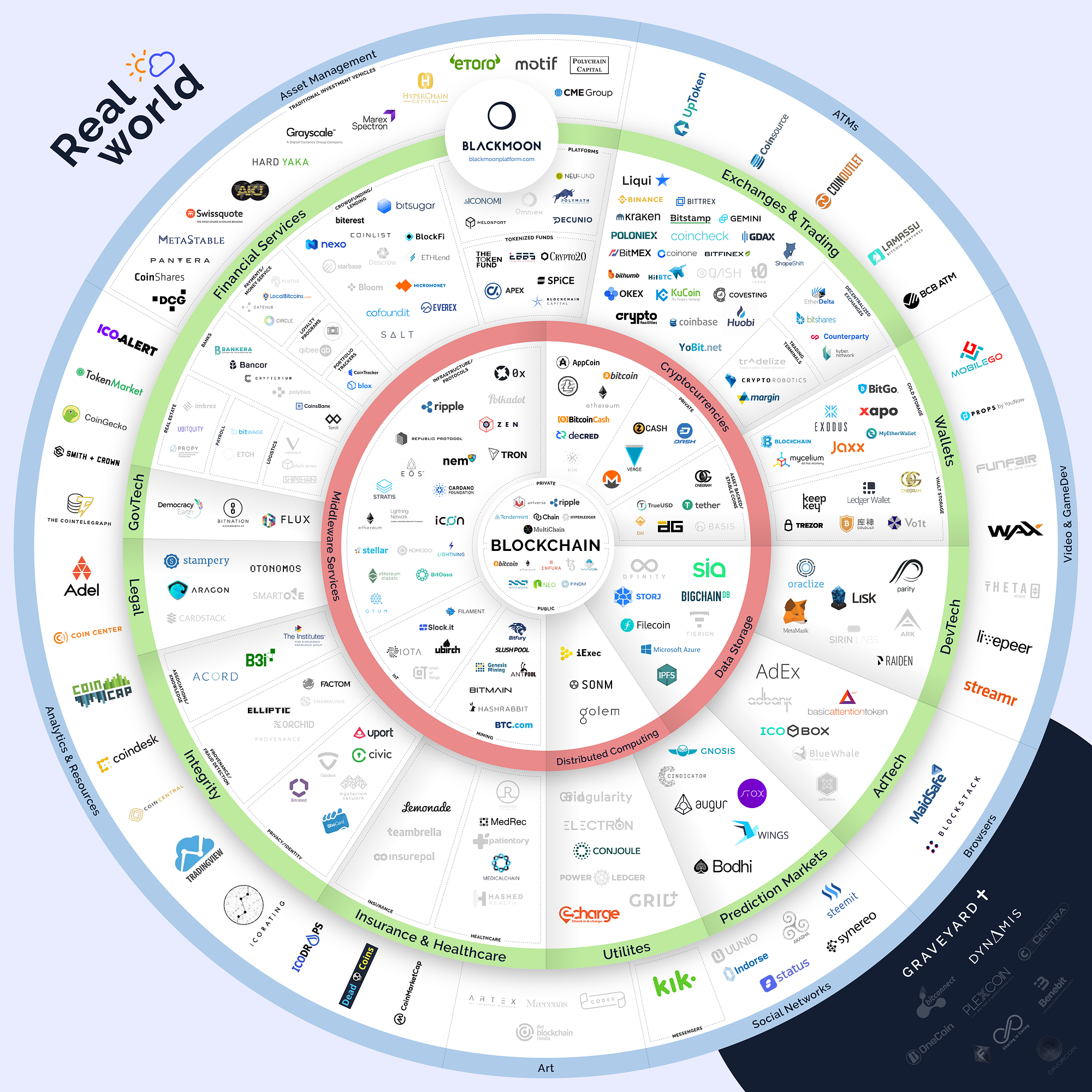

Globally, businesses are expected to invest $3.1bn in blockchain-based systems in 2018, according to IDC, more than double the previous year's figure. If these predictions are correct, RSA warns, security teams could be left blind to cyber attacks, because many traditional SIEM tools are unable to baseline the ‘new normal’ behaviours associated with blockchain, which could allow hackers to gain entry to corporate networks. “Opinions are mixed on whether blockchain is a flash in the pan, or the next major disruptor. However, there is evidence – particularly in financial services – that blockchain adoption is gaining momentum,” said Azeem Aleem, global director of RSA’s Advanced Cyber Defence Practice. “If this is the case, then organisations need to be prepared for the impact this could have on their security operations teams,” he said. As with any new technology, Aleem said, cyber attackers will look for vulnerabilities in how businesses implement blockchain, and any disruption or security breach caused by a blockchain-related vulnerability could have a serious impact on operations.

PKO launches blockchain-based documentation verification platform

Trudatum has been piloted by PKO BP for over a year as a result of the “Let’s Fintech” accelerator programme, alongside a number of other successful Coinfirm pilots with clients across Western Europe, the United States and Japan. The first stage of implementing Trudatum across the bank will focus on integrating it with PKO’s current systems and providing a solution that makes it possible to verify the authenticity of various bank documents. Every document recorded in the blockchain (e.g. proof of a transaction, or the bank’s terms and conditions for a given product) will be issued in the form of an irreversible abbreviation (a “hash”) signed with the bank’s private key. This will allow clients to verify remotely whether the files they received from a business partner or from the bank are genuine, or whether someone attempted to modify the document. Thanks to Coinfirm’s solution, PKO BP can now supervise transaction history and verify data more efficiently, saving both time and the cost of managing these processes. Beyond addressing these challenges, Trudatum also provides cryptographic security for digital signatures.
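The hash-based check described above can be illustrated with a minimal Python sketch. It assumes SHA-256 as the hash function and uses a plain dictionary (`ledger`, hypothetical) as a stand-in for the blockchain record; Trudatum's actual hash algorithm and storage are not described here. The step in which the bank signs the hash with its private key is deliberately omitted, so this shows only how a recorded digest exposes any modification of a document.

```python
import hashlib
import hmac

# Hypothetical stand-in for the blockchain record: document ID -> hash recorded at issuance.
# In the scheme described above the recorded hash is also signed with the bank's
# private key; that signature check is omitted from this sketch.
ledger: dict[str, str] = {}


def document_hash(content: bytes) -> str:
    """Return the SHA-256 digest of a document as a hex string (assumed algorithm)."""
    return hashlib.sha256(content).hexdigest()


def record(doc_id: str, content: bytes) -> None:
    """Store the document's digest, as the issuer would when publishing it."""
    ledger[doc_id] = document_hash(content)


def verify(doc_id: str, content: bytes) -> bool:
    """Return True only if the received content matches the recorded digest."""
    recorded = ledger.get(doc_id)
    # compare_digest avoids leaking information through comparison timing.
    return recorded is not None and hmac.compare_digest(recorded, document_hash(content))


record("terms-2018", b"Terms and conditions v1")
print(verify("terms-2018", b"Terms and conditions v1"))  # True: document unchanged
print(verify("terms-2018", b"Terms and conditions v2"))  # False: document was modified
```

Because a hash cannot be reversed, the record reveals nothing about the document's contents, yet changing even one byte of the file produces a different digest.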

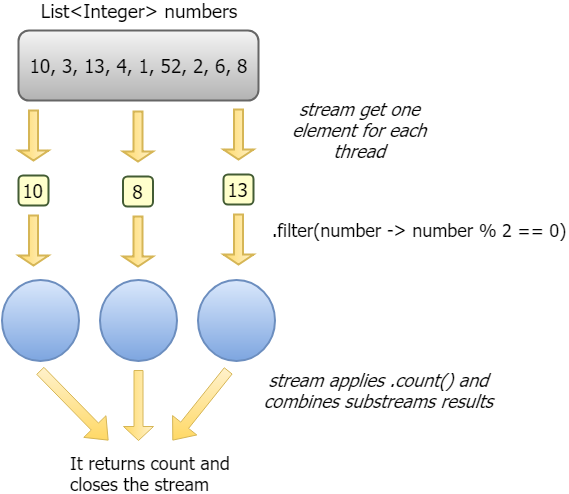

IT infrastructure management software learns analytics tricks

While some users remain cautious or even skeptical of AIOps, IT infrastructure management software of every description, from container orchestration tools to IT monitoring and incident response utilities, now offers some form of analytics-driven automation. That ubiquity indicates at least some user demand, and IT pros everywhere must grapple with AIOps as the tools they already use add AI and analytics features. PagerDuty, for example, concentrated on data analytics and AI additions to its IT incident response software in 2017 and 2018. Event Intelligence, a new AI feature added in June 2018, identifies patterns in how humans remediate incidents, uses those patterns to understand service dependencies, and offers incident response suggestions to operators when new incidents occur. "The best predictor of what someone will do in the future is what they actually do, not what they think they will do," said Rachel Obstler, vice president of products at PagerDuty, based in San Francisco.

BOE tells U.K. banks cyber attacks are coming, now get ready

Financial regulators told firms to come up with a detailed plan for restoring services such as payments, lending and insurance after a disruption, and to invest in the staff and technology to make it work. The plan should include time limits on how long an outage could last. “Boards and senior management should assume that individual systems and processes that support business services will be disrupted, and increase the focus on back-up plans, responses and recovery options,” the Bank of England and the Financial Conduct Authority said. The discussion paper published on Thursday is part of the regulators’ effort to bolster the resilience of financial firms in response to a rising number of operational failures. The focus is on ensuring continuity of business services that are essential for the economy. The regulators underlined the role that firms’ senior officials have to play in improving their ability to bounce back in a crisis. Thursday’s paper is intended to spark a debate with industry and consumers on how best to respond to inevitable disruptions.

Collaborative Intelligence: Humans and AI Are Joining Forces

As AIs increasingly reach conclusions through processes that are opaque (the so-called black-box problem), they require human experts in the field to explain their behavior to nonexpert users. These “explainers” are particularly important in evidence-based industries, such as law and medicine, where a practitioner needs to understand how an AI weighed inputs into, say, a sentencing or medical recommendation. Explainers are similarly important in helping insurers and law enforcement understand why an autonomous car took actions that led to an accident—or failed to avoid one. And explainers are becoming integral in regulated industries—indeed, in any consumer-facing industry where a machine’s output could be challenged as unfair, illegal, or just plain wrong. For instance, the European Union’s new General Data Protection Regulation (GDPR) gives consumers the right to receive an explanation for any algorithm-based decision, such as the rate offer on a credit card or mortgage. This is one area where AI will contribute to increased employment: Experts estimate that companies will have to create about 75,000 new jobs to administer the GDPR requirements.

Nasdaq CIO Puts AI to Work

“There’s not an industry that I can see that won’t benefit (from AI),” said Mr. Peterson, Nasdaq’s CIO. Technology executives at Nasdaq and other firms say the big value in AI comes when it’s paired with human workers, in what’s known as “AI augmentation.” Gartner Inc. forecasts that in 2021 AI augmentation will generate $2.9 trillion in business value and recover 6.2 billion hours of worker productivity. Last year, Nasdaq’s in-house team of data scientists and data engineers built an AI system that helps analysts write change-of-ownership reports. Such reports typically include information for chief executives and investor relations officers about institutional activity, including top buyers and sellers, as well as shareholder analysis, price performance and valuation. In the past, the reports were higher quality when the humans writing them had a lot of experience. But when those analysts moved on to other jobs, it took time to train new employees to write the reports and, in turn, to ramp up the quality, Mr. Peterson said. The AI system, currently in a pilot phase, quickly generates some portions of the report at high quality, freeing human analysts to spend more time providing deeper context and advising clients, Nasdaq said.

While no one was looking, California passed its own GDPR

What happens now? If you do business in California, you have to comply with the law, and so does any company to which you sell customer data. If they violate the law, you are on the hook for it. You also have to add a “Do Not Sell My Personal Information” link to your site. No doubt the law will be challenged, and the ballot initiative can always come back if the law is weakened or overturned. If you are potentially affected by GDPR in any way, you should already have done some compliance work. Now, if you do business in California, you will have to, even if you aren’t located in the state. Basically, all the best practices for GDPR apply here. This means making sure all of your data is accurate. Now would be a very good time to revisit customer and mailing lists, because any inaccuracies you find now will save you the trouble of fixing them later. Old, outdated or obsolete data can be removed. Make sure all data collection channels know the new rules and adjust accordingly, so they take in correct data and can quickly retrieve it to make changes or removals. Document your data handling rules so that everyone who handles data, whether for intake, editing or management, knows what is expected.

Reactive or Proactive? Making the Case for New Kill Chains

Organizations won't see these employees looking at job search websites. Instead, they will visit websites that let them circumvent web proxies. These websites allow them to hide and then jump to, for example, the Dark Web to move data and bypass controls. The next stage of the chain is when they persistently try to log into systems they do not normally have access to. They quietly "jiggle doors," looking for sensitive data that falls outside the scope of their own role and the roles of their peers and overall team. Taken together, these two stages, visiting suspicious websites and jiggling doors, are a strong indication that a person may be a persistent threat. The next stage is when the person acts. For example, the person may regularly encrypt small amounts of sensitive data and exfiltrate it outside the network. Breaking the data into small amounts is meant to evade detection, and encrypting it makes detection even harder because the company cannot see what's inside. Obviously, the goal is to stop the person before they reach the final stage of exfiltration.
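The staged logic above can be sketched as a simple rule in Python. This is an illustrative sketch, not a product or the author's method: the event labels (`proxy_bypass_site`, `access_denied`, `small_encrypted_upload`), the `Event` record and the `min_attempts` threshold for "persistently" trying doors are all hypothetical assumptions. A real deployment would derive such events from proxy, authentication and data-loss-prevention logs.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Event:
    user: str
    # Hypothetical labels: "proxy_bypass_site", "access_denied", "small_encrypted_upload".
    kind: str


def assess(events: list[Event], min_attempts: int = 3) -> dict[str, str]:
    """Classify users by the furthest kill-chain stage observed (hypothetical rules)."""
    counts: dict[str, Counter] = {}
    for e in events:
        counts.setdefault(e.user, Counter())[e.kind] += 1

    result: dict[str, str] = {}
    for user, c in counts.items():
        if c["small_encrypted_upload"] > 0:
            # Final stage: data is already leaving the network.
            result[user] = "exfiltrating"
        elif c["proxy_bypass_site"] > 0 and c["access_denied"] >= min_attempts:
            # Both precursor stages: suspicious sites plus repeated door-jiggling.
            result[user] = "persistent_threat"
        elif c["proxy_bypass_site"] > 0 or c["access_denied"] > 0:
            # Only one precursor signal so far: keep watching.
            result[user] = "watch"
    return result


events = [Event("alice", "proxy_bypass_site")] + [Event("alice", "access_denied")] * 3
events += [Event("bob", "proxy_bypass_site"), Event("carol", "small_encrypted_upload")]
print(assess(events))  # {'alice': 'persistent_threat', 'bob': 'watch', 'carol': 'exfiltrating'}
```

Scoring the combination of precursor stages, rather than any single event, reflects the argument above: the aim is to flag a likely threat while it is still at the "persistent_threat" level, before it reaches exfiltration.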

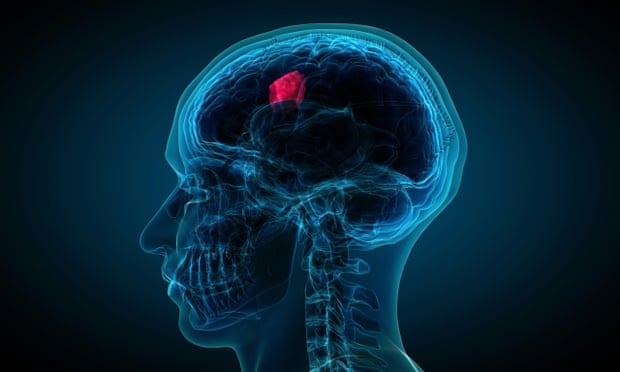

You can no longer afford to indulge cloud blockers

Many enterprises today are highly successful with cloud computing, and the evidence clearly shows that the cloud is more secure than on-premises systems, costs less to operate, and provides key strategic capabilities such as agility and reduced time to market. But there are still people who have kept cloud computing out of their companies for the last decade, at first through active resistance and dismissal and now through quiet passive-aggression. Today, they face a boss, a board of directors, and staffers who are all looking at new information, and perhaps competitors that are faster and more agile thanks to cloud computing. These cloud resisters are in a full-blown state of cognitive dissonance, which is bad for both them and their companies. Many of these people are seen as blockers, and so they lose their jobs; CIOs top the list. What a waste of talent! Worse, they also waste their companies’ time and money trying to prove to everyone that they were right about something they are not right about.

The Generational Shift in IT Drives Change for IT Pros

Instead of focusing on the challenges that emerging technologies bring, IT professionals should focus on the new opportunities they offer, just as they did when the Internet arrived and mobile devices became commonplace. IT professionals can play a key role in using technology-driven creativity to bring innovation, standardization, and simplicity to the business, helping the whole organization get ahead of the curve. To do this, IT has to move away from patching and backups toward value-creating activities such as design thinking, application development, user adoption and learning management. Even the smallest step, such as creating a chatbot that serves as an IT helpdesk, can transform organizational performance and individual productivity. Further, emerging technologies like artificial intelligence, natural language processing, blockchain and the Internet of Things are being built on cloud technology. Understanding these emerging technologies, the data they rely on, and how they can be applied to the business will be critical as IT professionals become strategic partners in deploying these technologies in the enterprise.

Quote for the day:

"The greatest single problem in communication is the illusion that it has occurred." -- G.B. Shaw