Evolution of Data Governance with Eric Falthzik

Falthzik explained that although those policies and guardrails are still important, business now moves too quickly to allow for such a slow-moving process. Workers need self-service access to data and analytics to remain competitive in the future. He added, “Enabling self-service involves some new areas of governance -- for example, pursuing active metadata management and being more diligent about data quality. We also need to discuss how we’re going to go forward in a world of data products and AI.” Another key component of modern data governance Falthzik recommends is implementing a federated architecture. “A centralized environment is part of the old-school process of a small group maintaining tight control over data; it won’t work in a self-service environment,” he said. “Business workers want to feel some sense of involvement in the process of governing the data they use daily. Additionally, some new concepts such as the data mesh recommend that the data domains be given far more autonomy, which can’t be done in a centralized environment.” He also noted that an added benefit of assigning more data operations to the business is that it will help identify those who would make the best data stewards.

Tech Works: How to Build a Career Like a Pragmatic Engineer

Specialist or Generalist? This is the question Orosz is asked in his continued conversations with developers: Should they dive deep into one technology or go broad? In last month’s issue of Tech Works, Kelsey Hightower argued you have to go deep to then be able to back up and take in the big picture. “It depends on the context of your company,” Orosz said. He offered an example: “If you’re, let’s say, a native mobile engineer, and everyone around you is a native mobile engineer, and there’s no opportunities to do web development, then probably the right thing is to go deep into that technology.” After all, you will have expert native mobile engineers around you to help you become an expert, too. At another point in your career, you may find yourself at a larger company that has many opportunities to learn from different teammates, tools and contexts. Take advantage. “As a software engineer, you don’t need any book, if you’re in the right environment — you have your peers, your colleagues, your mentors, your managers,” Orosz said. “And, if you’re in a good environment, they’ll help you grow with them.”

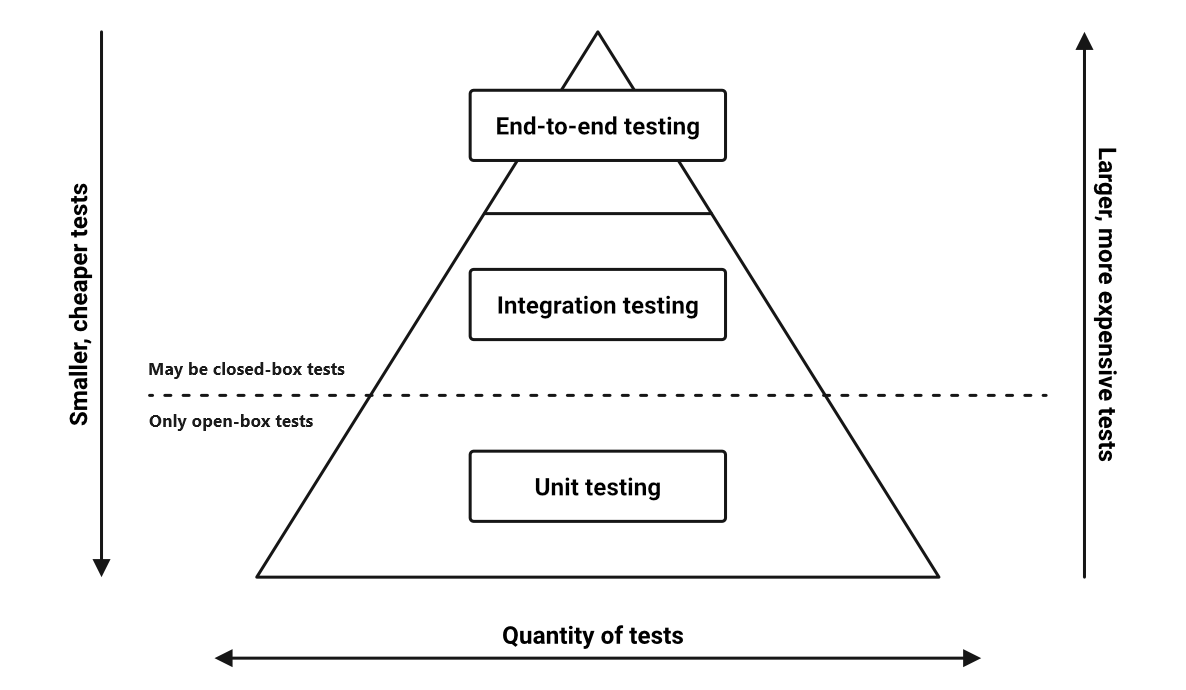

The testing pyramid: Strategic software testing for Agile teams

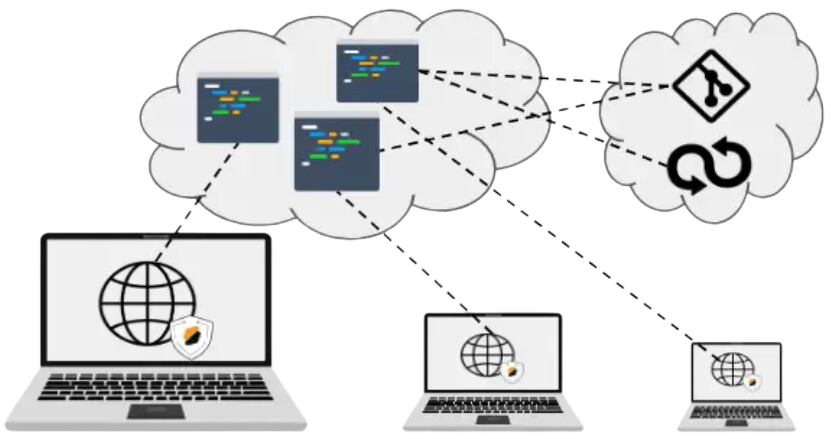

CI/CD automates the process of building, testing, and deploying your code, giving you complete control over how and when your tests and other development tasks are executed. The iterative nature of CI/CD processes means they integrate perfectly with the testing pyramid model, particularly in Agile environments. A typical CI/CD pipeline executes unit tests on every commit to a development branch, providing immediate feedback to help developers catch issues early in the development cycle. Integration tests typically run after unit tests have successfully passed and before a merge into the main branch, ensuring that different components work well together before significant changes are integrated into the broader application. End-to-end (E2E) tests are usually executed after all changes have been merged into the main branch and before deployment to staging or production environments, serving as a final verification that the application meets all requirements and functions correctly in an environment that closely mimics the production setting. This approach is a boon to Agile teams, facilitating rapid development and deployment.
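As a rough illustration of the staging described above, the mapping from pipeline events to test tiers can be sketched in a few lines of Python. This is only a sketch under stated assumptions: the event names, the `SUITES_BY_EVENT` table, and the `run_pipeline` helper are hypothetical and do not come from the article or from any particular CI/CD product.

```python
from enum import Enum


class Event(Enum):
    """Hypothetical pipeline triggers, named for this sketch only."""
    COMMIT_TO_DEV_BRANCH = "commit"
    PRE_MERGE_TO_MAIN = "pre_merge"
    PRE_DEPLOY = "pre_deploy"


# Assumed mapping of triggers to test tiers, following the excerpt:
# unit tests on every commit, integration tests after unit tests and
# before a merge, E2E tests after the merge and before deployment.
SUITES_BY_EVENT = {
    Event.COMMIT_TO_DEV_BRANCH: ["unit"],
    Event.PRE_MERGE_TO_MAIN: ["unit", "integration"],
    Event.PRE_DEPLOY: ["e2e"],
}


def run_pipeline(event, runners):
    """Run the suites for an event in order, stopping at the first failure.

    `runners` maps a suite name to a zero-argument callable that returns
    True when the suite passes. Returns a list of (suite, passed) pairs.
    """
    results = []
    for suite in SUITES_BY_EVENT[event]:
        passed = runners[suite]()
        results.append((suite, passed))
        if not passed:
            break  # a later tier only runs once the earlier tier has passed
    return results
```

Calling `run_pipeline(Event.PRE_MERGE_TO_MAIN, runners)` with a failing unit suite returns after the unit tier, so the slower integration tier is never started. In practice this ordering would normally live in the CI system's own configuration rather than in application code.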

Digital tools transforming approach to omnichannel: Cloud, AI ensure seamless customer experience, data security

According to McKinsey, offering an omnichannel experience is no longer an option for retail organisations – it is vital to their very survival. In its report, the consulting firm pointed out that, while organisations may well look at omnichannel operations in isolation, customers do not, expecting a seamless experience regardless of whether they are at the store or browsing online. The role of digitisation in transforming the retail sector’s operations has been comprehensive – from marketing all the way to tailoring customer experience across channels. ... McKinsey estimates that concerted efforts made to offer a personalised omnichannel experience to the customer can help organisations register a revenue uptick of between 5% and 15%. The results that Starbucks registered a decade after it launched a campaign that allowed customers to place orders online and offered cashback and personalised rewards – USD one billion in prepaid mobile deposits – offer but a glimpse of the impact customisation of experience can have on an organisation’s bottom line. Personalisation of experience across all touchpoints would require organisations to compile large datasets on every customer to enhance the quality of one’s engagement with that specific brand.

Google’s New AI Is Learning to Diagnose Patients

Navigating health care systems as a patient can be daunting at the best of times, whether you’re interpreting jargon-filled diagnoses or determining which specialists to see next. Similarly, doctors often have grueling schedules that make it difficult to offer personalized attention to all their patients. These issues are only exacerbated in areas with limited physicians and medical infrastructure. Bringing AI into the doctor’s office to alleviate these problems is a dream that researchers have been working toward since IBM’s Watson made its debut over a decade ago, but progress toward these goals has been slow-moving. Now, large language models (LLMs), including ChatGPT, could have the potential to reinvigorate those ambitions. The team behind Google DeepMind have proposed a new AI model called AMIE (Articulate Medical Intelligence Explorer), in a recent preprint paper published 11 January on arXiv. The model could take in information from patients and provide clear explanations of medical conditions in a wellness visit consultation. Vivek Natarajan is an AI researcher at Google and lead author on the recent paper.

Agile Methodologies for Edge Computing in IoT

Agile methodologies, with their iterative and incremental approach, are well-suited for the dynamic nature of IoT projects. They allow for continuous adaptation to changing requirements and rapid problem-solving, which is crucial in the IoT landscape where technologies and user needs evolve quickly. In the realm of IoT and edge computing, the dynamic and often unpredictable nature of projects necessitates an approach that is both flexible and robust. Agile methodologies stand out as a beacon in this landscape, offering a framework that can adapt to rapid changes and technological advancements. By embracing key Agile practices, developers and project managers can navigate the complexities of IoT and edge computing with greater ease and precision. These practices, ranging from adaptive planning and evolutionary development to early delivery and continuous improvement, are tailored to meet the unique demands of IoT projects. They facilitate efficient handling of high volumes of data, security concerns, and the integration of new technologies at the edge of networks.

Human-Written Or Machine-Generated: Finding Intelligence In Language Models

What is intelligence? Most succinctly, it is the ability to reason and reflect, as well as to learn and to possess awareness of not just the present, but also the past and future. Yet as simple as this sounds, we humans have trouble applying it in a rational fashion to everything from pets to babies born with anencephaly, where instinct and unconscious actions are mistaken for intelligence and reasoning. Much as our brains will happily see patterns and shapes where they do not exist, these same brains will accept something as human-created when it fits our preconceived notions. People will often point to the output of ChatGPT – which is usually backed by the GPT-4 LLM – as an example of ‘artificial intelligence’, but what is not mentioned here is the enormous amount of human labor involved in keeping up this appearance. A 2023 investigation by New York Magazine and The Verge uncovered the sheer numbers of so-called annotators: people who are tasked with identifying, categorizing and otherwise annotating everything from customer responses to text fragments to endless amounts of images, depending on whether the LLM and its frontend is being used for customer support

How to Navigate the Pitfalls of Toxic Positivity in the Workplace

The shift from a culture of toxic positivity to one of authenticity requires a conscious effort from organizational leaders. It involves acknowledging and embracing the full spectrum of human emotions, not just the positive ones. Leaders must create a space where employees feel safe to express their genuine feelings, whether they are positive or negative. To cultivate an authentic workplace culture, leaders must first recognize the signs of toxic positivity. These signs include a lack of genuine communication, a culture of forced niceness and an avoidance of addressing real issues. Once identified, leaders can implement strategies that foster authenticity, such as encouraging open and honest communication, creating forums for sharing diverse perspectives and recognizing and addressing the challenges employees face. ... This means celebrating successes and joys, as well as being open to hearing and understanding the challenges and struggles. It involves shifting focus from external roles, often associated with a facade of positivity, to a more profound connection with our authentic selves. When we operate from a place of authenticity, the dichotomy of toxic positivity and negativity naturally dissolves.

Embracing Software Architecture

An architect cannot be proactive with more than 3-5 teams without changing their work (for example, becoming review focused instead of design focused). This means a software architect will be optimally engaged with roughly this number of teams. However, software architects may scale their practice and maturity by leading larger and larger initiatives of architects/teams as long as they keep their own working relationship with a team or two. This ratio of 3-5 major stakeholders, teams, projects recurs a great deal when interviewing architects. The ratio isn’t just to teams, it is architects to the organization and business model. How many projects/products there are in the organization is related to their size and complexity. This number of new change initiatives to architects is deeply telling. In places where that number is closer to 5% of IT, or 1 senior solution architect per medium project or larger, and where the largest projects have more than one type of architect, the surveys, interviews and success measures rise significantly. ... We say strategy and execution all the time but in fact only pay attention to strategy OR execution. Then we let ‘the technical people handle it’ or say ‘that’s a business problem’ and we keep the two separate.

Key dimensions of cloud compliance and regulations in 2024

Firstly, organisations must identify and adhere to relevant regulations and industry standards. This involves a comprehensive understanding of the regulatory ecosystem and compliance requirements specific to their industry. They must ensure that data management practices align with established guidelines. Corporations must also acknowledge and embrace the responsibility for data stored in the cloud and put in place a secure configuration of the services being used. An organisation’s internal processes are pivotal in determining the security parameters of its cloud environment, encompassing elements such as access controls, encryption, and data classification. There has to be a comprehensive understanding of the intricacies of the cloud environment’s service and deployment models. They must identify and categorise whether a service is Software as a Service (SaaS), Infrastructure as a Service (IaaS), or Platform as a Service (PaaS). Consequently, by understanding deployment models like hybrid, public, and private, organisations can tailor their compliance strategies accordingly.

Quote for the day:

"Great leaders do not desire to lead but to serve." -- Myles Munroe
