What’s the deal with cross-border data transfers after Brexit?

It remains unclear whether the UK will receive an adequacy decision after the
end of the Brexit transition period. The main legal argument in favour of the
UK receiving an adequacy decision is that no other Third Country has laws that
are as similar to the GDPR as the Data Protection Act 2018. Since the EU has
already granted adequacy decisions to several jurisdictions whose laws are less
similar, the argument goes, the UK is the most deserving candidate for an
adequacy decision. The main legal argument against the UK receiving an
adequacy decision is that the UK conducts extensive surveillance for the
purposes of national security, and that this is the same activity that
resulted in the Privacy Shield being overturned by the CJEU in Schrems II. On
16 September 2020, the European Parliament released comments on the Schrems
II decision in which it formally acknowledged the argument that the UK might
not receive an adequacy decision because of its national security surveillance
activities. This also raises doubts as to whether existing adequacy decisions
will be affected for jurisdictions whose laws are much less similar to the
GDPR and that have significant national security operations.

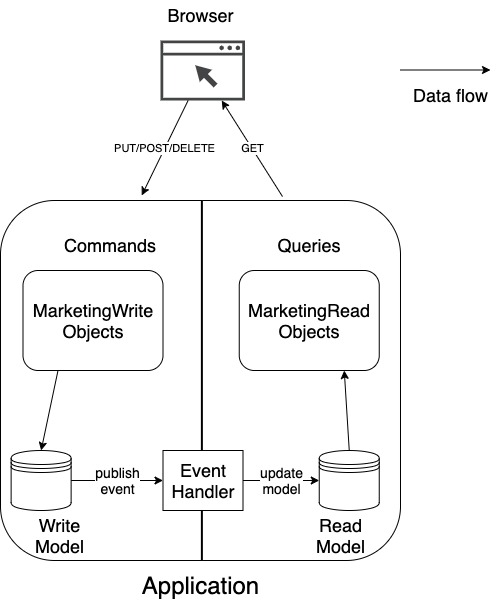

CQRS Is an Anti-Pattern for DDD

CQRS conflicts with one of the main principles for writing software: low
coupling. “If changing one module in a program requires changing another
module, then coupling exists.” Almost every pattern in software addresses
this problem, directly or indirectly. How do you divide your system into
components in such a way that you can change one component with minimum
impact on the others? Or, what is the right responsibility in the Single
Responsibility Principle? It is really hard for me to accept that you can
evolve the read and write parts of the system separately. Reading and writing
are not the right responsibilities for building domain models, because
business people do not think in terms of reading and writing. The real value
lies in process flows, and only the most minor changes to a process flow would
affect just the read part or just the write part of the domain model. Maybe you
are thinking of my example with the marketing application? It does sound a bit
like a CRUD application, right? Not the best candidate for CQRS. Well, there
were indeed more complex requirements in my original project. For example,
when you assign a salesperson to a customer, the system must decide whether
he/she is the primary salesperson or a supporting salesperson.
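
To make the salesperson example concrete, here is a minimal Python sketch of a single domain model in which the "assign salesperson" process both changes state and answers the follow-up question of who the primary salesperson is. The `Customer` and `Role` classes, their method names, and the rule that the first assigned salesperson becomes primary are hypothetical assumptions for illustration; the original project's actual rules are not described here.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, Optional


class Role(Enum):
    PRIMARY = "primary"
    SUPPORTING = "supporting"


@dataclass
class Customer:
    """Hypothetical aggregate: one model serves both the command and the query."""

    name: str
    # Salesperson name -> role; dicts keep insertion order, i.e. assignment order.
    salespeople: Dict[str, Role] = field(default_factory=dict)

    def assign_salesperson(self, salesperson: str) -> Role:
        """Command: assign a salesperson and decide their role.

        Assumed rule (illustrative only): the first salesperson assigned
        becomes primary; everyone assigned after that is supporting.
        """
        if salesperson in self.salespeople:
            # Re-assigning the same person keeps their existing role.
            return self.salespeople[salesperson]
        if self.primary_salesperson() is None:
            role = Role.PRIMARY
        else:
            role = Role.SUPPORTING
        self.salespeople[salesperson] = role
        return role

    def primary_salesperson(self) -> Optional[str]:
        """Query: answered from the same model the command writes to."""
        for person, role in self.salespeople.items():
            if role is Role.PRIMARY:
                return person
        return None
```

Assigning "Alice" and then "Bob" to a new `Customer` yields `Role.PRIMARY` and then `Role.SUPPORTING`, and `primary_salesperson()` returns "Alice". In this sketch the command's decision depends on the same state the query reads, so changing the assignment rule touches one class rather than a separate read model and write model.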

Working with Local Storage in a Blazor Progressive Web App

Fortunately, accessing local storage is easy once you've added Chris Sainty's
Blazored.LocalStorage NuGet package to your application (the project and its
documentation can be found on GitHub). Before anything else, to use Sainty's
package, you need to add it to your project's Services collection. Normally,
I'd do that in my project's Startup class, but the Visual Studio template for a
PWA doesn't include a Startup class. So, in a PWA, you'll need to add Sainty's
package to the Services collection in the Program.cs file. The Program.cs file
in the PWA template already includes code to add an HttpClient to the Services
collection. You can add Sainty's package by tacking on a call to his
AddBlazoredLocalStorage extension method ... It's easy to check what's in
local storage: press F12 to bring up the developer tools panel in either the
browser or PWA version of your app, click the Application tab (which may be
hidden under the tools overflow menu icon), and select Storage from the
left-hand list. While the code is straightforward, I found debugging the
resulting application ... problematic.

Credential stuffing is just the tip of the iceberg

Credential stuffing attacks are a key concern for good reason. High-profile
breaches, such as those of Equifax and LinkedIn (to name two of many), have
resulted in billions of compromised credentials floating around on the dark
web, feeding an underground industry of malicious activity. For several years
now, about 80% of breaches that resulted from hacking have involved stolen
and/or weak passwords, according to Verizon’s annual Data Breach
Investigations Report. Additionally, research by Akamai found that
three-quarters of credential abuse attacks against the financial services
industry in 2019 were aimed at APIs. Many of those attacks are conducted on a
large scale, overwhelming organizations with millions of automated login
attempts. Yet most threats to APIs go beyond credential stuffing, which is
only one of the many threats covered by the 2019 OWASP API Security Top 10. In
many instances, these threats are not automated, are much more subtle and come
from authenticated users. APIs, which are essential to an increasing number of
applications, are specialized entities performing particular functions for
specific organizations. Someone exploiting a vulnerability in an API used by a
bank, retailer or other institution could, with a couple of subtle calls, dump
the database, drain an account, cause an outage or do all kinds of other
damage that hurts revenue and brand reputation.
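
Because large-scale stuffing typically shows up as one source failing logins for many different accounts, a simple sliding-window check can illustrate one way to flag it. The sketch below is a hypothetical Python illustration, not a method from Verizon, Akamai or OWASP: the `StuffingDetector` name, the per-source key (for example, an IP address) and the thresholds are assumptions. It addresses only the automated, credential-stuffing slice of the problem; it would not catch the subtle, authenticated abuse described above.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Tuple


class StuffingDetector:
    """Flags sources that fail logins for many distinct accounts in a short window.

    Thresholds are illustrative assumptions, not recommended production values.
    """

    def __init__(self, window_seconds: float = 60.0, max_distinct_users: int = 5):
        self.window = window_seconds
        self.max_distinct_users = max_distinct_users
        # source -> (timestamp, username) pairs for recent failed logins
        self._failures: Dict[str, Deque[Tuple[float, str]]] = defaultdict(deque)

    def record_failure(self, source: str, username: str, now: float) -> bool:
        """Record a failed login; return True if the source now looks like stuffing."""
        events = self._failures[source]
        events.append((now, username))
        # Drop events that have fallen out of the sliding window.
        while events and now - events[0][0] > self.window:
            events.popleft()
        distinct_users = {user for _, user in events}
        return len(distinct_users) >= self.max_distinct_users
```

Keying on distinct usernames rather than raw failure counts keeps a user who repeatedly mistypes their own password from being flagged. Attackers often spread attempts across many source addresses, so a per-source counter like this would be one signal among several, and a real deployment would also need to evict idle sources.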

CISA: LokiBot Stealer Storms Into a Resurgence

“LokiBot has stolen credentials from multiple applications and data sources,
including Windows operating system credentials, email clients, File Transfer
Protocol and Secure File Transfer Protocol clients,” according to the alert,
issued Tuesday. “LokiBot has [also] demonstrated the ability to steal
credentials from…Safari and Chromium and Mozilla Firefox-based web
browsers.” To boot, LokiBot can also act as a backdoor into infected systems,
paving the way for additional payloads. Like its Norse namesake, LokiBot is a
bit of a trickster: it disguises itself in diverse attachment types, sometimes
using steganography for maximum obfuscation. For instance, the malware has
been disguised as a .ZIP attachment hidden inside a .PNG file that can slip
past some email security gateways, or hidden in an ISO disk image file
attachment. It also uses a number of application guises. “Since LokiBot was
first reported in 2015, cyber actors have used it across a range of targeted
applications,” CISA noted. For instance, in February it was seen impersonating
a launcher for the popular Fortnite video game. Other tactics include the use
of zipped files along with malicious macros in Microsoft Word and Excel, and
exploiting the vulnerability CVE-2017-11882.
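
The .PNG-wrapped .ZIP trick generally works because image decoders stop at a PNG's final IEND chunk, while many ZIP tools read an archive from its end, so an archive appended after IEND can be ignored by one and opened by the other. The sketch below is a hypothetical heuristic, not CISA's detection guidance: it walks the PNG chunk structure, returns whatever follows IEND, and flags the file if that trailer contains a ZIP local file header signature (`PK\x03\x04`). The function names are illustrative. It would not catch payloads hidden in pixel data or in other containers such as the ISO attachments mentioned above.

```python
import struct
from typing import Optional

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
ZIP_LOCAL_HEADER = b"PK\x03\x04"


def trailing_data_after_png(data: bytes) -> Optional[bytes]:
    """Return the bytes that follow the PNG IEND chunk, or None if not a well-formed PNG."""
    if not data.startswith(PNG_SIGNATURE):
        return None
    offset = len(PNG_SIGNATURE)
    while offset + 8 <= len(data):
        # Each chunk: 4-byte big-endian length, 4-byte type, data, 4-byte CRC.
        length, chunk_type = struct.unpack(">I4s", data[offset:offset + 8])
        end = offset + 8 + length + 4
        if end > len(data):
            return None  # truncated chunk
        if chunk_type == b"IEND":
            return data[end:]
        offset = end
    return None  # no IEND chunk found


def looks_like_png_zip_polyglot(data: bytes) -> bool:
    """Heuristic: a PNG with a ZIP local file header hidden after IEND."""
    trailer = trailing_data_after_png(data)
    return trailer is not None and ZIP_LOCAL_HEADER in trailer
```

Feeding it a minimal PNG (signature plus an empty IEND chunk) returns an empty trailer and `False`; appending `b"PK\x03\x04..."` to the same bytes flips the result to `True`.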

Does Cybersecurity Have a Public Image Problem?

“In effect, the portrayal in media assigns an attribute of quick decisive
thinking to the process, an attribute that potential cybersecurity candidates
might not view themselves as possessing,” he said. “The reality is that most
cybersecurity incidents aren’t as adversarial as portrayed on TV, and that two
of the most important skills to become a professional in a cybersecurity
discipline are strong problem-solving abilities and attention to detail.”
Chris Hauk, consumer privacy champion at Pixel Privacy, argued that “most
people think cybersecurity involves maneuvering a 3D maze filled with grinning
skeletons that represent malware that must be zapped by the BFG virus zapper”
rather than applying patches to keep operating systems and applications up to
date and ensuring a firewall is blocking what it is supposed to be guarding
against. “It is all character based or a bit of point and click, and quite
boring.” He claimed that many of the skills needed for cybersecurity come down
to common sense, which means guarding yourself against everyday threats on the
internet by running anti-virus and anti-malware protection, and avoiding
clicking on links and attachments in email and text messages.

Microservices: 5 Questions to Ask before Making that Decision

When it comes to Microservices, the success stories and the concepts are
truly mesmerizing. Having a collection of services, each doing one thing in
the business domain, paints a perfect picture of a lean architecture. However,
we shouldn’t forget that these services need to work together to deliver
business value to their end users. ... Knowing the business domain inside out
and having experience with domain-driven design are crucial to identifying
the bounded context of each service. Since we allocate a team to each
Microservice and allow it to work with minimal interference, getting the
bounded context wrong would increase communication overhead and inter-team
dependencies, slowing down overall development. So, for a project starting
from scratch, selecting Microservices is a risky move. ... Microservices
aren’t a silver bullet or an architecture style that suits everyone. Since we
need to deal with distributed systems, they could be overkill for many.
Therefore, it’s essential to assess whether the issues you are experiencing
with the Monolith are solvable by Microservices.

To Deliver Better Customer Experience Brands Need To Develop An Empathetic Musculature

To become more empathetic, brands need to start thinking holistically about
it. In fact, I believe that they need to start thinking about developing an
empathetic musculature for their organization, a concept that I started
musing about in Punk CX. If they don't, then, according to Rana el Kaliouby,
CEO of Affectiva, the danger is that "the need to build empathy will get
reduced down to a training course." So, what's it going to take to build an
empathetic musculature at an organizational level? Well, if you look up
'musculature' in the dictionary, it is defined as 'the system or arrangement
of muscles in a body or a body part.' So, to develop muscles, you have to
train. But you have to train with a purpose, whether that is to stay fit, lose
weight, rehabilitate after an injury or compete. This will take time,
discipline and commitment, as it is both a habit and a capability that we
will need to develop, nurture and maintain if we are to see the benefits.
That, in turn, will require strategy, systems, processes, design, technology,
leadership, and the right sort of people and training to help us get there.
Without a doubt, it will be hard, and we won't necessarily get it right first
time.

The perseverance of resilient leadership: Sustaining impact on the road to Thrive

As leaders, we need to empathize with and acknowledge the myriad challenges
our people are currently coping with, many of which have no end in sight.
Psychologists describe “ambiguous loss” as losses that are inexplicable,
outside one’s control, and have no definitive endpoint.[3]

Although ambiguous loss is typically experienced when loved ones are missing
or suffering from progressive chronic illness, the uncertainties our
colleagues are enduring today surely also constitute ambiguous loss:[4]

The loss of our familiar way of being in the world is difficult to understand,
beyond our control, and uncertain as to when we can return to some semblance
of normal. As we discuss in our Bridge across uncertainty guide for leaders,
there are three types of stress: good stress, tolerable stress, and toxic
stress, the last of which is critical to relieve before people become
overwhelmed.[5]

With both ambiguous loss and toxic stress, better definition of an endpoint
and a reduction in uncertainty are important ways we can support our teams.
For example, Deloitte has hosted Zoom-based workshops where a cross-section of
our people helped to inform return-to-the-workplace programs, giving them a
greater sense of control.

Q&A on the Book “Problem? What Problem?” with Ben Linders

If an organization is working in an agile way, its approach to solving
problems should also be agile-based. To be effective, it has to fit in and be
congruent with the company's and people's agile mindset. What does
problem-solving look like when we are using an agile mindset and agile
thinking? Here's my view on this. Many problems relate to the way people work
together. Even when every person does the best they can, problems often arise
when things come together. Problem-solving practices should help us to
understand how individuals interact and to solve collaboration issues. There
are often too many problems to solve, so we need to focus our effort on
solving the impediments that have the biggest impact on outcomes: solve the
ones that affect our ability to deliver something that is working, right now.
Collaboration is key, not only within teams but also between teams and when
working with stakeholders. Problem-solving practices should enable us to
visualize the system and collaboratively look for solutions. They should
engage people from the start and enable them to self-organize and come up with
solutions that work for them. While we're working on a problem, things will
change. We'll learn new things along the way.

Quote for the day:

"The signs of outstanding leadership are found among the followers." -- Max DePree