Open Source Democratized Software. Now Let’s Democratize Security

Now, imagine what would happen if the world of cybersecurity were democratized

in the way that software development has been democratized by open source. It

would create a world where security would cease to be the domain of elite

security experts alone. Instead, anyone would be able to help identify the

security problems that they face, then build the solutions they need to address

them, just as software users today can envision the software platforms and

features they’d like to see, then help implement them through open source

projects. In other words, users wouldn’t need to wait on middlemen — that is,

the experts who hold the reins — to build the security solutions they needed.

They could build them themselves. That doesn’t mean that security experts would

go away. They’d still be critical, just as professional programmers working for

major corporations continue to play an important role alongside independent

programmers in open source software development. But instead of requiring small

groups of security specialists to identify all the cybersecurity risks and solve

them on their own, these tasks would be democratized and shared across

organizations as a whole.

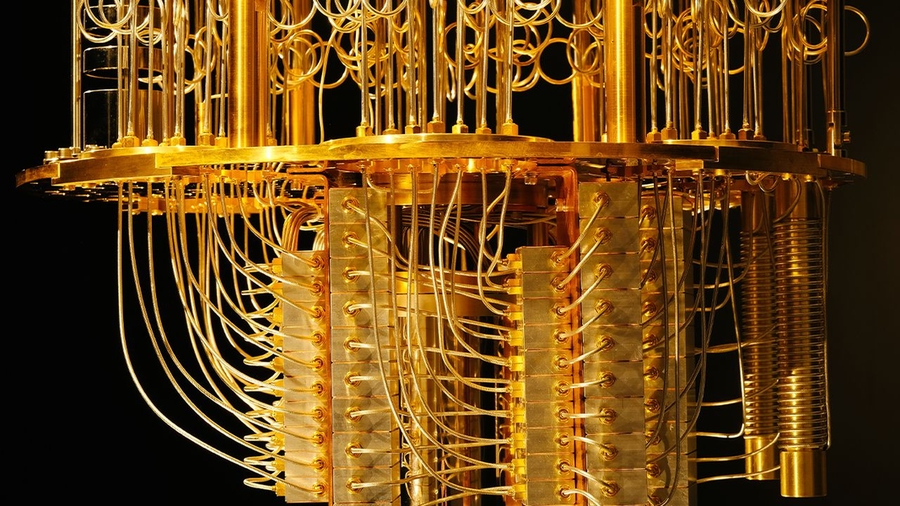

A new language for quantum computing

Programming quantum computers requires awareness of something called

“entanglement,” a computational multiplier for qubits of sorts, which translates

to a lot of power. When two qubits are entangled, actions on one qubit can

change the value of the other, even when they are physically separated, giving

rise to Einstein’s characterization of “spooky action at a distance.” But that

potency is equal parts a source of weakness. When programming, discarding one

qubit without being mindful of its entanglement with another qubit can destroy

the data stored in the other, jeopardizing the correctness of the program.

Scientists from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) aimed

to do some unraveling by creating their own programming language for quantum

computing called Twist. Twist can describe and verify which pieces of data are

entangled in a quantum program, through a language a classical programmer can

understand. The language uses a concept called purity, which enforces the

absence of entanglement and results in more intuitive programs, with ideally

fewer bugs.
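
As a rough numerical illustration of why discarding an entangled qubit matters, the sketch below traces out the second qubit of a two-qubit state and measures how pure the remaining qubit is. It is plain Python, not Twist syntax, and the function names are made up for this example. The measure used, Tr(ρ²), is the standard physics notion of a pure state; how Twist's type system checks purity is not shown here.

```python
import math

def reduced_density_matrix(state):
    """Trace out the second qubit of a two-qubit pure state.

    `state` holds the amplitudes for |00>, |01>, |10>, |11>, in that order.
    Returns the 2x2 density matrix of the first (kept) qubit.
    """
    rho = [[0j, 0j], [0j, 0j]]
    for a in range(2):          # row index of the kept qubit
        for b in range(2):      # column index of the kept qubit
            for k in range(2):  # value of the discarded qubit
                amp_row = complex(state[2 * a + k])
                amp_col = complex(state[2 * b + k])
                rho[a][b] += amp_row * amp_col.conjugate()
    return rho

def purity(rho):
    """Return Tr(rho^2): 1.0 for a pure state, less than 1.0 for a mixed one."""
    return sum((rho[i][j] * rho[j][i]).real for i in range(2) for j in range(2))

s = 1 / math.sqrt(2)
bell = [s, 0, 0, s]      # (|00> + |11>)/sqrt(2): the two qubits are entangled
product = [s, s, 0, 0]   # |0> on the first qubit, (|0> + |1>)/sqrt(2) on the second

print(purity(reduced_density_matrix(bell)))     # about 0.5: information was lost
print(purity(reduced_density_matrix(product)))  # about 1.0: safe to discard
```

When the qubits are entangled, throwing one away leaves the other in a mixed state, which is the kind of silent data loss the paragraph above describes. When they are not entangled, the remaining qubit is unaffected.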

Understanding Linus's Law for open source security

Linus's Law asserts that given enough eyeballs, all bugs are shallow, but we

don't really know how many eyeballs are "enough." However, don't underestimate

the number. Software is very often reviewed by more people than you might

imagine. The original developer or developers obviously know the code that

they've written. However, open source is often a group effort, so the longer

code is open, the more software developers end up seeing it. A developer must

review major portions of a project's code because they must learn a codebase to

write new features for it. Open source packagers also get involved with many

projects in order to make them available to a Linux distribution. Sometimes an

application can be packaged with almost no familiarity with the code, but often

a packager gets familiar with a project's code, both because they don't want to

sign off on software they don't trust and because they may have to make

modifications to get it to compile correctly. Bug reporters and triagers also

sometimes get familiar with a codebase as they try to solve anomalies ranging

from quirks to major crashes.

UN launches privacy lab pilot to unlock cross-border data sharing benefits

The lab’s first use case will see NSOs share data relating to the import and

export of certain commodities recorded between their own country and all other

countries in the group. From here, each pair of countries will use PETs to

discreetly check whether their recorded bilateral trade amounts correspond.

The learning exercise will use pre-approved, publicly available data, and will

aim to ‘iron out’ any technical, security, or bureaucratic challenges. “Senior

leaders are now talking about Privacy Enhancing Technologies to enable

cross-border and cross-sector collaboration to solve shared challenges,” said

Stefan Schweinfest, director of the UN Statistics Division. “At the same time,

PETs will protect shared values such as privacy, accountability, and

transparency. This is an important moment for PETs to help improve official

statistics, and support democratic societies, honouring citizens’ entitlement to

trusted public information.” Dr Jack Fitzsimons, founder of Oblivious,

commented: “When you send data to a server, there is well-established technology

to make sure it lands at the right place.”

Surge in Malicious QR Codes Sparks FBI Alert

Menus, event ticket sales, quick site access — QR codes have become a common way

to interact as a result of the COVID-19 pandemic. But the smart little matrix

bar codes are easily tampered with and can be used to direct victims to

malicious sites, the FBI warned in an alert. QR codes are the square, scannable

codes familiar from applications like touchless menus at restaurants, and have

gained in popularity over the pandemic as contactless interactions have become

the norm. Simply navigating a smartphone camera over the image allows the

device’s QR translator – built into most mobile phones – to “read” the code and

open a corresponding website. “A victim scans what they think to be a legitimate

code, but the tampered code directs victims to a malicious site, which prompts

them to enter login and financial information,” the FBI alert explained. “Access

to this victim information gives the cybercriminal the ability to potentially

steal funds through victim accounts.”
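
One way to act on this warning is to inspect the decoded URL before opening it. The sketch below assumes the QR payload has already been decoded to text by some scanner; the allowlist entry `menu.example.com` and the function name `is_trusted_qr_url` are hypothetical and exist only for illustration. It is a minimal check, not a complete defense.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of hosts the scanning app expects to see.
TRUSTED_HOSTS = {"menu.example.com"}

def is_trusted_qr_url(decoded_text, trusted_hosts=TRUSTED_HOSTS):
    """Return True only for HTTPS URLs whose host is exactly on the allowlist."""
    parts = urlsplit(decoded_text.strip())
    if parts.scheme != "https":
        return False
    # `hostname` drops any user-info and port, so "trusted@evil" tricks fail.
    host = (parts.hostname or "").lower()
    return host in trusted_hosts

print(is_trusted_qr_url("https://menu.example.com/table/4"))
print(is_trusted_qr_url("https://menu.example.com.evil.test/login"))
```

Matching the full hostname, rather than checking whether the URL merely contains a trusted name, is what rejects lookalike addresses such as the second example.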

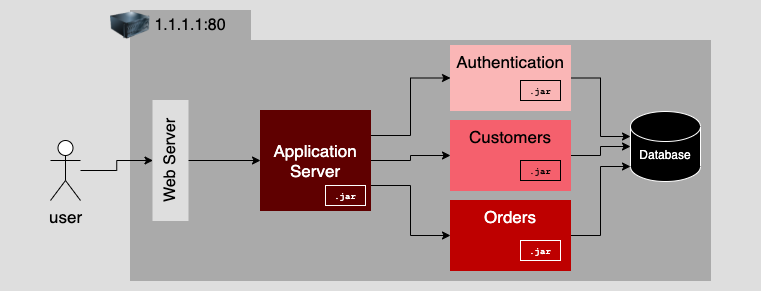

Palo Alto Networks and IBM Are Automating 5G Security for Business Growth

The evolution of mobile networks has long focused on moving faster, and moving

toward more distributed and abstracted network architectures. The payoff has

been remarkable: networks that are massively scalable, extremely adaptable,

and highly resilient. But modern networks are also incredibly dynamic and

complex. It's impossible to manage them or to deploy a modern service-based

architecture without also implementing the automation tools required to manage

and maintain them. The same imperative applies to 5G security automation. As

part of our network slice creation process, for example, IBM Cloud Pak for

Network Automation serves as a master orchestrator, delivering the Network

Slice Management Function (NSMF) to create a network slice across a

cloud-native 5G network, running on Red Hat OpenShift. Security parameters are

passed on to the orchestrator, and then instantiated and configured for the

Palo Alto Networks CN-Series firewall. NSMF also deploys Prisma Cloud Compute

Edition to protect the Kubernetes container environment supporting the core

network functions.

Red vs. blue vs. purple teams: How to run an effective exercise

“Red teams don’t just test for vulnerabilities, but do so using the tools,

tips and techniques of their likely threat actors, and in campaigns that run

continuously for an extended period of time,” wrote Daniel Miessler, a

security consultant who has witnessed numerous red/blue exercises, in a blog

post. “A great red team can be an early warning system to find common origins

of attacks and to track an adversary’s techniques.” John, a retired IBM

architect who has worked in large IT shops, tells CSO that “threats are going

to emerge that red teams will never test for. There are threats that can

overwhelm blue teams and possibly put companies out of business.” ... Let’s

also talk about the color purple. This carries several different meanings,

depending on how this team is constructed. The color gives you the idea that

this is a combination of both red and blue teams, so that both can collaborate

and improve their skills. This combination could mean that there are

representatives from both sides working together on the exercise, or even as

part of their jobs.

Top 3 cloud-based drivers of digital transformation in 2022

How people feel doesn’t change between being a consumer and being at work. They

want options around hybrid work, equity and wellness. Much of the language

today around the “Great Resignation,” or people leaving their jobs, is less

about money and time at the office, and more about finding work with meaning

that ultimately contributes to a better world. Beyond wanting to be heard at

the workplace, people are curious to know how their work makes a positive

contribution. For example, companies want more visibility into the carbon

footprint of their technology platforms and options to reduce it, offering

positive contributions that are appreciated by both staff and customers. This

is in part in response to people bringing their values to work and companies

responding to those values. We’ll see this increase moving forward. According

to Deloitte, Gen Z is the first generation to make choices about the work they

do based on personal ethics. And McKinsey says two-thirds of millennials take

an employer’s social and environmental commitments into account when deciding

where to work.

The Web3 Stack: What Web 2.0 Developers Need to Know

One of the trickiest parts of Web3 development is storing and using data.

While blockchains are good at being “trustless” chains of immutable data, they

are also incredibly inefficient at storing and processing large amounts of

data — especially for dapps. This is where file storage protocols like IPFS,

Arweave and Filecoin come in. Arweave is an open source project that

describes itself as “a protocol that allows you to store data permanently,

sustainably, with a single upfront fee.” It’s essentially a peer-to-peer (P2P)

network, but has its own set of crypto buzzwords — its mining mechanism is

called “Succinct Proofs of Random Access (SPoRAs)” and developers can deploy

apps to the “permaweb” (“a permanent and decentralized web built on top of the

Arweave”). To complicate matters further, dapp developers have the option to

use “off-chain” solutions, where the data is stored somewhere other than the

main blockchain. Two common forms of this are “sidechains” (secondary

blockchains) and so-called “Layer 2” (L2) solutions, like Bitcoin Lightning

Network and Ethereum Plasma.

Hybrid & Remote Work in 2022 and Beyond

Mental health and wellbeing are in the spotlight: with hundreds of thousands

of people shifting to a new working environment in the midst of the chaos

caused by the COVID-19 pandemic, anxiety, fear, sadness, anger and frustration

are normal reactions. InfoQ reported on some resources and advice to help

maintain mental health when under stress. We need to be kind to ourselves, and

accept that these emotions will happen, without minimising or denying them.

There are things that you can do to help overcome the stress; empathic

responding is one way to positively deal with the stresses we all find

ourselves under. Research shows that mental health is still not well addressed

in most workplaces, mainly because it is still stigmatized in society despite

impacting at least one in five people at any given time, and it highlights the importance of

training managers to support the mental health of their teams. The World

Health Organisation has stated that maintaining physical and mental health is

key to resilience during the COVID-19 pandemic, and provides advice on how to

look after yourself and support those around you.

Quote for the day:

"Leaders are people who believe so

passionately that they can seduce other people into sharing their dream."

-- Warren G. Bennis